1. Modify the function rasterize_triangle(const Triangle& t) in rasterizer.cpp: implement an interpolation algorithm similar to Assignment 2 here, interpolating normals, colors, and texture colors.

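The difference from Assignment 2 is that we now have to compute the interpolated color, uv coordinates, and so on ourselves. The function also gains a new parameter, view_pos, which holds the triangle's vertex positions in view space. The call set_pixel(Vector2i(x, y), pixel_color) has also changed from Assignment 2: it now takes a 2D integer pixel position, which saves some space. After computing the interpolated attributes, store them in the payload struct (fragment_shader_payload), pass it to the fragment shader to compute the color, then set the pixel color and record the depth value. An exception may be thrown at this point because the model files are not at the path the code expects; change the path to where the models are actually stored.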
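The interpolation step described above can be illustrated outside the framework. The sketch below is standalone: the tiny Vec2/Vec3 structs stand in for Eigen, and the hypothetical barycentric() helper stands in for the framework's computeBarycentric2D. Like the rasterizer code that follows, it weights per-vertex attributes with plain screen-space barycentric coordinates (no perspective correction for the attributes).

```cpp
#include <array>
#include <cassert>
#include <cmath>

// Minimal stand-ins for Eigen::Vector2f / Eigen::Vector3f (assumption for this sketch)
struct Vec2 { float x, y; };
struct Vec3 { float x, y, z; };

// Barycentric coordinates (alpha, beta, gamma) of p with respect to triangle (a, b, c),
// computed from signed areas. They sum to 1 and are all >= 0 inside the triangle.
std::array<float, 3> barycentric(Vec2 p, Vec2 a, Vec2 b, Vec2 c)
{
    // Twice the signed area of triangle (o, u, v)
    auto cross = [](Vec2 o, Vec2 u, Vec2 v) {
        return (u.x - o.x) * (v.y - o.y) - (u.y - o.y) * (v.x - o.x);
    };
    float area = cross(a, b, c);
    assert(std::fabs(area) > 1e-12f && "degenerate triangle");
    float alpha = cross(p, b, c) / area;  // weight of vertex a (sub-triangle pbc)
    float beta  = cross(a, p, c) / area;  // weight of vertex b (sub-triangle apc)
    float gamma = 1.0f - alpha - beta;    // weight of vertex c
    return {alpha, beta, gamma};
}

// Weighted sum of three per-vertex attributes, the same operation the rasterizer
// applies to colors, normals, uv coordinates and view-space positions.
Vec3 interpolate(const std::array<float, 3>& w, Vec3 a0, Vec3 a1, Vec3 a2)
{
    return {w[0] * a0.x + w[1] * a1.x + w[2] * a2.x,
            w[0] * a0.y + w[1] * a1.y + w[2] * a2.y,
            w[0] * a0.z + w[1] * a1.z + w[2] * a2.z};
}
```

For example, at (1, 1) in the triangle (0, 0), (4, 0), (0, 4) the weights are (0.5, 0.25, 0.25), so red, green and blue vertex colors blend to (0.5, 0.25, 0.25).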

```cpp
//Screen space rasterization
void rst::rasterizer::rasterize_triangle(const Triangle& t, const std::array<Eigen::Vector3f, 3>& view_pos)
{
    auto v = t.toVector4();

    // Find the bounding box of the current triangle
    float min_x = width, max_x = 0;
    float min_y = height, max_y = 0;
    for (int i = 0; i < 3; i++) {
        min_x = std::min(v[i].x(), min_x);
        max_x = std::max(v[i].x(), max_x);
        min_y = std::min(v[i].y(), min_y);
        max_y = std::max(v[i].y(), max_y);
    }
    // Round outward and clamp to the screen so get_index never goes out of range
    int x_begin = std::max(0, static_cast<int>(std::floor(min_x)));
    int x_end = std::min(width - 1, static_cast<int>(std::ceil(max_x)));
    int y_begin = std::max(0, static_cast<int>(std::floor(min_y)));
    int y_end = std::min(height - 1, static_cast<int>(std::ceil(max_y)));

    for (int y = y_begin; y <= y_end; y++)
    {
        for (int x = x_begin; x <= x_end; x++)
        {
            // Test the pixel center. If insideTriangle takes int parameters in your
            // framework, change them to float so the +0.5 offset is not truncated.
            if (!insideTriangle(x + 0.5f, y + 0.5f, t.v))
                continue;

            int index = get_index(x, y);
            auto [alpha, beta, gamma] = computeBarycentric2D(x + 0.5f, y + 0.5f, t.v);

            // Perspective-corrected depth
            float w_reciprocal = 1.0f / (alpha / v[0].w() + beta / v[1].w() + gamma / v[2].w());
            float z_interpolated = alpha * v[0].z() / v[0].w() + beta * v[1].z() / v[1].w() + gamma * v[2].z() / v[2].w();
            z_interpolated *= w_reciprocal;

            // Depth test: keep the fragment if its depth is below the stored value (depth_buf starts at infinity)
            if (z_interpolated < depth_buf[index]) {
                // Interpolate the per-vertex attributes with the barycentric weights
                Vector3f interpolated_color = interpolate(alpha, beta, gamma, t.color[0], t.color[1], t.color[2], 1);
                Vector3f interpolated_normal = interpolate(alpha, beta, gamma, t.normal[0], t.normal[1], t.normal[2], 1);
                Vector2f interpolated_texcoords = interpolate(alpha, beta, gamma, t.tex_coords[0], t.tex_coords[1], t.tex_coords[2], 1);
                Vector3f interpolated_shadingcoords = interpolate(alpha, beta, gamma, view_pos[0], view_pos[1], view_pos[2], 1);

                // Pack the interpolated data for the fragment shader
                fragment_shader_payload payload(interpolated_color, interpolated_normal.normalized(), interpolated_texcoords, texture ? &*texture : nullptr);
                payload.view_pos = interpolated_shadingcoords;

                // Shade, then write the color and the depth
                auto pixel_color = fragment_shader(payload);
                set_pixel(Vector2i(x, y), pixel_color);
                depth_buf[index] = z_interpolated;
            }
        }
    }
}
```

2. Modify the function get_projection_matrix() in main.cpp

For this step, simply copy over the projection matrix function from Assignment 2.
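Since this step only says to reuse the Assignment 2 matrix, here is a standalone sketch of one common construction (squish the frustum into a box, then apply an orthographic projection). It uses plain std::array matrices instead of Eigen so it compiles on its own, and it assumes a right-handed view space with the camera looking down -z, so the near and far planes sit at n = -zNear and f = -zFar. Under this convention the near plane maps to NDC z = 1 and the far plane to z = -1, so the direction of the depth comparison in rasterize_triangle has to agree with whatever convention your own Assignment 2 matrix used.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Mat4 = std::array<std::array<float, 4>, 4>;
using Vec4 = std::array<float, 4>;

Mat4 multiply(const Mat4& a, const Mat4& b)
{
    Mat4 c{};  // zero-initialized
    for (int i = 0; i < 4; ++i)
        for (int j = 0; j < 4; ++j)
            for (int k = 0; k < 4; ++k)
                c[i][j] += a[i][k] * b[k][j];
    return c;
}

Vec4 transform(const Mat4& m, const Vec4& v)
{
    Vec4 out{};
    for (int i = 0; i < 4; ++i)
        for (int k = 0; k < 4; ++k)
            out[i] += m[i][k] * v[k];
    return out;
}

// eye_fov is the vertical field of view in degrees; zNear and zFar are positive distances.
Mat4 get_projection_matrix(float eye_fov, float aspect_ratio, float zNear, float zFar)
{
    assert(zNear > 0.0f && zFar > zNear);
    const float pi = 3.14159265358979f;
    float n = -zNear, f = -zFar;                               // plane positions on the -z axis
    float t = zNear * std::tan(eye_fov / 2.0f * pi / 180.0f);  // top of the near plane
    float r = t * aspect_ratio;                                // right of the near plane (l = -r, b = -t)

    // Perspective -> orthographic: near plane unchanged, far plane squished onto z = f
    Mat4 persp_to_ortho = {{{n, 0, 0, 0}, {0, n, 0, 0}, {0, 0, n + f, -n * f}, {0, 0, 1, 0}}};
    // Orthographic: center the box at the origin and scale it to [-1, 1]^3
    Mat4 ortho = {{{1 / r, 0, 0, 0}, {0, 1 / t, 0, 0}, {0, 0, 2 / (n - f), -(n + f) / (n - f)}, {0, 0, 0, 1}}};
    return multiply(ortho, persp_to_ortho);
}
```

With a 90° field of view and zNear = 0.1, the point (0, 0.1, -0.1) lies on the top edge of the near plane and lands at NDC y = 1, z = 1 after dividing by w.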

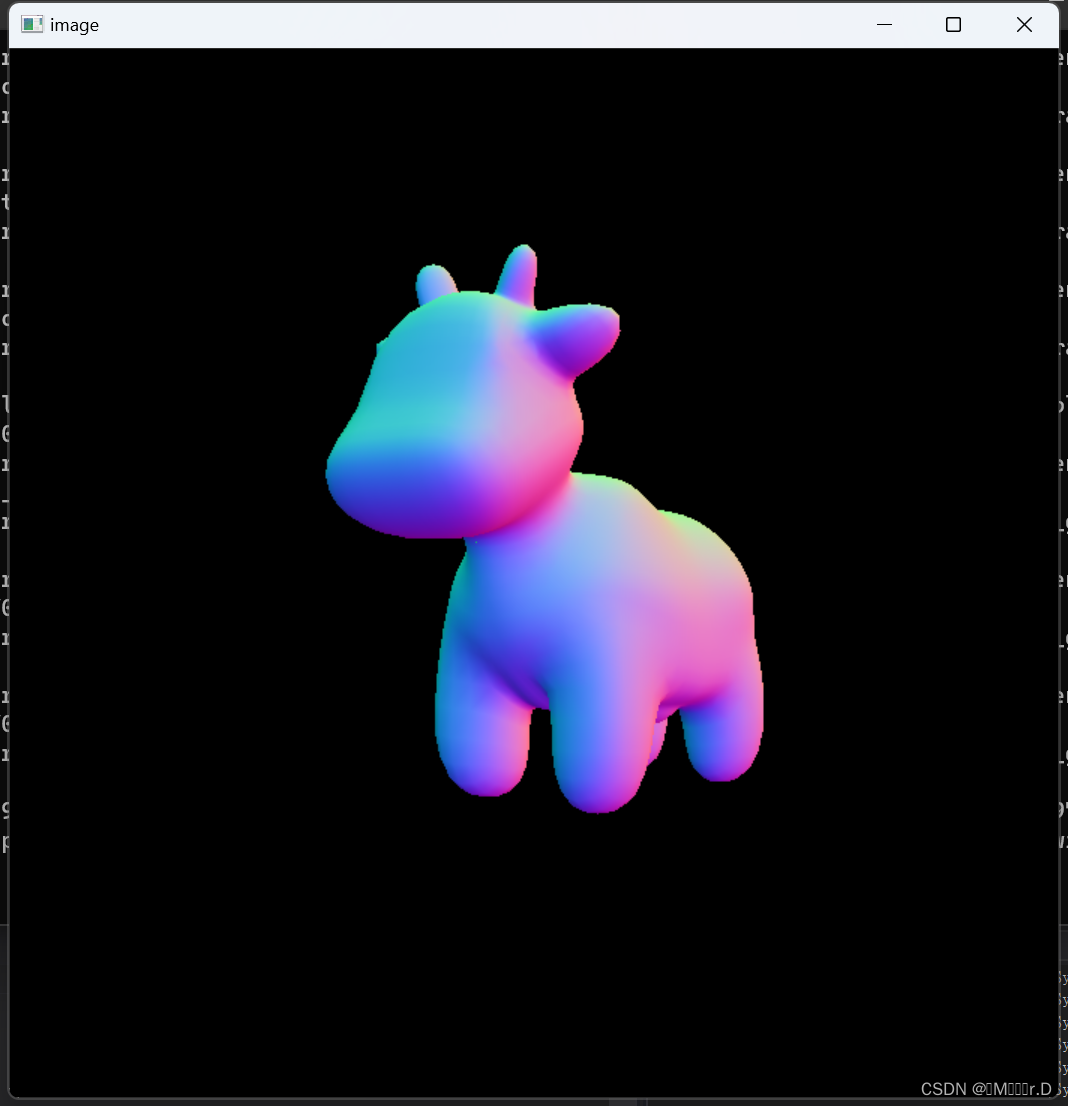

Once these two steps are done, you can switch the shader used in main to normal_fragment_shader to display the normals as colors.

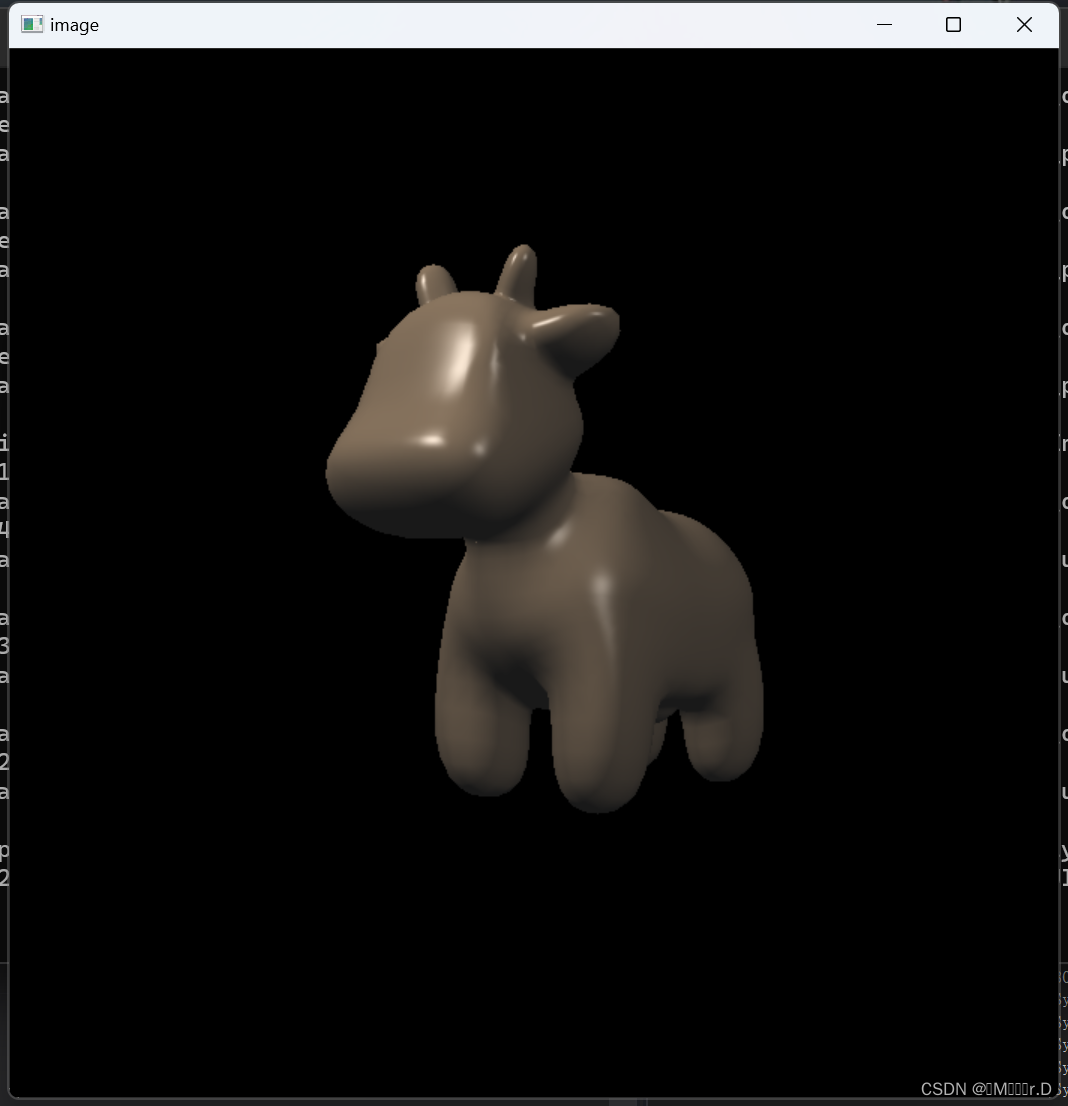

3. Modify the function phong_fragment_shader() in main.cpp: implement the Blinn-Phong model to compute the fragment color.

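This part computes the ambient, diffuse, and specular terms: first compute the quantities each term needs, then plug them into the formulas. Along the way I also computed the Phong variant of the specular term.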
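The three terms for a single light can be sketched in isolation. This is a standalone version written with a tiny hypothetical Vec3 struct instead of Eigen; the parameter names mirror the shader's (ka, kd, ks, p), the light intensity falls off as 1/r², and, as in the shader's loop, the ambient term is included in each light's contribution.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Minimal stand-in for Eigen::Vector3f (assumption for this sketch)
struct Vec3 {
    float x, y, z;
    Vec3 operator+(const Vec3& o) const { return {x + o.x, y + o.y, z + o.z}; }
    Vec3 operator-(const Vec3& o) const { return {x - o.x, y - o.y, z - o.z}; }
    Vec3 operator*(float s) const { return {x * s, y * s, z * s}; }
    Vec3 mul(const Vec3& o) const { return {x * o.x, y * o.y, z * o.z}; }  // like cwiseProduct
    float dot(const Vec3& o) const { return x * o.x + y * o.y + z * o.z; }
    Vec3 normalized() const {
        float len = std::sqrt(dot(*this));
        return len > 0.0f ? *this * (1.0f / len) : *this;  // leave a zero vector unchanged
    }
};

// Blinn-Phong contribution of one light at a shading point
Vec3 blinn_phong(Vec3 ka, Vec3 kd, Vec3 ks, Vec3 amb_intensity,
                 Vec3 light_pos, Vec3 light_intensity,
                 Vec3 point, Vec3 normal, Vec3 eye_pos, float p)
{
    Vec3 l = light_pos - point;
    float r2 = l.dot(l);  // squared distance to the light
    assert(r2 > 0.0f && "light coincides with the shading point");

    Vec3 n = normal.normalized();
    Vec3 light_dir = l.normalized();
    Vec3 view_dir = (eye_pos - point).normalized();
    Vec3 half_vec = (light_dir + view_dir).normalized();  // Blinn's half vector
    Vec3 irradiance = light_intensity * (1.0f / r2);      // I / r^2

    Vec3 ambient = ka.mul(amb_intensity);
    Vec3 diffuse = kd.mul(irradiance) * std::max(0.0f, n.dot(light_dir));
    // Clamp before pow: with an even exponent a negative cosine would turn positive
    Vec3 specular = ks.mul(irradiance) * std::pow(std::max(0.0f, n.dot(half_vec)), p);
    return ambient + diffuse + specular;
}
```

With the light, normal and eye all aligned, both cosines are 1, so the result is simply ka·I_a + (kd + ks)·I/r²; with the light behind the surface only the ambient term survives.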

```cpp
Eigen::Vector3f phong_fragment_shader(const fragment_shader_payload& payload)
{
    Eigen::Vector3f ka = Eigen::Vector3f(0.005, 0.005, 0.005);
    Eigen::Vector3f kd = payload.color;
    Eigen::Vector3f ks = Eigen::Vector3f(0.7937, 0.7937, 0.7937);

    auto l1 = light{{20, 20, 20}, {500, 500, 500}};
    auto l2 = light{{-20, 20, 0}, {500, 500, 500}};
    std::vector<light> lights = {l1, l2};
    Eigen::Vector3f amb_light_intensity{10, 10, 10};
    Eigen::Vector3f eye_pos{0, 0, 10};
    float p = 150;

    Eigen::Vector3f color = payload.color;
    Eigen::Vector3f point = payload.view_pos;
    Eigen::Vector3f normal = payload.normal;

    Eigen::Vector3f result_color = {0, 0, 0};
    for (auto& light : lights)
    {
        // TODO: For each light source in the code, calculate what the *ambient*, *diffuse*, and *specular*
        // components are. Then, accumulate that result on the *result_color* object.
        Vector3f l = light.position - point;           // shading point -> light
        Vector3f v = eye_pos - point;                  // shading point -> eye
        Vector3f h = l.normalized() + v.normalized();  // half vector (normalized below)
        // Mirror direction of l about the normal, used by the Phong variant.
        // Assumes the framework's reflect(vec, axis) returns 2*(vec.axis)*axis - vec.
        Vector3f r = reflect(l.normalized(), normal.normalized());
        float r_square = l.dot(l);                     // squared distance for the 1/r^2 falloff

        float ndotl = normal.normalized().dot(l.normalized());
        float vdotr = v.normalized().dot(r.normalized());
        float ndoth = normal.normalized().dot(h.normalized());

        Vector3f ambientColor = ka.cwiseProduct(amb_light_intensity);
        Vector3f diffuseColor = kd.cwiseProduct(light.intensity / r_square) * std::max(0.0f, ndotl);
        // Clamp before pow: pow(negative, 150) is positive and would light faces turned away
        Vector3f specularColor = ks.cwiseProduct(light.intensity / r_square) * std::pow(std::max(0.0f, ndoth), p);
        // Phong variant:
        // Vector3f specularColor = ks.cwiseProduct(light.intensity / r_square) * std::pow(std::max(0.0f, vdotr), p);

        result_color += ambientColor + diffuseColor + specularColor;
    }
    return result_color * 255.f;
}
```

The final result is as follows:

4. Modify the function texture_fragment_shader() in main.cpp: building on Blinn-Phong, treat the texture color as kd in the formula to implement the texture-shading fragment shader.

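This step just samples the texture at the fragment's uv coordinates to get the color. Sampling directly throws an error, however: some u coordinates turn out to be negative. Clamping u to [0, 1] fixes this. The rest of the code is the same as in the Phong shader.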
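Why an out-of-range uv crashes, and why clamping fixes it, shows up in the uv-to-texel arithmetic. The helper below, uv_to_texel, is hypothetical (not part of the framework); it mirrors the mapping in Texture::getColor (column u * width, row (1 - v) * height) and adds clamping. Note that even inside [0, 1] the edges are a problem: u = 1 gives column width and v = 0 gives row height, both one past the last texel.

```cpp
#include <algorithm>
#include <cassert>

struct Texel { int row, col; };

// Map texture coordinates to an integer texel of a width x height image.
// v is flipped because image rows grow downward while v grows upward.
Texel uv_to_texel(float u, float v, int width, int height)
{
    assert(width > 0 && height > 0);
    u = std::clamp(u, 0.0f, 1.0f);  // negative u would give a negative column
    v = std::clamp(v, 0.0f, 1.0f);
    // Clamp to the last texel: u == 1 or v == 0 would otherwise index one past the end
    int col = std::min(static_cast<int>(u * width), width - 1);
    int row = std::min(static_cast<int>((1.0f - v) * height), height - 1);
    return {row, col};
}
```

For a 700 × 700 image, uv_to_texel(-0.2f, 0.5f, 700, 700) yields column 0, row 350 instead of a negative column.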

Eigen::Vector3f return_color = {0, 0, 0};

if (payload.texture)

{

// TODO: Get the texture value at the texture coordinates of the current fragment

// return_color = payload.texture->getColor( clamp(payload.tex_coords.x(),0.0f,1.0f), payload.tex_coords.y());

float u = payload.tex_coords.x();

float v = payload.tex_coords.y();

return_color = payload.texture->getColor(clamp(u,0.0f,1.0f), v);

}

Eigen::Vector3f texture_color;

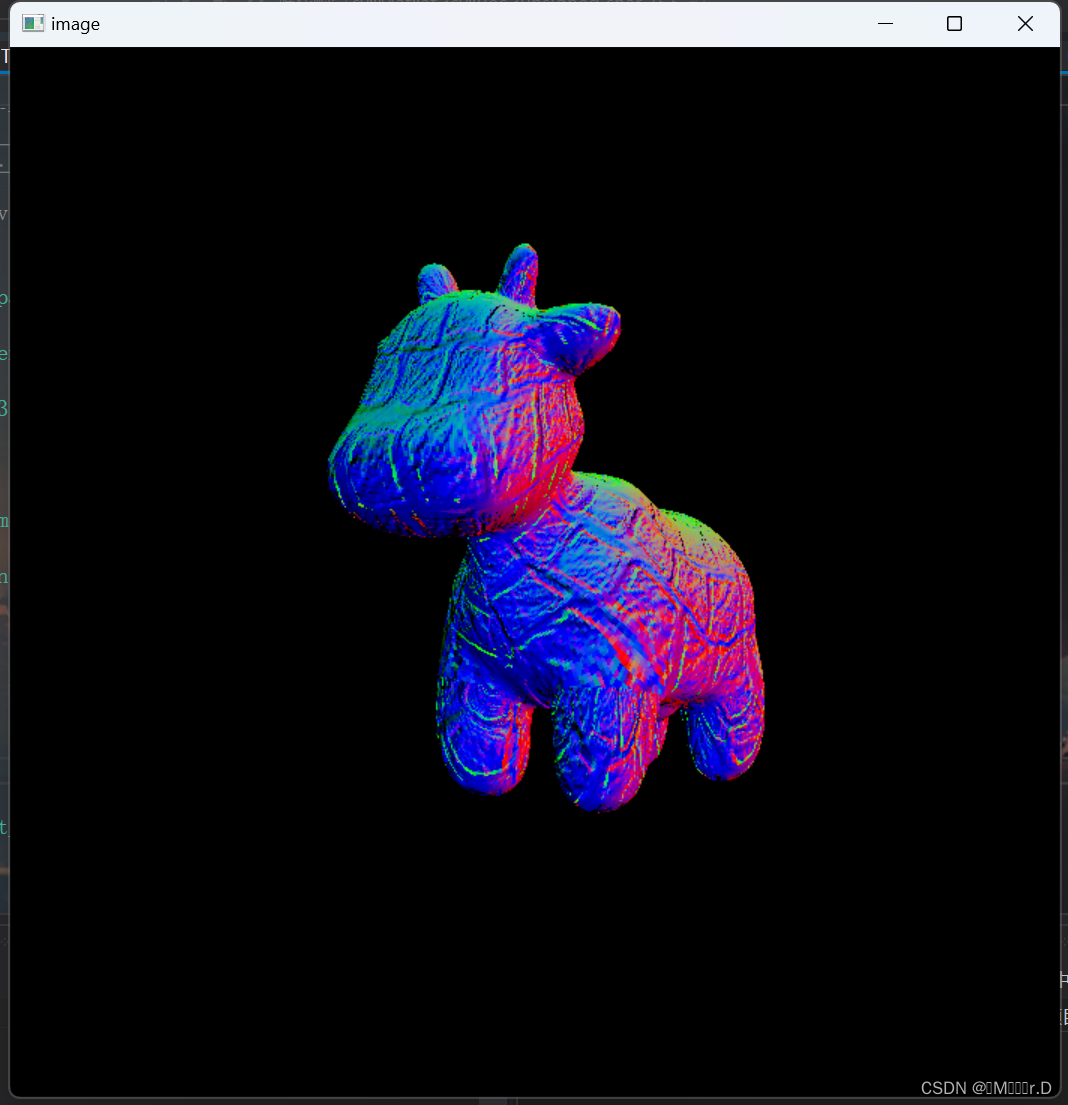

texture_color << return_color.x(), return_color.y(), return_color.z();5.修改函数 bump_fragment_shader() in main.cpp: 在实现 Blinn-Phong 的 基础上,仔细阅读该函数中的注释,实现 Bump mapping

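This part samples the height map around (u, v) to estimate its gradient, builds a perturbed normal in tangent space, and then transforms it with the TBN matrix back into the space of the interpolated normal (view space in this framework).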
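The TBN step can be sketched on its own. This standalone version (plain Vec3 struct instead of Eigen; perturb_normal is a hypothetical name) builds the tangent from n = (x, y, z) with s = sqrt(x² + z²), the bitangent b = n × t, and maps the tangent-space normal ln = (-dU, -dV, 1) back through TBN = [t b n]. One assumption differs from the framework comment: the tangent here is (-xy/s, s, -zy/s). With the comment's +xy/s and +zy/s, t·n works out to 2ys, so t is not perpendicular to n unless y = 0.

```cpp
#include <cassert>
#include <cmath>

struct Vec3 { float x, y, z; };

Vec3 cross(Vec3 a, Vec3 b) { return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x}; }
float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
Vec3 normalize(Vec3 a) { float len = std::sqrt(dot(a, a)); return {a.x / len, a.y / len, a.z / len}; }

// Perturb a unit normal n by the height-map gradient (dU, dV) as in bump mapping
Vec3 perturb_normal(Vec3 n, float dU, float dV)
{
    float s = std::sqrt(n.x * n.x + n.z * n.z);
    assert(s > 0.0f && "normal parallel to the y axis: this tangent formula is undefined");
    Vec3 t{-n.x * n.y / s, s, -n.z * n.y / s};  // unit tangent with dot(t, n) == 0
    Vec3 b = cross(n, t);                       // bitangent
    // TBN * ln = t * (-dU) + b * (-dV) + n * 1
    Vec3 r{-dU * t.x - dV * b.x + n.x,
           -dU * t.y - dV * b.y + n.y,
           -dU * t.z - dV * b.z + n.z};
    return normalize(r);
}
```

With a flat height map (dU = dV = 0) the normal comes back unchanged, which is a quick sanity check for any TBN construction.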

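An exception also occurred here. Debugging traced it to the image_data.at call inside getColor, a member function of the Texture class in texture.hpp: the two coordinates passed in exceeded the 700×700 pixel bounds of the image, causing an out-of-range access. So I added bounds checks at the start of that function: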

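```cpp
// In texture.hpp (std::clamp and std::min need <algorithm>)
Eigen::Vector3f getColor(float u, float v)
{
    // Clamp uv to [0, 1], then clamp the texel index to the last column/row:
    // u == 1 would give column == width and v == 0 would give row == height
    u = std::clamp(u, 0.0f, 1.0f);
    v = std::clamp(v, 0.0f, 1.0f);
    int u_img = std::min(static_cast<int>(u * width), width - 1);
    int v_img = std::min(static_cast<int>((1 - v) * height), height - 1);
    auto color = image_data.at<cv::Vec3b>(v_img, u_img);
    return Eigen::Vector3f(color[0], color[1], color[2]);
}
```

```cpp
Eigen::Vector3f bump_fragment_shader(const fragment_shader_payload& payload)
{
    Eigen::Vector3f normal = payload.normal;
    float kh = 0.2, kn = 0.1;

    // Build the TBN matrix from n = (x, y, z)
    Vector3f n = normal.normalized();
    float x = n.x(), y = n.y(), z = n.z();
    float s = sqrt(x * x + z * z);
    // Tangent perpendicular to n. With +x*y/s and +z*y/s (as in the framework comment),
    // t.dot(n) == 2*y*s, so the x and z terms are negated here.
    Vector3f t(-x * y / s, s, -z * y / s);
    Vector3f b = n.cross(t);
    Matrix3f TBN;
    TBN << t.x(), b.x(), n.x(),
           t.y(), b.y(), n.y(),
           t.z(), b.z(), n.z();

    float dU = 0, dV = 0;
    if (payload.texture)
    {
        // Read the texture size only after checking that a texture exists
        int w = payload.texture->width;
        int h = payload.texture->height;
        float u = payload.tex_coords.x();
        float v = payload.tex_coords.y();
        // Height h(u, v) is the norm of the texture color
        float h_uv = payload.texture->getColor(u, v).norm();
        dU = kh * kn * (payload.texture->getColor(u + 1.0f / w, v).norm() - h_uv);
        dV = kh * kn * (payload.texture->getColor(u, v + 1.0f / h).norm() - h_uv);
    }

    // Perturbed normal in tangent space, transformed back by TBN
    Vector3f ln(-dU, -dV, 1);
    normal = (TBN * ln).normalized();

    // The bump shader outputs the perturbed normal as the color
    Eigen::Vector3f result_color = normal;
    return result_color * 255.f;
}
```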

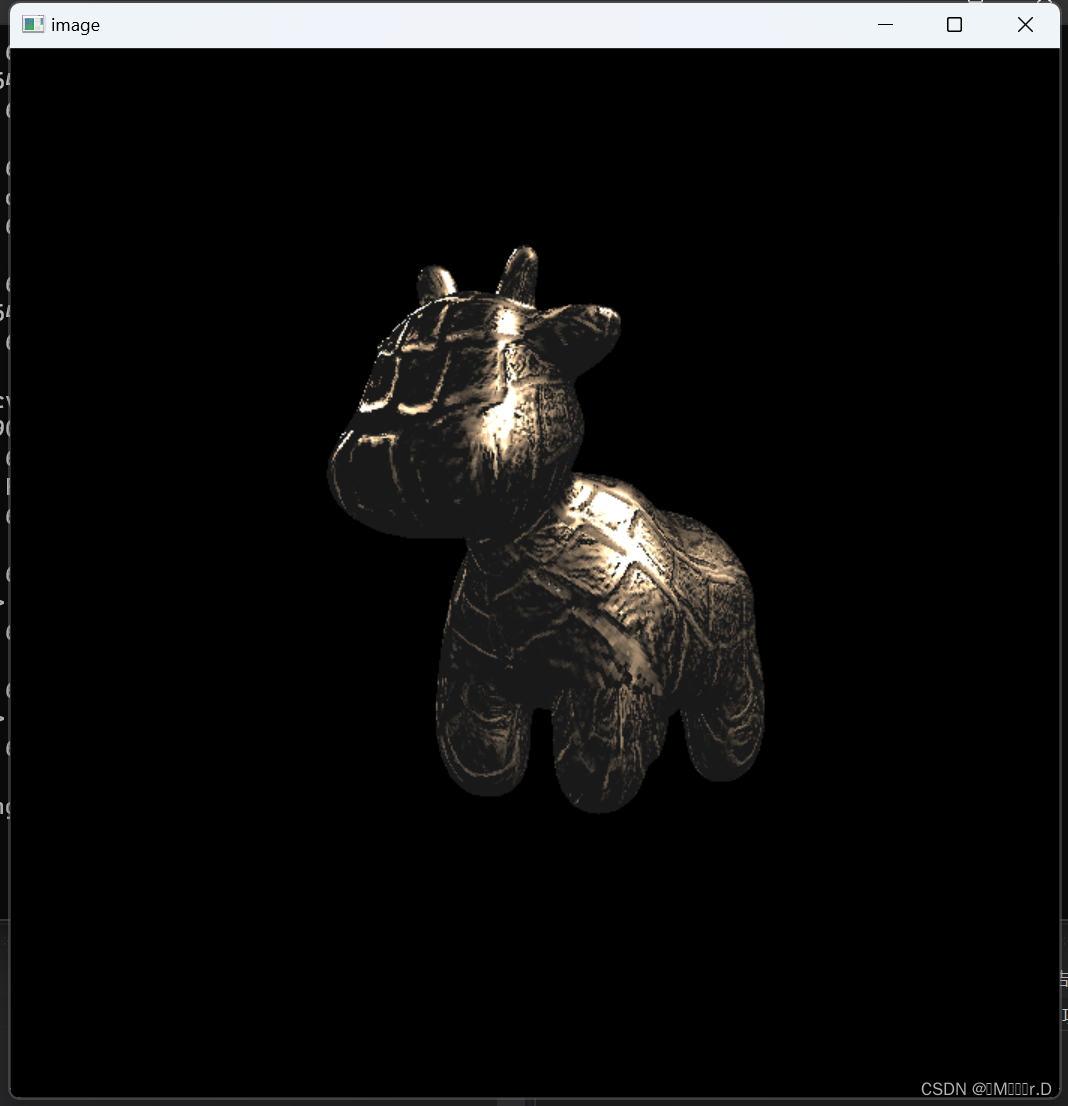

6. Modify the function displacement_fragment_shader() in main.cpp: building on bump mapping, implement displacement mapping.

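The difference here is that besides perturbing the normal, we also move the shading point itself along the normal by the sampled height, then recompute the lighting using the displaced point.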
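The two texture-driven quantities, the finite-difference gradient and the displaced point, follow directly from the formulas in the function's TODO comments: dU = kh·kn·(h(u+1/w, v) − h(u, v)), dV = kh·kn·(h(u, v+1/h) − h(u, v)), and p = p + kn·n·h(u, v). In this standalone sketch the callable height is a stand-in for texture->getColor(u, v).norm(), and displace and BumpSample are hypothetical names.

```cpp
#include <cassert>
#include <cmath>
#include <functional>

struct Vec3 { float x, y, z; };

struct BumpSample {
    float dU, dV;  // gradient used to build the tangent-space normal ln = (-dU, -dV, 1)
    Vec3 point;    // shading point moved along the normal
};

BumpSample displace(const std::function<float(float, float)>& height,
                    float u, float v, int w, int h, float kh, float kn,
                    Vec3 point, Vec3 n)
{
    assert(w > 0 && h > 0);
    float h0 = height(u, v);
    // One-texel forward differences in u and v, scaled by kh * kn
    float dU = kh * kn * (height(u + 1.0f / w, v) - h0);
    float dV = kh * kn * (height(u, v + 1.0f / h) - h0);
    // Displace the point along the (unperturbed) normal by kn * h(u, v)
    Vec3 moved{point.x + kn * n.x * h0, point.y + kn * n.y * h0, point.z + kn * n.z * h0};
    return {dU, dV, moved};
}
```

For a height field that ramps only in u, dV is zero and the point moves along n by kn times the local height; lighting is then evaluated at the moved point with the normal rebuilt from (dU, dV).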

```cpp
Eigen::Vector3f displacement_fragment_shader(const fragment_shader_payload& payload)
{
    Eigen::Vector3f ka = Eigen::Vector3f(0.005, 0.005, 0.005);
    Eigen::Vector3f kd = payload.color;
    Eigen::Vector3f ks = Eigen::Vector3f(0.7937, 0.7937, 0.7937);

    auto l1 = light{{20, 20, 20}, {500, 500, 500}};
    auto l2 = light{{-20, 20, 0}, {500, 500, 500}};
    std::vector<light> lights = {l1, l2};
    Eigen::Vector3f amb_light_intensity{10, 10, 10};
    Eigen::Vector3f eye_pos{0, 0, 10};
    float p = 150;

    Eigen::Vector3f color = payload.color;
    Eigen::Vector3f point = payload.view_pos;
    Eigen::Vector3f normal = payload.normal;

    float kh = 0.2, kn = 0.1;

    // TODO: Implement displacement mapping here
    // Let n = normal = (x, y, z)
    // Vector t = (x*y/sqrt(x*x+z*z),sqrt(x*x+z*z),z*y/sqrt(x*x+z*z))
    // Vector b = n cross product t
    // Matrix TBN = [t b n]
    // dU = kh * kn * (h(u+1/w,v)-h(u,v))
    // dV = kh * kn * (h(u,v+1/h)-h(u,v))
    // Vector ln = (-dU, -dV, 1)
    // Position p = p + kn * n * h(u,v)
    // Normal n = normalize(TBN * ln)

    Vector3f n = normal.normalized();
    float x = n.x(), y = n.y(), z = n.z();
    float s = sqrt(x * x + z * z);
    // x and z terms negated relative to the comment above so that t is perpendicular to n
    Vector3f t(-x * y / s, s, -z * y / s);
    Vector3f b = n.cross(t);
    Matrix3f TBN;
    TBN << t.x(), b.x(), n.x(),
           t.y(), b.y(), n.y(),
           t.z(), b.z(), n.z();

    float dU = 0, dV = 0;
    if (payload.texture)
    {
        int w = payload.texture->width;
        int h = payload.texture->height;
        float u = payload.tex_coords.x();
        float v = payload.tex_coords.y();
        float h_uv = payload.texture->getColor(u, v).norm();
        dU = kh * kn * (payload.texture->getColor(u + 1.0f / w, v).norm() - h_uv);
        dV = kh * kn * (payload.texture->getColor(u, v + 1.0f / h).norm() - h_uv);
        // Move the shading point along the original normal by the sampled height
        point += kn * n * h_uv;
    }
    Vector3f ln(-dU, -dV, 1);
    normal = (TBN * ln).normalized();

    Eigen::Vector3f result_color = {0, 0, 0};
    for (auto& light : lights)
    {
        // TODO: For each light source in the code, calculate what the *ambient*, *diffuse*, and *specular*
        // components are. Then, accumulate that result on the *result_color* object.
        // Lighting uses the displaced point and the perturbed normal.
        Vector3f l = light.position - point;
        Vector3f view_dir = eye_pos - point;
        Vector3f half_vec = l.normalized() + view_dir.normalized();
        float r_square = l.dot(l);
        float ndotl = normal.dot(l.normalized());
        float ndoth = normal.dot(half_vec.normalized());

        Vector3f ambient = ka.cwiseProduct(amb_light_intensity);
        Vector3f diffuse = kd.cwiseProduct(light.intensity / r_square) * std::max(0.0f, ndotl);
        Vector3f specular = ks.cwiseProduct(light.intensity / r_square) * std::pow(std::max(0.0f, ndoth), p);
        result_color += ambient + diffuse + specular;
    }
    return result_color * 255.f;
}
```

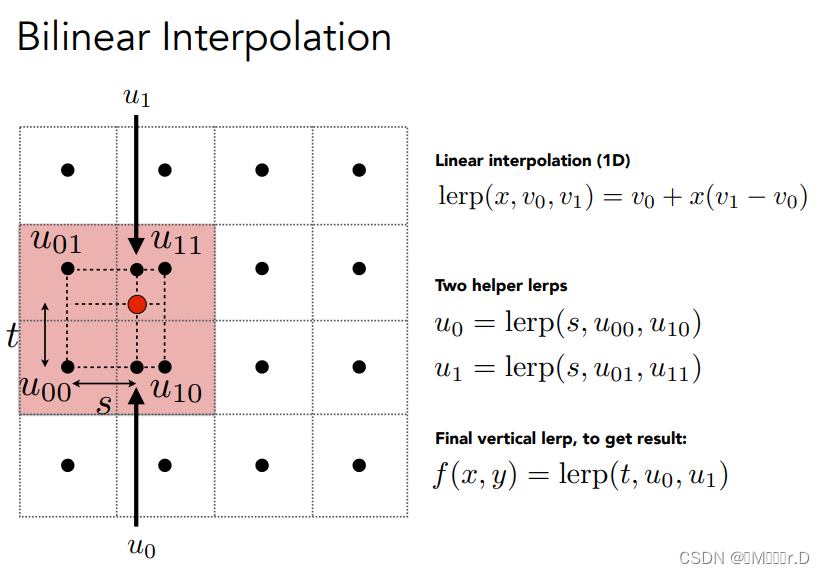

7. Implement bilinear texture interpolation

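Bilinear interpolation takes two steps:

- find the 4 texels nearest to the target point;

- compute the interpolation weights from the distances between the target point and the centers of those 4 texels.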
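The two steps can be tried on a tiny single-channel image before touching OpenCV. In this standalone sketch, sample_bilinear is a hypothetical helper; it assumes the same conventions as getColor (texel (col, row) has its center at (col + 0.5, row + 0.5) in image coordinates, and v is flipped) and clamps texel indices at the image border.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Bilinear sample of a row-major single-channel image (row 0 at the top)
float sample_bilinear(const std::vector<float>& img, int width, int height, float u, float v)
{
    assert(width > 0 && height > 0 && img.size() == static_cast<std::size_t>(width) * height);
    // Continuous image coordinates, shifted by half a texel so texel centers are integers
    float x = std::clamp(u, 0.0f, 1.0f) * width - 0.5f;
    float y = (1.0f - std::clamp(v, 0.0f, 1.0f)) * height - 0.5f;

    // Step 1: the four nearest texels are (x0, y0), (x0 + 1, y0), (x0, y0 + 1), (x0 + 1, y0 + 1)
    int x0 = static_cast<int>(std::floor(x));
    int y0 = static_cast<int>(std::floor(y));
    auto at = [&](int col, int row) {
        col = std::clamp(col, 0, width - 1);  // clamp at the border
        row = std::clamp(row, 0, height - 1);
        return img[static_cast<std::size_t>(row) * width + col];
    };

    // Step 2: weights are the fractional distances from the (x0, y0) texel center
    float s = x - x0, t = y - y0;
    float top    = at(x0, y0)     + (at(x0 + 1, y0)     - at(x0, y0))     * s;
    float bottom = at(x0, y0 + 1) + (at(x0 + 1, y0 + 1) - at(x0, y0 + 1)) * s;
    return top + (bottom - top) * t;
}
```

On the 2 × 2 image {0, 10, 20, 30}, sampling at a texel center returns that texel's value, and sampling at the image center (u = v = 0.5) averages all four to 15.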

The principle is illustrated in the figure below.

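To find the four surrounding texels, first round the sample position to the nearest integer coordinate; from it, work out the coordinates of the four surrounding texels, sample them, and interpolate. The main code is as follows: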

```cpp
// In texture.hpp (std::clamp needs <algorithm>, std::round needs <cmath>)
// Linear interpolation in float, avoiding the truncation of doing it in cv::Vec3b
inline Eigen::Vector3f lerp(const Eigen::Vector3f& color0, const Eigen::Vector3f& color1, float t)
{
    return color0 + (color1 - color0) * t;
}

Eigen::Vector3f getColorBilinear(float u, float v)
{
    u = std::clamp(u, 0.0f, 1.0f);
    v = std::clamp(v, 0.0f, 1.0f);

    // Continuous image coordinates; texel i has its center at i + 0.5
    float u_img = u * width;
    float v_img = (1 - v) * height;

    // The nearest integer coordinate is the boundary between the two bracketing texel centers
    int u_center = static_cast<int>(std::round(u_img));
    int v_center = static_cast<int>(std::round(v_img));

    // The four surrounding texels are u_center - 1 / u_center and v_center - 1 / v_center,
    // clamped to valid indices (height for rows, width for columns)
    int u0 = std::clamp(u_center - 1, 0, width - 1);
    int u1 = std::clamp(u_center, 0, width - 1);
    int v0 = std::clamp(v_center - 1, 0, height - 1);
    int v1 = std::clamp(v_center, 0, height - 1);

    auto texel = [&](int row, int col) {
        cv::Vec3b c = image_data.at<cv::Vec3b>(row, col);
        return Eigen::Vector3f(c[0], c[1], c[2]);
    };

    // Fractional offsets from the center of texel (u0, v0), both in [0, 1]
    float s = u_img - (u_center - 0.5f);
    float t = v_img - (v_center - 0.5f);

    // Interpolate along v in each column, then along u
    Eigen::Vector3f left = lerp(texel(v0, u0), texel(v1, u0), t);
    Eigen::Vector3f right = lerp(texel(v0, u1), texel(v1, u1), t);
    return lerp(left, right, s);
}
```
