Stochastic gradient descent:

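```python
import random

import numpy as np


def gen_line_data(sample_num=100):
    # Three evenly spaced features; note that x2 = x1 + 1 and x3 = x1 + 2
    x1 = np.linspace(0, 9, sample_num)
    x2 = np.linspace(1, 10, sample_num)
    x3 = np.linspace(2, 11, sample_num)
    # Stack into a (sample_num, 3) matrix, one row per sample
    x = np.concatenate(([x1], [x2], [x3]), axis=0).T
    # Noise-free targets: y = 3*x1 + 4*x2 + 5*x3
    y = np.dot(x, np.array([3, 4, 5]))
    return x, y
```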
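The generated data is noise-free but collinear: `x2 = x1 + 1` and `x3 = x1 + 2`. The feature matrix therefore has rank 2, and `[3, 4, 5]` is not the only weight vector that fits exactly. The quick check below confirms this. The names `null_dir` and `w_alt` are illustrative. The direction `[1, -2, 1]` follows from x1 − 2·x2 + x3 = 0.

```python
import numpy as np

# Rebuild the post's feature matrix (sample_num = 100)
n = 100
x = np.stack([np.linspace(0, 9, n), np.linspace(1, 10, n), np.linspace(2, 11, n)], axis=1)
y = x @ np.array([3.0, 4.0, 5.0])

# The three columns span only a 2-D space
rank = np.linalg.matrix_rank(x)
print("rank:", rank)  # rank: 2

# Illustrative null direction: x1 - 2*x2 + x3 = 0 for every row
null_dir = np.array([1.0, -2.0, 1.0])
print(np.allclose(x @ null_dir, 0))  # True

# Shifting the true weights along null_dir leaves every prediction unchanged
w_alt = np.array([3.0, 4.0, 5.0]) + null_dir  # [4, 2, 6]
print(np.allclose(x @ w_alt, y))  # True
```

Every gradient update is a combination of sample rows, and every row is orthogonal to `[1, -2, 1]`. Updates therefore never change the component of `w` along that direction. Which exact solution is approached depends on the starting point. Here both `np.ones(3)` and `[3, 4, 5]` have zero component along it, so the iterates head toward `[3, 4, 5]` itself.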

# 随机梯度下降

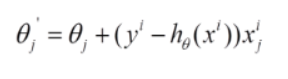
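In symbols, let N = `sample_num`, d = `dim`, and x_i = `samples[i]`. The quantity that `sgd` prints as the loss is the first expression below. The second is the textbook per-sample SGD step for squared error, with the gradient's constant factor 2 folded into the learning rate. In the code, `step_size / sample_num` plays the role of η.

$$
L(w) = \frac{1}{N d} \sum_{i=1}^{N} \left( w^\top x_i - y_i \right)^2,
\qquad
w \leftarrow w + \eta \left( y_i - w^\top x_i \right) x_i
$$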

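```python
def sgd(samples, y, step_size=0.01, max_iter_count=10000):
    """Per-sample stochastic gradient descent for linear least squares."""
    sample_num, dim = samples.shape
    y = y.flatten()
    w = np.ones((dim,), dtype=np.float64)  # initial weights
    loss = 10  # any value above the stopping threshold
    iter_count = 0

    while loss > 0.00001 and iter_count < max_iter_count:
        loss = 0

        # One epoch: visit the samples in a random order, updating w after each one
        order = list(range(sample_num))
        random.shuffle(order)
        for i in order:
            predict_y = np.dot(w, samples[i])
            # Gradient of this single sample's squared error (up to a factor of 2),
            # computed fresh for each sample instead of accumulated across samples
            error = (y[i] - predict_y) * samples[i]
            # Dividing by sample_num shrinks the effective learning rate,
            # which keeps each per-sample step stable for feature values up to 11
            w += step_size * error / sample_num

        # Mean squared error over all samples, additionally divided by dim
        for i in range(sample_num):
            predict_y = np.dot(w, samples[i])
            loss += (1 / (sample_num * dim)) * (predict_y - y[i]) ** 2

        print("iteration:", iter_count, "loss:", loss)
        iter_count += 1

    return w


if __name__ == '__main__':
    samples, y = gen_line_data()
    w = sgd(samples, y)
    print(w)
```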
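For comparison, here is a compact sketch of plain per-sample SGD on the same data. It uses NumPy vector updates instead of an inner loop over coordinates. Several choices are illustrative and not from the post: the name `sgd_vectorized`, the learning rate `lr=1e-4` (chosen to equal 0.01 / 100, the post's `step_size / sample_num`), the seeded `np.random.default_rng` shuffling, and the plain mean-squared-error stopping test. The block repeats `gen_line_data` so it runs on its own.

```python
import numpy as np


def gen_line_data(sample_num=100):
    # Same synthetic data as in the post: y = 3*x1 + 4*x2 + 5*x3, no noise
    x1 = np.linspace(0, 9, sample_num)
    x2 = np.linspace(1, 10, sample_num)
    x3 = np.linspace(2, 11, sample_num)
    x = np.stack([x1, x2, x3], axis=1)
    return x, x @ np.array([3.0, 4.0, 5.0])


def sgd_vectorized(X, y, lr=1e-4, max_epochs=10000, tol=1e-5, seed=0):
    """Per-sample SGD for least squares; returns (weights, final MSE, epochs run)."""
    rng = np.random.default_rng(seed)
    n, d = X.shape
    w = np.ones(d)
    mse = np.inf
    epoch = 0
    for epoch in range(1, max_epochs + 1):
        # Visit every sample once, in a fresh random order each epoch
        for i in rng.permutation(n):
            residual = y[i] - X[i] @ w
            # Step against the gradient of (y_i - w.x_i)^2 (factor 2 folded into lr)
            w += lr * residual * X[i]
        mse = np.mean((X @ w - y) ** 2)
        if mse < tol:
            break
    return w, mse, epoch


if __name__ == "__main__":
    X, y = gen_line_data()
    w, mse, epochs = sgd_vectorized(X, y)
    print("epochs:", epochs, "mse:", mse)
    print("w:", w)
```

Drawing a new permutation each epoch is the usual way to make full passes stochastic. Because `x2 = x1 + 1` and `x3 = x1 + 2`, the features are collinear, so checking `X @ w` against `y` is a more robust test of the fit than comparing `w` with `[3, 4, 5]`.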
