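A Simple Demo of Image Compression and Reconstruction with an Auto Encoder-Decoder

Plus: visualizing the training process with TensorBoard

1. Introduction

A simple demo that compresses and reconstructs images with an autoencoder (encoder plus decoder).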

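2. How It Works

Encoder function: takes the 28×28 image, flattened into a 784-dimensional vector, and compresses it step by step through layers of 256, 64 and 16 units down to a 2-dimensional latent vector

Encoder input: a 28×28 grayscale image (an MNIST digit in the code below), flattened to 784 values

Encoder output: a 2-dimensional latent vector

Decoder function: restores the received latent vector to the original 28×28 size

Decoder input: the 2-dimensional latent vector

Decoder output: a 784-dimensional vector that reshapes to a 28×28 image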
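To make these dimensions concrete, here is a minimal sketch that walks the layer widths used in the code of Section 3 (784 → 256 → 64 → 16 → 2 for the encoder, mirrored for the decoder) and counts the trainable parameters of each stack. The helper name `dense_param_count` is made up for this illustration, and it assumes every layer is a plain fully connected layer with a bias term.

```python
def dense_param_count(widths):
    """Count weights and biases of a stack of fully connected layers.

    widths lists the input size followed by each layer's output size.
    """
    total = 0
    for n_in, n_out in zip(widths, widths[1:]):
        total += n_in * n_out + n_out  # weight matrix plus bias vector
    return total


# Layer widths as read from the Keras code in Section 3 (input is 28 * 28 = 784).
ENCODER_WIDTHS = [28 * 28, 256, 64, 16, 2]
DECODER_WIDTHS = [2, 16, 64, 256, 28 * 28]

encoder_params = dense_param_count(ENCODER_WIDTHS)
decoder_params = dense_param_count(DECODER_WIDTHS)
# How many input values are squeezed into each latent value.
compression_ratio = ENCODER_WIDTHS[0] / ENCODER_WIDTHS[-1]

print("encoder parameters:", encoder_params)
print("decoder parameters:", decoder_params)
print("values per image: %d -> %d" % (ENCODER_WIDTHS[0], ENCODER_WIDTHS[-1]))
```

Each image is therefore reduced from 784 values to 2, which is a very aggressive bottleneck; a wider latent vector would be the first thing to try if sharper reconstructions are needed.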

3. The Code

import os
import random

import matplotlib.pyplot as plt
import numpy as np
from PIL import Image
# Use the public tensorflow.keras API rather than the private tensorflow.python package
from tensorflow import keras
from tensorflow.keras.callbacks import TensorBoard
from tensorflow.keras.layers import Dense

# Load the data
def get_data(data_dir):
    datas = []
    labels = []
    # One sub-folder per digit class: data_dir/0 ... data_dir/9
    for i in range(10):
        folder_dir = os.path.join(data_dir, str(i))
        file_list = os.listdir(folder_dir)
        for file in file_list:
            files = file.split(".")
            if files[-1] == "png":
                image = np.array(Image.open(os.path.join(folder_dir, file)))
                image = image.flatten()  # flatten the 28x28 image into a 784-dimensional vector
                image = image / 255.  # scale pixel values to [0, 1]
                datas.append(image)
                labels.append(i)
    datas = np.array(datas)
    labels = np.array(labels)
    return datas, labels

mnist_train_data = "./mnist/training"
x_train, y_train = get_data(mnist_train_data)
print("datas shape:", x_train.shape)
print("labels shape:", y_train.shape)
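`get_data` expects one sub-folder per digit (`0` to `9`), each holding PNG files. A quick look at that layout before loading can catch a wrong path early. The sketch below uses only the standard library; `count_pngs_per_class` is a hypothetical helper, not part of the original code.

```python
import os


def count_pngs_per_class(data_dir, num_classes=10):
    """Return {class_index: number of .png files} for data_dir/0 ... data_dir/9.

    A missing class folder is reported as 0 instead of raising an error.
    """
    counts = {}
    for i in range(num_classes):
        folder_dir = os.path.join(data_dir, str(i))
        if not os.path.isdir(folder_dir):
            counts[i] = 0
            continue
        counts[i] = sum(
            1 for name in os.listdir(folder_dir) if name.endswith(".png")
        )
    return counts
```

Calling `count_pngs_per_class("./mnist/training")` and looking for classes with a count of 0 shows at a glance whether the folder structure matches what the loader assumes.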

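# encoder: 784 -> 256 -> 64 -> 16 -> 2
encoder = keras.Sequential([
    Dense(256, activation='relu'),
    Dense(64, activation='relu'),
    Dense(16, activation='relu'),
    Dense(2)
])

# decoder: 2 -> 16 -> 64 -> 256 -> 784
decoder = keras.Sequential([
    Dense(16, activation='relu'),
    Dense(64, activation='relu'),
    Dense(256, activation='relu'),
    Dense(784, activation='sigmoid')  # sigmoid keeps outputs in [0, 1], like the scaled pixels
])

# autoencoder: encoder followed by decoder
# (named autoencoder so that it matches the compile/fit/predict calls that use it)
autoencoder = keras.Sequential([
    encoder,
    decoder
])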

# Define a TensorBoard callback
tb = TensorBoard(log_dir='./logs', histogram_freq=1, write_graph=True, write_images=True)

# Train the network (the input is also the target)
autoencoder.compile(optimizer='adam', loss=keras.losses.binary_crossentropy)
autoencoder.fit(x_train, x_train, epochs=30, batch_size=256, callbacks=[tb])
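The loss passed to `compile` is binary cross-entropy, which suits this setup because the pixel values were scaled to [0, 1] and the decoder ends in a sigmoid. As a rough illustration of what gets averaged over the pixels, here is a pure-Python sketch. The function below and its clipping constant `eps` are choices made for this example, not the Keras implementation.

```python
import math


def binary_crossentropy(target, pred, eps=1e-7):
    """Mean binary cross-entropy between two equal-length pixel sequences."""
    if len(target) != len(pred) or len(target) == 0:
        raise ValueError("target and pred must be non-empty and equally long")
    total = 0.0
    for t, p in zip(target, pred):
        p = min(max(p, eps), 1.0 - eps)  # keep log() away from 0
        total += -(t * math.log(p) + (1.0 - t) * math.log(1.0 - p))
    return total / len(target)


# A close reconstruction should score lower than a poor one.
original = [0.0, 1.0, 1.0, 0.0]
good = [0.1, 0.9, 0.8, 0.2]
poor = [0.6, 0.4, 0.5, 0.7]
print("good:", binary_crossentropy(original, good))
print("poor:", binary_crossentropy(original, poor))
```

The lower the value, the closer the reconstruction is to the input, which is the quantity the optimizer drives down during `fit`.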

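# Use the trained network
is_reconstruct = True
if is_reconstruct:
    # Image reconstruction
    examples_to_show = 10
    indices = random.sample(range(len(x_train)), examples_to_show)
    rec = autoencoder.predict(x_train[indices])
    f, a = plt.subplots(2, examples_to_show, figsize=(10, 2))
    for i in range(examples_to_show):
        a[0][i].imshow(np.reshape(x_train[indices[i]], (28, 28)))  # top row: originals
        a[1][i].imshow(np.reshape(rec[i], (28, 28)))  # bottom row: reconstructions
    plt.show()
else:
    # Classification: scatter plot of the 2-D latent codes, colored by digit label.
    # get_data loads the digits folder by folder, so the first 1000 samples would
    # all belong to class 0; sample random indices instead.
    batch_indices = random.sample(range(len(x_train)), 1000)
    batch_x = x_train[batch_indices]
    batch_y = y_train[batch_indices]
    encoded = encoder.predict(batch_x)
    plt.scatter(encoded[:, 0], encoded[:, 1], c=batch_y)
    plt.show()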

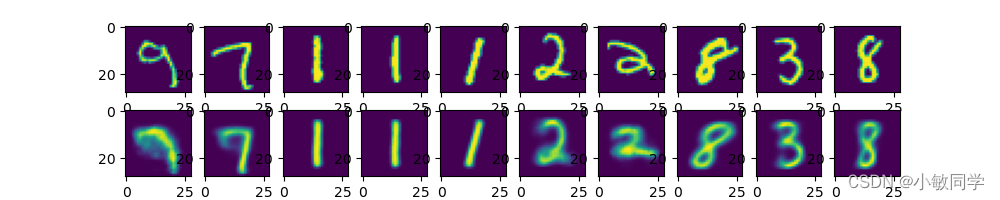

4. Results

As the figure shows, 30 epochs of training are enough for the network to compress and reconstruct the images well.
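Visual inspection can be backed by a number. One common choice is the mean squared error between an original and its reconstruction, or the PSNR derived from it. The sketch below is not part of the original demo; it assumes both images are flat sequences of pixel values in [0, 1], as produced by `get_data` and by the sigmoid output layer.

```python
import math


def mse(original, reconstructed):
    """Mean squared error between two equal-length pixel sequences."""
    if len(original) != len(reconstructed) or len(original) == 0:
        raise ValueError("inputs must be non-empty and equally long")
    squared = sum((o - r) ** 2 for o, r in zip(original, reconstructed))
    return squared / len(original)


def psnr(original, reconstructed, max_value=1.0):
    """Peak signal-to-noise ratio in dB; higher means closer to the original."""
    error = mse(original, reconstructed)
    if error == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / error)
```

With the arrays from the reconstruction branch above, a call such as `psnr(x_train[indices[i]], rec[i])` would give one score per displayed pair.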

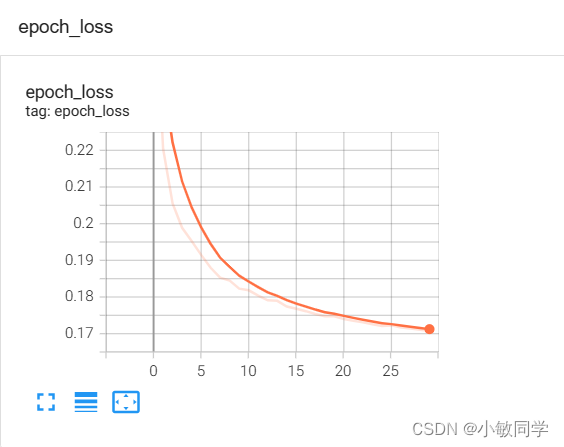

5. Extra: TensorBoard

TensorBoard can be used to visualize the loss while the network trains.

The code added for this is:

from tensorflow.keras.callbacks import TensorBoard

# Define a TensorBoard callback
tb = TensorBoard(log_dir='./logs', histogram_freq=1, write_graph=True, write_images=True)

# Pass the TensorBoard callback to fit() during training
autoencoder.fit(x_train, x_train, epochs=30, batch_size=256, callbacks=[tb])

Then run the following on the command line:

tensorboard --logdir=./logs
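When training is run several times, writing every run into the same `./logs` folder mixes their curves together. A common workaround is one sub-folder per run, which TensorBoard then lists as separate runs. A minimal sketch, assuming a timestamp-based naming scheme; `make_run_log_dir` is a hypothetical helper, not part of the original code:

```python
import os
from datetime import datetime


def make_run_log_dir(base_dir="./logs", now=None):
    """Create and return a per-run log folder named after the start time."""
    now = now or datetime.now()
    run_dir = os.path.join(base_dir, now.strftime("%Y%m%d-%H%M%S"))
    os.makedirs(run_dir, exist_ok=True)
    return run_dir
```

The returned path can be passed as `log_dir` when constructing the `TensorBoard` callback, while the `tensorboard --logdir=./logs` command stays the same.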

TensorBoard then shows the training-loss curve in the browser (by default at http://localhost:6006).

6. Source Code Link

To be added later.
