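Some APIs from older TensorFlow versions no longer work, so this post provides an updated visualization template for version 1.0.

The article uses a single neural network as its running example and presents two versions of it: the first displays the structure of the network, and the second displays the loss and the weights over the training iterations.

About the example:

The example follows Morvan Zhou's (莫烦) TensorFlow tutorial, in which a neural network performs a nonlinear fit. The original code is as follows.

Base neural network code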

    import tensorflow as tf
    import numpy as np

    def add_layer(inputs, in_size, out_size, activation_function=None):
        # add one more layer and return the output of this layer
        Weights = tf.Variable(tf.random_normal([in_size, out_size]))
        biases = tf.Variable(tf.zeros([1, out_size]) + 0.1)
        Wx_plus_b = tf.add(tf.matmul(inputs, Weights), biases)
        if activation_function is None:
            outputs = Wx_plus_b
        else:
            outputs = activation_function(Wx_plus_b, )
        return outputs

    # Make up some real data: y = x^2 - 0.5 plus Gaussian noise
    x_data = np.linspace(-1,1,300)[:, np.newaxis]
    noise = np.random.normal(0, 0.05, x_data.shape)
    y_data = np.square(x_data) - 0.5 + noise

    # define placeholder for inputs to network
    xs = tf.placeholder(tf.float32, [None, 1])
    ys = tf.placeholder(tf.float32, [None, 1])

    # add hidden layer
    l1 = add_layer(xs, 1, 10, activation_function=tf.nn.relu)

    # add output layer
    prediction = add_layer(l1, 10, 1, activation_function=None)

    # the error between prediction and real data
    loss = tf.reduce_mean(tf.reduce_sum(tf.square(ys - prediction),
                                        reduction_indices=[1]))
    train_step = tf.train.GradientDescentOptimizer(0.1).minimize(loss)

    sess = tf.Session()
    sess.run(tf.global_variables_initializer())

    for i in range(1000):
        # training
        sess.run(train_step, feed_dict={xs: x_data, ys: y_data})
        if i % 50 == 0:
            # to see the step improvement
            print(sess.run(loss, feed_dict={xs: x_data, ys: y_data}))

Output:

    0.228757
    0.0130992
    0.00904156
    0.00689739
    0.00567161
    0.00500242
    0.00462556
    0.00434303
    0.00409423
    0.00388352
    0.00370815
    0.00357471
    0.00347333
    0.00339316
    0.00331399
    0.00323986
    0.00317976
    0.0031317
    0.00309585
    0.00306742

Displaying the network structure

Next, we modify the code above to add visualization. The code is as follows:

    import tensorflow as tf
    import numpy as np

    def add_layer(inputs, in_size, out_size, activation_function=None):
        # add one more layer and return the output of this layer
        with tf.name_scope('layer'):
            Weights = tf.Variable(tf.random_normal([in_size, out_size]))
            biases = tf.Variable(tf.zeros([1, out_size]) + 0.1)
            Wx_plus_b = tf.add(tf.matmul(inputs, Weights), biases)
            if activation_function is None:
                outputs = Wx_plus_b
            else:
                outputs = activation_function(Wx_plus_b, )
            return outputs

    # Make up some real data
    # (left commented out: this version only writes the graph and does not train)
    #x_data = np.linspace(-1,1,300)[:, np.newaxis]
    #noise = np.random.normal(0, 0.05, x_data.shape)
    #y_data = np.square(x_data) - 0.5 + noise

    # define placeholder for inputs to network
    with tf.name_scope('inputs'):
        xs = tf.placeholder(tf.float32, [None, 1],name='input_x')
        ys = tf.placeholder(tf.float32, [None, 1],name='input_y')

    # add hidden layer
    l1 = add_layer(xs, 1, 10, activation_function=tf.nn.relu)

    # add output layer
    prediction = add_layer(l1, 10, 1, activation_function=None)

    # the error between prediction and real data
    with tf.name_scope('loss'):
        loss = tf.reduce_mean(tf.reduce_sum(tf.square(ys - prediction),
                                            reduction_indices=[1]))

    with tf.name_scope('train'):
        train_step = tf.train.GradientDescentOptimizer(0.1).minimize(loss)

    sess = tf.Session()

    # save the logs
    writer = tf.summary.FileWriter("logs/", sess.graph)

    sess.run(tf.global_variables_initializer())

到运行python的所在目录下,打一下命令:

$ tensorboard --logdir='logs/'

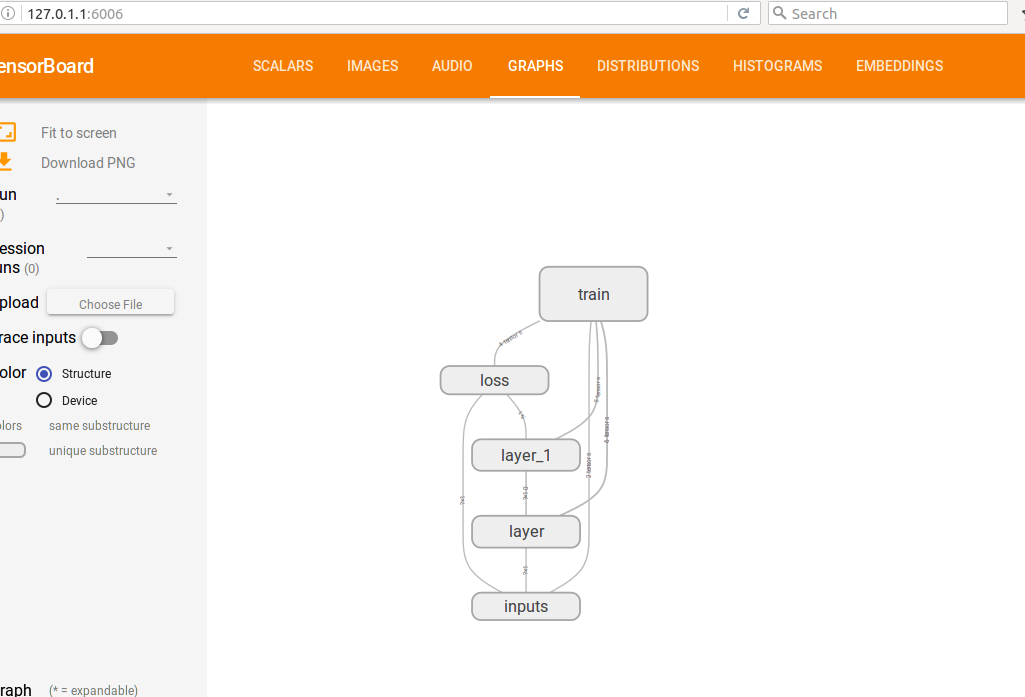

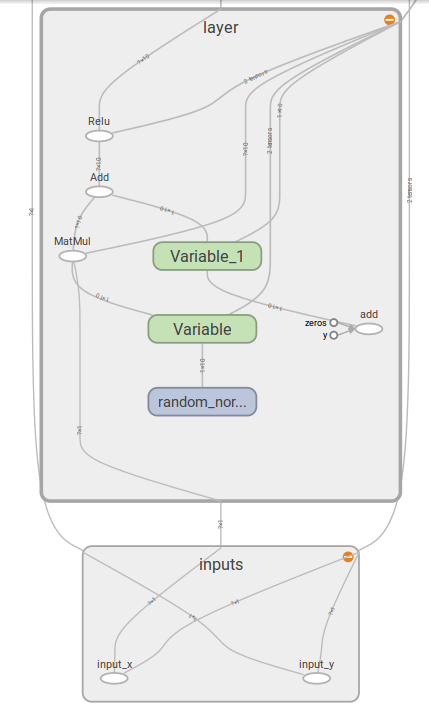

再在网页中输入链接:127.0.1.1:6006 即可获得展示:
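If the page comes up empty, a common cause is that TensorBoard was pointed at a directory with no event files. The sketch below is one way to check this before launching TensorBoard. It assumes the `logs/` directory used above and the `events.out.tfevents.*` file-name prefix that `tf.summary.FileWriter` gives its output; the helper name `find_event_files` is hypothetical and not part of TensorFlow.

```python
import glob
import os


def find_event_files(logdir="logs/"):
    """Return the sorted paths of event files directly inside logdir.

    Hypothetical helper: it only matches the file-name prefix and does not
    parse or validate the files.
    """
    pattern = os.path.join(logdir, "events.out.tfevents.*")
    return sorted(glob.glob(pattern))


if __name__ == "__main__":
    files = find_event_files("logs/")
    if files:
        for path in files:
            # A non-trivial size suggests the graph was actually written.
            print(path, os.path.getsize(path), "bytes")
    else:
        print("No event files in logs/; run the script that creates the FileWriter first.")
```

Run it from the same directory as the training script, so that the relative path `logs/` resolves to the directory the writer used.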

Visualizing the loss and weights over iterations

The code is as follows:

    import tensorflow as tf
    import numpy as np

    def add_layer(inputs, in_size, out_size, n_layer, activation_function=None):
        # add one more layer and return the output of this layer
        layer_name = 'layer%s' % n_layer
        with tf.name_scope(layer_name):
            with tf.name_scope('weights'):
                Weights = tf.Variable(tf.random_normal([in_size, out_size]), name='W')
                # record the distribution of the weights
                tf.summary.histogram(layer_name + '/weights', Weights)
            with tf.name_scope('biases'):
                biases = tf.Variable(tf.zeros([1, out_size]) + 0.1, name='b')
                # record the distribution of the biases
                tf.summary.histogram(layer_name + '/biases', biases)
            with tf.name_scope('Wx_plus_b'):
                Wx_plus_b = tf.add(tf.matmul(inputs, Weights), biases)
            if activation_function is None:
                outputs = Wx_plus_b
            else:
                outputs = activation_function(Wx_plus_b, )
            # record the distribution of the layer outputs
            tf.summary.histogram(layer_name + '/outputs', outputs)
        return outputs

    # Make up some real data
    x_data = np.linspace(-1,1,300)[:, np.newaxis]
    noise = np.random.normal(0, 0.05, x_data.shape)
    y_data = np.square(x_data) - 0.5 + noise

    # define placeholder for inputs to network
    with tf.name_scope('inputs'):
        xs = tf.placeholder(tf.float32, [None, 1],name='input_x')
        ys = tf.placeholder(tf.float32, [None, 1],name='input_y')

    # add hidden layer
    l1 = add_layer(xs, 1, 10, n_layer=1, activation_function=tf.nn.relu)

    # add output layer
    prediction = add_layer(l1, 10, 1, n_layer=2, activation_function=None)

    # the error between prediction and real data
    with tf.name_scope('loss'):
        loss = tf.reduce_mean(tf.reduce_sum(tf.square(ys - prediction),
                                            reduction_indices=[1]))
        # record the loss as a scalar curve
        tf.summary.scalar('loss', loss)

    with tf.name_scope('train'):
        train_step = tf.train.GradientDescentOptimizer(0.1).minimize(loss)

    sess = tf.Session()

    # merge all summaries into a single op
    merged = tf.summary.merge_all()

    # save the logs
    writer = tf.summary.FileWriter("logs/", sess.graph)

    sess.run(tf.global_variables_initializer())

    for i in range(1000):
        # training
        sess.run(train_step, feed_dict={xs: x_data, ys: y_data})
        if i % 50 == 0:
            # to see the step improvement
            result = sess.run(merged,
                              feed_dict={xs: x_data, ys: y_data})
            writer.add_summary(result, i)

Run the code above.

Then run the following command:

    $ tensorboard --logdir='logs/'

Then open 127.0.1.1:6006 in a browser to view the results.
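When reading the resulting curves, it helps to know how many points to expect on the horizontal axis. The training loop calls `writer.add_summary(result, i)` only when `i % 50 == 0`, so each curve and histogram series has one entry per 50 training steps. The small sketch below reproduces that schedule in plain Python; the function name `logged_steps` is hypothetical and only mirrors the loop condition, it does not touch TensorFlow.

```python
def logged_steps(total_steps=1000, every=50):
    """Return the step indices at which the training loop writes a summary.

    Mirrors `for i in range(total_steps): if i % every == 0: ...`.
    """
    return [i for i in range(total_steps) if i % every == 0]


steps = logged_steps()
print(len(steps), steps[0], steps[-1])
```

With the values used in this post, the schedule starts at step 0 and ends at step 950, for 20 points in total, the same number of loss values that the base version prints.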

References:

https://morvanzhou.github.io/tutorials/machine-learning/tensorflow/4-1-tensorboard1/
