mobilenet V1-V3 architecture summary

- mobilenet-V1 paper: https://arxiv.org/pdf/1704.04861.pdf

- mobilenet-V2 paper: https://arxiv.org/pdf/1801.04381.pdf

- mobilenet-V3 paper: https://openaccess.thecvf.com/content_ICCV_2019/papers/Howard_Searching_for_MobileNetV3_ICCV_2019_paper.pdf

1. mobilenet-V1

1.1 Basic block

Depthwise separable convolution

Reference: https://blog.csdn.net/m0_37799466/article/details/106054111
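To make the saving from a depthwise separable convolution concrete, here is a minimal sketch that compares multiply-add counts using the cost formulas from the mobilenet-V1 paper, where D_K is the kernel size, M the number of input channels, N the number of output channels, and D_F the output feature map size. The function names and the example sizes (3x3 kernel, 512 input and output channels, 14x14 feature map) are illustrative assumptions, not values from this post.

```python
def standard_conv_cost(dk, m, n, df):
    """Multiply-adds of a standard dk x dk convolution: dk*dk*m*n*df*df."""
    return dk * dk * m * n * df * df


def depthwise_separable_cost(dk, m, n, df):
    """Multiply-adds of a depthwise conv followed by a pointwise 1x1 conv."""
    depthwise = dk * dk * m * df * df  # one dk x dk filter per input channel
    pointwise = m * n * df * df        # 1x1 conv that mixes the channels
    return depthwise + pointwise


# Illustrative sizes (assumed for this example only).
dk, m, n, df = 3, 512, 512, 14
std = standard_conv_cost(dk, m, n, df)
sep = depthwise_separable_cost(dk, m, n, df)
ratio = sep / std

# The paper's closed form for the same ratio: 1/N + 1/D_K^2.
closed_form = 1 / n + 1 / (dk * dk)
print(f"standard={std}, separable={sep}, ratio={ratio:.4f}")
```

With a 3x3 kernel the ratio is dominated by the 1/D_K^2 term, which is why the paper reports roughly 8 to 9 times less computation than a standard convolution.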

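1.2 Network architecture

The first layer is a full convolution (that is, a standard convolution).

Every layer is followed by Batchnorm and a ReLU nonlinearity, except the final fully connected layer, which has no nonlinearity and feeds into a softmax layer for classification. This is the structure shown in the right-hand figure below (the right-hand figure is the part outlined by the red box in the figure above).

(The left figure is a standard convolution; the right figure is a depthwise separable convolution.)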

Counting the depthwise and pointwise convolutions as separate layers, mobilenet-V1 has 28 layers in total.
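The count of 28 can be checked with a small sketch. The layer list below follows Table 1 of the mobilenet-V1 paper (the ImageNet configuration with a 1000-way classifier); `MOBILENET_V1` and `count_layers` are hypothetical names introduced for this example, and the average pooling and softmax are not counted as layers.

```python
# (kind, out_channels, stride), following Table 1 of the mobilenet-V1 paper.
# "dw_sep" stands for one 3x3 depthwise conv plus one 1x1 pointwise conv.
MOBILENET_V1 = [
    ("conv", 32, 2),            # the only standard (full) convolution
    ("dw_sep", 64, 1),
    ("dw_sep", 128, 2),
    ("dw_sep", 128, 1),
    ("dw_sep", 256, 2),
    ("dw_sep", 256, 1),
    ("dw_sep", 512, 2),
    *[("dw_sep", 512, 1)] * 5,  # five identical blocks at 512 channels
    ("dw_sep", 1024, 2),
    ("dw_sep", 1024, 1),
    ("fc", 1000, 1),            # final fully connected layer
]


def count_layers(config):
    """Count depthwise and pointwise convolutions as two separate layers."""
    return sum(2 if kind == "dw_sep" else 1 for kind, _, _ in config)


total_layers = count_layers(MOBILENET_V1)
num_separable_blocks = sum(1 for kind, _, _ in MOBILENET_V1 if kind == "dw_sep")
print(total_layers, num_separable_blocks)
```

That is 1 standard convolution, 13 depthwise separable blocks (26 layers), and 1 fully connected layer.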

2. mobilenet-V2

2.1 Basic block

The basic building block of mobilenet-V2 is a bottleneck depthwise separable convolution with a residual connection.
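As a minimal sketch of what one such block contains, the helper below lists its convolutions for a given input width, output width, stride and expansion factor t. It assumes the convention used by common implementations: the 1x1 expansion is skipped when t is 1, and the residual shortcut is used only when the stride is 1 and the input and output channel counts match. The function name and tuple layout are hypothetical.

```python
def inverted_residual_layers(in_ch, out_ch, stride, expand_ratio):
    """Describe one inverted residual block.

    Returns (layers, use_residual), where each layer is a tuple
    (name, in_channels, out_channels, kernel_size, stride).
    """
    hidden = in_ch * expand_ratio
    layers = []
    if expand_ratio != 1:
        # 1x1 expansion conv + BatchNorm + ReLU6 (assumed skipped when t == 1)
        layers.append(("expand_1x1", in_ch, hidden, 1, 1))
    # 3x3 depthwise conv + BatchNorm + ReLU6; carries the block's stride
    layers.append(("depthwise_3x3", hidden, hidden, 3, stride))
    # 1x1 linear projection + BatchNorm, with no activation afterwards
    layers.append(("project_1x1", hidden, out_ch, 1, 1))
    # The shortcut needs identical input and output shapes.
    use_residual = stride == 1 and in_ch == out_ch
    return layers, use_residual


layers, use_residual = inverted_residual_layers(24, 24, stride=1, expand_ratio=6)
for layer in layers:
    print(layer)
print("residual:", use_residual)
```

The block is called "inverted" because the wide part (here 24 x 6 = 144 channels) sits in the middle and the narrow bottlenecks sit at the two ends, the opposite of a classic residual bottleneck.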

2.2 Network architecture

Sequential: (Conv2d, BatchNorm2d, ReLU6)

Layer 1: 3 input channels (the image), 32 output channels, kernel_size=3, s=2

InvertedResidual blocks

(1): InvertedResidual (Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d)

Layers 2-4: 32 input channels, 16 output channels, kernel sizes 3 and 1; t=1, s=1 (t is the expansion factor, s is the stride)

(2): InvertedResidual (Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d)

Layers 5-7: 16 input channels, 24 output channels, kernel sizes 1, 3, 1; t=6, s=2

(3): InvertedResidual (Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d)

Layers 8-10: 24 input channels, 24 output channels, kernel sizes 1, 3, 1; t=6, s=1

(4): InvertedResidual (Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d)

Layers 11-13: 24 input channels, 32 output channels, kernel sizes 1, 3, 1; t=6, s=2

(5): InvertedResidual (Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d)

Layers 14-16: 32 input channels, 32 output channels, kernel sizes 1, 3, 1; t=6, s=1

(6): InvertedResidual (Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d)

Layers 17-19: 32 input channels, 32 output channels, kernel sizes 1, 3, 1; t=6, s=1

(7): InvertedResidual (Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d)

Layers 20-22: 32 input channels, 64 output channels, kernel sizes 1, 3, 1; t=6, s=2

(8): InvertedResidual (Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d)

Layers 23-25: 64 input channels, 64 output channels, kernel sizes 1, 3, 1; t=6, s=1

(9): InvertedResidual (Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d)

Layers 26-28: 64 input channels, 64 output channels, kernel sizes 1, 3, 1; t=6, s=1

(10): InvertedResidual (Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d)

Layers 29-31: 64 input channels, 64 output channels, kernel sizes 1, 3, 1; t=6, s=1

(11): InvertedResidual (Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d)

Layers 32-34: 64 input channels, 96 output channels, kernel sizes 1, 3, 1; t=6, s=1

(12): InvertedResidual (Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d)

Layers 35-37: 96 input channels, 96 output channels, kernel sizes 1, 3, 1; t=6, s=1

(13): InvertedResidual (Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d)

Layers 38-40: 96 input channels, 96 output channels, kernel sizes 1, 3, 1; t=6, s=1

(14): InvertedResidual (Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d)

Layers 41-43: 96 input channels, 160 output channels, kernel sizes 1, 3, 1; t=6, s=2

(15): InvertedResidual (Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d)

Layers 44-46: 160 input channels, 160 output channels, kernel sizes 1, 3, 1; t=6, s=1

(16): InvertedResidual (Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d)

Layers 47-49: 160 input channels, 160 output channels, kernel sizes 1, 3, 1; t=6, s=1

(17): InvertedResidual (Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d, ReLU6, Conv2d, BatchNorm2d)

Layers 50-52: 160 input channels, 320 output channels, kernel sizes 1, 3, 1; t=6, s=1

(18): Sequential (Conv2d, BatchNorm2d, ReLU6)

Layer 53: 320 input channels, 1280 output channels, kernel_size=1, s=1

Classifier: Linear

Layer 54: fully connected layer with 1280 input features and 100 output features (one per class)

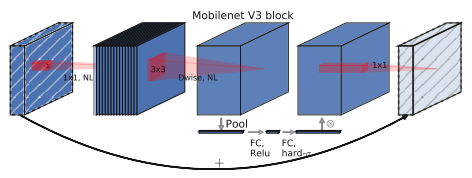
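A minimal sketch of how the per-block input channels, output channels and strides of mobilenet-V2 can be derived. It assumes the (t, c, n, s) settings from Table 2 of the mobilenet-V2 paper and the paper's convention that only the first block of each stage uses stride s while the repeats use stride 1. `SETTINGS` and `expand_blocks` are hypothetical names introduced for this example.

```python
# (t, c, n, s): expansion factor, output channels, repeats, stride of the
# first block in the stage. Values follow Table 2 of the mobilenet-V2 paper.
SETTINGS = [
    (1, 16, 1, 1),
    (6, 24, 2, 2),
    (6, 32, 3, 2),
    (6, 64, 4, 2),
    (6, 96, 3, 1),
    (6, 160, 3, 2),
    (6, 320, 1, 1),
]


def expand_blocks(settings, in_ch=32):
    """Unroll the stage table into one (in_ch, out_ch, t, stride) per block."""
    blocks = []
    for t, c, n, s in settings:
        for i in range(n):
            stride = s if i == 0 else 1  # only the first repeat downsamples
            blocks.append((in_ch, c, t, stride))
            in_ch = c
    return blocks


blocks = expand_blocks(SETTINGS)  # the stem conv outputs 32 channels

# Overall downsampling: stem stride 2 times every block stride.
total_stride = 2
for _, _, _, stride in blocks:
    total_stride *= stride

for idx, (cin, cout, t, stride) in enumerate(blocks, start=1):
    print(f"({idx}) InvertedResidual: in={cin}, out={cout}, t={t}, s={stride}")
print("total stride:", total_stride)
```

Under these assumptions a 224x224 input is reduced by the total stride to a 7x7 feature map before the final 1x1 convolution and the classifier.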

3. mobilenet-V3

3.1 Basic block

3.2 Network architecture

mobilenet-V3-large

mobilenet-V3-small

This write-up is not very detailed yet; I will fill in more over time.
