Background

Previous distillation methods for detection generalize poorly across detection frameworks and rely heavily on ground truth (GT), ignoring the valuable relation information between instances.

Distillation methods designed for multi-class classification work poorly on object detection because the ratio of positive to negative instances is extremely unbalanced.

Some other methods:

- Distilling positive and negative instances sampled by the RPN in a certain proportion

- Distilling the area near the ground truth

The problem with these methods is that they ignore the information in the background and work poorly across the many kinds of detection frameworks. For the first method, the sampling ratio also needs to be meticulously designed.

Definition

General Instance (GI, a discriminative instance): the L1 distance between the teacher's and the student's classification scores is taken as the GI score, and the boxes with higher scores are chosen as GI boxes.

Distillation is then applied in these areas.
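The GI score definition can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the L1 distance is taken as the sum of absolute per-class differences (a per-class maximum is another possible reading), and "higher score" is implemented as a simple top-k cut. The names `gi_scores`, `select_gi_boxes`, and `top_k` are hypothetical.

```python
def gi_scores(teacher_scores, student_scores):
    """Per-box GI score: L1 distance between teacher and student class scores.

    Assumption: the L1 distance is summed over classes.
    """
    return [
        sum(abs(t - s) for t, s in zip(t_row, s_row))
        for t_row, s_row in zip(teacher_scores, student_scores)
    ]


def select_gi_boxes(boxes, teacher_scores, student_scores, top_k=2):
    """Keep the top_k boxes with the largest GI score (top_k is an assumed rule)."""
    scores = gi_scores(teacher_scores, student_scores)
    # Sort box indices by descending GI score.
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    return [boxes[i] for i in order[:top_k]]


# Three candidate boxes (x1, y1, x2, y2) with two-class scores.
boxes = [(0, 0, 10, 10), (5, 5, 20, 20), (30, 30, 40, 40)]
teacher = [[0.9, 0.1], [0.5, 0.5], [0.2, 0.8]]
student = [[0.5, 0.5], [0.5, 0.5], [0.1, 0.9]]
selected = select_gi_boxes(boxes, teacher, student, top_k=2)
```

Boxes on which teacher and student already agree get a score near zero and are dropped, so the distillation focuses on instances where the student still differs from the teacher.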

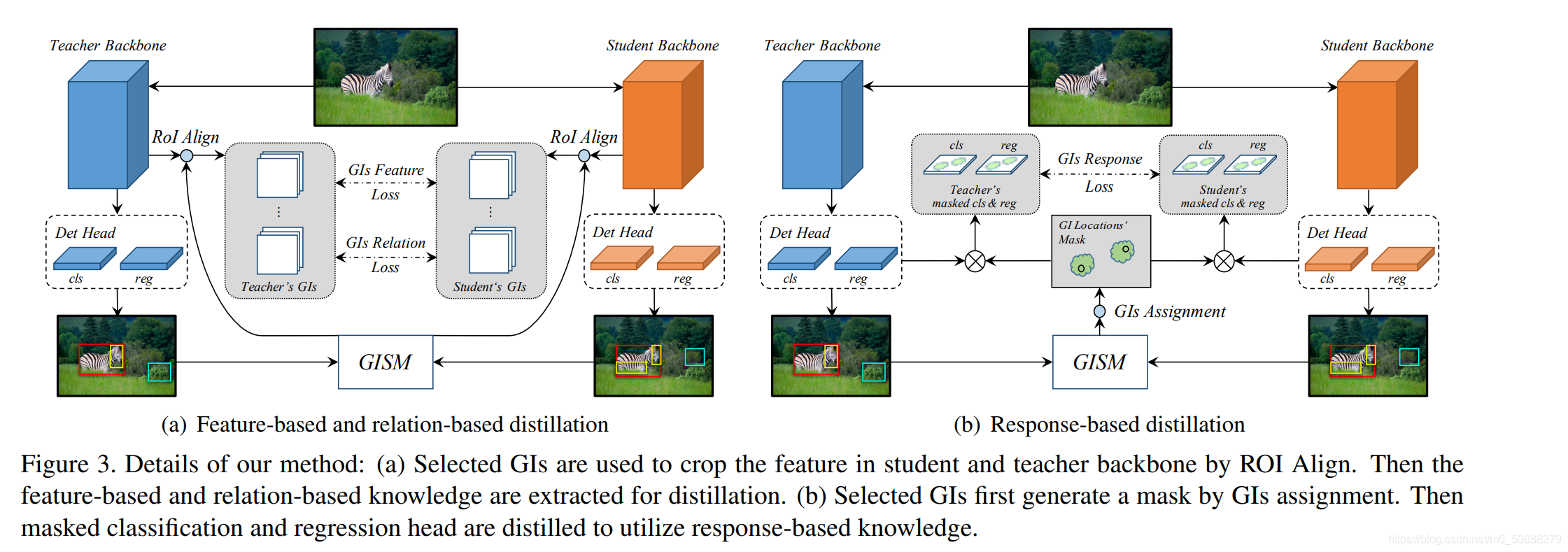

Three kinds of distillation

Feature-based Distillation

We distill within the GI boxes. Because the feature dimensions differ, we use ROI Align to bring the features to a common dimension and then apply distillation.
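A minimal pure-Python sketch of this step follows. It is not real ROI Align: `roi_pool_fixed` is a simplified stand-in that samples one bilinear point at the centre of each bin of a fixed grid, on a single-channel feature map. It also assumes that teacher and student maps share the same spatial resolution and that the loss is a mean squared error. All function names are hypothetical.

```python
import math


def bilinear(fmap, y, x):
    """Bilinearly interpolate a 2D feature map (list of rows) at (y, x)."""
    h, w = len(fmap), len(fmap[0])
    # Clamp the sample point to the valid index range.
    y = min(max(y, 0.0), h - 1.0)
    x = min(max(x, 0.0), w - 1.0)
    y0, x0 = int(math.floor(y)), int(math.floor(x))
    y1, x1 = min(y0 + 1, h - 1), min(x0 + 1, w - 1)
    dy, dx = y - y0, x - x0
    top = fmap[y0][x0] * (1 - dx) + fmap[y0][x1] * dx
    bottom = fmap[y1][x0] * (1 - dx) + fmap[y1][x1] * dx
    return top * (1 - dy) + bottom * dy


def roi_pool_fixed(fmap, box, out_size=2):
    """Simplified ROI Align: one sample at the centre of each output bin."""
    x1, y1, x2, y2 = box
    bin_w = (x2 - x1) / out_size
    bin_h = (y2 - y1) / out_size
    return [
        [bilinear(fmap, y1 + (i + 0.5) * bin_h, x1 + (j + 0.5) * bin_w)
         for j in range(out_size)]
        for i in range(out_size)
    ]


def feature_distill_loss(teacher_fmap, student_fmap, gi_boxes, out_size=2):
    """Mean squared error between pooled teacher and student GI features."""
    total, count = 0.0, 0
    for box in gi_boxes:
        t = roi_pool_fixed(teacher_fmap, box, out_size)
        s = roi_pool_fixed(student_fmap, box, out_size)
        for t_row, s_row in zip(t, s):
            for tv, sv in zip(t_row, s_row):
                total += (tv - sv) ** 2
                count += 1
    return total / count if count else 0.0
```

Whatever the size of a GI box, the pooled output is always `out_size` x `out_size`, which is what makes the element-wise comparison possible.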

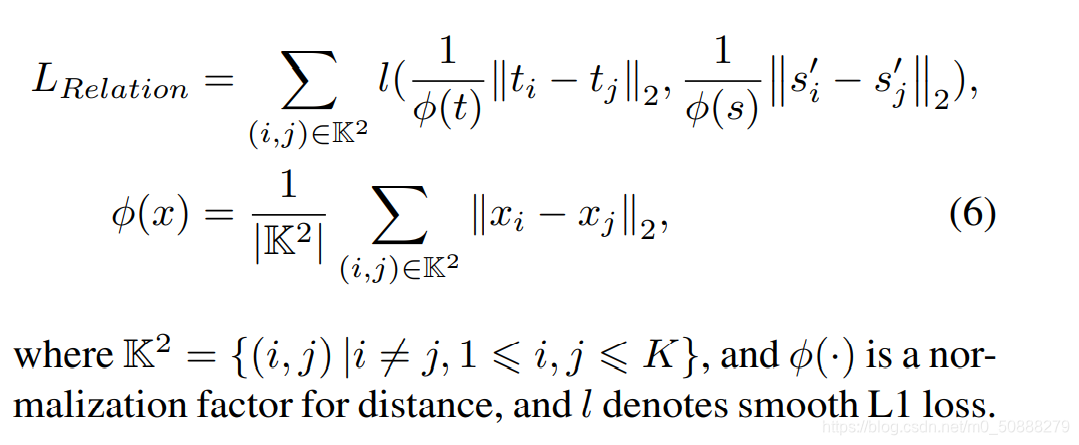

Relation-based Distillation

K is the set of GI boxes after ROI Align.
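One common way to express relation knowledge over such a set is to compare the pairwise distance structure of the teacher's features with that of the student's. The sketch below assumes exactly that: Euclidean distances between every pair of flattened GI features in K, normalised by their mean, compared with a smooth L1 penalty. The choice of distance, the normalisation, and all function names are assumptions for illustration.

```python
import math
from itertools import combinations


def pairwise_distances(features):
    """Euclidean distance for each unordered pair, normalised by the mean distance."""
    dists = [math.dist(a, b) for a, b in combinations(features, 2)]
    mean = sum(dists) / len(dists) if dists else 0.0
    return [d / mean for d in dists] if mean > 0 else dists


def smooth_l1(x, beta=1.0):
    """Quadratic near zero, linear for large |x|."""
    ax = abs(x)
    return 0.5 * ax * ax / beta if ax < beta else ax - 0.5 * beta


def relation_distill_loss(teacher_feats, student_feats):
    """Average smooth L1 gap between teacher and student pairwise distances."""
    t = pairwise_distances(teacher_feats)
    s = pairwise_distances(student_feats)
    if not t:
        return 0.0
    return sum(smooth_l1(a - b) for a, b in zip(t, s)) / len(t)


# Each entry is the flattened feature of one GI box in K.
teacher_k = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
student_k = [[0.0, 0.0], [2.0, 0.0], [0.0, 2.0]]
loss = relation_distill_loss(teacher_k, student_k)
```

Because the distances are normalised, a student whose features are a scaled copy of the teacher's incurs almost no loss: only the relative arrangement of the instances is penalised.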

Response-based Distillation

Distilling on the whole head, as traditional methods do, is detrimental. Instead, we use the GI boxes to calculate a mask for every anchor: if the anchor completely matches a GI box, its mask value is 1, otherwise it is 0. We use M to denote this mask.

Distillation is then applied on the masked head.
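The masking step can be sketched as follows. The matching rule is an assumption: an anchor counts as matched when its IoU with some GI box reaches a hypothetical threshold `match_iou`, set to 1.0 here to mirror "completely matches". The L1 loss on the head outputs is also an assumption; a real detector would likely use the loss that suits each head.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = a
    bx1, by1, bx2, by2 = b
    iw = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    ih = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = iw * ih
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def gi_mask(anchors, gi_boxes, match_iou=1.0):
    """Mask M: 1 if the anchor matches any GI box, else 0."""
    return [
        1 if any(iou(a, g) >= match_iou for g in gi_boxes) else 0
        for a in anchors
    ]


def masked_response_loss(teacher_out, student_out, mask):
    """L1 gap between head outputs, averaged over masked anchors only."""
    total = 0.0
    for t_row, s_row, m in zip(teacher_out, student_out, mask):
        if m:
            total += sum(abs(t - s) for t, s in zip(t_row, s_row))
    n = sum(mask)
    return total / n if n else 0.0


anchors = [(0, 0, 10, 10), (5, 5, 15, 15)]
gi_boxes = [(0, 0, 10, 10)]
M = gi_mask(anchors, gi_boxes)
loss = masked_response_loss([[1.0, 0.0], [0.3, 0.7]],
                            [[0.5, 0.5], [0.9, 0.1]], M)
```

Anchors outside the mask contribute nothing, so the large number of uninformative background anchors does not dominate the response loss.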

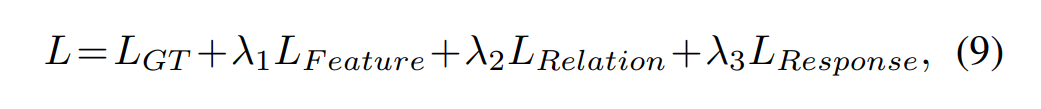

The whole loss function
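
One natural way to write the combined objective, assuming the three distillation terms are simply added to the detector's ordinary ground-truth loss with weighting factors. The symbols $L_{GT}$, $\lambda_1$, $\lambda_2$, and $\lambda_3$ are assumptions introduced for illustration:

$$
L = L_{GT} + \lambda_1 L_{Feature} + \lambda_2 L_{Relation} + \lambda_3 L_{Response}
$$

Here $L_{Feature}$, $L_{Relation}$, and $L_{Response}$ stand for the feature-based, relation-based, and response-based distillation losses described above.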
