
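This post is mainly about deployment with ONNX and ONNX Runtime.

I originally planned to cover TorchServe server deployment, but the video for that part has not been recorded yet, so TorchServe deployment will have to wait (doge). This part was originally supposed to contain the following:

Anyway, only the first folder is available for now. Since I am not very familiar with this topic, I read some related explanatory blog posts along the way and link to them in the text.

First question

What are ONNX and ONNX Runtime? I found an explanation by an expert (the article is well written and worth reading):

Source: 模型部署之ONNX ONNXRuntime

If the above is hard to follow, a simplified way to understand it is as follows:

In reality it is certainly not that simple, but with no other deployment method to compare against, this understanding will do for now.

Details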

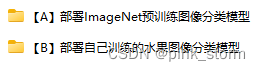

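【A】Deploying an ImageNet-pretrained image classification model

I am writing out the whole process here because I am not familiar with it.

【A】Install and configure the environment

- Install PyTorch

    !pip3 install torch torchvision --extra-index-url https://download.pytorch.org/whl/cu113

- Install the toolkits

    !pip install numpy pandas matplotlib tqdm opencv-python pillow onnx onnxruntime -i https://pypi.tuna.tsinghua.edu.cn/simple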
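Before moving on, it can help to confirm that the packages from the two install commands are actually present. The sketch below is my own addition, not part of the original tutorial: `installed_versions` is a hypothetical helper that relies only on the standard library (`importlib.metadata`, Python 3.8+) and reports `None` for anything that is missing.

```python
from importlib import metadata


def installed_versions(packages):
    """Map each distribution name to its installed version, or None if it is missing."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


# Distribution names taken from the pip commands above.
required = ["torch", "torchvision", "numpy", "pandas", "matplotlib", "tqdm",
            "opencv-python", "pillow", "onnx", "onnxruntime"]

for name, version in installed_versions(required).items():
    print(f"{name:<15} {version or 'NOT INSTALLED'}")
```

Any line that prints `NOT INSTALLED` points at a package to reinstall before the export step.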

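【B】Export the ONNX model

Export the image classification model trained in native PyTorch to ONNX format, so that it can later be deployed on the ONNX Runtime inference engine.

- Import the toolkits

    import torch
    from torchvision import models

    # Use the GPU if one is available, otherwise the CPU
    device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')
    print('device', device)

- Load the ImageNet-pretrained image classification model

    model = models.resnet18(pretrained=True)
    model = model.eval().to(device)

    x = torch.randn(1, 3, 256, 256).to(device)
    output = model(x)
    output.shape
    # torch.Size([1, 1000])

- Convert the PyTorch model to an ONNX model

    x = torch.randn(1, 3, 256, 256).to(device)

    with torch.no_grad():
        torch.onnx.export(
            model,                    # model to convert
            x,                        # any example input for the model
            'resnet18.onnx',          # file name of the exported ONNX model
            opset_version=11,         # ONNX operator set version
            input_names=['input'],    # name of the input tensor (your choice)
            output_names=['output']   # name of the output tensor (your choice)
        )

For background on what a tensor is, see the related article: 笔记│什么是张量(tensor)&深度学习

- Verify that the onnx model was exported successfully

    import onnx

    # Load the ONNX model
    onnx_model = onnx.load('resnet18.onnx')

    # Check that the model format is valid
    onnx.checker.check_model(onnx_model)

    print('No errors: the onnx model loaded successfully')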
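`onnx.checker.check_model` only validates the file format; it does not tell you whether the exported model produces the same numbers as the PyTorch model. A common extra check is to feed the same input to both and compare the outputs. The sketch below shows just the comparison step on synthetic arrays; `compare_outputs` and its tolerances are my own assumptions, not part of the tutorial.

```python
import numpy as np


def compare_outputs(reference, candidate, rtol=1e-3, atol=1e-5):
    """Return (match, max_abs_diff) for two output arrays of the same shape."""
    reference = np.asarray(reference, dtype=np.float32)
    candidate = np.asarray(candidate, dtype=np.float32)
    if reference.shape != candidate.shape:
        return False, float("inf")
    max_abs_diff = float(np.max(np.abs(reference - candidate)))
    match = bool(np.allclose(candidate, reference, rtol=rtol, atol=atol))
    return match, max_abs_diff


# Synthetic stand-ins for the PyTorch logits and the ONNX Runtime logits.
rng = np.random.default_rng(0)
torch_like = rng.normal(size=(1, 1000)).astype(np.float32)
ort_like = torch_like + 1e-6   # tiny numerical noise

print(compare_outputs(torch_like, ort_like))
```

In a real check, `reference` would be `model(x)` converted to NumPy and `candidate` the first element returned by `ort_session.run`.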

- Print the computation graph in a readable form

    print(onnx.helper.printable_graph(onnx_model.graph))

The graph is quite pretty (in a mathematical sense), so here it is:

    graph torch-jit-export (
      %input[FLOAT, 1x3x256x256]
    ) initializers (
      %fc.weight[FLOAT, 1000x512]
      %fc.bias[FLOAT, 1000]
      %193[FLOAT, 64x3x7x7]
      %194[FLOAT, 64]
      %196[FLOAT, 64x64x3x3]
      %197[FLOAT, 64]
      %199[FLOAT, 64x64x3x3]
      %200[FLOAT, 64]
      %202[FLOAT, 64x64x3x3]
      %203[FLOAT, 64]
      %205[FLOAT, 64x64x3x3]
      %206[FLOAT, 64]
      %208[FLOAT, 128x64x3x3]
      %209[FLOAT, 128]
      %211[FLOAT, 128x128x3x3]
      %212[FLOAT, 128]
      %214[FLOAT, 128x64x1x1]
      %215[FLOAT, 128]
      %217[FLOAT, 128x128x3x3]
      %218[FLOAT, 128]
      %220[FLOAT, 128x128x3x3]
      %221[FLOAT, 128]
      %223[FLOAT, 256x128x3x3]
      %224[FLOAT, 256]
      %226[FLOAT, 256x256x3x3]
      %227[FLOAT, 256]
      %229[FLOAT, 256x128x1x1]
      %230[FLOAT, 256]
      %232[FLOAT, 256x256x3x3]
      %233[FLOAT, 256]
      %235[FLOAT, 256x256x3x3]
      %236[FLOAT, 256]
      %238[FLOAT, 512x256x3x3]
      %239[FLOAT, 512]
      %241[FLOAT, 512x512x3x3]
      %242[FLOAT, 512]
      %244[FLOAT, 512x256x1x1]
      %245[FLOAT, 512]
      %247[FLOAT, 512x512x3x3]
      %248[FLOAT, 512]
      %250[FLOAT, 512x512x3x3]
      %251[FLOAT, 512]
    ) {
      %192 = Conv[dilations = [1, 1], group = 1, kernel_shape = [7, 7], pads = [3, 3, 3, 3], strides = [2, 2]](%input, %193, %194)
      %125 = Relu(%192)
      %126 = MaxPool[ceil_mode = 0, kernel_shape = [3, 3], pads = [1, 1, 1, 1], strides = [2, 2]](%125)
      %195 = Conv[dilations = [1, 1], group = 1, kernel_shape = [3, 3], pads = [1, 1, 1, 1], strides = [1, 1]](%126, %196, %197)
      %129 = Relu(%195)
      %198 = Conv[dilations = [1, 1], group = 1, kernel_shape = [3, 3], pads = [1, 1, 1, 1], strides = [1, 1]](%129, %199, %200)
      %132 = Add(%198, %126)
      %133 = Relu(%132)
      %201 = Conv[dilations = [1, 1], group = 1, kernel_shape = [3, 3], pads = [1, 1, 1, 1], strides = [1, 1]](%133, %202, %203)
      %136 = Relu(%201)
      %204 = Conv[dilations = [1, 1], group = 1, kernel_shape = [3, 3], pads = [1, 1, 1, 1], strides = [1, 1]](%136, %205, %206)
      %139 = Add(%204, %133)
      %140 = Relu(%139)
      %207 = Conv[dilations = [1, 1], group = 1, kernel_shape = [3, 3], pads = [1, 1, 1, 1], strides = [2, 2]](%140, %208, %209)
      %143 = Relu(%207)
      %210 = Conv[dilations = [1, 1], group = 1, kernel_shape = [3, 3], pads = [1, 1, 1, 1], strides = [1, 1]](%143, %211, %212)
      %213 = Conv[dilations = [1, 1], group = 1, kernel_shape = [1, 1], pads = [0, 0, 0, 0], strides = [2, 2]](%140, %214, %215)
      %148 = Add(%210, %213)
      %149 = Relu(%148)
      %216 = Conv[dilations = [1, 1], group = 1, kernel_shape = [3, 3], pads = [1, 1, 1, 1], strides = [1, 1]](%149, %217, %218)
      %152 = Relu(%216)
      %219 = Conv[dilations = [1, 1], group = 1, kernel_shape = [3, 3], pads = [1, 1, 1, 1], strides = [1, 1]](%152, %220, %221)
      %155 = Add(%219, %149)
      %156 = Relu(%155)
      %222 = Conv[dilations = [1, 1], group = 1, kernel_shape = [3, 3], pads = [1, 1, 1, 1], strides = [2, 2]](%156, %223, %224)
      %159 = Relu(%222)
      %225 = Conv[dilations = [1, 1], group = 1, kernel_shape = [3, 3], pads = [1, 1, 1, 1], strides = [1, 1]](%159, %226, %227)
      %228 = Conv[dilations = [1, 1], group = 1, kernel_shape = [1, 1], pads = [0, 0, 0, 0], strides = [2, 2]](%156, %229, %230)
      %164 = Add(%225, %228)
      %165 = Relu(%164)
      %231 = Conv[dilations = [1, 1], group = 1, kernel_shape = [3, 3], pads = [1, 1, 1, 1], strides = [1, 1]](%165, %232, %233)
      %168 = Relu(%231)
      %234 = Conv[dilations = [1, 1], group = 1, kernel_shape = [3, 3], pads = [1, 1, 1, 1], strides = [1, 1]](%168, %235, %236)
      %171 = Add(%234, %165)
      %172 = Relu(%171)
      %237 = Conv[dilations = [1, 1], group = 1, kernel_shape = [3, 3], pads = [1, 1, 1, 1], strides = [2, 2]](%172, %238, %239)
      %175 = Relu(%237)
      %240 = Conv[dilations = [1, 1], group = 1, kernel_shape = [3, 3], pads = [1, 1, 1, 1], strides = [1, 1]](%175, %241, %242)
      %243 = Conv[dilations = [1, 1], group = 1, kernel_shape = [1, 1], pads = [0, 0, 0, 0], strides = [2, 2]](%172, %244, %245)
      %180 = Add(%240, %243)
      %181 = Relu(%180)
      %246 = Conv[dilations = [1, 1], group = 1, kernel_shape = [3, 3], pads = [1, 1, 1, 1], strides = [1, 1]](%181, %247, %248)
      %184 = Relu(%246)
      %249 = Conv[dilations = [1, 1], group = 1, kernel_shape = [3, 3], pads = [1, 1, 1, 1], strides = [1, 1]](%184, %250, %251)
      %187 = Add(%249, %181)
      %188 = Relu(%187)
      %189 = GlobalAveragePool(%188)
      %190 = Flatten[axis = 1](%189)
      %output = Gemm[alpha = 1, beta = 1, transB = 1](%190, %fc.weight, %fc.bias)
      return %output
    }
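Reading the printed graph, the nodes with `strides = [2, 2]` are where the feature map shrinks. To make that concrete, here is a small sketch of my own that applies the usual output-size formula, floor((size + 2·pad − kernel) / stride) + 1, to the kernel, pad, and stride values printed above. It assumes dilation 1 and `ceil_mode = 0`, as the graph shows; the function names are hypothetical.

```python
def conv_out(size, kernel, stride, pad):
    """Output size along one spatial axis (dilation 1, floor rounding)."""
    return (size + 2 * pad - kernel) // stride + 1


def trace_resnet18(size=256):
    """Follow the feature-map side length through the stride pattern of the graph."""
    trace = [("input", size)]
    size = conv_out(size, kernel=7, stride=2, pad=3)   # %192: 7x7 conv, stride 2
    trace.append(("stem conv", size))
    size = conv_out(size, kernel=3, stride=2, pad=1)   # %126: 3x3 max pool, stride 2
    trace.append(("max pool", size))
    size = conv_out(size, kernel=3, stride=1, pad=1)   # stage 1: stride-1 convs keep the size
    trace.append(("stage 1", size))
    for stage in (2, 3, 4):                            # %207, %222, %237: stride-2 convs
        size = conv_out(size, kernel=3, stride=2, pad=1)
        trace.append((f"stage {stage}", size))
    return trace


for name, side in trace_resnet18(256):
    print(f"{name:<10} {side} x {side}")
```

For the 256x256 input used here the last stage ends at 8x8, which `GlobalAveragePool` then collapses to a single 512-dimensional vector for the final `Gemm`.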

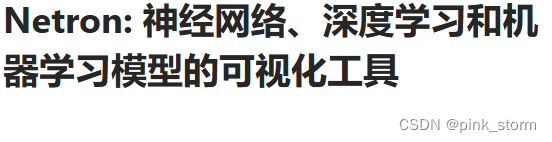

- Visualize the onnx model with Netron

Website

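【C】ONNX Runtime inference engine deployment: predicting a single image

Use the ONNX Runtime inference engine to read the model file in onnx format and run prediction on a single image file.

Use case:

The code below runs on the hardware where the model is to be deployed.

You only need to copy the onnx model file to the deployment hardware and install the ONNX Runtime environment there; a few lines of code are then enough to run the model.

- Import the toolkits

    import onnxruntime
    import numpy as np
    import torch

- Load the onnx model and obtain an ONNX Runtime inference session

    ort_session = onnxruntime.InferenceSession('resnet18.onnx')

- Construct an input and get the output

    x = torch.randn(1, 3, 256, 256).numpy()
    x.shape
    # (1, 3, 256, 256)

    # Note: the input and output tensor names must match the ones set in torch.onnx.export
    # ONNX Runtime input
    ort_inputs = {'input': x}

    # ONNX Runtime output
    ort_output = ort_session.run(['output'], ort_inputs)[0]
    ort_output.shape
    # (1, 1000)

- Preprocessing

    from torchvision import transforms

    # Test-set image preprocessing (RCTN): resize, crop, convert to Tensor, normalize
    test_transform = transforms.Compose([transforms.Resize(256),
                                         transforms.CenterCrop(256),
                                         transforms.ToTensor(),
                                         transforms.Normalize(
                                             mean=[0.485, 0.456, 0.406],
                                             std=[0.229, 0.224, 0.225])
                                        ])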
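The preprocessing above still depends on torchvision. If the deployment hardware should only carry ONNX Runtime, the last two steps (`ToTensor` and `Normalize`) can be reproduced in plain NumPy. The sketch below is my own assumption of how that could look; `to_normalized_batch` is a hypothetical helper, and it does not cover the resize and center-crop steps, so it expects an image that is already 256x256 RGB.

```python
import numpy as np

# Same mean and std as in the transforms.Normalize call above.
IMAGENET_MEAN = np.array([0.485, 0.456, 0.406], dtype=np.float32)
IMAGENET_STD = np.array([0.229, 0.224, 0.225], dtype=np.float32)


def to_normalized_batch(img_rgb_uint8):
    """HWC uint8 RGB image -> (1, 3, H, W) float32 batch, normalized per channel."""
    img = img_rgb_uint8.astype(np.float32) / 255.0     # scale to [0, 1], like ToTensor
    img = (img - IMAGENET_MEAN) / IMAGENET_STD         # per-channel normalize (broadcast on last axis)
    chw = np.transpose(img, (2, 0, 1))                 # HWC -> CHW
    return np.ascontiguousarray(chw[np.newaxis, ...])  # add the batch dimension


# Synthetic all-white image standing in for a cropped photo.
white = np.full((256, 256, 3), 255, dtype=np.uint8)
batch = to_normalized_batch(white)
print(batch.shape, batch.dtype)
```

The resulting array has the same layout as `input_img.unsqueeze(0).numpy()` and can be passed to `ort_session.run` under the `'input'` name.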

- Load the test image

    img_path = 'banana1.jpg'

    # Load with pillow
    from PIL import Image
    img_pil = Image.open(img_path)
    print(img_pil)

- Run the preprocessing

    input_img = test_transform(img_pil)
    input_img.shape
    # torch.Size([3, 256, 256])

    input_tensor = input_img.unsqueeze(0).numpy()
    input_tensor.shape
    # (1, 3, 256, 256)

- Predict with ONNX Runtime

    # ONNX Runtime input
    ort_inputs = {'input': input_tensor}

    # ONNX Runtime output
    pred_logits = ort_session.run(['output'], ort_inputs)[0]
    pred_logits = torch.tensor(pred_logits)
    pred_logits.shape
    # torch.Size([1, 1000])

    import torch.nn.functional as F
    pred_softmax = F.softmax(pred_logits, dim=1)  # apply softmax to the logit scores
    pred_softmax.shape
    # torch.Size([1, 1000])
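The softmax step above converts the ONNX Runtime output back into a torch tensor just to call `F.softmax`. The same computation can be done directly on the NumPy array; the sketch below is my own NumPy version (not the tutorial's code), using the usual trick of subtracting the row maximum for numerical stability.

```python
import numpy as np


def softmax(logits, axis=1):
    """Numerically stable softmax over `axis` for an array of logit scores."""
    logits = np.asarray(logits, dtype=np.float64)
    shifted = logits - np.max(logits, axis=axis, keepdims=True)  # avoid overflow in exp
    exp = np.exp(shifted)
    return exp / np.sum(exp, axis=axis, keepdims=True)


# Synthetic logits standing in for the (1, 1000) array returned by ort_session.run.
demo_logits = np.array([[1.0, 2.0, 3.0]])
probs = softmax(demo_logits)
print(probs, probs.sum())
```

Each row of the result sums to 1, and the ordering of the classes is the same as in the logits.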

- Bar chart visualization

    import matplotlib.pyplot as plt
    %matplotlib inline

    plt.figure(figsize=(8,4))

    x = range(1000)
    y = pred_softmax.cpu().detach().numpy()[0]

    ax = plt.bar(x, y, alpha=0.5, width=0.3, color='yellow', edgecolor='red', lw=3)
    plt.ylim([0, 1.0])  # y-axis range
    # plt.bar_label(ax, fmt='%.2f', fontsize=15)  # confidence values
    plt.xlabel('Class', fontsize=20)
    plt.ylabel('Confidence', fontsize=20)
    plt.tick_params(labelsize=16)  # tick label size
    plt.title(img_path, fontsize=25)

    plt.show()

Next steps: parse the top-n prediction results, then write the predictions on the image in English and in Chinese.

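【D1】ONNX Runtime inference engine deployment: predicting live camera frames (English)

Use the ONNX Runtime inference engine to load the ImageNet-pretrained image classification onnx model and predict on live camera frames.

Notes:

This code must run on a local machine with a camera attached; it cannot run on a cloud GPU platform.

On the local machine, run

    pip install onnxruntime

to install ONNX Runtime, and have the onnx model file ready.

- Import the toolkits

    import onnxruntime
    import torch

    import pandas as pd
    import numpy as np

    from PIL import Image, ImageFont, ImageDraw

    import matplotlib.pyplot as plt
    %matplotlib inline

- Load the onnx model and obtain an ONNX Runtime inference session

    ort_session = onnxruntime.InferenceSession('resnet18.onnx')

- Load the ImageNet 1000 image classification labels

    df = pd.read_csv('imagenet_class_index.csv')
    idx_to_labels = {}
    for idx, row in df.iterrows():
        idx_to_labels[row['ID']] = row['class']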
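The label table only needs two columns, so on a minimal deployment machine the same dictionary can be built without pandas. The sketch below uses the standard-library `csv` module. The column names `ID` and `class` come from the code above; `load_idx_to_labels` and the two sample rows are my own stand-ins for the real `imagenet_class_index.csv`.

```python
import csv
import io


def load_idx_to_labels(csv_file, id_col="ID", label_col="class"):
    """Build {class id: label} from an open CSV file; other columns are ignored."""
    reader = csv.DictReader(csv_file)
    return {int(row[id_col]): row[label_col] for row in reader}


# In-memory stand-in for the CSV file (illustrative rows only).
sample_csv = io.StringIO("ID,class\n0,tench\n1,goldfish\n")
idx_to_labels = load_idx_to_labels(sample_csv)
print(idx_to_labels)
```

With the real file, the call would presumably look like `with open('imagenet_class_index.csv', newline='', encoding='utf-8') as f: idx_to_labels = load_idx_to_labels(f)`.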

- Image preprocessing

    from torchvision import transforms

    # Test-set image preprocessing (RCTN): resize, crop, convert to Tensor, normalize
    test_transform = transforms.Compose([transforms.Resize(256),
                                         transforms.CenterCrop(256),
                                         transforms.ToTensor(),
                                         transforms.Normalize(
                                             mean=[0.485, 0.456, 0.406],
                                             std=[0.229, 0.224, 0.225])
                                        ])

- Predict a single camera frame

– Grab one frame from the camera

    # Import opencv-python
    import cv2
    import time

    # Get the camera; 0 selects the system default camera
    cap = cv2.VideoCapture(0)

    time.sleep(1)
    success, img_bgr = cap.read()

    # Release the camera
    cap.release()

    # Close any image windows
    cv2.destroyAllWindows()

– Convert the frame to an RGB Pillow image

    img_bgr.shape
    # (720, 1280, 3)

    img_rgb = cv2.cvtColor(img_bgr, cv2.COLOR_BGR2RGB)  # BGR to RGB
    img_pil = Image.fromarray(img_rgb)
    print(img_pil)
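The BGR-to-RGB step matters because OpenCV returns frames in blue-green-red channel order, while Pillow and the ImageNet normalization constants assume red-green-blue. For a three-channel frame the conversion is just a reversal of the last axis. The NumPy sketch below is my own illustration of that (the `bgr_to_rgb` name is hypothetical) and runs on a synthetic frame instead of a camera capture.

```python
import numpy as np


def bgr_to_rgb(img_bgr):
    """Reverse the channel axis of an (H, W, 3) array: BGR -> RGB."""
    return np.ascontiguousarray(img_bgr[..., ::-1])


# Synthetic 2x2 frame that is pure blue in OpenCV's BGR layout.
frame_bgr = np.zeros((2, 2, 3), dtype=np.uint8)
frame_bgr[..., 0] = 255

frame_rgb = bgr_to_rgb(frame_bgr)
print(frame_rgb[0, 0])   # blue now sits in the last channel
```

Applying the function twice returns the original frame, which is a quick sanity check that only the channel order changed.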

- Preprocessing

    input_img = test_transform(img_pil)
    input_tensor = input_img.unsqueeze(0).numpy()
    input_tensor.shape
    # (1, 3, 256, 256)

- Predict with ONNX Runtime

    # ONNX Runtime input
    ort_inputs = {'input': input_tensor}

    # ONNX Runtime output
    pred_logits = ort_session.run(['output'], ort_inputs)[0]
    pred_logits = torch.tensor(pred_logits)
    pred_logits.shape
    # torch.Size([1, 1000])

    import torch.nn.functional as F
    pred_softmax = F.softmax(pred_logits, dim=1)  # apply softmax to the logit scores
    pred_softmax.shape
    # torch.Size([1, 1000])

- Parse the classes and confidences of the top-n predictions

    n = 5
    top_n = torch.topk(pred_softmax, n)  # take the n results with the highest confidence

    confs = top_n[0].cpu().detach().numpy().squeeze()
    confs
    # array([0.1028311 , 0.04595807, 0.02947768, 0.02589989, 0.02027929],
    #       dtype=float32)

    pred_ids = top_n[1].cpu().detach().numpy().squeeze()
    pred_ids
    # array([788, 918, 617, 457, 937])
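The top-n step can also be done without torch. The sketch below is a NumPy counterpart of `torch.topk` for a single image, written as an assumption of how one might do it; `top_k` is a hypothetical helper, and the probabilities are made up for the demonstration.

```python
import numpy as np


def top_k(probs, k=5):
    """Return (class ids, confidences) of the k largest entries, highest first."""
    probs = np.asarray(probs).reshape(-1)   # flatten the (1, num_classes) batch
    k = min(k, probs.size)
    ids = np.argsort(probs)[::-1][:k]       # indices sorted by descending confidence
    return ids, probs[ids]


# Made-up softmax output for a 4-class problem.
demo_probs = np.array([[0.10, 0.60, 0.05, 0.25]])
pred_ids, confs = top_k(demo_probs, k=2)
print(pred_ids, confs)
```

`pred_ids` and `confs` play the same role as in the torch version and can be fed to the text-drawing loop unchanged.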

- Write the English labels on the image

    for i in range(len(confs)):
        pred_class = idx_to_labels[pred_ids[i]]
        text = '{:<15} {:>.3f}'.format(pred_class, confs[i])

        # image, text, bottom-left corner of the text, font, font scale, color, line thickness, line type
        img_bgr = cv2.putText(img_bgr, text, (50, 80 + 80 * i), cv2.FONT_HERSHEY_SIMPLEX, 2.5, (0, 0, 255), 5, cv2.LINE_AA)

    img_rgb = cv2.cvtColor(img_bgr, cv2.COLOR_BGR2RGB)  # BGR to RGB
    plt.imshow(img_rgb)
    plt.show()

- Function that processes a single frame (English)

    # Frame-processing function
    def process_frame(img):
        '''
        Input: a BGR array captured by the camera.
        Output: a BGR array with the image classification results drawn on it.
        '''

        # Record the time at which processing of this frame starts
        start_time = time.time()

        ## Convert the frame to an RGB Pillow image
        img_rgb = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)  # BGR to RGB
        img_pil = Image.fromarray(img_rgb)  # array to PIL

        ## Preprocessing
        input_img = test_transform(img_pil)
        input_tensor = input_img.unsqueeze(0).numpy()

        ## ONNX Runtime prediction
        ort_inputs = {'input': input_tensor}  # ONNX Runtime input
        pred_logits = ort_session.run(['output'], ort_inputs)[0]  # ONNX Runtime output
        pred_logits = torch.tensor(pred_logits)
        pred_softmax = F.softmax(pred_logits, dim=1)  # apply softmax to the logit scores

        ## Parse the classes and confidences of the top-n predictions
        top_n = torch.topk(pred_softmax, 5)  # take the n results with the highest confidence
        pred_ids = top_n[1].cpu().detach().numpy().squeeze()  # predicted class ids
        confs = top_n[0].cpu().detach().numpy().squeeze()  # confidences

        # Write the English labels on the image
        for i in range(len(confs)):
            pred_class = idx_to_labels[pred_ids[i]]
            text = '{:<15} {:>.3f}'.format(pred_class, confs[i])

            # image, text, bottom-left corner of the text, font, font scale, color, line thickness, line type
            img = cv2.putText(img, text, (50, 160 + 80 * i), cv2.FONT_HERSHEY_SIMPLEX, 2, (0, 0, 255), 4, cv2.LINE_AA)

        # Record the time at which processing of this frame ends
        end_time = time.time()
        # Frames processed per second (FPS)
        FPS = 1/(end_time - start_time)
        # image, text, bottom-left corner of the text, font, font scale, color, line thickness, line type
        img = cv2.putText(img, 'FPS '+str(int(FPS)), (50, 80), cv2.FONT_HERSHEY_SIMPLEX, 2, (255, 0, 255), 4, cv2.LINE_AA)
        return img
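The FPS value in `process_frame` is computed from a single frame, so it jumps around from frame to frame and would raise `ZeroDivisionError` if the two timestamps ever coincide. One possible alternative, sketched below as my own assumption (the `FpsMeter` class is hypothetical), averages over the last few frames and returns 0.0 when no time has elapsed. Note that it measures the full frame-to-frame interval, capture included, not the processing time alone.

```python
import time
from collections import deque


class FpsMeter:
    """Average FPS over the most recent `window` frame timestamps."""

    def __init__(self, window=30):
        self.timestamps = deque(maxlen=window)

    def tick(self, now=None):
        """Record one frame and return the smoothed FPS (0.0 until it can be computed)."""
        self.timestamps.append(time.perf_counter() if now is None else now)
        if len(self.timestamps) < 2:
            return 0.0
        elapsed = self.timestamps[-1] - self.timestamps[0]
        if elapsed <= 0:
            return 0.0
        return (len(self.timestamps) - 1) / elapsed


# Demo with explicit timestamps: one frame every 0.1 s.
meter = FpsMeter(window=5)
readings = [meter.tick(now=t) for t in (0.0, 0.1, 0.2, 0.3)]
print(readings)
```

In the camera loop, one `meter = FpsMeter()` would be created before the loop and `meter.tick()` called once per frame, with the result drawn in place of `FPS`.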

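- Grab every frame from the camera (template)

    # Template for processing camera frames one by one in real time
    # No code needs to change except the process_frame function
    # 同济子豪兄 2021-7-8

    # Import opencv-python
    import cv2
    import time

    # Get the camera; 0 selects the system default camera
    cap = cv2.VideoCapture(0)

    # Loop until break is triggered
    while cap.isOpened():

        # Grab a frame
        success, frame = cap.read()

        if not success:
            print('Error')
            break

        ## !!! Frame-processing function
        frame = process_frame(frame)

        # Show the processed three-channel image
        cv2.imshow('my_window', frame)

        if cv2.waitKey(1) in [ord('q'), 27]:  # press q or Esc to quit (with an English input method active)
            break

    # Release the camera
    cap.release()

    # Close the image window
    cv2.destroyAllWindows()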

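【D2】ONNX Runtime inference engine deployment: predicting live camera frames (Chinese)

This also has to run locally.

The code is basically the same as above, except for the following.

1. A Chinese font has to be loaded

    # Download the Chinese font file
    !wget https://zihao-openmmlab.obs.cn-east-3.myhuaweicloud.com/20220716-mmclassification/dataset/SimHei.ttf

    # Load the Chinese font and set the font size
    font = ImageFont.truetype('SimHei.ttf', 32)

2. In the function that processes a single frame

Chinese:

    # Write text on the image
    draw = ImageDraw.Draw(img_pil)  # drawing handle on the Pillow image (assumed; not shown in this excerpt)
    for i in range(len(confs)):
        pred_class = idx_to_labels[pred_ids[i]]
        text = '{:<15} {:>.3f}'.format(pred_class, confs[i])
        # text position, Chinese string, font, RGBA color
        draw.text((50, 100 + 50 * i), text, font=font, fill=(255, 0, 0, 1))
    img = np.array(img_pil)  # PIL to array
    img = cv2.cvtColor(img, cv2.COLOR_RGB2BGR)  # RGB to BGR

English:

    # Write the English labels on the image
    for i in range(len(confs)):
        pred_class = idx_to_labels[pred_ids[i]]
        text = '{:<15} {:>.3f}'.format(pred_class, confs[i])
        # followed by the cv2.putText call shown in process_frame in 【D1】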
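Both loops build the overlay text with the same format string, so it is worth seeing what it produces. In the small sketch below (the `format_prediction` wrapper is my own, the format string is the one from the code above), `{:<15}` left-aligns the label in a 15-character field and `{:>.3f}` prints the confidence with three decimals.

```python
def format_prediction(label, confidence):
    """Left-align the label in 15 characters, then print the confidence with 3 decimals."""
    return '{:<15} {:>.3f}'.format(label, confidence)


line = format_prediction('banana', 0.98765)
print(repr(line))

# Padding counts characters, not display width, so a two-character Chinese
# label is padded with 13 spaces even though it renders wider on screen.
print(repr(format_prediction('香蕉', 0.5)))
```

Labels longer than 15 characters are not truncated, so long class names simply push the confidence further to the right.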

【B】Deploying a self-trained fruit image classification model

【A】Install and configure the environment

- Install the basic toolkits

    !pip install numpy pandas matplotlib tqdm opencv-python pillow onnx onnxruntime -i https://pypi.tuna.tsinghua.edu.cn/simple

- Install PyTorch

    !pip3 install torch torchvision --extra-index-url https://download.pytorch.org/whl/cu113

- Create directories

    import os

    # Test images
    os.mkdir('test_img')

    # Result files
    os.mkdir('output')

    # Trained model weights
    os.mkdir('checkpoints')

    # Download the test images into the test_img folder
    # Strawberry image, source: https://www.pexels.com/zh-cn/photo/4828489/
    !wget https://zihao-openmmlab.obs.cn-east-3.myhuaweicloud.com/20220716-mmclassification/test/0818/test_草莓.jpg -P test_img
    !wget https://zihao-openmmlab.obs.myhuaweicloud.com/20220716-mmclassification/test/0818/test_fruits.jpg -P test_img
    !wget https://zihao-openmmlab.obs.myhuaweicloud.com/20220716-mmclassification/test/0818/test_orange_2.jpg -P test_img
    !wget https://zihao-openmmlab.obs.myhuaweicloud.com/20220716-mmclassification/test/0818/test_bananan.jpg -P test_img
    !wget https://zihao-openmmlab.obs.myhuaweicloud.com/20220716-mmclassification/test/0818/test_kiwi.jpg -P test_img
    !wget https://zihao-openmmlab.obs.myhuaweicloud.com/20220716-mmclassification/test/0818/test_石榴.jpg -P test_img
    !wget https://zihao-openmmlab.obs.myhuaweicloud.com/20220716-mmclassification/test/0818/test_orange.jpg -P test_img
    !wget https://zihao-openmmlab.obs.myhuaweicloud.com/20220716-mmclassification/test/0818/test_lemon.jpg -P test_img
    !wget https://zihao-openmmlab.obs.myhuaweicloud.com/20220716-mmclassification/test/0818/test_火龙果.jpg -P test_img
    !wget https://zihao-openmmlab.obs.cn-east-3.myhuaweicloud.com/20220716-mmclassification/test/watermelon1.jpg -P test_img
    !wget https://zihao-openmmlab.obs.cn-east-3.myhuaweicloud.com/20220716-mmclassification/test/banana1.jpg -P test_img
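One practical note on the directory step: `os.mkdir` raises `FileExistsError` when the folder already exists, which happens as soon as the notebook cell is run a second time. A re-runnable variant, sketched below as my own suggestion (`ensure_dirs` is a hypothetical helper), uses `os.makedirs(..., exist_ok=True)`. The demo works inside a temporary directory so it does not touch the real project folders.

```python
import os
import tempfile


def ensure_dirs(base, names=('test_img', 'output', 'checkpoints')):
    """Create the working directories under `base`; safe to call repeatedly."""
    paths = []
    for name in names:
        path = os.path.join(base, name)
        os.makedirs(path, exist_ok=True)   # no error if the directory already exists
        paths.append(path)
    return paths


with tempfile.TemporaryDirectory() as workdir:
    first = ensure_dirs(workdir)
    second = ensure_dirs(workdir)          # second call is a no-op, not an exception
    created = all(os.path.isdir(p) for p in second)

print(created)
```

In the notebook itself the call would simply be `ensure_dirs('.')`.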

- Download the Chinese font file

    !wget https://zihao-openmmlab.obs.cn-east-3.myhuaweicloud.com/20220716-mmclassification/dataset/SimHei.ttf

- Download the trained model file

    # Download the sample model file
    !wget https://zihao-openmmlab.obs.cn-east-3.myhuaweicloud.com/20220716-mmclassification/checkpoints/fruit30_pytorch_20220814.pth -P checkpoints

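【B】Export the ONNX model

- Import the toolkits
- Load the trained model
- Convert the PyTorch model to an ONNX model
- Verify that the onnx model was exported successfully
- Print the computation graph in a readable form
- Visualize the onnx model with Netron

【C】ONNX Runtime inference engine deployment: predicting a single image

- Import the toolkits
- Load the onnx model and obtain an ONNX Runtime inference session
- Construct an input and get the output
- Preprocessing
- Load the test image
- Run the preprocessing
- Predict with ONNX Runtime
- Parse the prediction results (in part A this step was the bar chart visualization)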

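– Load the classes and their IDs

    idx_to_labels = np.load('idx_to_labels.npy', allow_pickle=True).item()
    print(idx_to_labels)

    {0: '哈密瓜',      # Hami melon
     1: '圣女果',      # cherry tomato
     2: '山竹',        # mangosteen
     3: '杨梅',        # Chinese bayberry
     4: '柚子',        # pomelo
     5: '柠檬',        # lemon
     6: '桂圆',        # longan
     7: '梨',          # pear
     8: '椰子',        # coconut
     9: '榴莲',        # durian
     10: '火龙果',     # dragon fruit
     11: '猕猴桃',     # kiwi
     12: '石榴',       # pomegranate
     13: '砂糖橘',     # sugar tangerine
     14: '胡萝卜',     # carrot
     15: '脐橙',       # navel orange
     16: '芒果',       # mango
     17: '苦瓜',       # bitter melon
     18: '苹果-红',    # apple (red)
     19: '苹果-青',    # apple (green)
     20: '草莓',       # strawberry
     21: '荔枝',       # lychee
     22: '菠萝',       # pineapple
     23: '葡萄-白',    # grape (white)
     24: '葡萄-红',    # grape (red)
     25: '西瓜',       # watermelon
     26: '西红柿',     # tomato
     27: '车厘子',     # cherry
     28: '香蕉',       # banana
     29: '黄瓜'}       # cucumber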
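The `.item()` call in the loading line is easy to overlook. `np.save` stores a Python dict by wrapping it in a zero-dimensional object array and pickling it, so `np.load` needs `allow_pickle=True` and returns that wrapper; `.item()` unwraps it back into a dict. The round trip below is a sketch of my own that uses the first three entries of the mapping and a temporary file, since I do not know how the original `idx_to_labels.npy` was actually produced.

```python
import os
import tempfile

import numpy as np

# First three entries of the class mapping shown above.
labels = {0: '哈密瓜', 1: '圣女果', 2: '山竹'}

with tempfile.TemporaryDirectory() as workdir:
    path = os.path.join(workdir, 'idx_to_labels.npy')
    np.save(path, labels)                        # dict is pickled inside a 0-d object array
    loaded = np.load(path, allow_pickle=True)    # still the 0-d array wrapper
    restored = loaded.item()                     # unwrap to get the dict back

print(loaded.shape, type(restored).__name__)
print(restored)
```

Because loading pickled data can execute arbitrary code, `allow_pickle=True` should only be used on files from a trusted source.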

– Set a Chinese font for matplotlib

    # Linux, for example the cloud GPU platform https://featurize.cn/?s=d7ce99f842414bfcaea5662a97581bd1
    # If you hit an SSL-related error, just re-run this code block
    !wget https://zihao-openmmlab.obs.cn-east-3.myhuaweicloud.com/20220716-mmclassification/dataset/SimHei.ttf -O /environment/miniconda3/lib/python3.7/site-packages/matplotlib/mpl-data/fonts/ttf/SimHei.ttf
    !rm -rf /home/featurize/.cache/matplotlib

    import matplotlib
    matplotlib.rc("font", family='SimHei')  # Chinese font

    import matplotlib.pyplot as plt
    %matplotlib inline

    plt.figure(figsize=(22, 10))

    x = list(idx_to_labels.values())
    y = pred_softmax.cpu().detach().numpy()[0] * 100
    width = 0.45  # bar width

    ax = plt.bar(x, y, width)

    plt.bar_label(ax, fmt='%.2f', fontsize=15)  # confidence values
    plt.tick_params(labelsize=20)  # tick label size

    plt.title(img_path, fontsize=30)
    plt.xticks(rotation=45)  # rotate the x-axis labels
    plt.xlabel('类别', fontsize=20)  # "Class"
    plt.ylabel('置信度', fontsize=20)  # "Confidence"

    plt.show()

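【D】Fruit classification: predicting live camera frames (Chinese)

- Notes

This code must run on a local machine with a camera attached; it cannot run on a cloud GPU platform.

- Import the toolkits
- Load the Chinese font
- Load the onnx model and obtain an ONNX Runtime inference session
- Load the class-to-ID dictionary
- Image preprocessing
- Predict a single camera frame

– Grab one frame from the camera

– Convert the frame to an RGB Pillow image

    img_bgr.shape
    # (720, 1280, 3)

    img_rgb = cv2.cvtColor(img_bgr, cv2.COLOR_BGR2RGB)  # BGR to RGB
    img_pil = Image.fromarray(img_rgb)
    print(img_pil)

- Preprocessing
- Predict with ONNX Runtime
- Parse the classes and confidences of the top-n predictions
- Write the Chinese labels on the image
- Function that processes a single frame (Chinese)
- Grab every frame from the camera (template)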
