Author contact: QQ group "C语言交流中心" (C Language Exchange Center) 240137450; WeChat 15013593099

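A Study of Histogram Equalization Theory

Let us start with the histogram of the original image.

Very few pixels fall in the highlight region; most of them are concentrated at low and medium brightness.

First we set a threshold, shown as the pink line in the figure.

When the number of pixels at some brightness value is below this threshold, we treat those pixels as unimportant.

A grayscale image has brightness values from 0 to 255. If the number of pixels with brightness 1 is below the threshold, we can simply set all of them to 0. Likewise, scanning from the top down, if the number of pixels with brightness 254 is below the threshold, we can set them to 255.

Scanning upward from 0 and downward from 255 in this way, we find the first two brightness values whose pixel counts exceed the threshold.

The range between them can be regarded as the effective range, which is the region framed in blue.

Stretching this range so that it covers the full 0-255 range gives an effect similar to equalization (strictly speaking, this is contrast stretching).

The implementation is as follows: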

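cv::Mat Histogram::stretch1(const cv::Mat& image, int minValue) {
    cv::MatND hist = getHistogram(image);

    // Scan upward for the first bin whose count exceeds minValue
    int imin = 0;
    for (; imin < histSize[0]; imin++) {
        if (hist.at<float>(imin) > minValue) {
            break;
        }
    }

    // Scan downward for the last bin whose count exceeds minValue
    int imax = histSize[0] - 1;
    for (; imax >= 0; imax--) {
        if (hist.at<float>(imax) > minValue) {
            break;
        }
    }

    // No usable range (at most one bin exceeds minValue): return a copy
    // instead of dividing by zero below
    if (imin >= imax) {
        return image.clone();
    }

    // Lookup table: below imin -> 0, above imax -> 255, linear in between
    cv::Mat lookup(cv::Size(1, 256), CV_8U);
    for (int i = 0; i < 256; i++) {
        if (i < imin) {
            lookup.at<uchar>(i) = 0;
        } else if (i > imax) {
            lookup.at<uchar>(i) = 255;
        } else {
            lookup.at<uchar>(i) = static_cast<uchar>(255.0 * (i - imin)
                    / (imax - imin) + 0.5);
        }
    }

    cv::Mat result;
    cv::LUT(image, lookup, result);
    return result;
}

The cv::LUT function was covered in an earlier post.

As can be seen, the stretched histogram keeps the same shape as the original one.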
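To make the mapping concrete, here is a minimal sketch of the same stretching rule without OpenCV. The function name buildStretchLut and the use of std::array are our own choices for illustration and are not part of the Histogram class above; the histogram is assumed to be given as 256 integer counts.

```cpp
#include <array>
#include <cassert>
#include <cstdint>

// Build a 256-entry lookup table that stretches [imin, imax] to [0, 255].
// hist[i] is the number of pixels with brightness i. Bins whose count does
// not exceed minCount are skipped when searching for imin and imax.
std::array<std::uint8_t, 256> buildStretchLut(const std::array<int, 256>& hist,
                                              int minCount) {
    assert(minCount >= 0);

    int imin = 0;
    while (imin < 255 && hist[imin] <= minCount) ++imin;
    int imax = 255;
    while (imax > imin && hist[imax] <= minCount) --imax;

    std::array<std::uint8_t, 256> lut{};
    for (int i = 0; i < 256; ++i) {
        if (i <= imin) {
            lut[i] = 0;
        } else if (i >= imax) {
            lut[i] = 255;
        } else {
            // Only reached when imin < i < imax, so imax - imin is positive.
            lut[i] = static_cast<std::uint8_t>(
                255.0 * (i - imin) / (imax - imin) + 0.5);
        }
    }
    return lut;
}
```

For example, if only the bins 50 to 150 are populated, brightness 50 maps to 0, 150 maps to 255, and 100 lands in the middle of the output range. Every populated bin keeps its count and only moves, which is why the stretched histogram has the same shape as the original.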

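Now let us look at another approach to histogram equalization.

The ideal result of histogram equalization is that every brightness value has the same number of pixels.

Let S(i) be the brightness after equalization of a pixel whose original brightness is i, and let N(i) be the number of pixels whose original brightness is i.

The fraction of all pixels at brightness i is p(i) = N(i) / SUM, where SUM is the total number of pixels.

This gives the formulas

S(0) = p(0)*255

S(1) = [p(0)+p(1)]*255

S(2) = [p(0)+p(1)+p(2)]*255

........

S(255) = [p(0)+p(1)+......+p(255)]*255 = 255

In other words, S(i) is 255 times the cumulative fraction of pixels with brightness at most i. Assigning S(i) to every pixel whose original brightness is i equalizes the image.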
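The cumulative mapping S(i) can be tried on a tiny histogram without OpenCV. The sketch below is only an illustration under our own assumptions: the name buildEqualizeLut is hypothetical, the histogram is given as integer counts, and results are truncated (not rounded) to 8 bits.

```cpp
#include <array>
#include <cassert>
#include <cstdint>

// S(i) = 255 * (N(0) + ... + N(i)) / SUM, computed in integer arithmetic
// so that S(255) is exactly 255.
std::array<std::uint8_t, 256> buildEqualizeLut(const std::array<int, 256>& hist) {
    long long total = 0;
    for (int n : hist) total += n;
    assert(total > 0);  // an empty image has no meaningful mapping

    std::array<std::uint8_t, 256> lut{};
    long long cumulative = 0;
    for (int i = 0; i < 256; ++i) {
        cumulative += hist[i];
        lut[i] = static_cast<std::uint8_t>(255 * cumulative / total);
    }
    return lut;
}
```

With four pixels of brightness 0, 1, 2 and 2, the cumulative fractions are 1/4, 2/4 and 4/4, so the three levels are spread out to 63, 127 and 255.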

cv::Mat Histogram::stretch2(const cv::Mat& image) {
    cv::MatND hist = getHistogram(image);
    cv::Mat lookup(cv::Size(1, 256), CV_8U);
    const double pixNum = static_cast<double>(image.cols) * image.rows;

    // lookup[i] = 255 * (p(0) + ... + p(i)). Accumulating the raw counts
    // avoids the rounding drift of summing 256 scaled floats.
    double cumulative = 0.0;
    for (int i = 0; i < 256; i++) {
        cumulative += hist.at<float>(i);
        lookup.at<uchar>(i) = static_cast<uchar>(255.0 * cumulative / pixNum);
    }

    cv::Mat result;
    cv::LUT(image, lookup, result);
    return result;
}

cv::Mat Histogram::stretch3(const cv::Mat& image) {
    cv::Mat result;
    cv::equalizeHist(image, result);
    return result;
}

Here we define two functions: stretch2 follows the approach just described, and stretch3 calls the standard equalization function provided by OpenCV 2.

Let us look at the results.

The two methods produce almost identical images and histogram shapes.

This suggests that the standard equalization function follows essentially the same idea.

We will not study its source code here.

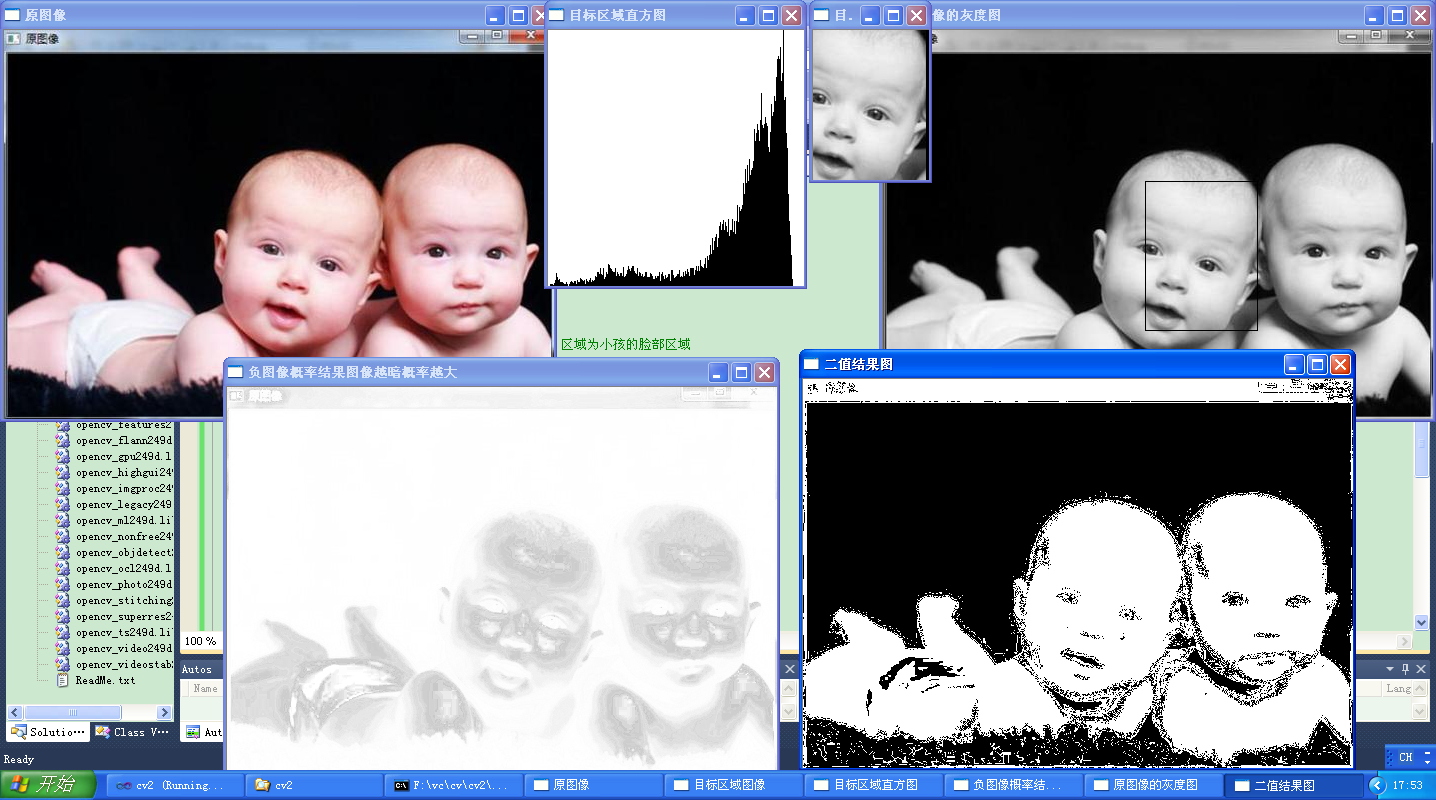

Back-Projecting a Histogram to Detect Specific Image Content
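Before the full listing, here is the core idea of back projection as a minimal sketch without OpenCV: every pixel is replaced by the histogram value of its own brightness, so pixels that are typical of the reference region come out bright. The function backProject and the flat std::vector image are our own simplifications; the histogram is assumed to be normalized to values in [0, 1] and scaled by 255, as in the listing that follows.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Replace each pixel by 255 times the normalized histogram value of its
// brightness. normalizedHist must have 256 non-negative entries.
std::vector<std::uint8_t> backProject(const std::vector<std::uint8_t>& pixels,
                                      const std::vector<float>& normalizedHist) {
    assert(normalizedHist.size() == 256);

    std::vector<std::uint8_t> result;
    result.reserve(pixels.size());
    for (std::uint8_t p : pixels) {
        float v = normalizedHist[p] * 255.0f;
        if (v > 255.0f) v = 255.0f;  // saturate
        result.push_back(static_cast<std::uint8_t>(v + 0.5f));
    }
    return result;
}
```

Thresholding this output then gives a binary mask of the pixels that probably belong to the reference region.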

#include <opencv2/opencv.hpp>
#include "Histogram1D.h"

using namespace cv;
using namespace std;

class ObjectFinder {
private:
    float hranges[2];
    const float* ranges[3];
    int channels[3];
    float threshold;
    cv::MatND histogram;
    cv::SparseMat shistogram;

public:
    ObjectFinder() : threshold(0.1f) {
        // All channels share the same range
        ranges[0] = hranges;
        ranges[1] = hranges;
        ranges[2] = hranges;
    }

    // Set the threshold (a fraction of 255); a value <= 0 disables thresholding
    void setThreshold(float t) {
        threshold = t;
    }

    // Return the threshold
    float getThreshold() {
        return threshold;
    }

    // Set the reference histogram and normalize it.
    // Normalizing from h into the member leaves the caller's histogram untouched.
    void setHistogram(const cv::MatND& h) {
        cv::normalize(h, histogram, 1.0);
    }

    // Find the pixels that are likely under the reference histogram
    cv::Mat find(const cv::Mat& image) {
        cv::Mat result;
        hranges[0] = 0.0;
        hranges[1] = 255.0;
        channels[0] = 0;
        channels[1] = 1;
        channels[2] = 2;

        cv::calcBackProject(&image,
                            1,          // one image
                            channels,   // channels used
                            histogram,  // reference histogram
                            result,     // resulting back projection
                            ranges,     // value ranges
                            255.0);     // scale factor

        // Threshold the back projection to obtain a binary image
        if (threshold > 0.0)
            cv::threshold(result, result, 255 * threshold, 255, cv::THRESH_BINARY);
        return result;
    }
};

int main()
{
    // Load the original image
    cv::Mat initimage = cv::imread("f:\\img\\skin.jpg");
    if (!initimage.data)
        return 0;

    // Show the original image
    cv::namedWindow("Original image");
    cv::imshow("Original image", initimage);

    // Load the image as grayscale
    cv::Mat image = cv::imread("f:\\img\\skin.jpg", 0);
    if (!image.data)
        return 0;

    // Define the target region (the child's face)
    cv::Mat imageROI;
    imageROI = image(cv::Rect(262, 151, 113, 150));

    // Show the target region
    cv::namedWindow("Target region");
    cv::imshow("Target region", imageROI);

    // Compute the histogram of the target region
    Histogram1D h;
    cv::MatND hist = h.getHistogram(imageROI);
    cv::namedWindow("Target region histogram");
    cv::imshow("Target region histogram", h.getHistogramImage(imageROI));

    // Create the finder
    ObjectFinder finder;

    // Pass the target histogram to the finder
    finder.setHistogram(hist);

    // Disable thresholding to get the raw probability map
    finder.setThreshold(-1.0f);

    // Back-project
    cv::Mat result1;
    result1 = finder.find(image);

    // Build the negative image and show the probability map
    cv::Mat tmp;
    result1.convertTo(tmp, CV_8U, -1.0, 255.0);
    cv::namedWindow("Negative probability map (darker = more likely)");
    cv::imshow("Negative probability map (darker = more likely)", tmp);

    // Get the binary back projection
    finder.setThreshold(0.01f);
    result1 = finder.find(image);

    // Draw the selected region on the image
    cv::rectangle(image, cv::Rect(262, 151, 113, 150), cv::Scalar(0, 0, 0));

    // Show the grayscale image
    cv::namedWindow("Grayscale image");
    cv::imshow("Grayscale image", image);

    // Show the binary result
    cv::namedWindow("Binary result");
    cv::imshow("Binary result", result1);

    cv::waitKey();
    return 0;
}

Histogram1D.h

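#if !defined HISTOGRAM
#define HISTOGRAM

#include <opencv2/core/core.hpp>
#include <opencv2/imgproc/imgproc.hpp>
#include <iostream>

using namespace std;
using namespace cv;

class Histogram1D
{
private:
    // Number of bins
    int histSize[1];
    // Range of pixel values
    float hranges[2];
    // Pointer to that range
    const float* ranges[1];
    // Channel to examine
    int channels[1];

public:
    // Constructor
    Histogram1D()
    {
        histSize[0] = 256;
        hranges[0] = 0.0;
        // Note: calcHist treats the upper bound as exclusive, so pixels
        // equal to 255 are only counted if this is set to 256.0
        hranges[1] = 255.0;
        ranges[0] = hranges;
        channels[0] = 0;
    }

    Mat getHistogram(const Mat &image)
    {
        Mat hist;
        // Compute the histogram.
        // Arguments: address of the source image (array), number of input images,
        // channel list, mask, output histogram, histogram dimensionality,
        // number of bins per dimension, value range per dimension
        calcHist(&image, 1, channels, Mat(), hist, 1, histSize, ranges);
        // calcHist takes many parameters, but it is mostly used on a single
        // grayscale or color image. It also accepts several images, as well as
        // multi-channel images. The sixth parameter gives the histogram's
        // dimensionality.
        return hist;
    }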

    Mat getHistogramImage(const Mat &image)
    {
        // Compute the histogram first
        Mat hist = getHistogram(image);
        // Get the minimum and maximum bin values
        double maxVal = 0;
        double minVal = 0;
        // minMaxLoc returns the minimum and maximum; the last two arguments are
        // the locations of the minimum and maximum, and 0 means "not needed"
        minMaxLoc(hist, &minVal, &maxVal, 0, 0);
        // Canvas for the histogram, with a white background
        Mat histImg(histSize[0], histSize[0], CV_8U, Scalar(255));
        // Height of the tallest bar: the full image height
        // (the commented-out line would use 90% of the number of bins instead)
        //int hpt = static_cast<int>(0.9*histSize[0]);
        int hpt = static_cast<int>(histSize[0]);
        // Draw one vertical line per bin
        for (int h = 0; h < histSize[0]; h++)
        {
            float binVal = hist.at<float>(h);
            int intensity = static_cast<int>(binVal * hpt / maxVal);
            //int intensity = static_cast<int>(binVal);
            line(histImg, Point(h, histSize[0]), Point(h, histSize[0] - intensity), Scalar::all(0));
        }
        return histImg;
    }

    Mat applyLookUp(const Mat& image, const Mat& lookup)
    {
        Mat result;
        LUT(image, lookup, result);
        return result;
    }

    Mat strech(const Mat &image, int minValue = 0)
    {
        // Compute the histogram first
        Mat hist = getHistogram(image);
        // Left end: first bin whose count exceeds minValue
        int imin = 0;
        for (; imin < histSize[0]; imin++)
        {
            cout << hist.at<float>(imin) << endl; // debug output of the bin counts
            if (hist.at<float>(imin) > minValue)
                break;
        }
        // Right end: last bin whose count exceeds minValue
        int imax = histSize[0] - 1;
        for (; imax >= 0; imax--)
        {
            if (hist.at<float>(imax) > minValue)
                break;
        }
        // No usable range: return a copy instead of dividing by zero below
        if (imin >= imax)
            return image.clone();
        // Build the lookup table
        int dim(256);
        Mat lookup(1, &dim, CV_8U);
        for (int i = 0; i < 256; i++)
        {
            if (i < imin)
            {
                lookup.at<uchar>(i) = 0;
            }
            else if (i > imax)
            {
                lookup.at<uchar>(i) = 255;
            }
            else
            {
                lookup.at<uchar>(i) = static_cast<uchar>(255.0 * (i - imin) / (imax - imin) + 0.5);
            }
        }
        Mat result;
        result = applyLookUp(image, lookup);
        return result;
    }

    Mat equalize(const Mat &image)
    {
        Mat result;
        equalizeHist(image, result);
        return result;
    }
};

#endif

Shape Features

#include "stdafx.h"
#include <cxcore.h>
#include <cv.h>
#include <highgui.h>
#include <math.h>
#include <iostream>

using namespace std;

int main()
{
    IplImage *src = cvLoadImage("c:\\img\\f.jpg", 0);
    if (!src)
        return -1;
    // Keep an untouched copy: cvFindContours modifies its input image
    IplImage *image = cvCloneImage(src);
    cvNamedWindow("src", 1);
    cvNamedWindow("dst", 1);
    cvShowImage("src", src);
    CvMemStorage *storage = cvCreateMemStorage(0);
    // cvFindContours fills in seq, so no sequence has to be created beforehand
    CvSeq *seq = NULL;
    CvSeq *tempSeq = NULL;
    // New image on which the contours are drawn
    IplImage *dst = cvCreateImage(cvGetSize(src), 8, 3);
    cvZero(dst); // set to 0
    double length, area;
    // Extract the contours
    int cnt = cvFindContours(src, storage, &seq); // returns the number of contours
    cout << "number of contours " << cnt << endl;
    // For every contour, compute and print its parameters:
    // length, area, bounding rectangle, minimum-area rectangle
    CvRect rect;
    CvBox2D box;
    double axislong, axisShort; // long axis and short axis
    double temp1 = 0.0, temp2 = 0.0;
    double Rectangle_degree; // rectangularity
    double long2short; // aspect ratio
    double x0, y0;
    long sumX = 0, sumY = 0;
    double sum = 0.0;
    int i, j, m, n;
    unsigned char* ptr;
    double UR; // mean distance from the region's center of gravity to the contour
    double PR; // mean squared deviation of that distance over the contour points
    CvPoint *contourPoint;
    int count = 0;
    double CDegree; // circularity
    CvPoint *A, *B, *C;
    double AB, BC, AC;
    double cosA, sinA;
    double tempR, inscribedR;

    for (tempSeq = seq; tempSeq != NULL; tempSeq = tempSeq->h_next)
    {
        //tempSeq = seq->h_next;
        length = cvArcLength(tempSeq);
        area = cvContourArea(tempSeq);
        cout << "Length = " << length << endl;
        cout << "Area = " << area << endl;
        cout << "num of point " << tempSeq->total << endl;
        // Upright bounding rectangle
        rect = cvBoundingRect(tempSeq, 1);
        // Draw the contour and its bounding rectangle
        cvDrawContours(dst, tempSeq, CV_RGB(255,0,0), CV_RGB(255,0,0), 0);
        cvRectangleR(dst, rect, CV_RGB(0,255,0));
        cvShowImage("dst", dst);
        //cvWaitKey();
        // Draw the minimum enclosing circle of the contour
        CvPoint2D32f center; // sub-pixel precision, hence floating point
        float radius;
        cvMinEnclosingCircle(tempSeq, &center, &radius);
        cvCircle(dst, cvPointFrom32f(center), cvRound(radius), CV_RGB(100,100,100));
        cvShowImage("dst", dst);
        //cvWaitKey();
        // Fit an approximating ellipse (it may be rotated).
        // The fit fails on contours with too few points, so skip those;
        // the limit of 6 is a conservative assumption.
        if (tempSeq->total >= 6)
        {
            CvBox2D ellipse = cvFitEllipse2(tempSeq);
            cvEllipseBox(dst, ellipse, CV_RGB(255,255,0));
            cvShowImage("dst", dst);
        }
        //cvWaitKey();
        // Draw the minimum-area bounding rectangle
        CvPoint2D32f pt[4];
        box = cvMinAreaRect2(tempSeq, 0);
        cvBoxPoints(box, pt);
        for (int i = 0; i < 4; ++i) {
            cvLine(dst, cvPointFrom32f(pt[i]), cvPointFrom32f(pt[(i + 1) % 4]), CV_RGB(0,0,255));
        }
        cvShowImage("dst", dst);
        //cvWaitKey();
        // From here on we analyze the shape features
        // Long axis and short axis: the two side lengths of the minimum-area rectangle
        temp1 = sqrt(pow(pt[1].x - pt[0].x, 2) + pow(pt[1].y - pt[0].y, 2));
        temp2 = sqrt(pow(pt[2].x - pt[1].x, 2) + pow(pt[2].y - pt[1].y, 2));
        if (temp1 > temp2)
        {
            axislong = temp1;
            axisShort = temp2;
        }
        else
        {
            axislong = temp2;
            axisShort = temp1;
        }
        cout << "long axis: " << axislong << endl;
        cout << "short axis: " << axisShort << endl;
        // Rectangularity: ratio of the contour area to the area of the
        // minimum-area bounding rectangle (which may be rotated)
        Rectangle_degree = (double)area / (axisShort * axislong);
        cout << "Rectangle degree :" << Rectangle_degree << endl;
        // Aspect ratio: long axis over short axis of the minimum-area bounding rectangle
        long2short = axislong / axisShort;
        cout << "ratio of long axis to short axis: " << long2short << endl;

        // Sphericity: set aside for now, because the inscribed circle of the
        // contour cannot be computed yet.
        // Attempt: enumerate every triple of contour points and take the
        // smallest circumscribed radius as the inscribed-circle radius.
        // This way of finding the largest inscribed circle is wrong and still
        // needs to be fixed, so it is left commented out.
        /*
        for (int i = 0; i < tempSeq->total - 2; i++)
        {
            for (int j = i + 1; j < tempSeq->total - 1; j++)
            {
                for (int m = j + 1; m < tempSeq->total; m++)
                {
                    // Radius of the circle through three known points
                    A = (CvPoint*)cvGetSeqElem(tempSeq, i);
                    B = (CvPoint*)cvGetSeqElem(tempSeq, j);
                    C = (CvPoint*)cvGetSeqElem(tempSeq, m);
                    AB = sqrt(pow((double)A->x - B->x, 2) + pow((double)A->y - B->y, 2));
                    AC = sqrt(pow((double)A->x - C->x, 2) + pow((double)A->y - C->y, 2));
                    BC = sqrt(pow((double)B->x - C->x, 2) + pow((double)B->y - C->y, 2));
                    cosA = ((B->x - A->x)*(C->x - A->x) + (B->y - A->y)*(C->y - A->y)) / (AB*AC);
                    sinA = sqrt(1 - pow(cosA, 2));
                    tempR = BC / (2*sinA);
                    if (m == 2)
                    {
                        inscribedR = tempR;
                    }
                    else
                    {
                        if (tempR < inscribedR)
                        {
                            inscribedR = tempR;
                        }
                    }
                }
            }
        }
        // Print the radius of the largest inscribed circle
        cout << "radius of max inscribed circle " << inscribedR << endl;
        */

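        // Circularity, assuming the inside of the contour is solid
        // Center of gravity (x0, y0) of the region
        sumX = 0;
        sumY = 0;
        long pixelCount = 0;
        // Read from the untouched copy; src was modified by cvFindContours
        for (int i = 0; i < image->height; i++)
        {
            for (int j = 0; j < image->width; j++)
            {
                ptr = (unsigned char *)image->imageData + i*image->widthStep + j;
                if ((*ptr) > 128)
                {
                    sumX += (long)j;
                    sumY += (long)i;
                    pixelCount++;
                }
            }
        }
        // Divide by the number of bright pixels rather than the contour area,
        // which can be zero and differs from the pixel count
        x0 = pixelCount > 0 ? (double)sumX / pixelCount : 0.0;
        y0 = pixelCount > 0 ? (double)sumY / pixelCount : 0.0;
        cout << "center of gravity " << x0 << " " << y0 << endl;
        // Mean distance from the contour points to the center of gravity
        sum = 0;
        count = 0;
        for (m = 0; m < tempSeq->total; m++)
        {
            contourPoint = (CvPoint*)cvGetSeqElem(tempSeq, m);
            sum += sqrt(pow(contourPoint->x - x0, 2) + pow(contourPoint->y - y0, 2));
            count++;
        }
        UR = sum / count;
        cout << "mean distance to center of gravity " << UR << endl;
        // Mean squared deviation of the distance from the center of gravity to the contour points
        sum = 0;
        for (m = 0; m < tempSeq->total; m++)
        {
            contourPoint = (CvPoint*)cvGetSeqElem(tempSeq, m);
            temp1 = sqrt(pow(contourPoint->x - x0, 2) + pow(contourPoint->y - y0, 2));
            sum += pow(temp1 - UR, 2);
        }
        PR = sum / count;
        cout << "mean square error of distance to center of gravity " << PR << endl;
        // Circularity: the rounder the shape, the smaller PR and the larger the ratio
        CDegree = UR / PR;
        cout << "degree of circle " << CDegree << endl;
        // Central moments (not implemented)
        cvWaitKey(0);
    }
    cvReleaseImage(&src);
    cvReleaseImage(&image);
    cvReleaseImage(&dst);
    cvReleaseMemStorage(&storage);
    return 0;
}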
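The three features computed in the listing (rectangularity, aspect ratio, circularity) are plain arithmetic once the contour points and the rectangle sides are known. The sketch below restates them without OpenCV so they can be checked by hand; the types Pt and Circularity and all function names are our own and do not come from the listing.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

struct Pt { double x, y; };

struct Circularity {
    double meanDist;  // UR in the listing
    double variance;  // PR in the listing
    double degree;    // UR / PR
};

// Mean distance from (cx, cy) to the contour points, the mean squared
// deviation of that distance, and their ratio.
Circularity circularity(const std::vector<Pt>& contour, double cx, double cy) {
    assert(!contour.empty());
    double sum = 0.0;
    for (const Pt& p : contour) sum += std::hypot(p.x - cx, p.y - cy);
    const double mean = sum / contour.size();

    double sq = 0.0;
    for (const Pt& p : contour) {
        const double d = std::hypot(p.x - cx, p.y - cy) - mean;
        sq += d * d;
    }
    const double variance = sq / contour.size();
    // A perfect circle has zero variance; report that case as infinity.
    return {mean, variance, variance > 0.0 ? mean / variance : INFINITY};
}

// Contour area divided by the area of the minimum-area bounding rectangle.
double rectangularity(double area, double longAxis, double shortAxis) {
    assert(longAxis > 0.0 && shortAxis > 0.0);
    return area / (longAxis * shortAxis);
}

// Long side of the minimum-area bounding rectangle over its short side.
double aspectRatio(double longAxis, double shortAxis) {
    assert(shortAxis > 0.0);
    return longAxis / shortAxis;
}
```

A filled 10 x 5 rectangle has rectangularity 1 and aspect ratio 2. Points that all lie at the same distance from the center have (numerically almost) zero variance, so the circularity ratio becomes very large.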

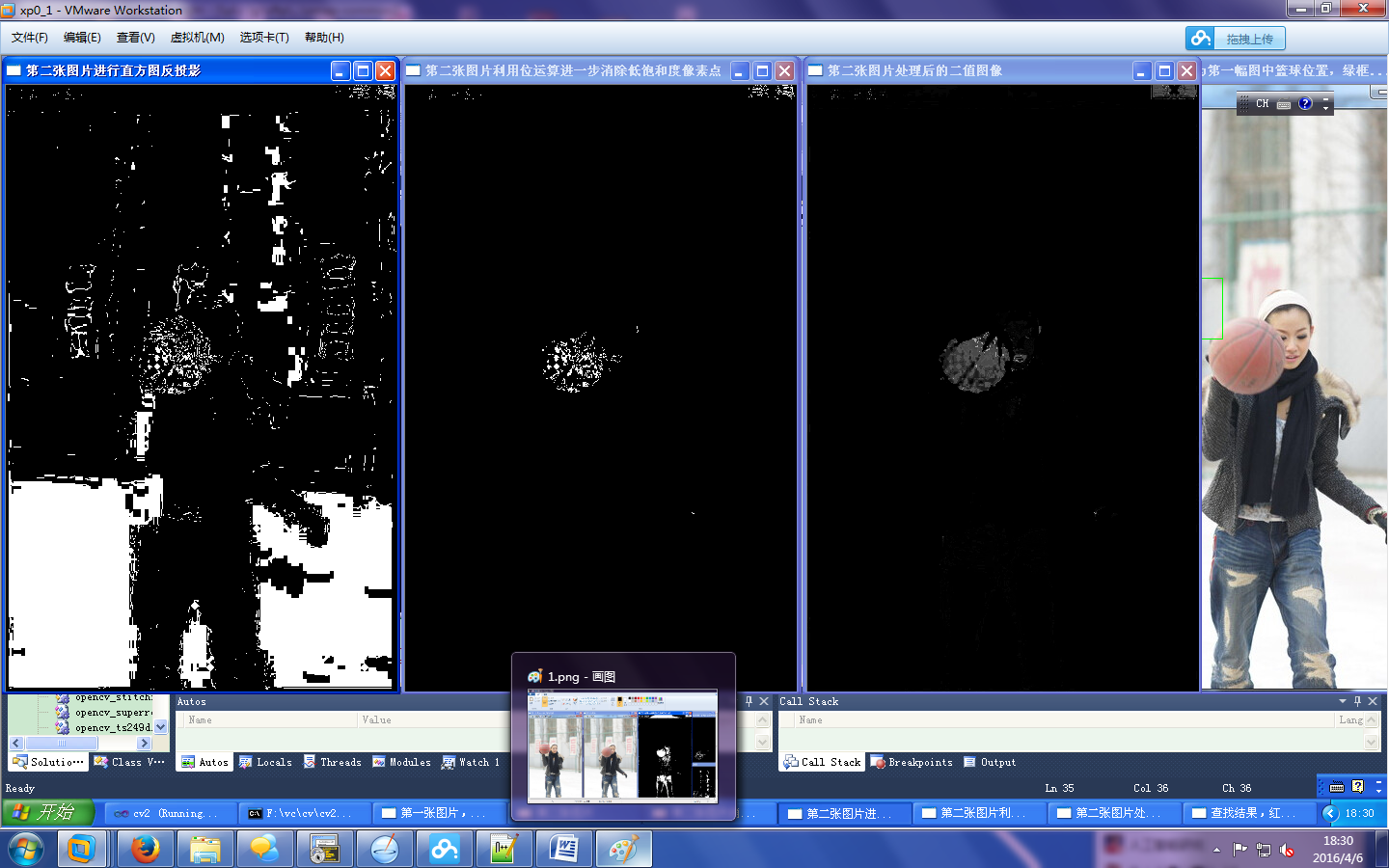

Finding an Object with the Mean Shift Algorithm
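As a rough picture of what mean shift does with a back-projected probability map, the sketch below repeatedly moves a window so that its center sits on the weighted centroid of the values inside it, and stops when the window no longer moves. It is a simplified stand-in written for this post, not OpenCV's implementation: the Window struct, the function meanShiftSketch and the row-major std::vector map are all assumptions.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <vector>

struct Window { int x, y, w, h; };

// Shift win toward the weighted centroid of prob (row-major, cols x rows)
// until it stops moving or maxIter is reached. Returns the number of shifts.
int meanShiftSketch(const std::vector<float>& prob, int cols, int rows,
                    Window& win, int maxIter) {
    assert(static_cast<int>(prob.size()) == cols * rows);
    assert(win.w > 0 && win.h > 0);
    assert(win.x >= 0 && win.y >= 0 &&
           win.x + win.w <= cols && win.y + win.h <= rows);

    int iter = 0;
    for (; iter < maxIter; ++iter) {
        // Zeroth and first moments of the values inside the window.
        double m00 = 0.0, m10 = 0.0, m01 = 0.0;
        for (int y = win.y; y < win.y + win.h; ++y) {
            for (int x = win.x; x < win.x + win.w; ++x) {
                const double p = prob[y * cols + x];
                m00 += p;
                m10 += p * x;
                m01 += p * y;
            }
        }
        if (m00 <= 0.0) break;  // nothing to follow inside the window

        // Top-left corner that puts the window center on the centroid.
        int nx = static_cast<int>(std::lround(m10 / m00 - (win.w - 1) / 2.0));
        int ny = static_cast<int>(std::lround(m01 / m00 - (win.h - 1) / 2.0));
        nx = std::max(0, std::min(nx, cols - win.w));
        ny = std::max(0, std::min(ny, rows - win.h));
        if (nx == win.x && ny == win.y) break;  // converged
        win.x = nx;
        win.y = ny;
    }
    return iter;
}
```

In the program below, the probability map comes from the hue back projection of the second image, and the starting window is the basketball's position in the first image.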

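#include <opencv2/opencv.hpp>
#include <iostream>
#include <vector>
#include "Histogram1D.h"
#include "ContentFinder.h"
#include "colorhistogram.h"

using namespace cv;
using namespace std;

int main()
{
    // Load the reference image
    cv::Mat image = cv::imread("f:\\img\\ball.jpg");
    if (!image.data)
        return 0;

    // Define the object to look for
    cv::Mat imageROI = image(cv::Rect(85, 200, 64, 64));

    // Compute the hue histogram before drawing on the image: imageROI shares
    // its pixels with image, so the red frame would otherwise be counted too
    ColorHistogram hc;
    cv::MatND colorhist = hc.getHueHistogram(imageROI);

    cv::rectangle(image, cv::Rect(85, 200, 64, 64), cv::Scalar(0, 0, 255));

    // Show the reference image
    cv::namedWindow("First image with the basketball marked");
    cv::imshow("First image with the basketball marked", image);

    // Load the target image
    image = cv::imread("f:\\img\\ball2.jpg");
    if (!image.data)
        return 0;

    // Show the target image
    cv::namedWindow("Second image");
    cv::imshow("Second image", image);

    // Convert the BGR image to HSV
    cv::Mat hsv;
    cv::cvtColor(image, hsv, CV_BGR2HSV);

    // Split the channels
    vector<cv::Mat> v;
    cv::split(hsv, v);

    // Mask out pixels with low saturation
    int minSat = 65;
    cv::threshold(v[1], v[1], minSat, 255, cv::THRESH_BINARY);
    cv::namedWindow("Second image: low-saturation pixels removed");
    cv::imshow("Second image: low-saturation pixels removed", v[1]);

    // Back-project the histogram
    ContentFinder finder;
    finder.setHistogram(colorhist);
    finder.setThreshold(0.3f);
    int ch[1] = {0};
    cv::Mat result = finder.find(hsv, 0.0f, 180.0f, ch, 1);
    cv::namedWindow("Second image: histogram back projection");
    cv::imshow("Second image: histogram back projection", result);

    // Use a bitwise AND to remove the low-saturation pixels
    cv::bitwise_and(result, v[1], result);
    cv::namedWindow("Second image: back projection masked by saturation");
    cv::imshow("Second image: back projection masked by saturation", result);

    // Get the back-projected probability map (no thresholding)
    finder.setThreshold(-1.0f);
    result = finder.find(hsv, 0.0f, 180.0f, ch, 1);
    cv::bitwise_and(result, v[1], result);
    cv::namedWindow("Second image: masked probability map");
    cv::imshow("Second image: masked probability map", result);

    // Start from the basketball's position in the first image (red frame)
    cv::Rect rect(85, 200, 64, 64);
    cv::rectangle(image, rect, cv::Scalar(0, 0, 255));

    cv::TermCriteria criteria(cv::TermCriteria::MAX_ITER, 10, 0.01);
    cout << "mean shift iterations = " << cv::meanShift(result, rect, criteria) << endl;

    // rect now holds the new position (green frame)
    cv::rectangle(image, rect, cv::Scalar(0, 255, 0));

    // Show the result
    cv::namedWindow("Result: red = position in first image, green = current position");
    cv::imshow("Result: red = position in first image, green = current position", image);

    cv::waitKey();
    return 0;
}

ContentFinder.h

#if !defined CONTENTFINDER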

#define CONTENTFINDER

#include <opencv2/core/core.hpp>
#include <opencv2/highgui/highgui.hpp>
#include <opencv2/imgproc/imgproc.hpp>

using namespace cv;

class ContentFinder
{
private:
    float hranges[2];
    const float* ranges[3];
    int channels[3];
    float threshold;
    Mat histogram;

public:
    ContentFinder() : threshold(-1.0f)
    {
        // All channels share the same range
        ranges[0] = hranges;
        ranges[1] = hranges;
        ranges[2] = hranges;
    }

    // Set the threshold, in [0,1]; a value <= 0 disables thresholding
    void setThreshold(float t)
    {
        threshold = t;
    }

    // Get the threshold
    float getThreshold()
    {
        return threshold;
    }

    // Set the reference histogram and normalize it.
    // Normalizing from h into the member leaves the caller's histogram untouched.
    void setHistogram(const Mat& h)
    {
        normalize(h, histogram, 1.0);
    }

    // Simple search using histogram back projection
    Mat find(const Mat& image)
    {
        Mat result;
        hranges[0] = 0.0;
        hranges[1] = 255.0;
        channels[0] = 0;
        channels[1] = 1;
        channels[2] = 2;
        calcBackProject(&image, 1, channels, histogram, result, ranges, 255.0);
        if (threshold > 0.0)
        {
            cv::threshold(result, result, 255 * threshold, 255, cv::THRESH_BINARY);
        }
        return result;
    }

    // More general search using histogram back projection, with the value
    // range and the channel list (dim entries, at most 3) given by the caller
    Mat find(const Mat &image, float minValue, float maxValue, int *channels, int dim)
    {
        Mat result;
        hranges[0] = minValue;
        hranges[1] = maxValue;
        for (int i = 0; i < dim; i++)
        {
            this->channels[i] = channels[i];
        }
        calcBackProject(&image, 1, channels, histogram, result, ranges, 255.0);
        if (threshold > 0.0)
            cv::threshold(result, result, 255 * threshold, 255, THRESH_BINARY);
        return result;
    }
};

#endif

colorhistogram.h

#if !defined COLORHISTOGRAM

#define COLORHISTOGRAM

#include <opencv2/core/core.hpp>
#include <opencv2/imgproc/imgproc.hpp>

using namespace cv;

class ColorHistogram
{
private:
    int histSize[3];
    float hranges[2];
    const float* ranges[3];
    int channels[3];

public:
    // Constructor
    ColorHistogram()
    {
        histSize[0] = histSize[1] = histSize[2] = 256;
        hranges[0] = 0.0;
        hranges[1] = 255.0;
        ranges[0] = hranges;
        ranges[1] = hranges;
        ranges[2] = hranges;
        channels[0] = 0;
        channels[1] = 1;
        channels[2] = 2;
    }

    // Compute the histogram of a color image
    Mat getHistogram(const Mat& image)
    {
        Mat hist;
        // BGR histogram
        hranges[0] = 0.0;
        hranges[1] = 255.0;
        channels[0] = 0;
        channels[1] = 1;
        channels[2] = 2;
        // Compute
        calcHist(&image, 1, channels, Mat(), hist, 3, histSize, ranges);
        return hist;
    }

    // Compute the hue histogram
    Mat getHueHistogram(const Mat &image)
    {
        Mat hist;
        Mat hue;
        // Convert to HSV
        cvtColor(image, hue, CV_BGR2HSV);
        // Parameters for a 1D histogram over the hue channel
        hranges[0] = 0.0;
        hranges[1] = 180.0;
        channels[0] = 0;
        // Compute the histogram
        calcHist(&hue, 1, channels, Mat(), hist, 1, histSize, ranges);
        return hist;
    }

    // Reduce the number of colors
    Mat colorReduce(const Mat &image, int div = 64)
    {
        int n = static_cast<int>(log(static_cast<double>(div)) / log(2.0));
        uchar mask = 0xFF << n;
        Mat_<Vec3b>::const_iterator it = image.begin<Vec3b>();
        Mat_<Vec3b>::const_iterator itend = image.end<Vec3b>();
        // Set up the output image
        Mat result(image.rows, image.cols, image.type());
        Mat_<Vec3b>::iterator itr = result.begin<Vec3b>();
        for (; it != itend; ++it, ++itr)
        {
            (*itr)[0] = ((*it)[0] & mask) + div/2;
            (*itr)[1] = ((*it)[1] & mask) + div/2;
            (*itr)[2] = ((*it)[2] & mask) + div/2;
        }
        return result;
    }
};

#endif

Histogram1D.h is the same file as listed in the back-projection section above.

Retrieving Similar Images by Comparing Histograms

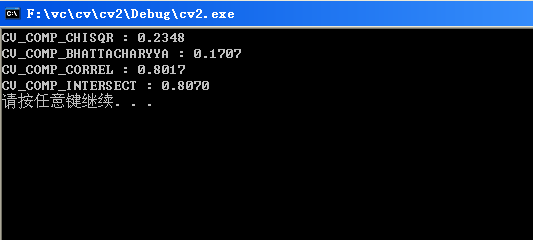

CompareHist (cvCompareHist in the C API) compares the distributions of two histograms. Four methods are available, defined as follows:

#define CV_COMP_CORREL 0

#define CV_COMP_CHISQR 1

#define CV_COMP_INTERSECT 2

#define CV_COMP_BHATTACHARYYA 3

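These methods are, respectively, the correlation coefficient, chi-square, intersection, and the Bhattacharyya distance, which measures how much two probability distributions overlap. All of them are statistical measures of how similar two histograms are. Here we simply apply them to grayscale histograms, so the comparison is based on the tonal structure of the images. Below we briefly use three cases to analyze how these distance measures behave.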
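For reference, the four measures can be written down in a few lines each. The sketch below follows the usual textbook definitions as we understand them (chi-square divides by the first histogram's bin, and the Bhattacharyya term is normalized by the two histogram sums); it is not taken from OpenCV's source, and all function names are our own.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

using Hist = std::vector<double>;

// Correlation coefficient: 1 means identical shape, larger is more similar.
double correlation(const Hist& a, const Hist& b) {
    assert(a.size() == b.size() && !a.empty());
    double meanA = 0.0, meanB = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) { meanA += a[i]; meanB += b[i]; }
    meanA /= a.size();
    meanB /= b.size();
    double num = 0.0, varA = 0.0, varB = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        num  += (a[i] - meanA) * (b[i] - meanB);
        varA += (a[i] - meanA) * (a[i] - meanA);
        varB += (b[i] - meanB) * (b[i] - meanB);
    }
    const double den = std::sqrt(varA * varB);
    return den > 0.0 ? num / den : 1.0;
}

// Chi-square: 0 for identical histograms, smaller is more similar.
double chiSquare(const Hist& a, const Hist& b) {
    assert(a.size() == b.size());
    double d = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i)
        if (a[i] > 0.0) d += (a[i] - b[i]) * (a[i] - b[i]) / a[i];
    return d;
}

// Intersection: sum of bin-wise minima, larger is more similar.
double intersection(const Hist& a, const Hist& b) {
    assert(a.size() == b.size());
    double d = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) d += std::min(a[i], b[i]);
    return d;
}

// Bhattacharyya distance: 0 for identical, 1 for non-overlapping histograms.
double bhattacharyya(const Hist& a, const Hist& b) {
    assert(a.size() == b.size());
    double sumA = 0.0, sumB = 0.0, overlap = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        sumA += a[i];
        sumB += b[i];
        overlap += std::sqrt(a[i] * b[i]);
    }
    const double norm = std::sqrt(sumA * sumB);
    if (norm <= 0.0) return 1.0;
    return std::sqrt(std::max(0.0, 1.0 - overlap / norm));
}
```

Comparing a normalized histogram with itself gives correlation 1, chi-square 0, intersection 1 and Bhattacharyya distance 0. Two histograms with no common non-empty bin give intersection 0 and Bhattacharyya distance 1.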

#include <stdio.h>
#include <stdlib.h>
#include "opencv2/highgui/highgui.hpp"
#include "opencv/cv.hpp"

// Histogram parameters
int HistogramBins = 256;
float HistogramRange1[2] = {0, 255};
float *HistogramRange[1] = {&HistogramRange1[0]};

/*
 * imagefile1: path of the first image
 * imagefile2: path of the second image
 * Prints the result of all four comparison methods (CV_COMP_CHISQR,
 * CV_COMP_BHATTACHARYYA, CV_COMP_CORREL, CV_COMP_INTERSECT).
 * Returns 0 on success, -1 if an image cannot be loaded.
 */
int CompareHist(const char* imagefile1, const char* imagefile2)
{
    IplImage *image1 = cvLoadImage(imagefile1, 0);
    IplImage *image2 = cvLoadImage(imagefile2, 0);
    if (!image1 || !image2)
    {
        printf("cannot load the input images\n");
        if (image1) cvReleaseImage(&image1);
        if (image2) cvReleaseImage(&image2);
        return -1;
    }

    CvHistogram *Histogram1 = cvCreateHist(1, &HistogramBins, CV_HIST_ARRAY, HistogramRange);
    CvHistogram *Histogram2 = cvCreateHist(1, &HistogramBins, CV_HIST_ARRAY, HistogramRange);

    cvCalcHist(&image1, Histogram1);
    cvCalcHist(&image2, Histogram2);

    cvNormalizeHist(Histogram1, 1);
    cvNormalizeHist(Histogram2, 1);

    // CV_COMP_CHISQR and CV_COMP_BHATTACHARYYA: the smaller the value, the more similar the images
    printf("CV_COMP_CHISQR : %.4f\n", cvCompareHist(Histogram1, Histogram2, CV_COMP_CHISQR));
    printf("CV_COMP_BHATTACHARYYA : %.4f\n", cvCompareHist(Histogram1, Histogram2, CV_COMP_BHATTACHARYYA));

    // CV_COMP_CORREL and CV_COMP_INTERSECT: the larger the value, the more similar the images
    printf("CV_COMP_CORREL : %.4f\n", cvCompareHist(Histogram1, Histogram2, CV_COMP_CORREL));
    printf("CV_COMP_INTERSECT : %.4f\n", cvCompareHist(Histogram1, Histogram2, CV_COMP_INTERSECT));

    cvReleaseImage(&image1);
    cvReleaseImage(&image2);
    cvReleaseHist(&Histogram1);
    cvReleaseHist(&Histogram2);
    return 0;
}

int main(int argc, char* argv[])
{
    if (argc < 3)
    {
        printf("usage: %s image1 image2\n", argv[0]);
        return -1;
    }
    CompareHist(argv[1], argv[2]);
    //CompareHist("d:\\camera.jpg", "d:\\camera1.jpg");
    system("pause");
    return 0;
}

Figure 1

Figure 2

Result

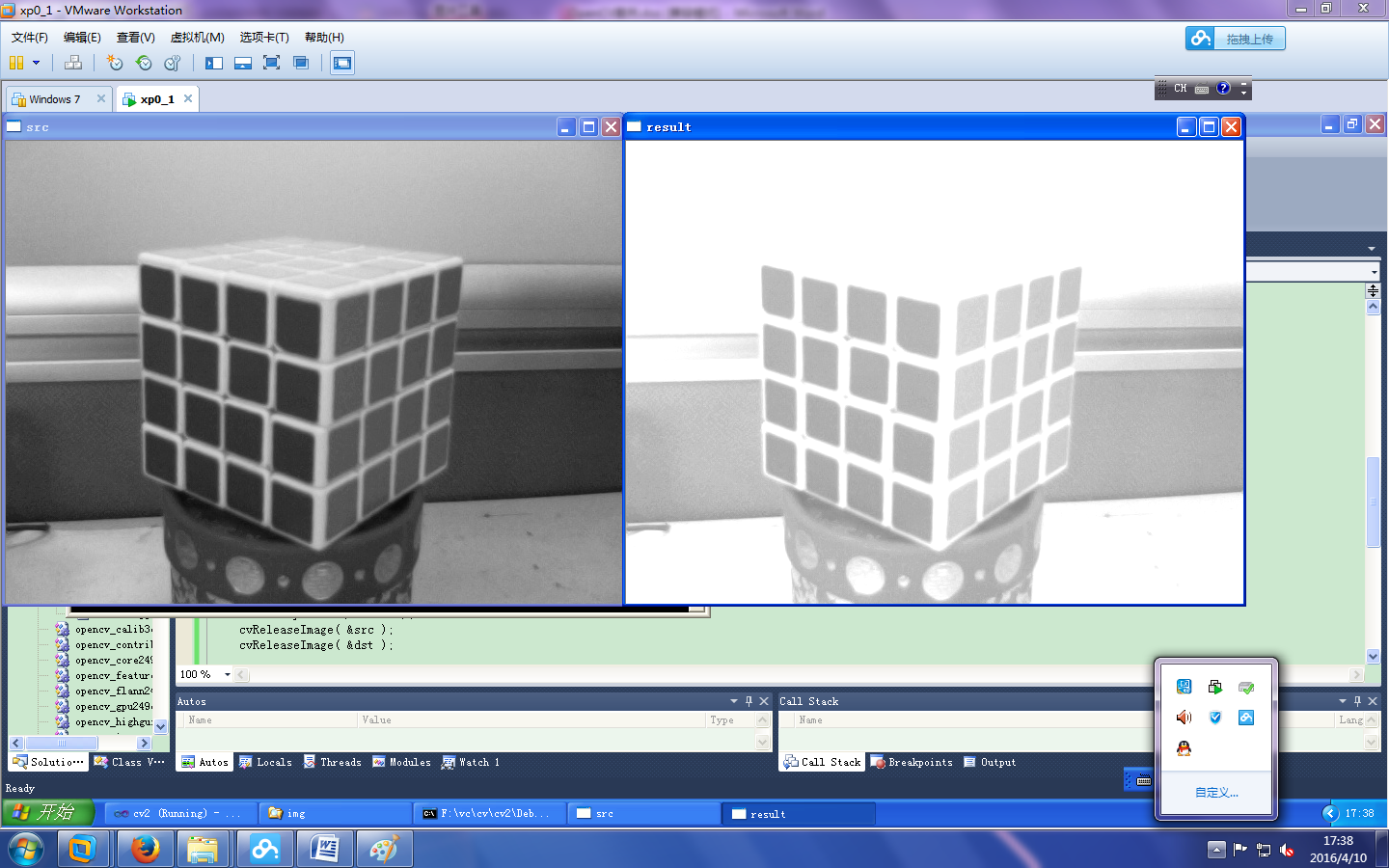

Brightness Transformation

#include <math.h>
#include "opencv/cv.h"
#include "opencv/highgui.h"

/*
src and dst are grayscale, 8-bit images;
Default input value:
[low, high] = [0,1]; X-Direction
[bottom, top] = [0,1]; Y-Direction
gamma ;
if adjust successfully, return 0, otherwise, return non-zero.
*/
int ImageAdjust(IplImage* src, IplImage* dst,
                double low, double high,   // X direction: low and high are the intensities of src
                double bottom, double top, // Y direction: mapped to bottom and top of dst
                double gamma)
{
    // Reject any parameter outside [0,1] and an empty or reversed input range.
    // (The original test chained everything with &&, so it could never fail.)
    if (low < 0 || low > 1 || high < 0 || high > 1 ||
        bottom < 0 || bottom > 1 || top < 0 || top > 1 || low >= high)
        return -1;

    double low2 = low * 255;
    double high2 = high * 255;
    double bottom2 = bottom * 255;
    double top2 = top * 255;
    double err_in = high2 - low2;
    double err_out = top2 - bottom2;

    int x, y;
    double val;

    // intensity transform
    for (y = 0; y < src->height; y++)
    {
        for (x = 0; x < src->width; x++)
        {
            val = ((uchar*)(src->imageData + src->widthStep*y))[x];
            // Normalize to [0,1] and clamp, so pow() never sees a negative base
            val = (val - low2) / err_in;
            if (val < 0) val = 0;
            if (val > 1) val = 1;
            val = pow(val, gamma) * err_out + bottom2;
            // Keep the result inside the valid 8-bit range
            if (val > 255) val = 255;
            if (val < 0) val = 0;
            ((uchar*)(dst->imageData + dst->widthStep*y))[x] = (uchar) val;
        }
    }
    return 0;
}

int main( int argc, char** argv )
{
    IplImage *src = 0, *dst = 0;

    src = cvLoadImage("f:\\img\\c2.jpg", 0); // force to gray image
    if (src == 0) return 0;

    cvNamedWindow( "src", 1 );
    cvNamedWindow( "result", 1 );

    // Image adjust
    dst = cvCloneImage(src);
    // Input range [0, 0.5], output range [0.5, 1], gamma = 1
    if (ImageAdjust( src, dst, 0, 0.5, 0.5, 1, 1) != 0) return -1;

    cvShowImage( "src", src );
    cvShowImage( "result", dst );
    cvWaitKey(0);

    cvDestroyWindow("src");
    cvDestroyWindow("result");
    cvReleaseImage( &src );
    cvReleaseImage( &dst );
    return 0;
}
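The transform applied to each pixel can be checked on single values. The sketch below is a standalone restatement under our own assumptions: the name adjustLevel is hypothetical, the input is clamped to [low, high] before the gamma step, and the result is rounded to the nearest integer, whereas the listing above truncates.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstdint>

// Map one 8-bit value: [low, high] of the input range goes to
// [bottom, top] of the output range, with a gamma curve in between.
// All range parameters are fractions of 255 in [0, 1].
std::uint8_t adjustLevel(std::uint8_t v, double low, double high,
                         double bottom, double top, double gamma) {
    assert(0.0 <= low && low < high && high <= 1.0);
    assert(0.0 <= bottom && bottom <= 1.0);
    assert(0.0 <= top && top <= 1.0);
    assert(gamma > 0.0);

    // Position of v inside the input range, clamped to [0, 1].
    double t = (v / 255.0 - low) / (high - low);
    t = std::min(1.0, std::max(0.0, t));

    double out = (std::pow(t, gamma) * (top - bottom) + bottom) * 255.0;
    out = std::min(255.0, std::max(0.0, out));
    return static_cast<std::uint8_t>(out + 0.5);
}
```

With the parameters used in main (input [0, 0.5], output [0.5, 1], gamma 1), black becomes mid-gray and every input at or above half brightness saturates to white, so the image gets brighter overall.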
