CIFAR-10 is a small dataset for recognizing everyday objects, collected by Alex Krizhevsky, Vinod Nair, and Geoffrey Hinton. It contains RGB color images in 10 classes: airplane, automobile, bird, cat, deer, dog, frog, horse, ship, and truck. Each image is 32×32 pixels, and the dataset has 50,000 training images and 10,000 test images.

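Here we use a CNN to extract features and train a model, then use the trained model to predict on the test set.

We use tensorboardX to plot the loss.

In the code, a SummaryWriter writes the events file. In this example the files are written under E:\pythonProject1\runs\scalar (note that this is the name of a directory, not of a file).

Run the following command in cmd: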

```
tensorboard --logdir=E:\pythonProject1\runs\scalar
```

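This produces the following output:

Open http://localhost:6006/ in a browser to reach the TensorBoard panel. Training has already finished here, so the loss curve is available and looks like this:

A zoomed-in view of the loss curve:

The curve fluctuates noticeably. Raising the Smoothing slider on the left smooths it out, as shown below:

The dashed line is the original curve and the solid line is the smoothed one.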
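To make the effect of the Smoothing slider concrete, here is a minimal sketch of an exponential moving average applied to a list of loss values. It assumes the slider behaves like a debiased exponential moving average; the function name `smooth` and the sample values are hypothetical and are not taken from TensorBoard's source.

```python
def smooth(values, weight=0.6):
    """Debiased exponential moving average over a list of scalars.

    weight is assumed to play the role of the Smoothing slider:
    0 leaves the curve unchanged, values close to 1 smooth heavily.
    """
    smoothed = []
    last = 0.0
    for step, value in enumerate(values, start=1):
        # Blend the running average with the new point
        last = last * weight + (1 - weight) * value
        # Correct the bias caused by starting the average at 0
        debias = 1 - weight ** step
        smoothed.append(last / debias)
    return smoothed


# Hypothetical noisy loss values, for illustration only
raw_loss = [2.0, 1.0, 2.0, 1.0]
print(smooth(raw_loss, weight=0.5))
```

With `weight=0` the output equals the input, which matches the slider at its leftmost position.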

TensorBoard can also refresh in real time while training is running.

```python
import torch
from torch import optim
from torch.utils.data import DataLoader
import torchvision
from torchvision import datasets
from tensorboardX import SummaryWriter

# The original called torch.cuda.set_device(1) unconditionally, which fails on
# machines with fewer than two GPUs; select the second GPU only if it exists.
if torch.cuda.device_count() > 1:
    torch.cuda.set_device(1)
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

batch_size = 1000
cifar_train = datasets.CIFAR10(root="./data", train=True,
                               transform=torchvision.transforms.ToTensor(), download=True)
cifar_loader = DataLoader(dataset=cifar_train, shuffle=True, batch_size=batch_size)


class CNN(torch.nn.Module):
    def __init__(self):
        super(CNN, self).__init__()
        # 3*32*32 ---> 10*14*14
        self.conv1 = torch.nn.Sequential(
            torch.nn.Conv2d(in_channels=3, out_channels=10, kernel_size=5),
            torch.nn.ReLU(),
            torch.nn.MaxPool2d(kernel_size=2)
        )
        # 10*14*14 ---> 20*5*5
        self.conv2 = torch.nn.Sequential(
            torch.nn.Conv2d(in_channels=10, out_channels=20, kernel_size=5),
            torch.nn.ReLU(),
            torch.nn.MaxPool2d(kernel_size=2)
        )
        # 20*5*5 ---> 30*3*3
        self.conv3 = torch.nn.Sequential(
            torch.nn.Conv2d(in_channels=20, out_channels=30, kernel_size=3),
            torch.nn.ReLU(),
        )
        self.linear = torch.nn.Linear(30 * 3 * 3, 10)

    def forward(self, x):
        x = self.conv1(x)
        x = self.conv2(x)
        x = self.conv3(x)
        # Flatten using the actual batch size so a smaller final batch also works
        x = x.view(x.size(0), -1)
        x = self.linear(x)
        return x


model = CNN().to(device)
writer = SummaryWriter('runs/scalar')
optimizer = optim.SGD(params=model.parameters(), lr=0.01, momentum=0.9)
criteria = torch.nn.CrossEntropyLoss()
epoch = 100

for train_epoch in range(epoch):
    Loss = 0
    for i, data in enumerate(cifar_loader, 0):
        inputs, labels = data
        inputs, labels = inputs.to(device), labels.to(device)
        x = model(inputs)
        loss = criteria(x, labels)
        Loss += loss.item()
        # Backpropagate the error and update the weights
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
    # Average loss per batch over this epoch
    avg_loss = Loss / len(cifar_loader)
    writer.add_scalar('loss', avg_loss, global_step=train_epoch + 1)
    print("Epoch {} Loss = {}".format(train_epoch + 1, avg_loss))

# Flush pending events to disk, then save the trained parameters
writer.close()
torch.save(model.state_dict(), "CNN_CIFAR10.pth")
```

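The network uses three convolutional layers and two max-pooling layers, which produce a 30×3×3 tensor. This tensor is flattened into a vector and passed to a fully connected layer that reduces it from 270 dimensions (30×3×3) to the 10 classes we want to predict.

After training, the model parameters are saved with torch.save(), and the saved model is then used for prediction.

The test script is shown below: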
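The 30×3×3 shape can be checked by hand with the standard output-size formula for convolution and pooling without padding, `out = (in + 2*padding - kernel) // stride + 1`. The sketch below walks the 32×32 input through the three stages; the helper names `conv_out` and `pool_out` are hypothetical and only illustrate the arithmetic.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Spatial output size of a convolution along one dimension."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, kernel):
    """Spatial output size of max pooling whose stride equals its kernel size."""
    return (size - kernel) // kernel + 1


size = 32                                # CIFAR-10 images are 32x32
size = pool_out(conv_out(size, 5), 2)    # conv1: 32 -> 28 -> 14
size = pool_out(conv_out(size, 5), 2)    # conv2: 14 -> 10 -> 5
size = conv_out(size, 3)                 # conv3: 5 -> 3

flat_features = 30 * size * size         # 30 output channels from conv3
print(size, flat_features)               # 3 270
```

The result, 270, is the input width given to `torch.nn.Linear(30*3*3, 10)` in the model.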

```python
import torch
from torch.utils.data import DataLoader
import torchvision
from torchvision import datasets
from torchvision.utils import make_grid
import matplotlib.pyplot as plt
import numpy as np

batch_size = 100

# Select the second GPU only if it exists (the original assumed it always did)
if torch.cuda.device_count() > 1:
    torch.cuda.set_device(1)
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

cifar_test = datasets.CIFAR10("./data", train=False,
                              transform=torchvision.transforms.ToTensor(), download=True)
cifar_test_loader = DataLoader(dataset=cifar_test, shuffle=True, batch_size=batch_size)


class CNN(torch.nn.Module):
    def __init__(self):
        super(CNN, self).__init__()
        # 3*32*32 ---> 10*14*14
        self.conv1 = torch.nn.Sequential(
            torch.nn.Conv2d(in_channels=3, out_channels=10, kernel_size=5),
            torch.nn.ReLU(),
            torch.nn.MaxPool2d(kernel_size=2)
        )
        # 10*14*14 ---> 20*5*5
        self.conv2 = torch.nn.Sequential(
            torch.nn.Conv2d(in_channels=10, out_channels=20, kernel_size=5),
            torch.nn.ReLU(),
            torch.nn.MaxPool2d(kernel_size=2)
        )
        # 20*5*5 ---> 30*3*3
        self.conv3 = torch.nn.Sequential(
            torch.nn.Conv2d(in_channels=20, out_channels=30, kernel_size=3),
            torch.nn.ReLU(),
        )
        self.linear = torch.nn.Linear(30 * 3 * 3, 10)

    def forward(self, x):
        x = self.conv1(x)
        x = self.conv2(x)
        x = self.conv3(x)
        # Flatten using the actual batch size so a smaller final batch also works
        x = x.view(x.size(0), -1)
        x = self.linear(x)
        return x


model = CNN()
# map_location lets weights saved on a GPU load on a CPU-only machine
model.load_state_dict(torch.load("CNN_CIFAR10.pth", map_location=device))
model = model.to(device)
model.eval()

correct_num = 0
total_num = len(cifar_test)

with torch.no_grad():
    for inputs, labels in cifar_test_loader:
        # Uncomment to view the current batch as an image grid
        # plt.imshow(np.transpose(make_grid(inputs).numpy(), (1, 2, 0)))
        # plt.show()
        inputs, labels = inputs.to(device), labels.to(device)
        x = model(inputs)
        # The predicted class is the index of the largest logit
        _, y = torch.max(x, dim=1)
        correct_num += (y == labels).sum().item()

print("Correct Rate = {}%".format(correct_num * 100 / total_num))
```

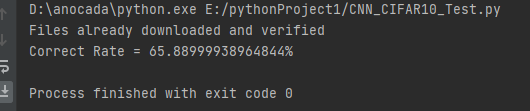

The output is:

This gives an accuracy of 65% on the test set.

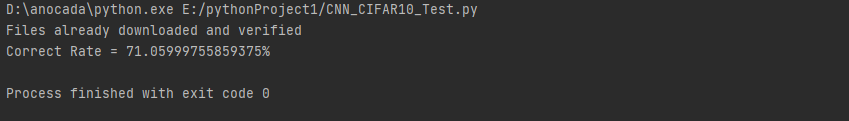

If we evaluate on the training set samples instead, the result is as follows:

On the training set, the accuracy is 71%.
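The two figures above are single averages over all ten classes. As a rough sketch of the same argmax-and-compare step used in the test script, the snippet below also breaks the accuracy down per class in plain Python. The helper names (`argmax`, `one_hot`, `accuracy_report`) and the toy scores are hypothetical and are not results from the trained model; the class order is assumed to follow the standard CIFAR-10 label indices.

```python
from collections import Counter

# Assumed to match the standard CIFAR-10 label indices 0..9
CLASSES = ["airplane", "automobile", "bird", "cat", "deer",
           "dog", "frog", "horse", "ship", "truck"]


def argmax(scores):
    """Index of the largest score, like torch.max(x, dim=1) for one row."""
    return max(range(len(scores)), key=lambda i: scores[i])


def one_hot(index, size=10):
    """Toy score vector that puts all weight on one class."""
    return [1.0 if j == index else 0.0 for j in range(size)]


def accuracy_report(logits, labels):
    """Return overall accuracy and a per-class accuracy dictionary."""
    correct, total = Counter(), Counter()
    for scores, label in zip(logits, labels):
        total[label] += 1
        if argmax(scores) == label:
            correct[label] += 1
    overall = sum(correct.values()) / len(labels)
    per_class = {CLASSES[c]: correct[c] / total[c] for c in total}
    return overall, per_class


# Toy data for illustration only: predictions are cat, dog, cat, airplane
logits = [one_hot(3), one_hot(5), one_hot(3), one_hot(0)]
labels = [3, 3, 5, 0]
overall, per_class = accuracy_report(logits, labels)
print(overall, per_class)
```

In the real test loop, each row of the model output would take the place of a toy score vector.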
