My implementation of LeNet, trained on the CIFAR-10 dataset.

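This is based on https://github.com/OUCTheoryGroup/colab_demo. Reading the official documentation is also a good way to learn PyTorch. Until now I had only read other people's code without writing any myself, so this exercise helped me get familiar with some of the API.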

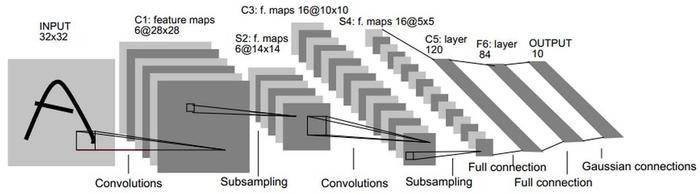

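The figure shows the LeNet architecture. C5 uses a 5×5 kernel to reduce the feature map to 1×1. Some implementations use a (400, 120) fully connected layer instead; since the feature map is only 5×5 at that point, the convolution and the fully connected layer compute essentially the same thing. The other change is the activation: LeNet uses tanh, while this implementation uses ReLU.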
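To make the C5 remark concrete, here is a small sketch in plain Python (no PyTorch, so `conv_full_window`, `linear` and `flatten` are illustrative helpers, not library functions). It checks that a 5×5 convolution over a 16×5×5 feature map yields the same 120 values as a fully connected layer on the flattened 400-vector, assuming the weights are flattened in the same channel, row, column order. It also compares the parameter counts of the two layers.

```python
import math
import random

C, K, OUT = 16, 5, 120  # input channels, kernel / feature-map size, output units

def conv_full_window(x, w, b):
    """Valid convolution whose kernel covers the whole K x K map (one output position)."""
    return [
        b[o] + sum(w[o][c][i][j] * x[c][i][j]
                   for c in range(C) for i in range(K) for j in range(K))
        for o in range(OUT)
    ]

def linear(x_flat, w_flat, b):
    """Fully connected layer: one dot product per output unit."""
    return [b[o] + sum(wi * xi for wi, xi in zip(w_flat[o], x_flat)) for o in range(OUT)]

def flatten(t):
    """Flatten a [C][K][K] nested list in channel, row, column order."""
    return [v for ch in t for row in ch for v in row]

rng = random.Random(0)
x = [[[rng.uniform(-1, 1) for _ in range(K)] for _ in range(K)] for _ in range(C)]
w = [[[[rng.uniform(-1, 1) for _ in range(K)] for _ in range(K)] for _ in range(C)]
     for _ in range(OUT)]
b = [rng.uniform(-1, 1) for _ in range(OUT)]

conv_out = conv_full_window(x, w, b)
fc_out = linear(flatten(x), [flatten(w_o) for w_o in w], b)

same = all(math.isclose(a, c, rel_tol=1e-9, abs_tol=1e-9) for a, c in zip(conv_out, fc_out))
conv_params = OUT * C * K * K + OUT   # weights + biases of the 5x5 convolution
fc_params = (C * K * K) * OUT + OUT   # weights + biases of Linear(400, 120)
print(same, conv_params, fc_params)   # True 48120 48120
```

So the choice between `conv5` and a (400, 120) linear layer is mostly a matter of how the code reads; the output then only needs to be flattened from `[N, 120, 1, 1]` to `[N, 120]`.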

import torch

import torchvision

from torchvision import transforms

# import torchvision.transforms as transforms

from torch import nn

# import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

# Train on the GPU; in Colab this is set under the "Runtime" -> "Change runtime type" menu

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# device = "cuda:0" if torch.cuda.is_available() else "cpu"

# Load the dataset

transform_train = transforms.Compose([
    transforms.RandomCrop(32, padding=4),
    transforms.RandomHorizontalFlip(p=0.5),
    transforms.ToTensor(),
    transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010)),
])

transform_test = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010)),
])
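As a rough illustration of what the `ToTensor` + `Normalize` pair does to a single pixel: `ToTensor` scales 8-bit values into [0, 1], and `Normalize` then subtracts the per-channel mean and divides by the per-channel standard deviation. The sketch below redoes that arithmetic in plain Python; `normalize_pixel` is a hypothetical helper of mine, not a torchvision function.

```python
MEAN = (0.4914, 0.4822, 0.4465)  # per-channel means used in the transforms above
STD = (0.2023, 0.1994, 0.2010)   # per-channel standard deviations used above

def normalize_pixel(rgb_uint8):
    """Apply the ToTensor scaling and the Normalize arithmetic to one RGB pixel."""
    scaled = [v / 255.0 for v in rgb_uint8]                     # [0, 255] -> [0, 1]
    return [(s - m) / d for s, m, d in zip(scaled, MEAN, STD)]  # (x - mean) / std

black = normalize_pixel((0, 0, 0))
white = normalize_pixel((255, 255, 255))
print([round(v, 3) for v in black])
print([round(v, 3) for v in white])
```

The network therefore sees inputs that are roughly zero-centered per channel, rather than raw values in [0, 1].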

# cifar10 dataset

# - train: True = training set, False = test set

trainset = torchvision.datasets.CIFAR10(root='./data', train=True, transform=transform_train, target_transform=None, download=True)

testset = torchvision.datasets.CIFAR10(root='./data', train=False, transform=transform_test, target_transform=None, download=True)

trainloader = torch.utils.data.DataLoader(trainset, batch_size=128, shuffle=True, num_workers=2)

testloader = torch.utils.data.DataLoader(testset, batch_size=128, shuffle=False, num_workers=2)

classes = ('plane', 'car', 'bird', 'cat', 'deer', 'dog', 'frog', 'horse', 'ship', 'truck')

class LeNet(nn.Module):
    def __init__(self):
        super(LeNet, self).__init__()
        # Tensor shapes are BCHW: [batch_size, channels, height, width]
        # input:  [128, 3, 32, 32]
        # conv1:  [128, 6, 28, 28]
        # pool2:  [128, 6, 14, 14]
        # conv3:  [128, 16, 10, 10]
        # pool4:  [128, 16, 5, 5]
        # conv5:  [128, 120, 1, 1]
        # fc6:    [128, 84]
        # fc7:    [128, 10]

        # nn.Conv2d(in_channels, out_channels, kernel_size, stride, padding)
        self.conv1 = nn.Conv2d(3, 6, 5)
        # nn.MaxPool2d(kernel_size=2, stride=2, padding=0)
        self.pool2 = nn.MaxPool2d(2, 2)
        self.conv3 = nn.Conv2d(6, 16, 5)
        self.pool4 = nn.MaxPool2d(kernel_size=2, stride=2, padding=0)
        self.conv5 = nn.Conv2d(in_channels=16, out_channels=120, kernel_size=5, stride=1, padding=0)
        # Alternative to conv5: self.fc5 = nn.Linear(400, 120), where 400 = 16 * 5 * 5
        self.fc6 = nn.Linear(in_features=120, out_features=84, bias=True)
        self.fc7 = nn.Linear(in_features=84, out_features=10, bias=True)

    # x has shape [batch_size, channels, height, width], e.g. [128, 3, 32, 32]
    def forward(self, x):
        x = F.relu(self.conv1(x))
        x = self.pool2(x)
        x = F.relu(self.conv3(x))
        x = self.pool4(x)
        x = F.relu(self.conv5(x))
        x = x.view(x.size(0), -1)  # flatten [N, 120, 1, 1] to [N, 120]
        # x = F.relu(self.fc5(x))
        x = F.relu(self.fc6(x))
        out = self.fc7(x)  # raw logits; no softmax here
        return out
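The shape comments in the class can be reproduced with the usual output-size rule for convolution and pooling layers, `out = floor((in + 2 * padding - kernel) / stride) + 1`. The sketch below applies it layer by layer to the 32×32 input; `out_size` is a helper name I made up for illustration.

```python
def out_size(size, kernel, stride=1, padding=0):
    """Spatial output size of a conv / pooling layer (dilation 1, floor rounding)."""
    return (size + 2 * padding - kernel) // stride + 1

# (layer name, kernel size, stride) in the order used by forward(); padding is 0 everywhere
layers = [("conv1", 5, 1), ("pool2", 2, 2), ("conv3", 5, 1), ("pool4", 2, 2), ("conv5", 5, 1)]

size = 32
trace = []
for name, kernel, stride in layers:
    size = out_size(size, kernel, stride)
    trace.append((name, size))

print(trace)
# [('conv1', 28), ('pool2', 14), ('conv3', 10), ('pool4', 5), ('conv5', 1)]
```

This also shows why `conv5` lands on a 1×1 map: its 5×5 kernel exactly covers the 5×5 output of `pool4`.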

# Move the network to the GPU (or the CPU if no GPU is available)

net = LeNet().to(device)

criterion = nn.CrossEntropyLoss()

optimizer = optim.Adam(net.parameters(), lr=0.001)
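A note on the loss: `nn.CrossEntropyLoss` expects raw logits and applies log-softmax internally, which is why `forward` returns the `fc7` output without a softmax. The sketch below computes the per-sample value in plain Python (the `cross_entropy` function is my own illustration; the PyTorch criterion additionally averages over the batch by default).

```python
import math

def cross_entropy(logits, target):
    """Per-sample cross entropy from raw logits: logsumexp(logits) - logits[target]."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]

# An untrained 10-class model that outputs equal logits is at chance level.
chance_loss = cross_entropy([0.0] * 10, target=3)
print(round(chance_loss, 4))  # 2.3026, i.e. ln(10)

# A confident, correct prediction gives a loss close to 0.
confident_loss = cross_entropy([0.0, 0.0, 0.0, 12.0] + [0.0] * 6, target=3)
print(confident_loss < 0.001)  # True
```

A first-minibatch loss near 2.3 is therefore what one would expect before any learning has happened.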

for epoch in range(10):  # train for several epochs
    for i, (inputs, labels) in enumerate(trainloader):
        inputs = inputs.to(device)
        labels = labels.to(device)
        # print(inputs.shape, labels.shape)
        # torch.Size([128, 3, 32, 32]) torch.Size([128])

        # Reset the gradients held by the optimizer
        optimizer.zero_grad()

        # Forward pass + backward pass + optimization step
        outputs = net(inputs)
        loss = criterion(outputs, labels)
        loss.backward()
        optimizer.step()

        # Print statistics every 100 minibatches
        if i % 100 == 0:
            print('Epoch: %d Minibatch: %5d loss: %.3f' % (epoch + 1, i + 1, loss.item()))

print('Finished Training')
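For a sense of how much logging the training loop produces: CIFAR-10 has 50,000 training and 10,000 test images, and the loaders use `batch_size=128` without dropping the last partial batch. The sketch below works out the number of minibatches per epoch and which ones trigger the `i % 100 == 0` print; `batches_per_epoch` is a hypothetical helper.

```python
import math

def batches_per_epoch(num_samples, batch_size):
    """Number of minibatches when the last, smaller batch is kept."""
    return math.ceil(num_samples / batch_size)

train_batches = batches_per_epoch(50000, 128)
test_batches = batches_per_epoch(10000, 128)
last_batch_size = 50000 % 128  # size of the final, partial training batch

# Minibatch numbers (i + 1) that the training loop prints
logged = [i + 1 for i in range(train_batches) if i % 100 == 0]

print(train_batches, test_batches, last_batch_size, logged)
# 391 79 80 [1, 101, 201, 301]
```

Each printed loss is the loss of that single minibatch, not a running average, so the values can look noisy from line to line.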

correct = 0

total = 0

with torch.no_grad():  # gradients are not needed for evaluation
    for data in testloader:
        images, labels = data
        images, labels = images.to(device), labels.to(device)
        outputs = net(images)
        _, predicted = torch.max(outputs, 1)  # index of the largest logit in each row
        total += labels.size(0)
        correct += (predicted == labels).sum().item()

print('Accuracy of the network on the 10000 test images: %.2f %%' % (
    100 * correct / total))
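The `classes` tuple defined earlier is not used by the overall accuracy, but it becomes handy for a per-class breakdown. The following is only a sketch: `per_class_accuracy` is a hypothetical helper working on plain Python lists, and the toy labels are made up to show the call. In the real test loop one could, for example, accumulate `predicted.tolist()` and `labels.tolist()` across batches and pass those in.

```python
from collections import Counter

classes = ('plane', 'car', 'bird', 'cat', 'deer', 'dog', 'frog', 'horse', 'ship', 'truck')

def per_class_accuracy(predicted, labels):
    """Return {class name: accuracy} for every class that appears in labels."""
    seen = Counter(labels)                                            # samples per true class
    hits = Counter(l for p, l in zip(predicted, labels) if p == l)   # correct ones per class
    return {classes[c]: hits[c] / n for c, n in seen.items()}

# Made-up toy values, only to demonstrate the function.
toy_labels = [3, 3, 5, 5, 5, 8]
toy_predicted = [3, 5, 5, 5, 3, 8]

acc = per_class_accuracy(toy_predicted, toy_labels)
for name, value in acc.items():
    print('%5s: %.2f' % (name, value))
```

A breakdown like this usually says more than the single overall number, for instance whether cats and dogs get confused with each other.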
