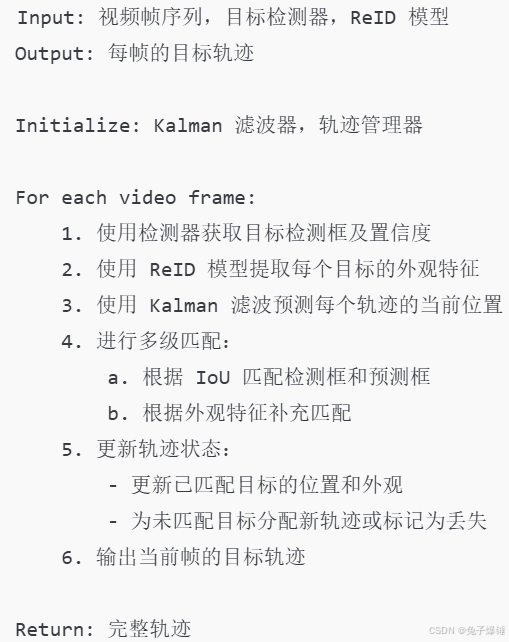

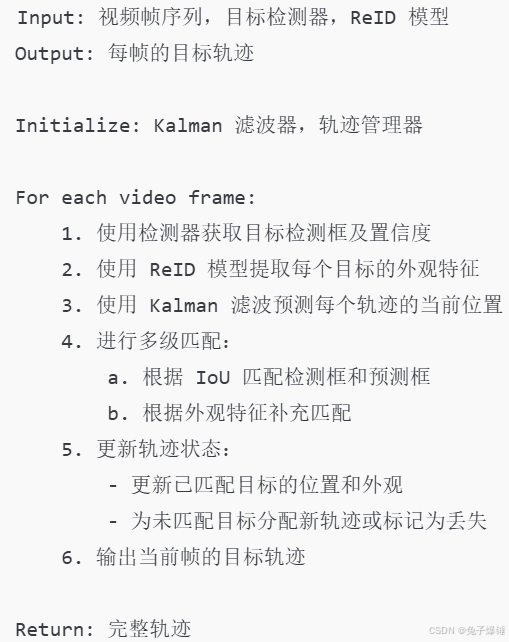

1. 伪代码

2. STrack 类

# 这个类是用来存放轨迹的,每个轨迹都有一些自己的属性,例如id、边界框、预测框、状态等等

# 继承 BaseTrack 的单个 track 类

class STrack(BaseTrack):

shared_kalman = KalmanFilter()

def __init__(self, tlwh, score, feat=None, feat_history=50): # bboxs score 得分

# wait activate

# 初始化 track 全部都是 False 的状态

# 一般是第一次出现某个 track 的情景

self._tlwh = np.asarray(tlwh, dtype=np.float)

self.kalman_filter = None

self.mean, self.covariance = None, None

self.is_activated = False

self.score = score

self.tracklet_len = 0

self.smooth_feat = None

self.curr_feat = None

if feat is not None:

self.update_features(feat)

self.features = deque([], maxlen=feat_history)

self.alpha = 0.9

def update_features(self, feat):

feat /= np.linalg.norm(feat)

self.curr_feat = feat

if self.smooth_feat is None:

self.smooth_feat = feat

else:

self.smooth_feat = self.alpha * self.smooth_feat + (1 - self.alpha) * feat

self.features.append(feat)

self.smooth_feat /= np.linalg.norm(self.smooth_feat) # 用于求范数,linalg本意为linear(线性) + algebra(代数),norm则表示范数

# 预测这个 track 下一次的位置,其实就是调用自身卡尔曼的 predict 函数更新均值和方差

def predict(self):

mean_state = self.mean.copy()

if self.state != TrackState.Tracked:

mean_state[6] = 0

mean_state[7] = 0

self.mean, self.covariance = self.kalman_filter.predict(mean_state, self.covariance)

@staticmethod

# 这个就是 predict 函数的矩阵版本,做的事情是一样的

def multi_predict(stracks):

if len(stracks) > 0:

multi_mean = np.asarray([st.mean.copy() for st in stracks]) # mean的值是从哪里来的???????????

multi_covariance = np.asarray([st.covariance for st in stracks])

for i, st in enumerate(stracks):

if st.state != TrackState.Tracked:

multi_mean[i][6] = 0

multi_mean[i][7] = 0

multi_mean, multi_covariance = STrack.shared_kalman.multi_predict(multi_mean, multi_covariance)

for i, (mean, cov) in enumerate(zip(multi_mean, multi_covariance)):

stracks[i].mean = mean

stracks[i].covariance = cov

@staticmethod

def multi_gmc(stracks, H=np.eye(2, 3)): # 得到仿射矩阵后, 修正Kalman的结果

if len(stracks) > 0:

multi_mean = np.asarray([st.mean.copy() for st in stracks])

multi_covariance = np.asarray([st.covariance for st in stracks])

R = H[:2, :2]

R8x8 = np.kron(np.eye(4, dtype=float), R)

t = H[:2, 2]

for i, (mean, cov) in enumerate(zip(multi_mean, multi_covariance)):

mean = R8x8.dot(mean)

mean[:2] += t

cov = R8x8.dot(cov).dot(R8x8.transpose())

stracks[i].mean = mean

stracks[i].covariance = cov

# 新激活一个轨迹,用轨迹的初始框来初始化对应的卡尔曼滤波器的参数,并且记录下 track 的 id

# 这个是新建一个 track 调用的函数,并且如果是视频刚开始的话,直接会将 track 的状态变成激活态

# 不是在视频刚开始激活的框的状态为未激活,需要下一帧还有检测框与其进行匹配才会变成激活状态

def activate(self, kalman_filter, frame_id):

"""Start a new tracklet"""

self.kalman_filter = kalman_filter

self.track_id = self.next_id()

# self.mean, self.covariance = self.kalman_filter.initiate(self.tlwh_to_xywh(self._tlwh))

self.mean, self.covariance = self.kalman_filter.initiate(self.tlwh_to_xywh(self._tlwh), self.score)

self.tracklet_len = 0

self.state = TrackState.Tracked

if frame_id == 1:

self.is_activated = True

self.frame_id = frame_id

self.start_frame = frame_id

# 这个应该是轨迹被遮挡或者消失之后重新激活轨迹调用的函数

def re_activate(self, new_track, frame_id, new_id=False):

# self.mean, self.covariance = self.kalman_filter.update(self.mean, self.covariance, self.tlwh_to_xywh(new_track.tlwh))

self.mean, self.covariance = self.kalman_filter.update(self.mean, self.covariance, self.tlwh_to_xywh(new_track.tlwh), new_track.score) # ???????????????????

if new_track.curr_feat is not None:

self.update_features(new_track.curr_feat)

self.tracklet_len = 0

self.state = TrackState.Tracked

self.is_activated = True

self.frame_id = frame_id

if new_id:

self.track_id = self.next_id()

self.score = new_track.score

# 更新轨迹的位置

def update(self, new_track, frame_id): # self本身就是 轨迹

"""

Update a matched track

:type new_track: STrack 其实就是检测的 new_track

:type frame_id: int

:type update_feature: bool

:return:

"""

self.frame_id = frame_id

self.tracklet_len += 1

new_tlwh = new_track.tlwh

self.mean, self.covariance = self.kalman_filter.update(self.mean, self.covariance, self.tlwh_to_xywh(new_tlwh), new_track.score) # ?????????????????

if new_track.curr_feat is not None:

self.update_features(new_track.curr_feat)

self.state = TrackState.Tracked

self.is_activated = True

self.score = new_track.score

@property

# @jit(nopython=True)

# 这个函数很重要,在进行匹配的时候会调用到他,指的是 track 在经过卡尔曼预测之后在当前帧的位置

# 所以这里用了 mean,因为卡尔曼经过 predict 之后会更新 mean 和 covariance 的状态,mean 是

# [cx, cy, w, h, vx, vy, vw, vh],所以 self.mean[:4] 指的就是预测框的坐标信息

def tlwh(self):

"""Get current position in bounding box format `(top left x, top left y,

width, height)`.

"""

if self.mean is None:

return self._tlwh.copy()

ret = self.mean[:4].copy()

ret[:2] -= ret[2:] / 2 # 相当于是 中心点xy - 宽高的一半 = tl

return ret

@property

def tlbr(self):

"""Convert bounding box to format `(min x, min y, max x, max y)`, i.e.,

`(top left, bottom right)`.

"""

ret = self.tlwh.copy()

ret[2:] += ret[:2]

return ret

@property

def xywh(self):

"""Convert bounding box to format `(min x, min y, max x, max y)`, i.e.,

`(top left, bottom right)`.

"""

ret = self.tlwh.copy()

ret[:2] += ret[2:] / 2.0

return ret

@staticmethod

def tlwh_to_xyah(tlwh):

"""Convert bounding box to format `(center x, center y, aspect ratio,

height)`, where the aspect ratio is `width / height`.

"""

ret = np.asarray(tlwh).copy()

ret[:2] += ret[2:] / 2

ret[2] /= ret[3]

return ret

@staticmethod

def tlwh_to_xywh(tlwh):

"""Convert bounding box to format `(center x, center y, width,

height)`.

"""

ret = np.asarray(tlwh).copy()

ret[:2] += ret[2:] / 2

return ret

def to_xywh(self):

return self.tlwh_to_xywh(self.tlwh)

@staticmethod

def tlbr_to_tlwh(tlbr):

ret = np.asarray(tlbr).copy()

ret[2:] -= ret[:2]

return ret

@staticmethod

def tlwh_to_tlbr(tlwh):

ret = np.asarray(tlwh).copy()

ret[2:] += ret[:2]

return ret

def __repr__(self):

return 'OT_{}_({}-{})'.format(self.track_id, self.start_frame, self.end_frame)

3. BoTSORT类

# 正片开始

class BoTSORT(object):

def __init__(self, args, frame_rate=30):

self.tracked_stracks = [] # type : list[STrack] 已追踪轨迹:在前一帧成功追踪的轨迹

self.lost_stracks = [] # type : list[STrack] 失追轨迹:在前n帧失去追踪的轨迹(n<=30)

self.removed_stracks = [] # type : list[STrack] 已删除轨迹:在前n帧失去追踪的轨迹(n>30)

BaseTrack.clear_count() # 应该是初始跟踪,所以清零

self.frame_id = 0

self.args = args

self.track_high_thresh = args.track_high_thresh

self.track_low_thresh = args.track_low_thresh

self.new_track_thresh = args.new_track_thresh

# 缓冲的帧数,超过这么多帧丢失目标才算是真正丢失

self.buffer_size = int(frame_rate / 30.0 * args.track_buffer)

self.max_time_lost = self.buffer_size

self.kalman_filter = KalmanFilter()

# ReID module

self.proximity_thresh = args.proximity_thresh

self.appearance_thresh = args.appearance_thresh

if args.with_reid:

self.encoder = FastReIDInterface(args.fast_reid_config, args.fast_reid_weights, args.device)

if args.benchmark in ["MOT16", "dancetrack"]:

pass

else:

self.gmc = GMC(method=args.cmc_method, verbose=[args.name, args.ablation])

# 追踪主要逻辑函数

def update(self, output_results, img): # 检测输出结果 原图

self.frame_id += 1

activated_starcks = [] # 保存当前帧匹配到持续追踪的轨迹

refind_stracks = [] # 保存当前帧匹配到之前目标丢失的轨迹

lost_stracks = [] # 保存当前帧没有匹配到目标的轨迹

removed_stracks = [] # 保存当前帧

if len(output_results):

# 第一步:将objects转换为x1,y1,x2,y2,score的格式,并构建strack

# output_results 是 [xyxy,score] 或者 [xyxy, score, conf] 的情况

if output_results.shape[1] == 5:

scores = output_results[:, 4]

bboxes = output_results[:, :4]

classes = output_results[:, -1]

else:

# scores = output_results[:, 4] * output_results[:, 5] # [left,top,right,bottom,obj_conf,cls_conf,cls_id]

scores = output_results[:, 4] # [left,top,right,bottom,cls_conf,cls_id]

bboxes = output_results[:, :4] # x1y1x2y2

classes = output_results[:, -1]

# 第二步:根据scroe和track_thresh将strack分为detetions(dets)(>=)和detections_low(dets_second)

# Remove bad detections 移除效果比较差的检测,置信度低于0.1

lowest_inds = scores > self.track_low_thresh

bboxes = bboxes[lowest_inds]

scores = scores[lowest_inds]

classes = classes[lowest_inds]

# Find high threshold detections 高置信度检测 0.65

remain_inds = scores > self.args.track_high_thresh

dets = bboxes[remain_inds]

scores_keep = scores[remain_inds]

classes_keep = classes[remain_inds]

else:

bboxes = []

scores = []

classes = []

dets = []

scores_keep = []

classes_keep = []

'''Extract embeddings '''

if self.args.with_reid:

features_keep = self.encoder.inference(img, dets) # 获得检测框中,经过 ReID提取得到的特征向量

if len(dets) > 0:

'''Detections'''

if self.args.with_reid:

# 结果很特殊,因为类中实现了__repr__(self):

# 'OT_{}_({}-{})'.format(self.track_id, self.start_frame, self.end_frame)

# [OT_1_(1 - 20), OT_2_(1 - 20), OT_3_(1 - 20)......]

# 把初始框封装成 STrack 的格式

detections = [STrack(STrack.tlbr_to_tlwh(tlbr), s, f) for

(tlbr, s, f) in zip(dets, scores_keep, features_keep)]

else:

detections = [STrack(STrack.tlbr_to_tlwh(tlbr), s) for

(tlbr, s) in zip(dets, scores_keep)]

else:

detections = []

''' Add newly detected tracklets to tracked_stracks. 将新检测到的轨迹添加到tracked_stracks'''

# 遍历tracked_stracks(所有的轨迹),如果track还activated,加入tracked_stracks(继续匹配该帧),否则加入unconfirmed

unconfirmed = [] # 未确定的轨迹

tracked_stracks = [] # 确定轨迹 # type: list[STrack]

# is_activated表示除了第一帧外中途只出现一次的目标轨迹(新轨迹,没有匹配过或从未匹配到其他轨迹)

for track in self.tracked_stracks: # self.tracked_stracks : [OT_1_(1-20), OT_2_(1-20), OT_3_(1-20), OT_4_(1-20), OT_5_(1-20), OT_6_(1-20), OT_7_(1-20), OT_8_(1-20), OT_9_(3-20)]

# 当 track 只有一帧的记录时,is_activated=False

if not track.is_activated: # track.is_activated 为 True表示,该轨迹是确定轨迹

unconfirmed.append(track)

else:

tracked_stracks.append(track)

''' Step 2: First association, with high score detection boxes 高分检测框进行第一次关联'''

# 第一次匹配

# 将track_stracks和lost_stracks合并得到track_pool

# 丢失的 track 代表某一帧可能丢了一次,但是仍然在缓冲帧范围之内,所以依然可以用来匹配

strack_pool = joint_stracks(tracked_stracks, self.lost_stracks) # OT_1_(1-20),'OT_{}_({}-{})'.format(self.track_id, self.start_frame, self.end_frame) strack_pool : [OT_1_(1-20), OT_2_(1-20), OT_3_(1-20), OT_4_(1-20), OT_5_(1-20), OT_6_(1-20), OT_7_(1-20), OT_8_(1-20), OT_9_(3-20)]

# Predict the current location with KF 用卡尔曼滤波预测

# 将strack_pool送入muti_predict进行预测(卡尔曼滤波)

STrack.multi_predict(strack_pool)

# Fix camera motion 这一块先不管哈!!!!!!!!!!!!!!!

warp = self.gmc.apply(img, dets)

STrack.multi_gmc(strack_pool, warp)

STrack.multi_gmc(unconfirmed, warp)

# Associate with high score detection boxes 确定轨迹与丢失轨迹 与 高分检测框先进性匹配

# 让预测后的 track 和当前帧的 detection 框做 cost_matrix,用的方式为 IOU 关联

# 这里的 iou_distance 函数中调用了 track.tlbr,返回的是预测之后的 track 坐标信息

ious_dists = matching.iou_distance(strack_pool, detections) # 已计算出 IOU损失

ious_dists_mask = (ious_dists > self.proximity_thresh) # self.proximity_thresh = 0.5

if not self.args.mot20:

ious_dists = matching.fuse_score(ious_dists, detections) # 我的理解:IOU结果与检测score结合了

if self.args.with_reid:

emb_dists = matching.embedding_distance(strack_pool, detections) / 2.0

raw_emb_dists = emb_dists.copy()

emb_dists[emb_dists > self.appearance_thresh] = 1.0 # 外观损失 大于 0.25,直接把损失定为 1

emb_dists[ious_dists_mask] = 1.0 # 把 IOU损失过大的,直接定为 1 (ps:IOU与检测未结合的损失哈)

dists = np.minimum(ious_dists, emb_dists) # IOU与嵌入损失,取最小值

# Popular ReID method (JDE / FairMOT)

# raw_emb_dists = matching.embedding_distance(strack_pool, detections)

# dists = matching.fuse_motion(self.kalman_filter, raw_emb_dists, strack_pool, detections)

# emb_dists = dists

# IoU making ReID

# dists = matching.embedding_distance(strack_pool, detections)

# dists[ious_dists_mask] = 1.0

else:

dists = ious_dists

# 用match_thresh = 0.8(越大说明iou越小)过滤较小的iou,利用匈牙利算法进行匹配,得到matches, u_track, u_detection

matches, u_track, u_detection = matching.linear_assignment(dists, thresh=self.args.match_thresh) # 返回匹配的轨迹,未匹配轨迹,未匹配高分检测

# 遍历matches,如果state为Tracked,调用update方法,并加入到activated_stracks,否则调用re_activate,并加入refind_stracks

# matches = [itracked, idet] itracked指的是轨迹的索引,idet 指的是当前目标框的索引,意思是第几个轨迹匹配第几个目标框

for itracked, idet in matches:

track = strack_pool[itracked]

det = detections[idet]

# 对应 strack_pool 中的 tracked_stracks

if track.state == TrackState.Tracked:

# 更新轨迹的bbox为当前匹配到的bbox

track.update(detections[idet], self.frame_id)

# activated_starcks是目前能持续追踪到的轨迹

activated_starcks.append(track)

# 对应 strack_pool 中的 self.lost_stracks,重新激活 track

else:

track.re_activate(det, self.frame_id, new_id=False)

# refind_stracks是重新追踪到的轨迹

refind_stracks.append(track)

# 第二次匹配:和低分的矩阵进行匹配

''' Step 3: Second association, with low score detection boxes'''

if len(scores):

inds_high = scores < self.args.track_high_thresh

inds_low = scores > self.args.track_low_thresh

inds_second = np.logical_and(inds_low, inds_high) # 筛选分数处于0.1<分数<阈值的

dets_second = bboxes[inds_second]

scores_second = scores[inds_second]

classes_second = classes[inds_second]

else:

dets_second = []

scores_second = []

classes_second = []

# association the untrack to the low score detections

if len(dets_second) > 0:

'''Detections'''

detections_second = [STrack(STrack.tlbr_to_tlwh(tlbr), s) for

(tlbr, s) in zip(dets_second, scores_second)]

else:

detections_second = []

# 找出第一次匹配中没匹配到的轨迹(激活状态) 找出 strack_pool 中没有被匹配到的 track(这帧目标被遮挡的情况)

r_tracked_stracks = [strack_pool[i] for i in u_track if strack_pool[i].state == TrackState.Tracked]

# 计算r_tracked_stracks和detections_second的iou_distance(代价矩阵)

dists = matching.iou_distance(r_tracked_stracks, detections_second)

# 用match_thresh = 0.8过滤较小的iou,利用匈牙利算法进行匹配,得到matches, u_track, u_detection

matches, u_track, u_detection_second = matching.linear_assignment(dists, thresh=0.5) # 分数比较低的目标框没有匹配到轨迹就会直接被扔掉,不会创建新的轨迹

# 遍历matches,如果state为Tracked,调用update方法,并加入到activated_stracks,否则调用re_activate,并加入refind_stracks

# 在低置信度的检测框中再次与没有被匹配到的 track 做 IOU 匹配

for itracked, idet in matches:

track = r_tracked_stracks[itracked]

det = detections_second[idet]

if track.state == TrackState.Tracked:

track.update(det, self.frame_id)

activated_starcks.append(track)

else:

track.re_activate(det, self.frame_id, new_id=False)

refind_stracks.append(track)

# 遍历u_track(第二次匹配也没匹配到的轨迹),将state不是Lost的轨迹,调用mark_losk方法,并加入lost_stracks,等待下一帧匹配

# lost_stracks加入上一帧还在持续追踪但是这一帧两次匹配不到的轨迹

# 如果 track 经过两次匹配之后还没有匹配到 box 的话,就标记为丢失了

for it in u_track:

track = r_tracked_stracks[it]

if not track.state == TrackState.Lost:

track.mark_lost()

lost_stracks.append(track)

'''Deal with unconfirmed tracks, usually tracks with only one beginning frame'''

# 尝试匹配中途第一次出现的轨迹

# 当前帧的目标框会优先和长期存在的轨迹(包括持续追踪的和断追的轨迹)匹配,再和只出现过一次的目标框匹配

detections = [detections[i] for i in u_detection]

# 计算unconfirmed和detections的iou_distance(代价矩阵)

# unconfirmed是不活跃的轨迹(过了30帧)

ious_dists = matching.iou_distance(unconfirmed, detections)

ious_dists_mask = (ious_dists > self.proximity_thresh)

if not self.args.mot20:

ious_dists = matching.fuse_score(ious_dists, detections)

if self.args.with_reid:

emb_dists = matching.embedding_distance(unconfirmed, detections) / 2.0

raw_emb_dists = emb_dists.copy()

emb_dists[emb_dists > self.appearance_thresh] = 1.0

emb_dists[ious_dists_mask] = 1.0

dists = np.minimum(ious_dists, emb_dists)

else:

dists = ious_dists

# 用match_thresh = 0.8过滤较小的iou,利用匈牙利算法进行匹配,得到matches, u_track, u_detection

matches, u_unconfirmed, u_detection = matching.linear_assignment(dists, thresh=0.7)

# 遍历matches,如果state为Tracked,调用update方法,并加入到activated_stracks,否则调用re_activate,并加入refind_stracks

# 如果能够匹配上的话,说明这个 track 已经是确定状态了,用当前匹配到的框对卡尔曼的预测进行调节,并且将其加入到 activated_starcks

for itracked, idet in matches:

unconfirmed[itracked].update(detections[idet], self.frame_id)

activated_starcks.append(unconfirmed[itracked])

# 遍历u_unconfirmed,调用mark_removd方法,并加入removed_stracks

for it in u_unconfirmed:

# 中途出现一次的轨迹和当前目标框匹配失败,删除该轨迹(认为是检测器误判)

# 真的需要直接删除吗??????

track = unconfirmed[it]

track.mark_removed()

removed_stracks.append(track)

""" Step 4: Init new stracks"""

# 遍历u_detection(前两步都没匹配成功的目标框),对于score大于high_thresh,调用activate方法,并加入activated_stracks

# 此时还没匹配的u_detection将赋予新的id

# 经过上面这些步骤之后,如果还有没被匹配的检测框,说明可能画面中新来了一个物体

# 那么就直接将他视为一个新的 track,但是这个 track 的状态并不是激活态

# 在下一次循环的时候会先将他放到 unconfirmed_track 中去,然后根据有没有框匹配他来决定是激活还是丢弃

for inew in u_detection:

track = detections[inew]

if track.score < self.new_track_thresh:

continue

# 只有第一帧新建的轨迹会被标记为is_activate=True,其他帧不会

track.activate(self.kalman_filter, self.frame_id)

# 把新的轨迹加入到当前活跃轨迹中

activated_starcks.append(track)

# 遍历lost_stracks,对于丢失超过max_time_lost(30)的轨迹,调用mark_removed方法,并加入removed_stracks

""" Step 5: Update state"""

# 对于丢失目标的 track 来说,判断他丢失的帧数是不是超过了 buffer 缓冲帧数,超过就删除

for track in self.lost_stracks:

if self.frame_id - track.end_frame > self.max_time_lost:

track.mark_removed()

removed_stracks.append(track)

""" Merge """

# print('Ramained match {} s'.format(t4-t3))

# 遍历tracked_stracks,筛选出state为Tracked的轨迹,保存到tracked_stracks

# 指上一帧匹配上的 track

self.tracked_stracks = [t for t in self.tracked_stracks if t.state == TrackState.Tracked]

# 将activated_stracks,refind_stracks合并到track_stracks

# 加上这一帧新激活的 track(两次匹配到的 track,以及由 unconfirm 状态变为激活态的 track)

self.tracked_stracks = joint_stracks(self.tracked_stracks, activated_starcks)

# 加上丢帧目标重新被匹配的 track

self.tracked_stracks = joint_stracks(self.tracked_stracks, refind_stracks)

# 遍历lost_stracks,去除tracked_stracks和removed_stracks中存在的轨迹

# self.lost_stracks 在经过这一帧的匹配之后如果被重新激活的话就将其移出列表

self.lost_stracks = sub_stracks(self.lost_stracks, self.tracked_stracks)

# 将这一帧丢失的 track 添加进列表

self.lost_stracks.extend(lost_stracks)

# self.lost_stracks 如果在缓冲帧数内一直没有被匹配上被 remove 的话也将其移出 lost_stracks 列表

self.lost_stracks = sub_stracks(self.lost_stracks, self.removed_stracks)

# 更新被移除的 track 列表

self.removed_stracks.extend(removed_stracks)

# 调用remove_duplicate_stracks函数,计算tracked_stracks,lost_stracks的iou_distance,对于iou_distance<0.15的认为是同一个轨迹,

# 对比该轨迹在track_stracks和lost_stracks的跟踪帧数和长短,仅保留长的那个

# 将这两段 track 中重合度高的部分给移除掉(暂时还不是特别理解为啥要这样)

self.tracked_stracks, self.lost_stracks = remove_duplicate_stracks(self.tracked_stracks, self.lost_stracks)

# get scores of lost tracks

# 遍历tracked_stracks,将所有的is_activated为true的轨迹输出 得到最终的结果,也就是成功追踪的 track 序列

# output_stracks = [track for track in self.tracked_stracks if track.is_activated]

output_stracks = [track for track in self.tracked_stracks]

return output_stracks

4. 方法

# 将 tlista 和 tlistb 的 track 给合并成一个大的列表

def joint_stracks(tlista, tlistb): # 确定轨迹 + 丢失轨迹

exists = {}

res = []

for t in tlista:

exists[t.track_id] = 1

res.append(t)

for t in tlistb:

tid = t.track_id

if not exists.get(tid, 0):

exists[tid] = 1

res.append(t)

return res

# 取两个 track 的不重合部分

def sub_stracks(tlista, tlistb):

stracks = {}

for t in tlista:

stracks[t.track_id] = t

for t in tlistb:

tid = t.track_id

if stracks.get(tid, 0):

del stracks[tid]

return list(stracks.values())

# 如果两段 track 离得很近的话,就要去掉一个

# 根据时间维度上出现的帧数多少来决定移除哪一边的 track

def remove_duplicate_stracks(stracksa, stracksb): # self.tracked_stracks, self.lost_stracks

pdist = matching.iou_distance(stracksa, stracksb)

pairs = np.where(pdist < 0.15)

dupa, dupb = list(), list()

for p, q in zip(*pairs):

timep = stracksa[p].frame_id - stracksa[p].start_frame

timeq = stracksb[q].frame_id - stracksb[q].start_frame

if timep > timeq:

dupb.append(q)

else:

dupa.append(p)

resa = [t for i, t in enumerate(stracksa) if not i in dupa]

resb = [t for i, t in enumerate(stracksb) if not i in dupb]

return resa, resb

7887

7887

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?