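For the MNIST image dataset, build a neural network with one hidden layer that uses the sigmoid function as its activation function. (PyTorch is not used; everything is implemented by hand in Python.)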

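1. First make sure the MNIST dataset has been downloaded and extracted locally. Then load it from its location on disk (the code uses absolute paths) and preprocess the data.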

The code is as follows:

```python
# Load the MNIST dataset
import numpy as np

def load_mnist_images(filename):
    with open(filename, 'rb') as f:
        # Skip the 16-byte IDX header (magic number, image count, rows, columns)
        data = np.frombuffer(f.read(), np.uint8, offset=16)
    data = data.reshape(-1, 28, 28)  # each image is 28x28
    return data

def load_mnist_labels(filename):
    with open(filename, 'rb') as f:
        # Skip the 8-byte IDX header (magic number, label count)
        labels = np.frombuffer(f.read(), np.uint8, offset=8)
    return labels

# Set the file paths
train_images_file = r'D:\研究生\课程\深度学习\data\mnist\train-images.idx3-ubyte'
train_labels_file = r'D:\研究生\课程\深度学习\data\mnist\train-labels.idx1-ubyte'
test_images_file = r'D:\研究生\课程\深度学习\data\mnist\t10k-images.idx3-ubyte'
test_labels_file = r'D:\研究生\课程\深度学习\data\mnist\t10k-labels.idx1-ubyte'

# Load the training and test images
x_train = load_mnist_images(train_images_file)
x_test = load_mnist_images(test_images_file)

# Load the training and test labels
y_train = load_mnist_labels(train_labels_file)
y_test = load_mnist_labels(test_labels_file)

# The target is categorical with 10 classes; the input dimension is 784
num_classes, pixels_per_image = 10, 784
n_train_images = 1000  # only the first 1000 training images are used

# Training inputs: flatten each 28*28 image into a vector and scale pixels to [0, 1]
images = x_train[0:n_train_images]
images = images.reshape(n_train_images, 28*28) / 255
labels = y_train[0:n_train_images]  # training targets

# Training targets: one-hot encoding
one_hot_labels = np.zeros((len(labels), num_classes))
for i, j in enumerate(labels):
    one_hot_labels[i][j] = 1

# Test inputs
test_images = x_test.reshape(len(x_test), 28*28) / 255

# Test targets: one-hot encoding
one_hot_test_labels = np.zeros((len(y_test), num_classes))
for i, j in enumerate(y_test):
    one_hot_test_labels[i][j] = 1
```
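The loaders above skip a fixed number of header bytes: 16 for images and 8 for labels. The sketch below reads and checks the header explicitly instead. It assumes the standard IDX layout: a big-endian 32-bit magic number (2051 for image files, 2049 for label files), then the item count, then the row and column counts for images.

The function names `read_idx_images`, `read_idx_labels` and `one_hot` are hypothetical. The sketch also shows a vectorized one-hot encoding with `np.eye`, which gives the same result as the loops above.

```python
import struct
import numpy as np

def read_idx_images(raw: bytes) -> np.ndarray:
    # Header: magic, count, rows, cols as big-endian unsigned 32-bit ints
    magic, count, rows, cols = struct.unpack(">IIII", raw[:16])
    if magic != 2051:
        raise ValueError(f"not an IDX image file (magic={magic})")
    data = np.frombuffer(raw, dtype=np.uint8, offset=16)
    return data.reshape(count, rows, cols)

def read_idx_labels(raw: bytes) -> np.ndarray:
    # Header: magic, count as big-endian unsigned 32-bit ints
    magic, count = struct.unpack(">II", raw[:8])
    if magic != 2049:
        raise ValueError(f"not an IDX label file (magic={magic})")
    return np.frombuffer(raw, dtype=np.uint8, offset=8, count=count)

def one_hot(labels: np.ndarray, num_classes: int = 10) -> np.ndarray:
    # Row k of the identity matrix is the one-hot vector for class k
    return np.eye(num_classes)[labels]

# Usage with tiny synthetic files: two 2x2 images and two labels
fake_images = struct.pack(">IIII", 2051, 2, 2, 2) + bytes(range(8))
fake_labels = struct.pack(">II", 2049, 2) + bytes([3, 7])
imgs = read_idx_images(fake_images)
lbls = read_idx_labels(fake_labels)
print(imgs.shape, lbls.tolist())
print(one_hot(lbls))
```

For the real files, you would pass the bytes returned by `open(path, 'rb').read()` to these helpers.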

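2. Train the one-hidden-layer neural network with mini-batch stochastic gradient descent. The hidden layer uses the sigmoid activation function, and the output layer uses softmax to output class probabilities.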
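For reference, these are the forward and backward passes for one mini-batch of $B$ observations. The notation is:

- $X$ is the $B \times 784$ input (`layer_0`) and $Y$ the one-hot targets.
- $\sigma$ is the sigmoid, $\odot$ element-wise multiplication, and $\eta$ the learning rate `lr`.
- $W_{01}, b_{01}, W_{12}, b_{12}$ correspond to `w_0_1`, `b_0_1`, `w_1_2`, `b_1_2`.

$L$ is the mean cross-entropy over the batch. Because $\sigma'(z) = \sigma(z)\,(1-\sigma(z))$, the hidden-layer derivative can be evaluated directly on the activations $A_1$.

$$
\begin{aligned}
A_1 &= \sigma(X W_{01} + b_{01}), \qquad \hat{Y} = \operatorname{softmax}(A_1 W_{12} + b_{12}),\\
L &= -\frac{1}{B}\sum_{n=1}^{B}\sum_{k=1}^{10} Y_{nk}\log \hat{Y}_{nk},\\
\Delta_2 &= \frac{\partial L}{\partial (A_1 W_{12} + b_{12})} = \frac{1}{B}(\hat{Y} - Y), \qquad
\Delta_1 = (\Delta_2 W_{12}^{\top}) \odot A_1 \odot (1 - A_1),\\
W_{12} &\leftarrow W_{12} - \eta\, A_1^{\top}\Delta_2, \qquad b_{12} \leftarrow b_{12} - \eta \textstyle\sum_{n} (\Delta_2)_{n,:},\\
W_{01} &\leftarrow W_{01} - \eta\, X^{\top}\Delta_1, \qquad b_{01} \leftarrow b_{01} - \eta \textstyle\sum_{n} (\Delta_1)_{n,:}.
\end{aligned}
$$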

The code is as follows:

"""

隐藏层使用 sigmoid 函数作为激活函数

mnist数据

训练具有1个隐藏层的神经网络

批量随机梯度下降法

"""

np.random.seed(2)

# 定义sigmoid激活函数

def sigmoid(x):

return 1 / (1 + np.exp(-x))

# 定义sigmoid激活函数的导数

def sigmoid_deriv(x):

return sigmoid(x) * (1 - sigmoid(x))

def softmax(x):

temp = np.exp(x)

return temp / np.sum(temp, axis = 1, keepdims=True)

lr, hidden_size = 0.05, 200 # 给定学习步长和隐藏层的节点个数

batch_size, epochs = 100, 2000 # 给定每一批数据的观测点个数和循环次数

num_batch = int(np.floor(n_train_images/batch_size))

# 初始化b_0_1和b_1_2

b_0_1 = np.zeros((1, hidden_size))

b_1_2 = np.zeros((1, num_classes))

# 初始化w_0_1和w_1_2, 服从均匀分布

w_0_1 = 0.02*np.random.random(size=(pixels_per_image,hidden_size))-0.01

w_1_2 = 0.2*np.random.random(size=(hidden_size, num_classes))-0.1

for e in range(epochs):

total_loss = 0

train_acc = 0

for i in range(num_batch):

batch_start = i * batch_size

batch_end = (i+1) * batch_size

layer_0 = images[batch_start:batch_end] # layer_0

# 正向传播算法: 计算layer_1 *****

layer_1 = sigmoid(np.dot(layer_0, w_0_1)+b_0_1)

# 正向传播算法: 计算layer_2

layer_2 = softmax(np.dot(layer_1, w_1_2)+b_1_2)

labels_batch = one_hot_labels[batch_start:batch_end]

for j in range(len(layer_0)):

# 计算损失函数

total_loss += - np.log(layer_2[j, np.argmax(labels_batch[j])])

# 计算预测正确的观测点数量

train_acc += int(np.argmax(labels_batch[j]) == np.argmax(layer_2[j]))

# 反向传播算法:计算delta_2

layer_2_delta = (layer_2 - labels_batch)/batch_size

# 反向传播算法:计算delta_1 *****

layer_1_delta = layer_2_delta.dot(w_1_2.T)* sigmoid_deriv(layer_1)

# 更新b_1_2和b_0_1

b_1_2 -= lr * np.sum(layer_2_delta, axis = 0, keepdims=True)

b_0_1 -= lr * np.sum(layer_1_delta, axis = 0, keepdims=True)

# 更新w_1_2和w_0_1

w_1_2 -= lr * layer_1.T.dot(layer_2_delta)

w_0_1 -= lr * layer_0.T.dot(layer_1_delta)

if (e % 200 == 0 or e == (epochs - 1)):

layer_0 = test_images

layer_1 = sigmoid(np.dot(layer_0, w_0_1) + b_0_1)

layer_2 = softmax(np.dot(layer_1, w_1_2) + b_1_2)

# 计算测试准确率

test_acc = 0

for i in range(len(test_images)):

test_acc += int(np.argmax(one_hot_test_labels[i]) == np.argmax(layer_2[i]))

print("Loss: %10.3f; Train Acc: %0.3f; Test Acc: %0.3f"%(total_loss, train_acc/n_train_images,test_acc/len(test_images)))

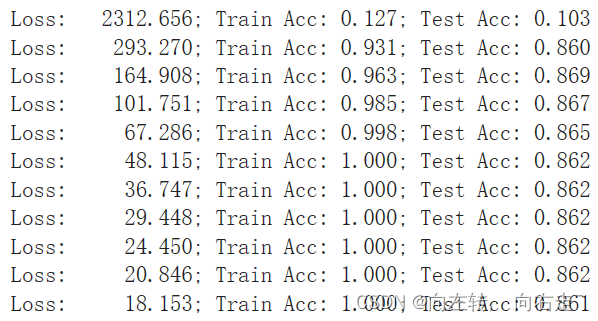

3.输出结果分别有预测损失、训练准确率、测试准确率,如下图:
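The training code accumulates the loss and counts correct predictions one observation at a time. Those loops can also be written in vectorized form. The minimal sketch below assumes `probs` holds softmax outputs of shape (n, num_classes) and `labels` holds integer class labels; the helper name `batch_metrics` is hypothetical.

```python
import numpy as np

def batch_metrics(probs: np.ndarray, labels: np.ndarray):
    """Return (summed cross-entropy loss, number of correct predictions)."""
    # Probability assigned to the true class of each observation
    true_class_probs = probs[np.arange(len(labels)), labels]
    total_loss = -np.sum(np.log(true_class_probs))
    n_correct = int(np.sum(np.argmax(probs, axis=1) == labels))
    return total_loss, n_correct

probs = np.array([[0.7, 0.2, 0.1],
                  [0.1, 0.3, 0.6],
                  [0.5, 0.4, 0.1]])
labels = np.array([0, 2, 1])
loss, correct = batch_metrics(probs, labels)
print(f"loss={loss:.4f}, accuracy={correct / len(labels):.3f}")
```

In the training loop, `labels` could be taken as `y_train[batch_start:batch_end]` or as `np.argmax(labels_batch, axis=1)`.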
