model.py

# Build the neural network

import torch
from torch import nn


class Lixinyu(nn.Module):
    def __init__(self):
        super(Lixinyu, self).__init__()

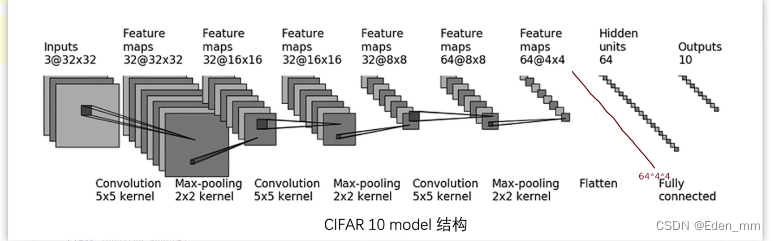

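        self.model = nn.Sequential(
            nn.Conv2d(3, 32, 5, 1, 2),   # 3x32x32 -> 32x32x32 (kernel 5, stride 1, padding 2)
            nn.MaxPool2d(2),             # -> 32x16x16
            nn.Conv2d(32, 32, 5, 1, 2),  # -> 32x16x16
            nn.MaxPool2d(2),             # -> 32x8x8
            nn.Conv2d(32, 64, 5, 1, 2),  # -> 64x8x8
            nn.MaxPool2d(2),             # -> 64x4x4
            nn.Flatten(),                # -> 1024 (64 * 4 * 4)
            nn.Linear(1024, 64),
            nn.Linear(64, 10)            # one output per CIFAR-10 class
        )

    def forward(self, x):
        x = self.model(x)
        return x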

# Check the model in the main block

if __name__ == '__main__':
    lixinyu = Lixinyu()
    inputs = torch.ones(64, 3, 32, 32)  # a batch of 64 RGB images, 32x32 each
    output = lixinyu(inputs)
    print(output.shape)  # torch.Size([64, 10])
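The input size of `nn.Linear(1024, 64)` follows from the shapes flowing through the convolution and pooling layers. Below is a minimal plain-Python sketch of that arithmetic, assuming the usual output-size formula floor((n + 2p - k) / s) + 1 without dilation. The helper name `out_size` is made up for illustration and is not part of PyTorch.

```python
def out_size(n, kernel, stride, padding=0):
    """Spatial output size of a conv or pooling layer (no dilation)."""
    return (n + 2 * padding - kernel) // stride + 1


size = 32      # CIFAR-10 images are 32x32
channels = 3   # RGB input

# Output channels of the three Conv2d layers in the model above
for out_channels in (32, 32, 64):
    size = out_size(size, kernel=5, stride=1, padding=2)  # Conv2d(…, 5, 1, 2) keeps the size
    size = out_size(size, kernel=2, stride=2)             # MaxPool2d(2) halves it
    channels = out_channels

flattened = channels * size * size
print(size, flattened)  # 4 1024
```

If the input resolution or any kernel/padding value changes, the first `nn.Linear` has to be adjusted to the new flattened size.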

train.py

# Prepare the dataset

import torch.optim
import torchvision
from torch import nn
from torch.utils.data import DataLoader

from model import Lixinyu

train_data = torchvision.datasets.CIFAR10("./dataset", train=True, transform=torchvision.transforms.ToTensor(),
                                          download=True)
test_data = torchvision.datasets.CIFAR10("./dataset", train=False, transform=torchvision.transforms.ToTensor(),
                                         download=True)

# Dataset sizes

train_data_size = len(train_data)
test_data_size = len(test_data)
print(f"Training set size: {train_data_size}")
print(f"Test set size: {test_data_size}")

# Load the data with DataLoader

train_data_loader = DataLoader(train_data, batch_size=64)
test_data_loader = DataLoader(test_data, batch_size=64)

# Create the network

lixinyu = Lixinyu()

# Loss function

loss_fn = nn.CrossEntropyLoss()

# Optimizer

learning_rate = 1e-2  # same as 0.01
optimizer = torch.optim.SGD(lixinyu.parameters(), lr=learning_rate)

# Training settings

# Number of training steps so far
total_train_step = 0
# Number of test steps so far
total_test_step = 0
# Number of epochs
epoch = 5

for i in range(epoch):
    print(f"-------------------- Epoch {i+1} training starts -----------------")

    # Training steps
    for data in train_data_loader:
        imgs, targets = data
        output = lixinyu(imgs)
        loss = loss_fn(output, targets)

        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        total_train_step += 1
        # loss.item() is the standard form; printing loss directly also works,
        # but it shows the tensor wrapper instead of a plain number
        print(f"Training step {total_train_step}, loss {loss.item()}")
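To make the printed loss values easier to interpret, here is a hand computation of what `nn.CrossEntropyLoss` evaluates for a single sample: the negative log of the softmax probability assigned to the target class (by default the loss is then averaged over the batch). This is a plain-Python sketch; the function name `cross_entropy` and the example logits are made up for illustration.

```python
import math


def cross_entropy(logits, target):
    """-log(softmax(logits)[target]), computed in a numerically stable way."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


# A 10-class model that scores every class equally
uniform_loss = cross_entropy([0.0] * 10, target=3)
print(round(uniform_loss, 4))  # 2.3026, i.e. ln(10)

# A confident, correct prediction gives a loss close to zero
confident_loss = cross_entropy([0.0] * 9 + [10.0], target=9)
print(confident_loss < 1e-3)  # True
```

A loss around 2.3 in the first training steps is therefore roughly what one would expect for a 10-class problem before the network has learned anything.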

Difference between loss and loss.item()

import torch

a = torch.tensor(5)

print(a)

print(a.item())

D:\anaconda\python.exe C:/Users/ASUS/Desktop/tudui/loss_item.py

tensor(5)

5

Process finished with exit code 0
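One place where the difference matters is accumulating a running loss as a plain Python number. The following is a small sketch, assuming PyTorch is installed; the per-batch loss values are made up and stand in for what `loss_fn` would return.

```python
import torch

# Made-up per-batch losses standing in for values returned by loss_fn
batch_losses = [torch.tensor(2.5), torch.tensor(1.5)]

total_loss = 0.0
for loss in batch_losses:
    # .item() converts a one-element tensor to a plain Python number
    total_loss += loss.item()

print(type(batch_losses[0]))  # <class 'torch.Tensor'>
print(type(total_loss))       # <class 'float'>
print(total_loss)             # 4.0
```

Note that `.item()` only works on tensors holding exactly one element, which is the case for the scalar loss in the training loop.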
