GRU was proposed by Cho et al. in 2014 as a streamlined version of the LSTM structure. Kyunghyun Cho, Bart van Merrienboer, Caglar Gulcehre, Dzmitry Bahdanau, Fethi Bougares, Holger Schwenk, Yoshua Bengio. Learning Phrase Representations using RNN Encoder–Decoder for Statistical Machine Translation. EMNLP, 2014.

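Principle: the Gated Recurrent Unit (GRU) is a variant of LSTM. It merges the LSTM's forget gate and input gate into a single update gate, and it also merges the cell state into the hidden state. The result is a simpler model than LSTM, with fewer parameters than an LSTM layer of the same size.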
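For reference, one GRU time step can be written as below. The symbols are our own notation, not taken from this article: $x_t$ is the input, $h_{t-1}$ the previous hidden state, $z_t$ the update gate, $r_t$ the reset gate, $\sigma$ the logistic sigmoid, $\odot$ element-wise multiplication, and $W, U, b$ the learned weights and biases. The gate convention follows Cho et al. (2014).

$$
\begin{aligned}
z_t &= \sigma\left(W_z x_t + U_z h_{t-1} + b_z\right) \\
r_t &= \sigma\left(W_r x_t + U_r h_{t-1} + b_r\right) \\
\tilde{h}_t &= \tanh\left(W_h x_t + U_h \left(r_t \odot h_{t-1}\right) + b_h\right) \\
h_t &= z_t \odot h_{t-1} + \left(1 - z_t\right) \odot \tilde{h}_t
\end{aligned}
$$

The single update gate $z_t$ does the work of both LSTM gates. The fraction $z_t$ of the old state that is kept fixes how much of the candidate, $1 - z_t$, is written. tf.keras defaults to a slightly different variant (reset_after=True). In that variant the reset gate is applied after the recurrent matrix product, $r_t \odot (U_h h_{t-1} + b'_h)$, which gives the layer a second, recurrent bias vector.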

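Describing a GRU layer in TensorFlow: tf.keras.layers.GRU(number of neurons, return_sequences=whether to return the output at every time step). The number of neurons and return_sequences have the same meaning as in SimpleRNN. With return_sequences=True the layer outputs the hidden state at every time step. With False (the default) it outputs only the state at the last time step. Example: GRU(8, return_sequences=True)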
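To make return_sequences concrete, here is a minimal NumPy sketch of a GRU layer run over one sequence (the batch dimension is omitted). It uses the equations of Cho et al. with the reset gate applied before the recurrent product, i.e. the reset_after=False style. It is not Keras's implementation. The function name gru_forward and the stacked gate order [z, r, h] in W, U and b are assumptions made for this sketch.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def gru_forward(x, W, U, b, return_sequences=False):
    """Hypothetical GRU layer over one sequence.

    x: (timesteps, input_dim); W: (input_dim, 3*units);
    U: (units, 3*units); b: (3*units,). Gate blocks are stacked as [z, r, h]
    (an assumption of this sketch).
    """
    units = U.shape[0]
    h = np.zeros(units)  # initial hidden state
    outputs = []
    for x_t in x:
        xw = x_t @ W + b  # input contributions to all three blocks
        z = sigmoid(xw[:units] + h @ U[:, :units])                       # update gate
        r = sigmoid(xw[units:2 * units] + h @ U[:, units:2 * units])     # reset gate
        h_cand = np.tanh(xw[2 * units:] + (r * h) @ U[:, 2 * units:])    # candidate state
        h = z * h + (1.0 - z) * h_cand  # z near 1 keeps the old state
        outputs.append(h)
    # return_sequences=True: every step's state; False: only the last one
    return np.stack(outputs) if return_sequences else h

rng = np.random.default_rng(0)
units = 8
x = rng.normal(size=(60, 1))  # 60 time steps, 1 feature, like one stock sample
W = rng.normal(scale=0.1, size=(1, 3 * units))
U = rng.normal(scale=0.1, size=(units, 3 * units))
b = np.zeros(3 * units)

seq = gru_forward(x, W, U, b, return_sequences=True)
last = gru_forward(x, W, U, b, return_sequences=False)
print(seq.shape)   # (60, 8)
print(last.shape)  # (8,)
```

The same rule explains the model in the code that follows. The first layer, GRU(80, return_sequences=True), emits an 80-dimensional state at each of the 60 steps, which is what the second GRU layer needs as its input sequence.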

The GRU stock prediction code is as follows:

import numpy as np
import tensorflow as tf
from tensorflow.keras.layers import Dropout, Dense, GRU
import matplotlib.pyplot as plt
import os
import pandas as pd
from sklearn.preprocessing import MinMaxScaler
from sklearn.metrics import mean_squared_error, mean_absolute_error
import math

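maotai = pd.read_csv('D:/tensorflow/class6/SH600519.csv')  # read the stock data file

# Opening prices of the first (2426-300=2126) days form the training set.
# Columns are counted from 0; 2:3 selects the half-open range [2:3), i.e. column C (opening price),
# and keeps the result 2-D with shape (n, 1), which MinMaxScaler requires
training_set = maotai.iloc[0:2426 - 300, 2:3].values
test_set = maotai.iloc[2426 - 300:, 2:3].values  # opening prices of the last 300 days form the test set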
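The reason for writing 2:3 instead of 2 is the shape of the result. A slice keeps a 2-D column, while an integer index drops the column axis. With pandas, .iloc[:, 2:3].values returns a 2-D array and .iloc[:, 2].values a 1-D one. The sketch below uses a small hypothetical NumPy table as a stand-in so it does not need the CSV file. It also restates the row split arithmetic.

```python
import numpy as np

# Hypothetical 5-row table with 4 columns; column index 2 plays the opening price
table = np.arange(20, dtype=float).reshape(5, 4)

col_2d = table[:, 2:3]  # half-open slice [2:3): still 2-D, shape (5, 1)
col_1d = table[:, 2]    # integer index: the column axis is dropped, shape (5,)
print(col_2d.shape, col_1d.shape)  # (5, 1) (5,)

# Row split used in the script: 2426 rows in total, the last 300 for testing
n_total, n_test = 2426, 300
n_train = n_total - n_test
print(n_train)  # 2126
```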

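# Normalization
sc = MinMaxScaler(feature_range=(0, 1))  # define the scaler: map values into the range (0, 1)
training_set_scaled = sc.fit_transform(training_set)  # learn the training set's own min and max, then scale the training set
test_set = sc.transform(test_set)  # scale the test set with the training set's min and max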
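What fit_transform and transform do here can be written out by hand. The sketch below is a hand-written equivalent of MinMaxScaler(feature_range=(0, 1)) for non-constant columns, not scikit-learn's code, and the prices are hypothetical. Because the min and max come from the training set only, test values outside the training range map outside [0, 1]. That may happen for a stock that rose over the test period.

```python
import numpy as np

# Hypothetical prices, not the article's data: the test period trades above
# the training maximum, as a rising stock might
train = np.array([[100.0], [120.0], [150.0], [200.0]])
test = np.array([[180.0], [220.0]])

# "fit": take min and max from the training set only
data_min = train.min(axis=0)
data_max = train.max(axis=0)
scale = data_max - data_min

def transform(x):
    # map the training range [data_min, data_max] onto [0, 1]
    return (x - data_min) / scale

def inverse_transform(x_scaled):
    # undo transform(): back to the original price units
    return x_scaled * scale + data_min

train_scaled = transform(train)           # 0.0, 0.2, 0.5, 1.0
test_scaled = transform(test)             # 0.8, 1.2: the value above the training max exceeds 1
restored = inverse_transform(test_scaled)  # back to 180 and 220
```

inverse_transform is the step used later to turn scaled predictions back into prices.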

x_train = []
y_train = []
x_test = []
y_test = []

# Training set: the first 2426-300=2126 days of the CSV file
# Loop over the training set: every 60 consecutive opening prices form one input sample (x_train),
# and the 61st day's price is its label; the loop builds 2426-300-60=2066 samples.
for i in range(60, len(training_set_scaled)):
    x_train.append(training_set_scaled[i - 60:i, 0])
    y_train.append(training_set_scaled[i, 0])
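The loop above builds sliding windows one at a time. A vectorized equivalent can be sketched with numpy.lib.stride_tricks.sliding_window_view, which is available in NumPy 1.20 and later. The series here is a hypothetical stand-in for the 2126 scaled training prices. The last window is dropped because it has no 61st day to use as a label.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

window = 60
series = np.linspace(0.0, 1.0, 2126)  # hypothetical stand-in for the scaled training prices

# Loop version, as in the script
x_loop = np.array([series[i - window:i] for i in range(window, len(series))])
y_loop = np.array([series[i] for i in range(window, len(series))])

# Vectorized version: all length-60 windows, minus the last one (it has no label)
x_vec = sliding_window_view(series, window)[:-1]
y_vec = series[window:]

print(x_vec.shape, y_vec.shape)  # (2066, 60) (2066,)
```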

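# Shuffle the training set. Resetting the same seed before each shuffle gives both lists
# the same permutation, so every sample stays paired with its label
np.random.seed(7)
np.random.shuffle(x_train)
np.random.seed(7)
np.random.shuffle(y_train)
tf.random.set_seed(7)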
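The re-seeding trick works because the legacy np.random.shuffle draws the same swap sequence for two sequences of equal length after the same seed. A common alternative draws one index permutation and applies it to both arrays, which makes the pairing explicit. The sketch below checks both approaches on hypothetical toy data in which each label is the sum of its sample's inputs.

```python
import numpy as np

# Hypothetical toy data: each label is the sum of its sample's two inputs,
# so any broken pairing would be easy to spot
x = np.arange(12, dtype=float).reshape(6, 2)
y = x.sum(axis=1)

# The script's approach: re-seed before each shuffle so both lists get the same permutation
xs, ys = list(x), list(y)
np.random.seed(7)
np.random.shuffle(xs)
np.random.seed(7)
np.random.shuffle(ys)
print(np.array_equal(np.array(xs).sum(axis=1), np.array(ys)))  # True

# Alternative: one shared index permutation applied to both arrays
rng = np.random.default_rng(7)
perm = rng.permutation(len(x))
x_shuffled, y_shuffled = x[perm], y[perm]
print(np.array_equal(x_shuffled.sum(axis=1), y_shuffled))  # True
```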

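# Convert the training set from lists to arrays
x_train, y_train = np.array(x_train), np.array(y_train)

# Reshape x_train to the RNN input format: [number of samples, time steps, input features per time step].
# The whole training set is fed in, so the number of samples is x_train.shape[0], i.e. 2066;
# 60 opening prices are used to predict the 61st day's price, so the recurrent core unrolls over 60 time steps;
# each time step receives one day's opening price, a single value, so there is 1 input feature per time step
x_train = np.reshape(x_train, (x_train.shape[0], 60, 1))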
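The reshape only appends a feature axis of length 1. The sketch below shows that indexing with np.newaxis gives the same array. The data is a random stand-in with the same shape as x_train. If more columns were used as inputs per day (a hypothetical extension, not done in this article), the last dimension would grow accordingly.

```python
import numpy as np

rng = np.random.default_rng(0)
# Hypothetical stand-in for x_train after np.array(): 2066 samples of 60 scaled prices
x_2d = rng.random((2066, 60))

# As in the script: [samples, time steps, features per time step]
x_3d = np.reshape(x_2d, (x_2d.shape[0], 60, 1))

# Equivalent idiom: append a trailing feature axis of length 1
x_3d_alt = x_2d[..., np.newaxis]

print(x_3d.shape)                      # (2066, 60, 1)
print(np.array_equal(x_3d, x_3d_alt))  # True
```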

# Test set: the last 300 days of the CSV file
# Loop over the test set: every 60 consecutive opening prices form one input sample (x_test),
# and the 61st day's price is its label; the loop builds 300-60=240 samples.
for i in range(60, len(test_set)):
    x_test.append(test_set[i - 60:i, 0])
    y_test.append(test_set[i, 0])

# Convert the test set to arrays and reshape it to the RNN input format: [samples, time steps, input features per time step]
x_test, y_test = np.array(x_test), np.array(y_test)
x_test = np.reshape(x_test, (x_test.shape[0], 60, 1))

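model = tf.keras.Sequential([
    GRU(80, return_sequences=True),
    Dropout(0.2),
    GRU(100),
    Dropout(0.2),
    Dense(1)
])

model.compile(optimizer=tf.keras.optimizers.Adam(0.001),
              loss='mean_squared_error')  # use mean squared error as the loss function
# This application only monitors the loss, not accuracy, so the metrics option is omitted;
# the per-epoch progress output will therefore show only the loss values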

checkpoint_save_path = "./checkpoint/stock.ckpt"  # TF 2.x tf.keras format; Keras 3 expects a '.weights.h5' name for weights-only saving

if os.path.exists(checkpoint_save_path + '.index'):
    print('-------------load the model-----------------')
    model.load_weights(checkpoint_save_path)

# Note: the test set doubles as validation data here, so save_best_only picks weights by test-set loss
cp_callback = tf.keras.callbacks.ModelCheckpoint(filepath=checkpoint_save_path,
                                                 save_weights_only=True,
                                                 save_best_only=True,
                                                 monitor='val_loss')

history = model.fit(x_train, y_train, batch_size=64, epochs=50, validation_data=(x_test, y_test), validation_freq=1,
                    callbacks=[cp_callback])
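With save_best_only=True and monitor='val_loss', the checkpoint file is overwritten only when val_loss improves on the best value seen so far. The plain-Python sketch below mimics that rule; it does not reproduce Keras's code. It uses the val_loss values of epochs 46 to 50 from the training log shown later in this article. Only those five epochs appear in the log, so an earlier epoch may well have scored lower.

```python
# val_loss values of epochs 46-50, copied from the training log in this article
val_losses = {46: 0.0029, 47: 0.0027, 48: 0.0014, 49: 0.0019, 50: 0.0029}

best_so_far = float("inf")
saved_epoch = None
for epoch, val_loss in val_losses.items():
    # save only on improvement, as save_best_only=True does for a 'min'-type metric
    if val_loss < best_so_far:
        best_so_far = val_loss
        saved_epoch = epoch

print(saved_epoch, best_so_far)  # 48 0.0014
```

ModelCheckpoint only writes files. After model.fit returns, the model in memory still holds the final (epoch 50) weights, and the prediction code below uses those unless model.load_weights(checkpoint_save_path) is called first.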

model.summary()

# Dump the trained parameters (name, shape, values) to a text file
with open('./weights.txt', 'w') as file:
    for v in model.trainable_variables:
        file.write(str(v.name) + '\n')
        file.write(str(v.shape) + '\n')
        file.write(str(v.numpy()) + '\n')

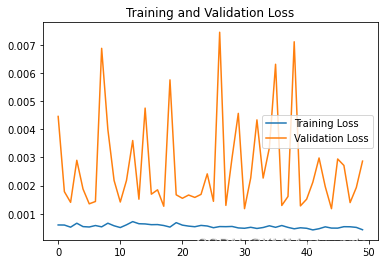

loss = history.history['loss']
val_loss = history.history['val_loss']

plt.plot(loss, label='Training Loss')
plt.plot(val_loss, label='Validation Loss')
plt.title('Training and Validation Loss')
plt.legend()
plt.show()

################## predict ######################

# Feed the test set into the model to make predictions

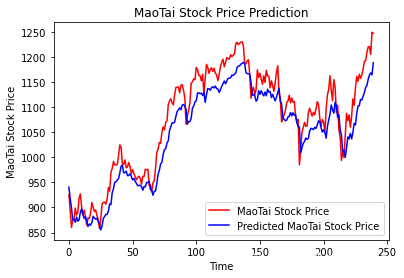

predicted_stock_price = model.predict(x_test)

# Restore the predictions: inverse-transform from (0, 1) back to the original price range
predicted_stock_price = sc.inverse_transform(predicted_stock_price)
# Restore the ground truth: inverse-transform from (0, 1) back to the original price range
real_stock_price = sc.inverse_transform(test_set[60:])

# Plot the real and predicted prices for comparison
plt.plot(real_stock_price, color='red', label='MaoTai Stock Price')
plt.plot(predicted_stock_price, color='blue', label='Predicted MaoTai Stock Price')
plt.title('MaoTai Stock Price Prediction')
plt.xlabel('Time')
plt.ylabel('MaoTai Stock Price')
plt.legend()
plt.show()

##########evaluate##############
# sklearn's argument order is (y_true, y_pred); these metrics are symmetric, so the values are unchanged
# calculate MSE, mean squared error ---> E[(predicted - real)^2] (square the differences, then average)
mse = mean_squared_error(real_stock_price, predicted_stock_price)
# calculate RMSE, root mean squared error ---> sqrt[MSE] (square root of the MSE)
rmse = math.sqrt(mse)
# calculate MAE, mean absolute error ---> E[|predicted - real|] (absolute differences, then average)
mae = mean_absolute_error(real_stock_price, predicted_stock_price)

print('Mean squared error: %.6f' % mse)
print('Root mean squared error: %.6f' % rmse)
print('Mean absolute error: %.6f' % mae)
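The three metrics above are plain averages. The sketch below computes them by hand on made-up prices, not the article's data. For 1-D inputs this matches the sklearn calls. For these inputs MSE is 3.5 and MAE is 1.5. The last line checks that the reported RMSE is the square root of the reported MSE.

```python
import math

def mse(y_true, y_pred):
    # mean of squared differences
    return sum((p - t) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def rmse(y_true, y_pred):
    # square root of the MSE, back in the data's own units
    return math.sqrt(mse(y_true, y_pred))

def mae(y_true, y_pred):
    # mean of absolute differences
    return sum(abs(p - t) for t, p in zip(y_true, y_pred)) / len(y_true)

# Hypothetical prices, not the article's data
real = [100.0, 102.0, 101.0, 105.0]
pred = [98.0, 103.0, 104.0, 105.0]
print(mse(real, pred), mae(real, pred))  # 3.5 1.5

# Consistency check against the values reported in this article
print(abs(math.sqrt(1440.501382) - 37.953938) < 1e-5)  # True
```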

Stock prediction results:

Epoch 46/50
33/33 [==============================] - 2s 60ms/step - loss: 4.8815e-04 - val_loss: 0.0029
Epoch 47/50
33/33 [==============================] - 2s 73ms/step - loss: 5.3936e-04 - val_loss: 0.0027
Epoch 48/50
33/33 [==============================] - 2s 74ms/step - loss: 5.3651e-04 - val_loss: 0.0014
Epoch 49/50
33/33 [==============================] - 2s 75ms/step - loss: 5.1446e-04 - val_loss: 0.0019
Epoch 50/50
33/33 [==============================] - 2s 75ms/step - loss: 4.3118e-04 - val_loss: 0.0029
Model: "sequential"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
gru (GRU)                    (None, 60, 80)            19920
_________________________________________________________________
dropout (Dropout)            (None, 60, 80)            0
_________________________________________________________________
gru_1 (GRU)                  (None, 100)               54600
_________________________________________________________________
dropout_1 (Dropout)          (None, 100)               0
_________________________________________________________________
dense (Dense)                (None, 1)                 101
=================================================================
Total params: 74,621
Trainable params: 74,621
Non-trainable params: 0
_________________________________________________________________
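The parameter counts in the summary above can be reproduced by hand. A tf.keras GRU with the TF 2.x default reset_after=True has a kernel of shape (input_dim, 3*units), a recurrent_kernel of shape (units, 3*units), and a bias of shape (2, 3*units). These are the shapes written to weights.txt. Dropout has no parameters. The helper names below are hypothetical. The LSTM count, four blocks with a single bias, is included only to back up the claim that GRU is smaller.

```python
def gru_params(input_dim, units, reset_after=True):
    # kernel (input_dim x 3*units) + recurrent_kernel (units x 3*units)
    weights = 3 * units * (input_dim + units)
    # reset_after=True keeps separate input and recurrent biases: 2 rows of 3*units
    biases = (2 if reset_after else 1) * 3 * units
    return weights + biases

def dense_params(input_dim, units):
    return input_dim * units + units

def lstm_params(input_dim, units):
    # four gate blocks, one bias vector
    return 4 * (units * (input_dim + units) + units)

gru_1 = gru_params(1, 80)     # first GRU: 1 feature per time step
gru_2 = gru_params(80, 100)   # second GRU: 80 features per time step
dense = dense_params(100, 1)
print(gru_1, gru_2, dense, gru_1 + gru_2 + dense)  # 19920 54600 101 74621
print(lstm_params(1, 80))  # 26240, versus 19920 for GRU(80) on the same input
```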

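Mean squared error: 1440.501382
Root mean squared error: 37.953938
Mean absolute error: 32.823045

These are computed on prices restored to the original scale, so MSE is in squared price units and RMSE and MAE are in price units.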
