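I. Training my own YOLOv5-Lite model

I trained on my own dataset for garbage classification. At first I trained for 100 epochs on my own computer and the results were mediocre. Another 100 epochs still gave mediocre results. Finally I rented a server and trained for 500 epochs. The model had converged by about epoch 300, but the accuracy was very poor, only around 0.58.

II. Deploying to the Raspberry Pi

Deploying YOLO to the Raspberry Pi was a very, very painful process.

1. Configuring the Raspberry Pi environment

1.1 The first problem: I accidentally clicked to deny VNC access, so my computer could no longer connect over VNC and the only option was to reflash the system. After flashing a 64-bit system, I could connect with Xshell but not with VNC: VNC Viewer timed out when connecting to the Pi.

Solution: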

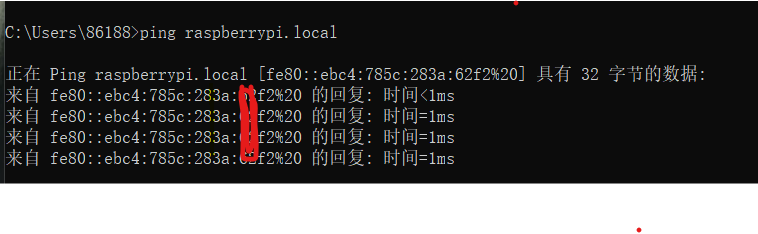

a: In cmd, run

```
ping raspberrypi.local
```

It returns an IPv6 address.

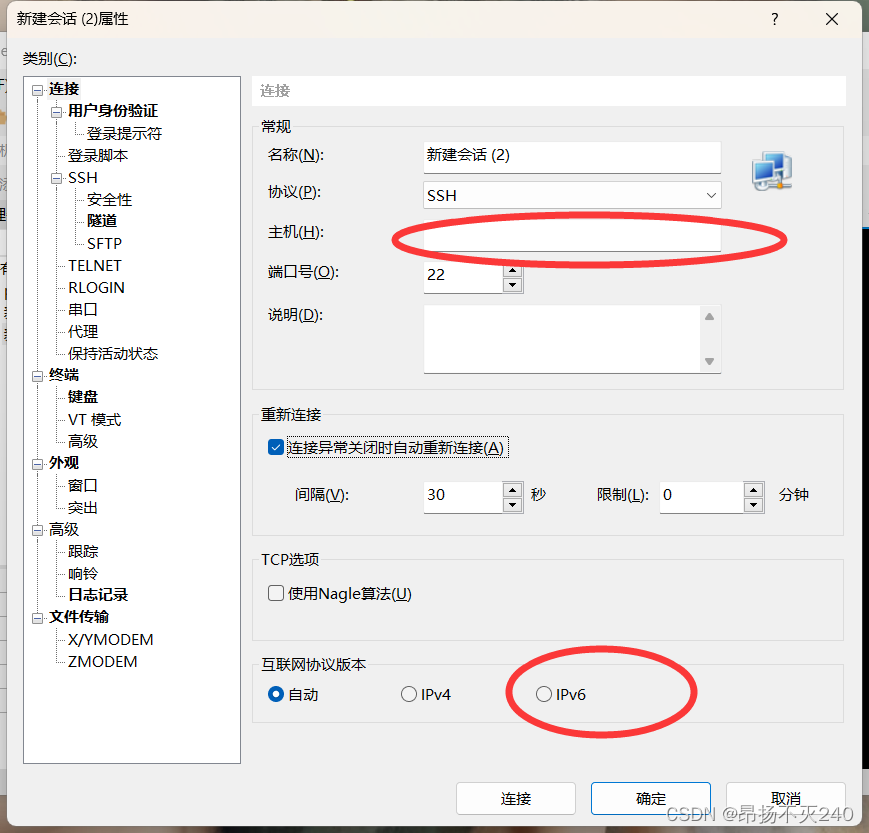

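b: Open Xshell, enter the IP address in the host field, and select IPv6.

c: Set the username to pi and the password to raspberry (or whatever password you chose; just make sure you remember it).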

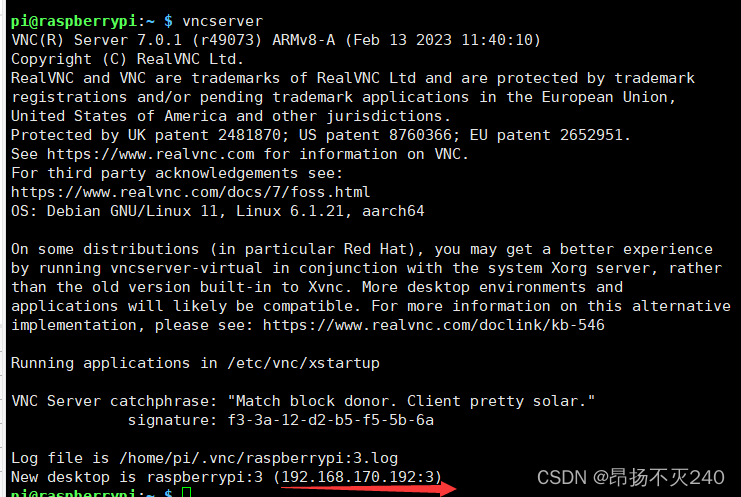

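d: Once connected, run vncserver. Its output shows the Pi's IPv4 address and the corresponding port number.

e: Copy the IP address and port number (everything inside the parentheses), create a new connection in VNC Viewer, and paste them in.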
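Step a depends on the name `raspberrypi.local` resolving to an address, and the answer can be IPv6 rather than IPv4, which is why IPv6 has to be selected in Xshell. To see every address a hostname resolves to, a minimal sketch using the POSIX `getaddrinfo` API follows. It assumes a Linux/POSIX machine (the Windows cmd from step a would need Winsock instead), and resolving `.local` names assumes an mDNS resolver such as Avahi with nss-mdns is configured; `resolve_all` is a hypothetical helper name.

```cpp
#include <arpa/inet.h>
#include <netdb.h>
#include <netinet/in.h>
#include <sys/socket.h>
#include <string>
#include <vector>

// Hypothetical helper: return every IPv4/IPv6 address that `host` resolves to,
// formatted as text. Returns an empty vector if resolution fails.
std::vector<std::string> resolve_all(const char* host)
{
    addrinfo hints{};                // zero-initialized
    hints.ai_family = AF_UNSPEC;     // accept both IPv4 and IPv6 results
    hints.ai_socktype = SOCK_STREAM; // one entry per address instead of one per socket type

    std::vector<std::string> out;
    addrinfo* res = nullptr;
    if (getaddrinfo(host, nullptr, &hints, &res) != 0)
        return out;

    for (addrinfo* p = res; p != nullptr; p = p->ai_next) {
        const void* addr = nullptr;
        if (p->ai_family == AF_INET)
            addr = &reinterpret_cast<sockaddr_in*>(p->ai_addr)->sin_addr;
        else if (p->ai_family == AF_INET6)
            addr = &reinterpret_cast<sockaddr_in6*>(p->ai_addr)->sin6_addr;

        char buf[INET6_ADDRSTRLEN]; // large enough for either family
        if (addr != nullptr && inet_ntop(p->ai_family, addr, buf, sizeof(buf)) != nullptr)
            out.push_back(buf);
    }
    freeaddrinfo(res);
    return out;
}
```

Printing each entry of `resolve_all("raspberrypi.local")` would typically list the same IPv6 address that `ping` showed, plus any IPv4 address the Pi announces.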

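1.2 The Raspberry Pi's Python environment cannot run YOLOv5 or YOLOv5-Lite directly

At first I used YOLOv5. After copying it to the Raspberry Pi, it crashed with a segmentation fault. From what I read, the Raspberry Pi could not run the PyTorch framework, even though I had seen video creators run YOLOv5 on it very easily: set up the environment and run it directly with Python. I hope you are one of the lucky ones. I was using a Raspberry Pi 4B with 2 GB of RAM, and watching memory usage during the run, it did look like memory was exhausted. I tried enlarging the swap space, but it didn't help. Next I tried a lightweight version of YOLOv5, YOLOv5-Lite, but after training it I got the same error. After a lot of digging I learned that when torch won't run on an embedded device, you need a dedicated C++ inference framework, and so began my love-hate relationship with ncnn.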
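The memory diagnosis above can be made more concrete by reading the kernel's own counters instead of eyeballing a monitor. A minimal sketch, assuming Linux, where `/proc/meminfo` reports fields such as `MemAvailable` and `SwapFree` in kB; the function name `meminfo_kb` is hypothetical.

```cpp
#include <fstream>
#include <istream>
#include <sstream>
#include <string>

// Hypothetical helper: return the value (in kB) of one field of /proc/meminfo,
// e.g. "MemAvailable" or "SwapFree", read from any stream in that format.
// Returns -1 if the field is not present.
long long meminfo_kb(std::istream& in, const std::string& field)
{
    const std::string key = field + ":";
    std::string line;
    while (std::getline(in, line)) {
        if (line.compare(0, key.size(), key) == 0) {
            std::istringstream rest(line.substr(key.size()));
            long long kb = -1;
            rest >> kb; // the number is followed by the unit "kB"
            return kb;
        }
    }
    return -1;
}

// Convenience overload that reads the live system file.
long long meminfo_kb(const std::string& field)
{
    std::ifstream f("/proc/meminfo");
    return meminfo_kb(f, field);
}
```

Polling `meminfo_kb("MemAvailable")` and `meminfo_kb("SwapFree")` from a second program while the model loads shows whether RAM and swap really approach zero right before the crash.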

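2. Deploying YOLOv5-Lite on a Raspberry Pi 4B with NCNN

There are many posts about this on CSDN, and they are all quite detailed.

I mainly followed these two, both very thorough (one does mask detection and the other object detection; I relied more on the object detection one):

Link: link

Link: link

However, I have to criticize one blogger here: the procedure contains obvious errors, so I don't recommend following it.

Link: link

2.1 Problems I ran into while following these guides, and how I solved them

Here I followed: link

a: Step one is configuring ncnn. Installing the dependencies went without major problems. ncnn itself can be downloaded directly from its download address; I recommend version NCNN-20210525. After downloading, move it to the Raspberry Pi desktop and build ncnn following the guide's steps.

b: For model conversion, exporting the PyTorch model to ONNX went without major problems. Once the ONNX model is generated, copy it to the Raspberry Pi.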

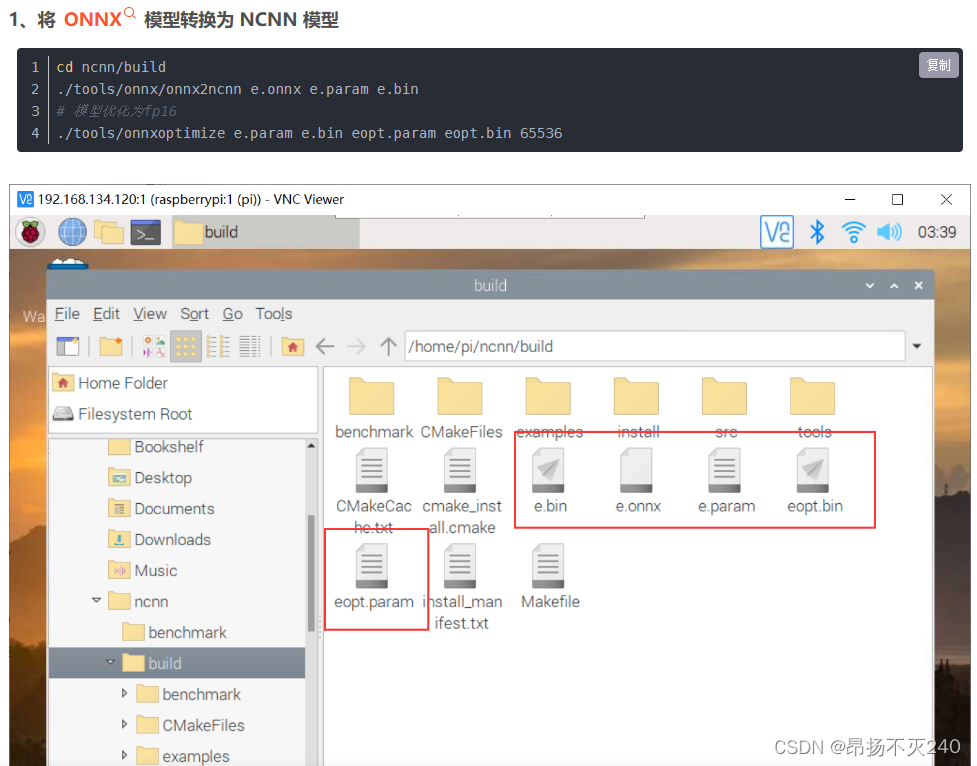

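When converting the ONNX model into an ncnn model and optimizing it, pay attention to where the source file is: the ONNX file must be placed in the folder shown in the screenshot above.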
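A quick way to confirm that the conversion produced a usable ncnn model is to check the header of the generated `.param` file: in ncnn's text param format the first line is the magic number `7767517` and the second line holds the layer count and the blob count. A minimal sketch; `ParamHeader` and `read_param_header` are hypothetical names.

```cpp
#include <istream>
#include <sstream>

// Header of an ncnn text .param file:
//   line 1: magic number 7767517
//   line 2: <layer_count> <blob_count>
struct ParamHeader {
    int layer_count = 0;
    int blob_count = 0;
};

// Hypothetical helper: returns true and fills `hdr` if the stream starts with
// a valid ncnn param header; returns false for anything else (a missing or
// empty file, or a file in some other format).
bool read_param_header(std::istream& in, ParamHeader& hdr)
{
    int magic = 0;
    if (!(in >> magic) || magic != 7767517)
        return false;
    if (!(in >> hdr.layer_count >> hdr.blob_count))
        return false;
    return hdr.layer_count > 0 && hdr.blob_count > 0;
}
```

Opening `eopt.param` with `std::ifstream` and passing it in gives a quick yes/no before moving on to the C++ code.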

c: The code:

// Tencent is pleased to support the open source community by making ncnn available.

//

// Copyright (C) 2020 THL A29 Limited, a Tencent company. All rights reserved.

//

// Licensed under the BSD 3-Clause License (the "License"); you may not use this file except

// in compliance with the License. You may obtain a copy of the License at

//

// https://opensource.org/licenses/BSD-3-Clause

//

// Unless required by applicable law or agreed to in writing, software distributed

// under the License is distributed on an "AS IS" BASIS, WITHOUT WARRANTIES OR

// CONDITIONS OF ANY KIND, either express or implied. See the License for the

// specific language governing permissions and limitations under the License.

#include "layer.h"

#include "net.h"

#if defined(USE_NCNN_SIMPLEOCV)

#include "simpleocv.h"

#else

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/imgproc/imgproc.hpp>

#endif

#include <float.h>

#include <stdio.h>

#include <vector>

#include <sys/time.h>

#include <iostream>

#include <chrono>

#include <opencv2/opencv.hpp>

using namespace std;

using namespace cv;

using namespace std::chrono;

// 0 : FP16

// 1 : INT8

#define USE_INT8 0

// 0 : Image

// 1 : Camera

#define USE_CAMERA 1

struct Object

{

cv::Rect_<float> rect;

int label;

float prob;

};

static inline float intersection_area(const Object& a, const Object& b)

{

cv::Rect_<float> inter = a.rect & b.rect;

return inter.area();

}

static void qsort_descent_inplace(std::vector<Object>& faceobjects, int left, int right)

{

int i = left;

int j = right;

float p = faceobjects[(left + right) / 2].prob;

while (i <= j)

{

while (faceobjects[i].prob > p)

i++;

while (faceobjects[j].prob < p)

j--;

if (i <= j)

{

// swap

std::swap(faceobjects[i], faceobjects[j]);

i++;

j--;

}

}

#pragma omp parallel sections

{

#pragma omp section

{

if (left < j) qsort_descent_inplace(faceobjects, left, j);

}

#pragma omp section

{

if (i < right) qsort_descent_inplace(faceobjects, i, right);

}

}

}

static void qsort_descent_inplace(std::vector<Object>& faceobjects)

{

if (faceobjects.empty())

return;

qsort_descent_inplace(faceobjects, 0, faceobjects.size() - 1);

}

static void nms_sorted_bboxes(const std::vector<Object>& faceobjects, std::vector<int>& picked, float nms_threshold)

{

picked.clear();

const int n = faceobjects.size();

std::vector<float> areas(n);

for (int i = 0; i < n; i++)

{

areas[i] = faceobjects[i].rect.area();

}

for (int i = 0; i < n; i++)

{

const Object& a = faceobjects[i];

int keep = 1;

for (int j = 0; j < (int)picked.size(); j++)

{

const Object& b = faceobjects[picked[j]];

// intersection over union

float inter_area = intersection_area(a, b);

float union_area = areas[i] + areas[picked[j]] - inter_area;

// float IoU = inter_area / union_area

if (inter_area / union_area > nms_threshold)

keep = 0;

}

if (keep)

picked.push_back(i);

}

}

static inline float sigmoid(float x)

{

return static_cast<float>(1.f / (1.f + exp(-x)));

}

// unsigmoid

static inline float unsigmoid(float y) {

return static_cast<float>(-1.0 * (log((1.0 / y) - 1.0)));

}

static void generate_proposals(const ncnn::Mat &anchors, int stride, const ncnn::Mat &in_pad,

const ncnn::Mat &feat_blob, float prob_threshold,

std::vector <Object> &objects) {

const int num_grid = feat_blob.h;

float unsig_pro = 0;

if (prob_threshold > 0.6)

unsig_pro = unsigmoid(prob_threshold);

int num_grid_x;

int num_grid_y;

if (in_pad.w > in_pad.h) {

num_grid_x = in_pad.w / stride;

num_grid_y = num_grid / num_grid_x;

} else {

num_grid_y = in_pad.h / stride;

num_grid_x = num_grid / num_grid_y;

}

const int num_class = feat_blob.w - 5;

const int num_anchors = anchors.w / 2;

for (int q = 0; q < num_anchors; q++) {

const float anchor_w = anchors[q * 2];

const float anchor_h = anchors[q * 2 + 1];

const ncnn::Mat feat = feat_blob.channel(q);

for (int i = 0; i < num_grid_y; i++) {

for (int j = 0; j < num_grid_x; j++) {

const float *featptr = feat.row(i * num_grid_x + j);

// find class index with max class score

int class_index = 0;

float class_score = -FLT_MAX;

float box_score = featptr[4];

if (prob_threshold > 0.6) {

// while prob_threshold > 0.6, unsigmoid better than sigmoid

if (box_score > unsig_pro) {

for (int k = 0; k < num_class; k++) {

float score = featptr[5 + k];

if (score > class_score) {

class_index = k;

class_score = score;

}

}

float confidence = sigmoid(box_score) * sigmoid(class_score);

if (confidence >= prob_threshold) {

float dx = sigmoid(featptr[0]);

float dy = sigmoid(featptr[1]);

float dw = sigmoid(featptr[2]);

float dh = sigmoid(featptr[3]);

float pb_cx = (dx * 2.f - 0.5f + j) * stride;

float pb_cy = (dy * 2.f - 0.5f + i) * stride;

float pb_w = pow(dw * 2.f, 2) * anchor_w;

float pb_h = pow(dh * 2.f, 2) * anchor_h;

float x0 = pb_cx - pb_w * 0.5f;

float y0 = pb_cy - pb_h * 0.5f;

float x1 = pb_cx + pb_w * 0.5f;

float y1 = pb_cy + pb_h * 0.5f;

Object obj;

obj.rect.x = x0;

obj.rect.y = y0;

obj.rect.width = x1 - x0;

obj.rect.height = y1 - y0;

obj.label = class_index;

obj.prob = confidence;

objects.push_back(obj);

}

} else {

for (int k = 0; k < num_class; k++) {

float score = featptr[5 + k];

if (score > class_score) {

class_index = k;

class_score = score;

}

}

float confidence = sigmoid(box_score) * sigmoid(class_score);

if (confidence >= prob_threshold) {

float dx = sigmoid(featptr[0]);

float dy = sigmoid(featptr[1]);

float dw = sigmoid(featptr[2]);

float dh = sigmoid(featptr[3]);

float pb_cx = (dx * 2.f - 0.5f + j) * stride;

float pb_cy = (dy * 2.f - 0.5f + i) * stride;

float pb_w = pow(dw * 2.f, 2) * anchor_w;

float pb_h = pow(dh * 2.f, 2) * anchor_h;

float x0 = pb_cx - pb_w * 0.5f;

float y0 = pb_cy - pb_h * 0.5f;

float x1 = pb_cx + pb_w * 0.5f;

float y1 = pb_cy + pb_h * 0.5f;

Object obj;

obj.rect.x = x0;

obj.rect.y = y0;

obj.rect.width = x1 - x0;

obj.rect.height = y1 - y0;

obj.label = class_index;

obj.prob = confidence;

objects.push_back(obj);

}

}

}

}

}

}

}

static int detect_yolov5(const cv::Mat& bgr, std::vector<Object>& objects)

{

ncnn::Net yolov5;

#if USE_INT8

yolov5.opt.use_int8_inference=true;

#else

yolov5.opt.use_vulkan_compute = true;

yolov5.opt.use_bf16_storage = true;

#endif

// original pretrained model from https://github.com/ultralytics/yolov5

// the ncnn model https://github.com/nihui/ncnn-assets/tree/master/models

#if USE_INT8

yolov5.load_param("/home/pi/ncnn/build/e.param");

yolov5.load_model("/home/pi/ncnn/build/e.bin");

#else

yolov5.load_param("/home/pi/ncnn/build/eopt.param");

yolov5.load_model("/home/pi/ncnn/build/eopt.bin");

#endif

const int target_size = 320;

const float prob_threshold = 0.60f;

const float nms_threshold = 0.60f;

int img_w = bgr.cols;

int img_h = bgr.rows;

// letterbox pad to multiple of 32

int w = img_w;

int h = img_h;

float scale = 1.f;

if (w > h)

{

scale = (float)target_size / w;

w = target_size;

h = h * scale;

}

else

{

scale = (float)target_size / h;

h = target_size;

w = w * scale;

}

ncnn::Mat in = ncnn::Mat::from_pixels_resize(bgr.data, ncnn::Mat::PIXEL_BGR2RGB, img_w, img_h, w, h);

// pad to target_size rectangle

// yolov5/utils/datasets.py letterbox

int wpad = (w + 31) / 32 * 32 - w;

int hpad = (h + 31) / 32 * 32 - h;

ncnn::Mat in_pad;

ncnn::copy_make_border(in, in_pad, hpad / 2, hpad - hpad / 2, wpad / 2, wpad - wpad / 2, ncnn::BORDER_CONSTANT, 114.f);

const float norm_vals[3] = {1 / 255.f, 1 / 255.f, 1 / 255.f};

in_pad.substract_mean_normalize(0, norm_vals);

ncnn::Extractor ex = yolov5.create_extractor();

ex.input("images", in_pad);

std::vector<Object> proposals;

// stride 8

{

ncnn::Mat out;

ex.extract("onnx::Sigmoid_647", out);

ncnn::Mat anchors(6);

anchors[0] = 10.f;

anchors[1] = 13.f;

anchors[2] = 16.f;

anchors[3] = 30.f;

anchors[4] = 33.f;

anchors[5] = 23.f;

std::vector<Object> objects8;

generate_proposals(anchors, 8, in_pad, out, prob_threshold, objects8);

proposals.insert(proposals.end(), objects8.begin(), objects8.end());

}

// stride 16

{

ncnn::Mat out;

ex.extract("onnx::Sigmoid_669", out);

ncnn::Mat anchors(6);

anchors[0] = 30.f;

anchors[1] = 61.f;

anchors[2] = 62.f;

anchors[3] = 45.f;

anchors[4] = 59.f;

anchors[5] = 119.f;

std::vector<Object> objects16;

generate_proposals(anchors, 16, in_pad, out, prob_threshold, objects16);

proposals.insert(proposals.end(), objects16.begin(), objects16.end());

}

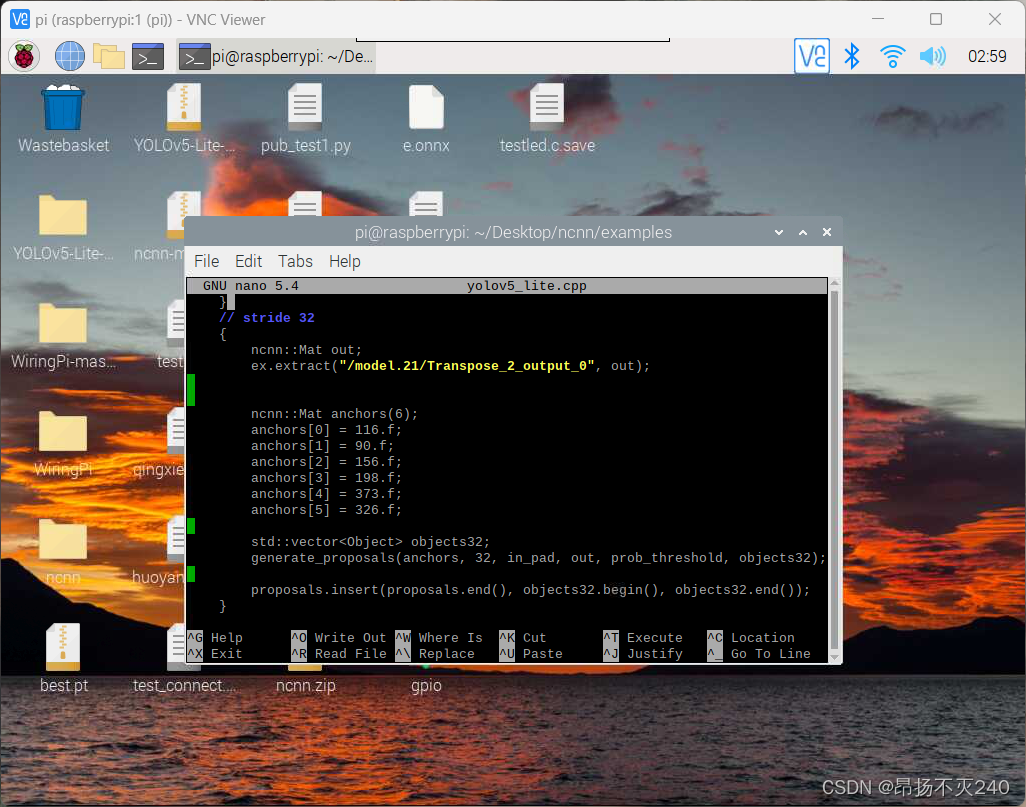

// stride 32

{

ncnn::Mat out;

ex.extract("onnx::Sigmoid_691", out);

ncnn::Mat anchors(6);

anchors[0] = 116.f;

anchors[1] = 90.f;

anchors[2] = 156.f;

anchors[3] = 198.f;

anchors[4] = 373.f;

anchors[5] = 326.f;

std::vector<Object> objects32;

generate_proposals(anchors, 32, in_pad, out, prob_threshold, objects32);

proposals.insert(proposals.end(), objects32.begin(), objects32.end());

}

// sort all proposals by score from highest to lowest

qsort_descent_inplace(proposals);

// apply nms with nms_threshold

std::vector<int> picked;

nms_sorted_bboxes(proposals, picked, nms_threshold);

int count = picked.size();

objects.resize(count);

for (int i = 0; i < count; i++)

{

objects[i] = proposals[picked[i]];

// adjust offset to original unpadded

float x0 = (objects[i].rect.x - (wpad / 2)) / scale;

float y0 = (objects[i].rect.y - (hpad / 2)) / scale;

float x1 = (objects[i].rect.x + objects[i].rect.width - (wpad / 2)) / scale;

float y1 = (objects[i].rect.y + objects[i].rect.height - (hpad / 2)) / scale;

// clip

x0 = std::max(std::min(x0, (float)(img_w - 1)), 0.f);

y0 = std::max(std::min(y0, (float)(img_h - 1)), 0.f);

x1 = std::max(std::min(x1, (float)(img_w - 1)), 0.f);

y1 = std::max(std::min(y1, (float)(img_h - 1)), 0.f);

objects[i].rect.x = x0;

objects[i].rect.y = y0;

objects[i].rect.width = x1 - x0;

objects[i].rect.height = y1 - y0;

}

return 0;

}

static void draw_objects(const cv::Mat& bgr, const std::vector<Object>& objects)

{

static const char* class_names[] = {

"drug","glue","prime"

};

cv::Mat image = bgr.clone();

for (size_t i = 0; i < objects.size(); i++)

{

const Object& obj = objects[i];

fprintf(stderr, "%d = %.5f at %.2f %.2f %.2f x %.2f\n", obj.label, obj.prob,

obj.rect.x, obj.rect.y, obj.rect.width, obj.rect.height);

cv::rectangle(image, obj.rect, cv::Scalar(0, 255, 0));

char text[256];

sprintf(text, "%s %.1f%%", class_names[obj.label], obj.prob * 100);

int baseLine = 0;

cv::Size label_size = cv::getTextSize(text, cv::FONT_HERSHEY_SIMPLEX, 0.5, 1, &baseLine);

int x = obj.rect.x;

int y = obj.rect.y - label_size.height - baseLine;

if (y < 0)

y = 0;

if (x + label_size.width > image.cols)

x = image.cols - label_size.width;

cv::rectangle(image, cv::Rect(cv::Point(x, y), cv::Size(label_size.width, label_size.height + baseLine)),

cv::Scalar(255, 255, 255), -1);

cv::putText(image, text, cv::Point(x, y + label_size.height),

cv::FONT_HERSHEY_SIMPLEX, 0.5, cv::Scalar(0, 0, 0));

// cv::putText(image, to_string(fps), cv::Point(100, 100), //FPS

//cv::FONT_HERSHEY_SIMPLEX, 0.5, cv::Scalar(0, 0, 0));

}

#if USE_CAMERA

imshow("camera", image);

cv::waitKey(1);

#else

cv::imwrite("result.jpg", image);

#endif

}

#if USE_CAMERA

int main(int argc, char** argv)

{

cv::VideoCapture capture;

capture.open(0); //修改这个参数可以选择打开想要用的摄像头

cv::Mat frame;

//111

int FPS = 0;

int total_frames = 0;

high_resolution_clock::time_point t1, t2;

while (true)

{

capture >> frame;

cv::Mat m = frame;

cv::Mat f = frame;

std::vector<Object> objects;

auto start_time = std::chrono::high_resolution_clock::now(); // 记录开始时间

detect_yolov5(frame, objects);

auto end_time = std::chrono::high_resolution_clock::now(); // 记录结束时间

auto duration = std::chrono::duration_cast<std::chrono::milliseconds>(end_time - start_time); // 计算执行时间

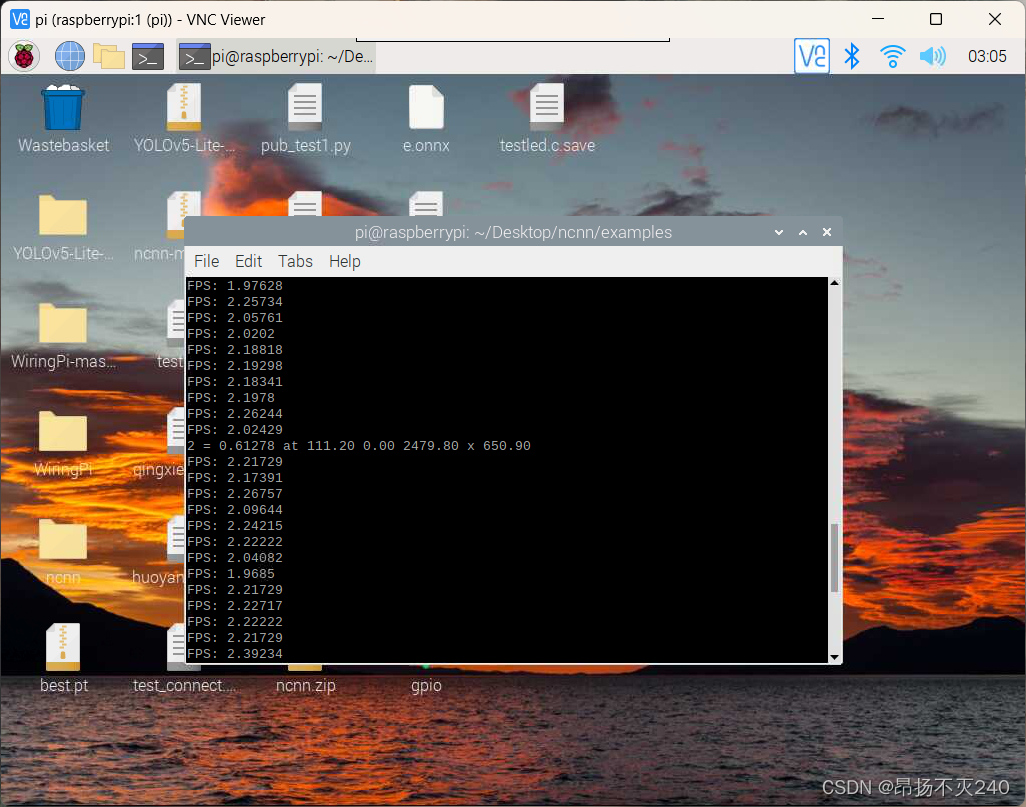

float fps = (float)(1000)/duration.count();

draw_objects(m, objects);

cout << "FPS: " << fps << endl;

//int fps = 1000/duration.count();

//int x = m.cols-50;

//int y = m.rows-50;

//cv::putText(f, to_string(fps), cv::Point(100, 100), cv::FONT_HERSHEY_SIMPLEX, 0.5, cv::Scalar(0, 0, 0));

//if (cv::waitKey(30) >= 0)

//break;

}

}

#else

int main(int argc, char** argv)

{

if (argc != 2)

{

fprintf(stderr, "Usage: %s [imagepath]\n", argv[0]);

return -1;

}

const char* imagepath = argv[1];

struct timespec begin, end;

long time;

clock_gettime(CLOCK_MONOTONIC, &begin);

cv::Mat m = cv::imread(imagepath, 1);

if (m.empty())

{

fprintf(stderr, "cv::imread %s failed\n", imagepath);

return -1;

}

std::vector<Object> objects;

detect_yolov5(m, objects);

clock_gettime(CLOCK_MONOTONIC, &end);

time = (end.tv_sec - begin.tv_sec) + (end.tv_nsec - begin.tv_nsec);

printf(">> Time : %lf ms\n", (double)time/1000000);

draw_objects(m, objects);

return 0;

}

#endif
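The box geometry in `generate_proposals()` and `detect_yolov5()` can be checked without ncnn or OpenCV. The sketch below re-implements, with plain floats, the same YOLOv5 decoding (`cx = (sigmoid(tx)*2 - 0.5 + j) * stride`, `w = (sigmoid(tw)*2)^2 * anchor_w`) and the inverse letterbox mapping used in the code above, minus the final clipping. The names `Box`, `Letterbox`, `decode_box`, `make_letterbox` and `unletterbox` are hypothetical.

```cpp
#include <cmath>

struct Box { float x0, y0, x1, y1; };

static float sigmoidf(float x) { return 1.f / (1.f + std::exp(-x)); }

// Decode one anchor's raw outputs (tx, ty, tw, th) at grid cell (row i, col j)
// the same way generate_proposals() does. Result is in padded-input pixels.
Box decode_box(const float raw[4], int i, int j, int stride, float anchor_w, float anchor_h)
{
    float cx = (sigmoidf(raw[0]) * 2.f - 0.5f + j) * stride;
    float cy = (sigmoidf(raw[1]) * 2.f - 0.5f + i) * stride;
    float w = std::pow(sigmoidf(raw[2]) * 2.f, 2.f) * anchor_w;
    float h = std::pow(sigmoidf(raw[3]) * 2.f, 2.f) * anchor_h;
    return {cx - w * 0.5f, cy - h * 0.5f, cx + w * 0.5f, cy + h * 0.5f};
}

// Letterbox parameters as computed in detect_yolov5(): scale the longer side
// to target_size, then pad each side up to a multiple of 32.
struct Letterbox { float scale; int wpad, hpad; };

Letterbox make_letterbox(int img_w, int img_h, int target_size)
{
    float scale;
    int w, h;
    if (img_w > img_h) { scale = (float)target_size / img_w; w = target_size; h = img_h * scale; }
    else               { scale = (float)target_size / img_h; h = target_size; w = img_w * scale; }
    return {scale, (w + 31) / 32 * 32 - w, (h + 31) / 32 * 32 - h};
}

// Map a box from padded-input coordinates back to the original image:
// remove the top/left padding, then undo the scaling.
Box unletterbox(const Box& b, const Letterbox& lb)
{
    return {(b.x0 - lb.wpad / 2) / lb.scale, (b.y0 - lb.hpad / 2) / lb.scale,
            (b.x1 - lb.wpad / 2) / lb.scale, (b.y1 - lb.hpad / 2) / lb.scale};
}
```

With all four raw outputs equal to 0 (sigmoid = 0.5), the box is centered in its grid cell and has exactly the anchor's size: at row 2, column 3 with stride 8 and anchor 10×13 it spans (23, 13.5) to (33, 26.5). For a 640×480 frame and `target_size = 320`, the scale is 0.5 and 16 rows of padding are added (8 on top), so that box maps back to (46, 11) to (66, 37) in the original frame.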

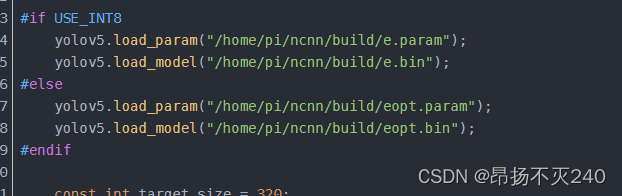

需要特别注意的地方是,此处代码有两个地方需要修改:

(1)

此处地址需要修改为自己文件所对应的位置!!!!

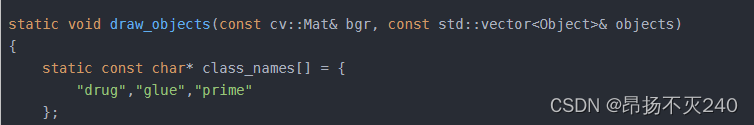

(2)

此处的class应修改为自己对应的类别,

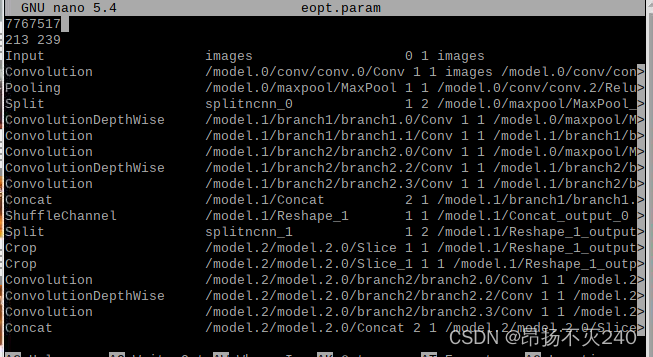

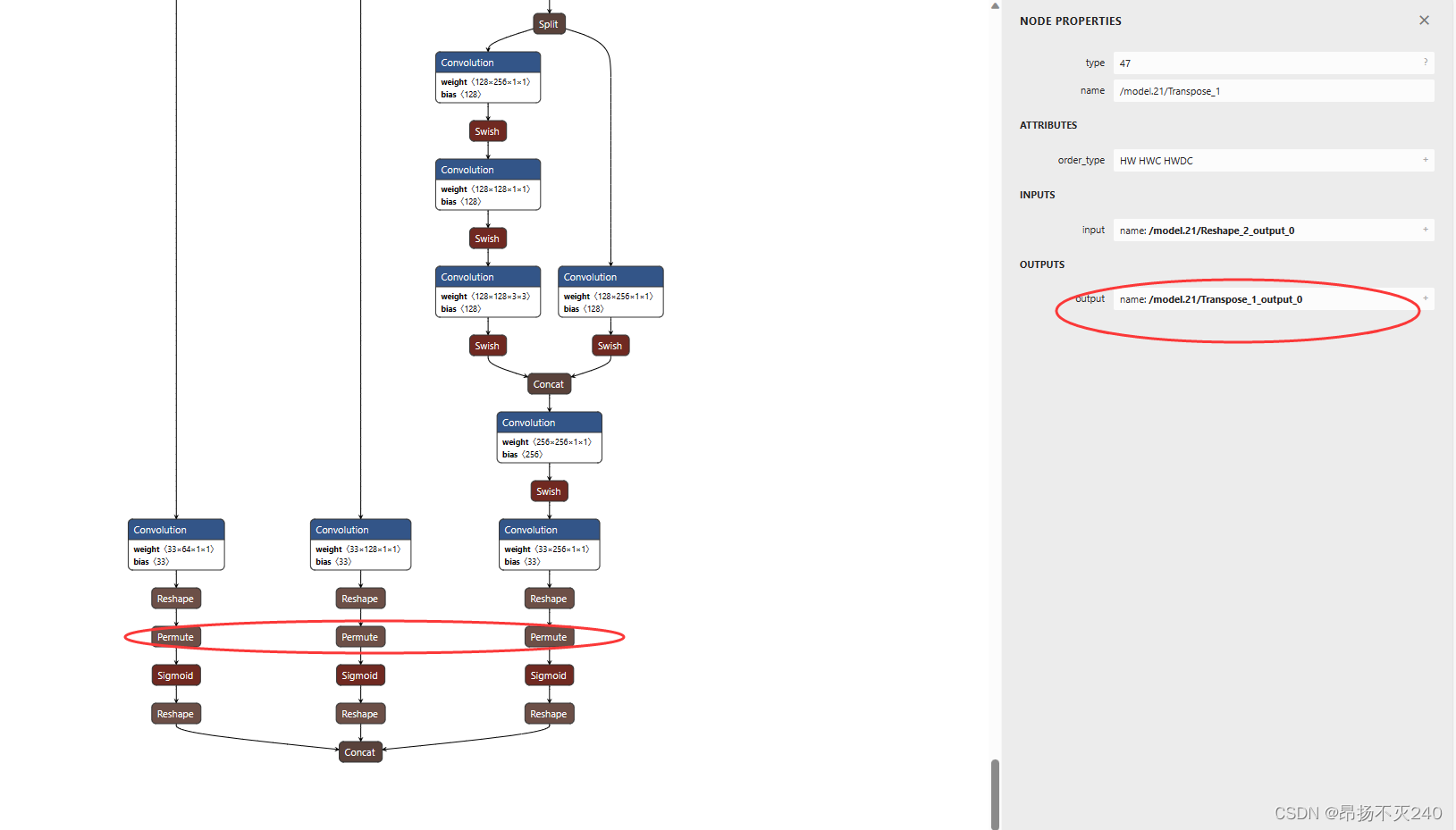

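d: Modifying eopt.param caused me a big problem: my eopt.param file had a very strange structure, as shown in the figure.

Even so, I followed the steps and modified the parameters after Reshape.

e: When modifying yolov5_lite.cpp, I found that the anchors in the source code were the same as mine, so I did not change them.

f: Open eopt.param and modify the cpp file according to the Permute layers. Because of my file's unusual structure I couldn't do this, so I skipped this step.

g: Modify CMakeLists.txt. Open examples/CMakeLists.txt and add ncnn_add_example(yolov5_lite). The name must match the source file name (yolov5_lite.cpp), and make sure you are editing the CMakeLists.txt in the examples directory.

h: When done, build with cmake:

```
cd ncnn/build
cmake ..
make
```

The generated executable is in build/examples, where you will find yolov5_lite.

i: Go into the examples folder and run:

```
./yolov5_lite
```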

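Because I had skipped a step, running it failed with the following errors:

```
find_blob_index_by_name images failed
Try
find_blob_index_by_name onnx::Sigmoid_647 failed
Try
```

How I solved it:

a: Following the "strip visible strings" method in the ncnn usage guide, convert the model's param and bin files into id.h and mem.h (with ncnn2mem). id.h then contains the blob names of the input and output layers.

b: Refer to the available solutions; I used option 2.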

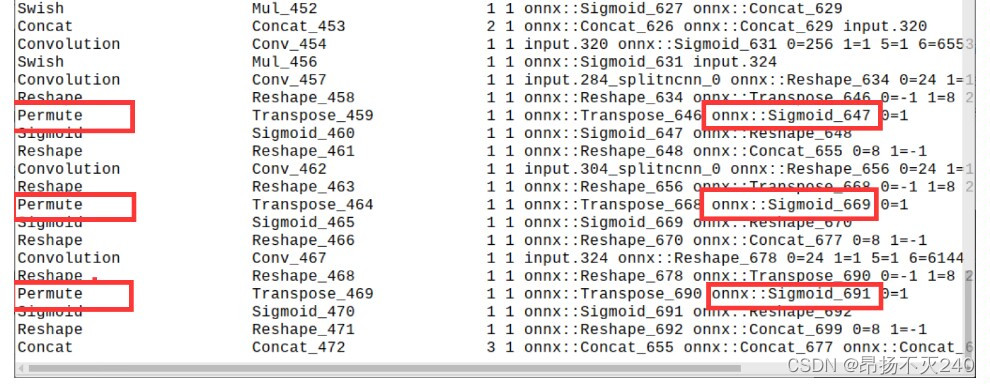

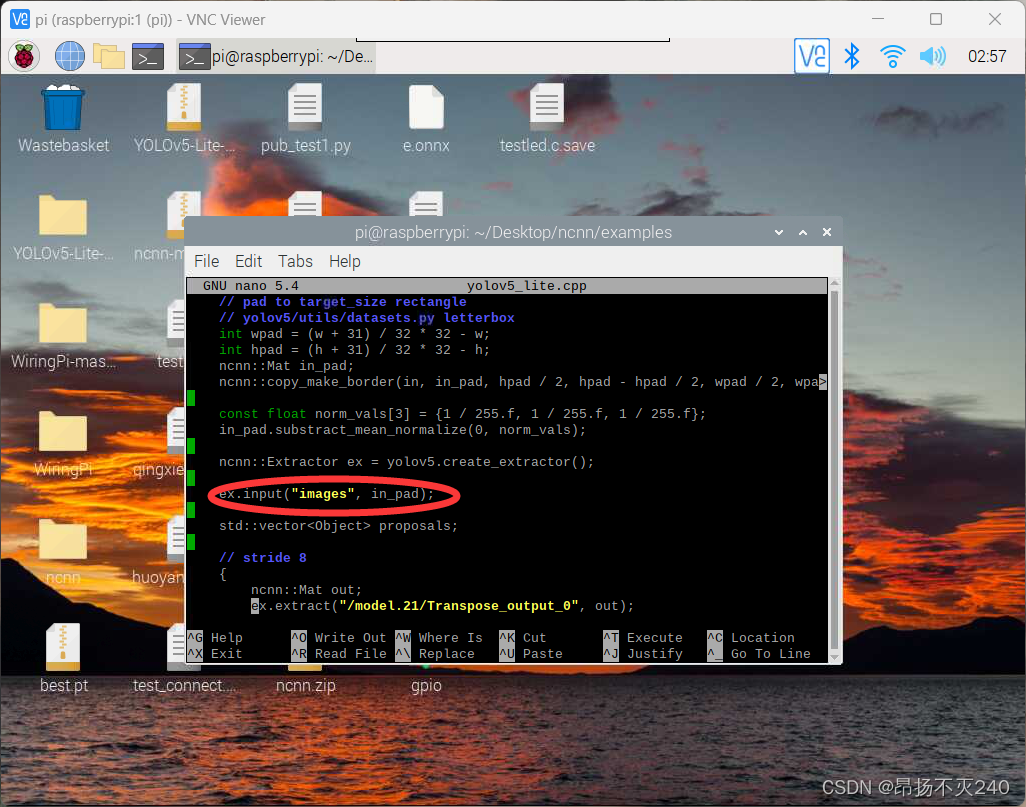

c: Open eopt.param in Netron, find the name of the input, and change the corresponding place in yolov5_lite.cpp.

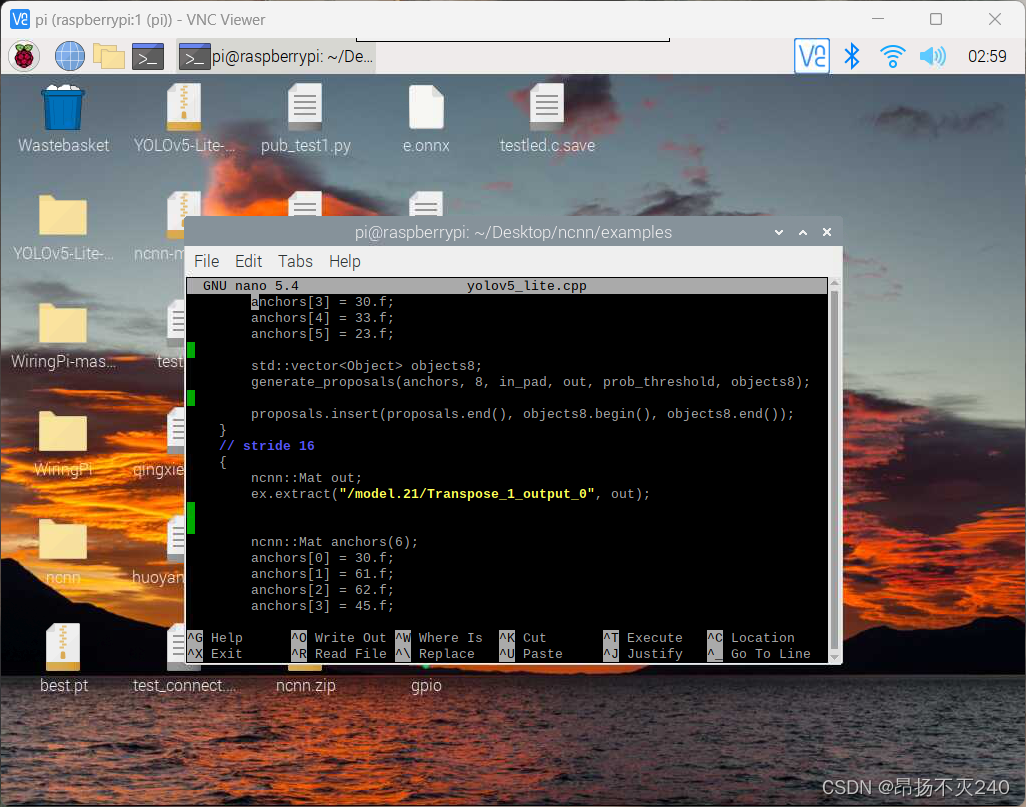

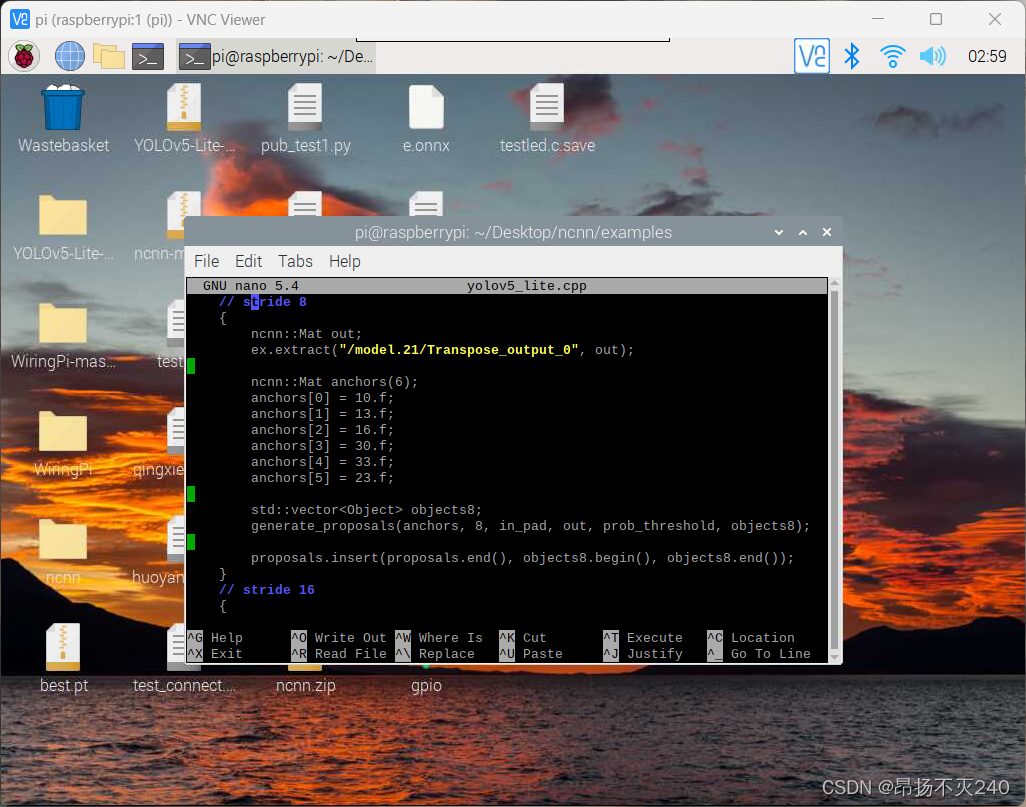

Then find the names of the outputs and change the corresponding places in yolov5_lite.cpp.

Save and rebuild:

```
cd ~/ncnn/build
cmake ..
make
```

After that it runs correctly.

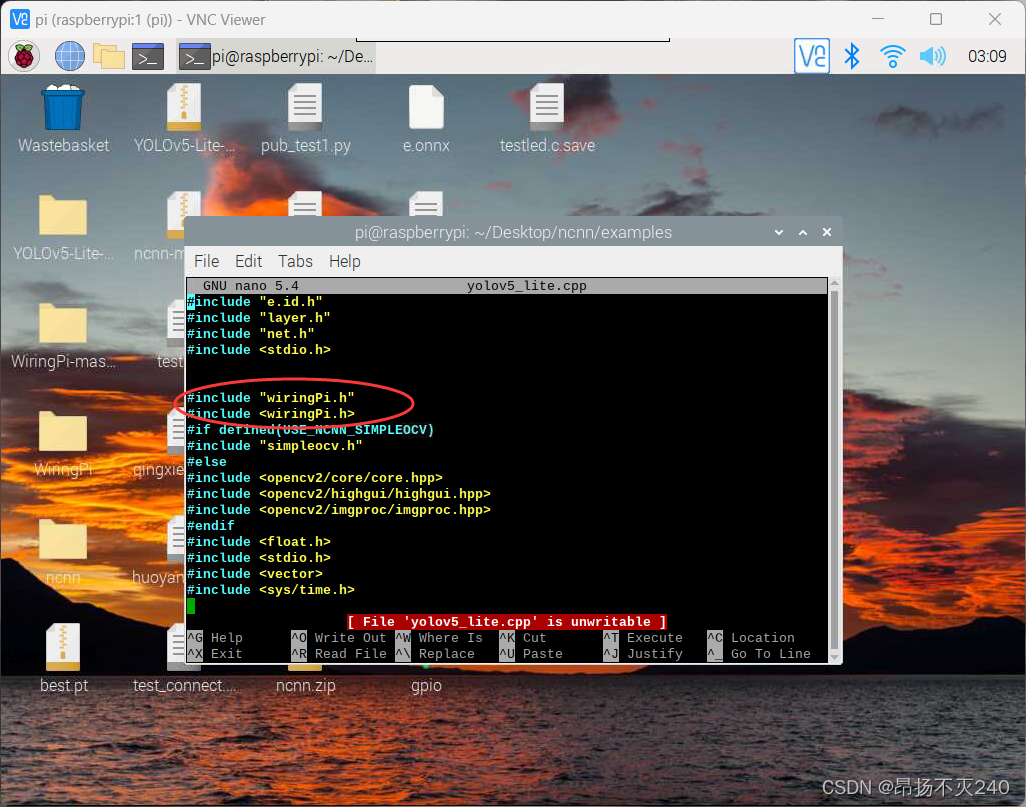

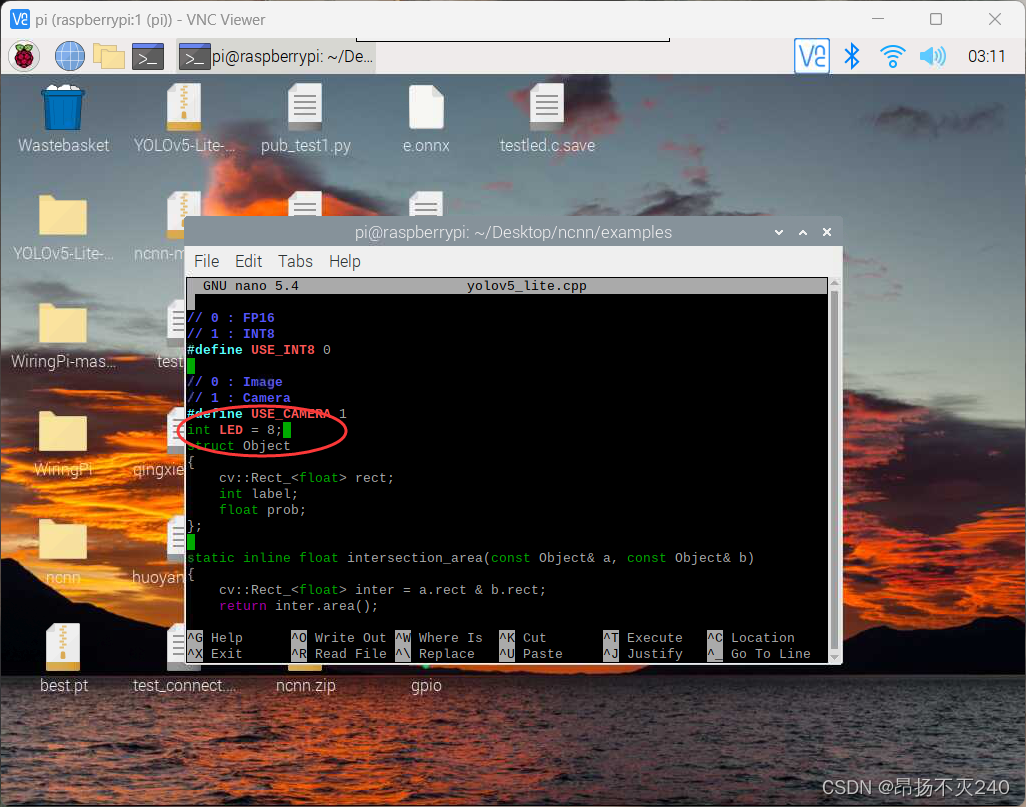
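Besides Netron, the input and output blob names from step c can be read straight from the text `eopt.param`: after the two header lines, each line describes one layer as `type name bottom_count top_count bottom_blobs... top_blobs... key=value...`. The minimal sketch below (the function name `top_blobs_of_type` is hypothetical) lists the output blob names of every layer of a given type, e.g. `Input` for the name passed to `ex.input()`. That the three detection heads come out of `Permute` layers in models exported like this one is an assumption to verify in Netron.

```cpp
#include <istream>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical helper: scan an ncnn text .param stream and return the output
// ("top") blob names of every layer whose type equals `layer_type`.
std::vector<std::string> top_blobs_of_type(std::istream& in, const std::string& layer_type)
{
    std::vector<std::string> names;
    std::string line;
    std::getline(in, line); // magic number 7767517
    std::getline(in, line); // layer_count blob_count

    while (std::getline(in, line)) {
        std::istringstream ls(line);
        std::string type, name;
        int bottom_count = 0, top_count = 0;
        if (!(ls >> type >> name >> bottom_count >> top_count))
            continue; // skip blank or malformed lines

        std::string blob;
        for (int k = 0; k < bottom_count; k++)
            ls >> blob; // skip the input blobs
        for (int k = 0; k < top_count; k++) {
            if (!(ls >> blob))
                break;
            if (type == layer_type)
                names.push_back(blob);
        }
    }
    return names;
}
```

Opening `eopt.param` with `std::ifstream` and printing `top_blobs_of_type(f, "Input")` gives the string for `ex.input()`; repeating with the layer type that feeds each detection head gives candidates for the three `ex.extract()` calls.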

III. Extension: lighting an LED when the Raspberry Pi detects a plastic bottle

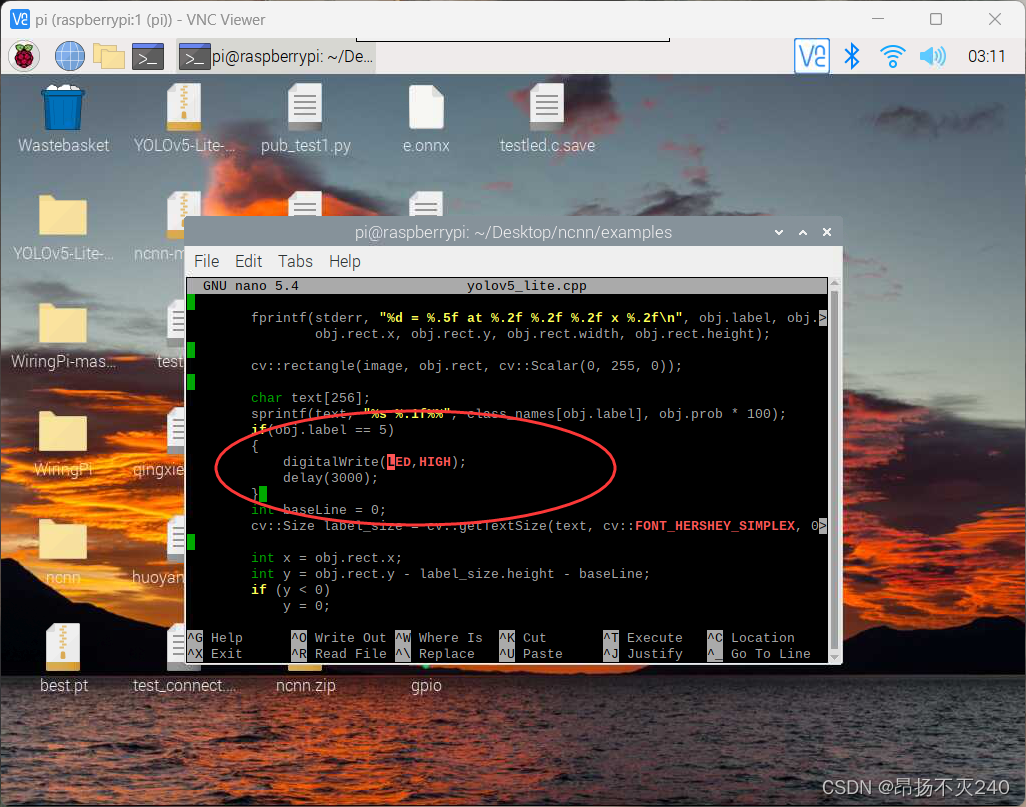

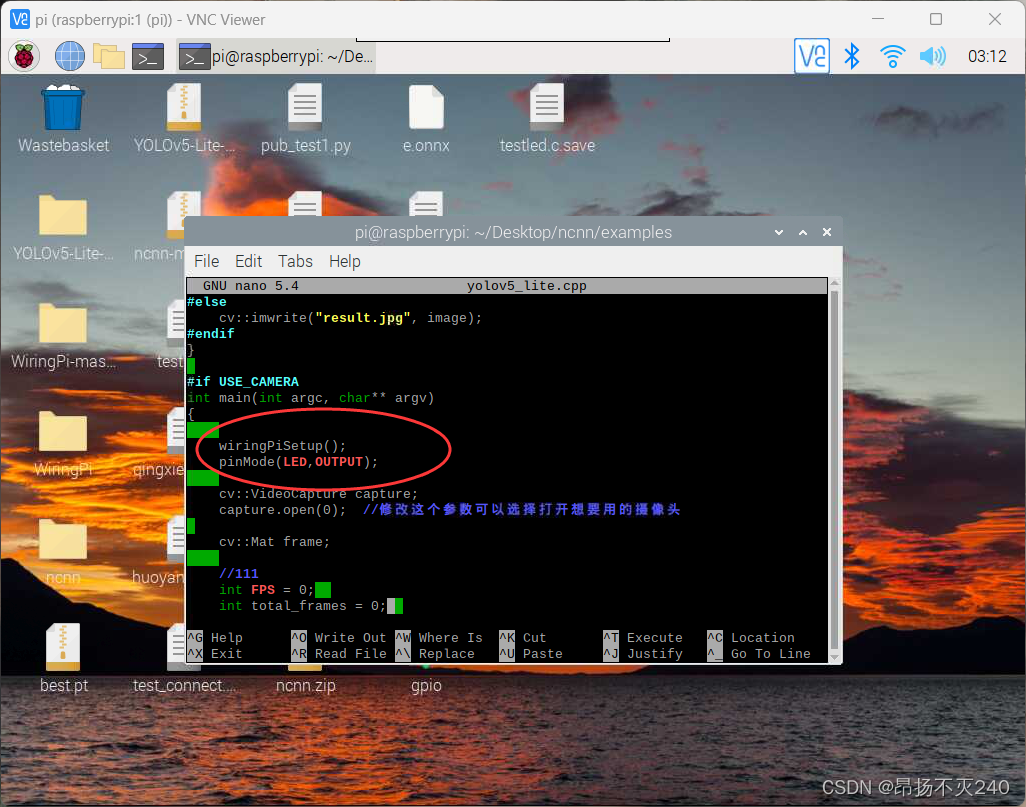

1. Modifying yolov5_lite.cpp

1.1 Add the header file (wiringPi.h).

1.2 Add the code for the LED feature.

1.3 Wiring: connect the LED's positive lead to physical pin 3 (wiringPi pin 8) and its negative lead to physical pin 9 (GND).

Building it as-is fails with the following link errors:

```
/usr/bin/ld: CMakeFiles/yolov5_lite.dir/yolov5_lite.cpp.o: in function `main':
yolov5_lite.cpp:(.text.startup+0x208): undefined reference to `wiringPiSetup'
/usr/bin/ld: yolov5_lite.cpp:(.text.startup+0x21c): undefined reference to `pinMode'
/usr/bin/ld: yolov5_lite.cpp:(.text.startup+0x22c): undefined reference to `digitalWrite'
/usr/bin/ld: yolov5_lite.cpp:(.text.startup+0x1ae8): undefined reference to `digitalWrite'
/usr/bin/ld: yolov5_lite.cpp:(.text.startup+0x1af0): undefined reference to `delay'
collect2: error: ld returned 1 exit status
```

Solution: these errors mean the linker (ld) could not resolve the references to WiringPi library functions, so those symbols remained undefined at link time. The most likely cause is that the WiringPi library was never linked.

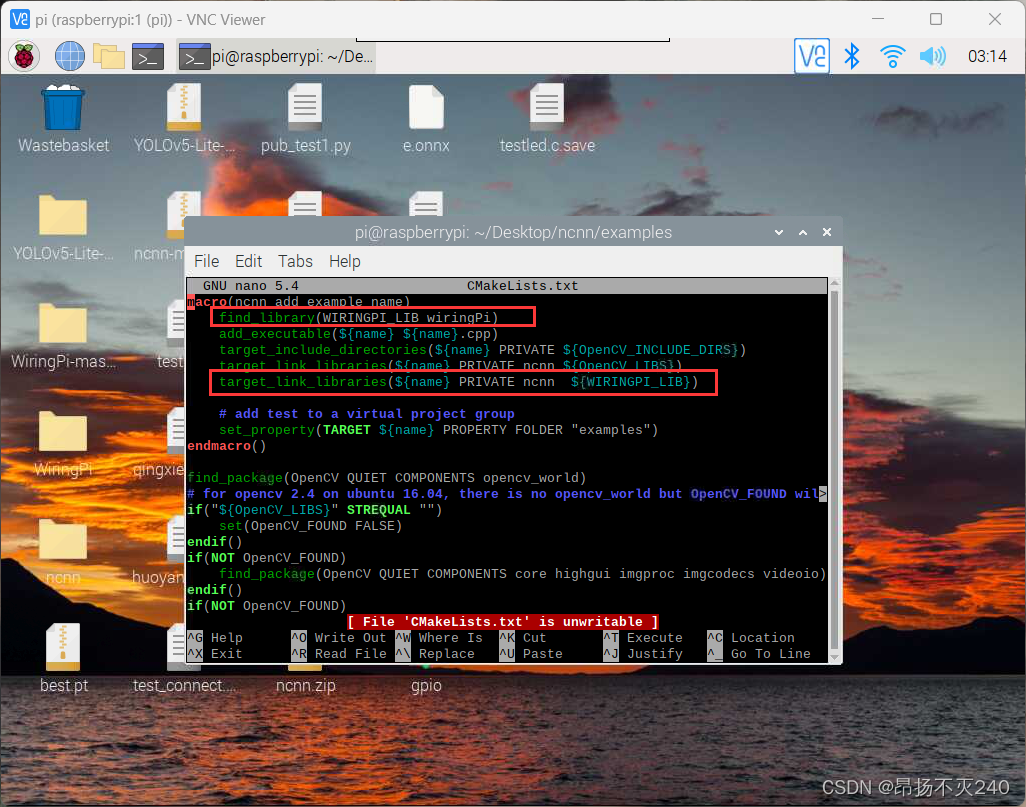

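When building, you need to make sure the WiringPi library is linked. If you build the project with CMake, add the WiringPi library to the link libraries in CMakeLists.txt.

1.4 Download the WiringPi library and extract it.

1.5 Link the WiringPi library: open examples/CMakeLists.txt and add WiringPi to the libraries that yolov5_lite links against.

1.6 Rebuild, and the feature works.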
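Steps 1.1 and 1.2 add the wiringPi header and the LED logic to yolov5_lite.cpp. A minimal sketch of such logic follows; it is not the author's code. It assumes the detections of each frame are available after `detect_yolov5()`; the `Detection` struct (a stand-in for the file's `Object`), the `LedController` class, the target class index and the threshold are hypothetical. To keep it testable off the Pi, the GPIO write is injected as a function.

```cpp
#include <functional>
#include <utility>
#include <vector>

// Stand-in for the Object struct in yolov5_lite.cpp (only the fields used here).
struct Detection {
    int label;
    float prob;
};

// Hypothetical helper: keeps the LED on while the target class is detected
// with at least `min_prob`, and off otherwise. `write_pin(pin, level)` abstracts
// the GPIO call; on the Pi it would wrap wiringPi's digitalWrite().
class LedController {
public:
    LedController(int pin, int target_label, float min_prob,
                  std::function<void(int, int)> write_pin)
        : pin_(pin), target_(target_label), min_prob_(min_prob), write_(std::move(write_pin)) {}

    // Call once per frame. Writes to the pin only when the state changes.
    void update(const std::vector<Detection>& dets)
    {
        bool seen = false;
        for (const Detection& d : dets) {
            if (d.label == target_ && d.prob >= min_prob_) {
                seen = true;
                break;
            }
        }
        if (seen != on_) {
            on_ = seen;
            write_(pin_, on_ ? 1 : 0); // 1 == HIGH, 0 == LOW in wiringPi
        }
    }

    bool is_on() const { return on_; }

private:
    int pin_;
    int target_;
    float min_prob_;
    std::function<void(int, int)> write_;
    bool on_ = false;
};
```

On the Pi, after `wiringPiSetup()` and `pinMode(8, OUTPUT)` at startup, it could be constructed as `LedController led(8, bottle_label, 0.6f, [](int pin, int level) { digitalWrite(pin, level); });` (with `bottle_label` being your plastic-bottle class index) and `led.update(...)` called after each `detect_yolov5()`. Writing only on state changes avoids a GPIO call on every frame.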
