1. Requirements

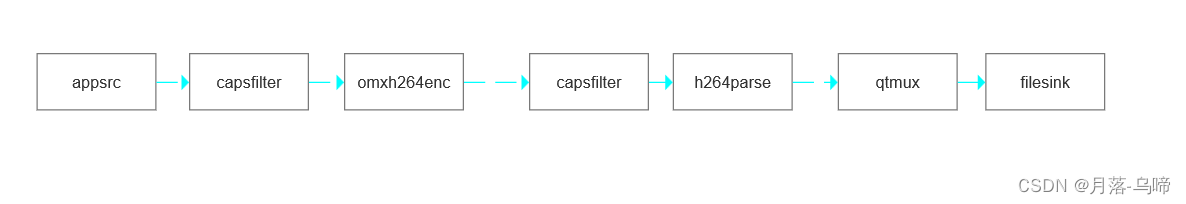

Capture images from an industrial camera, encode them as H.264, and save the result to an H.264 file.

2.

3. Code

#pragma once

#include <opencv2/core/core.hpp>

#include <memory>

#include <string>

#include <utility>

#include <gst/gst.h>

namespace Transmit

{

class VideoEncode : public std::enable_shared_from_this<VideoEncode>

{

public:

using Ptr = std::shared_ptr<VideoEncode>;

~VideoEncode();

/**

* @brief Open the video; url is the file path or network address of the video

*/

int Open(const std::string url);

/**

* @brief Set the video frame rate

* @param fps = framerate.first/framerate.second

*/

void SetFramerate(std::pair<int, int> framerate)

{

framerate_ = framerate;

}

/**

* @brief Set the video resolution

*/

void SetSize(int width, int height)

{

width_ = width;

height_ = height;

}

/**

* @brief Set the video bitrate

* @param bitrate in MiB/s; Open() multiplies it by 1024 * 1024 * 8 to obtain bit/s

*/

void SetBitrate(int bitrate)

{

bitrate_ = bitrate;

}

/**

* @brief Write a video frame

*/

int Write(const cv::Mat &frame);

private:

int PushData2Pipeline(const cv::Mat &frame);

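// Initialized to nullptr so the destructor is safe even if Open() was never called

GstElement *pipeline_ = nullptr;

GstElement *appSrc_ = nullptr;

GstElement *queue_ = nullptr;

GstElement *videoConvert_ = nullptr;

GstElement *encoder_ = nullptr;

GstElement *capsFilter1_ = nullptr;

GstElement *capsFilter2_ = nullptr;

GstElement *mux_ = nullptr;

GstElement *sink_ = nullptr;

GstElement *parser_ = nullptr;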

int width_ = 0;

int height_ = 0;

int bitrate_ = 2;

std::pair<int, int> framerate_{30, 1};

GstClockTime timestamp{0};

};

}
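The header leaves two things implicit: how large a frame passed to Write() should be, and what unit SetBitrate() expects. Since Open() hard-codes the source format to I420 and multiplies bitrate_ by 1024 * 1024 * 8, both can be sketched with plain arithmetic. The helper names below (I420FrameBytes, MiBPerSecToBitsPerSec) are hypothetical and not part of the original class; this is a minimal sketch assuming even frame dimensions.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical helper: bytes in one I420 frame. The Y plane is full
// resolution; the U and V planes are subsampled by two in both directions,
// so the total is width * height * 3 / 2. Assumes even width and height.
inline std::size_t I420FrameBytes(int width, int height)
{
    assert(width > 0 && height > 0 && width % 2 == 0 && height % 2 == 0);
    return static_cast<std::size_t>(width) * static_cast<std::size_t>(height) * 3 / 2;
}

// Hypothetical helper: the same MiB/s -> bit/s conversion that Open()
// applies to bitrate_, done in 64 bits so large values do not overflow int.
inline std::int64_t MiBPerSecToBitsPerSec(int mib_per_sec)
{
    assert(mib_per_sec >= 0);
    return static_cast<std::int64_t>(mib_per_sec) * 1024 * 1024 * 8;
}
```

With these, a 1920x1080 I420 frame is 3110400 bytes, and the default bitrate_ of 2 corresponds to 16777216 bit/s. A cv::Mat passed to Write() could be compared against I420FrameBytes(width, height) before pushing; with OpenCV, cv::cvtColor with cv::COLOR_BGR2YUV_I420 is one way to obtain a buffer of that layout from a BGR image.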

#include <transmit/video_encode.h>

#include <cstring>

#include <iostream>

#include <stdio.h>

#include <unistd.h>

using namespace std;

namespace Transmit

{

VideoEncode::~VideoEncode()

{

if (appSrc_)

{

GstFlowReturn retflow = GST_FLOW_OK;

g_signal_emit_by_name(appSrc_, "end-of-stream", &retflow);

std::cout << "EOS sent. Writing the last few frames..." << std::endl;

g_usleep(4000000); // wait 4 s so the remaining data can be written

std::cout << "Writing Done!" << std::endl;

if (retflow != GST_FLOW_OK)

{

std::cerr << "We got some error when sending eos!" << std::endl;

}

}

if (pipeline_)

{

gst_element_set_state(pipeline_, GST_STATE_NULL);

gst_object_unref(pipeline_);

pipeline_ = nullptr;

}

}

int VideoEncode::Open(const std::string url)

{

pipeline_ = gst_pipeline_new("pipeline");

appSrc_ = gst_element_factory_make("appsrc", "AppSrc");

capsFilter1_ = gst_element_factory_make("capsfilter", "Capsfilter");

capsFilter2_ = gst_element_factory_make("capsfilter", "Capsfilter2");

encoder_ = gst_element_factory_make("omxh264enc", "Omxh264enc");

parser_ = gst_element_factory_make("h264parse", "H264parse");

mux_ = gst_element_factory_make("qtmux", "muxer");

sink_ = gst_element_factory_make("filesink", "OutputFile");

if (!pipeline_ || !appSrc_ || !encoder_ || !parser_ ||!capsFilter2_||!capsFilter1_ || !sink_||!mux_)

{

std::cerr << "Not all elements could be created" << std::endl;

return -1;

}

// Set the source format

std::string srcFmt = "I420";

//Set up appsrc

g_object_set (appSrc_, "format", GST_FORMAT_TIME, NULL);

// g_object_set takes its own reference to the caps, so release ours afterwards

GstCaps *rawCaps = gst_caps_new_simple("video/x-raw",

"format", G_TYPE_STRING, srcFmt.c_str(),

"width", G_TYPE_INT, width_,

"height", G_TYPE_INT, height_,

"framerate", GST_TYPE_FRACTION, framerate_.first, framerate_.second, nullptr);

g_object_set(G_OBJECT(capsFilter1_), "caps", rawCaps, NULL);

gst_caps_unref(rawCaps);

GstCaps *h264Caps = gst_caps_new_simple("video/x-h264",

"stream-format", G_TYPE_STRING, "byte-stream", nullptr);

g_object_set(G_OBJECT(capsFilter2_), "caps", h264Caps, nullptr);

gst_caps_unref(h264Caps);

// Set up omxh264enc

g_object_set(G_OBJECT(encoder_), "bitrate", bitrate_* 1024 * 1024 * 8, NULL);

// Set up filesink

g_object_set(G_OBJECT(sink_), "location", url.c_str(), NULL);

// Add elements to the pipeline

gst_bin_add_many(GST_BIN(pipeline_), appSrc_, capsFilter1_,encoder_, capsFilter2_, parser_,mux_,sink_, nullptr);

// Link elements

if (gst_element_link_many(appSrc_, capsFilter1_,encoder_,capsFilter2_ ,parser_,mux_,sink_, nullptr) != TRUE)

{

std::cerr << "appSrc, capsFilter1, encoder, capsFilter2, parser, mux and sink could not be linked" << std::endl;

return -1;

}

// Start playing

auto ret = gst_element_set_state(pipeline_, GST_STATE_PLAYING);

if (ret == GST_STATE_CHANGE_FAILURE)

{

std::cerr << "Unable to set the pipeline to the playing state" << std::endl;

return -1;

}

return 0;

}

int VideoEncode::PushData2Pipeline(const cv::Mat &frame)

{

GstBuffer *buffer;

GstFlowReturn ret;

GstMapInfo map;

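// Create a new buffer sized for the frame (the frame data must be continuous)

const gsize size = frame.total() * frame.elemSize();

buffer = gst_buffer_new_and_alloc(size);

if (!gst_buffer_map(buffer, &map, GST_MAP_WRITE))

{

gst_buffer_unref(buffer);

return -1;

}

memcpy(map.data, frame.data, size);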

// debug

// std::cout << "wrote size:" << size << std::endl;

// The timestamp and the duration of each frame must be set

gst_buffer_unmap(buffer, &map);

GST_BUFFER_PTS(buffer) = timestamp; // static_cast<uint64>(timestamp * GST_SECOND);

GST_BUFFER_DTS(buffer) = timestamp;

// duration = GST_SECOND * second / first, which also handles rates such as 30000/1001

GST_BUFFER_DURATION(buffer) = gst_util_uint64_scale_int(GST_SECOND, framerate_.second, framerate_.first);

timestamp += GST_BUFFER_DURATION(buffer);

// std::cout << "send data into buffer" << std::endl;

// std::cout << "GST_BUFFER_DURATION(buffer):" << GST_BUFFER_DURATION(buffer) << std::endl;

// std::cout << "timestamp:" << static_cast<uint64>(_timestamp * GST_SECOND) << std::endl;

// Push the buffer into the appsrc

g_signal_emit_by_name(appSrc_, "push-buffer", buffer, &ret);

// Free the buffer now that we are done with it

gst_buffer_unref(buffer);

if (ret != GST_FLOW_OK)

{

// We got some error, stop sending data

std::cout << "We got some error, stop sending data" << std::endl;

return -1;

}

return 0;

}

int VideoEncode::Write(const cv::Mat &frame)

{

return PushData2Pipeline(frame);

}

}

Reference: [gstreamer opencv::Mat] Using GStreamer to read each frame of a video as a cv::Mat, jjungle666's blog (CSDN)
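PushData2Pipeline() stamps every buffer with a PTS and a duration derived from the frame rate fraction. The arithmetic can be sketched without GStreamer, which makes it easy to check: the duration of one frame is one second times the denominator divided by the numerator, with the multiplication done first. The names below (kNsPerSecond, FrameDurationNs, FramePtsNs) are hypothetical and chosen for illustration; kNsPerSecond has the same value as GST_SECOND. Overflow for extremely long streams is not handled in this sketch.

```cpp
#include <cassert>
#include <cstdint>

// Nanoseconds per second (same value as GStreamer's GST_SECOND).
const std::uint64_t kNsPerSecond = 1000000000ULL;

// Duration of one frame in nanoseconds for a rate of fps_num / fps_den.
// Multiplying before dividing keeps fractional rates such as 30000/1001
// accurate up to the final integer truncation.
inline std::uint64_t FrameDurationNs(int fps_num, int fps_den)
{
    assert(fps_num > 0 && fps_den > 0);
    return kNsPerSecond * static_cast<std::uint64_t>(fps_den) /
           static_cast<std::uint64_t>(fps_num);
}

// Timestamp of frame `index`, computed directly from the index so that the
// truncation error of a single frame does not accumulate over time.
inline std::uint64_t FramePtsNs(std::uint64_t index, int fps_num, int fps_den)
{
    assert(fps_num > 0 && fps_den > 0);
    return index * kNsPerSecond * static_cast<std::uint64_t>(fps_den) /
           static_cast<std::uint64_t>(fps_num);
}
```

At 30/1 the per-frame duration truncates to 33333333 ns, so adding it 30 times gives 999999990 ns, while FramePtsNs(30, 30, 1) is exactly 1000000000 ns. Whether that 10 ns per second drift matters depends on the recording length; deriving the PTS from a frame counter is one possible way to avoid it.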
