I. Definition

1. Training a model with trl + LoRA + transformers

2. Deployment and prediction

3. Model merging

4. vLLM deployment

5. Code walkthrough

6. trl trainer parameter notes

II. Training

1. Training a model with trl + LoRA + transformers

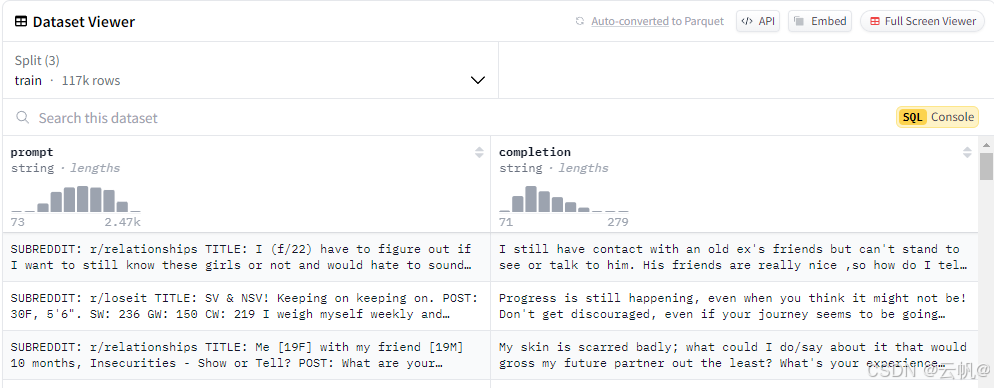

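Training data format: each record must contain a prompt field; an answer field is not required.

For example:

Note: the data processing is encapsulated in the compute_loss method of the GRPO trainer (a trainer, not an optimizer).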
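A minimal sketch of the two prompt shapes such a record can take: a plain string, or a list of chat messages. The helper `looks_conversational` is hypothetical; it only mimics the kind of check the trainer performs and is not the library's own function.

```python
# Two illustrative records; only the "prompt" field is required for GRPO.
standard_example = {"prompt": "What is 2 + 3?"}

conversational_example = {
    "prompt": [
        {"role": "system", "content": "Answer briefly."},
        {"role": "user", "content": "What is 2 + 3?"},
    ],
    # Extra columns are optional; they are forwarded to custom reward functions.
    "answer": "5",
}


def looks_conversational(example: dict) -> bool:
    """Hypothetical check: True if the prompt is a list of role/content messages."""
    prompt = example.get("prompt")
    return (
        isinstance(prompt, list)
        and len(prompt) > 0
        and all(isinstance(m, dict) and "role" in m and "content" in m for m in prompt)
    )


print(looks_conversational(standard_example))        # False
print(looks_conversational(conversational_example))  # True
```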

Custom input:

# Load and prep dataset
from datasets import load_dataset

SYSTEM_PROMPT = """
Respond in the following format:
<reasoning>
...
</reasoning>
<answer>
...
</answer>
"""

XML_COT_FORMAT = """\
<reasoning>
{reasoning}
</reasoning>
<answer>
{answer}
</answer>
"""

def extract_xml_answer(text: str) -> str:
    # Keep only the text between the last <answer> tag and its closing tag
    answer = text.split("<answer>")[-1]
    answer = answer.split("</answer>")[0]
    return answer.strip()

def extract_hash_answer(text: str):
    # GSM8K reference solutions put the final answer after "####"
    if "####" not in text:
        return None
    return text.split("####")[1].strip()

# uncomment middle messages for 1-shot prompting
def get_gsm8k_questions(split = "train"):
    data = load_dataset('E://openaigsm8k/main')[split].select(range(200)) # type: ignore
    # Custom input format: a chat-style prompt plus the extracted reference answer
    data = data.map(lambda x: { # type: ignore
        'prompt': [
            {'role': 'system', 'content': SYSTEM_PROMPT},
            {'role': 'user', 'content': x['question']}
        ],
        'answer': extract_hash_answer(x['answer'])
    }) # type: ignore
    return data # type: ignore

dataset = get_gsm8k_questions()
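To see what the two extraction helpers above return, here is a small self-contained check. The helpers are copied unchanged from the code above; the reference solution and the completion are made-up strings, not real dataset rows or model output.

```python
def extract_xml_answer(text: str) -> str:
    answer = text.split("<answer>")[-1]
    answer = answer.split("</answer>")[0]
    return answer.strip()


def extract_hash_answer(text: str):
    if "####" not in text:
        return None
    return text.split("####")[1].strip()


# Made-up GSM8K-style reference solution: the final answer follows "####".
reference = "Tom has 3 apples and buys 4 more. 3 + 4 = 7\n#### 7"
# Made-up completion in the format requested by SYSTEM_PROMPT.
completion = "<reasoning>\n3 + 4 = 7\n</reasoning>\n<answer>\n7\n</answer>\n"

gold = extract_hash_answer(reference)
predicted = extract_xml_answer(completion)
print(gold, predicted, gold == predicted)  # 7 7 True

# Caveat: without any <answer> tags the whole text comes back unchanged.
print(extract_xml_answer("just text"))     # just text
```

Because untagged text is returned as-is, a reward built on `extract_xml_answer` alone does not verify that the model followed the format; that usually needs a separate format check.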

Training:

import argparse
from dataclasses import dataclass, field
from typing import Optional

from datasets import load_dataset
from transformers import AutoModelForCausalLM, AutoModelForSequenceClassification, AutoTokenizer
from trl import GRPOConfig, GRPOTrainer, ModelConfig, ScriptArguments, TrlParser, get_peft_config


@dataclass
class GRPOScriptArguments(ScriptArguments):
    """
    Script arguments for the GRPO training script.

    Args:
        reward_model_name_or_path (`str` or `None`):
            Reward model id of a pretrained model hosted inside a model repo on huggingface.co or local path to a
            directory containing model weights saved using [`~transformers.PreTrainedModel.save_pretrained`].
    """

    reward_model_name_or_path: Optional[str] = field(
        default=None,
        metadata={
            "help": "Reward model id of a pretrained model hosted inside a model repo on huggingface.co or "
            "local path to a directory containing model weights saved using `PreTrainedModel.save_pretrained`."
        },
    )


def main(script_args, training_args, model_args):
    # Load a pretrained model
    model = AutoModelForCausalLM.from_pretrained(
        model_args.model_name_or_path, trust_remote_code=model_args.trust_remote_code
    )
    tokenizer = AutoTokenizer.from_pretrained(
        model_args.model_name_or_path, trust_remote_code=model_args.trust_remote_code
    )

    # Toy reward: completions whose length is closer to 20 score higher
    def reward_len(completions, **kwargs):
        return [-abs(20 - len(completion)) for completion in completions]

    # Load the dataset
    dataset = load_dataset(script_args.dataset_name, name=script_args.dataset_config)

    # Initialize the GRPO trainer
    trainer = GRPOTrainer(
        model=model,
        reward_funcs=reward_len,
        args=training_args,
        train_dataset=dataset[script_args.dataset_train_split].select(range(100)),
        eval_dataset=dataset[script_args.dataset_test_split].select(range(100)) if training_args.eval_strategy != "no" else None,
        processing_class=tokenizer,
        peft_config=get_peft_config(model_args),
    )

    # Train the model
    trainer.train()

    # Save the model (pushing to the Hub is disabled below)
    trainer.save_model(training_args.output_dir)
    # if training_args.push_to_hub:
    #     trainer.push_to_hub(dataset_name=script_args.dataset_name)


def make_parser(subparsers: argparse._SubParsersAction = None):
    dataclass_types = (GRPOScriptArguments, GRPOConfig, ModelConfig)
    if subparsers is not None:
        parser = subparsers.add_parser("grpo", help="Run the GRPO training script", dataclass_types=dataclass_types)
    else:
        parser = TrlParser(dataclass_types)
    return parser


if __name__ == "__main__":
    parser = make_parser()
    script_args, training_args, model_args = parser.parse_args_and_config()
    main(script_args, training_args, model_args)

'''
CUDA_VISIBLE_DEVICES=0 python test.py --model_name_or_path /home/grpo_test/Qwen2.5-0.5B-Instruct --dataset_name /home/grpo_test/trl-libtldr \
--learning_rate 2.0e-4 --num_train_epochs 1 --per_device_train_batch_size 2 \
--gradient_accumulation_steps 2 --eval_strategy no --logging_steps 2 --use_peft 1 --lora_r 32 --lora_alpha 16 --output_dir Qwen2-0.5B-GRPO
'''
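The script's `reward_len` only scores length. For the GSM8K-style dataset prepared earlier, rewards would more naturally score the output format and the final answer. The following is a sketch under stated assumptions, not the author's method: the function names are hypothetical, the chat-style completion shape (`[{"role": "assistant", "content": ...}]`) is assumed, and `answer` is assumed to arrive as a keyword argument because it is an extra dataset column.

```python
import re

ANSWER_PATTERN = re.compile(r"<reasoning>.*?</reasoning>\s*<answer>.*?</answer>", re.DOTALL)


def completion_text(completion) -> str:
    """Return the text of a completion in either plain or chat format."""
    if isinstance(completion, str):
        return completion
    # Assumed chat shape: [{"role": "assistant", "content": "..."}]
    return completion[0]["content"]


def format_reward(completions, **kwargs) -> list[float]:
    """Hypothetical reward: 1.0 if the completion follows the XML format, else 0.0."""
    return [1.0 if ANSWER_PATTERN.search(completion_text(c)) else 0.0 for c in completions]


def correctness_reward(completions, answer, **kwargs) -> list[float]:
    """Hypothetical reward: 2.0 if the text inside <answer> equals the reference answer."""
    rewards = []
    for completion, gold in zip(completions, answer):
        text = completion_text(completion)
        predicted = text.split("<answer>")[-1].split("</answer>")[0].strip()
        rewards.append(2.0 if predicted == gold else 0.0)
    return rewards


sample_completions = [
    "<reasoning>3 + 4 = 7</reasoning>\n<answer>7</answer>",
    [{"role": "assistant", "content": "The answer is 7."}],
]
print(format_reward(sample_completions))                          # [1.0, 0.0]
print(correctness_reward(sample_completions, answer=["7", "7"]))  # [2.0, 0.0]
```

Functions like these would be passed as a list, for example `reward_funcs=[format_reward, correctness_reward]`; the trainer code discussed later sums the rewards of all functions per completion.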

# Training can be accelerated with vLLM

2. Deployment and prediction

from peft import AutoPeftModelForCausalLM
from transformers import AutoTokenizer

model = AutoPeftModelForCausalLM.from_pretrained(
    "/home/grpo_test/qwen2-grpo-lora", # YOUR MODEL YOU USED FOR TRAINING
    load_in_4bit = True,
)
tokenizer = AutoTokenizer.from_pretrained("/home/grpo_test/qwen2-grpo-lora")

SYSTEM_PROMPT = """
Respond in the following format:
<reasoning>
...
</reasoning>
<answer>
...
</answer>
"""

# Note: only the user message is sent here; SYSTEM_PROMPT is defined but not included
text = tokenizer.apply_chat_template([
    {"role" : "user", "content" : "Calculate pi."},
], tokenize = False, add_generation_prompt = True)

inputs = tokenizer(
    [
        text,
    ], return_tensors = "pt").to("cuda")

from transformers import TextStreamer
text_streamer = TextStreamer(tokenizer)

outputs = model.generate(**inputs, max_new_tokens = 128)
print(outputs)

# Drop the prompt tokens so that only the newly generated tokens are decoded
outputs = outputs[:, inputs['input_ids'].shape[1]:]
print(tokenizer.decode(outputs[0]))

'''
As an artificial intelligence language model, I do not calculate or execute numerical operations. However, I can provide you with the mathematical formula to calculate pi. The approximate value of pi is 3.14159, and the full mathematical formula is:

π = ∑ (2 × sin(1/n) × n^2) / (n × (n + 1) × (2n + 1))

Please note that this is an approximate value, and the accuracy can vary depending on the precision required.
'''

3. Model merging

from peft import AutoPeftModelForCausalLM
from transformers import AutoTokenizer

lora_model = AutoPeftModelForCausalLM.from_pretrained(
    "/home/grpo_test/qwen2-grpo-lora", # YOUR MODEL YOU USED FOR TRAINING
    torch_dtype="auto",
    device_map="cuda:0"
)

# Fold the LoRA weights into the base model and drop the adapter wrappers
model2 = lora_model.merge_and_unload()
print(model2)
model2.save_pretrained("merged-model")

# Save the tokenizer next to the merged weights so that "merged-model" can be loaded on its own later
tokenizer = AutoTokenizer.from_pretrained("/home/grpo_test/qwen2-grpo-lora")
tokenizer.save_pretrained("merged-model")

from transformers import AutoModelForCausalLM, AutoTokenizer
import torch

model_name = "merged-model"
model1 = AutoModelForCausalLM.from_pretrained(
    model_name,
    torch_dtype="auto",
    device_map="cuda:0"
)
print(model1)

# Verify that the reloaded model matches the in-memory merged model, parameter by parameter
model1_dict = dict()
model2_dict = dict()
for k, v in model1.named_parameters():
    model1_dict[k] = v
for k, v in model2.named_parameters():
    model2_dict[k] = v

keys = model1_dict.keys()
keys2 = model2_dict.keys()
print(set(keys) == set(keys2))

for k in keys:
    print(f'{k}: {torch.allclose(model1_dict[k], model2_dict[k])},')
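What merging does to a single weight matrix can be sketched with the standard LoRA update, W' = W + (alpha / r) * B A. The toy example below uses plain Python lists instead of tensors, and assumes the default scaling alpha / r. The helpers `matmul` and `merge_lora` are hypothetical illustrations, not PEFT functions.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def merge_lora(weight, lora_a, lora_b, r, alpha):
    """Return weight + (alpha / r) * (lora_b @ lora_a)."""
    scale = alpha / r
    delta = matmul(lora_b, lora_a)
    return [[w + scale * d for w, d in zip(w_row, d_row)] for w_row, d_row in zip(weight, delta)]


# Toy 2x2 layer with a rank-1 adapter; real layers are far larger.
weight = [[1.0, 0.0], [0.0, 1.0]]
lora_a = [[1.0, 2.0]]    # shape (r, in_features) = (1, 2)
lora_b = [[0.5], [1.0]]  # shape (out_features, r) = (2, 1)

merged = merge_lora(weight, lora_a, lora_b, r=1, alpha=2)
print(merged)  # [[2.0, 2.0], [2.0, 5.0]]
```

With the training command shown earlier (`--lora_r 32 --lora_alpha 16`), the scale under this assumption would be 16 / 32 = 0.5. After merging, the model has the same parameter names and shapes as the base model, which is why the key-set comparison above is expected to print True.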

4. Loading with vLLM

import torch
from transformers import AutoTokenizer
from vllm import LLM, SamplingParams

max_model_len, tp_size = 8000, 1
model_name = "merged-model"
# prompt = [{"role": "user", "content": "Hello"}]

tokenizer = AutoTokenizer.from_pretrained(model_name, trust_remote_code=True)
llm = LLM(
    model=model_name,
    tensor_parallel_size=tp_size,
    max_model_len=max_model_len,
    trust_remote_code=True,
    enforce_eager=True,
    dtype=torch.float16,
    gpu_memory_utilization=0.35,  # cap the fraction of GPU memory vLLM may use
    # If you hit OOM (the original note mentions GLM-4-9B-Chat-1M), consider enabling the parameters below
    # enable_chunked_prefill=True,
    # max_num_batched_tokens=8192
)

SYSTEM_PROMPT = """
Respond in the following format:
<reasoning>
...
</reasoning>
<answer>
...
</answer>
"""

# Custom input: the chat template is applied once here, so `prompt` is already a plain string
prompt = tokenizer.apply_chat_template([
    {"role" : "system", "content" : SYSTEM_PROMPT},
    {"role" : "user", "content" : "How many r's are in strawberry?"},
], tokenize = False, add_generation_prompt = True)

sampling_params = SamplingParams(temperature=0.95, max_tokens = 1000)

# Pass the templated string directly; applying the chat template a second time to a string is an error
outputs = llm.generate(prompts=[prompt], sampling_params=sampling_params)
print(outputs[0].outputs[0].text)

- Code walkthrough

- Data processing

# Data processing: already included in compute_loss.
device = self.accelerator.device
prompts = [x["prompt"] for x in inputs]  # collect the prompt of every example

# maybe_apply_chat_template converts the input into the model's expected format;
# it decides internally whether the chat template needs to be applied
prompts_text = [maybe_apply_chat_template(example, self.processing_class)["prompt"] for example in inputs]

# Tokenize into tensors (left-padded so that generation continues from the end of each prompt)
prompt_inputs = self.processing_class(
    prompts_text, return_tensors="pt", padding=True, padding_side="left", add_special_tokens=False
)

# Move the tensors to the training device (GPU)
prompt_inputs = super()._prepare_inputs(prompt_inputs)

# Keep only the last max_prompt_length tokens of each prompt
if self.max_prompt_length is not None:
    prompt_inputs["input_ids"] = prompt_inputs["input_ids"][:, -self.max_prompt_length :]
    prompt_inputs["attention_mask"] = prompt_inputs["attention_mask"][:, -self.max_prompt_length :]
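The combination of left padding and the `[:, -max_prompt_length:]` slice can be illustrated without a tokenizer. The sketch below uses nested lists in place of tensors; `PAD`, `left_pad`, and `keep_last` are hypothetical stand-ins for the tokenizer's padding and the tensor slice.

```python
PAD = 0  # hypothetical pad token id


def left_pad(batch, pad_id=PAD):
    """Left-pad token id lists to the same length and build attention masks."""
    width = max(len(ids) for ids in batch)
    input_ids = [[pad_id] * (width - len(ids)) + ids for ids in batch]
    attention_mask = [[0] * (width - len(ids)) + [1] * len(ids) for ids in batch]
    return input_ids, attention_mask


def keep_last(rows, max_len):
    """Equivalent of tensor[:, -max_len:] for nested lists."""
    return [row[-max_len:] for row in rows]


input_ids, attention_mask = left_pad([[11, 12, 13, 14, 15], [21, 22]])
print(input_ids)                     # [[11, 12, 13, 14, 15], [0, 0, 0, 21, 22]]
print(keep_last(input_ids, 3))       # [[13, 14, 15], [0, 21, 22]]
print(keep_last(attention_mask, 3))  # [[1, 1, 1], [0, 1, 1]]
```

Because padding sits on the left, the slice removes padding first and then the oldest prompt tokens, so the end of every prompt (where generation starts) is always preserved.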

def maybe_apply_chat_template(
    example: dict[str, list[dict[str, str]]],
    tokenizer: PreTrainedTokenizer,
    tools: Optional[list[Union[dict, Callable]]] = None,
) -> dict[str, str]:
    r"""
    Example:

    ```python
    >>> from transformers import AutoTokenizer
    >>> tokenizer = AutoTokenizer.from_pretrained("microsoft/Phi-3-mini-128k-instruct")
    >>> example = {
    ...     "prompt": [{"role": "user", "content": "What color is the sky?"}],
    ...     "completion": [{"role": "assistant", "content": "It is blue."}]
    ... }
    >>> apply_chat_template(example, tokenizer)
    {'prompt': '<|user|>\nWhat color is the sky?<|end|>\n<|assistant|>\n', 'completion': 'It is blue.<|end|>\n<|endoftext|>'}
    ```
    """
    # Check whether the example is conversational, i.e. [{"role": "user", "content": "What color is the sky?"}]
    if is_conversational(example):
        return apply_chat_template(example, tokenizer, tools)
    else:
        return example

- Loss computation

# Log-probabilities from the reference model
with torch.inference_mode():
    if self.ref_model is not None:
        ref_per_token_logps = get_per_token_logps(self.ref_model, prompt_completion_ids, num_logits_to_keep)
    else:
        # Disable the adapter so that the base model acts as the reference model
        with self.accelerator.unwrap_model(model).disable_adapter():
            ref_per_token_logps = get_per_token_logps(model, prompt_completion_ids, num_logits_to_keep)

# Compute the KL divergence between the model and the reference model
per_token_kl = torch.exp(ref_per_token_logps - per_token_logps) - (ref_per_token_logps - per_token_logps) - 1

# Compute the rewards
prompts = [prompt for prompt in prompts for _ in range(self.num_generations)]
rewards_per_func = torch.zeros(len(prompts), len(self.reward_funcs), device=device)
for i, (reward_func, reward_processing_class) in enumerate(
    zip(self.reward_funcs, self.reward_processing_classes)
):
    if isinstance(reward_func, PreTrainedModel):
        if is_conversational(inputs[0]):
            messages = [{"messages": p + c} for p, c in zip(prompts, completions)]
            texts = [apply_chat_template(x, reward_processing_class)["text"] for x in messages]
        else:
            texts = [p + c for p, c in zip(prompts, completions)]
        reward_inputs = reward_processing_class(
            texts, return_tensors="pt", padding=True, padding_side="right", add_special_tokens=False
        )
        reward_inputs = super()._prepare_inputs(reward_inputs)
        with torch.inference_mode():
            rewards_per_func[:, i] = reward_func(**reward_inputs).logits[:, 0]  # Shape (B*G,)
    else:
        # Repeat all input columns (but "prompt" and "completion") to match the number of generations
        reward_kwargs = {key: [] for key in inputs[0].keys() if key not in ["prompt", "completion"]}
        for key in reward_kwargs:
            for example in inputs:
                # Repeat each value in the column for `num_generations` times
                reward_kwargs[key].extend([example[key]] * self.num_generations)
        output_reward_func = reward_func(prompts=prompts, completions=completions, **reward_kwargs)
        rewards_per_func[:, i] = torch.tensor(output_reward_func, dtype=torch.float32, device=device)

# Sum the rewards from all reward functions
rewards = rewards_per_func.sum(dim=1)

# Compute group-wise rewards: mean and standard deviation within each group
mean_grouped_rewards = rewards.view(-1, self.num_generations).mean(dim=1)
std_grouped_rewards = rewards.view(-1, self.num_generations).std(dim=1)

# Normalize the rewards to compute the advantages
mean_grouped_rewards = mean_grouped_rewards.repeat_interleave(self.num_generations, dim=0)
std_grouped_rewards = std_grouped_rewards.repeat_interleave(self.num_generations, dim=0)
advantages = (rewards - mean_grouped_rewards) / (std_grouped_rewards + 1e-4)  # standardized advantages

# Compute the final loss
# x - x.detach() allows for preserving gradients from x
per_token_loss = torch.exp(per_token_logps - per_token_logps.detach()) * advantages.unsqueeze(1)
per_token_loss = -(per_token_loss - self.beta * per_token_kl)
loss = ((per_token_loss * completion_mask).sum(dim=1) / completion_mask.sum(dim=1)).mean()
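The group-wise normalization above can be reproduced on a handful of numbers. This sketch uses the standard library instead of torch; `group_advantages` is a hypothetical helper, and the rewards are made up. `statistics.stdev` is the sample standard deviation (divisor n - 1), which matches the default behaviour of `torch.std`.

```python
from statistics import mean, stdev


def group_advantages(rewards, num_generations, eps=1e-4):
    """Normalize rewards within each group of `num_generations` completions."""
    advantages = []
    for start in range(0, len(rewards), num_generations):
        group = rewards[start:start + num_generations]
        mu = mean(group)
        sigma = stdev(group)  # sample std (n - 1), like torch.std's default
        advantages.extend((r - mu) / (sigma + eps) for r in group)
    return advantages


# Two prompts, four completions each (made-up rewards).
rewards = [1.0, 0.0, 0.0, 1.0, 2.0, 2.0, 2.0, 2.0]
adv = group_advantages(rewards, num_generations=4)
print([round(a, 3) for a in adv])
```

In the second group every completion received the same reward, so all four advantages are zero and that prompt contributes no policy-gradient signal for the step; only the KL term remains. The `1e-4` in the denominator is what keeps this case from dividing by zero.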

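# How to read per_token_logps - per_token_logps.detach(): it stands in for the policy ratio, of which only the gradient matters

1. The value of per_token_logps - per_token_logps.detach() is always zero, so torch.exp(...) evaluates to exactly 1 and the forward value of the first loss term is just the advantage. detach() blocks backpropagation through the second copy only, so the gradient still flows through the first per_token_logps and equals the advantage times the gradient of the log-probability. This is the policy ratio pi_theta / pi_theta_old evaluated at pi_theta_old = pi_theta, which is the case when each batch of generations is used for a single update, as in this code.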
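The effect of the detach trick can be written out as a short derivation. The notation is introduced here for illustration and is not from the original post: x denotes `per_token_logps` for one token, sg(·) denotes stop-gradient (`detach()`), and A is that completion's advantage.

```latex
\begin{aligned}
x &= \log \pi_\theta(o_t \mid q, o_{<t}), \\
r_t(\theta) &= \exp\bigl(x - \operatorname{sg}(x)\bigr) = e^{0} = 1
  && \text{(forward value)}, \\
\nabla_\theta r_t(\theta) &= \exp\bigl(x - \operatorname{sg}(x)\bigr)\,\nabla_\theta x
  = \nabla_\theta \log \pi_\theta(o_t \mid q, o_{<t})
  && \text{(backward pass)}, \\
\nabla_\theta \bigl(r_t(\theta)\, A\bigr) &= A\,\nabla_\theta \log \pi_\theta(o_t \mid q, o_{<t}).
\end{aligned}
```

So the term contributes the value A to the loss and the usual policy-gradient direction to the gradient, which is what the ratio pi_theta / pi_theta_old gives when the two policies coincide.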
