1. Introduction

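When building a neural network model, you sometimes need to define a custom trainable parameter to optimize the model. Building on code written by others, this post verifies whether such a custom parameter is actually updated during training.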

2. Experimental setup

First, define a linear model y = a*x + b, where a and b are both trainable.

Define the input data as x = np.arange(500).reshape(500,1).astype(np.float32), so x holds the ordered integers 0 through 499.

Define the label data as y = np.arange(500).reshape(500,1).astype(np.float32)+2.5

If training works, a and b should therefore converge to a=1 and b=2.5.
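As a quick cross-check of these target values, independent of any training, an ordinary least-squares fit on the same data can be sketched with NumPy. The use of `np.polyfit` is an addition for illustration and not part of the original experiment, and float64 is assumed here instead of float32 to keep rounding error small.

```python
import numpy as np

# Same data as in the experiment, but in float64 (an assumption made here
# to limit rounding error; the original uses float32).
x = np.arange(500, dtype=np.float64)
y = x + 2.5

# Degree-1 least-squares fit; polyfit returns coefficients highest power first,
# so the slope (a) comes before the intercept (b).
a, b = np.polyfit(x, y, deg=1)

print(a, b)
```

Because the labels are exactly linear in x, the fit should land on the slope and intercept used to generate them, which is the same target the trained layer is expected to approach.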

3. Code

    import os

    # Hide TensorFlow's INFO and WARNING logs; set before importing tensorflow.
    os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

    import numpy as np
    import tensorflow as tf
    from tensorflow.keras.layers import Input, Layer
    from tensorflow.keras.models import Model

    # Inputs: the integers 0..499 as a (500, 1) float32 column.
    x = np.arange(500).reshape(500, 1).astype(np.float32)
    # Labels: y = 1 * x + 2.5, so training should recover a = 1 and b = 2.5.
    y = np.arange(500).reshape(500, 1).astype(np.float32) + 2.5


    class En_train_para(Layer):
        """Computes gamma * inputs + alpha; gamma plays the role of a, alpha of b."""

        def __init__(self, parameter_initializer=tf.zeros_initializer(),
                     parameter_regularizer=None,
                     parameter_constraint=None,
                     **kwargs):
            super().__init__(**kwargs)
            # Both parameters share the same initializer, regularizer and constraint.
            self.gamma_initializer = parameter_initializer
            self.gamma_regularizer = parameter_regularizer
            self.gamma_constraint = parameter_constraint
            self.alpha_initializer = parameter_initializer
            self.alpha_regularizer = parameter_regularizer
            self.alpha_constraint = parameter_constraint

        def build(self, input_shape):
            # add_weight registers each scalar as a weight of the layer
            # (trainable by default).
            self.gamma = self.add_weight(shape=(1,),
                                         initializer=self.gamma_initializer,
                                         name='gamma',
                                         regularizer=self.gamma_regularizer,
                                         constraint=self.gamma_constraint)
            self.alpha = self.add_weight(shape=(1,),
                                         initializer=self.alpha_initializer,
                                         name='alpha',
                                         regularizer=self.alpha_regularizer,
                                         constraint=self.alpha_constraint)
            self.built = True

        def compute_output_shape(self, input_shape):
            # The layer is element-wise, so the shape is unchanged.
            return input_shape

        def call(self, inputs, **kwargs):
            out = self.gamma * inputs + self.alpha
            return out


    # Keep a module-level reference so the weights can be read after training.
    den = En_train_para()


    def net(in_shape=(1,)):
        # The whole model is the input followed by the custom layer.
        input_tensor = Input(in_shape)
        outs = den(input_tensor)
        model = Model(input_tensor, outs)
        return model


    model = net()
    model.compile(optimizer='adam', loss='MSE')
    model.fit(x=x, y=y, epochs=1000)

    print(den.gamma.numpy()[0])  # learned a
    print(den.alpha.numpy()[0])  # learned b
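One way to check, before running any training, that weights created with `add_weight` are registered as trainable is to list the layer's `trainable_weights` and ask `tf.GradientTape` for their gradients. The sketch below uses a stripped-down, hypothetical `ScaleShift` layer (not the author's class) with the same gamma * inputs + alpha computation, and assumes TensorFlow 2.x with eager execution.

```python
import numpy as np
import tensorflow as tf


class ScaleShift(tf.keras.layers.Layer):
    """Hypothetical minimal layer for illustration: gamma * inputs + alpha."""

    def build(self, input_shape):
        # Both scalars start at zero, as in the experiment.
        self.gamma = self.add_weight(shape=(1,), initializer='zeros', name='gamma')
        self.alpha = self.add_weight(shape=(1,), initializer='zeros', name='alpha')

    def call(self, inputs):
        return self.gamma * inputs + self.alpha


layer = ScaleShift()
x = tf.constant(np.arange(500, dtype=np.float32).reshape(500, 1))
y = x + 2.5

# Record the forward pass so gradients of the MSE can be computed.
with tf.GradientTape() as tape:
    loss = tf.reduce_mean(tf.square(layer(x) - y))

weights = layer.trainable_weights
grads = tape.gradient(loss, weights)

print(len(weights))                     # number of trainable weights
print([g is not None for g in grads])   # whether each weight receives a gradient
```

A weight that shows up in `trainable_weights` and receives a non-`None` gradient is one the optimizer can update, which is the property the full experiment confirms by training.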

4. Results

At initialization, a=0 and b=0, since both weights use the zeros initializer.

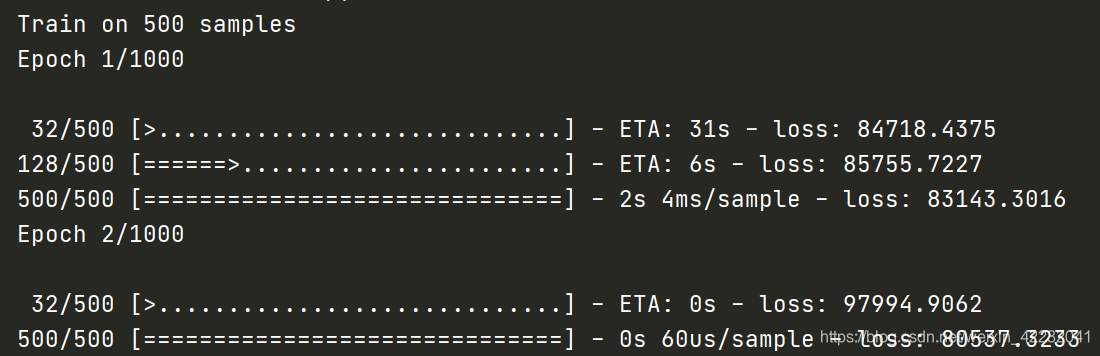

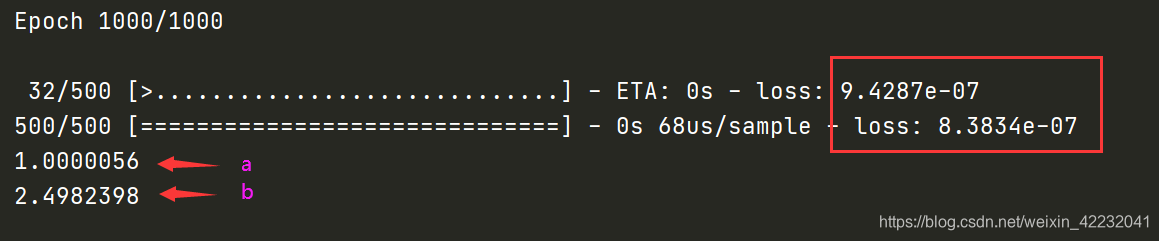

The loss values show that over 1000 epochs of training, the loss falls from 84718 to 9.4e-07.

The final values are a=1.0000056 and b=2.4982398.
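As a rough consistency check on these numbers, the mean squared error implied by the reported final a and b can be recomputed directly with NumPy. This is an added sketch, not part of the original run. It evaluates the loss in float64 over the full dataset, whereas Keras logs a running average over the batches of the last epoch, so only the order of magnitude should be expected to agree with the logged loss.

```python
import numpy as np

# Same inputs and labels as in the experiment, in float64 (an assumption
# made here to avoid float32 rounding in the check itself).
x = np.arange(500, dtype=np.float64)
y = x + 2.5

# Final parameter values as reported in the results.
a, b = 1.0000056, 2.4982398

# Mean squared error of the fitted line over all 500 samples.
mse = np.mean((a * x + b - y) ** 2)
print(mse)
```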

5. Conclusion

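So defining custom trainable parameters with the method above works: both values were learned to within about 0.002 of their targets.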
