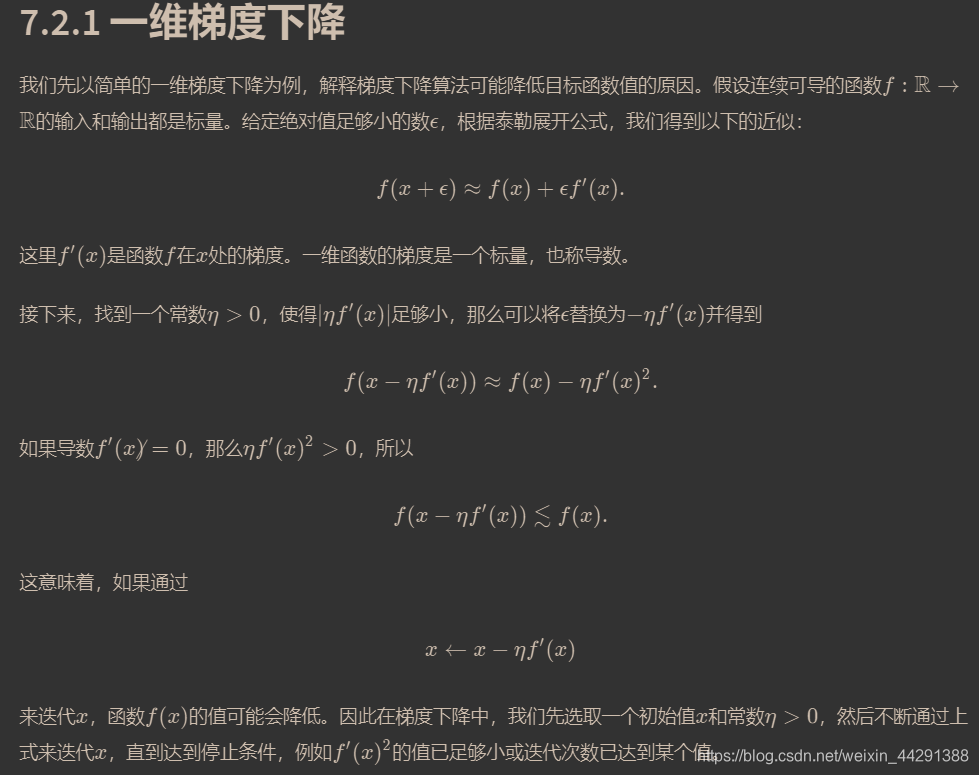

参考梯度下降和随机梯度下降

以f(x) = x2为例

import matplotlib.pyplot as plt

import numpy as np

import torch

import math

def gd(eta,iter):

x = 10

results = []

for i in range(iter):

x -= eta * 2 * x # f(x) = x * x的导数为f'(x) = 2 * x

results.append(x)

return results

res = gd(0.2,iter = 10)

print(res)

# 绘制出自变量x的迭代轨迹

def show_trace(res):

n = max(abs(min(res)), abs(max(res)), 10)

f_line = np.arange(-n, n, 0.1)

plt.plot(f_line, [x * x for x in f_line])

plt.plot(res, [x * x for x in res], '-o')

plt.xlabel('x')

plt.ylabel('f(x)')

plt.figure(1)

show_trace(res)

# 学习率

res = gd(0.05,10) #学习率过小迭代10次不能达到最优解

plt.figure(2)

show_trace(res)

res = gd(0.05,100) #迭代100次达到最优解,100是我自己随意设的,只是100次肯定与最优解误差不大

plt.figure(3)

print(res,'\n')

show_trace(res)

res = gd(1.1,10) #学习率过大

plt.figure(4)

print(res)

show_trace(res)

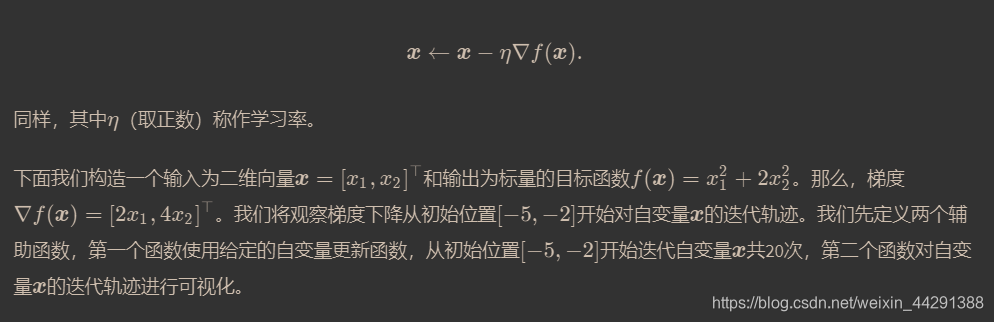

# 多维梯度下降------------------------------------------------------

def train_2d(trainer):

x1, x2, s1, s2 = -5, -2, 0, 0 # s1和s2是自变量状态,本章后续几节会使用

results = [(x1, x2)]

for i in range(20):

x1, x2, s1, s2 = trainer(x1, x2, s1, s2)

results.append((x1, x2))

print('epoch %d, x1 %f, x2 %f' % (i + 1, x1, x2))

return results

def show_trace_2d(f, results):

plt.plot(*zip(*results), '-o', color='#ff7f0e')

x1, x2 = np.meshgrid(np.arange(-5.5, 1.0, 0.1), np.arange(-3.0, 1.0, 0.1))

plt.contour(x1, x2, f(x1, x2), colors='#1f77b4')

plt.xlabel('x1')

plt.ylabel('x2')

def f_2d(x1, x2): # 目标函数

return x1 ** 2 + 2 * x2 ** 2

def gd_2d(x1, x2, s1, s2):

return (x1 - eta * 2 * x1, x2 - eta * 4 * x2, 0, 0)

eta = 0.1

plt.subplot(3,2,5)

show_trace_2d(f_2d, train_2d(gd_2d))

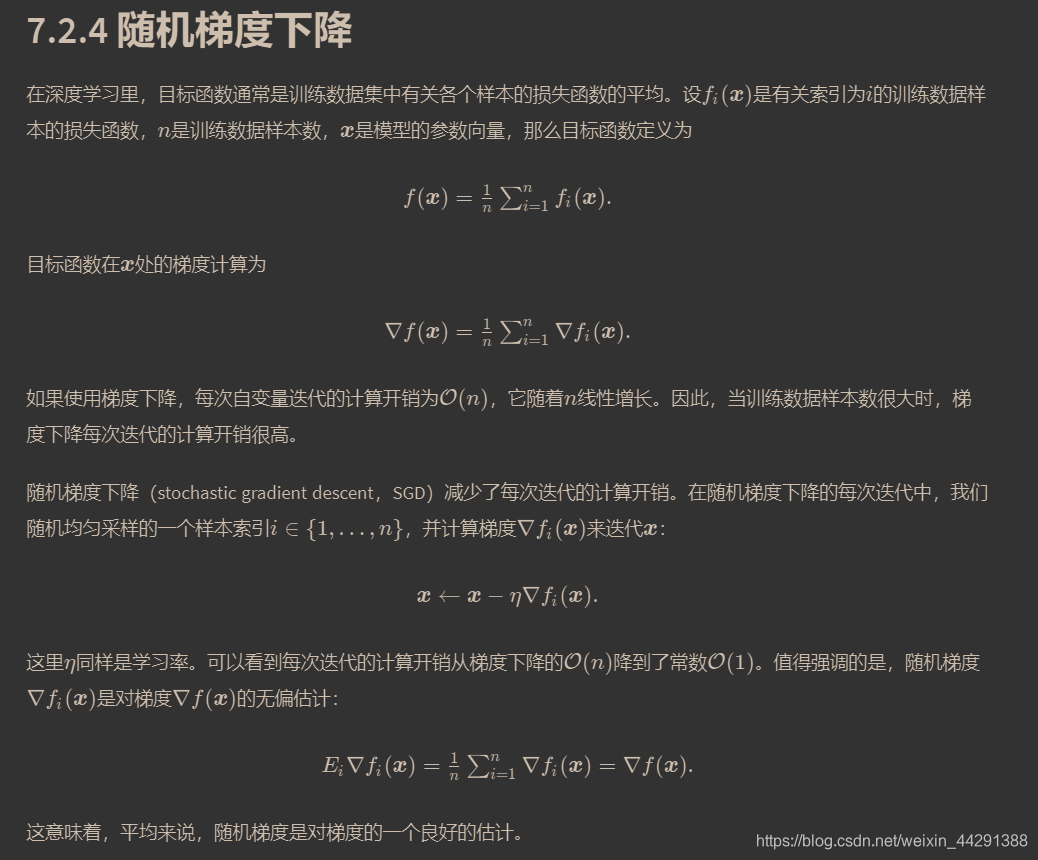

# 随机梯度下降-----------------------------

# 通过在梯度中添加均值为0的随机噪声来模拟随机梯度下降,以此来比较它与梯度下降的区别

def sgd_2d(x1, x2, s1, s2):

return (x1 - eta * (2 * x1 + np.random.normal(0.1)),

x2 - eta * (4 * x2 + np.random.normal(0.1)), 0, 0)

plt.subplot(3,2,6)

show_trace_2d(f_2d, train_2d(sgd_2d))

#当训练数据集的样本较多时,梯度下降每次迭代的计算开销较大,因而随机梯度下降通常更受青睐。```

759

759

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?