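- 🍨 This post is a learning-record blog from the 🔗365天深度学习训练营 (365-Day Deep Learning Training Camp)

- 🍖 Original author: K同学啊 | tutoring and custom projects available

- 🚀 Source: K同学的学习圈子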

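My environment:

- Language: Python 3.8
- Editor: Jupyter Notebook
- Deep learning environment: PyTorch
  - torch == 2.1.0+cpu
  - torchvision == 0.16.0+cpu

Note that the code in this post is written with TensorFlow (Keras), not PyTorch.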

Requirements:

- Read and load the data from a local file.

- Understand how an RNN is built.

- Reach 87% accuracy on the test set (89% is better).

I. Preparation

import tensorflow as tf

import pandas as pd

import numpy as np

df = pd.read_csv("heart.csv")

df

| | age | sex | cp | trestbps | chol | fbs | restecg | thalach | exang | oldpeak | slope | ca | thal | target |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 63 | 1 | 3 | 145 | 233 | 1 | 0 | 150 | 0 | 2.3 | 0 | 0 | 1 | 1 |
| 1 | 37 | 1 | 2 | 130 | 250 | 0 | 1 | 187 | 0 | 3.5 | 0 | 0 | 2 | 1 |
| 2 | 41 | 0 | 1 | 130 | 204 | 0 | 0 | 172 | 0 | 1.4 | 2 | 0 | 2 | 1 |
| 3 | 56 | 1 | 1 | 120 | 236 | 0 | 1 | 178 | 0 | 0.8 | 2 | 0 | 2 | 1 |
| 4 | 57 | 0 | 0 | 120 | 354 | 0 | 1 | 163 | 1 | 0.6 | 2 | 0 | 2 | 1 |
| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |
| 298 | 57 | 0 | 0 | 140 | 241 | 0 | 1 | 123 | 1 | 0.2 | 1 | 0 | 3 | 0 |
| 299 | 45 | 1 | 3 | 110 | 264 | 0 | 1 | 132 | 0 | 1.2 | 1 | 0 | 3 | 0 |
| 300 | 68 | 1 | 0 | 144 | 193 | 1 | 1 | 141 | 0 | 3.4 | 1 | 2 | 3 | 0 |
| 301 | 57 | 1 | 0 | 130 | 131 | 0 | 1 | 115 | 1 | 1.2 | 1 | 1 | 3 | 0 |
| 302 | 57 | 0 | 1 | 130 | 236 | 0 | 0 | 174 | 0 | 0.0 | 1 | 1 | 2 | 0 |

303 rows × 14 columns

# Check each column for missing values

df.isnull().sum()

age 0

sex 0

cp 0

trestbps 0

chol 0

fbs 0

restecg 0

thalach 0

exang 0

oldpeak 0

slope 0

ca 0

thal 0

target 0

dtype: int64
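Every count above is 0, so nothing needs to be dropped or imputed here. For comparison, this is roughly what the same check looks like when values are missing. It is a minimal sketch on a made-up toy frame; the `toy` data and the `dropna()` step are my own assumptions and are not part of the original notebook.

```python
import numpy as np
import pandas as pd

# Made-up rows for illustration only; two cells are deliberately missing.
toy = pd.DataFrame({
    "age": [63, 37, np.nan, 56],
    "chol": [233, 250, 204, np.nan],
    "target": [1, 1, 1, 0],
})

# isnull() marks missing cells as True; sum() counts them per column.
missing_per_column = toy.isnull().sum()
print(missing_per_column)

# One simple way to handle them: drop every row that has a missing value.
clean = toy.dropna()
print(len(clean))  # 2
```

Dropping rows is only one option; with a dataset as small as this one, imputing values would lose less data.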

II. Data Preprocessing

1. Split into training and test sets

from sklearn.preprocessing import StandardScaler

from sklearn.model_selection import train_test_split

X = df.iloc[:,:-1]

y = df.iloc[:,-1]

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.1, random_state = 1)

X_train.shape, y_train.shape

((272, 13), (272,))
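The 272 training rows follow from how a fractional `test_size` is turned into a row count. As far as I understand scikit-learn's behavior, the test count is rounded up and the training set takes the remainder. A small arithmetic sketch (the variable names are illustrative only):

```python
import math

n_samples = 303      # rows in heart.csv, as shown above
test_size = 0.1      # fraction passed to train_test_split

# The test count is rounded up; the training set gets whatever is left.
n_test = math.ceil(n_samples * test_size)
n_train = n_samples - n_test

print(n_train, n_test)  # 272 31
```

With only 31 test rows, the measured test accuracy is quite coarse, which is worth keeping in mind when reading the results later.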

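2. Standardization

# Standardize each column to zero mean and unit variance (statistics are fitted on the training set only)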

sc = StandardScaler()

X_train = sc.fit_transform(X_train)

X_test = sc.transform(X_test)

X_train = X_train.reshape(X_train.shape[0], X_train.shape[1], 1)

X_test = X_test.reshape(X_test.shape[0], X_test.shape[1], 1)

X_train.shape, X_test.shape

((272, 13, 1), (31, 13, 1))
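To make the two steps above concrete, here is a NumPy-only sketch of what they do: scale with training-set statistics, then add a trailing axis so that each of the 13 features becomes one time step with a single value. The random toy arrays stand in for the real data and are an assumption of this sketch.

```python
import numpy as np

rng = np.random.default_rng(0)
# Toy stand-ins for the real feature matrices: 8 training rows, 3 test rows, 13 features.
train = rng.normal(loc=50.0, scale=10.0, size=(8, 13))
test = rng.normal(loc=50.0, scale=10.0, size=(3, 13))

# Statistics come from the training split only, so no test information leaks in.
mean = train.mean(axis=0)
std = train.std(axis=0)  # population std (ddof=0), the same convention StandardScaler uses

train_scaled = (train - mean) / std
test_scaled = (test - mean) / std

# Add a trailing axis: (samples, 13) -> (samples, 13 time steps, 1 feature per step).
train_seq = train_scaled.reshape(train_scaled.shape[0], train_scaled.shape[1], 1)
test_seq = test_scaled.reshape(test_scaled.shape[0], test_scaled.shape[1], 1)

print(train_seq.shape, test_seq.shape)  # (8, 13, 1) (3, 13, 1)
```

Treating 13 tabular columns as a 13-step sequence is a modelling choice made for this exercise; the columns have no natural time order.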

III. Model Construction, Compilation, and Training

1. Build the model

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Dense, SimpleRNN

model = Sequential()

model.add(SimpleRNN(200,input_shape = (13,1), activation = 'relu'))

model.add(Dense(100, activation = 'relu'))

model.add(Dense(1, activation = 'sigmoid'))

model.summary()

Model: "sequential_3"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

simple_rnn_3 (SimpleRNN) (None, 200) 40400

dense_6 (Dense) (None, 100) 20100

dense_7 (Dense) (None, 1) 101

=================================================================

Total params: 60601 (236.72 KB)

Trainable params: 60601 (236.72 KB)

Non-trainable params: 0 (0.00 Byte)

_________________________________________________________________
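Since one goal of this exercise is to understand how an RNN is built, here is a NumPy sketch of what the `SimpleRNN(200, activation='relu')` layer computes, together with where the parameter counts in the summary come from. The random weights and the `simple_rnn_forward` helper are my own illustration, not Keras internals.

```python
import numpy as np

units, input_dim, timesteps = 200, 1, 13

rng = np.random.default_rng(0)
W_x = rng.normal(scale=0.05, size=(input_dim, units))  # input-to-hidden weights
W_h = rng.normal(scale=0.05, size=(units, units))      # hidden-to-hidden weights
b = np.zeros(units)                                    # bias


def simple_rnn_forward(x):
    """x has shape (timesteps, input_dim); returns the final hidden state."""
    h = np.zeros(units)
    for x_t in x:
        # h_t = relu(x_t @ W_x + h_{t-1} @ W_h + b)
        h = np.maximum(0.0, x_t @ W_x + h @ W_h + b)
    return h


sample = rng.normal(size=(timesteps, input_dim))  # stand-in for one standardized record
h_last = simple_rnn_forward(sample)
print(h_last.shape)  # (200,)

# Parameter counts, layer by layer.
rnn_params = W_x.size + W_h.size + b.size   # 200 + 40000 + 200
dense1_params = units * 100 + 100           # Dense(100) on a 200-dim input
dense2_params = 100 * 1 + 1                 # Dense(1) on a 100-dim input
print(rnn_params, dense1_params, dense2_params)     # 40400 20100 101
print(rnn_params + dense1_params + dense2_params)   # 60601
```

Only the final hidden state is passed on to the dense layers, which is why the summary shows an output shape of `(None, 200)` rather than `(None, 13, 200)`.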

2. Compile the model

opt = tf.keras.optimizers.Adam(learning_rate = 1e-4)

# Passing metrics as a list works across Keras versions
model.compile(loss = 'binary_crossentropy',
              optimizer = opt,
              metrics = ['accuracy'])
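To see what `binary_crossentropy` measures, here is a plain-Python sketch of the formula. The `binary_crossentropy` function, its `eps` clipping value, and the example predictions are my own assumptions for illustration, not the Keras implementation.

```python
import math


def binary_crossentropy(y_true, y_pred, eps=1e-7):
    """Mean binary cross-entropy over paired labels and predicted probabilities."""
    total = 0.0
    for y, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)


labels = [1, 0, 1, 1]
untrained = [0.5, 0.5, 0.5, 0.5]    # a model that has learned nothing yet
confident = [0.9, 0.1, 0.8, 0.95]   # mostly correct, confident predictions

print(round(binary_crossentropy(labels, untrained), 4))  # 0.6931, i.e. ln(2)
print(binary_crossentropy(labels, confident) < binary_crossentropy(labels, untrained))  # True
```

A loss near ln(2) ≈ 0.693 therefore means the model is still predicting about 0.5 for everything, which matches the first epochs of the training log in the next step.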

3. Train the model

epochs = 60

history = model.fit(X_train, y_train,
                    epochs = epochs,
                    batch_size = 128,
                    validation_data = (X_test, y_test),
                    verbose = 1)

Epoch 1/60

3/3 [==============================] - 1s 106ms/step - loss: 0.7025 - accuracy: 0.3382 - val_loss: 0.6939 - val_accuracy: 0.3548

Epoch 2/60

3/3 [==============================] - 0s 23ms/step - loss: 0.6929 - accuracy: 0.5809 - val_loss: 0.6805 - val_accuracy: 0.7097

Epoch 3/60

3/3 [==============================] - 0s 22ms/step - loss: 0.6839 - accuracy: 0.6875 - val_loss: 0.6674 - val_accuracy: 0.8710

Epoch 4/60

3/3 [==============================] - 0s 24ms/step - loss: 0.6752 - accuracy: 0.7279 - val_loss: 0.6546 - val_accuracy: 0.8710

Epoch 5/60

3/3 [==============================] - 0s 24ms/step - loss: 0.6669 - accuracy: 0.7390 - val_loss: 0.6420 - val_accuracy: 0.8710

Epoch 6/60

3/3 [==============================] - 0s 23ms/step - loss: 0.6588 - accuracy: 0.7500 - val_loss: 0.6301 - val_accuracy: 0.8710

Epoch 7/60

3/3 [==============================] - 0s 21ms/step - loss: 0.6508 - accuracy: 0.7610 - val_loss: 0.6184 - val_accuracy: 0.8710

Epoch 8/60

3/3 [==============================] - 0s 24ms/step - loss: 0.6426 - accuracy: 0.7610 - val_loss: 0.6065 - val_accuracy: 0.8710

Epoch 9/60

3/3 [==============================] - 0s 23ms/step - loss: 0.6340 - accuracy: 0.7610 - val_loss: 0.5944 - val_accuracy: 0.8710

Epoch 10/60

3/3 [==============================] - 0s 23ms/step - loss: 0.6250 - accuracy: 0.7647 - val_loss: 0.5814 - val_accuracy: 0.8710

Epoch 11/60

3/3 [==============================] - 0s 23ms/step - loss: 0.6160 - accuracy: 0.7721 - val_loss: 0.5676 - val_accuracy: 0.8710

Epoch 12/60

3/3 [==============================] - 0s 25ms/step - loss: 0.6057 - accuracy: 0.7794 - val_loss: 0.5527 - val_accuracy: 0.8710

Epoch 13/60

3/3 [==============================] - 0s 24ms/step - loss: 0.5952 - accuracy: 0.7978 - val_loss: 0.5368 - val_accuracy: 0.8710

Epoch 14/60

3/3 [==============================] - 0s 25ms/step - loss: 0.5836 - accuracy: 0.8051 - val_loss: 0.5193 - val_accuracy: 0.9032

Epoch 15/60

3/3 [==============================] - 0s 27ms/step - loss: 0.5706 - accuracy: 0.7978 - val_loss: 0.4999 - val_accuracy: 0.9032

Epoch 16/60

3/3 [==============================] - 0s 24ms/step - loss: 0.5576 - accuracy: 0.8015 - val_loss: 0.4784 - val_accuracy: 0.9032

Epoch 17/60

3/3 [==============================] - 0s 24ms/step - loss: 0.5424 - accuracy: 0.8015 - val_loss: 0.4550 - val_accuracy: 0.9032

Epoch 18/60

3/3 [==============================] - 0s 25ms/step - loss: 0.5264 - accuracy: 0.8088 - val_loss: 0.4306 - val_accuracy: 0.8710

Epoch 19/60

3/3 [==============================] - 0s 25ms/step - loss: 0.5103 - accuracy: 0.8199 - val_loss: 0.4056 - val_accuracy: 0.8710

Epoch 20/60

3/3 [==============================] - 0s 25ms/step - loss: 0.4935 - accuracy: 0.8088 - val_loss: 0.3815 - val_accuracy: 0.8710

Epoch 21/60

3/3 [==============================] - 0s 25ms/step - loss: 0.4771 - accuracy: 0.8088 - val_loss: 0.3569 - val_accuracy: 0.8710

Epoch 22/60

3/3 [==============================] - 0s 26ms/step - loss: 0.4627 - accuracy: 0.8125 - val_loss: 0.3348 - val_accuracy: 0.8710

Epoch 23/60

3/3 [==============================] - 0s 28ms/step - loss: 0.4498 - accuracy: 0.8199 - val_loss: 0.3159 - val_accuracy: 0.8710

Epoch 24/60

3/3 [==============================] - 0s 28ms/step - loss: 0.4388 - accuracy: 0.7941 - val_loss: 0.3001 - val_accuracy: 0.8710

Epoch 25/60

3/3 [==============================] - 0s 26ms/step - loss: 0.4325 - accuracy: 0.7904 - val_loss: 0.2914 - val_accuracy: 0.8065

...

Epoch 59/60

3/3 [==============================] - 0s 26ms/step - loss: 0.3221 - accuracy: 0.8640 - val_loss: 0.2794 - val_accuracy: 0.9032

Epoch 60/60

3/3 [==============================] - 0s 27ms/step - loss: 0.3244 - accuracy: 0.8640 - val_loss: 0.2833 - val_accuracy: 0.9032
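The `3/3` at the start of each log line is the number of batches per epoch. It follows directly from the training-set size and the batch size, as this small arithmetic sketch shows:

```python
import math

n_train = 272      # training rows after the split
batch_size = 128   # value passed to model.fit

steps_per_epoch = math.ceil(n_train / batch_size)
last_batch = n_train - (steps_per_epoch - 1) * batch_size

print(steps_per_epoch)  # 3
print(last_batch)       # 16
```

So each epoch makes only three weight updates, the last one on just 16 samples, which helps explain why the loss falls slowly at a learning rate of 1e-4.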

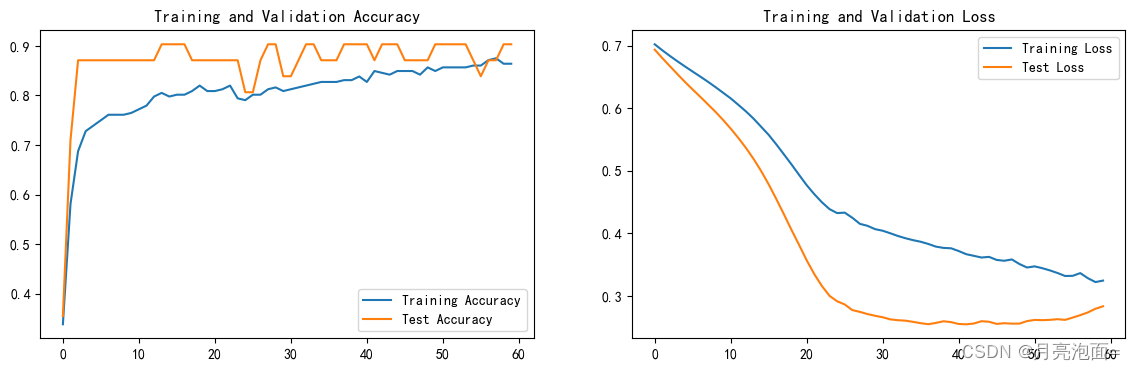

IV. Model Evaluation

import matplotlib.pyplot as plt

acc = history.history['accuracy']

val_acc = history.history['val_accuracy']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs_range = range(epochs)

plt.figure(figsize=(14, 4))

plt.subplot(1, 2, 1)

plt.plot(epochs_range, acc, label='Training Accuracy')

plt.plot(epochs_range, val_acc, label='Test Accuracy')

plt.legend(loc='lower right')

plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)

plt.plot(epochs_range, loss, label='Training Loss')

plt.plot(epochs_range, val_loss, label='Test Loss')

plt.legend(loc='upper right')

plt.title('Training and Validation Loss')

plt.show()

scores = model.evaluate(X_test,y_test,verbose=0)

print("%s: %.2f%%" % (model.metrics_names[1],scores[1]*100))

accuracy: 90.32%
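Because the test set has only 31 samples, accuracy can only take values of the form k/31. The sketch below shows which counts of correct predictions correspond to the values that keep appearing in the training log:

```python
n_test = 31  # test rows after the split

# Every achievable accuracy is k / 31 for some whole number k of correct predictions.
for correct in (27, 28, 29):
    print(correct, f"{correct / n_test:.4f}")
# 27 0.8710
# 28 0.9032
# 29 0.9355

step = 1 / n_test  # one extra correct prediction moves accuracy by about 3.2 points
print(round(step * 100, 1))  # 3.2
```

In other words, 90.32% means 28 of 31 test samples were classified correctly, and the gap between 87.10% and 90.32% is a single sample.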

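When the number of training epochs is set too high, test accuracy drops, probably because the model starts to overfit. Setting epochs to 60 gave relatively good performance.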
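Instead of tuning the epoch count by hand, a patience-based early-stopping rule can pick the stopping point automatically. In Keras this is what `tf.keras.callbacks.EarlyStopping` (with its `monitor`, `patience`, and `restore_best_weights` arguments) is for. The sketch below only mimics the idea in plain Python: `early_stopping_epoch` is a hypothetical helper, and the loss values are made up rather than taken from the run above.

```python
def early_stopping_epoch(val_losses, patience=5):
    """Return (best_epoch, stop_epoch), both 1-based, for a patience-based rule.

    Hypothetical helper for illustration: training stops once the validation
    loss has failed to improve for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch
    return best_epoch, len(val_losses)


# Made-up validation losses: improvement for 5 epochs, then a slow rise (overfitting).
toy_val_losses = [0.69, 0.60, 0.50, 0.40, 0.30, 0.31, 0.32, 0.33, 0.34, 0.35]
print(early_stopping_epoch(toy_val_losses, patience=3))  # (5, 8)
```

Ideally the monitored loss would come from a separate validation split rather than the test set, so that the reported test accuracy stays an unbiased estimate.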
