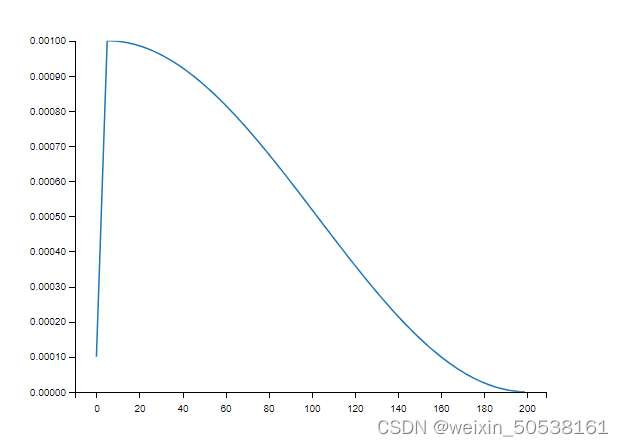

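1. Set the initial learning rate to 1 × 10⁻⁴. A linear warmup over the first 5 epochs raises it to 1 × 10⁻³, after which a cosine annealing schedule decays it to approximately 0 by the final epoch.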
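As a sketch, the schedule can be written as a piecewise function of the epoch index $t$ (200 epochs in total). The symbol $\eta_t$ and the 0-based indexing are notational assumptions, chosen to match the code that follows:

$$
\eta_t =
\begin{cases}
10^{-4} + \left(10^{-3} - 10^{-4}\right)\dfrac{t}{5}, & 0 \le t < 5 \\
\dfrac{10^{-3}}{2}\left(1 + \cos\dfrac{\pi\,(t-5)}{195}\right), & 5 \le t < 200
\end{cases}
$$

At $t = 5$ the cosine branch gives exactly $10^{-3}$. At the last epoch, $t = 199$, it gives roughly $6.5 \times 10^{-8}$, which is close to 0 but not exactly 0.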

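import numpy as np
import matplotlib.pyplot as plt


def compute_eta_t(eta_min, eta_max, T_cur, Ti):
    # Cosine-annealed learning rate at step T_cur of a cycle that lasts Ti epochs.
    pi = np.pi
    eta_t = eta_min + 0.5 * (eta_max - eta_min) * (np.cos(pi * T_cur / Ti) + 1)
    return eta_t


# A restart happens every Ti epochs.
# Here Ti holds two phase lengths: 5 warmup epochs, then 195 cosine annealing epochs.
def SLR_learning():
    Ti = [5, 195]
    n_batches = 200  # total number of epochs; not used below
    eta_ts = []
    for ti in Ti:
        if ti == Ti[0]:
            # Phase 1: linear warmup from 1e-4 toward 1e-3.
            for i in range(ti):
                item = ((0.001 - 0.0001) / ti) * i + 0.0001
                eta_ts.append(item)
        else:
            # Phase 2: cosine annealing from 1e-3 down toward 0.
            T_cur = np.arange(0, ti)
            for t_cur in T_cur:
                eta_ts.append(compute_eta_t(0.0000, 0.001, t_cur, ti))
    n_iterations = sum(Ti)
    epoch = np.arange(0, n_iterations)
    plt.plot(epoch, eta_ts)
    plt.ylim(0, 0.001)
    plt.show()
    return eta_ts


# Guarded so that importing this file as a module does not build and plot the schedule.
if __name__ == "__main__":
    learn = SLR_learning()

2. The structure is shown in the figure below.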

3. Call the cosine annealing function

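import SLR_learning

learn = SLR_learning.SLR_learning()  # list of 200 per-epoch learning rates

for epoch in range(200):  # run for 200 epochs
    ...
    lr = optimizer.param_groups[0]['lr']  # learning rate currently in use
    # The schedule has one entry per epoch, so index it by epoch rather than by batch.
    optimizer.param_groups[0]['lr'] = learn[epoch]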
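The loop above only writes to the first parameter group and depends on the plotting module. The following is a minimal, dependency-free sketch of the same idea, not the author's implementation. The names `warmup_cosine_schedule`, `set_lr`, and `DummyOptimizer` are hypothetical. `DummyOptimizer` only mimics the `param_groups` layout (a list of dicts with an `'lr'` key) of a PyTorch optimizer, so the snippet runs without PyTorch installed.

```python
import math


def warmup_cosine_schedule(warmup_epochs=5, total_epochs=200,
                           lr_start=1e-4, lr_max=1e-3, lr_min=0.0):
    """Return one learning rate per epoch: linear warmup, then cosine annealing."""
    schedule = []
    for epoch in range(warmup_epochs):
        # Linear ramp starting at lr_start; lr_max is reached at epoch == warmup_epochs.
        schedule.append(lr_start + (lr_max - lr_start) * epoch / warmup_epochs)
    decay_epochs = total_epochs - warmup_epochs
    for t in range(decay_epochs):
        # Cosine annealing from lr_max toward lr_min.
        cosine = 1 + math.cos(math.pi * t / decay_epochs)
        schedule.append(lr_min + 0.5 * (lr_max - lr_min) * cosine)
    return schedule


def set_lr(optimizer, lr):
    """Write lr into every parameter group, not only the first one."""
    for group in optimizer.param_groups:
        group["lr"] = lr


class DummyOptimizer:
    """Hypothetical stand-in with the same param_groups layout as a PyTorch optimizer."""

    def __init__(self):
        self.param_groups = [{"lr": 0.0}, {"lr": 0.0}]


schedule = warmup_cosine_schedule()
optimizer = DummyOptimizer()
for epoch in range(len(schedule)):
    set_lr(optimizer, schedule[epoch])  # update once at the start of each epoch
    # ... one epoch of training would run here ...
```

With a real optimizer, `set_lr(optimizer, schedule[epoch])` would replace the direct assignment to `optimizer.param_groups[0]['lr']`, which matters only when the model uses more than one parameter group.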
