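A multilayer perceptron (MLP) is simply several layers of neurons stacked together, with a nonlinear activation between them.

This section is fairly simple; working through one example is enough.

Example

Classify the fashion_mnist dataset.

Each fashion_mnist image is 28*28 pixels, so we flatten it into 28*28=784 input features; the output layer has 10 units, one per class.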
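To make the flattening concrete, here is a minimal sketch that uses a random stand-in batch rather than real Fashion-MNIST data (the batch size of 4 and the variable names are assumptions for illustration). It shows how a batch of 1*28*28 images becomes a batch of 784-dimensional vectors.

```python
import torch

# Stand-in for a batch of 4 grayscale images: (batch, channel, height, width)
images = torch.rand(4, 1, 28, 28)

# Flatten every image into one row of 28 * 28 = 784 features
flat = images.reshape(-1, 28 * 28)

print(images.shape)  # torch.Size([4, 1, 28, 28])
print(flat.shape)    # torch.Size([4, 784])
```

Using -1 for the first dimension lets PyTorch infer the batch size, so the same line works for any batch.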

The hidden layer width is usually chosen as a power of two (2^n); here it is set to 256.
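These layer sizes fix the number of trainable parameters. A small arithmetic sketch in plain Python (the variable names are chosen to mirror the sizes stated above):

```python
num_inputs, num_hiddens, num_outputs = 784, 256, 10

# Each layer has a weight matrix plus one bias per output unit
hidden_params = num_inputs * num_hiddens + num_hiddens    # 784*256 + 256
output_params = num_hiddens * num_outputs + num_outputs   # 256*10 + 10
total_params = hidden_params + output_params

print(hidden_params, output_params, total_params)  # 200960 2570 203530
```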

1. Initialize the model parameters

    import torch
    from torch import nn
    from d2l import torch as d2l

    # batch_size must be defined before the data is loaded
    batch_size = 256
    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

    num_inputs, num_outputs, num_hiddens = 784, 10, 256

    # Weights start as small random values, biases start at zero
    W1 = nn.Parameter(torch.randn(
        num_inputs, num_hiddens, requires_grad=True) * 0.01)
    b1 = nn.Parameter(torch.zeros(num_hiddens, requires_grad=True))
    W2 = nn.Parameter(torch.randn(
        num_hiddens, num_outputs, requires_grad=True) * 0.01)
    b2 = nn.Parameter(torch.zeros(num_outputs, requires_grad=True))

    params = [W1, b1, W2, b2]

2. Define the activation function
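ReLU maps each element x to max(x, 0). For reference, a minimal sketch of that behavior using PyTorch's built-in `torch.relu` on a small hand-picked tensor (the sample values are arbitrary), compared against the elementwise maximum with zero:

```python
import torch

x = torch.tensor([-2.0, -0.5, 0.0, 1.5, 3.0])

# Negative entries are clipped to zero, non-negative entries pass through
y = torch.relu(x)
print(y.tolist())  # [0.0, 0.0, 0.0, 1.5, 3.0]

# The same result via an elementwise maximum against a zero tensor
same = torch.equal(y, torch.max(x, torch.zeros_like(x)))
print(same)  # True
```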

    # A built-in API exists, but we write ReLU by hand here on purpose
    def relu(X):
        a = torch.zeros_like(X)
        return torch.max(X, a)

3. Define the model
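The model is a two-layer forward pass: flatten, affine map, ReLU, affine map. The sketch below traces the tensor shapes through those steps with randomly initialized stand-in weights and a random input batch (the batch size of 8 is an assumption, and nothing here is trained).

```python
import torch

num_inputs, num_hiddens, num_outputs = 784, 256, 10

# Stand-in parameters with the same shapes as W1, b1, W2, b2
W1 = torch.randn(num_inputs, num_hiddens) * 0.01
b1 = torch.zeros(num_hiddens)
W2 = torch.randn(num_hiddens, num_outputs) * 0.01
b2 = torch.zeros(num_outputs)

X = torch.rand(8, 1, 28, 28)         # stand-in image batch
X_flat = X.reshape(-1, num_inputs)   # (8, 784)
H = torch.relu(X_flat @ W1 + b1)     # (8, 256), bias broadcasts over rows
O = H @ W2 + b2                      # (8, 10), one logit per class

print(X_flat.shape, H.shape, O.shape)
```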

    # Each input image is 28*28, so flatten it to a vector of length 784
    def net(X):
        X = X.reshape((-1, num_inputs))
        H = relu(X @ W1 + b1)  # "@" is matrix multiplication
        return H @ W2 + b2

4. Define the loss function
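The `reduction` argument of `nn.CrossEntropyLoss` decides whether the result is a per-sample vector or a single scalar, which matters because `backward()` called without arguments only works on a scalar. A minimal sketch on made-up logits and labels (all values are arbitrary):

```python
import torch
from torch import nn

# Stand-in logits for 3 samples and 4 classes, with their true labels
logits = torch.tensor([[2.0, 0.5, 0.1, 0.0],
                       [0.2, 1.5, 0.3, 0.1],
                       [0.0, 0.0, 3.0, 0.5]], requires_grad=True)
labels = torch.tensor([0, 1, 2])

per_sample = nn.CrossEntropyLoss(reduction='none')(logits, labels)
mean_loss = nn.CrossEntropyLoss()(logits, labels)  # default is 'mean'
sum_loss = nn.CrossEntropyLoss(reduction='sum')(logits, labels)

print(per_sample.shape)  # torch.Size([3]): one loss per sample
print(mean_loss.shape)   # torch.Size([]): a scalar

# backward() without arguments needs a scalar, so the vector version fails
try:
    per_sample.backward()
    raised = False
except RuntimeError as err:
    raised = True
    print(err)

# Reducing first (here with mean) makes backward() valid
per_sample_again = nn.CrossEntropyLoss(reduction='none')(logits, labels)
per_sample_again.mean().backward()
```

Keeping `reduction='none'` is still possible if the training loop reduces the loss itself before calling `backward()`.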

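    # Cross-entropy loss, covered in the previous section.
    # With reduction='none' the loss is one value per sample, and calling
    # backward() on it raises
    # "grad can be implicitly created only for scalar outputs".
    # Dropping the argument fixes this. `reduction` controls how the
    # per-sample losses are combined: 'mean' (the default) averages them,
    # 'sum' adds them up, and 'none' leaves them uncombined.
    # loss = nn.CrossEntropyLoss(reduction='none')
    loss = nn.CrossEntropyLoss()

5. Start training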
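`d2l.train_ch3` hides the training loop. As a rough sketch of what such a loop typically does (not the d2l implementation itself), the following trains a small MLP with plain SGD on random stand-in data; the dataset, the sizes, and the helper name `train_one_epoch` are assumptions for illustration.

```python
import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset

torch.manual_seed(0)

# Random stand-in data: 512 "images" of 784 features, labels in 0..9
features = torch.rand(512, 784)
targets = torch.randint(0, 10, (512,))
data_iter = DataLoader(TensorDataset(features, targets),
                       batch_size=256, shuffle=True)

model = nn.Sequential(nn.Linear(784, 256), nn.ReLU(), nn.Linear(256, 10))
loss_fn = nn.CrossEntropyLoss()  # mean over the batch, so the loss is a scalar
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

def train_one_epoch(model, data_iter, loss_fn, optimizer):
    """Hypothetical helper: one pass over the data, returns the average loss."""
    total_loss, total_samples = 0.0, 0
    for X, y in data_iter:
        optimizer.zero_grad()     # clear gradients from the previous step
        l = loss_fn(model(X), y)  # forward pass and scalar loss
        l.backward()              # backpropagate
        optimizer.step()          # SGD parameter update
        total_loss += l.item() * y.numel()
        total_samples += y.numel()
    return total_loss / total_samples

losses = [train_one_epoch(model, data_iter, loss_fn, optimizer)
          for _ in range(3)]
print(losses)
```

Because the labels here are random, the loss values only show that the loop runs; they say nothing about real accuracy.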

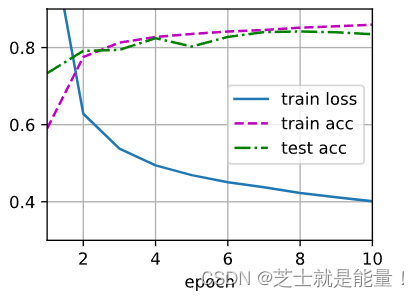

num_epochs, lr = 10, 0.1

updater = torch.optim.SGD(params, lr=lr)

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, updater)训练的结果:

6. Test

    d2l.predict_ch3(net, test_iter)

Concise implementation

1. Build the model

    import torch
    from torch import nn
    from d2l import torch as d2l

    net = nn.Sequential(nn.Flatten(),
                        nn.Linear(784, 256),
                        nn.ReLU(),
                        nn.Linear(256, 10))

    # Draw the weights of every linear layer from a normal distribution with std 0.01
    def init_weights(m):
        if type(m) == nn.Linear:
            nn.init.normal_(m.weight, std=0.01)

    net.apply(init_weights)

2. Train

    batch_size, lr, num_epochs = 256, 0.1, 10
    loss = nn.CrossEntropyLoss()
    trainer = torch.optim.SGD(net.parameters(), lr=lr)

    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)
    d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, trainer)
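The network outputs one logit per class, and the predicted label is the index of the largest logit. A minimal sketch on an untrained copy of the same architecture with random stand-in input, so the predictions themselves are meaningless and only the shapes are of interest:

```python
import torch
from torch import nn

net = nn.Sequential(nn.Flatten(),
                    nn.Linear(784, 256),
                    nn.ReLU(),
                    nn.Linear(256, 10))

X = torch.rand(2, 1, 28, 28)  # stand-in batch of two images

with torch.no_grad():         # inference only, no gradients needed
    logits = net(X)           # shape (2, 10)
    preds = logits.argmax(dim=1)  # predicted class index per image

print(logits.shape)  # torch.Size([2, 10])
print(preds.shape)   # torch.Size([2])
```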
