很多yolo党刚刚入门时要学习的则是学习即插即用的注意力机制,本文章以yolov8添加CAA注意力机制为例。

代码如下

- 1、导入(首先在nn.modules.conv.py端导入这部分代码)

class CAA(nn.Module):

def __init__(self, ch, h_kernel_size=11, v_kernel_size=11) -> None:

super().__init__()

self.avg_pool = nn.AvgPool2d(7, 1, 3)

self.conv1 = Conv(ch, ch)

self.h_conv = nn.Conv2d(ch, ch, (1, h_kernel_size), 1, (0, h_kernel_size // 2), 1, ch)

self.v_conv = nn.Conv2d(ch, ch, (v_kernel_size, 1), 1, (v_kernel_size // 2, 0), 1, ch)

self.conv2 = Conv(ch, ch)

self.act = nn.Sigmoid()

def forward(self, x):

attn_factor = self.act(self.conv2(self.v_conv(self.h_conv(self.conv1(self.avg_pool(x))))))

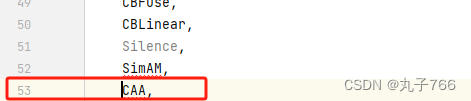

return attn_factor * x- 2、定义(也称register)

在nn.modules.init.py文件里进行注册

- 在nn.tasks.py文件里进入一个注册(共修改三个部分)

- (1)

- (2)

- (3)

-

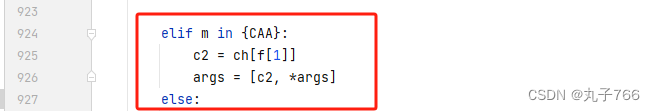

elif m in {CAA}: c2 = ch[f[1]] args = [c2, *args]

3、接下来就是修改yaml配置文件了(自己根据需求来调整CAA的位置)

# Ultralytics YOLO 🚀, AGPL-3.0 license

# YOLOv8 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters

nc: 4 # number of classes

scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'

# [depth, width, max_channels]

n: [0.33, 0.25, 1024] # YOLOv8n summary: 225 layers, 3157200 parameters, 3157184 gradients, 8.9 GFLOPs

s: [0.33, 0.50, 1024] # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients, 28.8 GFLOPs

m: [0.67, 0.75, 768] # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients, 79.3 GFLOPs

l: [1.00, 1.00, 512] # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs

x: [1.00, 1.25, 512] # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs

# YOLOv8.0n backbone

backbone:

# [from, repeats, module, args]

- [-1, 1, Conv, [64, 3, 2]] # 0-P1/2

- [-1, 1, Conv, [128, 3, 2]] # 1-P2/4

- [-1, 3, C2f, [128, True]]

- [-1, 1, Conv, [256, 3, 2]] # 3-P3/8

- [-1, 6, C2f, [256, True]]

- [-1, 1, CAA, [11]]

- [-1, 1, Conv, [512, 3, 2]] # 5-P4/16

- [-1, 6, C2f, [512, True]]

- [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32

- [-1, 3, C2f, [1024, True]]

- [-1, 1, SPPF, [1024, 5]] # 9

# YOLOv8.0n head

head:

- [-1, 1, nn.Upsample, [None, 2, "nearest"]]

- [[-1, 7], 1, Concat, [1]] # cat backbone P4

- [-1, 3, C2f, [512]] # 12

- [-1, 1, nn.Upsample, [None, 2, "nearest"]]

- [[-1, 4], 1, Concat, [1]] # cat backbone P3

- [-1, 3, C2f, [256]] # 15 (P3/8-small)

- [-1, 1, Conv, [256, 3, 2]]

- [[-1, 13], 1, Concat, [1]] # cat head P4

- [-1, 3, C2f, [512]] # 18 (P4/16-medium)

- [-1, 1, Conv, [512, 3, 2]]

- [[-1, 10], 1, Concat, [1]] # cat head P5

- [-1, 3, C2f, [1024]] # 21 (P5/32-large)

- [[16, 19, 22], 1, Detect, [nc]] # Detect(P3, P4, P5)

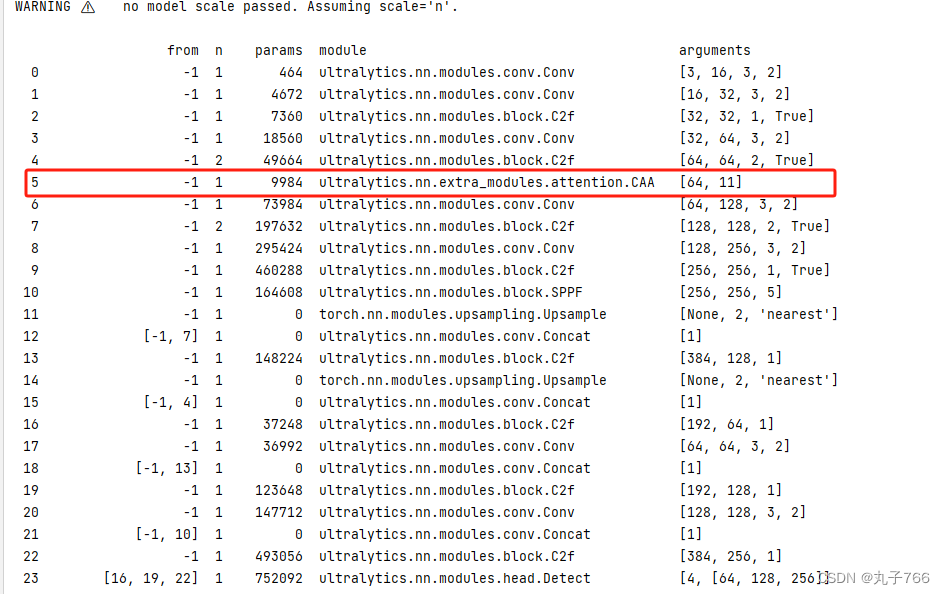

4、把yaml配置文件放到train.py文件里就可以运行啦

成功结果如下(可以看到CAA注意力机制已经成功导入进去了)

于是乎CAA注意力机制就成功导入啦!其他即插即用的注意力机制也可以按照这个方法哦。

如果出现显示未定义等报错,大家一定要看看自己注册那几个步骤有没有遗漏。

祝各位视觉小伙伴们研学之路顺顺利利。

1590

1590

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?