KNN (K-Nearest Neighbors) is one of the simplest algorithms in machine learning, and most beginners start their machine learning journey with it.

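The encyclopedia entry describes the core idea as follows: if most of the k nearest samples to a given sample in feature space belong to one class, then that sample also belongs to the class and shares the characteristics of the samples in it. The classification decision depends only on the classes of the nearest one or few samples.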

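My own summary of the core idea: among the k neighbors closest to the element being classified, the class that most of them belong to is the class of that element. Each feature of the element is compared with the corresponding feature of every record in the sample set, and the k records with the most similar features are its k nearest neighbors. With k=1 this is the nearest-neighbor rule.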

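In the figure above, the green dot is the element to classify. With k=3, the three elements nearest to the green dot (inside the solid circle) are one blue and two red, so the green dot is assigned to the red triangle class. With k=5, the dashed circle contains three blue and two red elements, so the green dot is assigned to the blue square class.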
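The voting rule described above can be sketched in a few lines of plain C++11, independent of OpenCV. This is only a minimal illustration under stated assumptions: the names `Sample`, `squaredDistance` and `knnClassify` are hypothetical, Euclidean distance is assumed as the similarity measure, and ties are broken in favor of the smallest label.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <map>
#include <utility>
#include <vector>

// Hypothetical type for illustration; not part of OpenCV.
struct Sample {
    std::vector<float> features;
    int label;
};

// Squared Euclidean distance (assumed metric). The square root is skipped
// because it does not change which neighbors are closest.
float squaredDistance(const std::vector<float>& a, const std::vector<float>& b) {
    assert(a.size() == b.size());
    float sum = 0.0f;
    for (std::size_t i = 0; i < a.size(); ++i) {
        const float diff = a[i] - b[i];
        sum += diff * diff;
    }
    return sum;
}

// Returns the majority label among the k training samples closest to query.
// On a tie, the smallest label wins (an arbitrary choice for this sketch).
int knnClassify(const std::vector<Sample>& train,
                const std::vector<float>& query, std::size_t k) {
    assert(k > 0 && k <= train.size());

    // Pair every training sample's distance to the query with its label.
    std::vector<std::pair<float, int> > byDistance;
    byDistance.reserve(train.size());
    for (const Sample& s : train) {
        byDistance.push_back(
            std::make_pair(squaredDistance(s.features, query), s.label));
    }

    // Move the k smallest distances to the front; the rest stay unsorted.
    std::partial_sort(byDistance.begin(), byDistance.begin() + k,
                      byDistance.end());

    // Count the votes of the k nearest neighbors.
    std::map<int, int> votes;
    for (std::size_t i = 0; i < k; ++i) {
        ++votes[byDistance[i].second];
    }

    // Pick the label with the most votes (map order gives smallest label on ties).
    int bestLabel = byDistance[0].second;
    int bestCount = 0;
    for (const auto& v : votes) {
        if (v.second > bestCount) {
            bestCount = v.second;
            bestLabel = v.first;
        }
    }
    return bestLabel;
}
```

For example, with label-1 points at (1, 0) and (0, 1.2) and label-2 points at (-1.5, 0), (0, -2) and (2.2, 0), a query at the origin is assigned label 1 for k=3 and label 2 for k=5, which mirrors how the choice of k flips the result in the figure.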

KNN classifies a sample by measuring the distances between its feature values and those of the known samples.
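The text does not say which distance is meant. A common default, assumed here for illustration, is the Euclidean distance between two feature vectors $x$ and $y$ with $n$ features each:

```latex
d(x, y) = \sqrt{\sum_{i=1}^{n} \left( x_i - y_i \right)^2}
```

Because every feature contributes on its own scale, a feature with a much larger numeric range can dominate this sum, so features are often normalized before distances are computed.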

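Advantages: high accuracy, insensitive to outliers, no assumptions about the input data.

Disadvantages: high computational complexity and high space complexity, since every training sample has to be stored and compared against each query.

Applicable data types: numeric and nominal.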

KNN reference in OpenCV

CvKNearest::CvKNearest

//C++

CvKNearest::CvKNearest()

//C++

CvKNearest::CvKNearest(const Mat& trainData, const Mat& responses, const Mat& sampleIdx=Mat(), bool isRegression=false, int max_k=32 )

//C++

CvKNearest::CvKNearest(const CvMat* trainData, const CvMat* responses, const CvMat* sampleIdx=0, bool isRegression=false, int max_k=32 )

CvKNearest::train

//C++

bool CvKNearest::train(const Mat& trainData, const Mat& responses, const Mat& sampleIdx=Mat(), bool isRegression=false, int maxK=32, bool updateBase=false )

//C++

bool CvKNearest::train(const CvMat* trainData, const CvMat* responses, const CvMat* sampleIdx=0, bool is_regression=false, int maxK=32, bool updateBase=false )

//Python

cv2.KNearest.train(trainData, responses[, sampleIdx[, isRegression[, maxK[, updateBase]]]]) → retval

CvKNearest::find_nearest

//Finds the neighbors and predicts responses for input vectors.

//C++

float CvKNearest::find_nearest(const Mat& samples, int k, Mat* results=0, const float** neighbors=0, Mat* neighborResponses=0, Mat* dist=0 ) const

//C++

float CvKNearest::find_nearest(const Mat& samples, int k, Mat& results, Mat& neighborResponses, Mat& dists) const

//C++

float CvKNearest::find_nearest(const CvMat* samples, int k, CvMat* results=0, const float** neighbors=0, CvMat* neighborResponses=0, CvMat* dist=0 ) const

//Python

cv2.KNearest.find_nearest(samples, k[, results[, neighborResponses[, dists]]]) → retval, results, neighborResponses, dists

CvKNearest::get_max_k

//Returns the number of maximum neighbors

//C++

int CvKNearest::get_max_k() const

CvKNearest::get_var_count

//Returns the number of used features (variables count)

//C++

int CvKNearest::get_var_count() const

CvKNearest::get_sample_count

//Returns the total number of train samples

//C++

int CvKNearest::get_sample_count() const

CvKNearest::is_regression

//Returns type of the problem: true for regression and false for classification

//C++

bool CvKNearest::is_regression() const

A binary classification example

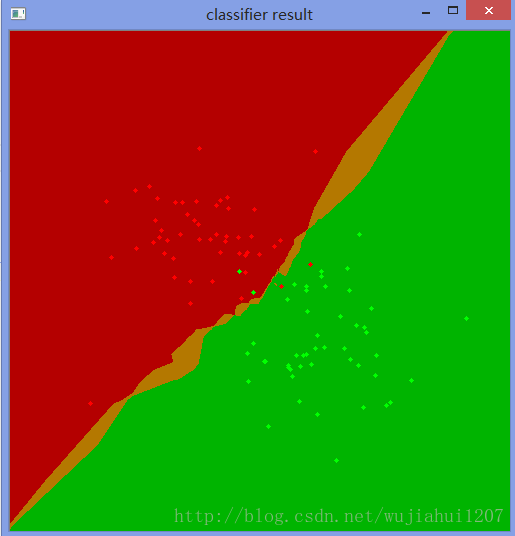

    #include "ml.h"
    #include "highgui.h"

    int main( int argc, char** argv )
    {
        const int K = 10;
        int i, j, k, accuracy;
        float response;
        int train_sample_count = 100;
        CvRNG rng_state = cvRNG(-1);
        CvMat* trainData = cvCreateMat( train_sample_count, 2, CV_32FC1 );
        CvMat* trainClasses = cvCreateMat( train_sample_count, 1, CV_32FC1 );
        IplImage* img = cvCreateImage( cvSize( 500, 500 ), 8, 3 );
        float _sample[2];
        CvMat sample = cvMat( 1, 2, CV_32FC1, _sample );
        cvZero( img );

        CvMat trainData1, trainData2, trainClasses1, trainClasses2;

        // form the training samples: two normally distributed clusters,
        // the first half around (200,200) and the second half around (300,300)
        cvGetRows( trainData, &trainData1, 0, train_sample_count/2 );
        cvRandArr( &rng_state, &trainData1, CV_RAND_NORMAL, cvScalar(200,200), cvScalar(50,50) );

        cvGetRows( trainData, &trainData2, train_sample_count/2, train_sample_count );
        cvRandArr( &rng_state, &trainData2, CV_RAND_NORMAL, cvScalar(300,300), cvScalar(50,50) );

        // label the first cluster as class 1 and the second as class 2
        cvGetRows( trainClasses, &trainClasses1, 0, train_sample_count/2 );
        cvSet( &trainClasses1, cvScalar(1) );

        cvGetRows( trainClasses, &trainClasses2, train_sample_count/2, train_sample_count );
        cvSet( &trainClasses2, cvScalar(2) );

        // learn classifier
        CvKNearest knn( trainData, trainClasses, 0, false, K );
        CvMat* nearests = cvCreateMat( 1, K, CV_32FC1);

        // classify every pixel of the image, using its (x, y) position as the sample
        for( i = 0; i < img->height; i++ )
        {
            for( j = 0; j < img->width; j++ )
            {
                sample.data.fl[0] = (float)j;
                sample.data.fl[1] = (float)i;

                // estimate the response and get the neighbors' labels
                response = knn.find_nearest(&sample,K,0,0,nearests,0);

                // compute the number of neighbors representing the majority
                for( k = 0, accuracy = 0; k < K; k++ )
                {
                    if( nearests->data.fl[k] == response)
                        accuracy++;
                }

                // highlight the pixel depending on the accuracy (or confidence)
                cvSet2D( img, i, j, response == 1 ?
                    (accuracy > 5 ? CV_RGB(180,0,0) : CV_RGB(180,120,0)) :
                    (accuracy > 5 ? CV_RGB(0,180,0) : CV_RGB(120,120,0)) );
            }
        }

        // display the original training samples
        for( i = 0; i < train_sample_count/2; i++ )
        {
            CvPoint pt;
            pt.x = cvRound(trainData1.data.fl[i*2]);
            pt.y = cvRound(trainData1.data.fl[i*2+1]);
            cvCircle( img, pt, 2, CV_RGB(255,0,0), CV_FILLED );
            pt.x = cvRound(trainData2.data.fl[i*2]);
            pt.y = cvRound(trainData2.data.fl[i*2+1]);
            cvCircle( img, pt, 2, CV_RGB(0,255,0), CV_FILLED );
        }

        cvNamedWindow( "classifier result", 1 );
        cvShowImage( "classifier result", img );
        cvWaitKey(0);

        // release everything allocated above, including the neighbor buffer and the image
        cvReleaseMat( &nearests );
        cvReleaseMat( &trainClasses );
        cvReleaseMat( &trainData );
        cvReleaseImage( &img );
        return 0;
    }

The classification result is shown in the figure below.

The reference and example code above come from the following page:

http://docs.opencv.org/2.4.13/modules/ml/doc/k_nearest_neighbors.html
