文章目录

pytorch-权值初始化

因为不恰当的权值初始化是会导致梯度爆炸,w的梯度会依赖于上一层的输出,输出非常小大,会引发梯度消失,爆炸。要严格控制网络输出值的尺度范围,不能太大,太小。

正常情况

# -*- coding: utf-8 -*-

"""

# @file name : grad_vanish_explod.py

# @author : TingsongYu https://github.com/TingsongYu

# @date : 2019-09-30 10:08:00

# @brief : 梯度消失与爆炸实验

"""

import os

BASE_DIR = os.path.dirname(os.path.abspath(__file__))

import torch

import random

import numpy as np

import torch.nn as nn

path_tools = os.path.abspath(os.path.join(BASE_DIR, "..", "..", "tools", "common_tools.py"))

assert os.path.exists(path_tools), "{}不存在,请将common_tools.py文件放到 {}".format(path_tools, os.path.dirname(path_tools))

import sys

hello_pytorch_DIR = os.path.abspath(os.path.dirname(__file__)+os.path.sep+".."+os.path.sep+"..")

sys.path.append(hello_pytorch_DIR)

from tools.common_tools import set_seed

set_seed(1) # 设置随机种子

class MLP(nn.Module):

def __init__(self, neural_num, layers):

super(MLP, self).__init__()

self.linears = nn.ModuleList([nn.Linear(neural_num, neural_num, bias=False) for i in range(layers)])

self.neural_num = neural_num

def forward(self, x):

for (i, linear) in enumerate(self.linears):

x = linear(x)

#x = torch.relu(x)

# print("layer:{}, std:{}".format(i, x.std()))

# if torch.isnan(x.std()):

# print("output is nan in {} layers".format(i))

# break

return x

def initialize(self):

for m in self.modules():

if isinstance(m, nn.Linear):

nn.init.normal_(m.weight.data) # normal: mean=0, std=1

# a = np.sqrt(6 / (self.neural_num + self.neural_num))

#

# tanh_gain = nn.init.calculate_gain('tanh')

# a *= tanh_gain

#

# nn.init.uniform_(m.weight.data, -a, a)

# nn.init.xavier_uniform_(m.weight.data, gain=tanh_gain)

# nn.init.normal_(m.weight.data, std=np.sqrt(2 / self.neural_num))

#nn.init.kaiming_normal_(m.weight.data)

#flag = 0

flag = 1

if flag:

layer_nums = 100

neural_nums = 256

batch_size = 16

net = MLP(neural_nums, layer_nums)

net.initialize()

inputs = torch.randn((batch_size, neural_nums)) # normal: mean=0, std=1

output = net(inputs)

print(output)

# ======================================= calculate gain =======================================

flag = 0

#flag = 1

if flag:

x = torch.randn(10000)

out = torch.tanh(x)

gain = x.std() / out.std()

print('gain:{}'.format(gain))

tanh_gain = nn.init.calculate_gain('tanh')

print('tanh_gain in PyTorch:', tanh_gain)

这段代码我们输入100层的神经网络,每一层是256个,输入 的bs是 16,经过MLP输出:

看一下细节

def forward(self, x):

for (i, linear) in enumerate(self.linears):

x = linear(x)

#x = torch.relu(x)

print("layer:{}, std:{}".format(i, x.std()))

if torch.isnan(x.std()):

print("output is nan in {} layers".format(i))

break

return x

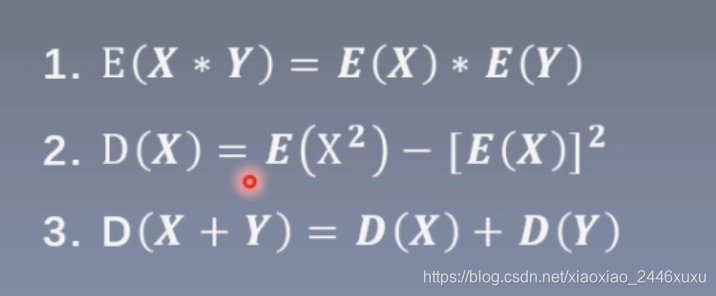

为什么是出现这个呢,是因为这些数据经过线性层,逐渐计算,会一步一步输出的范围变大,那么怎么让输出的范围变得不大呢,是因为。

](https://i-blog.csdnimg.cn/blog_migrate/e92091ef026b44cac8e23f9d1fd15e51.png)

可以试着改变一下输入的个数256变成多少都可以,所以为了解决方差一致性,从公式中可以看到,为了保持方差尺度不变,只能是1,D(w)=根号n分之1.

def initialize(self):

for m in self.modules():

if isinstance(m, nn.Linear):

nn.init.normal_(m.weight.data, std=np.sqrt(1/self.neural_num)) # normal: mean=0, std=1

这样就可以解决只是线性层的方差一致性。如果加入tanh的话,会出现梯度消失的现象。为了解决梯度消失的问题,Xavier提出了 均匀分布,方式。

这是手动计算;

这是pytorch提供的:

如果由tanh改为relu的话,可以观察到标准差逐渐变大:

针对这一点,Kaiming提出了这一分布。

手动计算:

pytorch提供:

2106

2106

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?