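Parallax Occlusion Mapping (POM below) is a neat advanced technique, and of everything I have studied so far it is the one that has impressed me most. "Parallax" undersells it: it is really an algorithm for finding the exact intersection of the view ray with the height map. I had already met its predecessor, Parallax Mapping, in *Real-Time Rendering*, but POM is more precise and also holds up well at oblique viewing angles, which should earn it a place among the important techniques going forward. Enough chatter; on to the technique itself.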

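ATI's POM sample in the DirectX 9 SDK samples is a good opportunity for hands-on practice. I had read the 2006 paper "Dynamic Parallax Occlusion Mapping with Approximate Soft Shadows", but it did not make things nearly as clear as actually reading the code.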

Overall, POM can be split into two parts.

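- In tangent space, start from the given pixel position and march along the view vector to find the intersection with the height map, also called the displaced point.

- In tangent space, start from the displaced point, march along the light direction, take a fixed number of height-map samples, and evaluate them to obtain a soft-shadow factor.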

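Both steps are explained in detail below. If you want the original write-up, you can download the paper "Dynamic Parallax Occlusion Mapping with Approximate Soft Shadows" from the resources I have uploaded.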

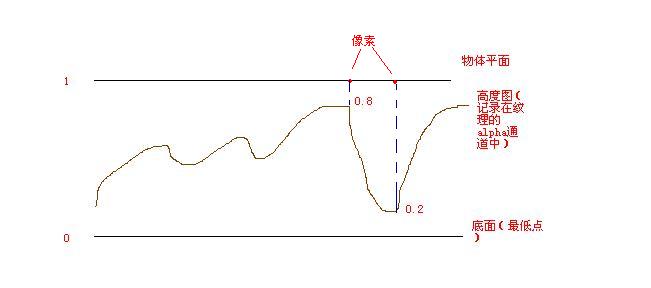

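1. First, compute the tangent-space basis vectors at every vertex of the model (D3DX provides functions for this, and the computation itself is easy to look up), and use them to build the matrix that transforms from world space into tangent space. Interpolating across each triangle then gives every pixel its own tangent-space basis, and with it the tangent-space view vector (from the pixel toward the eye, as the shader computes it) and light vector. One thing to understand first: POM relies on a height map, which stores for each pixel its height above the bottom of the relief. How should we picture this? Suppose we apply POM to a flat plane. The plane itself sits at the maximum height, 1.0f, and each pixel's height is given within this unit range below it. Using a unit range makes it easy to scale the heights later. As shown in the figure: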
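A CPU-side C++ sketch of the world-to-tangent step (HLSL cannot run on its own): with an orthonormal basis, moving a vector into tangent space is just three dot products, which is what HLSL's `mul( mWorldToTangent, v )` computes when the matrix rows are T, B and N. The `Vec3` type and the function names are illustrative, not part of the DirectX SDK, and the sketch assumes T, B, N are unit length and mutually perpendicular, which interpolated and renormalized vectors only approximate.

```cpp
#include <cassert>
#include <cmath>

// Illustrative stand-in for HLSL's float3.
struct Vec3 { float x, y, z; };

float dot(const Vec3& a, const Vec3& b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

Vec3 normalize(const Vec3& v) {
    float len = std::sqrt(dot(v, v));
    assert(len > 0.0f);  // a zero vector has no direction
    return {v.x / len, v.y / len, v.z / len};
}

// World -> tangent space for an orthonormal basis (T, B, N):
// each component is the projection of v onto one basis vector,
// i.e. the matrix with rows T, B, N times v as a column vector.
Vec3 worldToTangent(const Vec3& T, const Vec3& B, const Vec3& N, const Vec3& v) {
    return {dot(T, v), dot(B, v), dot(N, v)};
}
```

For a floor whose normal is +y, a view vector pointing straight up lands on the tangent-space z axis, which is why the POM code can treat V.z as "height" and V.xy as a direction in the texture plane.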

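Once the height map makes sense, we can start from a given pixel and march along the view vector to find where it meets the height map, as shown in the figure.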

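How do we find that intersection? Starting from the given pixel position, we step along the view vector, sampling the height map at each step and comparing the sampled height with the height of the view ray at that point. The first time the ray's height drops below the sampled height, we know the ray crossed the height field between the previous sample and the current one. Joining the previous and current samples with a straight line and intersecting that line with the view ray then gives the intersection point. This is an approximation, but the result is already quite good. The process is shown in the figure below: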
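The search just described can be prototyped on the CPU. Below is a 1D C++ sketch (a single texture coordinate u instead of uv); `marchHeightField` and its parameters are hypothetical names, and the lambda height fields in the checks are toy data. It follows the same structure as the shader: fixed-size linear steps, then one linear-interpolation refinement between the last sample above the surface and the first sample below it.

```cpp
#include <cassert>
#include <functional>

// Hypothetical 1D version of the POM search. height(u) returns the height-map value
// in [0, 1]; the polygon sits at height 1; maxOffset is the texture-space shift for
// descending the full unit height (vParallaxOffsetTS in the shader).
float marchHeightField(const std::function<float(float)>& height,
                       float u0, float maxOffset, int numSteps) {
    assert(numSteps > 0);
    const float stepSize = 1.0f / numSteps;     // ray height lost per step
    const float uStep = maxOffset * stepSize;   // texture shift per step

    float prevU = u0, prevBound = 1.0f, prevHeight = height(u0);
    for (int i = 1; i <= numSteps; ++i) {
        float u = u0 - uStep * i;               // step against the view vector
        float bound = 1.0f - stepSize * i;      // ray height at this step
        float h = height(u);
        if (h > bound) {
            // The ray dropped below the height field between the previous and the
            // current sample: intersect it with the segment joining the two samples.
            float above = prevBound - prevHeight;   // ray above the surface (>= 0)
            float below = h - bound;                // ray below the surface (> 0)
            float t = above / (above + below);      // fraction from prev to current
            return prevU + (u - prevU) * t;
        }
        prevU = u;
        prevBound = bound;
        prevHeight = h;
    }
    return u0 - maxOffset;  // no crossing found: the ray reached the bottom
}
```

With a flat height field at 0.3, the ray meets the surface after descending 0.7 of the unit height, so the result is u0 - 0.7 * maxOffset. Because both the ray and a flat profile are straight lines, the refinement is exact in that case; on curved relief it is only the piecewise-linear approximation the text describes.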

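2. Next, apply soft shadowing at the intersection point just found. All soft shadowing really needs is a darkening factor: darkening the pixels that lie in shadow by it, across the whole surface, produces the corresponding soft shadows. The factor is computed heuristically. Starting from the intersection point, cast a ray toward the light and take a fixed number of height-map samples along it (seven offsets in the sample code below). Wherever a sample rises above the height the ray should have at that point, it yields a positive shadow factor. A factor is computed for every sample, and the largest one is used as the pixel's darkening factor.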

As shown in the figure:
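The heuristic can be tried out in isolation. The C++ sketch below reduces the sample shader's shadow computation to one texture axis; `softShadowFactor` and its parameters are illustrative names, `height` is a hypothetical lookup, and the seven offsets and weights are copied from the shader listed further down. Like the shader, it takes the maximum without clamping it at zero first.

```cpp
#include <algorithm>
#include <cassert>
#include <functional>

// 1D sketch of the sample shader's heuristic soft-shadow term. u is the displaced
// texture coordinate, lightRay the texture-space light direction (vLightTS.xy *
// g_fHeightMapScale in the shader, reduced to one axis), softening plays the role
// of g_fShadowSoftening.
float softShadowFactor(const std::function<float(float)>& height,
                       float u, float lightRay, float softening) {
    // Offsets along the light ray and their weights, copied from the shader.
    const float offsets[7] = {0.88f, 0.77f, 0.66f, 0.55f, 0.44f, 0.33f, 0.22f};
    const float weights[7] = {1.0f, 2.0f, 4.0f, 6.0f, 8.0f, 10.0f, 12.0f};

    const float h0 = height(u);
    float strongest = 0.0f;
    for (int k = 0; k < 7; ++k) {
        // Positive when the sample rises above h0 + offset, i.e. it blocks the light.
        float occ = (height(u + lightRay * offsets[k]) - h0 - offsets[k]) * weights[k] * softening;
        strongest = (k == 0) ? occ : std::max(strongest, occ);
    }
    // Same final remap as the shader; the value is not clamped to [0, 1].
    return (1.0f - strongest) * 0.6f + 0.4f;
}
```

On an open, flat region the factor comes out above 1 (1.528 for the flat test case), which is the over-brightening the shader's own comment mentions before its `* 0.6 + 0.4` adjustment; next to a tall wall in the light direction it drops sharply.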

That covers the theory behind POM. Now let's look at how it is actually implemented.

For the shader in the POM sample, I only discuss the key parts, and I have added my own comments at the tricky spots.

```hlsl
//--------------------------------------------------------------------------------------
// File: ParallaxOcclusionMapping.fx
//
// Parallax occlusion mapping implementation
//
// Implementation of the algorithm as described in "Dynamic Parallax Occlusion
// Mapping with Approximate Soft Shadows" paper, by N. Tatarchuk, ATI Research,
// to appear in the proceedings of ACM Symposium on Interactive 3D Graphics and Games, 2006.
//
// For examples of use in a real-time scene, see ATI X1K demo "ToyShop":
// http://www.ati.com/developer/demos/rx1800.html
//
// Copyright (c) ATI Research, Inc. All rights reserved.
//--------------------------------------------------------------------------------------

//--------------------------------------------------------------------------------------
// Global variables
//--------------------------------------------------------------------------------------
texture  g_baseTexture;              // Base color texture
texture  g_nmhTexture;               // Normal map and height map texture pair

float4   g_materialAmbientColor;     // Material's ambient color
float4   g_materialDiffuseColor;     // Material's diffuse color
float4   g_materialSpecularColor;    // Material's specular color

float    g_fSpecularExponent;        // Material's specular exponent
bool     g_bAddSpecular;             // Toggles rendering with specular or without

// Light parameters:
float3   g_LightDir;                 // Light's direction in world space
float4   g_LightDiffuse;             // Light's diffuse color
float4   g_LightAmbient;             // Light's ambient color

float4   g_vEye;                     // Camera's location
float    g_fBaseTextureRepeat;       // The tiling factor for base and normal map textures
float    g_fHeightMapScale;          // Describes the useful range of values for the height field

// Matrices:
float4x4 g_mWorld;                   // World matrix for object
float4x4 g_mWorldViewProjection;     // World * View * Projection matrix
float4x4 g_mView;                    // View matrix

bool     g_bVisualizeLOD;            // Toggles visualization of level of detail colors
bool     g_bVisualizeMipLevel;       // Toggles visualization of mip level
bool     g_bDisplayShadows;          // Toggles display of self-occlusion based shadows

float2   g_vTextureDims;             // Specifies texture dimensions for computation of mip level at
                                     // render time (width, height)

int      g_nLODThreshold;            // The mip level id for transitioning between the full computation
                                     // for parallax occlusion mapping and the bump mapping computation

float    g_fShadowSoftening;         // Blurring factor for the soft shadows computation

int      g_nMinSamples;              // The minimum number of samples for sampling the height field profile
int      g_nMaxSamples;              // The maximum number of samples for sampling the height field profile

//--------------------------------------------------------------------------------------
// Texture samplers
//--------------------------------------------------------------------------------------
sampler tBase =
sampler_state
{
    Texture = < g_baseTexture >;
    MipFilter = LINEAR;
    MinFilter = LINEAR;
    MagFilter = LINEAR;
};

sampler tNormalHeightMap =
sampler_state
{
    Texture = < g_nmhTexture >;
    MipFilter = LINEAR;
    MinFilter = LINEAR;
    MagFilter = LINEAR;
};
```

```hlsl
//--------------------------------------------------------------------------------------
// Vertex shader output structure
//--------------------------------------------------------------------------------------
struct VS_OUTPUT
{
    float4 position          : POSITION;
    float2 texCoord          : TEXCOORD0;
    float3 vLightTS          : TEXCOORD1;   // light vector in tangent space, denormalized
    float3 vViewTS           : TEXCOORD2;   // view vector in tangent space, denormalized
    float2 vParallaxOffsetTS : TEXCOORD3;   // Parallax offset vector in tangent space
    float3 vNormalWS         : TEXCOORD4;   // Normal vector in world space
    float3 vViewWS           : TEXCOORD5;   // View vector in world space
};

//--------------------------------------------------------------------------------------
// This shader computes standard transform and lighting
//--------------------------------------------------------------------------------------
VS_OUTPUT RenderSceneVS( float4 inPositionOS  : POSITION,
                         float2 inTexCoord    : TEXCOORD0,
                         float3 vInNormalOS   : NORMAL,
                         float3 vInBinormalOS : BINORMAL,
                         float3 vInTangentOS  : TANGENT )
{
    VS_OUTPUT Out;

    // Transform and output input position
    Out.position = mul( inPositionOS, g_mWorldViewProjection );

    // Propagate texture coordinate through:
    // Some normal maps are small, so they are tiled across the mesh.
    Out.texCoord = inTexCoord * g_fBaseTextureRepeat;

    // Transform the normal, tangent and binormal vectors from object space to world space:
    float3 vNormalWS   = mul( vInNormalOS,   (float3x3) g_mWorld );
    float3 vTangentWS  = mul( vInTangentOS,  (float3x3) g_mWorld );
    float3 vBinormalWS = mul( vInBinormalOS, (float3x3) g_mWorld );

    // Propagate the world space vertex normal through:
    Out.vNormalWS = vNormalWS;

    vNormalWS   = normalize( vNormalWS );
    vTangentWS  = normalize( vTangentWS );
    vBinormalWS = normalize( vBinormalWS );

    // Compute position in world space:
    float4 vPositionWS = mul( inPositionOS, g_mWorld );

    // Compute and output the world view vector (unnormalized):
    float3 vViewWS = g_vEye - vPositionWS;
    Out.vViewWS = vViewWS;

    // Compute denormalized light vector in world space:
    float3 vLightWS = g_LightDir;

    // Normalize the light and view vectors and transform it to the tangent space:
    //float3x3 mWorldToTangent = float3x3( vTangentWS, vBinormalWS, vNormalWS );
    float3x3 mWorldToTangent =
    {
        vTangentWS.x,  vTangentWS.y,  vTangentWS.z,
        vBinormalWS.x, vBinormalWS.y, vBinormalWS.z,
        vNormalWS.x,   vNormalWS.y,   vNormalWS.z
    };

    // Propagate the view and the light vectors (in tangent space):
    // This looks odd; normally we would write mul( mWorldToTangent, vLightWS ).
    // mul( v, M ) treats v as a row vector, so with T, B, N as the rows it returns
    // v.x*T + v.y*B + v.z*N, i.e. it applies the transpose (tangent to world).
    // The view vector below uses mul( M, v ) = ( dot(T,v), dot(B,v), dot(N,v) ),
    // the usual world-to-tangent form.
    Out.vLightTS = mul( vLightWS, mWorldToTangent );
    Out.vViewTS  = mul( mWorldToTangent, vViewWS );

    // Compute the ray direction for intersecting the height field profile with
    // current view ray. See the above paper for derivation of this computation.

    // Compute initial parallax displacement direction:
    float2 vParallaxDirection = normalize( Out.vViewTS.xy );

    // The length of this vector determines the furthest amount of displacement:
    float fLength = length( Out.vViewTS );

    // sqrt( fLength^2 - z^2 ) / z = |xy| / z: the view vector is rescaled so that it
    // spans exactly the unit height range (height 1). Only xy is scaled; z is no
    // longer needed because the height is already fixed at 1.
    float fParallaxLength = sqrt( fLength * fLength - Out.vViewTS.z * Out.vViewTS.z ) / Out.vViewTS.z;

    // Compute the actual reverse parallax displacement vector:
    Out.vParallaxOffsetTS = vParallaxDirection * fParallaxLength;

    // Need to scale the amount of displacement to account for different height ranges
    // in height maps. This is controlled by an artist-editable parameter:
    // Scaling the height map shows up as scaling the view vector's xy. Since what we
    // finally see is the lighting result, enlarging the height range and scaling the
    // view vector's xy amount to the same thing. (My own interpretation; corrections
    // are welcome.)
    Out.vParallaxOffsetTS *= g_fHeightMapScale;

    return Out;
}
```
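Because sqrt(|V|² − V.z²) is simply |V.xy|, the offset produced at the end of RenderSceneVS equals V.xy / V.z scaled by g_fHeightMapScale: the texture-space distance a view ray travels while descending one unit of height. The C++ sketch below (CPU-side, illustrative function names) checks that the shader's form and the closed form agree. The zero-length guard is my addition; the shader calls normalize on V.xy directly, which is undefined when the view is exactly along the normal.

```cpp
#include <cassert>
#include <cmath>

struct Vec2 { float x, y; };

// The offset as RenderSceneVS computes it: normalize(V.xy) * sqrt(|V|^2 - V.z^2) / V.z,
// then scaled by the height-map scale. V is the tangent-space view vector, V.z > 0.
Vec2 parallaxOffsetAsInSample(float vx, float vy, float vz, float heightScale) {
    assert(vz > 0.0f);
    float xyLen = std::sqrt(vx * vx + vy * vy);
    if (xyLen == 0.0f) return {0.0f, 0.0f};  // added guard: normalize((0,0)) is undefined
    float len = std::sqrt(vx * vx + vy * vy + vz * vz);
    float parallaxLength = std::sqrt(len * len - vz * vz) / vz;
    return {vx / xyLen * parallaxLength * heightScale,
            vy / xyLen * parallaxLength * heightScale};
}

// The same vector in closed form: since sqrt(|V|^2 - V.z^2) == |V.xy|,
// the offset reduces to V.xy / V.z * heightScale.
Vec2 parallaxOffsetClosedForm(float vx, float vy, float vz, float heightScale) {
    assert(vz > 0.0f);
    return {vx / vz * heightScale, vy / vz * heightScale};
}
```

The closed form also makes the author's remark about g_fHeightMapScale concrete: multiplying V.xy / V.z by the scale is the same as stretching the unit height range by that factor.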

```hlsl
//--------------------------------------------------------------------------------------
// Pixel shader output structure
//--------------------------------------------------------------------------------------
struct PS_OUTPUT
{
    float4 RGBColor : COLOR0;  // Pixel color
};

struct PS_INPUT
{
    float2 texCoord          : TEXCOORD0;
    float3 vLightTS          : TEXCOORD1;   // light vector in tangent space, denormalized
    float3 vViewTS           : TEXCOORD2;   // view vector in tangent space, denormalized
    float2 vParallaxOffsetTS : TEXCOORD3;   // Parallax offset vector in tangent space
    float3 vNormalWS         : TEXCOORD4;   // Normal vector in world space
    float3 vViewWS           : TEXCOORD5;   // View vector in world space
};

//--------------------------------------------------------------------------------------
// Function:    ComputeIllumination
//
// Description: Computes phong illumination for the given pixel using its attribute
//              textures and a light vector.
//--------------------------------------------------------------------------------------
float4 ComputeIllumination( float2 texCoord, float3 vLightTS, float3 vViewTS, float fOcclusionShadow )
{
    // Sample the normal from the normal map for the given texture sample.
    // .xyz: the alpha channel holds the height and must not take part in the
    // normalization (the original line normalizes the whole float4).
    float3 vNormalTS = normalize( tex2D( tNormalHeightMap, texCoord ).xyz * 2 - 1 );

    // Sample base map:
    float4 cBaseColor = tex2D( tBase, texCoord );

    // Compute diffuse color component:
    // Flipping y or not makes no fundamental difference to POM here; flipping it only
    // makes the result somewhat brighter. I have not found the exact reason yet; if
    // you know, please leave a comment.
    float3 vLightTSAdj = float3( vLightTS.x, -vLightTS.y, vLightTS.z );

    float4 cDiffuse = saturate( dot( vNormalTS, vLightTSAdj )) * g_materialDiffuseColor;

    // Compute the specular component if desired:
    float4 cSpecular = 0;
    if ( g_bAddSpecular )
    {
        float3 vReflectionTS = normalize( 2 * dot( vViewTS, vNormalTS ) * vNormalTS - vViewTS );

        float fRdotL = saturate( dot( vReflectionTS, vLightTSAdj ));
        cSpecular = saturate( pow( fRdotL, g_fSpecularExponent )) * g_materialSpecularColor;
    }

    // Composite the final color:
    float4 cFinalColor = (( g_materialAmbientColor + cDiffuse ) * cBaseColor + cSpecular ) * fOcclusionShadow;
    //float4 cFinalColor = cBaseColor * cDiffuse * fOcclusionShadow;

    return cFinalColor;
}
```
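The lighting in ComputeIllumination is ordinary Phong shading done in tangent space. The scalar C++ sketch below isolates only the diffuse and specular terms so the reflection formula can be checked by hand; it leaves out colors, the ambient term and the y flip of the light vector, assumes L points from the surface toward the light (as the shader's `dot( vNormalTS, vLightTSAdj )` implies), and `phongTerms` is an illustrative name.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

struct Vec3 { float x, y, z; };

static float dot3(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
static float saturate(float v) { return std::min(std::max(v, 0.0f), 1.0f); }

struct PhongTerms { float diffuse, specular; };

// Scalar version of the diffuse and specular terms in ComputeIllumination.
// N, L, V are unit vectors; L points toward the light and V toward the eye.
PhongTerms phongTerms(Vec3 N, Vec3 L, Vec3 V, float exponent) {
    float diffuse = saturate(dot3(N, L));

    // Reflect V about N: R = 2 (V . N) N - V, then normalize as the shader does.
    float vn2 = 2.0f * dot3(V, N);
    Vec3 R{vn2 * N.x - V.x, vn2 * N.y - V.y, vn2 * N.z - V.z};
    float len = std::sqrt(dot3(R, R));
    R = {R.x / len, R.y / len, R.z / len};

    float specular = saturate(std::pow(saturate(dot3(R, L)), exponent));
    return {diffuse, specular};
}
```

When the eye sits at the mirror direction of the light, R coincides with L and the specular term reaches 1; moving the eye to the same side as the light drops R . L to 0.28 in the second check.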

```hlsl
//--------------------------------------------------------------------------------------
// Parallax occlusion mapping pixel shader
//
// Note: this shader contains several educational modes that would not be in the final
//       game or other complicated scene rendering. The blocks of code in various "if"
//       statements for turning off visual qualities (such as visual level of detail
//       or specular or shadows, etc), can be handled differently, and more optimally.
//       It is implemented here purely for educational purposes.
//--------------------------------------------------------------------------------------
float4 RenderScenePS( PS_INPUT i ) : COLOR0
{
    // Normalize the interpolated vectors:
    float3 vViewTS   = normalize( i.vViewTS );
    float3 vViewWS   = normalize( i.vViewWS );
    float3 vLightTS  = normalize( i.vLightTS );
    float3 vNormalWS = normalize( i.vNormalWS );

    float4 cResultColor = float4( 0, 0, 0, 1 );

    // Adaptive in-shader level-of-detail system implementation. Compute the
    // current mip level explicitly in the pixel shader and use this information
    // to transition between different levels of detail from the full effect to
    // simple bump mapping. See the above paper for more discussion of the approach
    // and its benefits.

    // Compute the current gradients:
    // Texture coordinates in texel units (e.g. 124, 256, ...).
    float2 fTexCoordsPerSize = i.texCoord * g_vTextureDims;

    // Compute all 4 derivatives in x and y in a single instruction to optimize:
    float2 dxSize, dySize;
    float2 dx, dy;

    // ddx gives how much u and v change when moving one pixel along the screen x axis,
    // i.e. how many texels in u and v one screen pixel to the left or right spans.
    // ddy does the same along screen y.
    float4( dxSize, dx ) = ddx( float4( fTexCoordsPerSize, i.texCoord ) );
    float4( dySize, dy ) = ddy( float4( fTexCoordsPerSize, i.texCoord ) );

    float  fMipLevel;
    float  fMipLevelInt;    // mip level integer portion
    float  fMipLevelFrac;   // mip level fractional amount for blending in between levels

    float  fMinTexCoordDelta;
    float2 dTexCoords;

    // Find min of change in u and v across quad: compute du and dv magnitude across quad
    // Squared texel footprint of one screen pixel in u and in v (the square is undone
    // by the 0.5 factor in front of log2 below).
    dTexCoords = dxSize * dxSize + dySize * dySize;

    // Standard mipmapping uses max here
    // Keep whichever of u and v changes more per screen pixel.
    fMinTexCoordDelta = max( dTexCoords.x, dTexCoords.y );

    // Compute the current mip level  (* 0.5 is effectively computing a square root before )
    // If one screen pixel spans 4 texels, the result is 2 (0.5 * log2(16)).
    fMipLevel = max( 0.5 * log2( fMinTexCoordDelta ), 0 );

    // Start the current sample located at the input texture coordinate, which would correspond
    // to computing a bump mapping result:
    float2 texSample = i.texCoord;

    // Multiplier for visualizing the level of detail (see notes for 'nLODThreshold' variable
    // for how that is done visually)
    float4 cLODColoring = float4( 1, 1, 3, 1 );

    float fOcclusionShadow = 1.0;

    if ( fMipLevel <= (float) g_nLODThreshold )
    {
        //===============================================//
        // Parallax occlusion mapping offset computation //
        //===============================================//

        // Utilize dynamic flow control to change the number of samples per ray
        // depending on the viewing angle for the surface. Oblique angles require
        // smaller step sizes to achieve more accurate precision for computing displacement.
        // We express the sampling rate as a linear function of the angle between
        // the geometric normal and the view direction ray:
        // Looking straight down (dot = 1) gives g_nMinSamples; the more oblique the
        // view, the more samples are taken, for better precision.
        int nNumSteps = (int) lerp( g_nMaxSamples, g_nMinSamples, dot( vViewWS, vNormalWS ) );

        // Intersect the view ray with the height field profile along the direction of
        // the parallax offset ray (computed in the vertex shader. Note that the code is
        // designed specifically to take advantage of the dynamic flow control constructs
        // in HLSL and is very sensitive to specific syntax. When converting to other examples,
        // if still want to use dynamic flow control in the resulting assembly shader,
        // care must be applied.
        //
        // In the below steps we approximate the height field profile as piecewise linear
        // curve. We find the pair of endpoints between which the intersection between the
        // height field profile and the view ray is found and then compute line segment
        // intersection for the view ray and the line segment formed by the two endpoints.
        // This intersection is the displacement offset from the original texture coordinate.
        // See the above paper for more details about the process and derivation.
        //

        float fCurrHeight = 0.0;
        float fStepSize   = 1.0 / (float) nNumSteps;
        float fPrevHeight = 1.0;
        float fNextHeight = 0.0;

        int   nStepIndex  = 0;
        bool  bCondition  = true;

        float2 vTexOffsetPerStep = fStepSize * i.vParallaxOffsetTS;
        float2 vTexCurrentOffset = i.texCoord;
        float  fCurrentBound     = 1.0;
        float  fParallaxAmount   = 0.0;

        float2 pt1 = 0;
        float2 pt2 = 0;

        float2 texOffset2 = 0;

        while ( nStepIndex < nNumSteps )
        {
            // The view vector points from the pixel toward the eye, so we step against it.
            vTexCurrentOffset -= vTexOffsetPerStep;

            // Sample height map which in this case is stored in the alpha channel of the normal map:
            fCurrHeight = tex2Dgrad( tNormalHeightMap, vTexCurrentOffset, dx, dy ).a;

            fCurrentBound -= fStepSize;

            if ( fCurrHeight > fCurrentBound )
            {
                pt1 = float2( fCurrentBound, fCurrHeight );
                pt2 = float2( fCurrentBound + fStepSize, fPrevHeight );

                texOffset2 = vTexCurrentOffset - vTexOffsetPerStep;

                nStepIndex = nNumSteps + 1;
                fPrevHeight = fCurrHeight;
            }
            else
            {
                nStepIndex++;
                fPrevHeight = fCurrHeight;
            }
        }

        float fDelta2 = pt2.x - pt2.y;
        float fDelta1 = pt1.x - pt1.y;

        float fDenominator = fDelta2 - fDelta1;   // = pt2.x - pt1.x + pt1.y - pt2.y

        // SM 3.0 requires a check for divide by zero, since that operation will generate
        // an 'Inf' number instead of 0, as previous models (conveniently) did:
        if ( fDenominator == 0.0f )
        {
            fParallaxAmount = 0.0f;
        }
        else
        {
            // Height of the view ray at the intersection, found by linear interpolation
            // between pt2 (ray above the surface) and pt1 (ray below it). I did not
            // understand this formula at first, but my own similar-triangles derivation
            // gives the same result and reads more easily to me; my version of this
            // part is posted at the end of the article.
            fParallaxAmount = ( pt1.x * fDelta2 - pt2.x * fDelta1 ) / fDenominator;
        }

        float2 vParallaxOffset = i.vParallaxOffsetTS * ( 1 - fParallaxAmount );

        // The computed texture offset for the displaced point on the pseudo-extruded surface:
        float2 texSampleBase = i.texCoord - vParallaxOffset;
        texSample = texSampleBase;

        // Lerp to bump mapping only if we are in between, transition section:
        cLODColoring = float4( 1, 1, 1, 1 );

        if ( fMipLevel > (float)(g_nLODThreshold - 1) )
        {
            // Lerp based on the fractional part:
            fMipLevelFrac = modf( fMipLevel, fMipLevelInt );

            if ( g_bVisualizeLOD )
            {
                // For visualizing: lerping from regular POM-resulted color through blue color for transition layer:
                cLODColoring = float4( 1, 1, max( 1, 2 * fMipLevelFrac ), 1 );
            }

            // Lerp the texture coordinate from parallax occlusion mapped coordinate to bump mapping
            // smoothly based on the current mip level:
            texSample = lerp( texSampleBase, i.texCoord, fMipLevelFrac );
        }

        if ( g_bDisplayShadows == true )
        {
            // This is the heuristic soft-shadow evaluation described earlier. The constants
            // (sample offsets and weights) look hand-tuned to me; feel free to experiment
            // with them.
            float2 vLightRayTS = vLightTS.xy * g_fHeightMapScale;

            // Compute the soft blurry shadows taking into account self-occlusion for
            // features of the height field:
            float sh0 =  tex2Dgrad( tNormalHeightMap, texSampleBase, dx, dy ).a;
            float shA = (tex2Dgrad( tNormalHeightMap, texSampleBase + vLightRayTS * 0.88, dx, dy ).a - sh0 - 0.88 ) *  1 * g_fShadowSoftening;
            float sh9 = (tex2Dgrad( tNormalHeightMap, texSampleBase + vLightRayTS * 0.77, dx, dy ).a - sh0 - 0.77 ) *  2 * g_fShadowSoftening;
            float sh8 = (tex2Dgrad( tNormalHeightMap, texSampleBase + vLightRayTS * 0.66, dx, dy ).a - sh0 - 0.66 ) *  4 * g_fShadowSoftening;
            float sh7 = (tex2Dgrad( tNormalHeightMap, texSampleBase + vLightRayTS * 0.55, dx, dy ).a - sh0 - 0.55 ) *  6 * g_fShadowSoftening;
            float sh6 = (tex2Dgrad( tNormalHeightMap, texSampleBase + vLightRayTS * 0.44, dx, dy ).a - sh0 - 0.44 ) *  8 * g_fShadowSoftening;
            float sh5 = (tex2Dgrad( tNormalHeightMap, texSampleBase + vLightRayTS * 0.33, dx, dy ).a - sh0 - 0.33 ) * 10 * g_fShadowSoftening;
            float sh4 = (tex2Dgrad( tNormalHeightMap, texSampleBase + vLightRayTS * 0.22, dx, dy ).a - sh0 - 0.22 ) * 12 * g_fShadowSoftening;

            // Compute the actual shadow strength:
            fOcclusionShadow = 1 - max( max( max( max( max( max( shA, sh9 ), sh8 ), sh7 ), sh6 ), sh5 ), sh4 );

            // The previous computation overbrightens the image, let's adjust for that:
            fOcclusionShadow = fOcclusionShadow * 0.6 + 0.4;
        }
    }

    //fOcclusionShadow = 1;

    // Compute resulting color for the pixel:
    cResultColor = ComputeIllumination( texSample, vLightTS, vViewTS, fOcclusionShadow );

    if ( g_bVisualizeLOD )
    {
        cResultColor *= cLODColoring;
    }

    // Visualize currently computed mip level, tinting the color blue if we are in
    // the region outside of the threshold level:
    if ( g_bVisualizeMipLevel )
    {
        cResultColor = fMipLevel.xxxx;
    }

    // If using HDR rendering, make sure to tonemap the result color prior to outputting it.
    // But since this example isn't doing that, we just output the computed result color here:
    return cResultColor;
}
```
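The mip-level estimate at the top of RenderScenePS is easy to reproduce on the CPU. The C++ sketch below (illustrative name; its four arguments stand for the texel-space derivatives the shader gets from ddx/ddy, i.e. dxSize and dySize) confirms the note that one screen pixel spanning 4 texels yields level 2.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Mirrors the mip-level estimate in RenderScenePS. The arguments are the screen-space
// derivatives of the texel-space coordinate (texCoord * g_vTextureDims).
float estimateMipLevel(float dudx, float dvdx, float dudy, float dvdy) {
    float du2 = dudx * dudx + dudy * dudy;   // dTexCoords.x
    float dv2 = dvdx * dvdx + dvdy * dvdy;   // dTexCoords.y
    float maxDelta = std::max(du2, dv2);     // fMinTexCoordDelta, despite the name
    // 0.5 * log2(x) == log2(sqrt(x)); negative levels (magnification) clamp to 0.
    return std::max(0.5f * std::log2(maxDelta), 0.0f);
}
```

The shader then runs the full POM path while this value is at most g_nLODThreshold and blends toward plain bump mapping using its fractional part in the last level before the threshold.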

```hlsl
//--------------------------------------------------------------------------------------
// Bump mapping shader
//--------------------------------------------------------------------------------------
float4 RenderSceneBumpMapPS( PS_INPUT i ) : COLOR0
{
    // Normalize the interpolated vectors:
    float3 vViewTS  = normalize( i.vViewTS );
    float3 vLightTS = normalize( i.vLightTS );

    float4 cResultColor = float4( 0, 0, 0, 1 );

    // Start the current sample located at the input texture coordinate, which would correspond
    // to computing a bump mapping result:
    float2 texSample = i.texCoord;

    // Compute resulting color for the pixel:
    cResultColor = ComputeIllumination( texSample, vLightTS, vViewTS, 1.0f );

    // If using HDR rendering, make sure to tonemap the result color prior to outputting it.
    // But since this example isn't doing that, we just output the computed result color here:
    return cResultColor;
}

//--------------------------------------------------------------------------------------
// Apply parallax mapping with offset limiting technique to the current pixel
//--------------------------------------------------------------------------------------
float4 RenderSceneParallaxMappingPS( PS_INPUT i ) : COLOR0
{
    const float sfHeightBias = 0.01;

    // Normalize the interpolated vectors:
    float3 vViewTS  = normalize( i.vViewTS );
    float3 vLightTS = normalize( i.vLightTS );

    // Sample the height map at the current texture coordinate:
    float fCurrentHeight = tex2D( tNormalHeightMap, i.texCoord ).a;

    // Scale and bias this height map value:
    float fHeight = fCurrentHeight * g_fHeightMapScale + sfHeightBias;

    // Perform offset limiting if desired:
    // Note: as I understand it, offset limiting is the variant that leaves out this
    // division by vViewTS.z; with the division this is plain parallax mapping.
    fHeight /= vViewTS.z;

    // Compute the offset vector for approximating parallax:
    // Unlike POM, which subtracts the view vector, this adds it. It is worth working
    // out why yourself; it took me quite a while to see it.
    float2 texSample = i.texCoord + vViewTS.xy * fHeight;

    float4 cResultColor = float4( 0, 0, 0, 1 );

    // Compute resulting color for the pixel:
    cResultColor = ComputeIllumination( texSample, vLightTS, vViewTS, 1.0f );

    // If using HDR rendering, make sure to tonemap the result color prior to outputting it.
    // But since this example isn't doing that, we just output the computed result color here:
    return cResultColor;
}
```
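Why a plus sign here but a minus sign in POM: this parallax-mapping shader treats the height as lifting the visible point above the polygon, and a point higher up along the view ray lies toward the eye, so the lookup moves along +V.xy. In POM the polygon is the top of the volume (height 1) and the ray continues below it, away from the eye, hence -V.xy. The C++ sketch below mirrors the pixel shader's offset; the function name is illustrative and the default bias matches sfHeightBias.

```cpp
#include <cassert>

struct Vec2 { float x, y; };

// CPU mirror of RenderSceneParallaxMappingPS's offset: uv + V.xy * (h * scale + bias) / V.z.
// V is the normalized tangent-space view vector (pixel -> eye), h the height-map value.
Vec2 parallaxMappingUV(Vec2 uv, float vx, float vy, float vz, float h,
                       float heightScale, float heightBias = 0.01f) {
    assert(vz > 0.0f);
    float fHeight = (h * heightScale + heightBias) / vz;
    return {uv.x + vx * fHeight, uv.y + vy * fHeight};  // '+': shifted toward the eye
}
```

For a view vector tilted toward +x, a raised texel is looked up further along +x, and a view straight down the normal leaves the coordinate unchanged.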

```hlsl
//--------------------------------------------------------------------------------------
// Renders scene to render target
//--------------------------------------------------------------------------------------
technique RenderSceneWithPOM
{
    pass P0
    {
        VertexShader = compile vs_3_0 RenderSceneVS();
        PixelShader  = compile ps_3_0 RenderScenePS();
        //FillMode = WIREFRAME;
    }
}

technique RenderSceneWithBumpMap
{
    pass P0
    {
        VertexShader = compile vs_2_0 RenderSceneVS();
        PixelShader  = compile ps_2_0 RenderSceneBumpMapPS();
    }
}

technique RenderSceneWithPM
{
    pass P0
    {
        VertexShader = compile vs_2_0 RenderSceneVS();
        PixelShader  = compile ps_2_0 RenderSceneParallaxMappingPS();
    }
}
```

My version of the intersection search:

```hlsl
float  fNumSamples    = lerp( MAX_SAMPLES, MIN_SAMPLES, dot( iNormalWS, iViewWS ) );
float  fStepSize      = 1.0f / fNumSamples;
float2 fStepDisplace  = fStepSize * iDisplaceTS;
float  fCurrentSample = 0;
float  fCurrentBound  = 1.0f;
float  fCurrentHeight = 0;
float  fPrevHeight    = tex2Dgrad( g_samNMH, iTex, ddxSmall, ddySmall ).a;
float  fPrevBound     = 1.0f;

while ( fCurrentSample < fNumSamples )
{
    iTex -= fStepDisplace;
    fCurrentBound -= fStepSize;
    fCurrentHeight = tex2Dgrad( g_samNMH, iTex, ddxSmall, ddySmall ).a;

    if ( fCurrentBound < fCurrentHeight )
    {
        fCurrentSample = fNumSamples + 1;   // intersection found: leave the loop
    }
    else
    {
        fPrevHeight = fCurrentHeight;
        fPrevBound  = fCurrentBound;
        fCurrentSample++;
    }
}

// Similar triangles: the ray is fHeightDelta1 above the surface at the previous
// sample and fHeightDelta2 below it at the current one.
float fHeightDelta1 = fPrevBound - fPrevHeight;
float fHeightDelta2 = fCurrentHeight - fCurrentBound;
float fDenominator  = fHeightDelta1 + fHeightDelta2;

// Guard against 0/0 when no intersection was found (same idea as the SDK's check).
float fIntersectionStepFraction = 0.0f;
if ( fDenominator != 0.0f )
{
    fIntersectionStepFraction = fHeightDelta2 / fDenominator;
}

iTex += fIntersectionStepFraction * fStepDisplace;   // iTex is now the texture coordinate at the intersection
```
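The claim that the two interpolation formulas agree can be checked directly. In the SDK version, fParallaxAmount is the ray height at the intersection; in the author's version, the result is the fraction of one step to walk back from the current sample. Assuming iDisplaceTS plays the role of vParallaxOffsetTS, the SDK samples at texCoord - offset * (1 - fParallaxAmount) and the author's loop ends at texCoord - offset * (1 - currBound - fraction * step), so the two coincide exactly when fParallaxAmount = currBound + fraction * step. The C++ sketch below (function names are mine) evaluates both on the same pair of samples.

```cpp
#include <cassert>

// fParallaxAmount from the SDK shader: pt1 = (bound1, h1) is the first sample with the
// ray below the height field, pt2 = (bound2, h2) the sample before it.
// Returns the ray height at the intersection.
float sdkParallaxAmount(float bound1, float h1, float bound2, float h2) {
    float delta2 = bound2 - h2;
    float delta1 = bound1 - h1;
    float denominator = delta2 - delta1;
    if (denominator == 0.0f) return 0.0f;
    return (bound1 * delta2 - bound2 * delta1) / denominator;
}

// The author's similar-triangles fraction: how far to step back from the current
// sample toward the previous one.
float authorStepFraction(float prevBound, float prevHeight, float currBound, float currHeight) {
    float heightDelta1 = prevBound - prevHeight;   // ray above the surface
    float heightDelta2 = currHeight - currBound;   // ray below the surface
    float denominator = heightDelta1 + heightDelta2;
    return denominator == 0.0f ? 0.0f : heightDelta2 / denominator;
}

// Converts the author's fraction into a ray height: currBound + fraction * stepSize.
float authorRayHeight(float prevBound, float prevHeight, float currBound, float currHeight) {
    float stepSize = prevBound - currBound;
    return currBound + authorStepFraction(prevBound, prevHeight, currBound, currHeight) * stepSize;
}
```

With the ray at 0.75 over a height of 0.5 and then at 0.5 under a height of 0.6, both give a ray height of 0.2 / 0.35 ≈ 0.5714, so both shaders end up sampling the same texture coordinate.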

One last word: no amount of reading teaches as much as rewriting the code yourself. Keep at it!
