Preface

This post walks through the formulas in the paper Notes on Convolutional Neural Networks. The derivations follow http://blog.csdn.net/lu597203933/article/details/46575871, and the code is borrowed from https://github.com/BigPeng/JavaCNN.

CNN

Each CNN layer outputs several feature maps, and each feature map is made up of neurons: a feature map of shape m×n contains m×n neurons.

Convolutional layer

Convolution

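Let the current layer l be a convolutional layer and the next layer l+1 a subsampling layer.

The j-th output feature map of convolutional layer l is then:

$$x_j^l = f\left(\sum_{i \in M_j} x_i^{l-1} * k_{ij}^l + b_j^l\right)$$

where $*$ denotes convolution, $M_j$ is the set of input maps combined into output map $j$, $k_{ij}^l$ is the kernel applied to input map $i$, and $b_j^l$ is the bias of output map $j$.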
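A minimal Java sketch of this forward step for a single output map $j$. It assumes "valid" convolution with the kernel flipped (a true convolution, not a correlation), a sigmoid $f$, and $M_j$ given as an array of input-map indices. The class and method names (`ConvForwardSketch`, `convValid`, `outputMap`) and the sample kernel are hypothetical, not taken from JavaCNN.

```java
import java.util.Arrays;

// Hypothetical sketch of x_j^l = f(sum_{i in M_j} x_i^{l-1} * k_ij^l + b_j^l).
// Assumptions: "valid" 2-D convolution (kernel flipped), sigmoid f.
class ConvForwardSketch {

    static double sigmoid(double x) {
        return 1.0 / (1.0 + Math.exp(-x));
    }

    // "Valid" convolution: the kernel is flipped and the output shrinks
    // to (m - km + 1) x (n - kn + 1).
    static double[][] convValid(double[][] in, double[][] k) {
        int m = in.length, n = in[0].length;
        int km = k.length, kn = k[0].length;
        double[][] out = new double[m - km + 1][n - kn + 1];
        for (int r = 0; r < out.length; r++)
            for (int c = 0; c < out[0].length; c++) {
                double s = 0.0;
                for (int a = 0; a < km; a++)
                    for (int b = 0; b < kn; b++)
                        s += in[r + a][c + b] * k[km - 1 - a][kn - 1 - b];
                out[r][c] = s;
            }
        return out;
    }

    // One output map: sum the convolutions over the inputs in M_j
    // (kernels[t] belongs to input prev[mj[t]]), add the bias, apply f.
    static double[][] outputMap(double[][][] prev, int[] mj, double[][][] kernels, double bias) {
        if (mj.length == 0) throw new IllegalArgumentException("M_j must be non-empty");
        double[][] acc = null;
        for (int t = 0; t < mj.length; t++) {
            double[][] conv = convValid(prev[mj[t]], kernels[t]);
            if (acc == null) {
                acc = conv;
            } else {
                for (int r = 0; r < conv.length; r++)
                    for (int q = 0; q < conv[0].length; q++)
                        acc[r][q] += conv[r][q];
            }
        }
        for (double[] row : acc)
            for (int q = 0; q < row.length; q++)
                row[q] = sigmoid(row[q] + bias);
        return acc;
    }

    public static void main(String[] args) {
        double[][] x = {{1, 2, 3}, {4, 5, 6}, {7, 8, 9}};
        double[][] k = {{1, 0}, {0, -1}}; // hypothetical 2x2 kernel
        System.out.println(Arrays.deepToString(convValid(x, k)));
        double[][] y = outputMap(new double[][][]{x}, new int[]{0}, new double[][][]{k}, 0.0);
        System.out.println(Arrays.deepToString(y));
    }
}
```

With the flipped kernel every entry of `convValid(x, k)` is 4.0; an unflipped correlation would give -4.0 instead, which is a quick way to tell the two apart.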

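Residual computation

As before, let the current layer l be a convolutional layer and the next layer l+1 a subsampling layer.

The residual of the j-th feature map of layer l is:

$$\delta_j^l = \beta_j^{l+1}\left(f'(\mu_j^l) \circ \mathrm{up}(\delta_j^{l+1})\right) \qquad (1)$$

Here $\circ$ is the elementwise (Hadamard) product, $\mu_j^l$ is the map's pre-activation input (so its output is $f(\mu_j^l)$), $\mathrm{up}(\cdot)$ upsamples the subsampling layer's residual back to the size of the convolutional map by repeating each entry over its pooling block, and $\beta_j^{l+1}$ is the subsampling layer's multiplicative weight.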
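Formula (1) can be sketched in Java as below. It assumes sigmoid activations, so $f'(\mu)$ is computed from the stored outputs $a = f(\mu)$ as $a(1-a)$ (formula (3)), and non-overlapping pooling blocks of size sx × sy. The names `ConvDeltaSketch`, `up`, and `convDelta` are hypothetical. `up` repeats each residual over its pooling block, which is the same as a Kronecker product with an sx × sy block of ones.

```java
import java.util.Arrays;

// Hypothetical sketch of formula (1):
//   delta^l_j = beta^{l+1}_j * ( f'(mu^l_j) o up(delta^{l+1}_j) )
// Assumption: f is the sigmoid, so f'(mu) = a * (1 - a) with a = f(mu).
class ConvDeltaSketch {

    // up(): Kronecker product of d with an sx-by-sy matrix of ones.
    static double[][] up(double[][] d, int sx, int sy) {
        double[][] out = new double[d.length * sx][d[0].length * sy];
        for (int r = 0; r < out.length; r++)
            for (int c = 0; c < out[0].length; c++)
                out[r][c] = d[r / sx][c / sy];
        return out;
    }

    // Elementwise beta * a(1 - a) * up(nextDelta); a holds the conv outputs f(mu).
    static double[][] convDelta(double[][] a, double[][] nextDelta, double beta, int sx, int sy) {
        double[][] upDelta = up(nextDelta, sx, sy);
        if (upDelta.length != a.length || upDelta[0].length != a[0].length)
            throw new IllegalArgumentException("up(nextDelta) must match the conv map size");
        double[][] delta = new double[a.length][a[0].length];
        for (int r = 0; r < a.length; r++)
            for (int c = 0; c < a[0].length; c++)
                delta[r][c] = beta * a[r][c] * (1.0 - a[r][c]) * upDelta[r][c];
        return delta;
    }

    public static void main(String[] args) {
        double[][] next = {{0.5, 0.6}, {0.7, 0.8}}; // hypothetical subsampling residuals
        System.out.println(Arrays.deepToString(up(next, 2, 2)));
        double[][] a = new double[4][4];
        for (double[] row : a) Arrays.fill(row, 0.5); // hypothetical activations
        System.out.println(Arrays.deepToString(convDelta(a, next, 1.0, 2, 2)));
    }
}
```

Working on whole matrices keeps the code a direct transcription of (1); the per-neuron view used later in the text is the same computation, one entry at a time.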

where

$$f(x) = \frac{1}{1 + e^{-x}} \qquad (2)$$

is the sigmoid, whose derivative is

$$f'(x) = f(x)\,\bigl(1 - f(x)\bigr) \qquad (3)$$
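As a quick sanity check of formula (3), the sketch below compares $f(x)(1-f(x))$ with a central finite difference of the sigmoid. The class name `SigmoidSketch` and the step size h = 1e-5 are arbitrary choices for illustration.

```java
// Hypothetical sketch: sigmoid (formula (2)) and its derivative (formula (3)),
// checked against a central finite difference.
class SigmoidSketch {

    static double f(double x) {
        return 1.0 / (1.0 + Math.exp(-x));
    }

    // Formula (3): f'(x) = f(x) * (1 - f(x)).
    static double fPrime(double x) {
        double fx = f(x);
        return fx * (1.0 - fx);
    }

    // Independent check: (f(x + h) - f(x - h)) / (2h).
    static double numericDerivative(double x, double h) {
        return (f(x + h) - f(x - h)) / (2.0 * h);
    }

    public static void main(String[] args) {
        for (double x : new double[]{-2.0, 0.0, 1.5}) {
            System.out.printf("x=%.1f  f'(x)=%.6f  numeric=%.6f%n",
                    x, fPrime(x), numericDerivative(x, 1e-5));
        }
    }
}
```

Expressing $f'$ through $f$ means backpropagation can reuse the activations already stored from the forward pass instead of recomputing exponentials.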

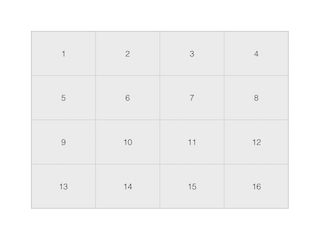

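Before continuing the derivation, here is a quick look at the subsampling step, which is simple. Assume the subsampling layer averages the convolutional layer's output. For example, if the convolutional layer's output feature map $f(\mu_j^l)$ is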

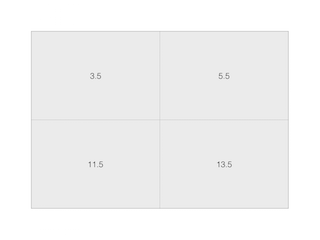

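then the subsampled result is:

$$\begin{bmatrix} 3.5 & 5.5 \\ 11.5 & 13.5 \end{bmatrix}$$

The subsampling step can be implemented as follows: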

```java
import java.util.Arrays;

/**
 * Created by keliz on 7/7/16.
 */
public class test {

    /**
     * Size of a convolution kernel or of the subsampling scale; width and height may differ.
     */
    public static class Size {
        public final int x;
        public final int y;

        public Size(int x, int y) {
            this.x = x;
            this.y = y;
        }
    }

    /**
     * Shrinks a matrix by averaging each non-overlapping scale.x-by-scale.y block (mean subsampling).
     *
     * @param matrix input matrix, e.g. a convolutional feature map
     * @param scale  block size; must evenly divide the matrix dimensions
     * @return the subsampled matrix of size (m / scale.x) x (n / scale.y)
     */
    public static double[][] scaleMatrix(final double[][] matrix, final Size scale) {
        int m = matrix.length;
        int n = matrix[0].length;
        final int sm = m / scale.x;
        final int sn = n / scale.y;
        // Check divisibility before allocating the output.
        if (sm * scale.x != m || sn * scale.y != n)
            throw new RuntimeException("scale does not evenly divide matrix");
        final double[][] outMatrix = new double[sm][sn];
        final int size = scale.x * scale.y;
        for (int i = 0; i < sm; i++) {
            for (int j = 0; j < sn; j++) {
                double sum = 0.0;
                // Sum rows [i*scale.x, (i+1)*scale.x) and columns [j*scale.y, (j+1)*scale.y).
                for (int si = i * scale.x; si < (i + 1) * scale.x; si++) {
                    for (int sj = j * scale.y; sj < (j + 1) * scale.y; sj++) {
                        sum += matrix[si][sj];
                    }
                }
                outMatrix[i][j] = sum / size;
            }
        }
        return outMatrix;
    }

    public static void main(String[] args) {
        int row = 4;
        int column = 4;
        int k = 0;
        double[][] matrix = new double[row][column];
        Size s = new Size(2, 2);
        // Fill the 4x4 feature map with 1..16, row by row.
        for (int i = 0; i < row; ++i)
            for (int j = 0; j < column; ++j)
                matrix[i][j] = ++k;
        double[][] result = scaleMatrix(matrix, s);
        String nl = System.getProperty("line.separator");
        // Print one matrix row per line.
        System.out.println(Arrays.deepToString(matrix).replace("],", "]," + nl));
        System.out.println(Arrays.deepToString(result).replace("],", "]," + nl));
    }
}
```

Here 3.5 = (1+2+5+6)/(2*2) and 5.5 = (3+4+7+8)/(2*2).

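So in the convolutional layer's output feature map, the nodes (neurons) with values 1, 2, 5, and 6 connect to the subsampling node with value 3.5, and the nodes with values 3, 4, 7, and 8 connect to the subsampling node with value 5.5. The derivation in the BP algorithm chapter gives the following rule:

The residual of node j in the convolutional layer equals the sum, over all subsampling-layer nodes connected to it, of each connection weight times that node's residual, multiplied by the derivative of node j's activation.

The statement is easier to follow with the formula in view.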

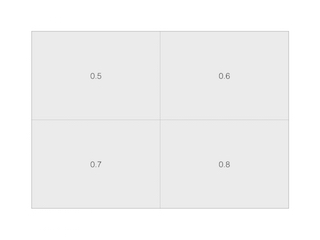
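The per-node rule can be sketched in Java for the non-overlapping mean-pooling case used here. In that case each convolutional node connects to exactly one subsampling node, so the weighted sum has a single term. The class `PerNodeDeltaSketch`, its methods, and the use of $\beta$ as the connection weight are assumptions for illustration. The `main` method reproduces the connection pattern described above on the 4 × 4 map labelled 1 to 16.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch of the per-node BP rule for non-overlapping sx-by-sy
// pooling: conv node (r, c) feeds only subsampling node (r / sx, c / sy),
// with weight beta. Assumption: sigmoid f, so f'(mu) = a * (1 - a).
class PerNodeDeltaSketch {

    // Conv-node coordinates that feed subsampling node (pr, pc).
    static List<int[]> connectedConvNodes(int pr, int pc, int sx, int sy) {
        List<int[]> nodes = new ArrayList<>();
        for (int r = pr * sx; r < (pr + 1) * sx; r++)
            for (int c = pc * sy; c < (pc + 1) * sy; c++)
                nodes.add(new int[]{r, c});
        return nodes;
    }

    // Residual of conv node (r, c): f'(mu) times the weighted sum, which here
    // has the single term beta * nextDelta[r / sx][c / sy].
    static double nodeDelta(double[][] a, double[][] nextDelta, double beta,
                            int r, int c, int sx, int sy) {
        double weightedSum = beta * nextDelta[r / sx][c / sy];
        return a[r][c] * (1.0 - a[r][c]) * weightedSum;
    }

    public static void main(String[] args) {
        // Label the 4x4 feature map 1..16 row by row, as in the subsampling example.
        int k = 0;
        int[][] label = new int[4][4];
        for (int r = 0; r < 4; r++)
            for (int c = 0; c < 4; c++)
                label[r][c] = ++k;
        for (int[] n : connectedConvNodes(0, 0, 2, 2))
            System.out.print(label[n[0]][n[1]] + " "); // prints 1 2 5 6
        System.out.println();
        for (int[] n : connectedConvNodes(0, 1, 2, 2))
            System.out.print(label[n[0]][n[1]] + " "); // prints 3 4 7 8
        System.out.println();
    }
}
```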

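Suppose the residual map $\delta_j^{l+1}$ of the subsampling layer that follows this convolutional layer has the value 0.5 at the node fed by nodes 1, 2, 5, and 6, and 0.6 at the node fed by nodes 3, 4, 7, and 8.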

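By formula (1), the residual of node 1 (whose connected subsampling node has residual 0.5) is

$$\delta_1 = \beta_j^{l+1}\left(f'(\mu_1) \circ 0.5\right)$$

where $\mu_1$ is node 1's entry in $\mu_j^l$. Because this is the residual of a single neuron, the elementwise product $\circ$ reduces to ordinary multiplication, that is,

$$\delta_1 = \beta_j^{l+1} \cdot f'(\mu_1) \cdot 0.5 = \beta_j^{l+1} \cdot f(\mu_1)\bigl(1 - f(\mu_1)\bigr) \cdot 0.5$$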

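Likewise, for node 2 the residual is

$$\delta_2 = \beta_j^{l+1} \cdot f(\mu_2)\bigl(1 - f(\mu_2)\bigr) \cdot 0.5$$

for node 5 it is

$$\delta_5 = \beta_j^{l+1} \cdot f(\mu_5)\bigl(1 - f(\mu_5)\bigr) \cdot 0.5$$

and for node 6 it is

$$\delta_6 = \beta_j^{l+1} \cdot f(\mu_6)\bigl(1 - f(\mu_6)\bigr) \cdot 0.5$$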

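Because the subsampling residual connected to node 3 is 0.6, the residual of node 3 is

$$\delta_3 = \beta_j^{l+1}\left(f'(\mu_3) \circ 0.6\right)$$

that is,

$$\delta_3 = \beta_j^{l+1} \cdot f(\mu_3)\bigl(1 - f(\mu_3)\bigr) \cdot 0.6$$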
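To check that the residual formula really is the gradient, here is a hedged numerical test that is not part of the original article. It assumes mean pooling with no activation on the subsampling layer. Writing the mean as $\beta \cdot \mathrm{down}(\cdot)$, with $\mathrm{down}(\cdot)$ summing each block, gives $\beta = 1/(s \cdot s)$. It also assumes a made-up squared-error loss on the pooled map $p$, so $\delta^{l+1} = p - t$, along with made-up inputs `u` and targets `t`. Under these assumptions the residuals from formula (1) should match central finite differences of the loss. The class `DeltaGradientCheck` and its methods are hypothetical.

```java
// Hypothetical numerical check of formula (1). Assumptions: mean pooling with
// no activation on the subsampling layer (beta = 1 / (s * s)), toy loss
// E = 0.5 * sum (p - t)^2 on the pooled map p, so delta^{l+1} = p - t.
class DeltaGradientCheck {

    static double sigmoid(double x) { return 1.0 / (1.0 + Math.exp(-x)); }

    // Mean pooling over non-overlapping s-by-s blocks of sigmoid(u).
    static double[][] pooled(double[][] u, int s) {
        double[][] p = new double[u.length / s][u[0].length / s];
        for (int r = 0; r < u.length; r++)
            for (int c = 0; c < u[0].length; c++)
                p[r / s][c / s] += sigmoid(u[r][c]) / (s * s);
        return p;
    }

    static double loss(double[][] u, double[][] t, int s) {
        double[][] p = pooled(u, s);
        double e = 0.0;
        for (int r = 0; r < p.length; r++)
            for (int c = 0; c < p[0].length; c++)
                e += 0.5 * (p[r][c] - t[r][c]) * (p[r][c] - t[r][c]);
        return e;
    }

    // Formula (1): beta * f'(u) o up(p - t), with beta = 1 / (s * s).
    static double[][] analyticDelta(double[][] u, double[][] t, int s) {
        double[][] p = pooled(u, s);
        double beta = 1.0 / (s * s);
        double[][] d = new double[u.length][u[0].length];
        for (int r = 0; r < u.length; r++)
            for (int c = 0; c < u[0].length; c++) {
                double a = sigmoid(u[r][c]);
                d[r][c] = beta * a * (1.0 - a) * (p[r / s][c / s] - t[r / s][c / s]);
            }
        return d;
    }

    // Central difference of the loss with respect to u[r][c]; u is restored afterwards.
    static double numericDelta(double[][] u, double[][] t, int s, int r, int c, double h) {
        double old = u[r][c];
        u[r][c] = old + h;
        double plus = loss(u, t, s);
        u[r][c] = old - h;
        double minus = loss(u, t, s);
        u[r][c] = old;
        return (plus - minus) / (2.0 * h);
    }

    public static void main(String[] args) {
        double[][] u = {{0.1, -0.4, 0.3, 0.8}, {-0.2, 0.5, -0.7, 0.0},
                        {0.6, -0.1, 0.2, -0.3}, {0.4, 0.9, -0.5, 0.1}};
        double[][] t = {{0.2, 0.9}, {0.4, 0.1}};
        double[][] d = analyticDelta(u, t, 2);
        double maxErr = 0.0;
        for (int r = 0; r < 4; r++)
            for (int c = 0; c < 4; c++)
                maxErr = Math.max(maxErr, Math.abs(d[r][c] - numericDelta(u, t, 2, r, c, 1e-5)));
        System.out.println("max |analytic - numeric| = " + maxErr);
    }
}
```

If the $\beta$ factor is dropped, the analytic residuals come out 4 times too large for 2 × 2 pooling. That makes it easy to see where $\beta$ enters formula (1).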

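This post walked through residual computation for the convolutional and subsampling layers of a CNN. It covered the convolution formula and the residual formula, with a worked example of how residuals pass from the subsampling layer back to the convolutional layer.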
