Implementing softmax more easily with a DL framework

(I don't fully understand this yet, so for now I'm just recording the code...)

import torch

from torch import nn

from d2l import torch as d2l

batch_size = 256  # each mini-batch holds 256 images

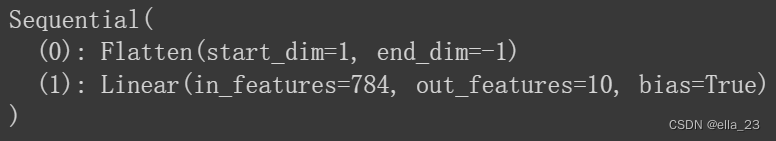

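train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)  # iterators over the training and test sets

model = nn.Sequential(

    nn.Flatten(),  # flattens every dimension after the batch dimension, so each 1x28x28 image becomes a 784-vector and the input is a 2D tensor; PyTorch does not reshape inputs implicitly, so anything that is not 2D has to be flattened first

    nn.Linear(784, 10)  # the linear layer: 784 input features, 10 output classes

)

def init_weight(current_layer):

    # Only linear layers are initialized; weights are drawn from a normal distribution with std 0.01

    if isinstance(current_layer, nn.Linear):

        nn.init.normal_(current_layer.weight, std=0.01)

model.apply(init_weight)

model.apply returns the model itself, so the notebook prints its layers: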

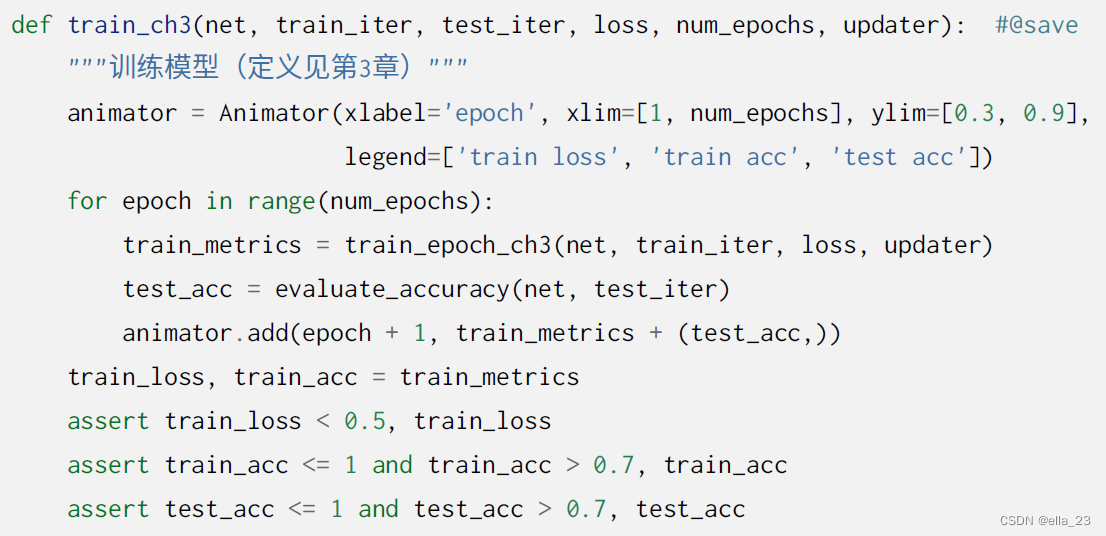
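To see what the two layers do to the data, here is a minimal sketch that pushes a fake batch through the same model. The random tensor `X` is a hypothetical stand-in for Fashion-MNIST images (it is not in the original notes), and the explicit `torch.softmax` call is only there to show that the 10 outputs become probabilities; the model itself outputs raw logits.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Same architecture as in the notes above
model = nn.Sequential(nn.Flatten(), nn.Linear(784, 10))

def init_weight(current_layer):
    if isinstance(current_layer, nn.Linear):
        nn.init.normal_(current_layer.weight, std=0.01)

model.apply(init_weight)

# Hypothetical stand-in for a batch of 4 grayscale 28x28 images
X = torch.rand(4, 1, 28, 28)

flat = model[0](X)                    # Flatten keeps the batch dim, merges the rest
logits = model(X)                     # Linear maps 784 features to 10 class scores
probs = torch.softmax(logits, dim=1)  # softmax applied by hand, row by row

print(flat.shape)        # shape after flattening
print(logits.shape)      # one score per class for every image
print(probs.sum(dim=1))  # each row of probabilities sums to 1
```

The model stops at the linear layer because `nn.CrossEntropyLoss` expects raw logits and applies log-softmax internally.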

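loss = nn.CrossEntropyLoss(reduction='none')  # returns one loss value per example; takes raw logits, so the model needs no softmax layer

optimizer = torch.optim.SGD(model.parameters(), lr=0.1)  # mini-batch stochastic gradient descent with lr=0.1

num_epochs = 10

d2l.train_ch3(model, train_iter, test_iter, loss, num_epochs, optimizer)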
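Since the training call hides the loop, a rough sketch of one update step may help. This is not d2l's actual code: `train_step` is a hypothetical helper, and the random `X` and `y` are stand-ins for one Fashion-MNIST mini-batch. It assumes the loop averages the per-example losses before calling `backward()`, which is needed because `reduction='none'` returns a vector rather than a scalar.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Hypothetical stand-in for one mini-batch of images and labels
X = torch.randn(64, 1, 28, 28)
y = torch.randint(0, 10, (64,))

model = nn.Sequential(nn.Flatten(), nn.Linear(784, 10))
loss = nn.CrossEntropyLoss(reduction='none')
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

def train_step(X, y):
    """Run one SGD update and return the per-example losses before the update."""
    l = loss(model(X), y)   # shape (batch_size,), one loss per example
    optimizer.zero_grad()   # clear gradients left over from the previous step
    l.mean().backward()     # reduce to a scalar, then backpropagate
    optimizer.step()        # w <- w - lr * grad
    return l.detach()

first = train_step(X, y)
for _ in range(20):
    last = train_step(X, y)

print(first.shape)
print(first.mean().item(), last.mean().item())
```

Repeating the step on the same batch only shows that the loss goes down; a real epoch would iterate over `train_iter` and evaluate accuracy on `test_iter` afterwards.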

Reference: 动手学深度学习 v2 (Dive into Deep Learning, v2)
