Mishkin, Dmytro, and Jiri Matas. “All you need is a good init.” arXiv preprint arXiv:1511.06422 (2015). [Citations: 19].

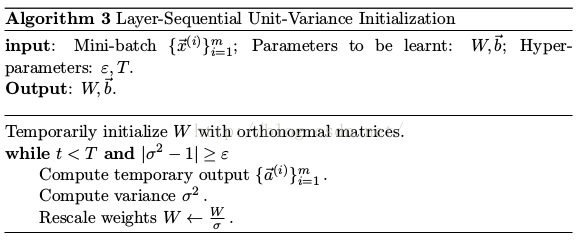

• Pre-initialize weights of each convolution or fc layer with orthonormal matrices.

• Normalizing the variance of the output of each layer to be equal to one.

1 Layer-Sequential Unit-Variance Initialization

[Idea]• Pre-initialize weights of each convolution or fc layer with orthonormal matrices.

• Normalizing the variance of the output of each layer to be equal to one.

[Algorithm] See Alg. 3.

[Hyper-parameters ε, T] Use them because it is often not possible to normalize variance with the desired precision due to the variation of data.

3745

3745

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?