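- 🍨 This post is a learning-log entry from the 🔗365天深度学习训练营 (365-Day Deep Learning Training Camp)

- 🍖 Original author: K同学啊 | tutoring and custom projects available

- Building the ResNet model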


```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class IdentityBlock(nn.Module):
    # Bottleneck block whose shortcut is the unchanged input,
    # so in_channels must equal filters[2].
    def __init__(self, in_channels, filters, kernel_size):
        super(IdentityBlock, self).__init__()
        f1, f2, f3 = filters
        self.conv1 = nn.Conv2d(in_channels, f1, kernel_size=1)
        self.bn1 = nn.BatchNorm2d(f1)
        self.conv2 = nn.Conv2d(f1, f2, kernel_size=kernel_size, padding='same')
        self.bn2 = nn.BatchNorm2d(f2)
        self.conv3 = nn.Conv2d(f2, f3, kernel_size=1)
        self.bn3 = nn.BatchNorm2d(f3)
        self.relu = nn.ReLU(inplace=True)

    def forward(self, x):
        identity = x
        out = self.relu(self.bn1(self.conv1(x)))
        out = self.relu(self.bn2(self.conv2(out)))
        out = self.bn3(self.conv3(out))
        out += identity  # residual addition
        out = self.relu(out)
        return out


class ConvBlock(nn.Module):
    # Bottleneck block with a 1x1 conv on the shortcut, used when the
    # channel count or spatial size changes.
    def __init__(self, in_channels, filters, kernel_size, stride=2):
        super(ConvBlock, self).__init__()
        f1, f2, f3 = filters
        self.conv1 = nn.Conv2d(in_channels, f1, kernel_size=1, stride=stride)
        self.bn1 = nn.BatchNorm2d(f1)
        self.conv2 = nn.Conv2d(f1, f2, kernel_size=kernel_size, padding='same')
        self.bn2 = nn.BatchNorm2d(f2)
        self.conv3 = nn.Conv2d(f2, f3, kernel_size=1)
        self.bn3 = nn.BatchNorm2d(f3)
        # Projection shortcut: matches the main path's channels and stride.
        self.shortcut = nn.Sequential(
            nn.Conv2d(in_channels, f3, kernel_size=1, stride=stride),
            nn.BatchNorm2d(f3)
        )
        self.relu = nn.ReLU(inplace=True)

    def forward(self, x):
        identity = self.shortcut(x)
        out = self.relu(self.bn1(self.conv1(x)))
        out = self.relu(self.bn2(self.conv2(out)))
        out = self.bn3(self.conv3(out))
        out += identity  # residual addition
        out = self.relu(out)
        return out


class ResNet50(nn.Module):
    # Note: input_shape is accepted but not used by the layers below.
    def __init__(self, input_shape, num_classes=1000):
        super(ResNet50, self).__init__()
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3)
        self.bn1 = nn.BatchNorm2d(64)
        self.pool1 = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        self.layer1 = self._make_layer(64, [64, 64, 256], stride=1, num_blocks=3)
        self.layer2 = self._make_layer(256, [128, 128, 512], stride=2, num_blocks=4)
        self.layer3 = self._make_layer(512, [256, 256, 1024], stride=2, num_blocks=6)
        self.layer4 = self._make_layer(1024, [512, 512, 2048], stride=2, num_blocks=3)
        self.avgpool = nn.AdaptiveAvgPool2d((1, 1))
        self.fc = nn.Linear(2048, num_classes)

    def _make_layer(self, in_channels, filters, stride, num_blocks):
        # One ConvBlock followed by (num_blocks - 1) IdentityBlocks.
        layers = [ConvBlock(in_channels, filters, kernel_size=3, stride=stride)]
        for _ in range(1, num_blocks):
            layers.append(IdentityBlock(filters[2], filters, kernel_size=3))
        return nn.Sequential(*layers)

    def forward(self, x):
        x = F.relu(self.bn1(self.conv1(x)))
        x = self.pool1(x)
        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        x = self.layer4(x)
        x = self.avgpool(x)
        x = torch.flatten(x, 1)
        x = self.fc(x)
        return x


device = "cuda" if torch.cuda.is_available() else "cpu"
print("Using {} device".format(device))

model = ResNet50((224, 224, 3)).to(device)
print(model)
```
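To see how the strides in the code above shrink the feature map, here is a minimal pure-Python sketch that traces the spatial size stage by stage for a 224×224 input. The helper names `conv_out` and `trace_resnet50` are made up for this illustration and are not part of the original code. The sketch assumes the standard convolution output-size formula and takes the kernel, stride, and padding values from the `ResNet50` class.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output length of a conv/pool layer along one spatial axis."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_resnet50(size=224):
    """Return (stage name, channels, spatial size) after each stage."""
    trace = []
    size = conv_out(size, kernel=7, stride=2, padding=3)  # conv1
    trace.append(("conv1", 64, size))
    size = conv_out(size, kernel=3, stride=2, padding=1)  # pool1
    trace.append(("pool1", 64, size))
    stages = [
        ("layer1", 256, 1),
        ("layer2", 512, 2),
        ("layer3", 1024, 2),
        ("layer4", 2048, 2),
    ]
    for name, channels, stride in stages:
        # Only the first 1x1 conv of each stage's ConvBlock is strided;
        # the 'same'-padded 3x3 convs and the IdentityBlocks keep the size.
        size = conv_out(size, kernel=1, stride=stride)
        trace.append((name, channels, size))
    trace.append(("avgpool", 2048, 1))  # AdaptiveAvgPool2d((1, 1))
    return trace


for name, channels, size in trace_resnet50(224):
    print(f"{name:8s} -> {channels:4d} x {size} x {size}")
```

After `avgpool` and `torch.flatten`, the 2048 values per image match the input size of `nn.Linear(2048, num_classes)`.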

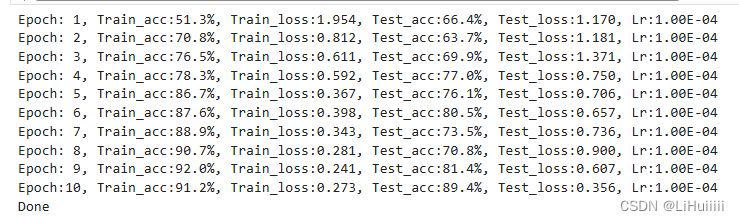
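As a rough cross-check when reading a printed architecture, the learnable parameters of a single `IdentityBlock` can be counted by hand. This is a minimal sketch with hypothetical helper names. It assumes the `nn.Conv2d` defaults used in the code above (`bias=True`) and counts two learnable values per channel for `nn.BatchNorm2d`; the running statistics are buffers, not parameters.

```python
def conv2d_params(in_ch, out_ch, kernel, bias=True):
    """Weights plus optional biases of a square-kernel 2D convolution."""
    return in_ch * out_ch * kernel * kernel + (out_ch if bias else 0)


def batchnorm_params(channels):
    """Learnable scale and shift of a batch-norm layer."""
    return 2 * channels


def identity_block_params(in_channels, filters, kernel_size):
    """Learnable parameters of one IdentityBlock as defined above."""
    f1, f2, f3 = filters
    return (
        conv2d_params(in_channels, f1, 1) + batchnorm_params(f1)
        + conv2d_params(f1, f2, kernel_size) + batchnorm_params(f2)
        + conv2d_params(f2, f3, 1) + batchnorm_params(f3)
    )


# An IdentityBlock in layer1: 256 input channels, filters (64, 64, 256).
print(identity_block_params(256, (64, 64, 256), 3))
```

With PyTorch installed, the result can be compared against `sum(p.numel() for p in block.parameters())` for the corresponding block.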

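Summary: the process was somewhat difficult. I'm still not very familiar with TF code, but with the help of the diagram I was able to work out the logic and write the code myself. Keep going!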


Week J1: ResNet-50 in Practice and Explained
