ZooKeeper configuration:

1. Environment variables: add the ZooKeeper environment variables to the .bashrc file in your home directory.

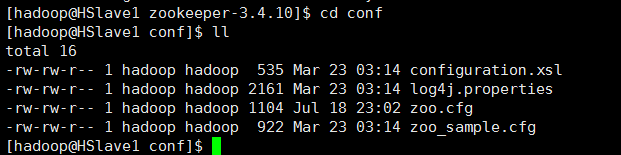

In the directory where ZooKeeper was extracted, go into the conf directory and create a file named zoo.cfg.

File contents:

    # The number of milliseconds of each tick
    tickTime=2000
    # The number of ticks that the initial
    # synchronization phase can take
    initLimit=10
    # The number of ticks that can pass between
    # sending a request and getting an acknowledgement
    syncLimit=5
    # the directory where the snapshot is stored.
    # do not use /tmp for storage, /tmp here is just
    # example sakes.
    #dataDir=/tmp/zookeeper
    # Create the corresponding directory on your host
    dataDir=/home/hadoop/zookeeper-3.4.10/data
    # the port at which the clients will connect
    clientPort=2181
    # the maximum number of client connections.
    # increase this if you need to handle more clients
    #maxClientCnxns=60
    #
    # Be sure to read the maintenance section of the
    # administrator guide before turning on autopurge.
    #
    # http://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance
    #
    # The number of snapshots to retain in dataDir
    #autopurge.snapRetainCount=3
    # Purge task interval in hours
    # Set to "0" to disable auto purge feature
    #autopurge.purgeInterval=1
    server.1=HSlave1:2888:3888
    server.2=HSlave2:2888:3888
    server.3=HSlave3:2888:3888

Change HSlave1, HSlave2, and HSlave3 to your own hostnames.

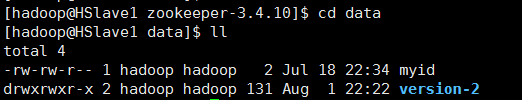

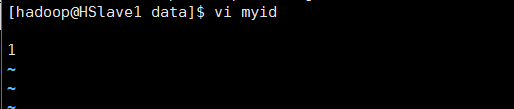

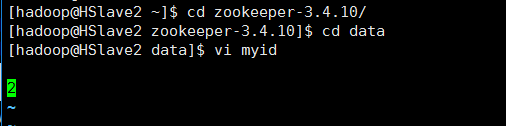

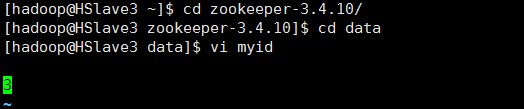

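Finally, create a file named myid in ZooKeeper's data directory: write the number 1 on HSlave1, 2 on HSlave2, and 3 on HSlave3. That completes the ZooKeeper setup. What remains is to configure the ZooKeeper-related settings in Hadoop's configuration files.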
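The link between the `server.N` lines in zoo.cfg and the number in each host's myid file can be sketched in Java. This is only an illustration built on the JDK's `java.util.Properties`; the class `MyIdLookup` and the method `myIdFor` are names made up for this example and are not part of ZooKeeper.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

public class MyIdLookup {

    // Returns the number that belongs in the myid file of the given host,
    // derived from the server.N=host:port:port lines of a zoo.cfg text.
    public static int myIdFor(String zooCfg, String hostname) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(zooCfg)); // lines starting with # are ignored
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        for (String key : props.stringPropertyNames()) {
            if (!key.startsWith("server.")) {
                continue; // tickTime, dataDir, clientPort and so on
            }
            String host = props.getProperty(key).split(":")[0].trim();
            if (host.equals(hostname)) {
                return Integer.parseInt(key.substring("server.".length()));
            }
        }
        throw new IllegalArgumentException("host not listed in zoo.cfg: " + hostname);
    }

    public static void main(String[] args) {
        String cfg = String.join("\n",
                "tickTime=2000",
                "# the port at which the clients will connect",
                "clientPort=2181",
                "server.1=HSlave1:2888:3888",
                "server.2=HSlave2:2888:3888",
                "server.3=HSlave3:2888:3888");
        for (String host : new String[] {"HSlave1", "HSlave2", "HSlave3"}) {
            System.out.println(host + " -> myid " + myIdFor(cfg, host));
        }
    }
}
```

The point of the sketch is that the N in `server.N` and the content of myid on that host must be the same number.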

======================================

Creating a Hadoop project in IDEA: environment setup, viewing remote files, downloading remote files, and uploading remote files

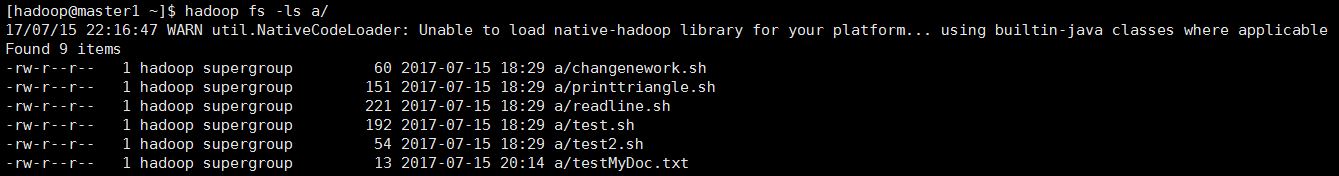

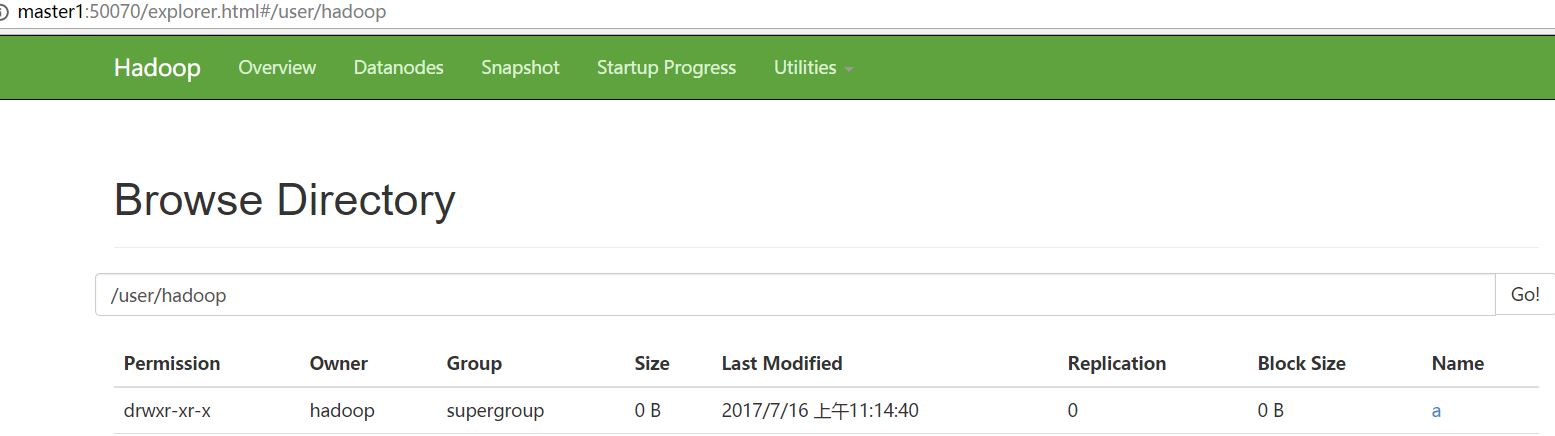

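1. View remote files

Once Hadoop is running, use the following commands to create a remote directory a (/user/hadoop/a) and upload a file testMyDoc.txt (sample content: howard 0716) into it.

hadoop fs -ls (list the current user's HDFS home directory)

hadoop fs -mkdir -p /user/hadoop (create the /user/hadoop directory)

hadoop fs -mkdir a (create directory a; in the web UI it appears as /user/hadoop/a)

hadoop fs -put testMyDoc.txt a/ (upload testMyDoc.txt from the current local directory into a)

hadoop fs -ls a/ (list the files in a; in the web UI, look under /user/hadoop/a)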
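Why `a` ends up at /user/hadoop/a: HDFS resolves relative paths against the user's home directory, /user/<username>. The rule can be sketched in plain Java. `HdfsPathSketch` and `resolve` are hypothetical names for this illustration, not Hadoop APIs, and the sketch deliberately ignores `..` segments and full `hdfs://` URIs.

```java
public class HdfsPathSketch {

    // Mimics how the shell commands above treat their path argument:
    // absolute paths are kept, relative ones are placed under /user/<user>.
    public static String resolve(String user, String path) {
        String home = "/user/" + user;
        // Drop a trailing slash such as the one in "a/" (but keep the root "/").
        String p = (path.length() > 1 && path.endsWith("/"))
                ? path.substring(0, path.length() - 1)
                : path;
        if (p.startsWith("/")) {
            return p; // already absolute
        }
        return p.isEmpty() ? home : home + "/" + p;
    }

    public static void main(String[] args) {
        System.out.println(resolve("hadoop", ""));             // hadoop fs -ls
        System.out.println(resolve("hadoop", "a"));            // hadoop fs -mkdir a
        System.out.println(resolve("hadoop", "a/"));           // hadoop fs -ls a/
        System.out.println(resolve("hadoop", "/user/hadoop")); // absolute path
    }
}
```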

----------------------------------------------------------------

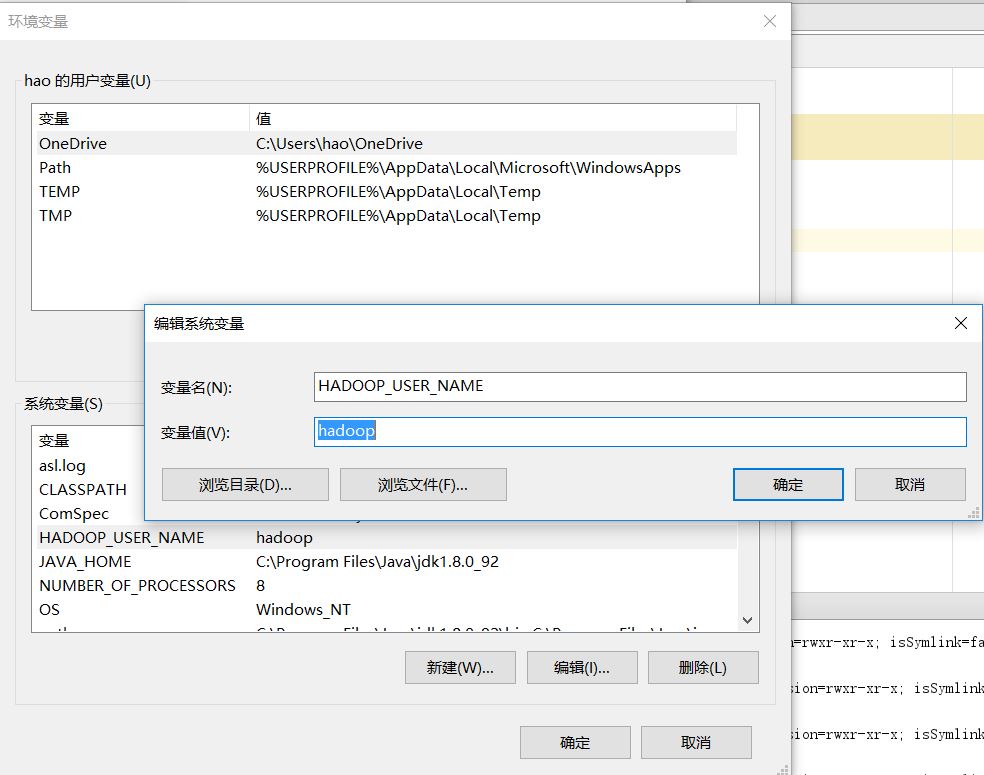

Next, edit the Windows environment variables to add the hadoop user.

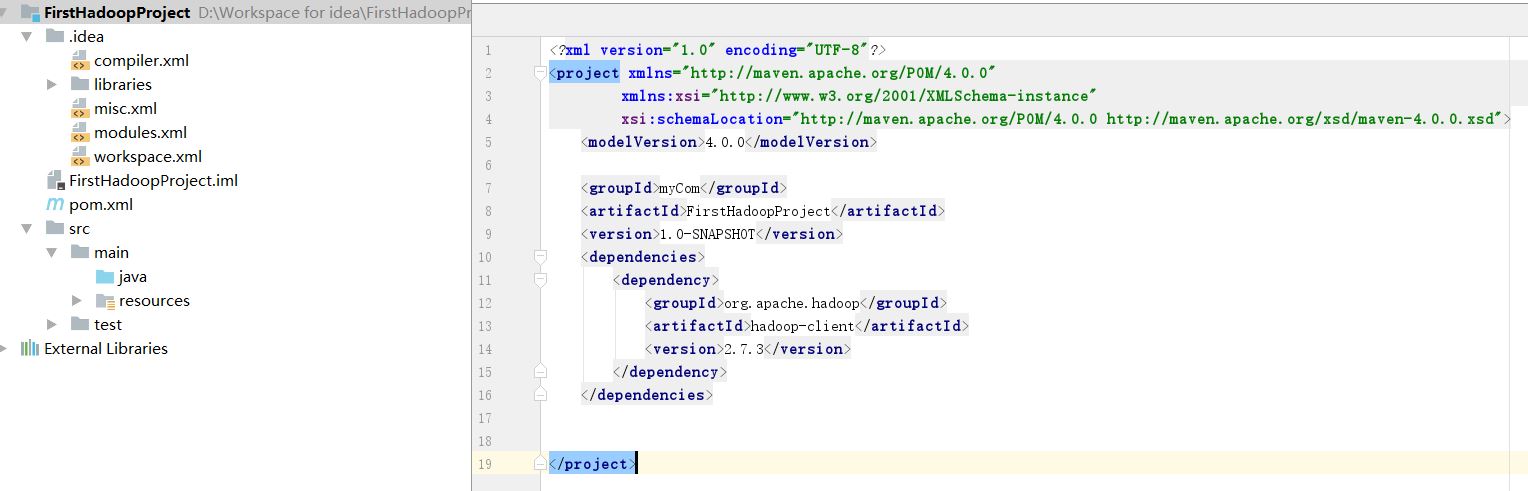

Create a new Maven project.

2. Edit pom.xml and add the following dependencies, then click Enable Auto-Import in the lower-right corner.

<dependencies>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>2.7.3</version>

</dependency>

</dependencies>

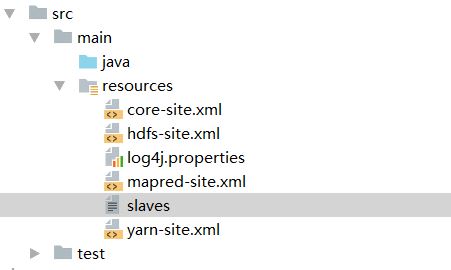

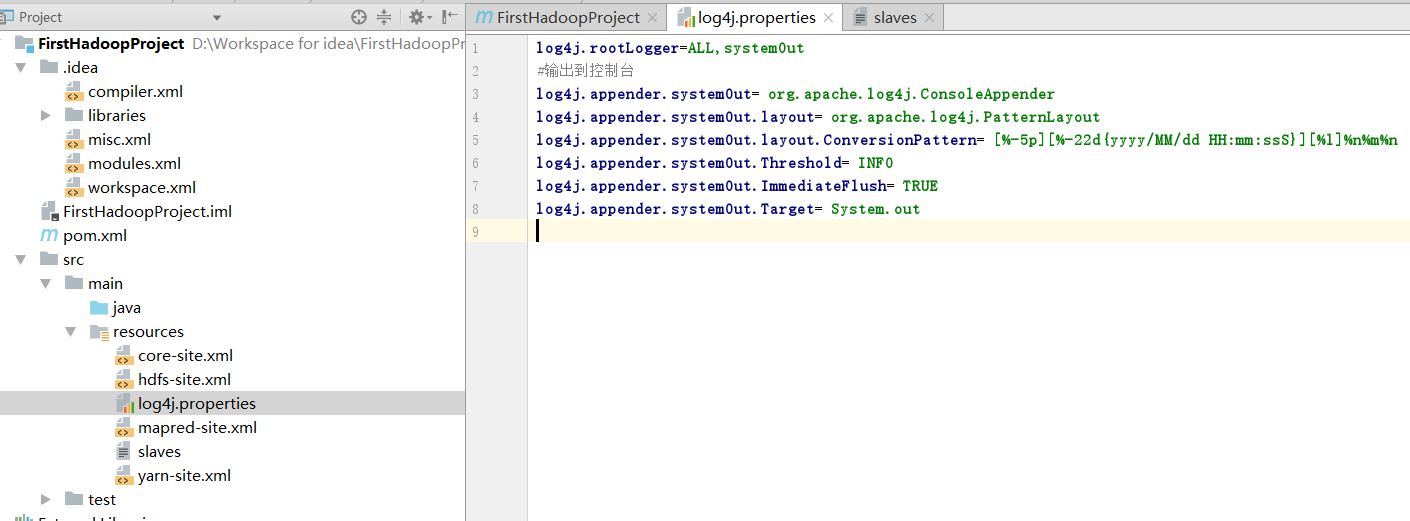

3. Create a properties file in src/main/resources

File name: log4j.properties

Contents:

log4j.rootLogger=ALL,systemOut

# Output to the console

log4j.appender.systemOut= org.apache.log4j.ConsoleAppender

log4j.appender.systemOut.layout= org.apache.log4j.PatternLayout

log4j.appender.systemOut.layout.ConversionPattern= [%-5p][%-22d{yyyy/MM/dd HH:mm:ssS}][%l]%n%m%n

log4j.appender.systemOut.Threshold= INFO

log4j.appender.systemOut.ImmediateFlush= TRUE

log4j.appender.systemOut.Target= System.out
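The `%d{yyyy/MM/dd HH:mm:ssS}` part of the ConversionPattern uses the same pattern letters as Java's `SimpleDateFormat`. The sketch below only demonstrates what that date pattern produces; `DatePatternSketch` and `format` are illustrative names, and the second pattern with `.SSS` is an alternative suggested here, not something from the original configuration.

```java
import java.text.SimpleDateFormat;
import java.util.Date;
import java.util.Locale;
import java.util.TimeZone;

public class DatePatternSketch {

    // Formats an epoch timestamp in UTC with the given SimpleDateFormat pattern.
    public static String format(String pattern, long epochMillis) {
        SimpleDateFormat fmt = new SimpleDateFormat(pattern, Locale.ROOT);
        fmt.setTimeZone(TimeZone.getTimeZone("UTC"));
        return fmt.format(new Date(epochMillis));
    }

    public static void main(String[] args) {
        long sevenMillisAfterEpoch = 7L;
        // Pattern from log4j.properties: milliseconds follow the seconds directly.
        System.out.println(format("yyyy/MM/dd HH:mm:ssS", sevenMillisAfterEpoch));
        // Alternative: a dot and zero-padded milliseconds are easier to read.
        System.out.println(format("yyyy/MM/dd HH:mm:ss.SSS", sevenMillisAfterEpoch));
    }
}
```

With the original pattern the milliseconds are appended to the seconds without a separator or padding, so the two fields run together in the log output.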

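4. Once Hadoop is running successfully, copy the five Hadoop configuration files into src/main/resources. Use only the files from your own machine, not someone else's.

5. Write the first class and name it dfsDemo.

Enter the following code. When importing classes, make sure to pick the ones from the Hadoop packages.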
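Hadoop's `Configuration` looks up its site files on the classpath, which is why they go into src/main/resources. A quick way to confirm the copy worked is to ask the class loader for them. This is a minimal sketch: `ConfigOnClasspath` is a hypothetical helper, and core-site.xml and hdfs-site.xml are assumed to be among the copied files.

```java
public class ConfigOnClasspath {

    // True if a resource with this name is visible to this class's class loader.
    public static boolean onClasspath(String name) {
        return ConfigOnClasspath.class.getClassLoader().getResource(name) != null;
    }

    public static void main(String[] args) {
        // Assumed file names; adjust the list to the files you actually copied.
        String[] names = {"core-site.xml", "hdfs-site.xml"};
        for (String name : names) {
            System.out.println(name + (onClasspath(name) ? " found" : " MISSING"));
        }
    }
}
```

If a file is reported as missing, the client may silently fall back to default settings instead of connecting to your cluster.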

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FSDataInputStream;

import org.apache.hadoop.fs.FileStatus;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IOUtils;

import org.apache.log4j.Logger;

import java.io.IOException;

public class dfsDemo {

public static Logger logger=Logger.getLogger(dfsDemo.class);

public static void main(String[] args) throws IOException {

// Key classes: Configuration, FileSystem, Path, FileStatus, FSDataInputStream, FSDataOutputStream

Configuration cfg=new Configuration(); // create a Hadoop Configuration

FileSystem fileSystem=FileSystem.get(cfg); // if get fails, check the properties file and the Hadoop configuration files

Path home=fileSystem.getHomeDirectory();

logger.info(home);

// list every entry in the home directory

FileStatus[] fileStatuses=fileSystem.listStatus(home);

for (FileStatus f:fileStatuses){

logger.info(f);

}

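Path a=new Path(home,"a"); // the path /user/hadoop/a

Path shh=new Path(a,"testMyDoc.txt"); // testMyDoc.txt inside directory a

// try-with-resources closes the stream even if the copy fails

try (FSDataInputStream in=fileSystem.open(shh)) {

IOUtils.copyBytes(in,System.out,1024,false); // false: leave System.out open

}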

}

}

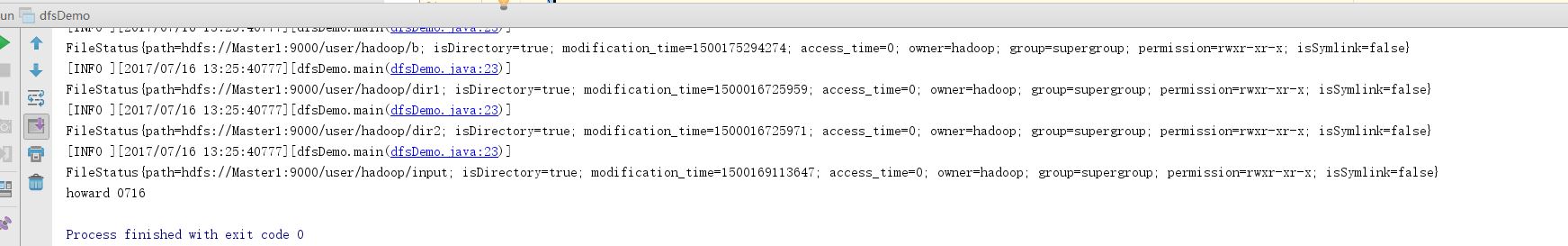

After clicking Run, the console shows the content of testMyDoc.txt: howard 0716
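What `IOUtils.copyBytes(in, out, 1024, false)` does can be approximated with plain JDK streams: read into a 1024-byte buffer, write what was read, and close the streams only when the last argument is true. The sketch below is an imitation for explanation, not Hadoop's implementation; `CopySketch` and `copy` are made-up names.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;

public class CopySketch {

    // Copies everything from in to out using a buffer of bufSize bytes.
    // Returns the number of bytes copied; closes both streams if close is true.
    public static long copy(InputStream in, OutputStream out, int bufSize, boolean close) {
        long total = 0;
        try {
            byte[] buf = new byte[bufSize];
            int n;
            while ((n = in.read(buf)) != -1) {
                out.write(buf, 0, n);
                total += n;
            }
            out.flush();
            if (close) {
                in.close();
                out.close();
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return total;
    }

    public static void main(String[] args) {
        byte[] content = "howard 0716".getBytes(StandardCharsets.UTF_8);
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        long copied = copy(new ByteArrayInputStream(content), out, 1024, false);
        System.out.println(copied + " bytes: " + new String(out.toByteArray(), StandardCharsets.UTF_8));
    }
}
```

Passing false matters when the target is `System.out`: closing it would silence every later print in the program.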

6. Download remote Hadoop files to the local machine

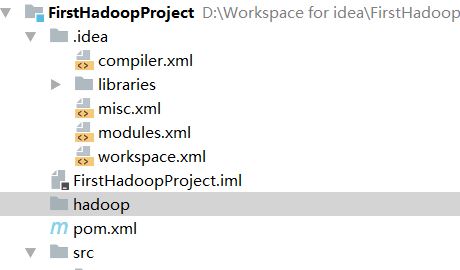

First create a directory named hadoop to hold the downloaded files.

Contents of the TestDownload class:

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FSDataInputStream;

import org.apache.hadoop.fs.FileStatus;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IOUtils;

import java.io.File;

import java.io.FileOutputStream;

import java.io.IOException;

import java.io.OutputStream;

public class TestDownload {

public static Configuration cfg=null; // frequently used objects are kept static

public static FileSystem fs=null;

public static void main(String[] args) throws IOException {

cfg=new Configuration();

fs=FileSystem.get(cfg);

Path home=fs.getHomeDirectory();

// start the download

download(home,new File("D:\\Workspace for idea\\FirstHadoopProject\\hadoop")); // to get this path, right-click the hadoop directory in the project tree and choose Copy Path

}

private static void download(Path home,File dir) throws IOException {

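// create the local destination directory if it does not exist

if(!dir.exists()){

dir.mkdirs();

}

// get the status of every entry in the target directory on the Hadoop cluster

FileStatus[] fileStatuses=fs.listStatus(home);

if(fileStatuses!=null&&fileStatuses.length>0){ // skip empty directories

for(FileStatus f:fileStatuses){ // iterate over the entries

// if the entry is a directory, recurse into it

if(f.isDirectory()){ // check whether it is a directory

String name=f.getPath().getName(); // name of the remote directory

File toFile=new File(dir,name); // matching local directory (created by the recursive call)

download(f.getPath(),toFile); // download its contents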

}else{

downloadFile(f.getPath(),dir);

}

}

}

}

private static void downloadFile(Path path,File dir) throws IOException {

if(!dir.exists()){

dir.mkdirs();

}

File file =new File(dir,path.getName());

FSDataInputStream in=fs.open(path);

OutputStream out =new FileOutputStream(file);

IOUtils.copyBytes(in,out,1024,true); // true: close both streams when the copy finishes

}

}

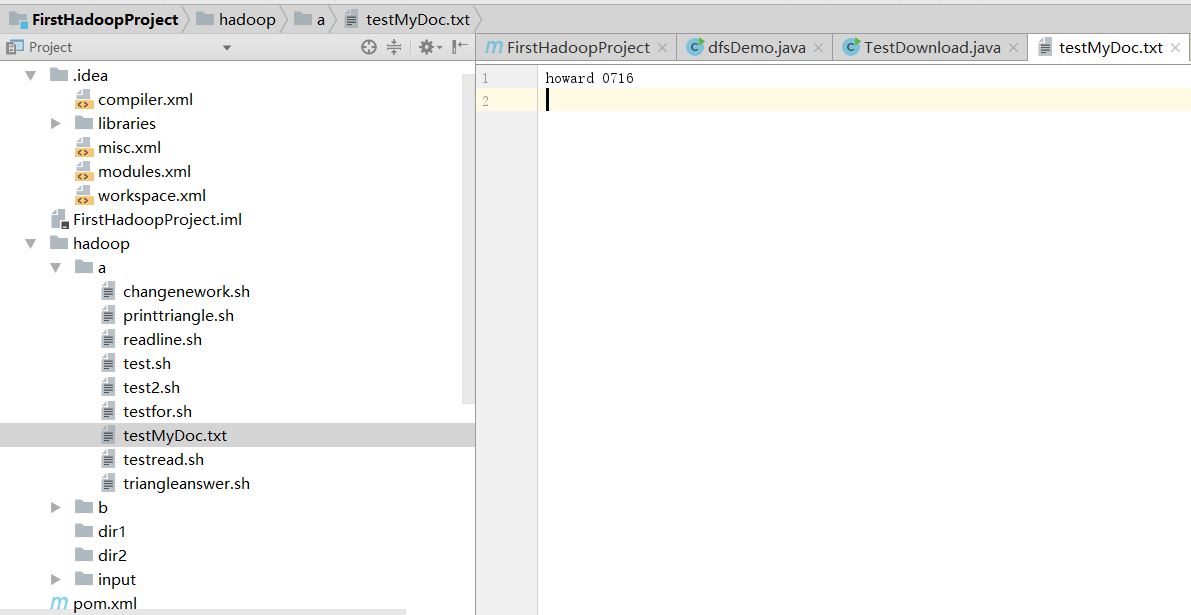

When the run finishes, the files have been downloaded into the hadoop directory. Check that the testMyDoc.txt file from earlier is there.
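The recursion in `download` (create the target directory, list the entries, recurse into directories, copy plain files) can be tried without a cluster by mirroring one local directory into another. The sketch below uses only the JDK and follows the same shape; `MirrorSketch`, `mirror`, and `write` are illustrative names, and local files stand in for HDFS entries.

```java
import java.io.File;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.StandardCopyOption;

public class MirrorSketch {

    // Recursively copies the tree under src into dest; returns the number of files copied.
    public static int mirror(File src, File dest) {
        if (!dest.exists()) {
            dest.mkdirs(); // same role as dir.mkdirs() in download
        }
        File[] entries = src.listFiles();
        if (entries == null) {
            return 0; // src is not a readable directory
        }
        int copied = 0;
        for (File f : entries) {
            File target = new File(dest, f.getName());
            if (f.isDirectory()) {
                copied += mirror(f, target); // recurse, like download(f.getPath(), toFile)
            } else {
                try {
                    Files.copy(f.toPath(), target.toPath(), StandardCopyOption.REPLACE_EXISTING);
                } catch (IOException e) {
                    throw new UncheckedIOException(e);
                }
                copied++;
            }
        }
        return copied;
    }

    // Small helper for the demo: writes text to a file, creating parent directories.
    public static void write(File file, String text) {
        try {
            file.getParentFile().mkdirs();
            Files.write(file.toPath(), text.getBytes(StandardCharsets.UTF_8));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        File root = new File(System.getProperty("java.io.tmpdir"), "mirror-sketch-" + System.nanoTime());
        File src = new File(root, "src");
        write(new File(src, "a/testMyDoc.txt"), "howard 0716");
        int copied = mirror(src, new File(root, "dest"));
        System.out.println("copied " + copied + " file(s) into " + new File(root, "dest"));
    }
}
```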

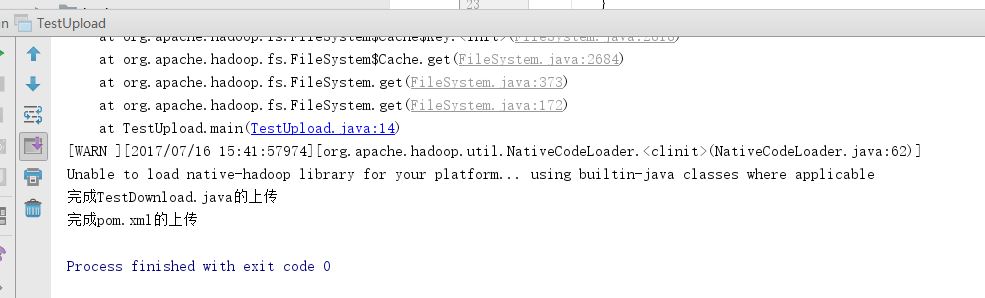

7. Upload local files to remote Hadoop

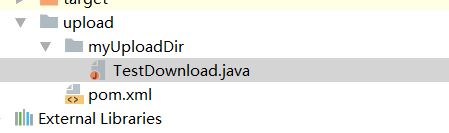

Create a local directory named upload and put two files in it.

Create a class named TestUpload with the following code.

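import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FSDataOutputStream;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IOUtils;

import java.io.File;

import java.io.FileInputStream;

import java.io.IOException;

import java.io.InputStream;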

public class TestUpload {

public static Configuration cfg=null; // frequently used objects are kept static

public static FileSystem fs=null;

public static void main(String[] args) throws IOException {

cfg = new Configuration();

fs = FileSystem.get(cfg);

Path home=fs.getHomeDirectory();

Path remoteFilePath=new Path(home,"RemoteDir"); // remote target directory RemoteDir

upload(remoteFilePath,new File("D:\\Workspace for idea\\FirstHadoopProject\\upload")); // the local files are in the upload directory

}

private static void upload(Path remoteFilePath,File localFile) throws IOException {

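if(!fs.exists(remoteFilePath)){ // check whether the remote target directory exists

fs.mkdirs(remoteFilePath); // create it if it does not

}

File[] localFiles=localFile.listFiles(); // entries of the local directory

if(localFiles!=null&&localFiles.length>0){ // there is something to upload

for(File f:localFiles){ // iterate over the entries

if(f.isDirectory()){ // a directory: map it to a directory of the same name under the remote directory

Path path=new Path(remoteFilePath,f.getName());

upload(path,f); // the recursive call creates the remote directory and uploads its files

}else{

uploadFile(remoteFilePath,f); // a regular file: upload it into the current remote directory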

}

}

}

}

private static void uploadFile(Path remoteFilePath,File localFile) throws IOException {

InputStream in=new FileInputStream(localFile); // input stream over the local file

FSDataOutputStream out=fs.create(new Path(remoteFilePath,localFile.getName())); // output stream to a remote file with the same name

IOUtils.copyBytes(in,out,1024,true); // true: close both streams when the copy finishes

System.out.println("Uploaded "+localFile.getName());

}

}

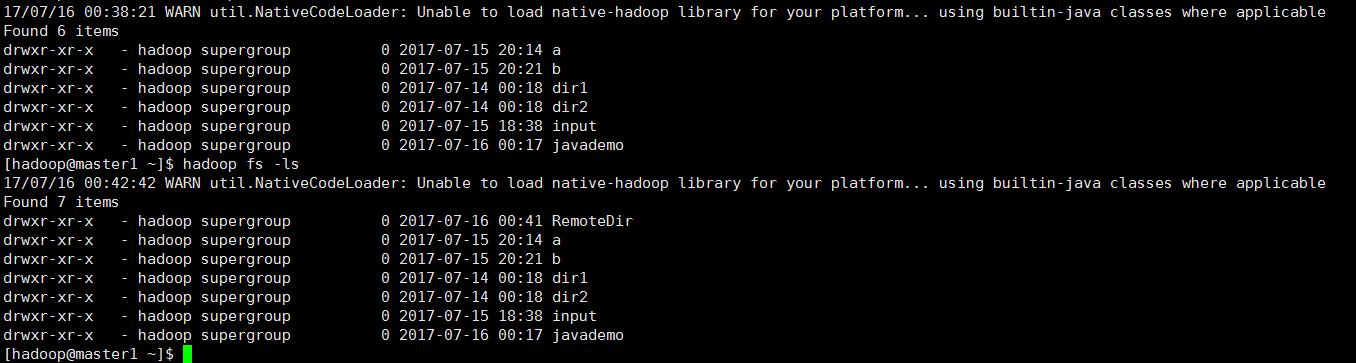

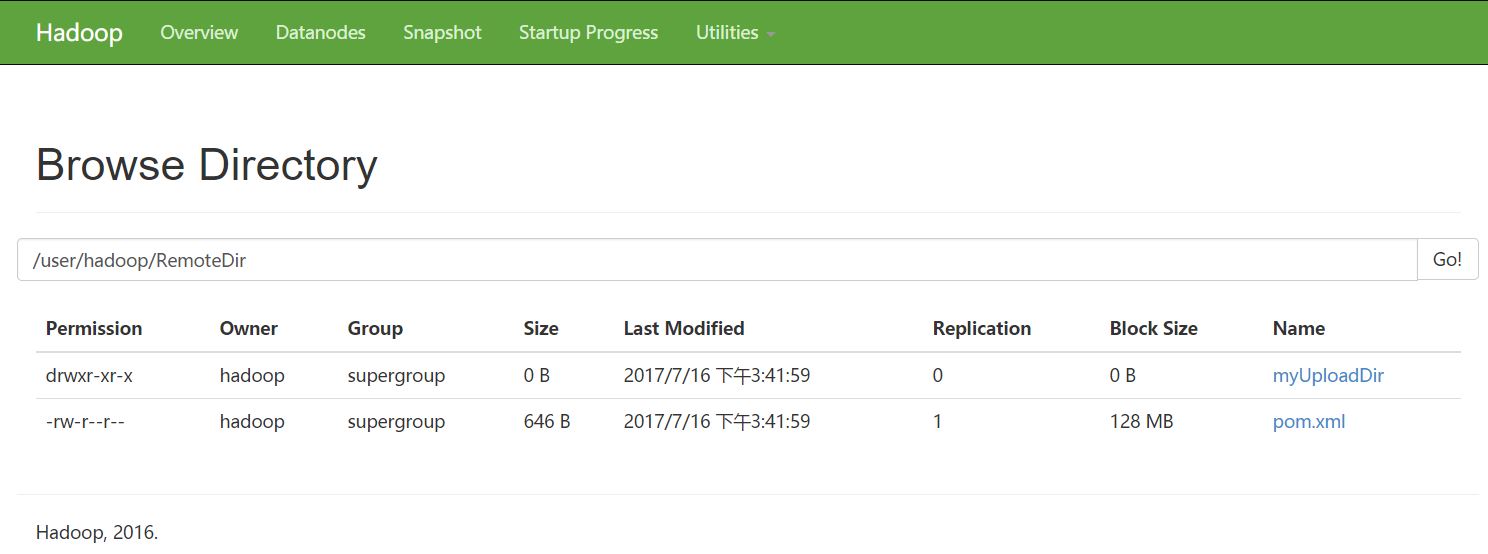

After the run, the remote directory listing changes: a new RemoteDir directory appears.

======================================================
