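Judging by what was finally implemented, this does not really qualify as abandoned-object detection; at best it is stationary-target detection [detecting a moving object that lingers in place for a long time]. A labmate needed it for a graduation project and I tried to help, but it never fully worked.

This post covers two approaches: a foreign paper with matching code [my improvement on top of the paper], and a Chinese paper with code [which failed; my skills fell short]. Both are built on frame differencing; neither uses SIFT feature matching or online learning.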

Algorithm 1:

"An abandoned object detection system based on dual background segmentation", IEEE 2009

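It maintains two background buffers:

Current_background: initialized to the first frame. After that, for each pixel, if the next frame's pixel is greater than the background pixel, the background pixel is incremented by 1; otherwise it is decremented by 1. [Rather neat. The benefit, in the paper's words: "This way, even if the foreground is changing at a fast pace, it will not affect the background but if the foreground is stationary, it gradually merges into the background." The result is still not as good as a Gaussian mixture model, though, because the adaptation period is too long and the updates are too frequent.]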
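A minimal sketch of that per-pixel rule, independent of OpenCV. The function name `stepBackground` and the flat `std::vector` image layout are my own assumptions for illustration, and unlike the project code further down it leaves a pixel untouched when frame and background are equal:

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper: move every background pixel one grey level toward the frame.
// Images are flat 8-bit grayscale buffers of equal size (an assumption).
void stepBackground(const std::vector<unsigned char>& frame,
                    std::vector<unsigned char>& background)
{
    assert(frame.size() == background.size());
    for (std::size_t k = 0; k < frame.size(); ++k) {
        if (frame[k] > background[k]) {
            ++background[k];   // frame is brighter: raise the background by 1
        } else if (frame[k] < background[k]) {
            --background[k];   // frame is darker: lower the background by 1
        }
        // Equal values are left alone (my choice, to avoid a +1/-1 flicker).
    }
}
```

Because each call changes a pixel by at most one grey level, a passer-by who covers a pixel for a few frames barely moves the background, while an object that stays put is absorbed after at most 255 frames.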

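Buffer_background: according to the paper it is refreshed every 20 seconds by copying Current_background directly, and abandoned objects are detected simply by subtracting Buffer_background from Current_background. [The paper's view is that once an abandoned object has been left for a full 20 seconds and is still there, it counts as background.] After that the paper adds a pile of machinery for tracking the region, which I was not very interested in.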
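The subtraction step can be sketched as a thresholded absolute difference between the two backgrounds. The name `diffMask` and the flat-vector layout are assumptions for illustration; the threshold plays the role of `Ta` in the project code below:

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <vector>

// Hypothetical helper: mark pixels where the two backgrounds disagree by more
// than `threshold` grey levels. 255 = candidate abandoned object, 0 = background.
std::vector<unsigned char> diffMask(const std::vector<unsigned char>& current,
                                    const std::vector<unsigned char>& buffered,
                                    int threshold)
{
    assert(current.size() == buffered.size());
    std::vector<unsigned char> mask(current.size(), 0);
    for (std::size_t k = 0; k < current.size(); ++k) {
        // Promote to int before subtracting so the difference cannot wrap around.
        int d = std::abs(int(current[k]) - int(buffered[k]));
        mask[k] = (d > threshold) ? 255 : 0;
    }
    return mask;
}
```

With a threshold of 90, a pixel that went from 50 in the buffered background to 200 in the current one is flagged, while a change from 60 to 100 is not.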

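My improvement aims to keep an object detected for as long as it remains abandoned. I added an extra abandoned-object background template, abandon_background, which records the background image from before the object was left.

A. If the object has left, the corresponding region of abandon is reset to the current values of current, and buffer is updated to the whole of current. B. If the object has not left, buffer is updated locally from abandon.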

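Overall algorithm:

1. Use the first frame to initialize Current_background and Buffer_background.

2. Compute the abandoned object from the two backgrounds.

3. Update Current_background on every frame.

4. When the time interval has elapsed, update Buffer_background and the abandoned-object background abandon_background, and reset the counter:

a. On the first update of abandon_background, wherever current and buffer differ, store the value of current; set everything else to 0.

b. Test whether the object has left: if the background pixels of abandon and current differ in the corresponding region, the object is still there; otherwise it has left.

c. If the object has left, update the corresponding region of abandon from current and copy the whole of current into buffer.

d. If the object has not left, update the corresponding region of buffer from abandon and leave abandon unchanged.

5. Read the next frame and go back to step 2.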
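Steps b to d can be sketched without OpenCV roughly as follows. The names `Image`, `objectStillPresent` and `refreshBuffer` are hypothetical, and the tolerance `tol` is my own addition (the project code compares pixels for exact equality, which corresponds to `tol = 0`):

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <vector>

typedef std::vector<unsigned char> Image;  // flat 8-bit grayscale buffer (assumption)

// Step b: the object counts as still present if any pixel recorded in `abandon`
// (non-zero entries) differs from the current background by more than `tol`.
bool objectStillPresent(const Image& abandon, const Image& current, int tol)
{
    assert(abandon.size() == current.size());
    for (std::size_t k = 0; k < abandon.size(); ++k) {
        if (abandon[k] != 0 &&
            std::abs(int(abandon[k]) - int(current[k])) > tol) {
            return true;
        }
    }
    return false;
}

// Steps c and d. Returns true when the object is judged to have left.
bool refreshBuffer(const Image& current, Image& buffer, Image& abandon, int tol)
{
    assert(current.size() == buffer.size() && current.size() == abandon.size());
    if (objectStillPresent(abandon, current, tol)) {
        // Step d: patch only the masked region of buffer; abandon stays as it is.
        for (std::size_t k = 0; k < abandon.size(); ++k) {
            if (abandon[k] != 0) buffer[k] = abandon[k];
        }
        return false;
    }
    // Step c: refresh the masked region of abandon, then copy all of current.
    for (std::size_t k = 0; k < abandon.size(); ++k) {
        if (abandon[k] != 0) abandon[k] = current[k];
    }
    buffer = current;
    return true;
}
```

One thing the sketch makes visible: a background pixel whose true value is 0 can never be recorded in the template, because 0 doubles as the "not part of the object" marker.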

Code:

Helper functions:

#include "Model.h"

#define Th 50

#define Ta 90

int first_update = 0; // first-update flag: set to 1 once abandon has been filled in

void calc_fore(IplImage *current,IplImage *back,IplImage *fore)

{

int i,j;

for (i=0;i<current->height;i++)

{

for (j=0;j<current->width;j++)

{

if (abs((u_char)current->imageData[i*current->widthStep+j] - (u_char)back->imageData[i*current->widthStep+j]) <=Ta )

{

fore->imageData[i*current->widthStep+j] = 0;//background

}else

{

fore->imageData[i*current->widthStep+j] = 255;//foreground

}

}

}

}

void update_currentback(IplImage *current,IplImage *curr_back)

{

int i,j;

int p,q;

for (i=0;i<current->height;i++)

{

for (j=0;j<current->width;j++)

{

p = (u_char)current->imageData[i * current->widthStep + j];

q = (u_char)curr_back->imageData[i * current->widthStep + j];

//printf("%d,%d\n",p,q);

if (p >= q)

{

if (q == 255)

{

q = 254;

}

curr_back->imageData[i * current->widthStep + j] = q + 1;

}else

{

if (q == 0)

{

q = 1;

}

curr_back->imageData[i * current->widthStep + j] = q - 1;

}

}

}

}

void update_bufferedback(IplImage *curr_back,IplImage *buf_back,IplImage *abandon)

{

int i,j,height,width;

int leave_flag = 0;

height = curr_back->height;

width = curr_back->widthStep;

if (first_update == 0)

{

for ( i = 0;i < height; i++)

{

for (j = 0;j < width; j++)

{

if (abs((u_char)curr_back->imageData[i*width+j] - (u_char)buf_back->imageData[i*width+j]) <=Th )

{

abandon->imageData[i*width+j] = 0;//background

}else

{

abandon->imageData[i*width+j] = curr_back->imageData[i*width+j];//foreground

first_update = 1;

}

}

}

return;

}

// Test whether the object has left

for ( i = 0;i < height; i++)

{

for (j = 0;j < width; j++)

{

if (abandon->imageData[i*width+j] != 0)

{

if (abandon->imageData[i*width+j] != curr_back->imageData[i*width+j])

{

leave_flag = 1; // background under the object mask differs from the current one. 1: object still present, 0: object has left

}

}

}

}

if(leave_flag == 0) // object has left

{

cvCopy(curr_back,buf_back);

for ( i = 0;i < height; i++)

{

for (j = 0;j < width; j++)

{

if (abandon->imageData[i*width+j] != 0)

{

abandon->imageData[i*width+j] = curr_back->imageData[i*width+j];

}

}

}

}else

{

for ( i = 0;i < height; i++)

{

for (j = 0;j < width; j++)

{

if (abandon->imageData[i*width+j] != 0)

{

buf_back->imageData[i*width+j] = abandon->imageData[i*width+j];

}

}

}

}

}

Main function:

// abandon_left.cpp : Defines the entry point for the console application.

//

#include "stdafx.h"

#include "Model.h"

int _tmain(int argc, _TCHAR* argv[])

{

CvCapture *capture=cvCreateFileCapture("test.avi");

IplImage *current_back,*buff_back,*abandon,*frame,*current_img,*fore;

int count,intern;

frame = capture ? cvQueryFrame(capture) : 0;

if (!frame) return -1; // test.avi could not be opened or contains no frames

fore = cvCreateImage(cvSize(frame->width,frame->height),IPL_DEPTH_8U,1);

current_back = cvCreateImage(cvSize(frame->width,frame->height),IPL_DEPTH_8U,1);

current_img = cvCreateImage(cvSize(frame->width,frame->height),IPL_DEPTH_8U,1);

buff_back = cvCreateImage(cvSize(frame->width,frame->height),IPL_DEPTH_8U,1);

abandon = cvCreateImage(cvSize(frame->width,frame->height),IPL_DEPTH_8U,1);

count=0;

intern = count + 20; // next buffer refresh, counted in frames here (the paper's interval is 20 seconds)

while (frame) // stop once the video runs out of frames

{

cvCvtColor(frame,current_img,CV_BGR2GRAY); // frames from cvQueryFrame are in BGR channel order

if (count == 0)

{

// Initialize the background templates

cvCopy(current_img,current_back);

cvCopy(current_img,buff_back);

}

if (count > 0)

{

// Compute the foreground mask

calc_fore(current_back,buff_back,fore);

// Update the tracking background

update_currentback(current_img,current_back);

if (count == intern)

{

update_bufferedback(current_back,buff_back,abandon);

intern = count + 20;

}

cvShowImage("current",current_img);

cvShowImage("current_back",current_back);

cvShowImage("buff_back",buff_back);

cvShowImage("abandon detection",fore);

}

count++;

frame =cvQueryFrame(capture);

if (cvWaitKey(23)>=0)

{

break;

}

}

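// Close the windows that cvShowImage opened during the loop and free the images

cvDestroyAllWindows();

cvReleaseImage(&fore);

cvReleaseImage(&current_back);

cvReleaseImage(&current_img);

cvReleaseImage(&buff_back);

cvReleaseImage(&abandon);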

cvReleaseCapture(&capture);

return 0;

}

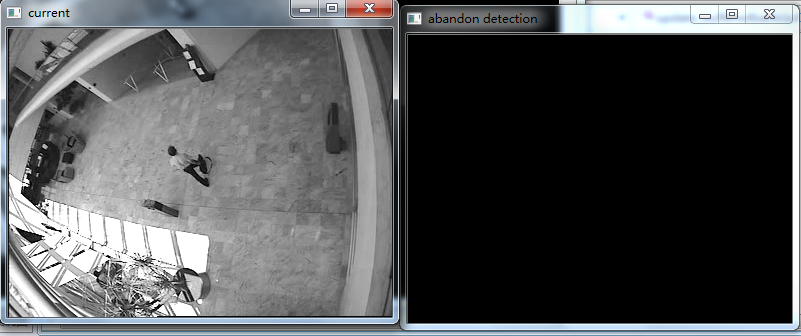

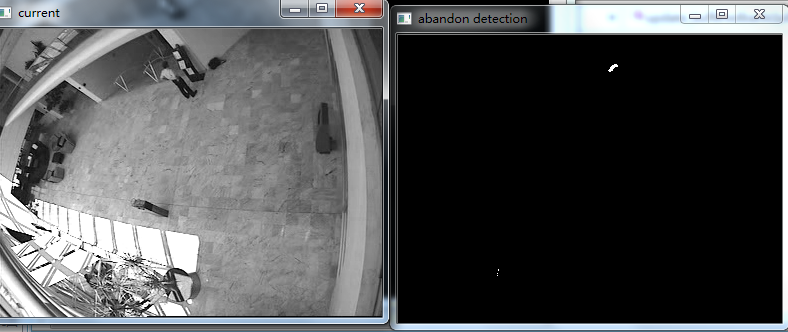

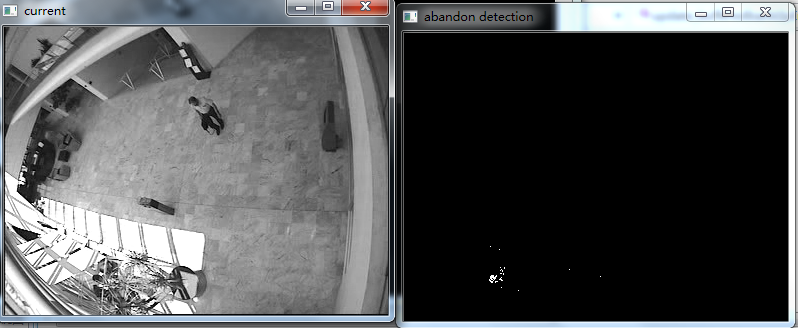

效果图:

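Note: the woman in the lower-left corner moves in a way that also fits the stationary-target pattern, so she is detected too. This could later be filtered out using the bounding rectangle's aspect ratio or similar cues.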
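I did not implement that filter, but a rough sketch of the aspect-ratio idea might look like this. Everything here is hypothetical: the `Box` struct, the function names, and the ratio limit are illustrative choices, and the mask is assumed to contain a single blob (separating blobs, for example by connected-component labelling, is left to the caller):

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

struct Box {
    int left, top, right, bottom;  // inclusive pixel bounds
    bool valid;                    // false when the mask has no foreground pixel
};

// Bounding rectangle of all non-zero pixels in a row-major width x height mask.
Box boundingBox(const std::vector<unsigned char>& mask, int width, int height)
{
    assert(width > 0 && height > 0);
    assert(mask.size() == static_cast<std::size_t>(width) * height);
    Box box = { width, height, -1, -1, false };
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            if (mask[y * width + x] == 0) continue;
            if (x < box.left)   box.left = x;
            if (x > box.right)  box.right = x;
            if (y < box.top)    box.top = y;
            if (y > box.bottom) box.bottom = y;
            box.valid = true;
        }
    }
    return box;
}

// Treat tall, narrow boxes as a standing person rather than a left-behind item.
// The limit is an assumed tuning parameter, not a value from either paper.
bool looksLikePerson(const Box& box, double maxHeightToWidth)
{
    if (!box.valid) return false;
    double w = box.right - box.left + 1;
    double h = box.bottom - box.top + 1;
    return h / w > maxHeightToWidth;
}
```

A limit around 2 would reject a blob that is three times taller than it is wide while keeping a squat, bag-shaped one; the right value would have to be tuned on real footage.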

Download link for the video and the code project: http://download.csdn.net/detail/jinshengtao/7157943

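Algorithm 2:

《一种基于双背景模型的遗留物检测方法》 ("An abandoned object detection method based on a dual background model")

It uses a dirty background and a pure background, defined as follows:

When no moving target appears in the scene, or the background is unaffected by the moving targets that do appear, the background is called a pure background. Otherwise it is called a dirty background.

Their update rules: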

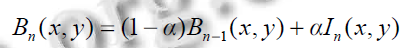

The general background is updated by frame differencing:

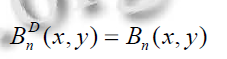

The dirty background is updated globally, by assigning the general background to it directly:

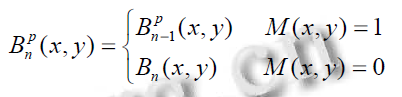

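The pure background is updated locally according to the foreground mask: where the mask marks motion, the pure background keeps its value from the previous frame; where the mask marks no motion, the pure background takes the current frame's general background.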
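As a small OpenCV-free sketch of that local update (the name `updatePureBackground` and the flat-vector layout are assumptions): updating in place means "keep the previous frame's value" is simply "do nothing" for pixels marked as moving.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper: refresh the pure background only where nothing moves.
// motionMask: 255 = moving, 0 = not moving; all buffers have the same size.
void updatePureBackground(const std::vector<unsigned char>& motionMask,
                          const std::vector<unsigned char>& general,
                          std::vector<unsigned char>& pure)
{
    assert(motionMask.size() == general.size());
    assert(motionMask.size() == pure.size());
    for (std::size_t k = 0; k < pure.size(); ++k) {
        if (motionMask[k] == 0) {
            pure[k] = general[k];  // static pixel: follow the general background
        }
        // Moving pixel: pure[k] keeps the value it had in the previous frame.
    }
}
```

The quality of the pure background therefore depends entirely on the motion mask: any moving pixel the mask misses leaks straight into it.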

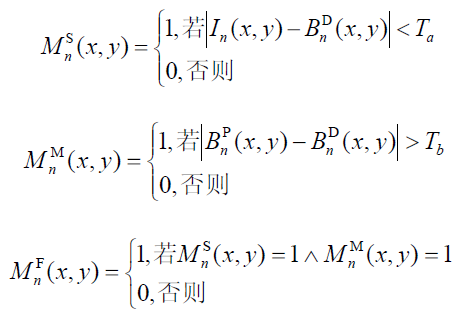

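Static-target foreground is obtained by combining two masks, as calc_StaticTarget in the code below does: a pixel is flagged when the current frame matches the dirty background (difference within Th) while the pure and dirty backgrounds disagree (difference above Tb).

I am not giving a detailed algorithm flow: the paper does not describe one, so I worked it out by trial and error, and the experiment failed anyway.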
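Written out as formulas, read off calc_StaticTarget in the code below, the detection would be roughly as follows. This is a reconstruction from my implementation, not necessarily the paper's exact formulation; $I$ for the current grayscale frame is my own notation, while $B_d$, $B_p$, $T_h$, $T_b$, $M_s$, $M_m$ and $M_f$ follow the names used in the code.

$$
\begin{aligned}
M_s(x,y) &= \begin{cases} 255, & |I(x,y) - B_d(x,y)| \le T_h \\ 0, & \text{otherwise} \end{cases} \\
M_m(x,y) &= \begin{cases} 255, & |B_p(x,y) - B_d(x,y)| > T_b \\ 0, & \text{otherwise} \end{cases} \\
M_f(x,y) &= \begin{cases} 255, & M_s(x,y) = 255 \text{ and } M_m(x,y) = 255 \\ 0, & \text{otherwise} \end{cases}
\end{aligned}
$$

In words, $M_s$ marks pixels that currently look static, $M_m$ marks pixels where the dirty background has drifted away from the pure one, and $M_f$ keeps only pixels where both hold.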

Code:

// left_bag.cpp : Defines the entry point for the console application.

//

#include "stdafx.h"

#include "cv.h"

#include "highgui.h"

#define u_char unsigned char

#define alfa 0.03

#define Th 60

#define Ta 60

#define Tb 40

void calc_fore(IplImage *current,IplImage *back,IplImage *fore)

{

int i,j;

for (i=0;i<current->height;i++)

{

for (j=0;j<current->width;j++)

{

if (abs((u_char)current->imageData[i*current->widthStep+j] - (u_char)back->imageData[i*current->widthStep+j]) <=Th )

{

fore->imageData[i*current->widthStep+j] = 0;//background

}else

{

fore->imageData[i*current->widthStep+j] = 255;//foreground

}

}

}

}

void update_back(IplImage *current,IplImage *back,IplImage *B_p,IplImage *B_d,IplImage *fore,IplImage *B_p_pre)

{

int i,j;

// Update B_n

for (i=0;i<current->height;i++)

{

for (j=0;j<current->width;j++)

{

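// imageData is (signed) char, so cast to u_char before blending; otherwise values above 127 are read as negative.

// Note: with alfa = 0.03 this keeps 97% of the current frame; a conventional running average would put the large weight on the old background instead.

back->imageData[i*current->widthStep+j] = (u_char)((1-alfa)*(u_char)current->imageData[i*current->widthStep+j] + alfa * (u_char)back->imageData[i*current->widthStep+j]);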

}

}

// Update B_d

cvCopy(back,B_d);

// Update B_p

for (i = 0;i < fore->height;i++)

{

for (j = 0;j < fore->width;j++)

{

if ((unsigned char)fore->imageData[i*fore->widthStep + j ] == 255)

{

B_p->imageData[i*fore->widthStep + j] = B_p_pre->imageData[i*fore->widthStep + j];

}else

{

B_p->imageData[i*fore->widthStep + j] = back->imageData[i*fore->widthStep + j];

}

}

}

}

void calc_StaticTarget(IplImage *current,IplImage *B_d,IplImage *B_p,IplImage *M_s,IplImage *M_m,IplImage *M_f)

{

int i,j;

for (i = 0;i < current->height;i++)

{

for (j = 0;j < current->width;j++)

{

if (abs((u_char)current->imageData[i*current->widthStep+j] - (u_char)B_d->imageData[i*current->widthStep+j]) <= Th )

{

M_s->imageData[i*current->widthStep+j] = 255;

}else

{

M_s->imageData[i*current->widthStep+j] = 0;

}

if (abs((u_char)B_p->imageData[i*current->widthStep+j] - (u_char)B_d->imageData[i*current->widthStep+j]) > Tb)

{

M_m->imageData[i*current->widthStep+j] = 255;

}else

{

M_m->imageData[i*current->widthStep+j] = 0;

}

if (((unsigned char)M_m->imageData[i*current->widthStep+j] == 255) &&((unsigned char)M_s->imageData[i*current->widthStep+j] == 255))

{

M_f->imageData[i*current->widthStep+j] = 255;

}else

{

M_f->imageData[i*current->widthStep+j] = 0;

}

}

}

}

int _tmain(int argc, _TCHAR* argv[])

{

CvCapture *capture=cvCreateFileCapture("test.avi");

IplImage *frame,*current_img,*B_n,*B_p,*B_d,*B_p_pre;

IplImage *M,*M1,*M_s,*M_m,*M_f;

int count,i,j;

frame = capture ? cvQueryFrame(capture) : 0;

if (!frame) return -1; // test.avi could not be opened or contains no frames

current_img = cvCreateImage(cvSize(frame->width,frame->height),IPL_DEPTH_8U,1);

B_n = cvCreateImage(cvSize(frame->width,frame->height),IPL_DEPTH_8U,1);

B_p = cvCreateImage(cvSize(frame->width,frame->height),IPL_DEPTH_8U,1);

B_d = cvCreateImage(cvSize(frame->width,frame->height),IPL_DEPTH_8U,1);

B_p_pre = cvCreateImage(cvSize(frame->width,frame->height),IPL_DEPTH_8U,1);

M = cvCreateImage(cvSize(frame->width,frame->height),IPL_DEPTH_8U,1);

M1 = cvCreateImage(cvSize(frame->width,frame->height),IPL_DEPTH_8U,1);

M_s = cvCreateImage(cvSize(frame->width,frame->height),IPL_DEPTH_8U,1);

M_m = cvCreateImage(cvSize(frame->width,frame->height),IPL_DEPTH_8U,1);

M_f = cvCreateImage(cvSize(frame->width,frame->height),IPL_DEPTH_8U,1);

count=0;

while (frame) // stop once the video runs out of frames

{

cvCvtColor(frame,current_img,CV_BGR2GRAY); // frames from cvQueryFrame are in BGR channel order

if (count == 0)

{

// Initialize all the background templates

cvCopy(current_img,B_n);

cvCopy(current_img,B_p);

cvCopy(current_img,B_d);

cvCopy(current_img,B_p_pre);

}

if (count > 1)

{

// Compute the foreground mask

calc_fore(current_img,B_n,M1);

// Dilation and erosion

cvDilate(M1, M, 0, 1);

cvErode(M, M1, 0, 2);

cvDilate(M1, M, 0,1);

// Static-target detection

calc_StaticTarget(current_img,B_d,B_p,M_s,M_m,M_f);

// Update the tracking background

update_back(current_img,B_n,B_p,B_d,M,B_p_pre);

cvShowImage("pure ground",B_p);

cvShowImage("dirty ground",B_d);

cvShowImage("static target",M_f);

cvShowImage("fore ground",M);

cvCopy(B_p,B_p_pre);

}

count++;

frame =cvQueryFrame(capture);

if (cvWaitKey(23)>=0)

{

break;

}

}

// Close the windows that cvShowImage opened during the loop

cvDestroyAllWindows();

cvReleaseCapture(&capture);

return 0;

}

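Algorithm 3 [a demo from the MATLAB toolbox]

I did not read the theory. Typing edit videoabandonedobj in the MATLAB console brings up the corresponding code.

Searching the help for Abandoned Object Detection brings up an introduction to the theory.

Video material download: http://www.mathworks.cn/products/viprocessing/vipdemos.html

Code: [runs on version 2010b]

clc;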

clear;

status = videogetdemodata('viptrain.avi');

if ~status

displayEndOfDemoMessage(mfilename);

return;

end

roi = [80 100 240 360];

% Maximum number of objects to track

maxNumObj = 200;

% Number of frames that an object must remain stationary before an alarm is

% raised

alarmCount = 45;

% Maximum number of frames that an abandoned object can be hidden before it

% is no longer tracked

maxConsecutiveMiss = 4;

% Maximum allowable change in object area in percent

areaChangeFraction = 15;

% Maximum allowable change in object centroid in percent

centroidChangeFraction = 20;

% Minimum ratio between the number of frames in which an object is detected

% and the total number of frames, for that object to be tracked.

minPersistenceRatio = 0.7;

% Offsets for drawing bounding boxes in original input video

PtsOffset = int32(repmat([roi(1); roi(2); 0 ; 0],[1 maxNumObj]));

hVideoSrc = video.MultimediaFileReader;

hVideoSrc.Filename = 'viptrain.avi';

hVideoSrc.VideoOutputDataType = 'single';

hColorConv = video.ColorSpaceConverter;

hColorConv.Conversion = 'RGB to YCbCr';

hAutothreshold = video.Autothresholder;

hAutothreshold.ThresholdScaleFactor = 1.3;

hClosing = video.MorphologicalClose;

hClosing.Neighborhood = strel('square',5);

hBlob = video.BlobAnalysis;

hBlob.MaximumCount = maxNumObj;

hBlob.NumBlobsOutputPort = true;

hBlob.MinimumBlobAreaSource = 'Property';

hBlob.MinimumBlobArea = 100;

hBlob.MaximumBlobAreaSource = 'Property';

hBlob.MaximumBlobArea = 2500;

hBlob.ExcludeBorderBlobs = true;

hDrawRectangles1 = video.ShapeInserter;

hDrawRectangles1.Fill = true;

hDrawRectangles1.FillColor = 'Custom';

hDrawRectangles1.CustomFillColor = [1 0 0];

hDrawRectangles1.Opacity = 0.5;

hDisplayCount = video.TextInserter;

hDisplayCount.Text = '%4d';

hDisplayCount.Color = [1 1 1];

hAbandonedObjects = video.VideoPlayer;

hAbandonedObjects.Name = 'Abandoned Objects';

hAbandonedObjects.Position = [10 300 roi(4)+25 roi(3)+25];

hDrawRectangles2 = video.ShapeInserter;

hDrawRectangles2.BorderColor = 'Custom';

hDrawRectangles2.CustomBorderColor = [0 1 0];

hDrawBBox = video.ShapeInserter;

hDrawBBox.BorderColor = 'Custom';

hDrawBBox.CustomBorderColor = [1 1 0];

hAllObjects = video.VideoPlayer;

hAllObjects.Position = [45+roi(4) 300 roi(4)+25 roi(3)+25];

hAllObjects.Name = 'All Objects';

hDrawRectangles3 = video.ShapeInserter;

hDrawRectangles3.BorderColor = 'Custom';

hDrawRectangles3.CustomBorderColor = [0 1 0];

hThresholdDisplay = video.VideoPlayer;

hThresholdDisplay.Position = ...

[80+2*roi(4) 300 roi(4)-roi(2)+25 roi(3)-roi(1)+25];

hThresholdDisplay.Name = 'Threshold';

firsttime = true;

while ~isDone(hVideoSrc)

Im = step(hVideoSrc);

% Select the region of interest from the original video

OutIm = Im(roi(1):end, roi(2):end, :);

YCbCr = step(hColorConv, OutIm);

CbCr = complex(YCbCr(:,:,2), YCbCr(:,:,3));

% Store the first video frame as the background

if firsttime

firsttime = false;

BkgY = YCbCr(:,:,1);

BkgCbCr = CbCr;

end

SegY = step(hAutothreshold, abs(YCbCr(:,:,1)-BkgY));

SegCbCr = abs(CbCr-BkgCbCr) > 0.05;

% Fill in small gaps in the detected objects

Segmented = step(hClosing, SegY | SegCbCr);

% Perform blob analysis

[Area, Centroid, BBox, Count] = step(hBlob, Segmented);

% Call the helper function that tracks the identified objects and

% returns the bounding boxes and the number of the abandoned objects.

[OutCount, OutBBox] = videoobjtracker(Area, Centroid, BBox, Count,...

areaChangeFraction, centroidChangeFraction, maxConsecutiveMiss, ...

minPersistenceRatio, alarmCount);

% Display the abandoned object detection results

Imr = step(hDrawRectangles1, Im, OutBBox+PtsOffset);

Imr(1:15,1:30,:) = 0;

Imr = step(hDisplayCount, Imr, OutCount);

step(hAbandonedObjects, Imr);

% Display all the detected objects

Imr = step(hDrawRectangles2, Im, BBox+PtsOffset);

Imr(1:15,1:30,:) = 0;

Imr = step(hDisplayCount, Imr, OutCount);

Imr = step(hDrawBBox, Imr, roi);

step(hAllObjects, Imr);

% Display the segmented video

SegIm = step(hDrawRectangles3, repmat(Segmented,[1 1 3]), BBox);

step(hThresholdDisplay, SegIm);

end

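release(hVideoSrc);

This is not my own research area, but at least I gave it a try. My thesis defense is coming up soon, so here's to building up some good karma first~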
