DINOv2

Meta AI团队开源的大模型,可用于分类、分割、图像检索、深度估计等下游任务,且效果优异。

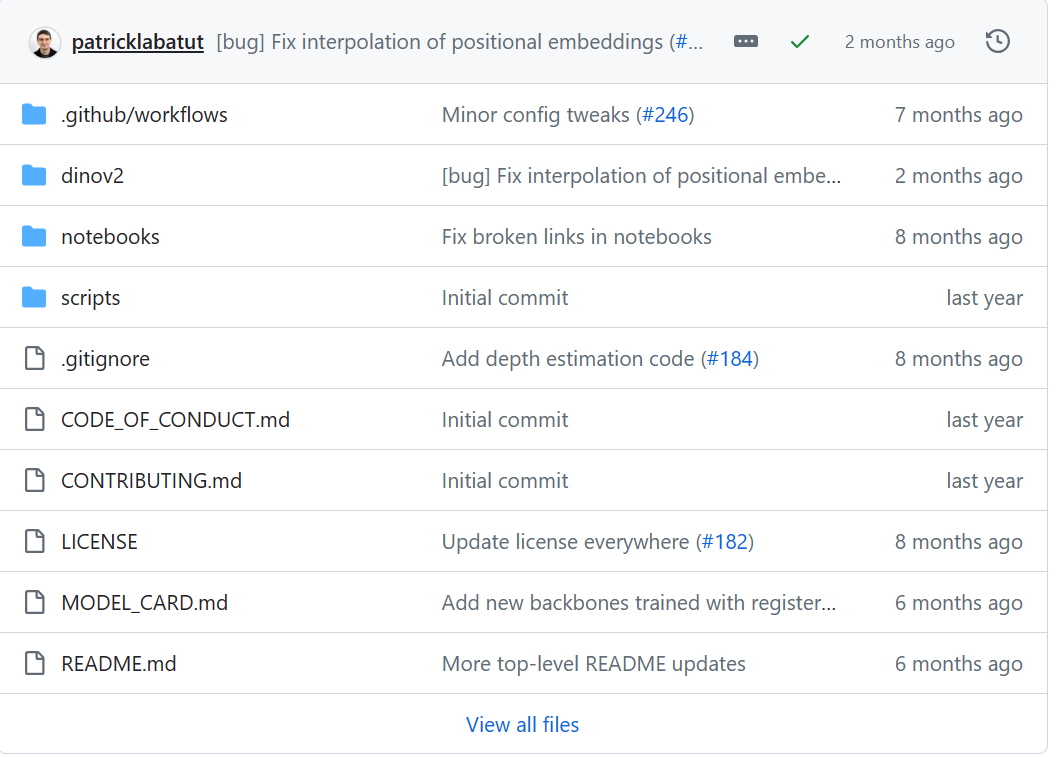

https://github.com/facebookresearch/dinov2

深度估计

通过输入RGB图像,深度学习模型对该图像进行深度估计并输出深度图。

准备工作

从下载前面网址进入下载DINOv2

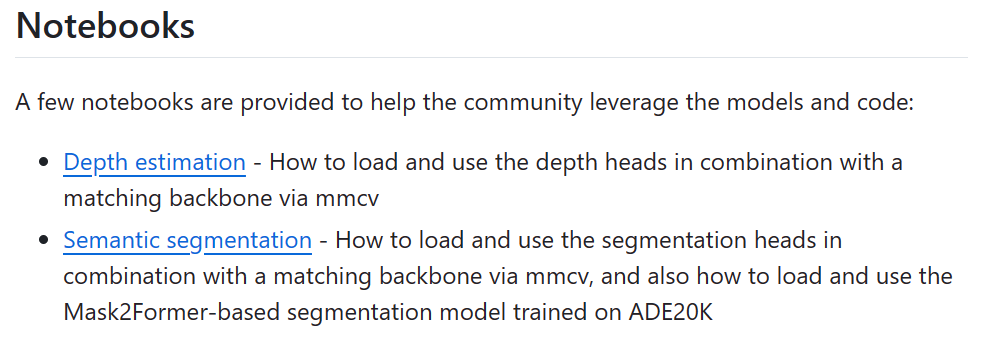

在README.md中找到“Notebooks”

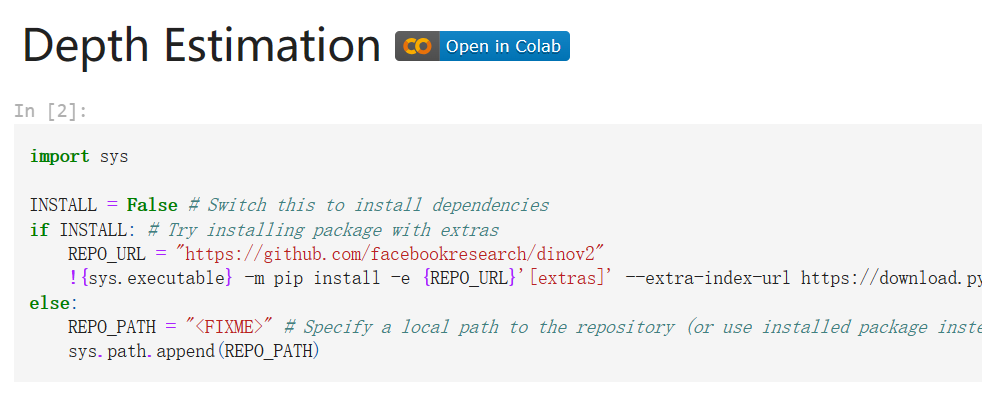

点击"Depth estimation",进入作者的代码

代码运行

新建py,粘贴代码,试调运行,缺失的文件会在运行时自动下载,如有其他错误,跟着往常一样调试就行。

import math

import itertools

import os

from functools import partial

import numpy as np

import torch

import torch.nn.functional as F

from dinov2.eval.depth.models import build_depther

class CenterPadding(torch.nn.Module):

def __init__(self, multiple):

super().__init__()

self.multiple = multiple

def _get_pad(self, size):

new_size = math.ceil(size / self.multiple) * self.multiple

pad_size = new_size - size

pad_size_left = pad_size // 2

pad_size_right = pad_size - pad_size_left

return pad_size_left, pad_size_right

@torch.inference_mode()

def forward(self, x):

pads = list(itertools.chain.from_iterable(self._get_pad(m) for m in x.shape[:1:-1]))

output = F.pad(x

本文介绍了如何使用MetaAI团队开源的DINOv2模型进行深度估计,包括下载模型、准备代码、运行过程以及处理可能遇到的问题。开发者可以按照步骤操作,通过输入RGB图像获取深度图。

本文介绍了如何使用MetaAI团队开源的DINOv2模型进行深度估计,包括下载模型、准备代码、运行过程以及处理可能遇到的问题。开发者可以按照步骤操作,通过输入RGB图像获取深度图。

最低0.47元/天 解锁文章

最低0.47元/天 解锁文章

1726

1726

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?