YOLOv7 Network Architecture

Paper: https://arxiv.org/pdf/2207.02696

Code: https://github.com/WongKinYiu/yolov7

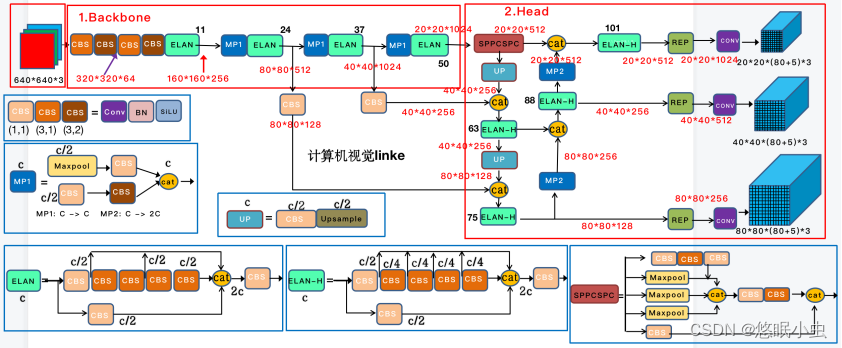

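The overall model architecture of the YOLOv7 network is outlined below.

YOLOv7 largely follows the earlier YOLO models covered in this series. Its main innovations fall into three parts: model structural re-parameterization, E-ELAN, and the transition module (MP).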

Each of these innovations is described in detail below.

Model Structural Re-parameterization

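Model re-parameterization merges multiple computational modules into a single one at inference time. It can be regarded as an ensemble technique and falls into two categories: module-level ensemble and model-level ensemble. Model-level re-parameterization has two common practices:

- Train multiple identical models on different training data, then average the weights of the trained models.

- Take a weighted average of the model weights at different iteration counts.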
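The two model-level practices above can be sketched roughly as follows. This is a minimal illustration rather than code from the YOLOv7 repository: the helper names `average_state_dicts` and `ema_update`, the tiny `nn.Linear` stand-in models, and the decay value are all assumptions made for the example.

```python
import torch
import torch.nn as nn


def average_state_dicts(state_dicts):
    """Element-wise mean of state dicts that share the same keys and shapes."""
    return {
        key: torch.stack([sd[key].float() for sd in state_dicts]).mean(dim=0)
        for key in state_dicts[0]
    }


def ema_update(ema_state, new_state, decay=0.999):
    """Weighted average over iterations: ema = decay * ema + (1 - decay) * new."""
    for key, value in new_state.items():
        ema_state[key].mul_(decay).add_(value.float(), alpha=1.0 - decay)
    return ema_state


# Two tiny models stand in for identical models trained on different data.
model_a, model_b = nn.Linear(4, 2), nn.Linear(4, 2)
with torch.no_grad():
    for p in model_a.parameters():
        p.fill_(1.0)
    for p in model_b.parameters():
        p.fill_(3.0)

# Practice 1: average the weights of several trained models.
averaged = average_state_dicts([model_a.state_dict(), model_b.state_dict()])
final_model = nn.Linear(4, 2)
final_model.load_state_dict(averaged)

# Practice 2: running weighted average across training iterations.
ema = {k: v.clone().float() for k, v in model_a.state_dict().items()}
ema = ema_update(ema, model_b.state_dict(), decay=0.5)
```

In both cases only one set of weights is kept for inference, so the ensemble adds no inference cost.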

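Module-level re-parameterization, by contrast, splits a single module into multiple identical or different branches during training and, at inference time, integrates those branches into one fully equivalent module. The figure below shows several modules the authors built with re-parameterization, arranged by the number of groups.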

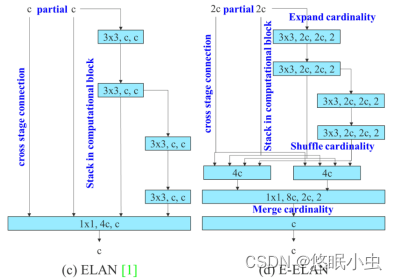
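To make "fully equivalent" concrete for the module-level case, here is a minimal sketch that merges a parallel 3x3 branch and 1x1 branch into a single 3x3 convolution and checks that the outputs match. BatchNorm is left out for brevity, and the layer sizes are arbitrary assumptions; the RepConv class later in this article handles the full case with BatchNorm and an identity branch.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

# Training-time form: two parallel branches applied to the same input.
conv3x3 = nn.Conv2d(8, 8, kernel_size=3, padding=1, bias=True)
conv1x1 = nn.Conv2d(8, 8, kernel_size=1, padding=0, bias=True)

# Inference-time form: one 3x3 convolution that holds the merged weights.
merged = nn.Conv2d(8, 8, kernel_size=3, padding=1, bias=True)
with torch.no_grad():
    # Zero-pad the 1x1 kernel to 3x3 so it sits at the kernel centre, then add.
    merged.weight.copy_(conv3x3.weight + F.pad(conv1x1.weight, [1, 1, 1, 1]))
    merged.bias.copy_(conv3x3.bias + conv1x1.bias)

x = torch.randn(2, 8, 16, 16)
with torch.no_grad():
    y_branches = conv3x3(x) + conv1x1(x)
    y_merged = merged(x)

# The difference should only be floating-point rounding noise.
max_abs_diff = (y_branches - y_merged).abs().max().item()
print(max_abs_diff)
```

The merge works because convolution is linear in its kernel, so summing the outputs of two branches equals convolving once with the sum of their (size-aligned) kernels.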

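E-ELAN

E-ELAN extends ELAN. In a large-scale ELAN, a stable state is reached regardless of the gradient path length and the number of stacked computational blocks. If more computational blocks are stacked without limit, however, this stable state may be destroyed and parameter utilization drops. The proposed E-ELAN uses expand, shuffle, and merge cardinality to keep improving the network's learning ability without destroying the original gradient path. Stacking more blocks also corresponds to a denser residual structure. Residual networks are easy to optimize and can gain accuracy from considerably greater depth, and the skip connections in their residual blocks alleviate the vanishing-gradient problem that added depth brings to deep neural networks.

Architecturally, E-ELAN changes only the structure inside the computational block and leaves the transition layer completely unchanged. Group convolution is used to expand the channels and cardinality of the computational blocks, with the same group parameter and channel multiplier applied to all computational blocks in a layer. The feature map computed by each block is then shuffled into g groups according to the group parameter g, and the groups are concatenated together. At this point the number of channels in each group of feature maps equals the number of channels in the original architecture. Finally, the g groups of feature maps are added together to perform merge cardinality.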

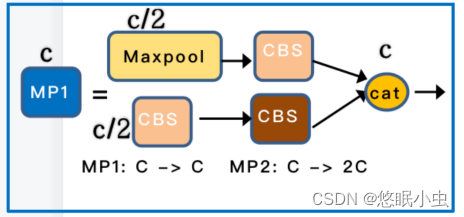
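The expand, shuffle, and merge-cardinality steps can be pictured with a rough tensor-level sketch. This is one possible reading of the description, not the authors' implementation: the sizes `g`, `c`, and `n_blocks`, the use of a plain grouped `nn.Conv2d` as the computational block, and the exact regrouping order are all assumptions.

```python
import torch
import torch.nn as nn

g, c, n_blocks = 2, 16, 3  # hypothetical group count, base channels, block count

# Expand: every computational block is a grouped conv with g * c channels,
# sharing the same group parameter and channel multiplier.
blocks = nn.ModuleList(
    [nn.Conv2d(g * c, g * c, kernel_size=3, padding=1, groups=g) for _ in range(n_blocks)]
)

x = torch.randn(1, g * c, 20, 20)
outs = []
h = x
with torch.no_grad():
    for block in blocks:
        h = block(h)
        outs.append(h)

# Shuffle: split each block output into g chunks of c channels, then
# concatenate chunk j of every block to form group j.
chunks = [o.chunk(g, dim=1) for o in outs]
groups = [torch.cat([chunks[b][j] for b in range(n_blocks)], dim=1) for j in range(g)]

# Each group now has n_blocks * c channels, the width an ungrouped ELAN
# concatenation of the same blocks would have.
# Merge cardinality: add the g groups element-wise.
merged = torch.stack(groups, dim=0).sum(dim=0)
print(tuple(merged.shape))
```

Note that the implementation listed later in this article uses `Multi_Concat_Block`, which concatenates selected intermediate outputs and does not include the grouped shuffle-and-add step.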

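Transition Module (MP)

The network uses a new transition module (MP) for downsampling. In convolutional neural networks, the usual downsampling transition is either a 3x3 convolution with stride 2 or a max pooling layer with stride 2. YOLOv7 combines the two: each transition module has two branches. The upper branch is a stride-2 max pooling followed by a 1x1 convolution, and the lower branch is a 1x1 convolution followed by a 3x3 convolution with stride 2. The outputs of the two branches are concatenated along the channel dimension.

Building the YOLOv7 Network

Building the Backbone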
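A quick shape check helps confirm that both branches halve the spatial size and that concatenation restores the channel count. The sketch below uses bare `nn.Conv2d` layers without BatchNorm or activation, and the class name `TwoBranchDownsample` is a hypothetical stand-in for illustration only.

```python
import torch
import torch.nn as nn


class TwoBranchDownsample(nn.Module):
    """Hypothetical minimal version of the two-branch transition module."""

    def __init__(self, c_in, c_branch):
        super().__init__()
        # Upper branch: stride-2 max pooling, then a 1x1 convolution.
        self.pool_branch = nn.Sequential(
            nn.MaxPool2d(kernel_size=2, stride=2),
            nn.Conv2d(c_in, c_branch, kernel_size=1),
        )
        # Lower branch: 1x1 convolution, then a 3x3 convolution with stride 2.
        self.conv_branch = nn.Sequential(
            nn.Conv2d(c_in, c_branch, kernel_size=1),
            nn.Conv2d(c_branch, c_branch, kernel_size=3, stride=2, padding=1),
        )

    def forward(self, x):
        # Concatenate the two downsampled branches along the channel axis.
        return torch.cat([self.conv_branch(x), self.pool_branch(x)], dim=1)


x = torch.randn(1, 256, 160, 160)
with torch.no_grad():
    y = TwoBranchDownsample(256, 128)(x)
out_shape = tuple(y.shape)
print(out_shape)  # (1, 256, 80, 80)
```

With 128 channels per branch, a 160x160x256 input becomes 80x80x256: resolution is halved while the channel count is preserved.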

import torch
import torch.nn as nn


def autopad(k, p=None):
    # Default to "same" padding (k // 2) when no padding is given.
    if p is None:
        p = k // 2 if isinstance(k, int) else [x // 2 for x in k]
    return p


class SiLU(nn.Module):
    @staticmethod
    def forward(x):
        return x * torch.sigmoid(x)


class Conv(nn.Module):
    # Conv2d + BatchNorm2d + activation
    def __init__(self, c1, c2, k=1, s=1, p=None, g=1, act=SiLU()):  # ch_in, ch_out, kernel, stride, padding, groups
        super(Conv, self).__init__()
        self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p), groups=g, bias=False)
        self.bn = nn.BatchNorm2d(c2, eps=0.001, momentum=0.03)
        self.act = nn.LeakyReLU(0.1, inplace=True) if act is True else (act if isinstance(act, nn.Module) else nn.Identity())

    def forward(self, x):
        return self.act(self.bn(self.conv(x)))

    def fuseforward(self, x):
        # Used after fusing, when BatchNorm has been folded into self.conv.
        return self.act(self.conv(x))

class Multi_Concat_Block(nn.Module):
    def __init__(self, c1, c2, c3, n=4, e=1, ids=[0]):
        super(Multi_Concat_Block, self).__init__()
        c_ = int(c2 * e)

        self.ids = ids
        self.cv1 = Conv(c1, c_, 1, 1)
        self.cv2 = Conv(c1, c_, 1, 1)
        self.cv3 = nn.ModuleList(
            [Conv(c_ if i == 0 else c2, c2, 3, 1) for i in range(n)]
        )
        self.cv4 = Conv(c_ * 2 + c2 * (len(ids) - 2), c3, 1, 1)

    def forward(self, x):
        x_1 = self.cv1(x)
        x_2 = self.cv2(x)

        x_all = [x_1, x_2]
        # [-1, -3, -5, -6] => [5, 3, 1, 0]
        for i in range(len(self.cv3)):
            x_2 = self.cv3[i](x_2)
            x_all.append(x_2)

        # Concatenate the intermediate outputs selected by ids, then fuse with a 1x1 conv.
        out = self.cv4(torch.cat([x_all[id] for id in self.ids], 1))
        return out

class MP(nn.Module):
    def __init__(self, k=2):
        super(MP, self).__init__()
        self.m = nn.MaxPool2d(kernel_size=k, stride=k)

    def forward(self, x):
        return self.m(x)


class Transition_Block(nn.Module):
    def __init__(self, c1, c2):
        super(Transition_Block, self).__init__()
        self.cv1 = Conv(c1, c2, 1, 1)
        self.cv2 = Conv(c1, c2, 1, 1)
        self.cv3 = Conv(c2, c2, 3, 2)

        self.mp = MP()

    def forward(self, x):
        # 160, 160, 256 => 80, 80, 256 => 80, 80, 128
        x_1 = self.mp(x)
        x_1 = self.cv1(x_1)

        # 160, 160, 256 => 160, 160, 128 => 80, 80, 128
        x_2 = self.cv2(x)
        x_2 = self.cv3(x_2)

        # 80, 80, 128 cat 80, 80, 128 => 80, 80, 256
        return torch.cat([x_2, x_1], 1)

class Backbone(nn.Module):
    def __init__(self, transition_channels, block_channels, n, phi, pretrained=False):
        super().__init__()
        #-----------------------------------------------#
        #   The input image is 640, 640, 3
        #-----------------------------------------------#
        ids = {
            'l': [-1, -3, -5, -6],
            'x': [-1, -3, -5, -7, -8],
        }[phi]
        # 640, 640, 3 => 640, 640, 32 => 320, 320, 64
        self.stem = nn.Sequential(
            Conv(3, transition_channels, 3, 1),
            Conv(transition_channels, transition_channels * 2, 3, 2),
            Conv(transition_channels * 2, transition_channels * 2, 3, 1),
        )
        # 320, 320, 64 => 160, 160, 128 => 160, 160, 256
        self.dark2 = nn.Sequential(
            Conv(transition_channels * 2, transition_channels * 4, 3, 2),
            Multi_Concat_Block(transition_channels * 4, block_channels * 2, transition_channels * 8, n=n, ids=ids),
        )
        # 160, 160, 256 => 80, 80, 256 => 80, 80, 512
        self.dark3 = nn.Sequential(
            Transition_Block(transition_channels * 8, transition_channels * 4),
            Multi_Concat_Block(transition_channels * 8, block_channels * 4, transition_channels * 16, n=n, ids=ids),
        )
        # 80, 80, 512 => 40, 40, 512 => 40, 40, 1024
        self.dark4 = nn.Sequential(
            Transition_Block(transition_channels * 16, transition_channels * 8),
            Multi_Concat_Block(transition_channels * 16, block_channels * 8, transition_channels * 32, n=n, ids=ids),
        )
        # 40, 40, 1024 => 20, 20, 1024 => 20, 20, 1024
        self.dark5 = nn.Sequential(
            Transition_Block(transition_channels * 32, transition_channels * 16),
            Multi_Concat_Block(transition_channels * 32, block_channels * 8, transition_channels * 32, n=n, ids=ids),
        )

        if pretrained:
            url = {
                "l": 'https://github.com/bubbliiiing/yolov7-pytorch/releases/download/v1.0/yolov7_backbone_weights.pth',
                "x": 'https://github.com/bubbliiiing/yolov7-pytorch/releases/download/v1.0/yolov7_x_backbone_weights.pth',
            }[phi]
            checkpoint = torch.hub.load_state_dict_from_url(url=url, map_location="cpu", model_dir="./model_data")
            self.load_state_dict(checkpoint, strict=False)
            print("Load weights from " + url.split('/')[-1])

    def forward(self, x):
        x = self.stem(x)
        x = self.dark2(x)
        #-----------------------------------------------#
        #   dark3 outputs 80, 80, 512: an effective feature map
        #-----------------------------------------------#
        x = self.dark3(x)
        feat1 = x
        #-----------------------------------------------#
        #   dark4 outputs 40, 40, 1024: an effective feature map
        #-----------------------------------------------#
        x = self.dark4(x)
        feat2 = x
        #-----------------------------------------------#
        #   dark5 outputs 20, 20, 1024: an effective feature map
        #-----------------------------------------------#
        x = self.dark5(x)
        feat3 = x
        return feat1, feat2, feat3
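The shape comments in the backbone follow directly from the strides (8, 16, 32) and the channel multipliers (16, 32, 32) of dark3, dark4, and dark5. As a small sanity check, the arithmetic can be written out in plain Python. The helper `backbone_feature_shapes` is a hypothetical name, and the sketch assumes the input size is divisible by 32.

```python
def backbone_feature_shapes(transition_channels, input_size=640):
    """Return (H, W, C) for feat1, feat2, feat3 of the backbone above."""
    # (total stride, multiplier of transition_channels) for dark3, dark4, dark5
    specs = [(8, 16), (16, 32), (32, 32)]
    return [
        (input_size // stride, input_size // stride, transition_channels * mult)
        for stride, mult in specs
    ]


shapes_l = backbone_feature_shapes(32)  # 'l' variant: transition_channels = 32
shapes_x = backbone_feature_shapes(40)  # 'x' variant: transition_channels = 40
print(shapes_l)  # [(80, 80, 512), (40, 40, 1024), (20, 20, 1024)]
print(shapes_x)  # [(80, 80, 640), (40, 40, 1280), (20, 20, 1280)]
```

The numeric comments in the listing therefore describe the 'l' variant. For 'x' the spatial sizes are the same, but every channel count scales by 40/32.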

Full YOLOv7 Network Implementation

import numpy as np
import torch
import torch.nn as nn

from nets.backbone import Backbone, Multi_Concat_Block, Conv, SiLU, Transition_Block, autopad


class SPPCSPC(nn.Module):
    # CSP https://github.com/WongKinYiu/CrossStagePartialNetworks
    def __init__(self, c1, c2, n=1, shortcut=False, g=1, e=0.5, k=(5, 9, 13)):
        super(SPPCSPC, self).__init__()
        c_ = int(2 * c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, 1, 1)
        self.cv2 = Conv(c1, c_, 1, 1)
        self.cv3 = Conv(c_, c_, 3, 1)
        self.cv4 = Conv(c_, c_, 1, 1)
        # Stride-1 max pooling with "same" padding keeps the spatial size for every kernel.
        self.m = nn.ModuleList([nn.MaxPool2d(kernel_size=x, stride=1, padding=x // 2) for x in k])
        self.cv5 = Conv(4 * c_, c_, 1, 1)
        self.cv6 = Conv(c_, c_, 3, 1)
        # The output has c2 channels
        self.cv7 = Conv(2 * c_, c2, 1, 1)

    def forward(self, x):
        x1 = self.cv4(self.cv3(self.cv1(x)))
        y1 = self.cv6(self.cv5(torch.cat([x1] + [m(x1) for m in self.m], 1)))
        y2 = self.cv2(x)
        return self.cv7(torch.cat((y1, y2), dim=1))

class RepConv(nn.Module):
    # Re-parameterizable convolution (RepVGG style)
    # https://arxiv.org/abs/2101.03697
    def __init__(self, c1, c2, k=3, s=1, p=None, g=1, act=SiLU(), deploy=False):
        super(RepConv, self).__init__()
        self.deploy = deploy
        self.groups = g
        self.in_channels = c1
        self.out_channels = c2

        assert k == 3
        assert autopad(k, p) == 1

        padding_11 = autopad(k, p) - k // 2
        self.act = nn.LeakyReLU(0.1, inplace=True) if act is True else (act if isinstance(act, nn.Module) else nn.Identity())

        if deploy:
            # Inference form: a single 3x3 convolution with bias.
            self.rbr_reparam = nn.Conv2d(c1, c2, k, s, autopad(k, p), groups=g, bias=True)
        else:
            # Training form: identity (BN only), 3x3 conv + BN, and 1x1 conv + BN branches.
            self.rbr_identity = (nn.BatchNorm2d(num_features=c1, eps=0.001, momentum=0.03) if c2 == c1 and s == 1 else None)
            self.rbr_dense = nn.Sequential(
                nn.Conv2d(c1, c2, k, s, autopad(k, p), groups=g, bias=False),
                nn.BatchNorm2d(num_features=c2, eps=0.001, momentum=0.03),
            )
            self.rbr_1x1 = nn.Sequential(
                nn.Conv2d(c1, c2, 1, s, padding_11, groups=g, bias=False),
                nn.BatchNorm2d(num_features=c2, eps=0.001, momentum=0.03),
            )

    def forward(self, inputs):
        if hasattr(self, "rbr_reparam"):
            return self.act(self.rbr_reparam(inputs))
        if self.rbr_identity is None:
            id_out = 0
        else:
            id_out = self.rbr_identity(inputs)
        return self.act(self.rbr_dense(inputs) + self.rbr_1x1(inputs) + id_out)

    def get_equivalent_kernel_bias(self):
        kernel3x3, bias3x3 = self._fuse_bn_tensor(self.rbr_dense)
        kernel1x1, bias1x1 = self._fuse_bn_tensor(self.rbr_1x1)
        kernelid, biasid = self._fuse_bn_tensor(self.rbr_identity)
        return (
            kernel3x3 + self._pad_1x1_to_3x3_tensor(kernel1x1) + kernelid,
            bias3x3 + bias1x1 + biasid,
        )

    def _pad_1x1_to_3x3_tensor(self, kernel1x1):
        if kernel1x1 is None:
            return 0
        else:
            return nn.functional.pad(kernel1x1, [1, 1, 1, 1])

    def _fuse_bn_tensor(self, branch):
        if branch is None:
            return 0, 0
        if isinstance(branch, nn.Sequential):
            kernel = branch[0].weight
            running_mean = branch[1].running_mean
            running_var = branch[1].running_var
            gamma = branch[1].weight
            beta = branch[1].bias
            eps = branch[1].eps
        else:
            assert isinstance(branch, nn.BatchNorm2d)
            if not hasattr(self, "id_tensor"):
                # Build a 3x3 kernel that acts as the identity mapping.
                input_dim = self.in_channels // self.groups
                kernel_value = np.zeros(
                    (self.in_channels, input_dim, 3, 3), dtype=np.float32
                )
                for i in range(self.in_channels):
                    kernel_value[i, i % input_dim, 1, 1] = 1
                self.id_tensor = torch.from_numpy(kernel_value).to(branch.weight.device)
            kernel = self.id_tensor
            running_mean = branch.running_mean
            running_var = branch.running_var
            gamma = branch.weight
            beta = branch.bias
            eps = branch.eps
        std = (running_var + eps).sqrt()
        t = (gamma / std).reshape(-1, 1, 1, 1)
        return kernel * t, beta - running_mean * gamma / std

    def repvgg_convert(self):
        kernel, bias = self.get_equivalent_kernel_bias()
        return (
            kernel.detach().cpu().numpy(),
            bias.detach().cpu().numpy(),
        )

    def fuse_conv_bn(self, conv, bn):
        # Fold the BatchNorm statistics into the convolution weights and bias.
        std = (bn.running_var + bn.eps).sqrt()
        bias = bn.bias - bn.running_mean * bn.weight / std

        t = (bn.weight / std).reshape(-1, 1, 1, 1)
        weights = conv.weight * t

        bn = nn.Identity()
        conv = nn.Conv2d(in_channels=conv.in_channels,
                         out_channels=conv.out_channels,
                         kernel_size=conv.kernel_size,
                         stride=conv.stride,
                         padding=conv.padding,
                         dilation=conv.dilation,
                         groups=conv.groups,
                         bias=True,
                         padding_mode=conv.padding_mode)

        conv.weight = torch.nn.Parameter(weights)
        conv.bias = torch.nn.Parameter(bias)
        return conv

    def fuse_repvgg_block(self):
        if self.deploy:
            return
        print("RepConv.fuse_repvgg_block")
        self.rbr_dense = self.fuse_conv_bn(self.rbr_dense[0], self.rbr_dense[1])

        self.rbr_1x1 = self.fuse_conv_bn(self.rbr_1x1[0], self.rbr_1x1[1])
        rbr_1x1_bias = self.rbr_1x1.bias
        weight_1x1_expanded = torch.nn.functional.pad(self.rbr_1x1.weight, [1, 1, 1, 1])

        # Fuse self.rbr_identity
        if (isinstance(self.rbr_identity, nn.BatchNorm2d) or isinstance(self.rbr_identity, nn.modules.batchnorm.SyncBatchNorm)):
            identity_conv_1x1 = nn.Conv2d(
                in_channels=self.in_channels,
                out_channels=self.out_channels,
                kernel_size=1,
                stride=1,
                padding=0,
                groups=self.groups,
                bias=False)
            identity_conv_1x1.weight.data = identity_conv_1x1.weight.data.to(self.rbr_1x1.weight.data.device)
            identity_conv_1x1.weight.data = identity_conv_1x1.weight.data.squeeze().squeeze()
            identity_conv_1x1.weight.data.fill_(0.0)
            identity_conv_1x1.weight.data.fill_diagonal_(1.0)
            identity_conv_1x1.weight.data = identity_conv_1x1.weight.data.unsqueeze(2).unsqueeze(3)

            identity_conv_1x1 = self.fuse_conv_bn(identity_conv_1x1, self.rbr_identity)
            bias_identity_expanded = identity_conv_1x1.bias
            weight_identity_expanded = torch.nn.functional.pad(identity_conv_1x1.weight, [1, 1, 1, 1])
        else:
            bias_identity_expanded = torch.nn.Parameter(torch.zeros_like(rbr_1x1_bias))
            weight_identity_expanded = torch.nn.Parameter(torch.zeros_like(weight_1x1_expanded))

        # Sum the three branches into a single 3x3 convolution.
        self.rbr_dense.weight = torch.nn.Parameter(self.rbr_dense.weight + weight_1x1_expanded + weight_identity_expanded)
        self.rbr_dense.bias = torch.nn.Parameter(self.rbr_dense.bias + rbr_1x1_bias + bias_identity_expanded)

        self.rbr_reparam = self.rbr_dense
        self.deploy = True

        if self.rbr_identity is not None:
            del self.rbr_identity
            self.rbr_identity = None

        if self.rbr_1x1 is not None:
            del self.rbr_1x1
            self.rbr_1x1 = None

        if self.rbr_dense is not None:
            del self.rbr_dense
            self.rbr_dense = None

def fuse_conv_and_bn(conv, bn):
    fusedconv = nn.Conv2d(conv.in_channels,
                          conv.out_channels,
                          kernel_size=conv.kernel_size,
                          stride=conv.stride,
                          padding=conv.padding,
                          groups=conv.groups,
                          bias=True).requires_grad_(False).to(conv.weight.device)

    # Scale each output channel of the conv weight by gamma / sqrt(var + eps).
    w_conv = conv.weight.clone().view(conv.out_channels, -1)
    w_bn = torch.diag(bn.weight.div(torch.sqrt(bn.eps + bn.running_var)))
    # fusedconv.weight.copy_(torch.mm(w_bn, w_conv).view(fusedconv.weight.shape))
    fusedconv.weight.copy_(torch.mm(w_bn, w_conv).view(fusedconv.weight.shape).detach())

    # Fold the BatchNorm shift into the bias.
    b_conv = torch.zeros(conv.weight.size(0), device=conv.weight.device) if conv.bias is None else conv.bias
    b_bn = bn.bias - bn.weight.mul(bn.running_mean).div(torch.sqrt(bn.running_var + bn.eps))
    # fusedconv.bias.copy_(torch.mm(w_bn, b_conv.reshape(-1, 1)).reshape(-1) + b_bn)
    fusedconv.bias.copy_((torch.mm(w_bn, b_conv.reshape(-1, 1)).reshape(-1) + b_bn).detach())
    return fusedconv

#---------------------------------------------------#
#   yolo_body
#---------------------------------------------------#
class YoloBody(nn.Module):
    def __init__(self, anchors_mask, num_classes, phi, pretrained=False):
        super(YoloBody, self).__init__()
        #-----------------------------------------------#
        #   Parameters for the different YOLOv7 variants
        #-----------------------------------------------#
        transition_channels = {'l': 32, 'x': 40}[phi]
        block_channels = 32
        panet_channels = {'l': 32, 'x': 64}[phi]
        e = {'l': 2, 'x': 1}[phi]
        n = {'l': 4, 'x': 6}[phi]
        ids = {'l': [-1, -2, -3, -4, -5, -6], 'x': [-1, -3, -5, -7, -8]}[phi]
        conv = {'l': RepConv, 'x': Conv}[phi]
        #-----------------------------------------------#
        #   The input image is 640, 640, 3
        #-----------------------------------------------#

        #---------------------------------------------------#
        #   Build the backbone.
        #   It yields three effective feature maps with shapes:
        #   80, 80, 512
        #   40, 40, 1024
        #   20, 20, 1024
        #---------------------------------------------------#
        self.backbone = Backbone(transition_channels, block_channels, n, phi, pretrained=pretrained)

        #------------------------Enhanced feature extraction network------------------------#
        self.upsample = nn.Upsample(scale_factor=2, mode="nearest")

        # 20, 20, 1024 => 20, 20, 512
        self.sppcspc = SPPCSPC(transition_channels * 32, transition_channels * 16)
        # 20, 20, 512 => 20, 20, 256 => 40, 40, 256
        self.conv_for_P5 = Conv(transition_channels * 16, transition_channels * 8)
        # 40, 40, 1024 => 40, 40, 256
        self.conv_for_feat2 = Conv(transition_channels * 32, transition_channels * 8)
        # 40, 40, 512 => 40, 40, 256
        self.conv3_for_upsample1 = Multi_Concat_Block(transition_channels * 16, panet_channels * 4, transition_channels * 8, e=e, n=n, ids=ids)

        # 40, 40, 256 => 40, 40, 128 => 80, 80, 128
        self.conv_for_P4 = Conv(transition_channels * 8, transition_channels * 4)
        # 80, 80, 512 => 80, 80, 128
        self.conv_for_feat1 = Conv(transition_channels * 16, transition_channels * 4)
        # 80, 80, 256 => 80, 80, 128
        self.conv3_for_upsample2 = Multi_Concat_Block(transition_channels * 8, panet_channels * 2, transition_channels * 4, e=e, n=n, ids=ids)

        # 80, 80, 128 => 40, 40, 256
        self.down_sample1 = Transition_Block(transition_channels * 4, transition_channels * 4)
        # 40, 40, 512 => 40, 40, 256
        self.conv3_for_downsample1 = Multi_Concat_Block(transition_channels * 16, panet_channels * 4, transition_channels * 8, e=e, n=n, ids=ids)

        # 40, 40, 256 => 20, 20, 512
        self.down_sample2 = Transition_Block(transition_channels * 8, transition_channels * 8)
        # 20, 20, 1024 => 20, 20, 512
        self.conv3_for_downsample2 = Multi_Concat_Block(transition_channels * 32, panet_channels * 8, transition_channels * 16, e=e, n=n, ids=ids)
        #------------------------Enhanced feature extraction network------------------------#

        # 80, 80, 128 => 80, 80, 256
        self.rep_conv_1 = conv(transition_channels * 4, transition_channels * 8, 3, 1)
        # 40, 40, 256 => 40, 40, 512
        self.rep_conv_2 = conv(transition_channels * 8, transition_channels * 16, 3, 1)
        # 20, 20, 512 => 20, 20, 1024
        self.rep_conv_3 = conv(transition_channels * 16, transition_channels * 32, 3, 1)

        # 4 + 1 + num_classes
        # 80, 80, 256 => 80, 80, 3 * 25 (4 + 1 + 20) & 85 (4 + 1 + 80)
        self.yolo_head_P3 = nn.Conv2d(transition_channels * 8, len(anchors_mask[2]) * (5 + num_classes), 1)
        # 40, 40, 512 => 40, 40, 3 * 25 & 85
        self.yolo_head_P4 = nn.Conv2d(transition_channels * 16, len(anchors_mask[1]) * (5 + num_classes), 1)
        # 20, 20, 1024 => 20, 20, 3 * 25 & 85
        self.yolo_head_P5 = nn.Conv2d(transition_channels * 32, len(anchors_mask[0]) * (5 + num_classes), 1)

    def fuse(self):
        print('Fusing layers... ')
        for m in self.modules():
            if isinstance(m, RepConv):
                m.fuse_repvgg_block()
            elif type(m) is Conv and hasattr(m, 'bn'):
                m.conv = fuse_conv_and_bn(m.conv, m.bn)
                delattr(m, 'bn')
                m.forward = m.fuseforward
        return self

    def forward(self, x):
        # backbone
        feat1, feat2, feat3 = self.backbone.forward(x)

        #------------------------Enhanced feature extraction network------------------------#
        # 20, 20, 1024 => 20, 20, 512
        P5 = self.sppcspc(feat3)
        # 20, 20, 512 => 20, 20, 256
        P5_conv = self.conv_for_P5(P5)
        # 20, 20, 256 => 40, 40, 256
        P5_upsample = self.upsample(P5_conv)
        # 40, 40, 256 cat 40, 40, 256 => 40, 40, 512
        P4 = torch.cat([self.conv_for_feat2(feat2), P5_upsample], 1)
        # 40, 40, 512 => 40, 40, 256
        P4 = self.conv3_for_upsample1(P4)

        # 40, 40, 256 => 40, 40, 128
        P4_conv = self.conv_for_P4(P4)
        # 40, 40, 128 => 80, 80, 128
        P4_upsample = self.upsample(P4_conv)
        # 80, 80, 128 cat 80, 80, 128 => 80, 80, 256
        P3 = torch.cat([self.conv_for_feat1(feat1), P4_upsample], 1)
        # 80, 80, 256 => 80, 80, 128
        P3 = self.conv3_for_upsample2(P3)

        # 80, 80, 128 => 40, 40, 256
        P3_downsample = self.down_sample1(P3)
        # 40, 40, 256 cat 40, 40, 256 => 40, 40, 512
        P4 = torch.cat([P3_downsample, P4], 1)
        # 40, 40, 512 => 40, 40, 256
        P4 = self.conv3_for_downsample1(P4)

        # 40, 40, 256 => 20, 20, 512
        P4_downsample = self.down_sample2(P4)
        # 20, 20, 512 cat 20, 20, 512 => 20, 20, 1024
        P5 = torch.cat([P4_downsample, P5], 1)
        # 20, 20, 1024 => 20, 20, 512
        P5 = self.conv3_for_downsample2(P5)
        #------------------------Enhanced feature extraction network------------------------#
        # P3 80, 80, 128
        # P4 40, 40, 256
        # P5 20, 20, 512

        P3 = self.rep_conv_1(P3)
        P4 = self.rep_conv_2(P4)
        P5 = self.rep_conv_3(P5)
        #---------------------------------------------------#
        #   Third feature map
        #   y3=(batch_size, 75, 80, 80)
        #---------------------------------------------------#
        out2 = self.yolo_head_P3(P3)
        #---------------------------------------------------#
        #   Second feature map
        #   y2=(batch_size, 75, 40, 40)
        #---------------------------------------------------#
        out1 = self.yolo_head_P4(P4)
        #---------------------------------------------------#
        #   First feature map
        #   y1=(batch_size, 75, 20, 20)
        #---------------------------------------------------#
        out0 = self.yolo_head_P5(P5)

        return [out0, out1, out2]
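The value 75 in the head comments comes from `len(anchors_mask[i]) * (5 + num_classes)`: three anchors per scale, each predicting 4 box offsets, 1 objectness score, and one score per class. A small arithmetic sketch follows. The helper name `head_out_channels` is hypothetical, and the `anchors_mask` value is an assumed typical setting with three anchors per scale that the article itself does not give.

```python
def head_out_channels(anchors_mask, num_classes):
    """Channel count of each head, ordered like YoloBody.forward's return value
    [out0 (P5, 20x20), out1 (P4, 40x40), out2 (P3, 80x80)]."""
    return [len(mask) * (5 + num_classes) for mask in anchors_mask]


# Assumed anchor assignment: three anchors per detection scale.
anchors_mask = [[6, 7, 8], [3, 4, 5], [0, 1, 2]]

voc_channels = head_out_channels(anchors_mask, num_classes=20)
coco_channels = head_out_channels(anchors_mask, num_classes=80)
print(voc_channels)   # [75, 75, 75]
print(coco_channels)  # [255, 255, 255]
```

So with 20 classes each head outputs 3 * 25 = 75 channels, and with 80 classes 3 * 85 = 255 channels.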

I hope this article helps you understand YOLOv7. This brings the YOLO series to a temporary close. I will keep updating it from time to time, so stay tuned!
