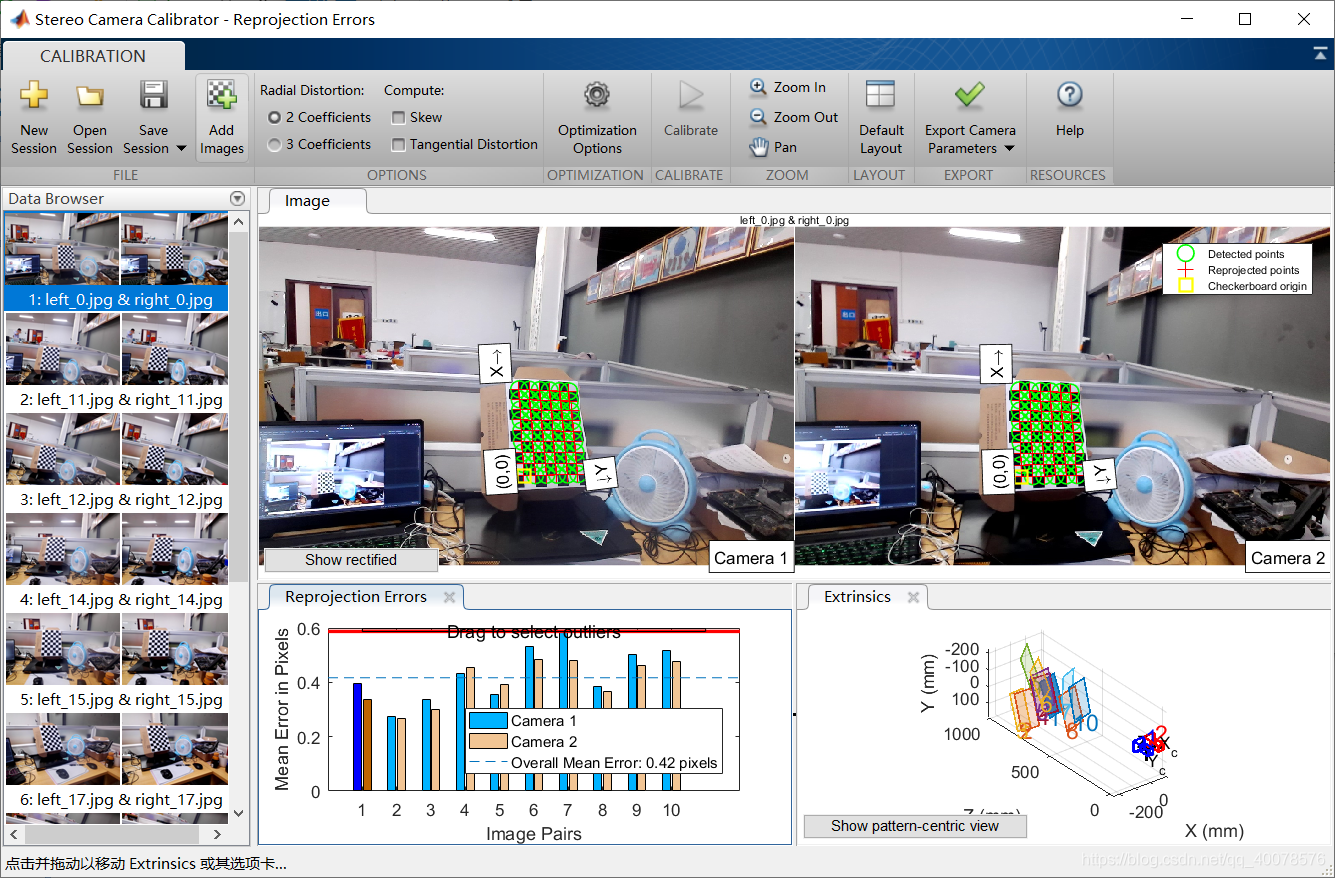

June 14: finished calibrating the stereo (binocular) camera, though I still don't understand the theory behind the calibration.
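Calibration estimates the parameters of the pinhole camera model. The following is a minimal sketch of what the intrinsic parameters mean. It ignores lens distortion, which the program also sets to zero. A point (X, Y, Z) in the camera frame lands at pixel u = fx·X/Z + cx, v = fy·Y/Z + cy. The `Intrinsics`, `Pixel` and `project` names are illustrative, not part of OpenCV.

```cpp
#include <cassert>
#include <cmath>

// Intrinsic parameters of the pinhole model, in pixels
struct Intrinsics {
    double fx, fy; // focal lengths
    double cx, cy; // principal point
};

struct Pixel {
    double u, v;
};

// Project a point (X, Y, Z) given in the camera frame onto the image plane,
// ignoring lens distortion: u = fx * X / Z + cx, v = fy * Y / Z + cy.
Pixel project(const Intrinsics& K, double X, double Y, double Z) {
    assert(Z > 0 && "point must lie in front of the camera");
    return {K.fx * X / Z + K.cx, K.fy * Y / Z + K.cy};
}
```

For example, use the left-camera values from the program: `Intrinsics K{885.945, 872.664, 686.472, 381.1532};`. A point on the optical axis, `project(K, 0, 0, 1000)`, falls exactly on the principal point (686.472, 381.1532). `project(K, 100, 50, 1000)` falls at about (775.07, 424.79).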

```cpp
/******************************/
/* Stereo matching and ranging */
/******************************/
#include <opencv2/opencv.hpp>
#include <iostream>

using namespace std;
using namespace cv;

string left_image_path = "../snapshot/left/left_0.jpg";
string right_image_path = "../snapshot/right/right_0.jpg";

Mat rgbImageL, grayImageL;
Mat rgbImageR, grayImageR;
Mat rectifyImageL, rectifyImageR;

Rect validROIL; // rectification crops the image; validROI is the region that stays valid after cropping
Rect validROIR;

Mat mapLx, mapLy, mapRx, mapRy; // remap lookup tables
Mat Rl, Rr, Pl, Pr, Q;          // rectification rotations R, projection matrices P, reprojection matrix Q
Mat xyz;                        // 3D coordinates of every pixel

Point origin;
Rect selection;
bool selectionObject = false;

int blockSize = 0, uniquenessRation = 0, numDisparities = 0; // trackbar positions

// StereoSGBM::create(minDisparity, numDisparities, blockSize)
Ptr<StereoSGBM> sgbm = StereoSGBM::create(0, 16, 9);
// StereoBM::create(numDisparities, blockSize)
Ptr<StereoBM> bm = StereoBM::create(16, 9);

/*
 Pre-calibrated camera parameters. Intrinsic matrix layout:
 fx 0  cx
 0  fy cy
 0  0  1
*/
Mat cameraL_intrinsicMatrix = (Mat_<double>(3, 3) << 885.945, 0, 686.472,
                                                     0, 872.664, 381.1532,
                                                     0, 0, 1);
Mat cameraL_disCoeff = (Mat_<double>(5, 1) << 0, 0, 0, 0, 0); // distortion coefficients k1, k2, p1, p2, k3
Mat cameraR_intrinsicMatrix = (Mat_<double>(3, 3) << 885.945, 0, 686.472,
                                                     0, 872.664, 381.1532,
                                                     0, 0, 1);
Mat cameraR_disCoeff = (Mat_<double>(5, 1) << 0, 0, 0, 0, 0);

Mat T = (Mat_<double>(3, 1) << -68.9255, 0.8937, -4.8984);   // translation vector
Mat rec = (Mat_<double>(3, 1) << -0.00068, -0.0037, 0.0033); // rotation vector
Mat R = (Mat_<double>(3, 3) << 1.000, -0.0037, -0.0068,
                               0.0037, 1.000, 0.0033,
                               0.0068, -0.0033, 1.000);      // rotation matrix

// Shared post-processing. The matchers return CV_16S fixed-point disparity (true value * 16)
static void showDisparityAndReproject(const Mat& disp)
{
    int numDisp = numDisparities * 16 + 16;
    Mat disp8, dispF;
    disp.convertTo(disp8, CV_8U, 255 / (numDisp * 16.)); // scale to 0..255 for display
    // Divide by 16 to get the real disparity first, so X, Y, Z come out in the units of T
    disp.convertTo(dispF, CV_32F, 1.0 / 16);
    reprojectImageTo3D(dispF, xyz, Q, true); // missing values get a very large Z
    imshow("disparity", disp8);
}

/***** Stereo matching (SGBM) *****/
void stereo_match(int, void*)
{
    int bs = 2 * blockSize + 5;        // matching window size, always odd; 5 to 21 works well
    int cn = rectifyImageL.channels(); // 1 for the grayscale input
    sgbm->setBlockSize(bs);
    sgbm->setP1(8 * cn * bs * bs);     // smoothness penalties (P2 must be larger than P1)
    sgbm->setP2(32 * cn * bs * bs);
    sgbm->setPreFilterCap(31);
    sgbm->setMinDisparity(0);          // minimum disparity, default 0, may be negative
    sgbm->setNumDisparities(numDisparities * 16 + 16); // max minus min disparity; must be a multiple of 16
    sgbm->setUniquenessRatio(uniquenessRation);        // how clearly the best match must win; suppresses false matches
    sgbm->setSpeckleWindowSize(100);
    sgbm->setSpeckleRange(32);
    sgbm->setDisp12MaxDiff(-1);        // a negative value disables the left-right consistency check
    Mat disp;
    sgbm->compute(rectifyImageL, rectifyImageR, disp); // rectified grayscale pair
    showDisparityAndReproject(disp);
}

/***** Stereo matching (BM), an alternative trackbar callback *****/
void stereo_match_BM(int, void*)
{
    bm->setBlockSize(2 * blockSize + 5);
    bm->setROI1(validROIL);
    bm->setROI2(validROIR);
    bm->setPreFilterCap(31);
    bm->setMinDisparity(0);
    bm->setNumDisparities(numDisparities * 16 + 16);
    bm->setTextureThreshold(10);
    bm->setUniquenessRatio(uniquenessRation);
    bm->setSpeckleWindowSize(100);
    bm->setSpeckleRange(32);
    bm->setDisp12MaxDiff(-1);
    Mat disp;
    bm->compute(rectifyImageL, rectifyImageR, disp); // StereoBM requires 8-bit single-channel input
    showDisparityAndReproject(disp);
}

static void onMouse(int event, int x, int y, int, void*)
{
    if (selectionObject)
    {
        selection.x = MIN(x, origin.x);
        selection.y = MIN(y, origin.y);
        selection.width = abs(x - origin.x);
        selection.height = abs(y - origin.y);
    }
    switch (event)
    {
    case EVENT_LBUTTONDOWN:
        origin = Point(x, y);
        selection = Rect(x, y, 0, 0);
        selectionObject = true;
        if (!xyz.empty() && Rect(0, 0, xyz.cols, xyz.rows).contains(origin))
            cout << origin << " in rectified left-camera coordinates is: " << xyz.at<Vec3f>(origin) << endl;
        break;
    case EVENT_LBUTTONUP: // left mouse button released
        selectionObject = false;
        break;
    }
}

int main()
{
    rgbImageL = imread(left_image_path, IMREAD_UNCHANGED);
    grayImageL = imread(left_image_path, IMREAD_GRAYSCALE);
    rgbImageR = imread(right_image_path, IMREAD_UNCHANGED);
    grayImageR = imread(right_image_path, IMREAD_GRAYSCALE);
    if (grayImageL.empty() || grayImageR.empty())
    {
        cerr << "Failed to load the stereo image pair" << endl;
        return -1;
    }
    Size imageSize = grayImageL.size();

    // Rodrigues(rec, R); // alternative: build R from the rotation vector instead of the hard-coded matrix
    cout << R << endl;

    // Rectification: the left map uses Pl, the right map uses Pr
    stereoRectify(cameraL_intrinsicMatrix, cameraL_disCoeff, cameraR_intrinsicMatrix, cameraR_disCoeff,
                  imageSize, R, T, Rl, Rr, Pl, Pr, Q, CALIB_ZERO_DISPARITY,
                  0, imageSize, &validROIL, &validROIR);
    initUndistortRectifyMap(cameraL_intrinsicMatrix, cameraL_disCoeff, Rl, Pl, imageSize, CV_32FC1, mapLx, mapLy);
    initUndistortRectifyMap(cameraR_intrinsicMatrix, cameraR_disCoeff, Rr, Pr, imageSize, CV_32FC1, mapRx, mapRy);

    remap(grayImageL, rectifyImageL, mapLx, mapLy, INTER_LINEAR);
    remap(grayImageR, rectifyImageR, mapRx, mapRy, INTER_LINEAR);
    imshow("rectified left", rectifyImageL);
    imshow("rectified right", rectifyImageR);

    // Show both rectified images side by side with horizontal lines to check row alignment
    double sf = 800. / MAX(imageSize.height, imageSize.width);
    int w = cvRound(imageSize.width * sf);
    int h = cvRound(imageSize.height * sf);
    Mat canvas(h, w * 2, CV_8UC3);
    Mat colorL, colorR;
    cvtColor(rectifyImageL, colorL, COLOR_GRAY2BGR); // the canvas is 3-channel, so convert before resizing into it
    cvtColor(rectifyImageR, colorR, COLOR_GRAY2BGR);
    Mat canvasPartL = canvas(Rect(0, 0, w, h));
    Mat canvasPartR = canvas(Rect(w, 0, w, h));
    resize(colorL, canvasPartL, canvasPartL.size(), 0, 0, INTER_LINEAR);
    resize(colorR, canvasPartR, canvasPartR.size(), 0, 0, INTER_LINEAR);
    Rect vroiL(cvRound(validROIL.x * sf), cvRound(validROIL.y * sf),
               cvRound(validROIL.width * sf), cvRound(validROIL.height * sf));
    Rect vroiR(cvRound(validROIR.x * sf), cvRound(validROIR.y * sf),
               cvRound(validROIR.width * sf), cvRound(validROIR.height * sf));
    rectangle(canvasPartL, vroiL, Scalar(0, 0, 255), 3, 8); // valid region after rectification
    rectangle(canvasPartR, vroiR, Scalar(0, 0, 255), 3, 8);
    for (int i = 0; i < canvas.rows; i += 16)
        line(canvas, Point(0, i), Point(canvas.cols, i), Scalar(0, 255, 0), 1, 8);
    imshow("rectified", canvas);

    namedWindow("disparity", WINDOW_AUTOSIZE);
    // Pass stereo_match_BM instead of stereo_match to try block matching
    createTrackbar("BlockSize", "disparity", &blockSize, 8, stereo_match);
    createTrackbar("UniquenessRatio", "disparity", &uniquenessRation, 50, stereo_match);
    createTrackbar("NumDisparities", "disparity", &numDisparities, 16, stereo_match);
    setMouseCallback("disparity", onMouse, 0);
    stereo_match(0, 0);
    waitKey();
    return 0;
}
```
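`reprojectImageTo3D` applies the 4×4 matrix Q from `stereoRectify` to every pixel. It computes [X Y Z W]ᵀ = Q·[x y d 1]ᵀ and returns (X/W, Y/W, Z/W). With `CALIB_ZERO_DISPARITY`, both rectified principal points share the same cx. In that case Q reduces to the form shown in the comment below. There, f is the rectified focal length and Tx is the x-component of the rectified baseline. The following minimal sketch reimplements that per-pixel arithmetic without OpenCV. It shows why the raw disparity scale matters. `makeQ` and `reprojectPixel` are illustrative names. The numbers in the test (f = 800, cx = 640, cy = 360, Tx = -70) are made up, not the calibrated values.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Mat4 = std::array<std::array<double, 4>, 4>;

// Q as produced by stereoRectify with CALIB_ZERO_DISPARITY (equal principal points):
// [1 0 0      -cx]
// [0 1 0      -cy]
// [0 0 0        f]
// [0 0 -1/Tx    0]
Mat4 makeQ(double f, double cx, double cy, double Tx) {
    return {{{1, 0, 0, -cx},
             {0, 1, 0, -cy},
             {0, 0, 0, f},
             {0, 0, -1.0 / Tx, 0}}};
}

// Same arithmetic reprojectImageTo3D performs for one pixel (x, y) with disparity d
std::array<double, 3> reprojectPixel(const Mat4& Q, double x, double y, double d) {
    const double in[4] = {x, y, d, 1.0};
    double out[4] = {0, 0, 0, 0};
    for (int r = 0; r < 4; ++r)
        for (int c = 0; c < 4; ++c)
            out[r] += Q[r][c] * in[c];
    assert(out[3] != 0 && "zero disparity: point at infinity");
    return {out[0] / out[3], out[1] / out[3], out[2] / out[3]};
}
```

With these numbers, a pixel 100 columns right of the principal point with disparity 56 reprojects to about (125, 0, 1000). The raw fixed-point value (56 × 16) gives Z = 62.5 instead, which is 16 times too close. This is why the CV_16S disparity from SGBM/BM has to be scaled by 1/16 before reprojecting, or the resulting coordinates multiplied by 16. Z is expressed in the same units as the translation vector T.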

Then tune the trackbar parameters slowly.
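Each trackbar position has to map to a legal matcher setting: the block size must stay odd, and the disparity range must be a positive multiple of 16. The following sketch isolates that mapping as a pure function so the reachable ranges are easy to see. `SgbmParams` and `fromTrackbars` are hypothetical names. The P1 = 8·cn·bs² and P2 = 32·cn·bs² penalties follow the convention of OpenCV's `stereo_match.cpp` sample, and they are an assumption worth tuning rather than a fixed rule.

```cpp
#include <cassert>

// Hypothetical helper: converts trackbar positions into SGBM settings
// that satisfy OpenCV's constraints.
struct SgbmParams {
    int blockSize;      // odd matching window size
    int numDisparities; // positive multiple of 16
    int P1, P2;         // smoothness penalties, P2 > P1
};

SgbmParams fromTrackbars(int blockPos, int numDispPos, int channels = 1) {
    assert(blockPos >= 0 && numDispPos >= 0 && channels > 0);
    SgbmParams p{};
    p.blockSize = 2 * blockPos + 5;          // 5, 7, 9, ... always odd
    p.numDisparities = 16 * numDispPos + 16; // 16, 32, 48, ...
    // Penalty scaling as in OpenCV's stereo_match sample
    p.P1 = 8 * channels * p.blockSize * p.blockSize;
    p.P2 = 32 * channels * p.blockSize * p.blockSize;
    return p;
}
```

The program's trackbar maxima are 8 and 16. The block size therefore ranges from 5 to 21, and the disparity range from 16 to 272 pixels. A wider disparity range can match nearer objects, which have larger disparities, but SGBM's cost grows with it.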
