1. Introduction to ShuffleNetV2

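ShuffleNetV2 is an efficient convolutional neural network architecture used for image classification and object detection tasks. Its main feature is that it keeps accuracy relatively high while substantially reducing the model's computation and parameter count.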

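The core idea of ShuffleNetV2 is a lightweight channel shuffle operation that lets information mix and propagate through the network, which improves the model's representational power. Channel shuffle splits the channels of the input feature map into groups and interleaves them in a fixed order (the permutation is deterministic, not random): the channel dimension is reshaped to (groups, channels per group), the two axes are transposed, and the result is flattened back into a single channel dimension. This breaks up the isolated channel groups that would otherwise never exchange information and increases interaction between feature channels.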
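
The reshape, transpose and flatten steps can be traced on plain channel indices. The sketch below is only an illustration: `channel_shuffle_order` is a hypothetical helper (not part of ShuffleNetV2 or PyTorch) that works on a Python list instead of a tensor. It shows that the resulting order is a fixed interleaving of the groups.

```python
def channel_shuffle_order(num_channels, groups):
    """Return the channel order produced by a channel shuffle.

    Hypothetical helper for illustration; it mimics view -> transpose -> view
    on a list of channel indices instead of a tensor.
    """
    if num_channels % groups != 0:
        raise ValueError("num_channels must be divisible by groups")
    per_group = num_channels // groups
    # "view(groups, per_group)": row g holds the channels of group g
    grid = [[g * per_group + i for i in range(per_group)] for g in range(groups)]
    # "transpose + flatten": read the grid column by column
    return [grid[g][i] for i in range(per_group) for g in range(groups)]


print(channel_shuffle_order(6, 2))  # [0, 3, 1, 4, 2, 5]
print(channel_shuffle_order(8, 2))  # [0, 4, 1, 5, 2, 6, 3, 7]
```

With two groups, every channel from the first half ends up next to a channel from the second half, which is what lets the two branches of a block exchange information in the following layer.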

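In addition, ShuffleNetV2 uses depthwise convolution (depthwise conv), a group convolution in which the number of groups equals the number of channels, so each channel is filtered on its own. Paired with 1×1 convolutions that mix the channels, this reduces the computational cost.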
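
A quick parameter count makes the saving concrete. The sketch below assumes bias-free convolutions and an arbitrary example width of 64 channels; `conv_params` is a hypothetical helper based on the weight shape of a grouped convolution, (c_out, c_in / groups, k, k).

```python
def conv_params(c_in, c_out, k, groups=1):
    """Number of weights in a bias-free 2D convolution.

    Hypothetical helper: the weight tensor has shape
    (c_out, c_in // groups, k, k).
    """
    if c_in % groups != 0 or c_out % groups != 0:
        raise ValueError("channels must be divisible by groups")
    return c_out * (c_in // groups) * k * k


channels = 64  # example width, chosen only for illustration

standard = conv_params(channels, channels, k=3)                    # ordinary 3x3 conv
depthwise = conv_params(channels, channels, k=3, groups=channels)  # one 3x3 filter per channel
pointwise = conv_params(channels, channels, k=1)                   # 1x1 conv that mixes channels

print(standard)               # 36864
print(depthwise + pointwise)  # 4672
```

For this width, the depthwise plus 1×1 pair needs roughly one eighth of the weights of a single ordinary 3×3 convolution.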

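The name comes from shuffling a deck of cards: by rearranging and mixing the channels of the feature map, ShuffleNetV2 keeps model performance relatively high at a small cost in computation and parameters. This makes it well suited to embedded devices and other settings with limited computing resources.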

The principle of Channel Shuffle is shown below:

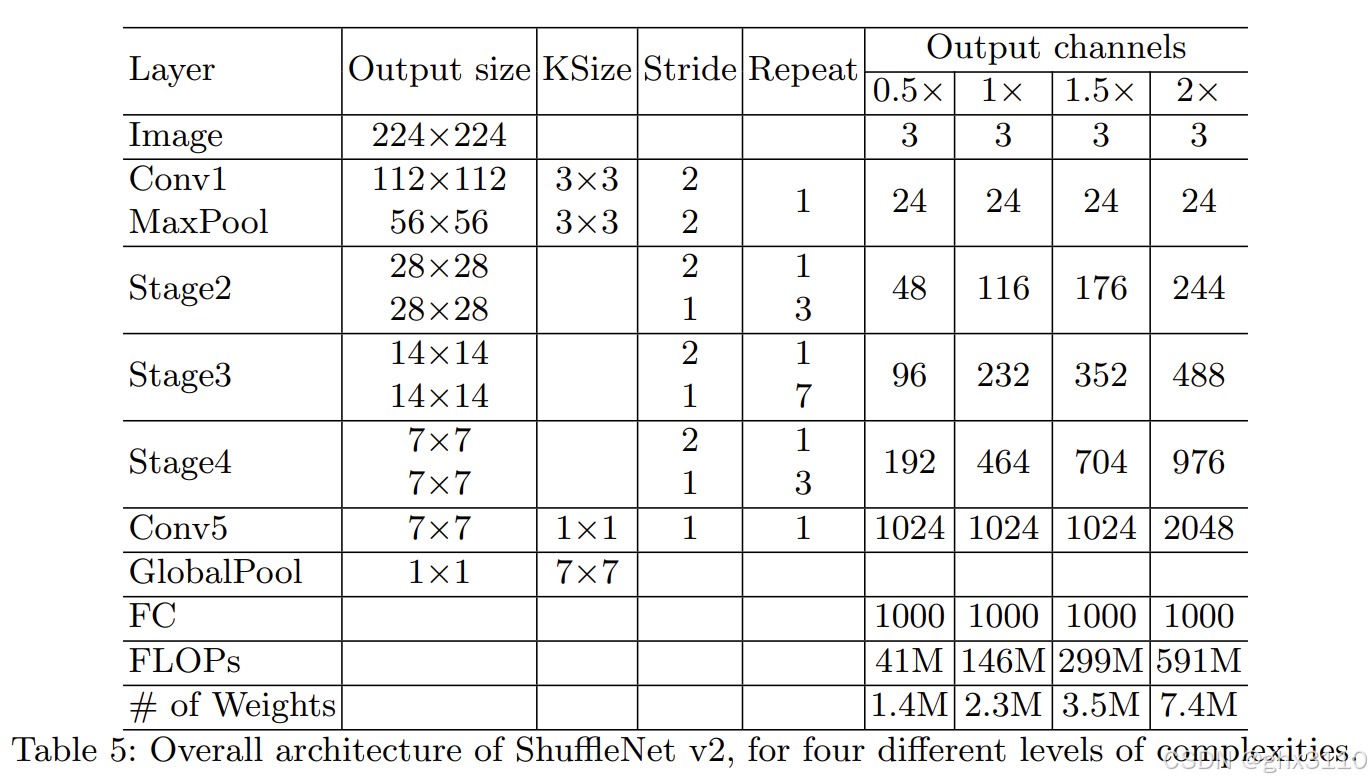

Network structure:

(a) and (b) are the ShuffleNet V1 units; (c) and (d) are the ShuffleNet V2 units, where (d) is the downsampling unit.

2. Source code

import torch
import torch.nn as nn


def channel_shuffle(x, groups):
    # Interleave the channels of x across `groups` groups (a fixed permutation).
    batchsize, num_channels, height, width = x.size()
    channels_per_group = num_channels // groups
    # Reshape to (N, groups, channels_per_group, H, W)
    x = x.view(batchsize, groups, channels_per_group, height, width)
    # Swap the two channel axes; contiguous() is required before the next view
    x = torch.transpose(x, 1, 2).contiguous()
    # Flatten back to (N, C, H, W)
    x = x.view(batchsize, -1, height, width)
    return x


class CBRM(nn.Module):  # Conv BN ReLU Maxpool2d
    def __init__(self, c1, c2):
        super(CBRM, self).__init__()
        # 3x3 conv with stride 2, followed by BN and ReLU
        self.conv = nn.Sequential(
            nn.Conv2d(c1, c2, kernel_size=3, stride=2, padding=1, bias=False),
            nn.BatchNorm2d(c2),
            nn.ReLU(inplace=True),
        )
        # 3x3 max pooling with stride 2, so the whole stem downsamples by 4
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)

    def forward(self, x):
        return self.maxpool(self.conv(x))


class Shuffle_Block(nn.Module):
    def __init__(self, ch_in, ch_out, stride):
        super(Shuffle_Block, self).__init__()
        if not (1 <= stride <= 2):
            raise ValueError('illegal stride value')
        self.stride = stride

        # Each branch produces half of the output channels
        branch_features = ch_out // 2
        # With stride 1 the input is split in half, so ch_in must equal 2 * branch_features
        assert (self.stride != 1) or (ch_in == branch_features << 1)

        if self.stride > 1:
            # Downsampling branch: depthwise 3x3 (stride 2) followed by 1x1 conv
            self.branch1 = nn.Sequential(
                self.depthwise_conv(ch_in, ch_in, kernel_size=3, stride=self.stride, padding=1),
                nn.BatchNorm2d(ch_in),
                nn.Conv2d(ch_in, branch_features, kernel_size=1, stride=1, padding=0, bias=False),
                nn.BatchNorm2d(branch_features),
                nn.ReLU(inplace=True),
            )

        # Main branch: 1x1 conv -> depthwise 3x3 -> 1x1 conv
        self.branch2 = nn.Sequential(
            nn.Conv2d(ch_in if (self.stride > 1) else branch_features,
                      branch_features, kernel_size=1, stride=1, padding=0, bias=False),
            nn.BatchNorm2d(branch_features),
            nn.ReLU(inplace=True),
            self.depthwise_conv(branch_features, branch_features, kernel_size=3, stride=self.stride, padding=1),
            nn.BatchNorm2d(branch_features),
            nn.Conv2d(branch_features, branch_features, kernel_size=1, stride=1, padding=0, bias=False),
            nn.BatchNorm2d(branch_features),
            nn.ReLU(inplace=True),
        )

    @staticmethod
    def depthwise_conv(i, o, kernel_size, stride=1, padding=0, bias=False):
        # groups=i gives every input channel its own filter
        return nn.Conv2d(i, o, kernel_size, stride, padding, bias=bias, groups=i)

    def forward(self, x):
        if self.stride == 1:
            # Channel split: the first half passes through untouched
            x1, x2 = x.chunk(2, dim=1)
            out = torch.cat((x1, self.branch2(x2)), dim=1)
        else:
            # Downsampling: both branches see the full input
            out = torch.cat((self.branch1(x), self.branch2(x)), dim=1)

        # Mix the channels of the two branches
        out = channel_shuffle(out, 2)
        return out
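
The channel bookkeeping of `Shuffle_Block` is easy to get wrong when choosing `ch_in` and `ch_out`, so it may help to check it without building any layers. The sketch below is a hypothetical helper, `shuffle_block_channels`, that only mirrors the constructor's checks and the concatenation in `forward`; it is not part of the code above.

```python
def shuffle_block_channels(ch_in, ch_out, stride):
    """Return (first_half, second_half, total) output channels.

    Hypothetical helper that mirrors the checks in Shuffle_Block.__init__
    and the torch.cat call in Shuffle_Block.forward.
    """
    if stride not in (1, 2):
        raise ValueError("illegal stride value")
    branch_features = ch_out // 2
    if stride == 1:
        # The input is split in half; one half bypasses branch2 unchanged
        if ch_in != 2 * branch_features:
            raise ValueError("stride 1 requires ch_in == 2 * (ch_out // 2)")
        passthrough = ch_in // 2
        return passthrough, branch_features, passthrough + branch_features
    # stride 2: branch1 and branch2 each emit branch_features channels
    return branch_features, branch_features, 2 * branch_features


print(shuffle_block_channels(32, 128, stride=2))   # (64, 64, 128)
print(shuffle_block_channels(128, 128, stride=1))  # (64, 64, 128)
```

A stride-1 block therefore only works when the input already has `ch_out` channels, which is why each stage in a ShuffleNetV2-style backbone starts with a stride-2 block that sets the width.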

3. Applying it to YOLOv8

3.1 The block.py file

Add the code from Section 2 to ultralytics/nn/modules/block.py, then add the following code at the top of that file:

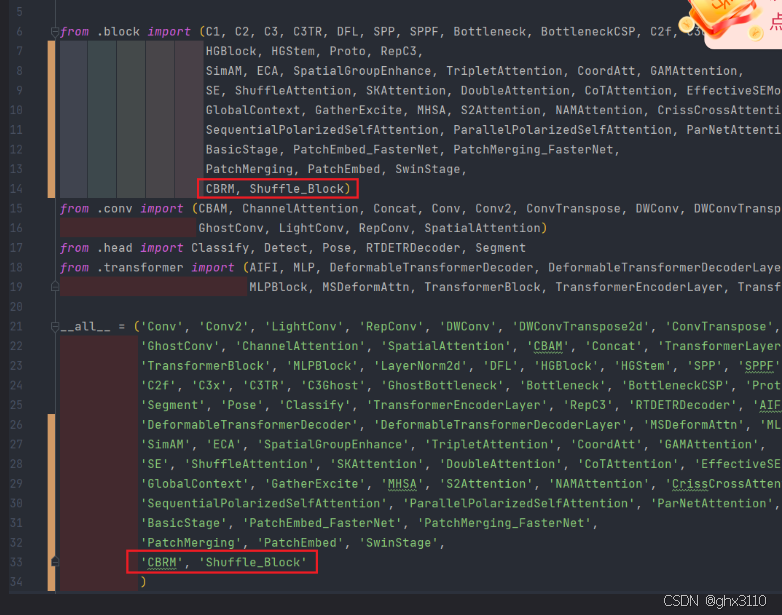

3.2 The `__init__.py` file

In ultralytics/nn/modules/`__init__.py`, add the following code in two places:

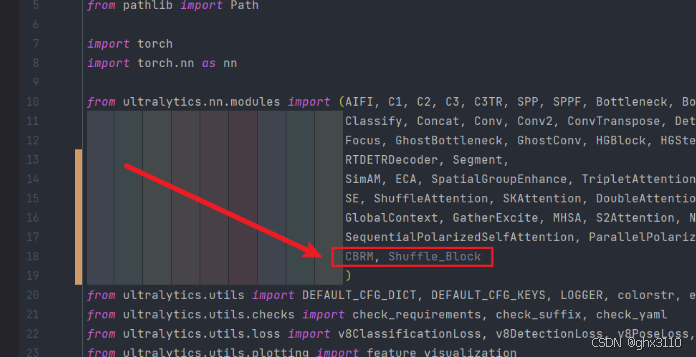

3.3 Importing in tasks.py

At the top of ultralytics/nn/tasks.py, import the class names CBRM and Shuffle_Block.

In the parse_model function, add the following branch:

elif m in [CBRM, Shuffle_Block]:
    c1, c2 = ch[f], args[0]
    if c2 != nc:
        c2 = make_divisible(min(c2, max_channels) * width, 8)
    args = [c1, c2, *args[1:]]
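
This branch means the channel numbers written in the YAML are not the final widths: they are capped at `max_channels`, multiplied by the scale's `width` factor, and rounded up to a multiple of 8. The sketch below re-implements that arithmetic locally so it can run without Ultralytics installed; the local `make_divisible` is an assumption about how the library helper rounds (up to the next multiple of the divisor), and `scaled_channels` is a hypothetical name.

```python
import math


def make_divisible(x, divisor):
    # Local stand-in (assumption): round x up to the nearest multiple of divisor
    return math.ceil(x / divisor) * divisor


def scaled_channels(c2, width, max_channels, nc=80):
    """Apply the same scaling as the parse_model branch above (hypothetical helper)."""
    if c2 != nc:
        c2 = make_divisible(min(c2, max_channels) * width, 8)
    return c2


# Scale 'n' from the YAML in Section 3.4: width=0.25, max_channels=1024
for yaml_value in (32, 128, 256, 512):
    print(yaml_value, "->", scaled_channels(yaml_value, width=0.25, max_channels=1024))
# 32 -> 8
# 128 -> 32
# 256 -> 64
# 512 -> 128
```

Because the results stay even and each stage keeps the same scaled width for its stride-1 blocks, the `ch_in == 2 * branch_features` assertion in `Shuffle_Block` still holds after scaling.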

3.4 Configuring yolov8-ShuffleNetv2.yaml

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.33, 0.25, 1024] # YOLOv8n summary: 225 layers, 3157200 parameters, 3157184 gradients, 8.9 GFLOPs
  s: [0.33, 0.50, 1024] # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients, 28.8 GFLOPs
  m: [0.67, 0.75, 768] # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients, 79.3 GFLOPs
  l: [1.00, 1.00, 512] # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs
  x: [1.00, 1.25, 512] # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs

# YOLOv8.0n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, CBRM, [32]] # 0-P2/4
  - [-1, 1, Shuffle_Block, [128, 2]] # 1-P3/8
  - [-1, 3, Shuffle_Block, [128, 1]] # 2
  - [-1, 1, Shuffle_Block, [256, 2]] # 3-P4/16
  - [-1, 7, Shuffle_Block, [256, 1]] # 4
  - [-1, 1, Shuffle_Block, [512, 2]] # 5-P5/32
  - [-1, 3, Shuffle_Block, [512, 1]] # 6

# YOLOv8.0n head
head:
  - [-1, 1, nn.Upsample, [None, 2, 'nearest']]
  - [[-1, 3], 1, Concat, [1]] # cat backbone P4
  - [-1, 3, C2f, [512]] # 9

  - [-1, 1, nn.Upsample, [None, 2, 'nearest']]
  - [[-1, 2], 1, Concat, [1]] # cat backbone P3
  - [-1, 3, C2f, [256]] # 12 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 9], 1, Concat, [1]] # cat head P4
  - [-1, 3, C2f, [512]] # 15 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 6], 1, Concat, [1]] # cat head P5
  - [-1, 3, C2f, [1024]] # 18 (P5/32-large)

  - [[12, 15, 18], 1, Detect, [nc]] # Detect(P3, P4, P5)
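
The P2/4 to P5/32 labels in the backbone comments can be checked by accumulating the stride of each layer. The sketch below is a rough check under stated assumptions: the `backbone` list is a hand-written copy of the rows above, CBRM is counted as stride 4 (its stride-2 convolution followed by stride-2 max pooling), and a 640×640 input is assumed. `pyramid` is a hypothetical helper.

```python
# (module name, stride) for backbone layers 0..6, copied by hand from the YAML
backbone = [
    ("CBRM", 4),           # 0: conv stride 2 + max-pool stride 2
    ("Shuffle_Block", 2),  # 1
    ("Shuffle_Block", 1),  # 2
    ("Shuffle_Block", 2),  # 3
    ("Shuffle_Block", 1),  # 4
    ("Shuffle_Block", 2),  # 5
    ("Shuffle_Block", 1),  # 6
]


def pyramid(layers, input_size=640):
    """Return (index, name, cumulative stride, feature-map size) per layer."""
    rows = []
    total_stride = 1
    for index, (name, stride) in enumerate(layers):
        total_stride *= stride
        rows.append((index, name, total_stride, input_size // total_stride))
    return rows


for row in pyramid(backbone):
    print(row)
# (0, 'CBRM', 4, 160)
# (1, 'Shuffle_Block', 2 -> cumulative 8, 80) and so on, ending at stride 32 and size 20
```

Under these assumptions layers 2, 4 and 6 end the stride-8, stride-16 and stride-32 stages, and the head indices 12, 15 and 18 passed to Detect match the P3, P4 and P5 outputs noted in the comments.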
