前述

ceres在求解视觉导航方面,就依赖一个数学模型,就是相机的投影模型。在视觉导航中,已知的条件只有特征点在图像中的二维坐标

(

u

,

v

)

(u,v)

(u,v),特征点在相机坐标系下的描述应该是一个三维坐标

(

X

,

Y

,

Z

)

(X,Y,Z)

(X,Y,Z),那么从相机坐标系到图像坐标系的变换,涉及到相机的内参矩阵:

(

X

,

Y

,

Z

)

→

(

u

,

v

)

:

Z

[

u

v

1

]

=

[

f

x

0

c

x

0

f

y

c

y

0

0

1

]

[

X

Y

Z

]

(X,Y,Z)\rightarrow(u,v):Z\begin{bmatrix}u\\v\\1\end{bmatrix}=\begin{bmatrix}f_x&0&c_x\\0&f_y&c_y\\0&0&1\end{bmatrix}\begin{bmatrix}X\\Y\\Z\end{bmatrix}

(X,Y,Z)→(u,v):Z

uv1

=

fx000fy0cxcy1

XYZ

在世界坐标系下描述这个特征点是

(

X

W

,

Y

W

,

Z

W

)

(X_W,Y_W,Z_W)

(XW,YW,ZW),世界坐标系到相机坐标系的变换,涉及到相机的外参矩阵:

[

X

Y

Z

]

=

R

[

X

W

Y

W

Z

W

]

+

t

\begin{bmatrix}X\\Y\\Z\end{bmatrix}=R\begin{bmatrix}X_W\\Y_W\\Z_W\end{bmatrix}+t

XYZ

=R

XWYWZW

+t

其中

R

∈

R

3

×

3

R\in R^{3\times 3}

R∈R3×3,

t

∈

R

3

×

1

t\in R^{3\times 1}

t∈R3×1。

那么问题就是,知道了

(

u

,

v

)

(u,v)

(u,v),求解剩下的所有未知量,包括内参矩阵,外参矩阵,特征点在世界坐标系下的描述,一共九个参数,因为一般

f

x

=

f

y

=

f

f_x=f_y=f

fx=fy=f,

c

x

=

c

y

=

c

c_x=c_y=c

cx=cy=c,

R

R

R虽然是

3

×

3

3\times 3

3×3的矩阵,但是实际上可以用Rodrigues角来表示,而Rodrigues角只有三个变量,因此,最终要确认的参数如下:

f

f

f、

c

c

c、

Z

Z

Z、

R

R

R、

t

t

t一共九个参数,用

F

F

F表示上述投影关系,

F

F

F的参数是

(

f

,

c

,

Z

,

R

,

t

)

(f,c,Z,R,t)

(f,c,Z,R,t)

对于视觉导航来说,在某一个时刻,有

m

m

m个路标,其中第

i

i

i个路标被

k

i

k_i

ki个相机观测到,那么就会有相应数量的观测方程,就可以用ceres来求解,很像前面的曲线拟合:有许多组测量值和已知的模型,拟合模型的参数就可以。

实例

实例分析

这里使用bal数据集进行说明,对于BAL数据集来说,关于相机的投影模型是这样的,对于世界坐标系里面某个点

(

X

W

,

Y

W

,

Z

W

)

(X_W,Y_W,Z_W)

(XW,YW,ZW),经过旋转

R

R

R和平移

t

t

t投影到相机坐标系下,经过两个畸变参数和一个焦距参数投影到图像坐标系下,和以往讨论的相机模型不同的是,这里数据集不考虑光心参数,总的来说,可以用以下方程描述:

{

[

X

Y

Z

]

=

R

[

X

W

Y

W

Z

W

]

+

t

[

u

u

n

d

i

s

v

u

n

d

i

s

1

]

=

[

l

×

f

0

0

0

l

×

f

0

0

0

1

]

[

X

/

Z

Y

/

Z

1

]

其中

l

=

1

+

l

1

×

r

2

+

l

2

×

r

4

,

r

2

=

X

2

+

Y

2

Z

2

\begin{cases}\begin{bmatrix}X\\Y\\Z\end{bmatrix}=R\begin{bmatrix}X_W\\Y_W\\Z_W\end{bmatrix}+t\\\begin{bmatrix}u_{undis}\\v_{undis}\\1\end{bmatrix}=\begin{bmatrix}l\times f&0&0\\0&l\times f&0\\0&0&1\end{bmatrix}\begin{bmatrix}X/Z\\Y/Z\\1\end{bmatrix}&其中l=1+l_1\times r^2+l_2\times r^4,r^2=\frac{X^2+Y^2}{Z^2}\end{cases}

⎩

⎨

⎧

XYZ

=R

XWYWZW

+t

uundisvundis1

=

l×f000l×f0001

X/ZY/Z1

其中l=1+l1×r2+l2×r4,r2=Z2X2+Y2

其中相机涉及到九个参数:以罗德里格角表示的旋转(三个),位移参数(三个),畸变参数(两个),焦距参数(一个)

点涉及到的参数有三个:

X

Y

Z

XYZ

XYZ世界坐标

因此,涉及到的ceres的残差方程可以这样写,是关于从世界坐标系重投影到二维图像坐标系的残差的

template<typename T>

bool operator()(const T* const camera,const T* const land_mark,T* residual)const{

T point[3];

ceres::AngleAxisRotatePoint(camera,land_mark,point);

point[0]+=camera[3];

point[1]+=camera[4];

point[2]+=camera[5];

T point_x=-point[0]/point[2];

T point_y=-point[1]/point[2];

const T& l1 = camera[7];

const T& l2 = camera[8];

T r2 = point_x * point_x + point_y * point_y;

T distortion = 1.0 + r2 * (l1 + l2 * r2);

const T& focal = camera[6];

T predicted_x = focal * distortion * point_x;

T predicted_y = focal * distortion * point_y;

// The error is the difference between the predicted and observed position.

residual[0] = predicted_x - observe_x;

residual[1] = predicted_y - observe_y;

return true;

}

实例代码

CMakeLists.txt

cmake_minimum_required(VERSION 2.8)

project(ceres-tutorial)

find_package(Ceres REQUIRED)

find_package(Python3 COMPONENTS Interpreter Development REQUIRED)

include_directories(${CERES_INCLUDE_DIRS})

file(GLOB SOURCE_FILE ${CMAKE_SOURCE_DIR}/*.cpp)

foreach(CPP IN LISTS SOURCE_FILE)

get_filename_component(CPP_FILENAME ${CPP} NAME_WE)

add_executable(${CPP_FILENAME} ${CPP})

target_link_libraries(${CPP_FILENAME} ${CERES_LIBRARIES} Python3::Python Python3::Module)

endforeach(CPP IN LISTS SOURCE_FILE)

C++源码

#include<iostream>

#include<fstream>

#include<vector>

#include<ceres/ceres.h>

#include<ceres/rotation.h>

#include<matplotlibcpp.h>

class bal_data{

public:

bal_data(std::string data_path_):data_path(data_path_){

std::ifstream in_file_obj(data_path);

if(in_file_obj.is_open()){

std::string str1,str2,str3,str4;

in_file_obj>>str1;

in_file_obj>>str2;

in_file_obj>>str3;

camera_cnt=std::stoi(str1);

land_mark_cnt=std::stoi(str2);

observation_cnt=std::stoi(str3);

for(int i=0;i<observation_cnt;i++){

in_file_obj>>str1;

in_file_obj>>str2;

in_file_obj>>str3;

in_file_obj>>str4;

land_mark_observations.push_back(

std::make_pair(

std::make_pair(std::stoi(str1),std::stoi(str2)),

std::make_pair(std::stod(str3),std::stod(str4))

));

}

for(int i=0;i<camera_cnt;i++){

double* temp(new double[9]);

for(int j=0;j<9;j++){

std::string str;

in_file_obj>>str;

temp[j]=std::stod(str);

}

cameras.push_back(temp);

}

for(int i=0;i<land_mark_cnt;i++){

double* temp(new double[3]);

for(int j=0;j<3;j++){

std::string str;

in_file_obj>>str;

temp[j]=std::stod(str);

}

land_marks.push_back(temp);

}

in_file_obj.clear();

}

else{

std::cout<<"load data fails"<<std::endl;

return;

}

}

bal_data():bal_data("../data"){}

void out_data(){

int cnt=0;

for(auto land_mark_observation:land_mark_observations){

std::cout<<cnt++<<" ";

std::cout<<land_mark_observation.first.first<<" "

<<land_mark_observation.first.second<<" "

<<land_mark_observation.second.first<<" "

<<land_mark_observation.second.second<<"\n";

}

}

double* load_camera(int i){

return cameras.at(land_mark_observations.at(i).first.first);

}

double* load_land_mark(int i){

return land_marks.at(land_mark_observations.at(i).first.second);

}

int observation_cnt_(){

return observation_cnt;

}

int land_mark_cnt_(){

return land_mark_cnt;

}

std::pair<double,double> load_observation(int i){

return land_mark_observations.at(i).second;

}

private:

std::string data_path{"../data"};

int camera_cnt{0};

int land_mark_cnt{0};

int observation_cnt{0};

//<<camera_index,land_mark_index>,<observation_x,observation_y>>

std::vector<std::pair<std::pair<int,int>,std::pair<double,double>>> land_mark_observations;

std::vector<double*> cameras;

std::vector<double*> land_marks;

};

struct reproject{

public:

reproject(double x_,double y_):observe_x(x_),observe_y(y_){}

template<typename T>

bool operator()(const T* const camera,const T* const land_mark,T* residual)const{

T point[3];

ceres::AngleAxisRotatePoint(camera,land_mark,point);

point[0]+=camera[3];

point[1]+=camera[4];

point[2]+=camera[5];

T point_x=-point[0]/point[2];

T point_y=-point[1]/point[2];

const T& l1 = camera[7];

const T& l2 = camera[8];

T r2 = point_x * point_x + point_y * point_y;

T distortion = 1.0 + r2 * (l1 + l2 * r2);

const T& focal = camera[6];

T predicted_x = focal * distortion * point_x;

T predicted_y = focal * distortion * point_y;

// The error is the difference between the predicted and observed position.

residual[0] = predicted_x - observe_x;

residual[1] = predicted_y - observe_y;

return true;

}

private:

double observe_x,observe_y;

};

int main(int argc,char**argv){

bal_data object;

// object.out_data();

std::vector<double> x_;

std::vector<double> y_;

std::vector<double> z_;

for(int i=0;i<object.land_mark_cnt_();i++){

x_.push_back(*(object.load_land_mark(i)));

y_.push_back(*(object.load_land_mark(i)+1));

z_.push_back(*(object.load_land_mark(i)+2));

}

ceres::Problem problem;

for(int i=0;i<object.observation_cnt_();i++){

// std::cout<<"point = "<<object.load_observation(i).first<<","<<object.load_observation(i).second<<std::endl;

ceres::CostFunction* cost_function(

new ceres::AutoDiffCostFunction<reproject,2,9,3>(

new reproject(object.load_observation(i).first,object.load_observation(i).second)

)

);

ceres::LossFunction* loss(new ceres::HuberLoss(1.0));

problem.AddResidualBlock(cost_function,nullptr,object.load_camera(i),object.load_land_mark(i));

}

ceres::Solver::Options option;

option.linear_solver_type=ceres::DENSE_SCHUR;

option.minimizer_progress_to_stdout=true;

ceres::Solver::Summary summary;

ceres::Solve(option,&problem,&summary);

std::cout<<summary.FullReport()<<std::endl;

std::vector<double> x;

std::vector<double> y;

std::vector<double> z;

for(int i=0;i<object.land_mark_cnt_();i++){

x.push_back(*(object.load_land_mark(i)));

y.push_back(*(object.load_land_mark(i)+1));

z.push_back(*(object.load_land_mark(i)+2));

}

std::map<std::string,std::string> kw;

kw["marker"]="v";

kw["color"]="blue";

kw["label"]="optimized";

matplotlibcpp::scatter(x,y,z,1.,kw);

matplotlibcpp::legend();

kw["marker"]="o";

kw["color"]="red";

kw["label"]="original";

matplotlibcpp::scatter(x,y,z,1.,kw);

matplotlibcpp::legend();

matplotlibcpp::show();

return 0;

}

使用

在源码文件夹中新建build文件夹编译,把从BAL官网下载数据集,数据集文件放在和build文件夹同级文件夹里面,执行以下

./simple_bal ../data

实例结果

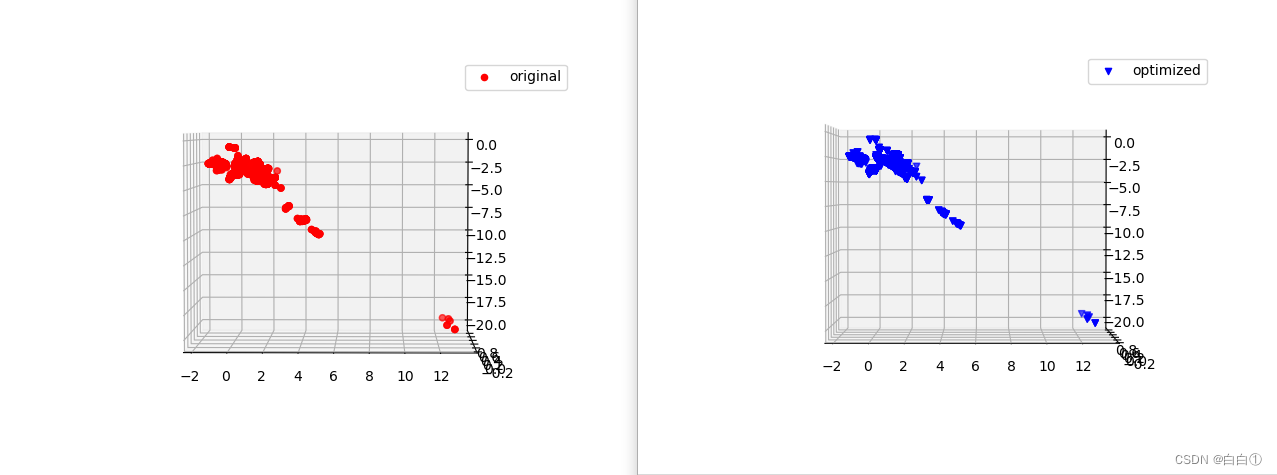

这里输出的结果是对于某一个固定时刻内,由五十台相机确定的一组路标点的坐标

似乎看不出来差别,哈哈。

似乎看不出来差别,哈哈。

716

716

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?