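torch.distributed supports three backends: 'gloo', 'mpi', and 'nccl'. Each backend supports a different set of functions, so check the support table in the documentation before relying on one: https://pytorch.org/docs/stable/distributed.html#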
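Which backends can be used depends on how PyTorch was built, so it can help to check at runtime before calling `init_process_group`. The following is a minimal sketch rather than a recommended policy: the helper names `available_backends` and `pick_backend` are hypothetical, and preferring 'nccl' on CUDA machines with 'gloo' as the fallback is an assumption made for illustration.

```python
import torch
import torch.distributed as dist


def available_backends():
    """Return the names of the backends compiled into this PyTorch build."""
    if not dist.is_available():
        return []
    checks = {
        "nccl": dist.is_nccl_available,
        "gloo": dist.is_gloo_available,
        "mpi": dist.is_mpi_available,
    }
    return [name for name, is_built in checks.items() if is_built()]


def pick_backend():
    """Assumed policy: 'nccl' when CUDA is usable, otherwise 'gloo'."""
    backends = available_backends()
    if "nccl" in backends and torch.cuda.is_available():
        return "nccl"
    if "gloo" in backends:
        return "gloo"
    raise RuntimeError("neither 'nccl' nor 'gloo' is available in this build")
```

The returned string can be passed as the `backend` argument of `dist.init_process_group`.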

The examples below use backend = 'nccl' to show how to collect the value of a tensor that is spread across different GPUs.

1. reduce or all_reduce

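# Get the mean of this tensor across all GPUs

box_iou = compute_box_iou(gt_box, predicted_box)

dist.reduce(box_iou, dst=0)

if dist.get_rank() == 0:

    # only rank 0 holds the reduced sum, so only rank 0 divides by world_size
    box_iou = box_iou / dist.get_world_size()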

# Get the mean of every field in a dict

loss_names = []

all_losses = []

for k in sorted(loss_dict.keys()):

    loss_names.append(k)

    all_losses.append(loss_dict[k])

all_losses = torch.stack(all_losses, dim=0)

dist.reduce(all_losses, dst=0)

if dist.get_rank() == 0:

    # only main process gets accumulated, so only divide by world_size in this case

    all_losses /= dist.get_world_size()

loss_dict = {k: v for k, v in zip(loss_names, all_losses)}
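The snippets above use `dist.reduce`, so only rank 0 ends up with the mean. When every rank needs the result, `dist.all_reduce` can be used instead. The following is a minimal sketch under a few assumptions: the tensors are floating point, the helper names `all_reduce_mean` and `all_reduce_dict_mean` are hypothetical, and the fallback for an uninitialized process group is an added convenience that is not part of the original snippets.

```python
import torch
import torch.distributed as dist


def all_reduce_mean(tensor):
    """Return the element-wise mean of `tensor` over all ranks, on every rank."""
    # Work on a detached copy so the caller's tensor is not modified in place.
    result = tensor.detach().clone()
    if not (dist.is_available() and dist.is_initialized()):
        # Assumed fallback: with no process group, the local value is the mean.
        return result
    dist.all_reduce(result, op=dist.ReduceOp.SUM)
    return result / dist.get_world_size()


def all_reduce_dict_mean(values):
    """Average every field of a non-empty dict of same-shaped tensors."""
    # Sort the keys so that every rank stacks the fields in the same order.
    names = sorted(values.keys())
    stacked = torch.stack([values[k] for k in names], dim=0)
    return dict(zip(names, all_reduce_mean(stacked)))
```

Stacking the fields first means one collective call per dict instead of one per field, which is the same trick the `dist.reduce` version relies on.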

2. gather or all_gather

box_iou = metric_box_iou(gt_boxes, predicted_boxes)

mask_iou = metric_mask_iou(gt_masks, pred_masks)

iou = torch.stack([box_iou, mask_iou])

# Note: do not define this as gather_t = [torch.ones_like(iou)] * dist.get_world_size(),

# because then the output tensors all share the same underlying storage

gather_t = [torch.ones_like(iou) for _ in range(dist.get_world_size())]

dist.all_gather(gather_t, iou)

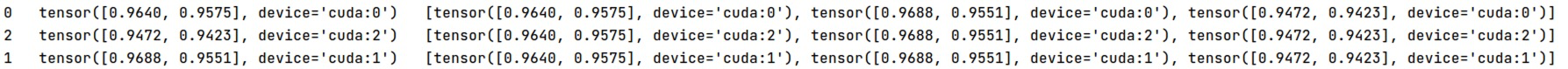

print(dist.get_rank(), ' ', iou, ' ', gather_t)

As shown, every GPU receives the data from all GPUs, ordered from GPU0 to GPUn.
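The warning about building the output list with `*` can be checked without any distributed setup, because it is ordinary Python list behavior: multiplying a list repeats references to the same tensor object. The following is a small sketch; `world_size = 3` and the values in `iou` are made-up stand-ins for illustration.

```python
import torch

iou = torch.tensor([0.5, 0.7])  # stand-in for the stacked IoU tensor
world_size = 3                  # hypothetical number of processes

# List multiplication repeats a reference to ONE tensor.
shared = [torch.ones_like(iou)] * world_size
# The list comprehension allocates a fresh tensor per slot.
independent = [torch.ones_like(iou) for _ in range(world_size)]

# Writing into slot 0 shows the difference.
shared[0].fill_(9.0)
independent[0].fill_(9.0)

shares_storage = all(t.data_ptr() == shared[0].data_ptr() for t in shared)
distinct_storage = len({t.data_ptr() for t in independent}) == world_size

print(shared)       # every slot changed, since they are the same tensor
print(independent)  # only slot 0 changed
```

With the shared version, `all_gather` would have a single buffer to write all ranks' results into, which is why the list comprehension is needed.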
