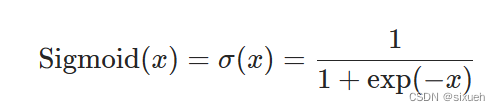

1. Common activation functions

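nn.ReLU: values less than 0 are set to zero, and positive values pass through unchanged, i.e. ReLU(x) = max(0, x).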
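As a quick check of that definition, here is a minimal sketch (assuming only a standard PyTorch install) that compares `nn.ReLU` with the same rule written out by hand using `torch.clamp`. The sample tensor `x` is made up for illustration.

```python
import torch
from torch import nn

# Illustrative input with negative, zero and positive values
x = torch.tensor([-2.0, -0.5, 0.0, 0.5, 2.0])

relu = nn.ReLU()
y_module = relu(x)

# The same rule written by hand: clamp everything below 0 up to 0
y_manual = torch.clamp(x, min=0)

print(y_module.tolist())                # negatives became 0, positives unchanged
print(torch.equal(y_module, y_manual))  # True
```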

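For binary classification, use Sigmoid in the output layer and ReLU in the hidden layers (a tip from the video's viewer comments).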
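To make that tip concrete, here is a minimal sketch of a toy binary classifier with ReLU in the hidden layer and Sigmoid at the output. The layer sizes (4 inputs, 8 hidden units) and the random input batch are hypothetical and chosen only for illustration.

```python
import torch
from torch import nn

# Hypothetical toy model: 4 input features -> 8 hidden units -> 1 output
model = nn.Sequential(
    nn.Linear(4, 8),
    nn.ReLU(),        # hidden-layer activation
    nn.Linear(8, 1),
    nn.Sigmoid(),     # squashes the output into the range (0, 1)
)

x = torch.randn(5, 4)        # a batch of 5 random samples
prob = model(x)              # shape (5, 1), read as P(class = 1)
pred = (prob > 0.5).long()   # threshold at 0.5 to get 0/1 labels

print(prob.shape)            # torch.Size([5, 1])
print(pred.flatten().tolist())
```

When training such a model, a common alternative is to drop the final Sigmoid and use `nn.BCEWithLogitsLoss`, which applies the sigmoid internally in a more numerically stable way; the explicit Sigmoid above is kept to match the tip.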

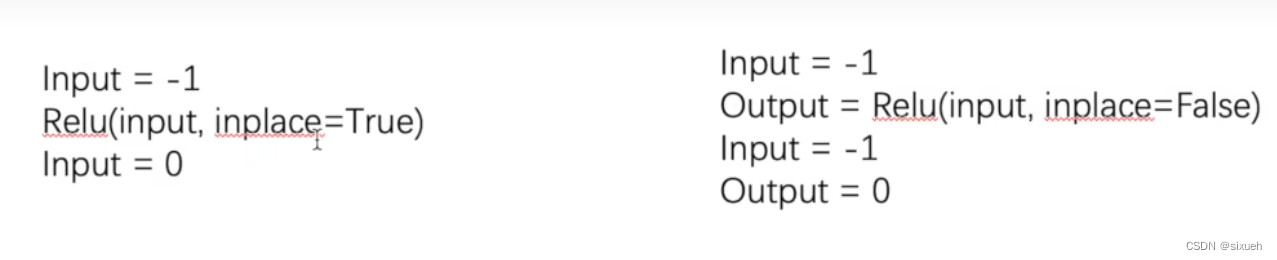

2. Parameters of ReLU

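(1) inplace: in-place replacement. With `inplace=True`, ReLU writes its result back into the input tensor, overwriting the original values. With `inplace=False` (the default), the input is left unchanged and a new tensor is returned.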
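The difference is easy to see by applying both settings to small tensors. This is a minimal sketch; the tensors `x` and `y` are made up for illustration.

```python
import torch
from torch import nn

# inplace=False (default): a new tensor is returned, x keeps its values
x = torch.tensor([-1.0, 2.0, -3.0])
out = nn.ReLU(inplace=False)(x)
print(x.tolist())    # still [-1.0, 2.0, -3.0]
print(out.tolist())  # [0.0, 2.0, 0.0]

# inplace=True: the result is written back into y itself
y = torch.tensor([-1.0, 2.0, -3.0])
out_inplace = nn.ReLU(inplace=True)(y)
print(y.tolist())    # [0.0, 2.0, 0.0]: the negatives in y were overwritten
print(out_inplace.data_ptr() == y.data_ptr())  # True: same underlying storage
```

`inplace=True` saves a little memory, but it only makes sense when the original input values are no longer needed afterwards.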

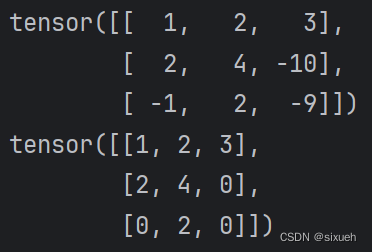

3. Building a ReLU module

    import torch
    from torch import nn

    # A 3x3 integer tensor that contains some negative entries
    data = torch.tensor([[1, 2, 3],
                         [2, 4, -10],
                         [-1, 2, -9]])
    print(data)


    class Relu(nn.Module):
        def __init__(self):
            super().__init__()
            # inplace=False: the input tensor is not modified
            self.relu1 = nn.ReLU(inplace=False)

        def forward(self, input):
            output = self.relu1(input)
            return output


    Relu1 = Relu()
    output = Relu1(data)  # calling the module runs forward()
    print(output)

Output:

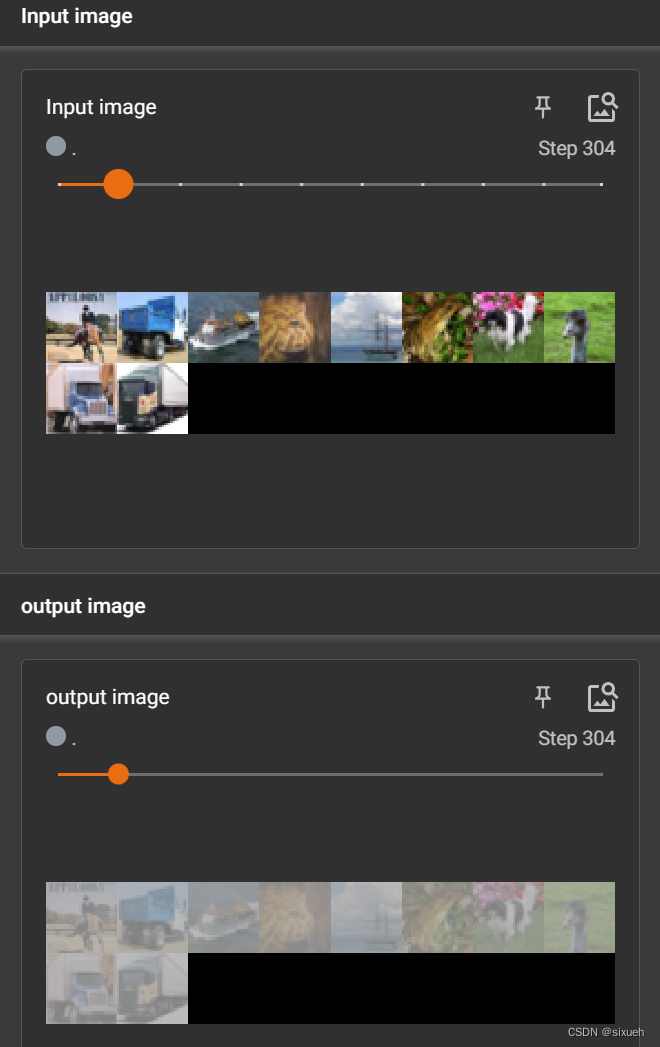
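The wrapper class above is mainly an exercise in subclassing `nn.Module`. The same computation can be done directly with `torch.relu` or `torch.nn.functional.relu`. This minimal sketch applies all three forms to the same `data` tensor and spells out the expected result: every negative entry becomes 0.

```python
import torch
import torch.nn.functional as F
from torch import nn

data = torch.tensor([[1, 2, 3],
                     [2, 4, -10],
                     [-1, 2, -9]])

# Negative entries replaced by 0, everything else unchanged
expected = torch.tensor([[1, 2, 3],
                         [2, 4, 0],
                         [0, 2, 0]])

# Three equivalent ways to apply ReLU
out_module = nn.ReLU()(data)
out_functional = F.relu(data)
out_torch = torch.relu(data)

print(torch.equal(out_module, expected))  # True
```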

4. Processing images with Sigmoid

    import torchvision
    from torch import nn
    from torch.nn import Sigmoid
    from torch.utils.data import DataLoader
    from torch.utils.tensorboard import SummaryWriter

    # Dataset: CIFAR10 test split, converted by ToTensor() to values in [0, 1]
    # (download=True only downloads if the data is not already in ../dataset)
    dataset = torchvision.datasets.CIFAR10('../dataset', train=False,
                                           transform=torchvision.transforms.ToTensor(),
                                           download=True)
    dataloader = DataLoader(dataset, batch_size=10, shuffle=True)


    class Sigmoid1(nn.Module):
        def __init__(self):
            super().__init__()
            self.sigmoid = Sigmoid()

        def forward(self, input):
            output = self.sigmoid(input)
            return output


    # TensorBoard logs are written to ../Sigmoid
    writer = SummaryWriter('../Sigmoid')
    Sigmoid_image = Sigmoid1()

    step = 0
    for data in dataloader:
        imgs, targets = data
        # Log the original batch and the Sigmoid output under separate tags
        writer.add_images('Input image', imgs, step)
        output = Sigmoid_image(imgs)
        writer.add_images('output image', output, step)
        step = step + 1

    writer.close()

Results (open the logs with `tensorboard --logdir=../Sigmoid`):
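Some arithmetic helps explain what to expect in the output images. Sigmoid is defined as sigmoid(x) = 1 / (1 + e^(-x)) and is increasing. Since `ToTensor()` produces pixel values in [0, 1], the output is confined to [sigmoid(0), sigmoid(1)] ≈ [0.5, 0.731]. Pure black becomes mid-grey, and the whole image loses contrast. This sketch checks that range on a random tensor that stands in for one CIFAR10 batch (a hypothetical placeholder, so the dataset does not need to be downloaded).

```python
import torch

# Hypothetical stand-in for one CIFAR10 batch after ToTensor():
# 10 images, 3 channels, 32x32 pixels, values in [0, 1)
imgs = torch.rand(10, 3, 32, 32)

out = torch.sigmoid(imgs)

# sigmoid(0) = 0.5 and sigmoid(1) ≈ 0.7311, so every output pixel
# lands in that narrow band
print(out.min().item(), out.max().item())
print(torch.sigmoid(torch.tensor([0.0, 1.0])).tolist())
```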

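This article covers common uses of the ReLU (Rectified Linear Unit) and Sigmoid functions in neural networks. It explains ReLU's parameters (such as the inplace option), shows how to implement a ReLU module in PyTorch, and applies Sigmoid to CIFAR10 image data, with the results displayed in TensorBoard.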
