- Title: An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale

- Year: 2021

- Conference: ICLR

- Institution: Google

- Reference blog: https://blog.csdn.net/baidu_36913330/article/details/120198840

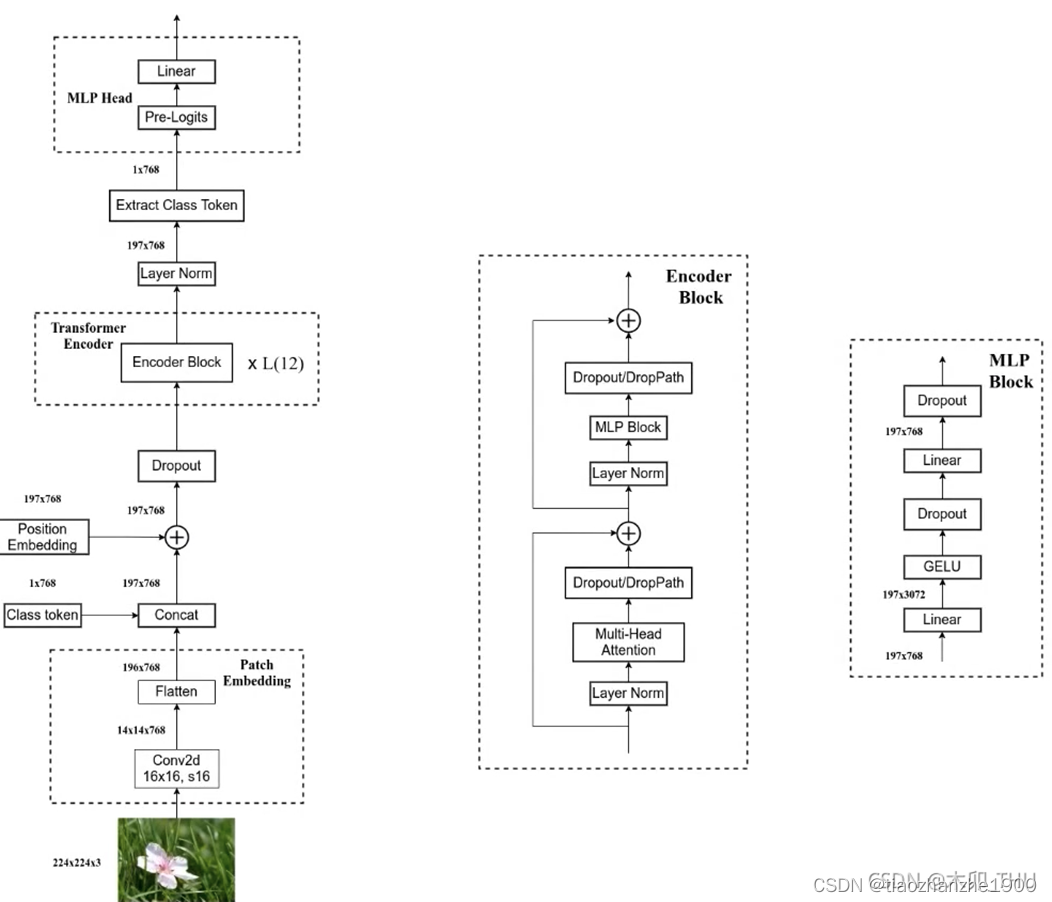

1 Patch Embedding

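Implemented as a convolution with a 16 × 16 kernel, stride 16, and 768 output channels: [224, 224, 3] --> [14, 14, 768] --> [196, 768]. Prepending the class token then gives the 197 × 768 input used in the encoder.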
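The shape arithmetic above can be checked with a small sketch. It only computes shapes and does not run a real convolution. The function name `patch_embedding_shapes` and its parameter names are made up for illustration, and the extra class-token row is taken from the ViT paper rather than from this note.

```python
def patch_embedding_shapes(image_size=224, patch_size=16, embed_dim=768):
    """Return the shapes produced by a patch-embedding convolution.

    Assumes kernel size == stride == patch_size and no padding.
    """
    # Standard output-size formula for an unpadded convolution.
    grid = (image_size - patch_size) // patch_size + 1
    num_patches = grid * grid
    return {
        "conv_output": (grid, grid, embed_dim),
        "flattened": (num_patches, embed_dim),
        # One extra row for the class token (assumption from the ViT paper).
        "with_class_token": (num_patches + 1, embed_dim),
    }


shapes = patch_embedding_shapes()
for name, shape in shapes.items():
    print(name, shape)
```

Because the stride equals the kernel size, the patches do not overlap, so each of the 196 output positions corresponds to exactly one 16 × 16 patch.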

2 Encoder

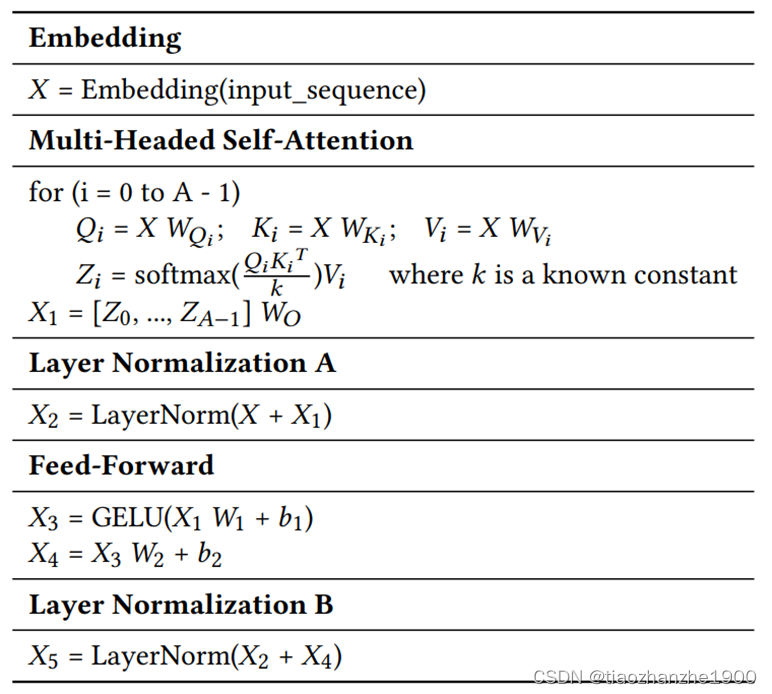

QKV = I * W:

Input: 197 * 768

Weight: 768 * (768 * 3)

Output: 197 * (768 * 3)

Mul: 197 * 768 * (768 * 3)

S = Q * K^T:

Q: 197 * 768

K: 197 * 768

S: 197 * 197

Mul: 197 * 768 * 197

S’ = softmax(S/k):

S: 197 * 197

S’: 197 * 197

Mul: 197 * 197

Exp: 197 * 197

Add: 197 * 197

Div: 197 * 197
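The per-element counts for the softmax step can be illustrated with a minimal pure-Python sketch that processes one row of S and tallies the operations. The function name `scaled_softmax_row` and the scale value 8.0 are placeholders, since the note only calls the scaling factor k. The usual max-subtraction for numerical stability is left out so the counts match the ones listed here.

```python
import math


def scaled_softmax_row(row, scale):
    """Softmax of one row of S divided by `scale`, plus operation counts."""
    inv = 1.0 / scale                      # computed once, not counted per element
    scaled = [x * inv for x in row]        # one multiply per element
    exps = [math.exp(x) for x in scaled]   # one exp per element
    total = sum(exps)                      # sum() starts at 0, so one add per element
    probs = [e / total for e in exps]      # one divide per element
    n = len(row)
    return probs, {"mul": n, "exp": n, "add": n, "div": n}


n_tokens = 197
row = [0.0] * n_tokens                     # dummy scores, only the length matters here
probs, counts = scaled_softmax_row(row, scale=8.0)  # 8.0 is a placeholder for k

# S has n_tokens rows, so multiply the per-row counts by n_tokens.
totals = {op: c * n_tokens for op, c in counts.items()}
print(totals)
```

Each of the four totals comes out as 197 * 197, matching the Mul, Exp, Add, and Div entries for this step.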

Z = S’ * V:

S’: 197 * 197

V: 197 * 768

Z: 197 * 768

Mul: 197 * 197 * 768

O = Z * Wo:

Z: 197 * 768

Wo: 768 * 768

O: 197 * 768

Mul: 197 * 768 * 768
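To see which step dominates, the Mul entries above can be evaluated and summed. This is a rough sketch that follows the note's single-head shapes (197 tokens, width 768). The function and key names are hypothetical, and only multiplications are counted.

```python
def attention_mul_counts(n_tokens=197, dim=768):
    """Multiplication counts per step, using the single-head shapes in this note."""
    return {
        "qkv_projection": n_tokens * dim * (dim * 3),   # QKV = I * W
        "scores": n_tokens * dim * n_tokens,            # S = Q * K^T
        "softmax_scaling": n_tokens * n_tokens,         # S / k
        "weighted_sum": n_tokens * n_tokens * dim,      # Z = S' * V
        "output_projection": n_tokens * dim * dim,      # O = Z * Wo
    }


counts = attention_mul_counts()
total = sum(counts.values())
for name, value in counts.items():
    print(f"{name}: {value:,} ({value / total:.1%})")
print(f"total: {total:,}")
```

With these shapes, the two linear projections account for most of the multiplications, because 768 is much larger than the 197-token sequence length.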
