1. Introduction to SCConv

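Abstract: Convolutional neural networks (CNNs) achieve remarkable performance across computer vision tasks. This comes at the cost of large computational resources, partly because convolutional layers extract redundant features. Recent work either compresses well-trained large models or explores carefully designed lightweight models. In this paper, we exploit the spatial and channel redundancy among features for CNN compression. We propose an efficient convolution module called SCConv (Spatial and Channel reconstruction Convolution) that reduces redundant computation and facilitates representative feature learning. SCConv consists of two units: the Spatial Reconstruction Unit (SRU) and the Channel Reconstruction Unit (CRU). SRU suppresses spatial redundancy with a separate-and-reconstruct method, and CRU reduces channel redundancy with a split-transform-fuse strategy. SCConv is also a plug-and-play architectural unit that can directly replace standard convolutions in many CNNs. Experimental results show that SCConv-embedded models perform better by reducing redundant features, while significantly lowering complexity and computational cost.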
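The claim that SCConv lowers cost can be sanity-checked with simple parameter arithmetic. The sketch below is my own count, not a figure from the paper. It tallies the parameters of SCConv(c) from the layer shapes and default hyperparameters in the core code of section 2 (alpha=1/2, squeeze_radio=2, group_size=2, group_kernel_size=3) and compares them with a bias-free c-to-c 3×3 convolution. It counts parameters only, not FLOPs or latency.

```python
def conv_params(c_in, c_out, k, groups=1, bias=False):
    """Parameter count of an nn.Conv2d layer with a square k x k kernel."""
    weight = c_out * (c_in // groups) * k * k
    return weight + (c_out if bias else 0)


def scconv_params(c, alpha=0.5, squeeze_radio=2, group_size=2, group_kernel_size=3):
    """Parameters of SCConv(c) following the layer layout of the core code (SRU + CRU)."""
    up = int(alpha * c)
    low = c - up
    up_s, low_s = up // squeeze_radio, low // squeeze_radio
    sru = 2 * c  # nn.GroupNorm affine weight + bias
    cru = (
        conv_params(up, up_s, 1)                                                  # squeeze1
        + conv_params(low, low_s, 1)                                              # squeeze2
        + conv_params(up_s, c, group_kernel_size, groups=group_size, bias=True)   # GWC keeps the default bias
        + conv_params(up_s, c, 1)                                                 # PWC1
        + conv_params(low_s, c - low_s, 1)                                        # PWC2
    )
    return sru + cru


for c in (32, 64, 256):
    print(c, "standard 3x3:", conv_params(c, c, 3), "SCConv:", scconv_params(c))
```

With these defaults the total works out to 29c²/16 + 3c. For large c, SCConv therefore holds roughly a fifth of the 9c² weights of a standard 3×3 convolution (1952 vs 9216 at c = 32).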

Official paper: SCConv official paper link

Official code: SCConv official code link

SCConv module structure diagram:

2. Core code

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class GroupBatchnorm2d(nn.Module):
    """Hand-written group normalization, used by SRU when torch_gn=False."""

    def __init__(self, c_num: int, group_num: int = 16, eps: float = 1e-10):
        super(GroupBatchnorm2d, self).__init__()
        assert c_num >= group_num
        self.group_num = group_num
        self.weight = nn.Parameter(torch.randn(c_num, 1, 1))
        self.bias = nn.Parameter(torch.zeros(c_num, 1, 1))
        self.eps = eps

    def forward(self, x):
        N, C, H, W = x.size()
        # Normalize each group of channels with its own mean and std
        x = x.view(N, self.group_num, -1)
        mean = x.mean(dim=2, keepdim=True)
        std = x.std(dim=2, keepdim=True)
        x = (x - mean) / (std + self.eps)
        x = x.view(N, C, H, W)
        return x * self.weight + self.bias


class SRU(nn.Module):
    """Spatial Reconstruction Unit: separate informative from redundant features, then reconstruct."""

    def __init__(self,
                 oup_channels: int,
                 group_num: int = 16,
                 gate_treshold: float = 0.5,
                 torch_gn: bool = True
                 ):
        super().__init__()
        self.gn = nn.GroupNorm(num_channels=oup_channels, num_groups=group_num) if torch_gn else GroupBatchnorm2d(
            c_num=oup_channels, group_num=group_num)
        self.gate_treshold = gate_treshold
        self.sigmoid = nn.Sigmoid()

    def forward(self, x):
        gn_x = self.gn(x)
        # Normalized GN scale factors act as per-channel informativeness weights
        w_gamma = self.gn.weight / torch.sum(self.gn.weight)
        w_gamma = w_gamma.view(1, -1, 1, 1)
        reweights = self.sigmoid(gn_x * w_gamma)
        # Gate: positions above the threshold are kept fully in x_1 and zeroed in x_2;
        # the remaining positions are scaled by their weight in both x_1 and x_2
        w1 = torch.where(reweights > self.gate_treshold, torch.ones_like(reweights), reweights)
        w2 = torch.where(reweights > self.gate_treshold, torch.zeros_like(reweights), reweights)
        x_1 = w1 * x
        x_2 = w2 * x
        y = self.reconstruct(x_1, x_2)
        return y

    def reconstruct(self, x_1, x_2):
        # Cross-add the channel halves of the two parts (the channel count must be even)
        x_11, x_12 = torch.split(x_1, x_1.size(1) // 2, dim=1)
        x_21, x_22 = torch.split(x_2, x_2.size(1) // 2, dim=1)
        return torch.cat([x_11 + x_22, x_12 + x_21], dim=1)


class CRU(nn.Module):
    """Channel Reconstruction Unit: split, transform, fuse."""

    def __init__(self,
                 op_channel: int,
                 alpha: float = 1 / 2,
                 squeeze_radio: int = 2,
                 group_size: int = 2,
                 group_kernel_size: int = 3,
                 ):
        super().__init__()
        # Split: alpha * C channels go to the "up" branch, the rest to the "low" branch
        self.up_channel = up_channel = int(alpha * op_channel)
        self.low_channel = low_channel = op_channel - up_channel
        # 1x1 convolutions squeeze each branch by squeeze_radio
        self.squeeze1 = nn.Conv2d(up_channel, up_channel // squeeze_radio, kernel_size=1, bias=False)
        self.squeeze2 = nn.Conv2d(low_channel, low_channel // squeeze_radio, kernel_size=1, bias=False)
        # up branch: group-wise conv (GWC) + point-wise conv (PWC1)
        self.GWC = nn.Conv2d(up_channel // squeeze_radio, op_channel, kernel_size=group_kernel_size, stride=1,
                             padding=group_kernel_size // 2, groups=group_size)
        self.PWC1 = nn.Conv2d(up_channel // squeeze_radio, op_channel, kernel_size=1, bias=False)
        # low branch: cheap PWC2, concatenated with its own input (feature reuse)
        self.PWC2 = nn.Conv2d(low_channel // squeeze_radio, op_channel - low_channel // squeeze_radio, kernel_size=1,
                              bias=False)
        self.advavg = nn.AdaptiveAvgPool2d(1)

    def forward(self, x):
        # Split
        up, low = torch.split(x, [self.up_channel, self.low_channel], dim=1)
        up, low = self.squeeze1(up), self.squeeze2(low)
        # Transform
        Y1 = self.GWC(up) + self.PWC1(up)
        Y2 = torch.cat([self.PWC2(low), low], dim=1)
        # Fuse: softmax channel attention over the pooled [Y1, Y2], then add the two halves
        out = torch.cat([Y1, Y2], dim=1)
        out = F.softmax(self.advavg(out), dim=1) * out
        out1, out2 = torch.split(out, out.size(1) // 2, dim=1)
        return out1 + out2


def autopad(k, p=None, d=1):
    """Pad to 'same' output size (as in ultralytics)."""
    if d > 1:
        k = d * (k - 1) + 1 if isinstance(k, int) else [d * (x - 1) + 1 for x in k]  # actual kernel-size
    if p is None:
        p = k // 2 if isinstance(k, int) else [x // 2 for x in k]  # auto-pad
    return p


class Conv(nn.Module):
    """Standard convolution: Conv2d + BatchNorm2d + activation (as in ultralytics)."""
    default_act = nn.SiLU()  # default activation

    def __init__(self, c1, c2, k=1, s=1, p=None, g=1, d=1, act=True):
        super().__init__()
        self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p, d), groups=g, dilation=d, bias=False)
        self.bn = nn.BatchNorm2d(c2)
        self.act = self.default_act if act is True else act if isinstance(act, nn.Module) else nn.Identity()

    def forward(self, x):
        return self.act(self.bn(self.conv(x)))

    def forward_fuse(self, x):
        return self.act(self.conv(x))


class Bottleneck_SCConv(nn.Module):
    """Bottleneck whose second convolution is replaced by SCConv."""

    def __init__(self, c1, c2, shortcut=True, g=1, k=(3, 3), e=0.5):
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, k[0], 1)
        self.cv2 = SCConv(c_)  # SCConv keeps the channel count: output has c_ channels (= c2 when e=1.0)
        self.add = shortcut and c1 == c2

    def forward(self, x):
        """Apply cv1 then SCConv, adding a residual connection when self.add is True."""
        return x + self.cv2(self.cv1(x)) if self.add else self.cv2(self.cv1(x))


class C2f_SCConv(nn.Module):
    """C2f block whose bottlenecks use SCConv."""

    def __init__(self, c1, c2, n=1, shortcut=False, g=1, e=0.5):
        super().__init__()
        self.c = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, 2 * self.c, 1, 1)
        self.cv2 = Conv((2 + n) * self.c, c2, 1)  # optional act=FReLU(c2)
        self.m = nn.ModuleList(
            Bottleneck_SCConv(self.c, self.c, shortcut, g, k=((3, 3), (3, 3)), e=1.0) for _ in range(n))

    def forward(self, x):
        y = list(self.cv1(x).chunk(2, 1))
        y.extend(m(y[-1]) for m in self.m)
        return self.cv2(torch.cat(y, 1))

    def forward_split(self, x):
        y = list(self.cv1(x).split((self.c, self.c), 1))
        y.extend(m(y[-1]) for m in self.m)
        return self.cv2(torch.cat(y, 1))


class SCConv(nn.Module):
    """SRU followed by CRU; input and output both have op_channel channels."""

    def __init__(self,
                 op_channel: int,
                 group_num: int = 4,  # op_channel must be divisible by group_num (GroupNorm)
                 gate_treshold: float = 0.5,
                 alpha: float = 1 / 2,
                 squeeze_radio: int = 2,
                 group_size: int = 2,
                 group_kernel_size: int = 3,
                 ):
        super().__init__()
        self.SRU = SRU(op_channel,
                       group_num=group_num,
                       gate_treshold=gate_treshold)
        self.CRU = CRU(op_channel,
                       alpha=alpha,
                       squeeze_radio=squeeze_radio,
                       group_size=group_size,
                       group_kernel_size=group_kernel_size)

    def forward(self, x):
        x = self.SRU(x)
        x = self.CRU(x)
        return x


if __name__ == '__main__':
    x = torch.randn(1, 32, 16, 16)
    model = SCConv(32)
    print(model(x).shape)  # torch.Size([1, 32, 16, 16])
```

3. Adding SCConv to YOLOv8

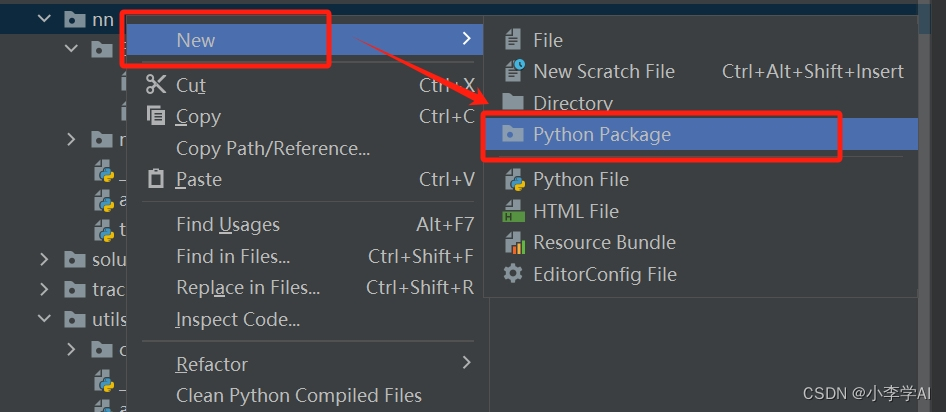

3.1 Create an Extramodule folder under ultralytics/nn

3.2 Create SCConv.py inside Extramodule

Paste the SCConv code given above into SCConv.py.

After adding the SCConv code, import it in ultralytics/nn/Extramodule/__init__.py.
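Steps 3.1 and 3.2 amount to creating a small Python package. The helper below is a hypothetical convenience script, not part of ultralytics. It creates the folder and a placeholder SCConv.py, then appends an import line to `__init__.py` without touching anything already there. Exporting `SCConv` and `C2f_SCConv` by name is my choice; `from .SCConv import *` would also work. `root` is assumed to be the top-level folder of your ultralytics source checkout.

```python
from pathlib import Path

IMPORT_LINE = "from .SCConv import SCConv, C2f_SCConv\n"


def create_extramodule(root):
    """Create <root>/ultralytics/nn/Extramodule with SCConv.py and an __init__.py that imports it."""
    pkg = Path(root) / "ultralytics" / "nn" / "Extramodule"
    pkg.mkdir(parents=True, exist_ok=True)

    # Placeholder only: the core code from section 2 still has to be pasted into this file
    scconv_file = pkg / "SCConv.py"
    if not scconv_file.exists():
        scconv_file.write_text("# Paste the SCConv core code here\n", encoding="utf-8")

    # Append the import once, keeping whatever __init__.py already contains
    init_file = pkg / "__init__.py"
    existing = init_file.read_text(encoding="utf-8") if init_file.exists() else ""
    if IMPORT_LINE not in existing:
        if existing and not existing.endswith("\n"):
            existing += "\n"
        init_file.write_text(existing + IMPORT_LINE, encoding="utf-8")
    return pkg
```

For example, `create_extramodule(r'E:\csdn\ultralytics-main')` would prepare the folder for the checkout used in the training script. Running it twice leaves a single import line.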

3.3 Import it in tasks.py

Import Extramodule in ultralytics/nn/tasks.py.

In tasks.py, find parse_model (Ctrl+F jumps straight to it) and add the following code:
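Whatever the exact edit looks like in your ultralytics version, its job is to make parse_model treat C2f_SCConv the same way it treats C2f. That means scaling the output channels, prepending the input channel count, and inserting the repeat count as the third constructor argument. If C2f_SCConv is left out, the row `[-1, 3, C2f_SCConv, [128, True]]` reaches the module unchanged as C2f_SCConv(128, True), so it receives the wrong arguments. The sketch below is a simplified, hypothetical mimic of that logic with module names as strings. The set names and `expand_args` are made up for illustration and are not the ultralytics source. The scaling rules follow parse_model as I understand it: max(round(n·depth), 1) for repeats, and width-scaled channels rounded up to a multiple of 8.

```python
import math


def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


# Hypothetical: modules whose first yaml arg is the output channel count
CHANNEL_MODULES = {"Conv", "C2f", "C2f_SCConv"}
# Hypothetical: C2f-style modules that take the repeat count n as their third argument
REPEAT_MODULES = {"C2f", "C2f_SCConv"}


def expand_args(module, c1, n, args, depth=0.33, width=0.25, max_channels=1024):
    """Simplified mimic of turning one yaml row [from, n, module, args] into constructor args.

    c1 is the channel count produced by the input layer; the defaults are the 'n' scale.
    """
    n = max(round(n * depth), 1) if n > 1 else n  # depth-scale the repeat count
    if module in CHANNEL_MODULES:
        c2 = make_divisible(min(args[0], max_channels) * width, 8)  # width-scale the channels
        args = [c1, c2, *args[1:]]
        if module in REPEAT_MODULES:
            args.insert(2, n)  # -> C2f_SCConv(c1, c2, n, shortcut)
            n = 1  # the block repeats internally instead of being stacked n times
    return args, n


print(expand_args("C2f_SCConv", 32, 3, [128, True]))  # ([32, 32, 1, True], 1)
```

Here 32 is the scaled output of the preceding `Conv [128, 3, 2]` row at scale n, which is what the first C2f_SCConv row receives as c1.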

4. Create a yolov8SCConv.yaml file

```yaml
# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLOv8 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 2 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.33, 0.25, 1024] # YOLOv8n summary: 225 layers, 3157200 parameters, 3157184 gradients, 8.9 GFLOPs
  s: [0.33, 0.50, 1024] # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients, 28.8 GFLOPs
  m: [0.67, 0.75, 768] # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients, 79.3 GFLOPs
  l: [1.00, 1.00, 512] # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs
  x: [1.00, 1.25, 512] # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs

# YOLOv8.0n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 3, C2f_SCConv, [128, True]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 6, C2f_SCConv, [256, True]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 6, C2f_SCConv, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 3, C2f_SCConv, [1024, True]]
  - [-1, 1, SPPF, [1024, 5]] # 9

# YOLOv8.0n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 3, C2f, [512]] # 12

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 3, C2f, [256]] # 15 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 12], 1, Concat, [1]] # cat head P4
  - [-1, 3, C2f, [512]] # 18 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 9], 1, Concat, [1]] # cat head P5
  - [-1, 3, C2f, [1024]] # 21 (P5/32-large)

  - [[15, 18, 21], 1, Detect, [nc]] # Detect(P3, P4, P5)
```

Set nc to the number of classes in your own dataset.
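Because of the scales block, the channel numbers written in the backbone rows are not the widths C2f_SCConv actually receives. The sketch below lists the scaled repeats and channels of the four C2f_SCConv rows for each scale. It also checks whether the resulting hidden width satisfies the shape constraints that SCConv's default arguments impose. The helper names are hypothetical. The scaling rules are assumed to match ultralytics' parse_model: max(round(n·depth), 1) for repeats, and width-scaled channels rounded up to a multiple of 8. This is mainly useful if you change the channel numbers or the SCConv defaults.

```python
import math

# [depth, width, max_channels] copied from the scales block of yolov8SCConv.yaml
SCALES = {
    "n": (0.33, 0.25, 1024),
    "s": (0.33, 0.50, 1024),
    "m": (0.67, 0.75, 768),
    "l": (1.00, 1.00, 512),
    "x": (1.00, 1.25, 512),
}
# (repeats, channels) of the four C2f_SCConv rows in the backbone
C2F_SCCONV_ROWS = [(3, 128), (6, 256), (6, 512), (3, 1024)]


def make_divisible(x, divisor=8):
    return math.ceil(x / divisor) * divisor


def scconv_ok(c, group_num=4, alpha=0.5, squeeze_radio=2, group_size=2):
    """Shape constraints of SCConv(c) under the core code's default arguments."""
    up_s = int(alpha * c) // squeeze_radio  # input channels of GWC
    return (c % group_num == 0                       # nn.GroupNorm in SRU
            and c % 2 == 0                           # SRU.reconstruct splits channels in half
            and up_s > 0 and up_s % group_size == 0  # GWC groups must divide its input channels
            and c % group_size == 0)                 # ... and its output channels


def scale_rows(scale):
    depth, width, max_ch = SCALES[scale]
    rows = []
    for n, c2 in C2F_SCCONV_ROWS:
        n_scaled = max(round(n * depth), 1) if n > 1 else n
        c2_scaled = make_divisible(min(c2, max_ch) * width, 8)
        hidden = int(c2_scaled * 0.5)  # C2f_SCConv uses e=0.5, so each SCConv sees this width
        rows.append((n_scaled, c2_scaled, hidden, scconv_ok(hidden)))
    return rows


for s in SCALES:
    print(s, scale_rows(s))
```

For scale n, for instance, the four rows become (repeats, channels) = (1, 32), (2, 64), (2, 128), (1, 256), and every scale in this file passes the checks.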

5. Model training

```python
import warnings
warnings.filterwarnings('ignore')
from ultralytics import YOLO

if __name__ == '__main__':
    model = YOLO(r'E:\csdn\ultralytics-main\datasets\yolov8SCConv.yaml')
    model.train(data=r'E:\csdn\ultralytics-main\datasets\data.yaml',
                cache=False,
                imgsz=640,
                epochs=100,
                single_cls=False,  # whether to train as single-class detection
                batch=16,
                close_mosaic=10,
                workers=0,
                device='0',
                optimizer='SGD',
                amp=True,
                project='runs/train',
                name='exp',
                )
```

Printed model structure, showing that the model runs successfully:

6. Summary

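That concludes this post. I also recommend my YOLOv8 improvement column, which focuses on changes that give real accuracy gains. I will keep reproducing papers from the latest top conferences and adding older improvement techniques. If this article helped you, subscribe to the column and follow along for future updates.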
