Static Ranging

(1) Static ranging:

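We use a plain, clutter-free wall as the background so that the target is easy to box out. The target is a black notebook (19.2 cm × 13.2 cm), which is relatively easy to segment; any other object can be substituted. First, with the camera facing the target head-on, take several consecutive photos at regular intervals from 40 cm to 400 cm (how many is up to you). Then take some photos from the side and range those as well. The OpenCV program outputs a distance for each photo, and we compare it with the actual distance, which we measured by hand, to analyse the error.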
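The comparison between estimated and hand-measured distances can be summarised with a few lines of plain C++. This is a minimal sketch, assuming the pairs have already been collected; `RangeSample`, `ErrorStats`, and `summarizeErrors` are hypothetical names introduced here and are not part of the original program.

```cpp
#include <cmath>
#include <vector>

// One static measurement, both values in cm (hypothetical helper type).
struct RangeSample {
    double actual_cm;     // hand-measured distance, assumed > 0
    double estimated_cm;  // distance reported by the ranging program
};

struct ErrorStats {
    double mean_abs_error_cm;  // mean of |estimated - actual|
    double max_rel_error_pct;  // largest |estimated - actual| / actual, in percent
};

// Summarise absolute and relative error over a set of measurements.
ErrorStats summarizeErrors(const std::vector<RangeSample>& samples) {
    ErrorStats stats{0.0, 0.0};
    if (samples.empty()) return stats;

    double sum_abs = 0.0;
    for (const RangeSample& s : samples) {
        const double abs_err = std::fabs(s.estimated_cm - s.actual_cm);
        sum_abs += abs_err;
        const double rel_pct = 100.0 * abs_err / s.actual_cm;
        if (rel_pct > stats.max_rel_error_pct) stats.max_rel_error_pct = rel_pct;
    }
    stats.mean_abs_error_cm = sum_abs / samples.size();
    return stats;
}
```

Reporting the relative error alongside the absolute one is useful here because the test range spans 40 cm to 400 cm, so the same absolute error means very different things at the two ends.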

Static ranging works on saved photos, so it does not need the code that opens the camera.

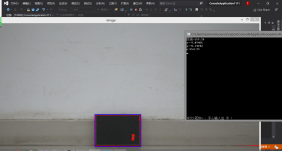

The result is shown below:

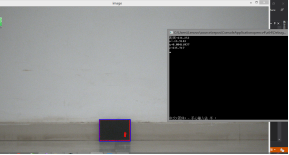

Dynamic Ranging

(2) Dynamic ranging

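Here the camera performs detection in real time, using the program listed further down. This lets us analyse how stable the measured distance is at a fixed position and how much it fluctuates while the target is moving.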

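(I use a Hikvision camera, so the first part of the ranging program drives that camera. Other cameras may need different driver code; modify it as needed.)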

The ranging program follows.

```cpp
#include "hkcamera.h"         // author's wrapper class for the Hikvision camera
#include <MvCameraControl.h>  // Hikvision camera SDK header
#include <opencv2/opencv.hpp> // pulls in core, imgproc, calib3d, highgui, etc.
#include <cmath>
#include <iostream>
#include <vector>

using namespace std;
using namespace cv;

int main()
{
    hkcamera camera;    // instantiate the camera object
    camera.cameraSet(); // set camera parameters; adjust as needed

    // Physical size of the target (black notebook), in cm
    const double width_target = 19.2;
    const double height_target = 13.2;

    // Intrinsic matrix from camera calibration
    double camD[9] = {
        1824.8, -2.4767, 755.9790,
        0, 1821.7, 539.0409,
        0, 0, 1 };
    Mat camera_matrix = Mat(3, 3, CV_64FC1, camD);

    // Distortion coefficients in OpenCV order: k1, k2, p1, p2, k3
    double distCoeffD[5] = { -0.0970, 0.5801, -0.0060, 0.00068725, 0 };
    Mat distortion_coefficients = Mat(5, 1, CV_64FC1, distCoeffD);

    // Target corners in the target's own frame (origin at its centre, z = 0),
    // ordered top-left, top-right, bottom-right, bottom-left
    const float half_x = (float)(width_target / 2.0);
    const float half_y = (float)(height_target / 2.0);
    vector<Point3f> Point3d;
    Point3d.push_back(Point3f(-half_x, -half_y, 0));
    Point3d.push_back(Point3f(half_x, -half_y, 0));
    Point3d.push_back(Point3f(half_x, half_y, 0));
    Point3d.push_back(Point3f(-half_x, half_y, 0));

    Mat pic;
    while (true)
    {
        pic = camera.frame(); // grab one frame
        // For static ranging, read a saved photo instead, e.g. pic = imread("z80-1.bmp");
        if (pic.empty()) {
            cout << "error" << endl;
            camera.cameraClose();
            return -1;
        }

        // Segment the dark target: grayscale, then inverted binary threshold
        Mat grayImage;
        cvtColor(pic, grayImage, COLOR_BGR2GRAY);
        threshold(grayImage, grayImage, 70, 255, THRESH_BINARY_INV);

        vector<vector<Point>> contours;
        findContours(grayImage, contours, RETR_TREE, CHAIN_APPROX_SIMPLE);
        drawContours(pic, contours, -1, Scalar(0, 0, 255), 2, 8);

        // Keep the contour with the largest area as the target
        vector<Point> maxAreaContour;
        double maxArea = 0;
        for (size_t i = 0; i < contours.size(); i++)
        {
            double area = fabs(contourArea(contours[i]));
            if (area > maxArea)
            {
                maxArea = area;
                maxAreaContour = contours[i];
            }
        }

        if (!maxAreaContour.empty()) // skip pose estimation when nothing was detected
        {
            Rect rect = boundingRect(maxAreaContour);
            rectangle(pic, Point(rect.x, rect.y), Point(rect.x + rect.width, rect.y + rect.height), Scalar(255, 0, 0), 2, 8);

            // Image corners of the bounding box, in the same order as Point3d.
            // rect.x / rect.y is the top-left corner, so the other corners add the full width / height.
            vector<Point2f> Points2D;
            Points2D.push_back(Point2f((float)rect.x, (float)rect.y));
            Points2D.push_back(Point2f((float)(rect.x + rect.width), (float)rect.y));
            Points2D.push_back(Point2f((float)(rect.x + rect.width), (float)(rect.y + rect.height)));
            Points2D.push_back(Point2f((float)rect.x, (float)(rect.y + rect.height)));

            Mat rot = Mat::zeros(3, 1, CV_64FC1);   // rotation vector, filled by solvePnP
            Mat trans = Mat::zeros(3, 1, CV_64FC1); // translation vector, in cm
            Mat r;                                  // 3x3 rotation matrix

            // Three solvers were tried:
            // 1) SOLVEPNP_ITERATIVE: in my tests it only solved correctly with 4 coplanar points;
            //    5 points or 4 non-coplanar points did not give a correct result.
            /*solvePnP(Point3d, Points2D, camera_matrix, distortion_coefficients, rot, trans, false, SOLVEPNP_ITERATIVE);*/
            // 2) Gao's method (SOLVEPNP_P3P): works with any four feature points, but needs exactly 4.
            //    Note: the active call passes no flag, so OpenCV falls back to its default,
            //    SOLVEPNP_ITERATIVE. Append SOLVEPNP_P3P as the last argument to select Gao's method.
            solvePnP(Point3d, Points2D, camera_matrix, distortion_coefficients, rot, trans, false);
            // 3) SOLVEPNP_EPNP: handles N points; its result deviates from the other two.
            /*solvePnP(Point3d, Points2D, camera_matrix, distortion_coefficients, rot, trans, false, SOLVEPNP_EPNP);*/

            Rodrigues(rot, r); // rotation vector -> rotation matrix

            double x = trans.at<double>(0, 0);
            double y = trans.at<double>(1, 0);
            double z = trans.at<double>(2, 0);
            double dist = sqrt(x * x + y * y + z * z); // straight-line distance to the target centre

            cout << "distance=" << dist << endl;
            //cout << "x=" << x << endl;
            //cout << "y=" << y << endl;
            cout << "z=" << z << endl;
            /*cout << "r=" << r << endl;*/
        }

        imshow("pic", pic);
        char ke = (char)waitKey(1);
        if (ke == 'q') // press q to quit
        {
            break;
        }
    }

    camera.cameraClose(); // close the camera
    return 0;
}
```
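The stability analysis mentioned for dynamic ranging can be done on the distance values the loop prints. The sketch below assumes those readings have been collected into a `std::vector<double>` (for example, by appending `dist` each frame); `StabilityStats` and `summarizeReadings` are hypothetical names, not part of the original program.

```cpp
#include <algorithm>
#include <cmath>
#include <vector>

// Summary of a run of distance readings, all in cm (hypothetical helper type).
struct StabilityStats {
    double mean;          // average reading
    double std_dev;       // population standard deviation
    double peak_to_peak;  // largest reading minus smallest reading
};

// Compute mean, standard deviation, and peak-to-peak spread of the readings.
StabilityStats summarizeReadings(const std::vector<double>& readings) {
    StabilityStats s{0.0, 0.0, 0.0};
    if (readings.empty()) return s;

    double sum = 0.0;
    for (double d : readings) sum += d;
    s.mean = sum / readings.size();

    double sq = 0.0;
    for (double d : readings) sq += (d - s.mean) * (d - s.mean);
    s.std_dev = std::sqrt(sq / readings.size());

    const auto mm = std::minmax_element(readings.begin(), readings.end());
    s.peak_to_peak = *mm.second - *mm.first;
    return s;
}
```

For a target held at a fixed position, a small standard deviation and peak-to-peak spread indicate a steady measurement; for a moving target, the same statistics over short windows show how much the reading jitters around the trend.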
