简介

ORB 是 Oriented Fast and Rotated Brief 的简称,可以用来对图像中的关键点快速创建特征向量,这些特征向量可以用来识别图像中的对象。

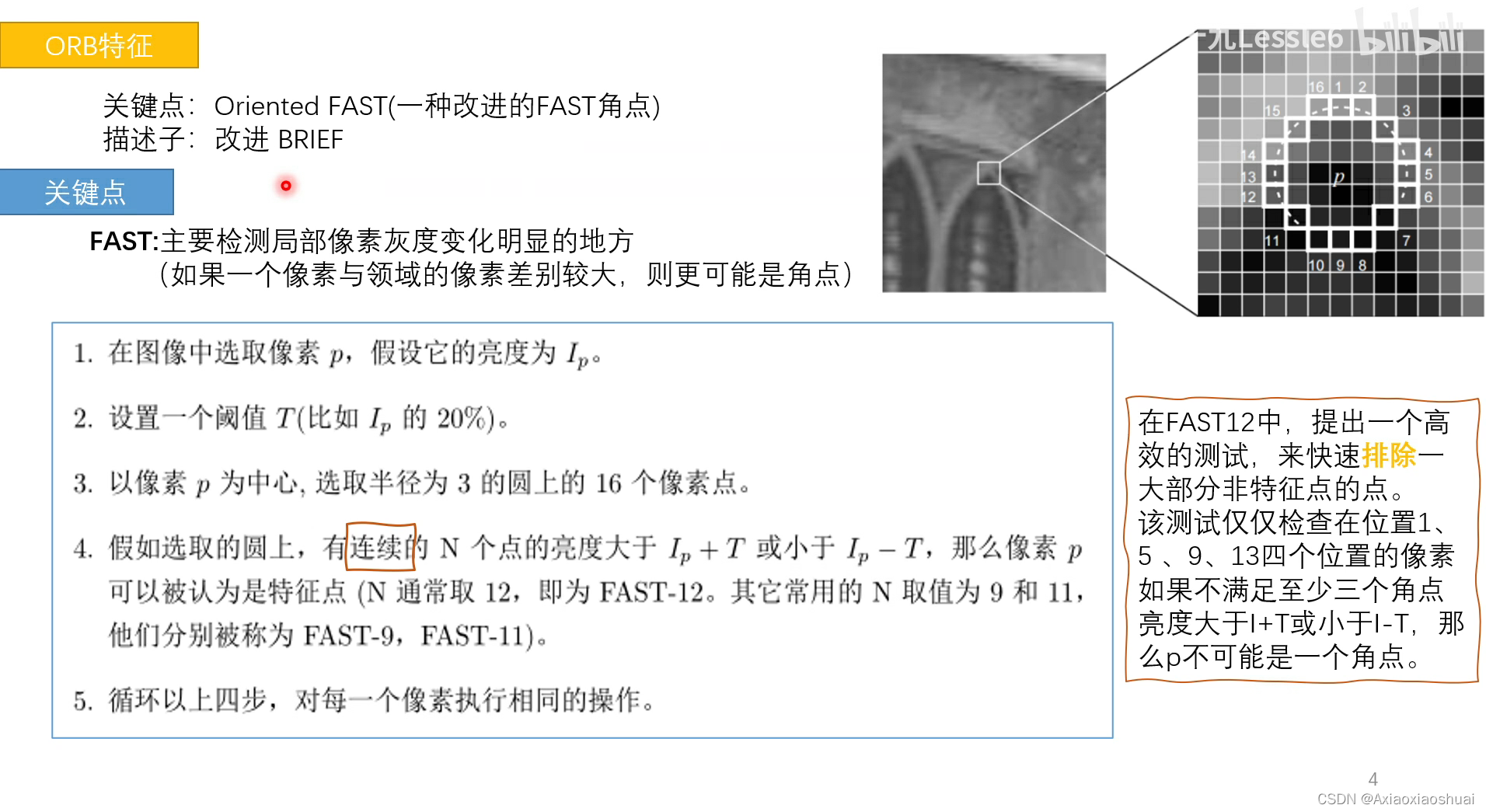

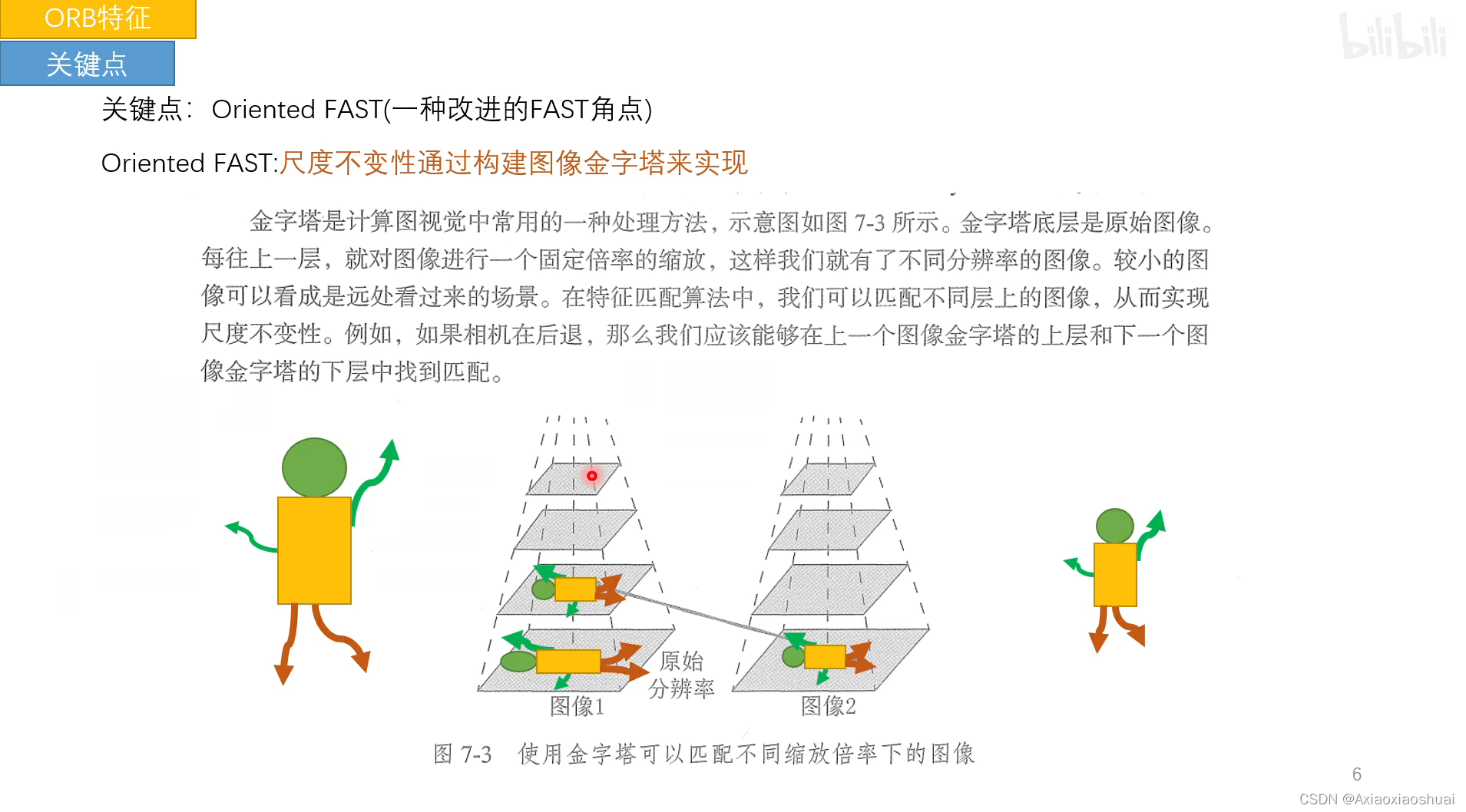

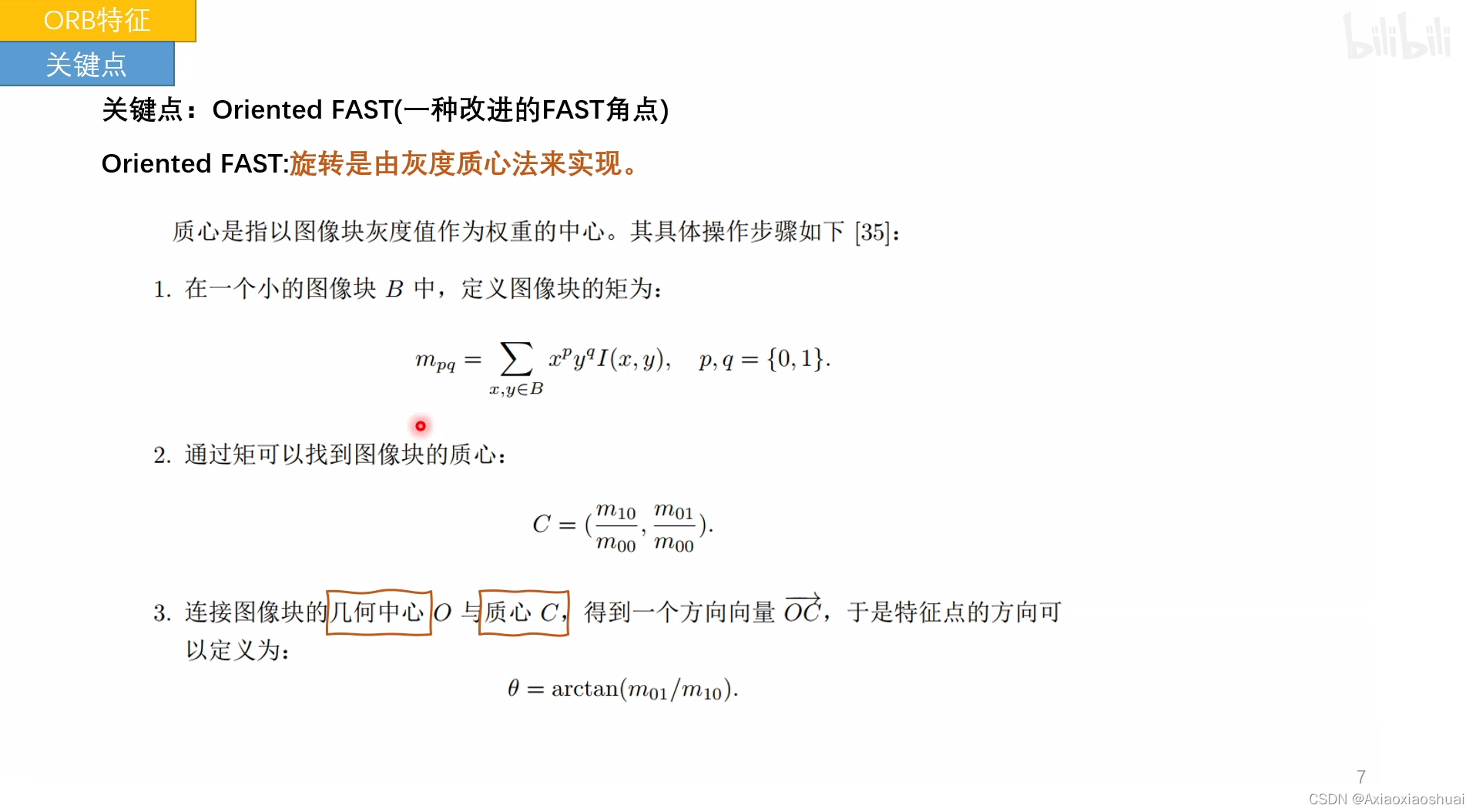

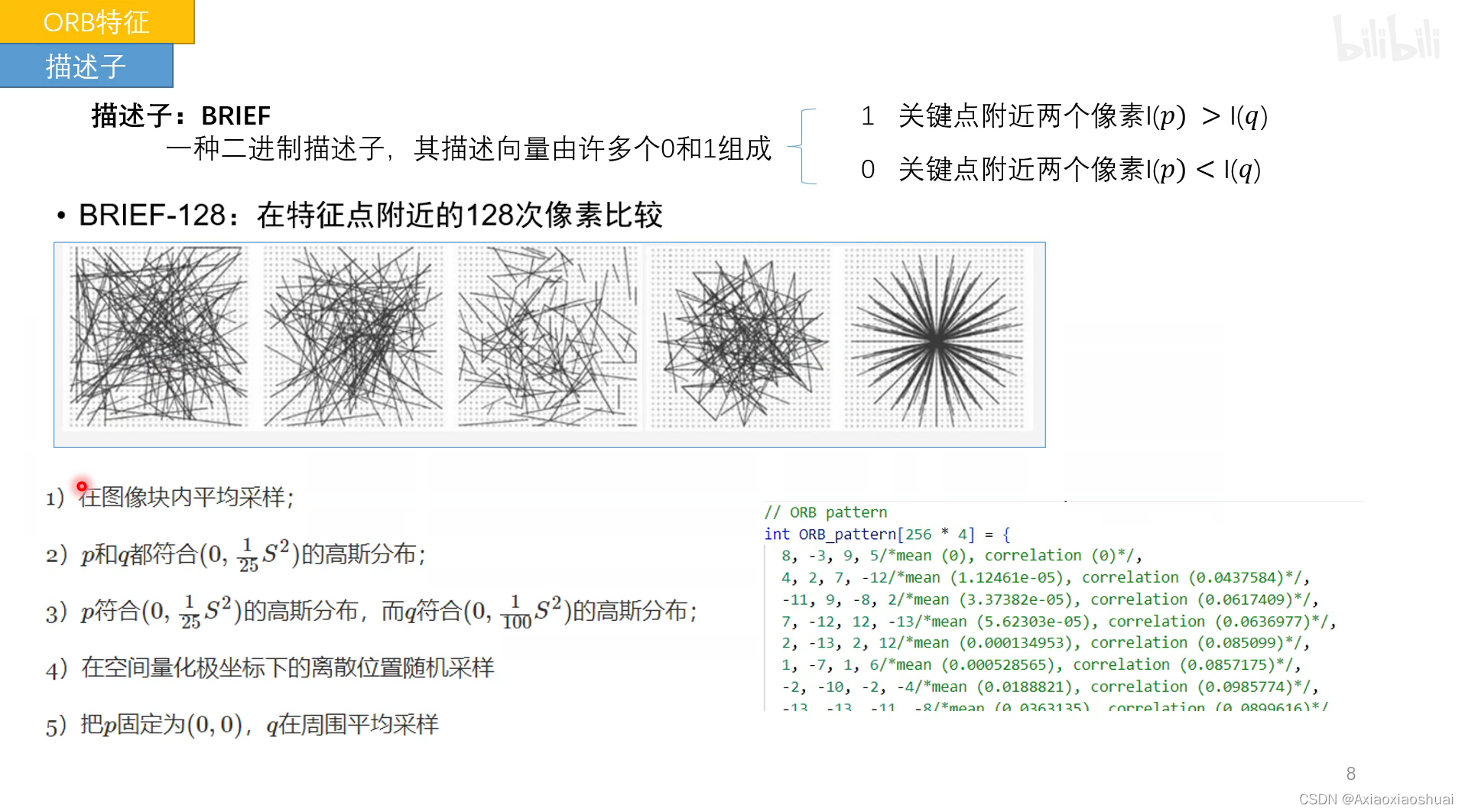

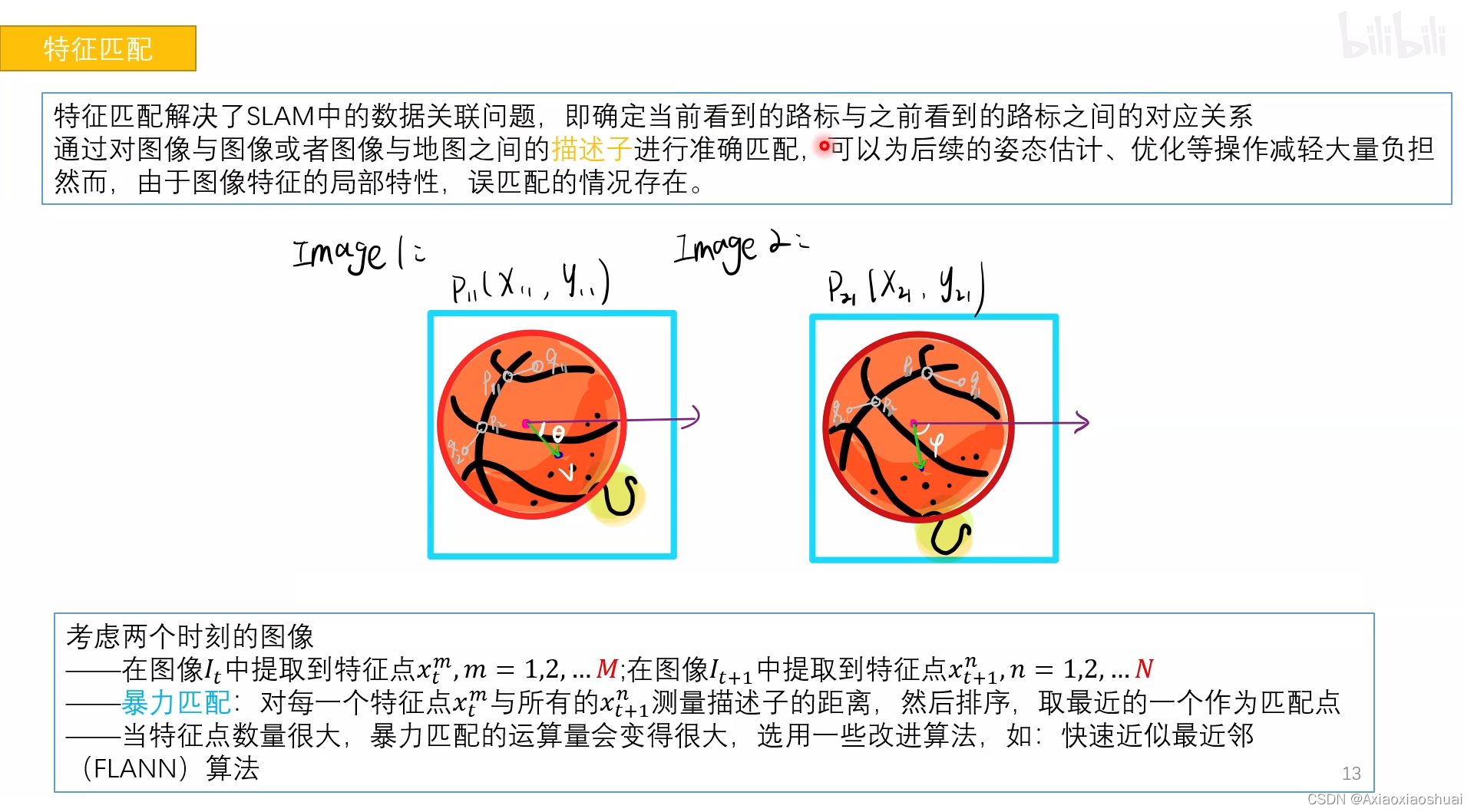

其中,Fast 和 Brief 分别是特征检测算法和向量创建算法。ORB 首先会从图像中查找特殊区域,称为关键点。关键点即图像中突出的小区域,比如角点,比如它们具有像素值急剧的从浅色变为深色的特征。然后 ORB 会为每个关键点计算相应的特征向量。ORB 算法创建的特征向量只包含 1 和 0,称为二元特征向量。1 和 0 的顺序会根据特定关键点和其周围的像素区域而变化。该向量表示关键点周围的强度模式,因此多个特征向量可以用来识别更大的区域,甚至图像中的特定对象。

算法步骤

C++代码

#include<iostream>

#include<opencv2/core/core.hpp>

#include<opencv2/opencv.hpp>

#include<opencv2/highgui/highgui.hpp>

#include <opencv2/features2d/features2d.hpp>

#include<chrono>

using namespace std;

using namespace cv;

int main()

{

//读取图像

Mat img_1 = imread("1.png", CV_LOAD_IMAGE_COLOR);

Mat img_2 = imread("2.png", CV_LOAD_IMAGE_COLOR);

assert(img_1.data != nullptr&&img_2.data != nullptr);

//初始化

std::vector<KeyPoint>keypoints_1, keypoints_2;

Mat descriptors_1, descriptors_2;

Ptr<FeatureDetector> detector = ORB::create();

Ptr<DescriptorExtractor> descriptor = ORB::create();

Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce-Hamming");

//检测Oriennted FAST 角点位置

chrono::steady_clock::time_point t1 = chrono::steady_clock::now();

detector->detect(img_1, keypoints_1);

detector->detect(img_2, keypoints_2);

//根据角点位置 计算BRIEF 描述子

descriptor->compute(img_1, keypoints_1, descriptors_1);

descriptor->compute(img_2, keypoints_2, descriptors_2);

chrono::steady_clock::time_point t2 = chrono::steady_clock::now();

chrono::duration<double>time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout << "extract ORB cost= " << time_used.count() << "seconds. " << endl;

Mat outimg1;

drawKeypoints(img_1, keypoints_1, outimg1, Scalar::all(-1), DrawMatchesFlags::DEFAULT);

//对两幅图像中的BRIEF描述子进行匹配,使用 Hamming 距离

vector<DMatch> matches;

t1 = chrono::steady_clock::now();

matcher->match(descriptors_1, descriptors_2, matches);

t2 = chrono::steady_clock::now();

time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout << "match ORB cost = " << time_used.count() << " seconds. " << endl;

//计算最大距离和最小距离,对匹配点筛选

auto min_max = minmax_element(matches.begin(), matches.end(), [](const DMatch &m1, const DMatch &m2) { return m1.distance < m2.distance; });

double min_dist = min_max.first->distance;

double max_dist = min_max.second->distance;

printf("-- Max dist : %f \n", max_dist);

printf("-- Min dist : %f \n", min_dist);

//当描述子之间的距离大于两倍的最小距离时,即认为匹配有误.但有时候最小距离会非常小,设置一个经验值30作为下限.

std::vector<DMatch> good_matches;

for (int i = 0; i < descriptors_1.rows; i++) {

if (matches[i].distance <= max(2 * min_dist, 30.0)) {

good_matches.push_back(matches[i]);

}

}

//绘制匹配结果

Mat img_match;

Mat img_goodmatch;

drawMatches(img_1, keypoints_1, img_2, keypoints_2, matches, img_match);

drawMatches(img_1, keypoints_1, img_2, keypoints_2, good_matches, img_goodmatch);

imshow("all matches", img_match);

imshow("good matches", img_goodmatch);

waitKey(0);

system("pause");

return 0;

}

python代码

import cv2 as cv

import matplotlib.pyplot as plt

img1 = cv.imread('C:/Users/dell/Desktop/mayun1.png')

img2 = cv.imread('C:/Users/dell/Desktop/mayun2.png')

img1 = cv.cvtColor(img1, cv.COLOR_RGB2GRAY)

img2 = cv.cvtColor(img2, cv.COLOR_RGB2GRAY)

orb = cv.ORB_create()

kp1, des1 = orb.detectAndCompute(img1, None)

kp2, des2 = orb.detectAndCompute(img2, None)

bf = cv.BFMatcher(cv.NORM_HAMMING, crossCheck = True)

matches = bf.match(des1, des2)

matches = sorted(matches, key = lambda x:x.distance)

img3 = cv.drawMatches(img1, kp1, img2, kp2, matches[: 20], None, flags=2)

plt.imshow(img3), plt.show()

1万+

1万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?