0. VS Code environment setup

Install the following extensions:

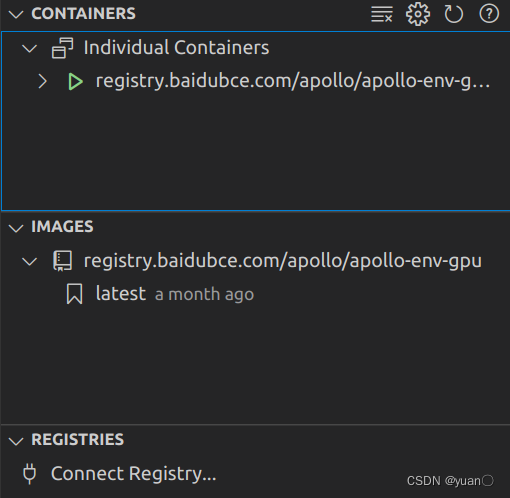

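0.1 Using Docker from VS Code

After installing Apollo, click the Docker icon in the VS Code sidebar. The left panel shows the following information.

Individual Containers lists the containers that have been created.

IMAGES lists the images.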

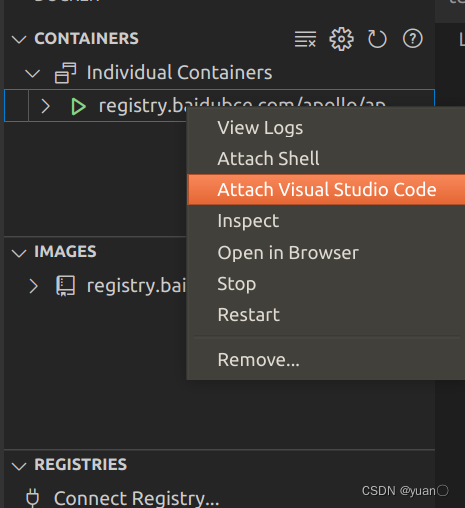

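Right-click the row with the green icon (check the container name) and, as shown in the figure, click Attach Visual Studio Code. You are then inside the Docker container.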
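The same container can also be located from a terminal. The following is a minimal sketch, assuming the docker CLI is available on the host; the function name list_apollo_containers is hypothetical, and the default filter "apollo" is only a guess at how the container is named (use the name shown in the VS Code panel).

```bash
# List running containers whose name contains a given substring.
# The default substring "apollo" is an assumption, not taken from the tutorial.
list_apollo_containers() {
    pattern="${1:-apollo}"
    docker ps --format '{{.Names}}' | grep -- "$pattern"
}
```

Once the name is known, `docker exec -it <container-name> /bin/bash` opens a shell in it, which is roughly what the Attach action does for the editor.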

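1. Apollo 8.0 package installation

PS: The steps below assume Apollo is already installed correctly and the environment and container are set up.

Just follow the official tutorial.

1.1 Extending a Cyber component

This document uses example-component to show how to extend, build, and run a simple Cyber component, giving developers a foundation for working with the Apollo platform. Building and running example-component also lets you observe and learn the Apollo build process.

The example-component source in the QuickStart project is a demo of extending Apollo with a functional component built on Cyber RT. If you are interested in how to write a component, a dag file, and a launch file, the complete example-component source is in the QuickStart project.

The example-component directory structure is as follows: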

├── BUILD
├── cyberfile.xml
├── example-components.BUILD
├── example.dag
├── example.launch
├── proto
│   ├── BUILD
│   └── examples.proto
└── src
    ├── BUILD
    ├── common_component_example.cc
    ├── common_component_example.h
    ├── timer_common_component_example.cc
    └── timer_common_component_example.h

1.1.1 Step 1: Download the quickstart project

Download the project code:

git clone https://github.com/ApolloAuto/application-demo.git

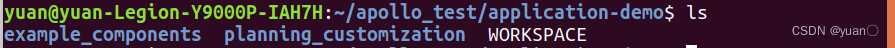

The application-demo directory contains the following:

In this section we only need example_components.

Copy that folder into the workspace directory (apollo_app).

1.1.2 Step 2: Enter the Apollo Docker environment

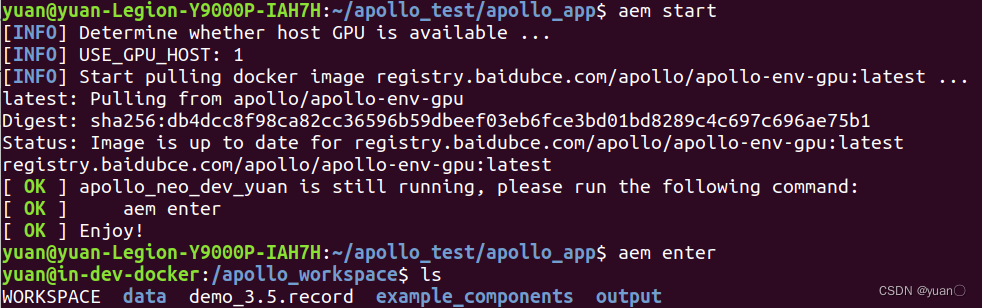

Start the container:

aem start

Enter the following command to enter Apollo:

aem enter

1.1.3 Step 3: Build the component

Build the component with the following command:

buildtool build --packages example_components

# or

buildtool build -p example_components

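- The --packages parameter specifies the path of the package to build inside the workspace; this example specifies example_components.

- The build command treats the current directory as the workspace directory, so always run it from the workspace.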
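Because the build depends on the current directory, a small guard can catch the "wrong directory" mistake before buildtool runs. This is a sketch only: build_package is a hypothetical helper, and it assumes the package directory sits directly under the workspace, as example_components does here.

```bash
# Build one package, refusing to run when the package directory is not
# found under the current directory (i.e. we are probably not in the workspace).
build_package() {
    pkg="$1"
    if [ -z "$pkg" ] || [ ! -d "./$pkg" ]; then
        echo "error: '$pkg' not found under $(pwd); cd to the workspace first" >&2
        return 1
    fi
    # Same command as in the tutorial text.
    buildtool build --packages "$pkg"
}
```

Calling `build_package example_components` from the workspace is then equivalent to the command above, and from any other directory it fails early with a readable message.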

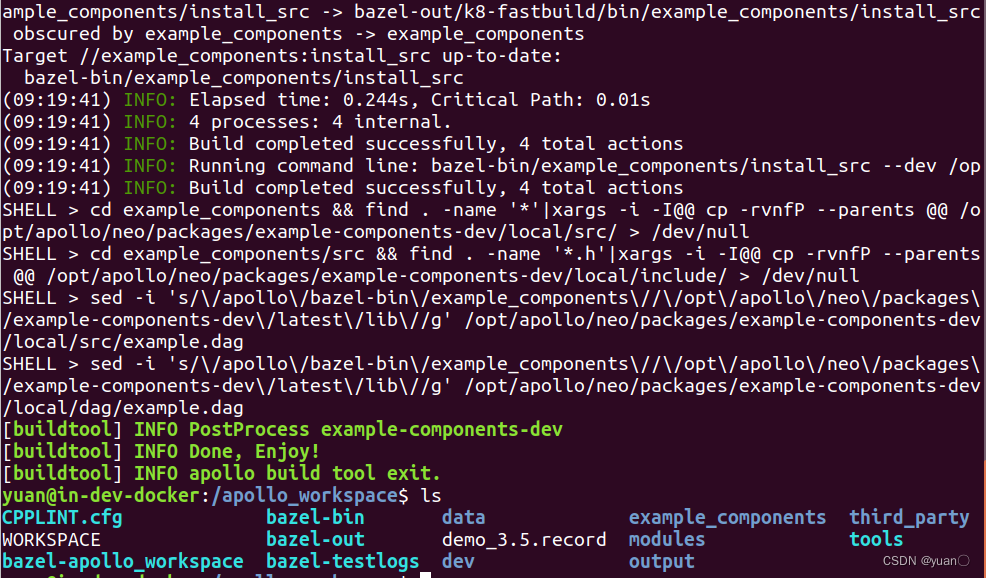

Build complete.

1.1.4 Step 4: Run the component

Run the following command:

cyber_launch start example_components/example.launch

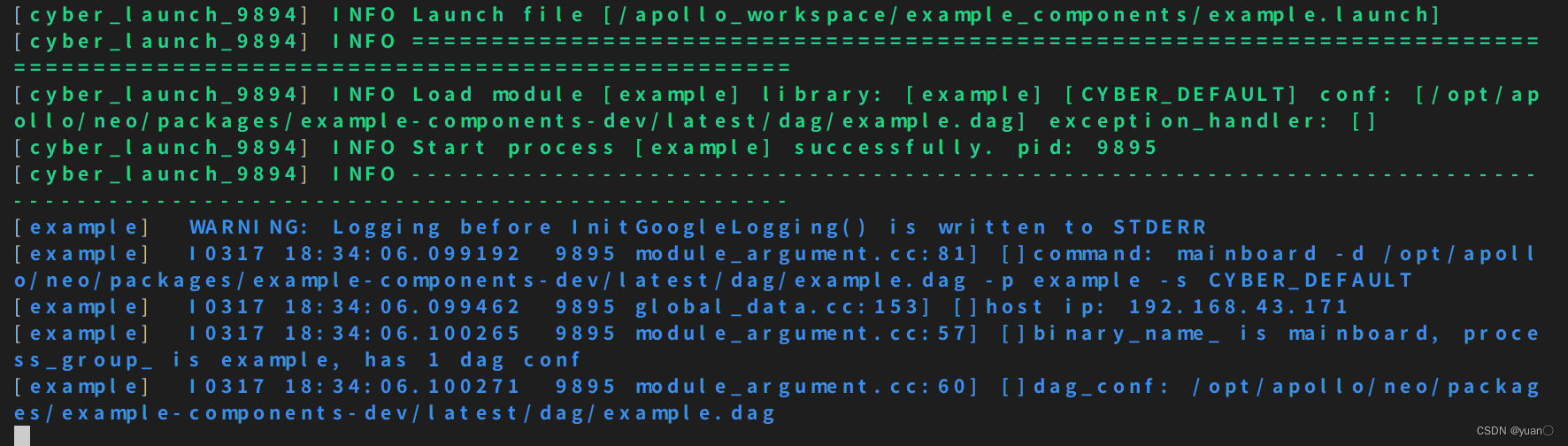

If everything works, the terminal shows the following:

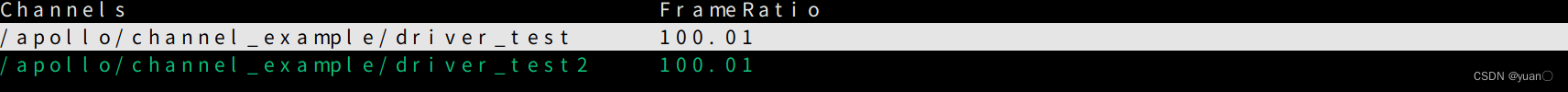

At this point you can open another terminal and run cyber_monitor to observe the data in the channels:

cyber_monitor

(counter)

common_component_example.h

#include <memory>

#include "cyber/component/component.h"

#include "examples/proto/examples.pb.h"

using apollo::cyber::Component;

using apollo::cyber::ComponentBase;

using apollo::cyber::examples::proto::Driver;

using apollo::cyber::examples::proto::Chatter;

// Two message sources, so inherit from the Component template class parameterized by Driver and Chatter

class CommonComponentSample : public Component<Driver, Chatter> {

public:

bool Init() override;

// The two parameters of Proc() are the latest messages from the two channels

bool Proc(const std::shared_ptr<Driver>& msg0,

const std::shared_ptr<Chatter>& msg1) override;

};

// Register CommonComponentSample with Cyber

CYBER_REGISTER_COMPONENT(CommonComponentSample)

common_component_example.cc

#include "examples/common_component_example/common_component_example.h"

// Called when the component is loaded

bool CommonComponentSample::Init() {

AINFO << "Commontest component init";

return true;

}

// Called when a message arrives on the primary channel, i.e. Driver

bool CommonComponentSample::Proc(const std::shared_ptr<Driver>& msg0,

const std::shared_ptr<Chatter>& msg1) {

// Format and print the sequence numbers of the two messages

AINFO << "Start common component Proc [" << msg0->msg_id() << "] ["

<< msg1->seq() << "]";

return true;

}

1.2 Perception LiDAR functional test

Step 1: Start and enter the Apollo Docker environment

Create the workspace:

mkdir apollo_v8.0

cd apollo_v8.0

Enter the following command to start the container environment in GPU mode:

aem start_gpu

Enter the following command to enter the container:

aem enter

Initialize the workspace:

aem init

Step 2: Download the record data package

Enter the following command to download the data package:

wget https://apollo-system.bj.bcebos.com/dataset/6.0_edu/sensor_rgb.tar.xz

Create the directory and extract the downloaded package into it:

sudo mkdir -p ./data/bag/

sudo tar -xzvf sensor_rgb.tar.xz -C ./data/bag/
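The archive name ends in .tar.xz while the command above passes -z (gzip). If tar reports a format error, one option is to let tar detect the compression itself. The sketch below assumes GNU tar, which detects the format when reading from a file; extract_archive is a hypothetical helper, and it omits sudo, so add it back if the target directory requires it.

```bash
# Extract an archive into a destination directory, letting tar detect the
# compression format from the file contents instead of forcing -z.
extract_archive() {
    archive="$1"
    dest="$2"
    if [ ! -f "$archive" ]; then
        echo "error: $archive not found" >&2
        return 1
    fi
    mkdir -p "$dest" && tar -xf "$archive" -C "$dest"
}
```

For this tutorial the call would be `extract_archive sensor_rgb.tar.xz ./data/bag/`.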

Step 3: Install DreamView

In the same terminal, enter the following command to install the DreamView program.

buildtool install --legacy dreamview-dev monitor-dev

Step 4: Install transform, perception, and localization

In the same terminal, enter the following command to install the perception program.

buildtool install --legacy perception-dev

Note: If you need to modify the perception package, install perception with the command below instead.

buildtool install perception-dev

With the command above, the perception source is downloaded into modules in the workspace. After modifying the source, build it with the command below.

buildtool build --gpu --packages modules/perception

Enter the following command to install the localization, v2x, and transform programs.

buildtool install --legacy localization-dev v2x-dev transform-dev

Step 5: Run the modules

In the same terminal, enter the following command to start Apollo's DreamView program.

aem bootstrap start

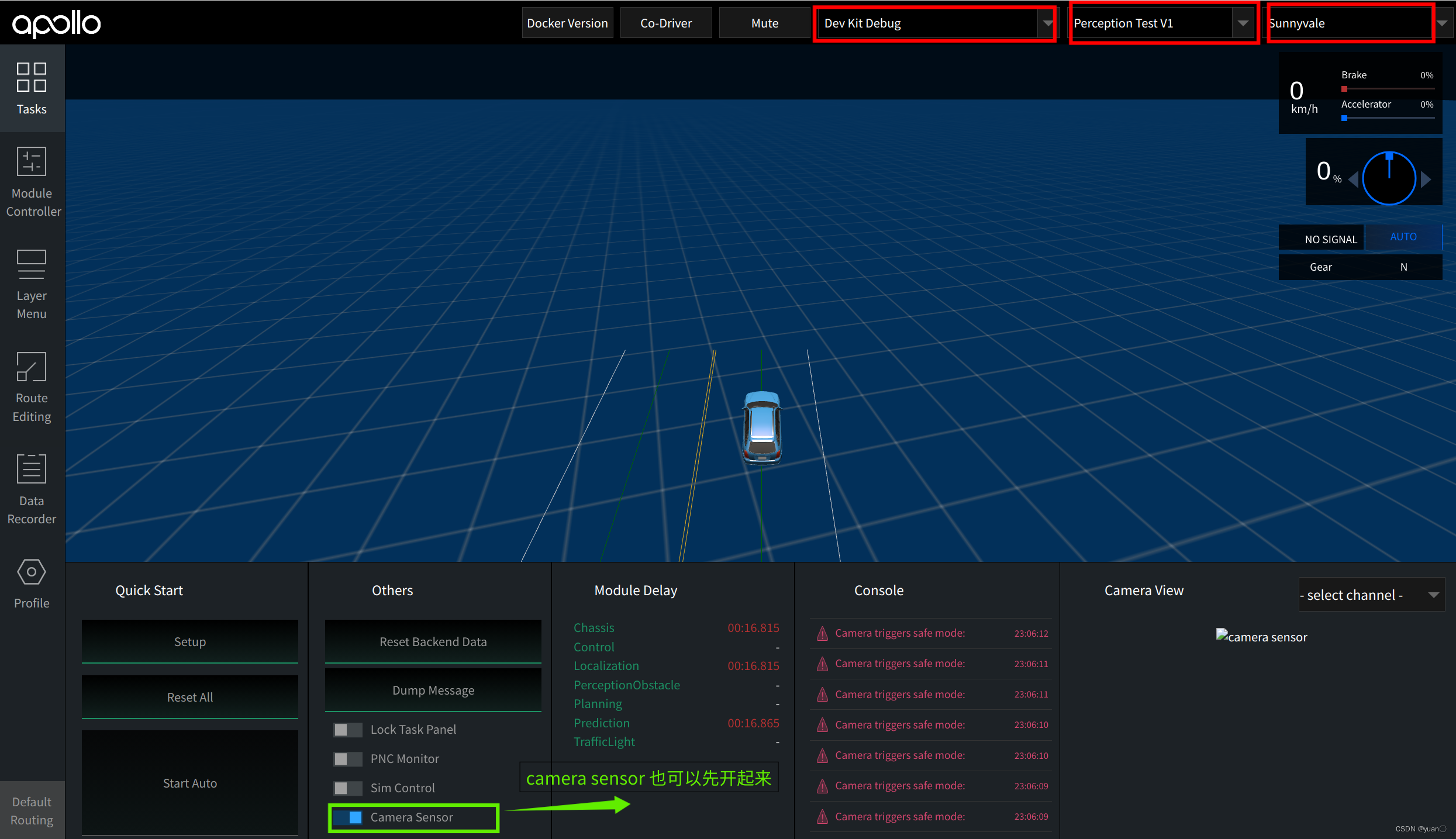

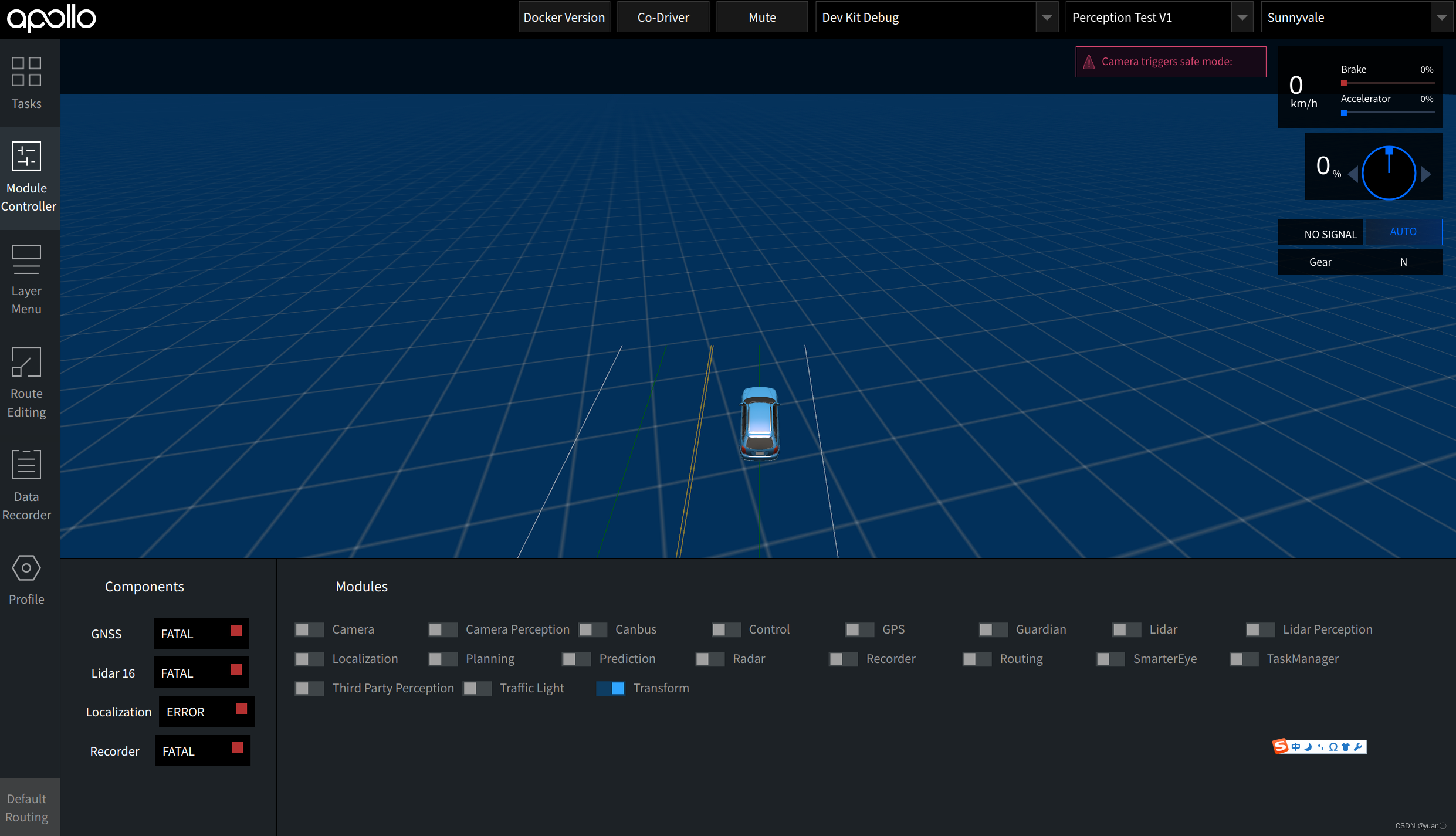

Open a browser and go to localhost:8888. When the DreamView page appears, select the correct mode, vehicle, and map.
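If the page does not load right away, DreamView may still be starting. A small polling loop can confirm that something is answering on the port before you open the browser. This is a sketch that assumes curl is installed in the environment; wait_for_url is a hypothetical helper and only checks that an HTTP response comes back, not that DreamView is fully ready.

```bash
# Poll a URL until it answers or the attempts run out.
# $1 = URL, $2 = number of attempts (default 10), $3 = seconds between attempts (default 1).
wait_for_url() {
    url="$1"
    tries="${2:-10}"
    delay="${3:-1}"
    i=0
    while [ "$i" -lt "$tries" ]; do
        # curl exits non-zero when the connection is refused.
        if curl -s -o /dev/null "$url"; then
            return 0
        fi
        i=$((i + 1))
        sleep "$delay"
    done
    return 1
}
```

For example, `wait_for_url http://localhost:8888 30 1 && echo "DreamView is answering"`.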

Click Module Controller in the left sidebar and start the transform module:

Start the LiDAR module with mainboard:

mainboard -d /apollo/modules/perception/production/dag/dag_streaming_perception_lidar.dag

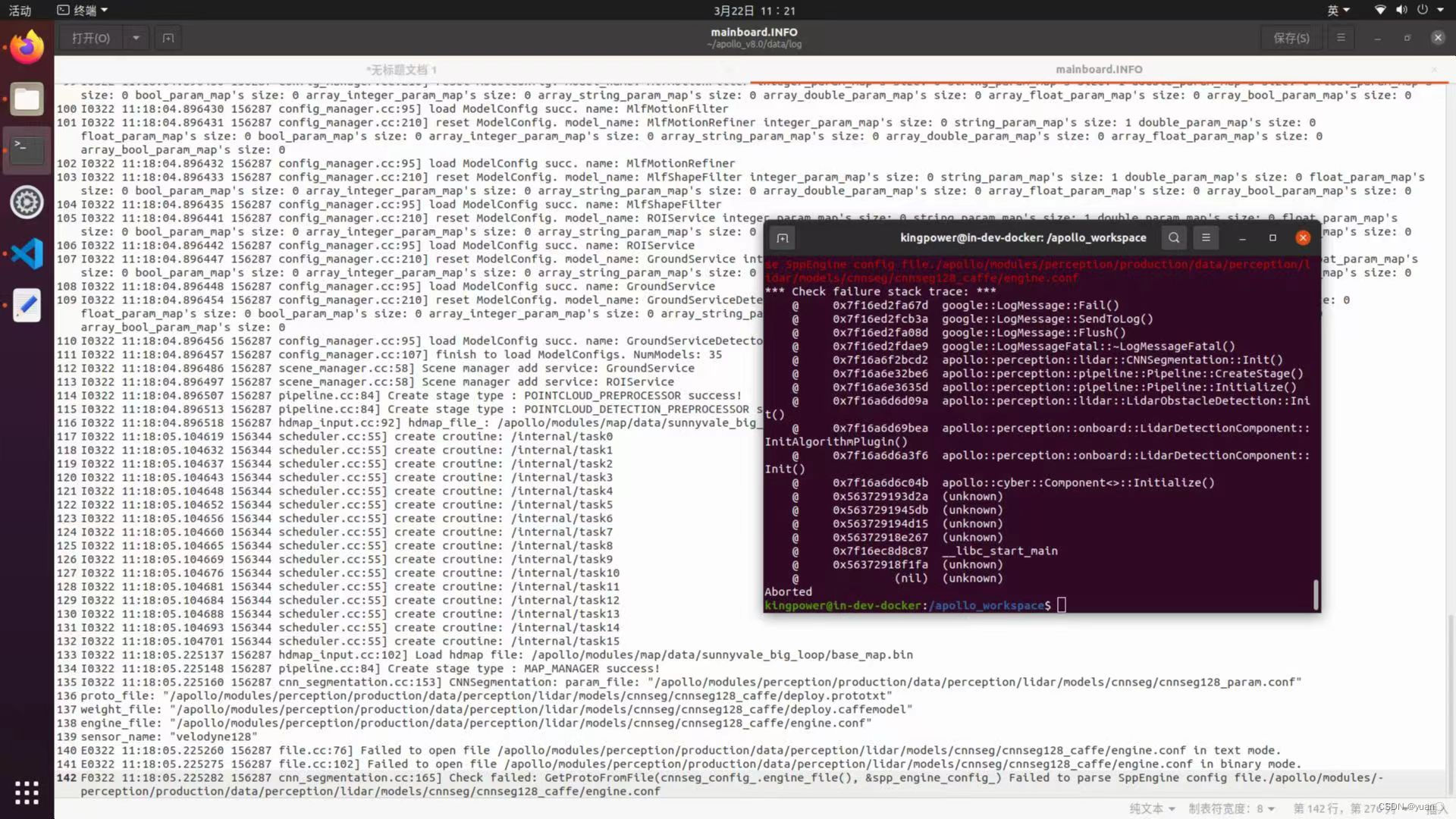

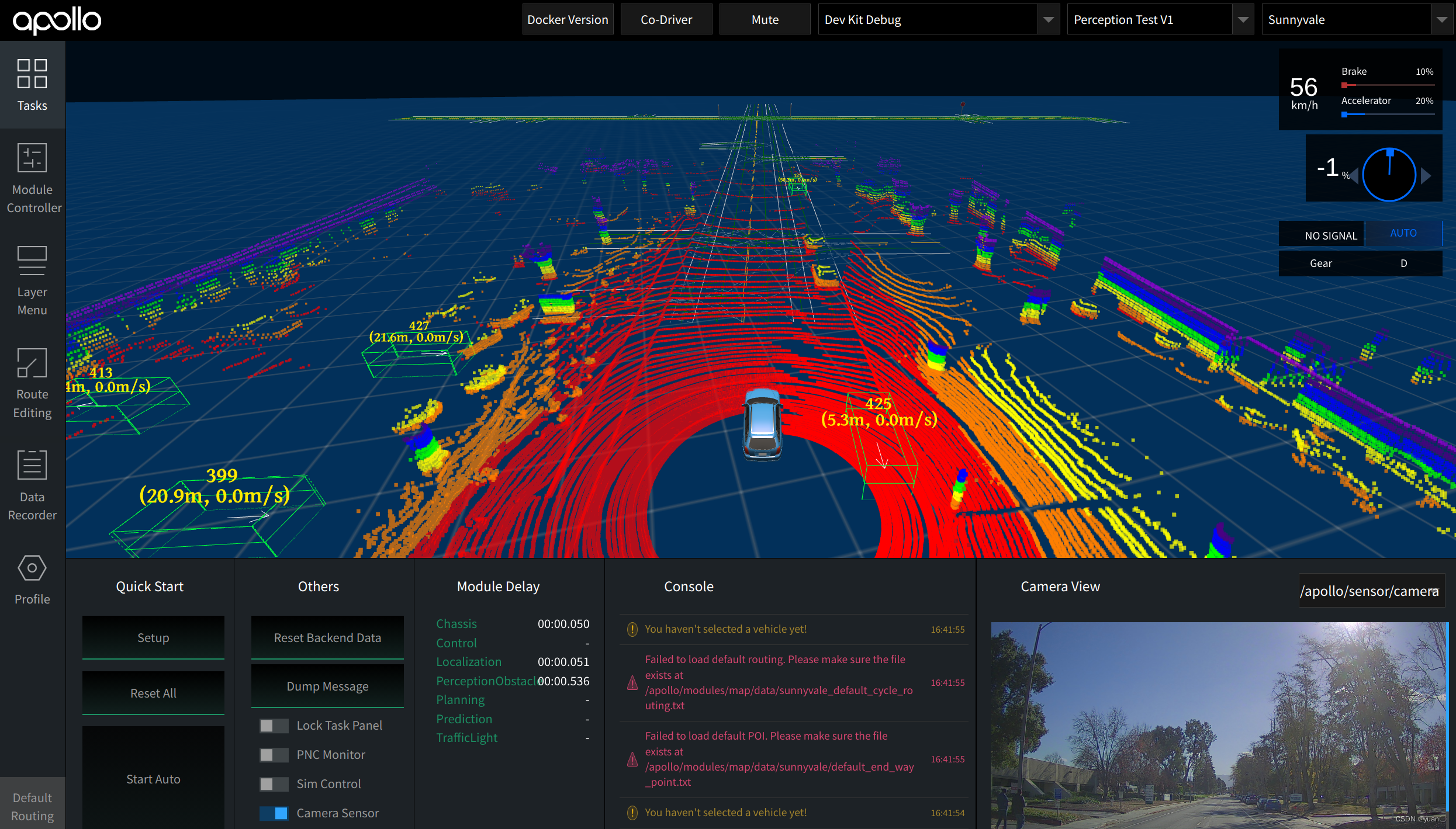

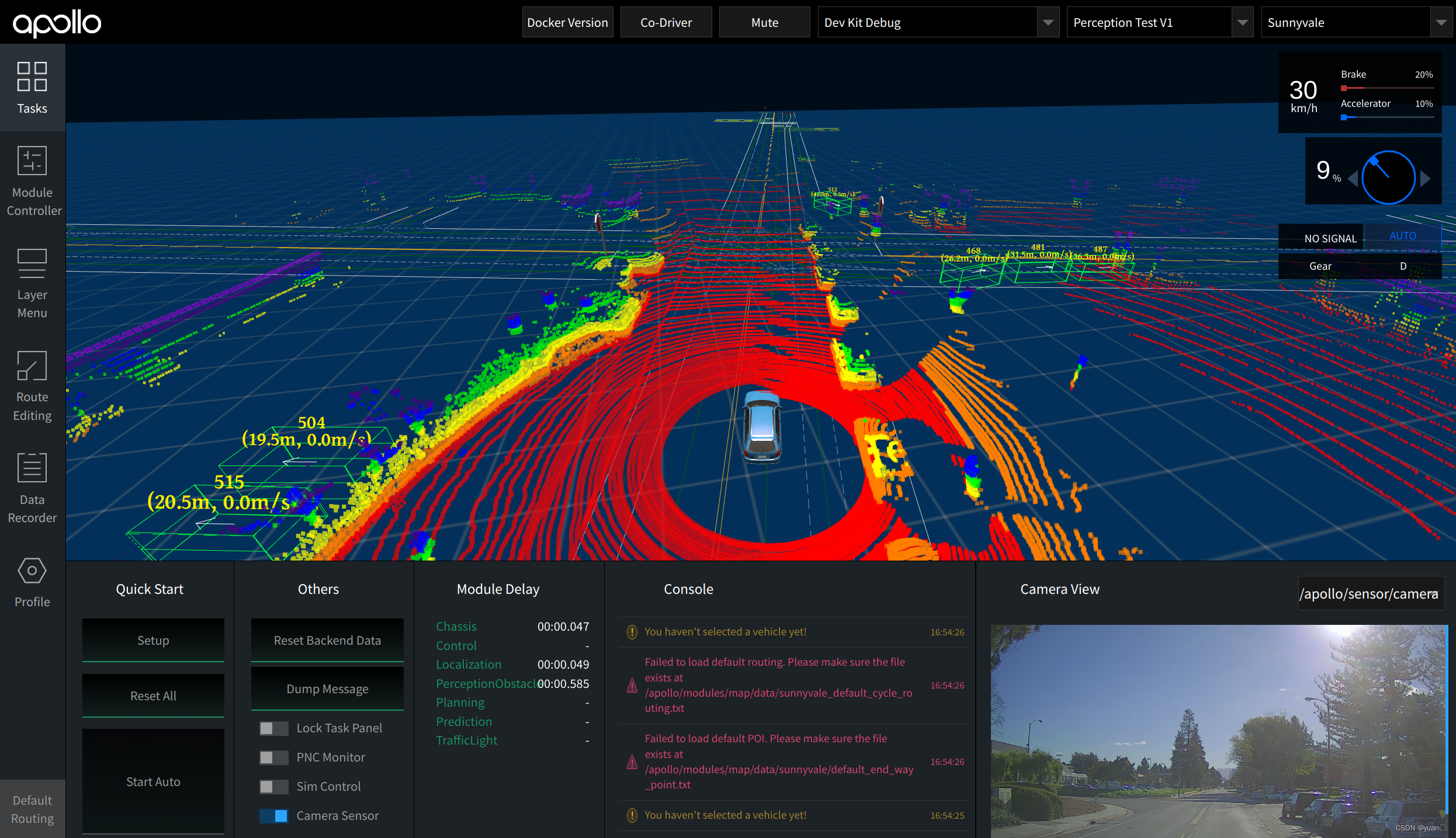

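After a successful start, the output looks like the figure:

Step 6: Verify the results

Play the data package. The -k option is needed to filter out the perception channel data already contained in the package.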

cyber_recorder play -f ./data/bag/sensor_rgb.record -k /perception/vehicle/obstacles /apollo/perception/obstacles /apollo/perception/traffic_light /apollo/prediction
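The long channel list after -k is easy to mistype. One way to keep it readable is to hold the channels one per line and let the shell split them into arguments. This is a sketch: play_without_perception is a hypothetical wrapper, and the channel names are copied from the command above.

```bash
# Play a record while excluding the perception-related channels listed below.
play_without_perception() {
    record="$1"
    excluded="
/perception/vehicle/obstacles
/apollo/perception/obstacles
/apollo/perception/traffic_light
/apollo/prediction
"
    # $excluded is left unquoted on purpose: word splitting turns the
    # newline-separated list into separate arguments for -k.
    # shellcheck disable=SC2086
    cyber_recorder play -f "$record" -k $excluded
}
```

Usage: `play_without_perception ./data/bag/sensor_rgb.record`. Adding or removing a channel then means editing one line of the list.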

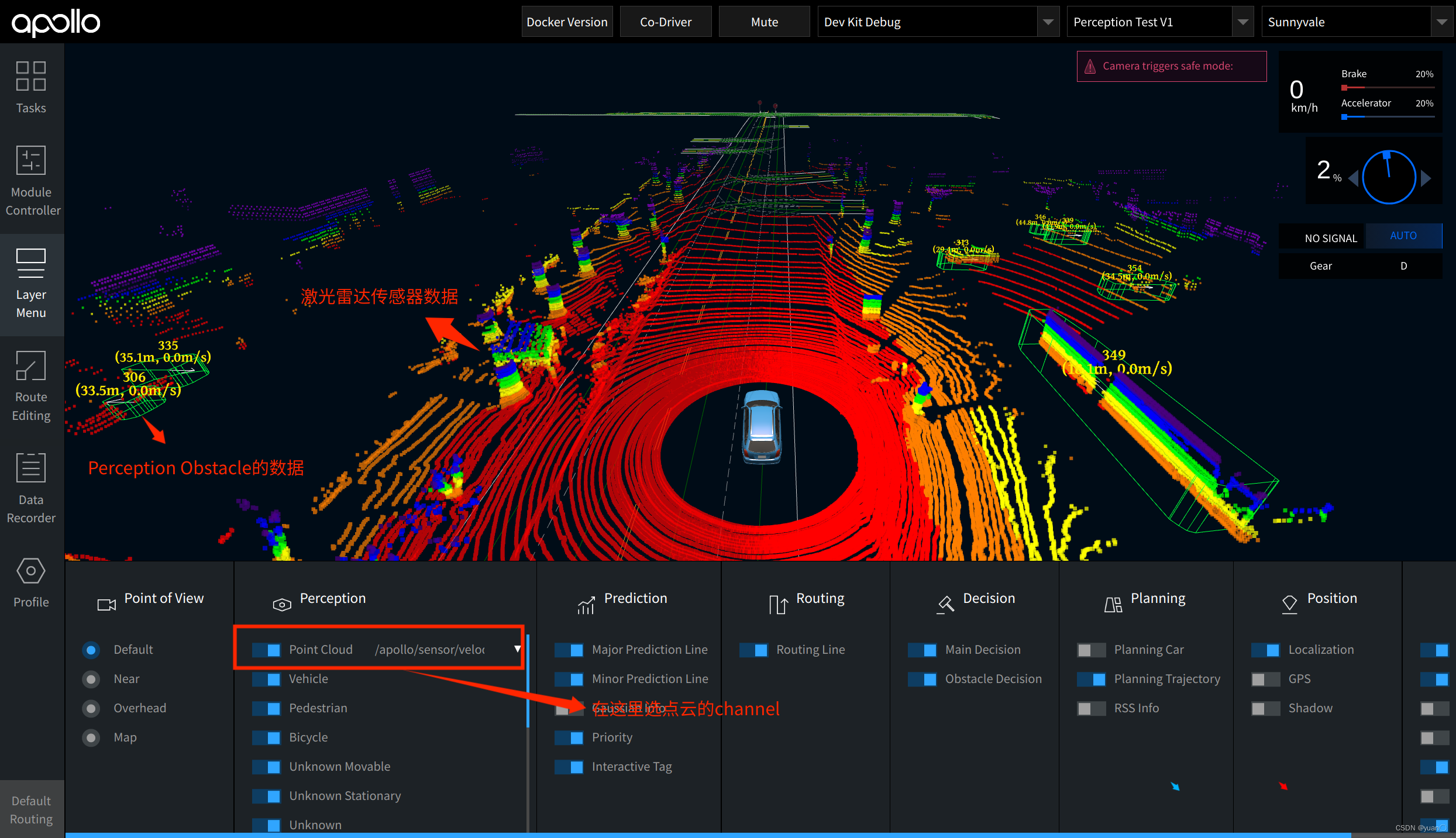

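Verify the detection results: view the perception results in DreamView. Open Layer Menu in the DreamView left toolbar, turn on Point Cloud under Perception, and select the corresponding channel to view the point cloud data. Check whether the 3D detection results match the LiDAR sensor data.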

CNN-SEG

PS:

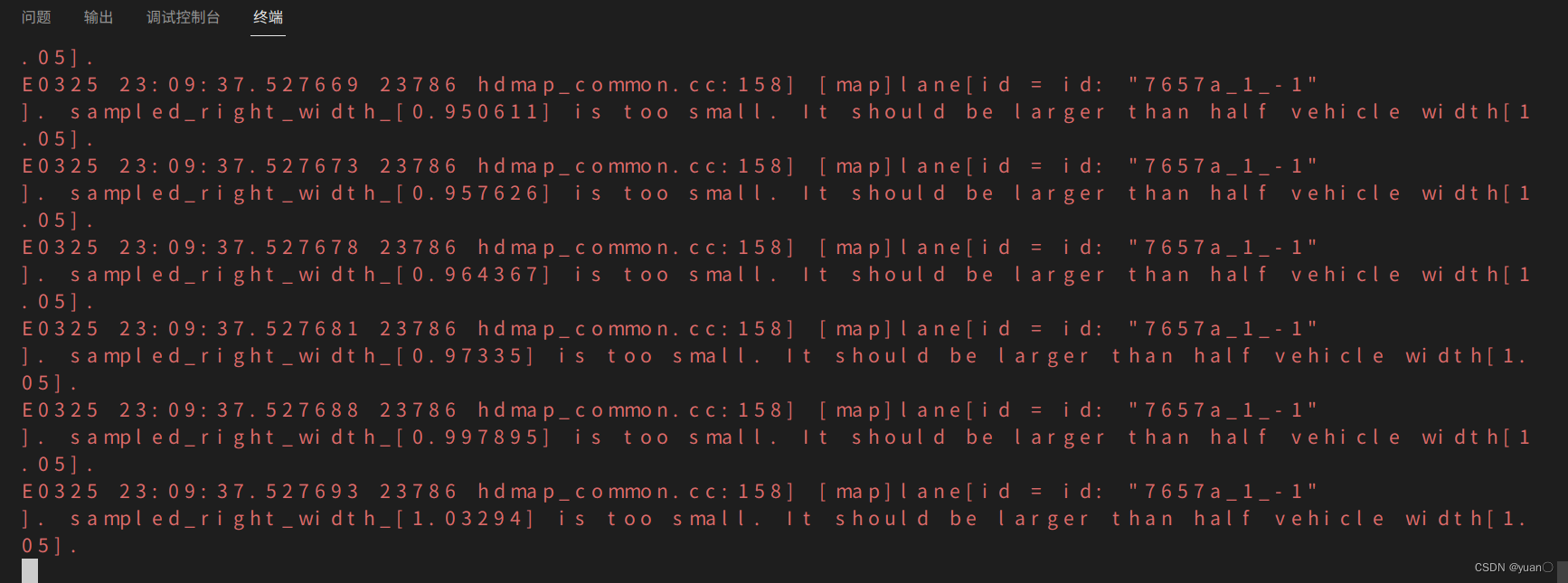

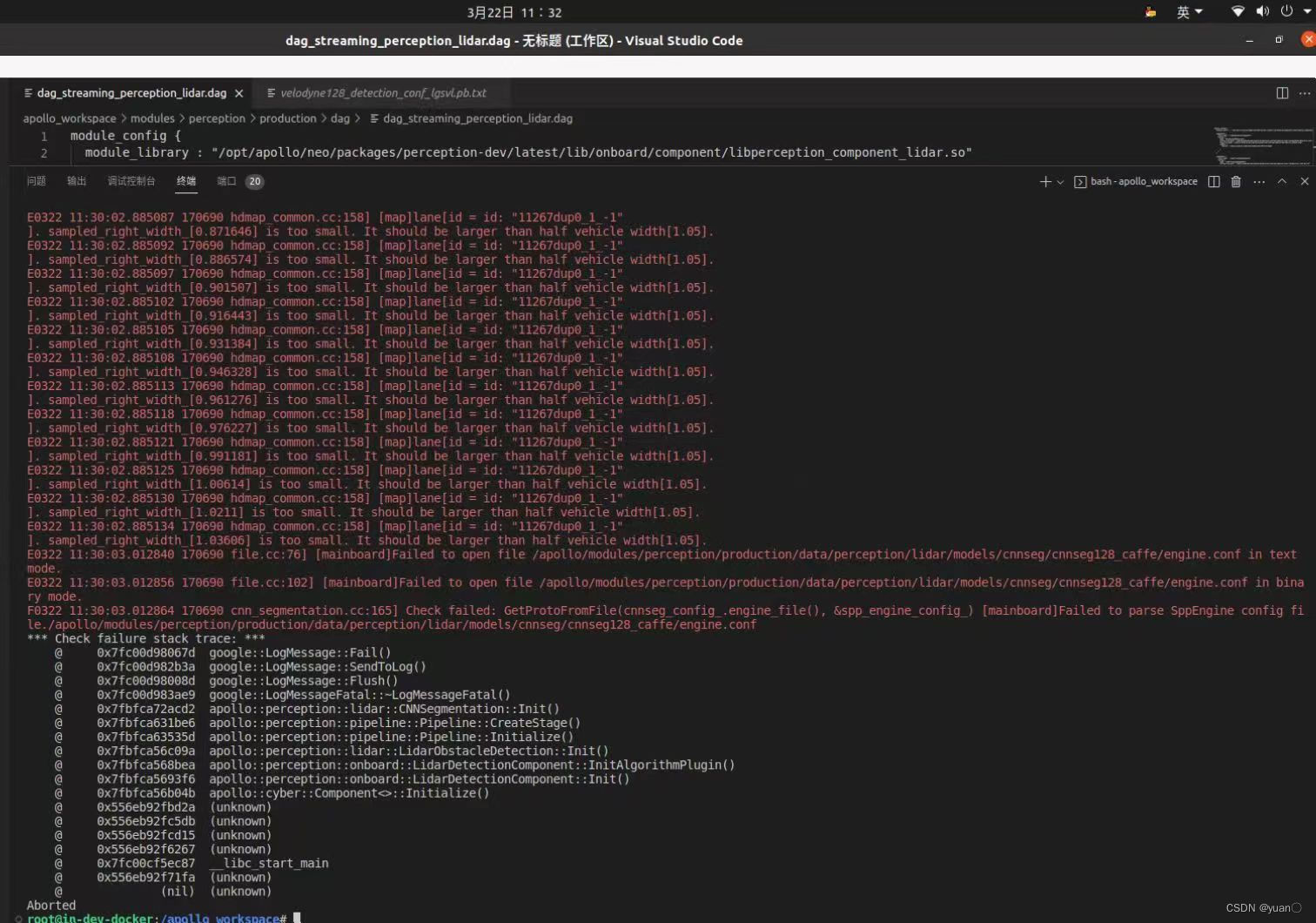

When first following the tutorial, you may run into the following problem:

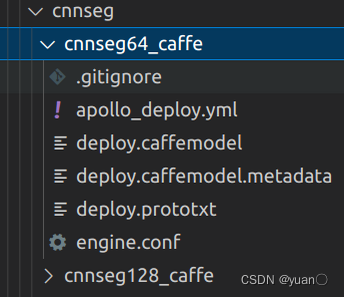

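The log shows that engine.conf cannot be found, which suggests the cnnseg128_caffe model files are missing. Checking the cnnseg model configuration files confirmed that they were indeed absent.

Solutions: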

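- Check whether another model can be substituted, and try swapping it in. (For example, if cnnseg128_caffe has no model files, see whether cnnseg64_caffe does; if so, use it instead.) Remember to update the corresponding settings in apollo/modules/perception/pipeline/config/lidar_detection_pipeline.pb.txt.

- I have shared the model files on Baidu Netdisk. Find the files under the matching path, download them, and update the corresponding settings in apollo/modules/perception/pipeline/config/lidar_detection_pipeline.pb.txt. Link: https://pan.baidu.com/s/1MI3A-tchDn8h3Pm9SenB6w?pwd=vrp7 Extraction code: vrp7

- Follow the tutorials below to substitute your own model files:

1. How to add a new lidar detection algorithm

2. Lidar training to deployment quick start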
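Whichever option you choose, the pipeline config has to point at the model you actually have. A quick way to find the lines to edit is to search the config for the model name. This is a sketch: find_model_refs is a hypothetical helper, and the search string is an assumption, since the tutorial does not show the config file contents.

```bash
# Print the lines (with line numbers) of a config file that mention a model name.
find_model_refs() {
    config="$1"
    model="$2"
    if [ ! -f "$config" ]; then
        echo "error: $config not found" >&2
        return 1
    fi
    grep -n -- "$model" "$config"
}
```

For example, `find_model_refs apollo/modules/perception/pipeline/config/lidar_detection_pipeline.pb.txt cnnseg` lists candidate lines, if the config refers to the model by that name.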

point_pillars

The error is as follows:

terminate called after throwing an instance of 'c10::Error'

what(): open file failed, file path: /apollo/modules/perception/production/data/perception/lidar/models/detection/point_pillars_torch/pts_voxel_encoder.zip

Exception raised from FileAdapter at ../caffe2/serialize/file_adapter.cc:11 (most recent call first):

frame #0: c10::Error::Error(c10::SourceLocation, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> >) + 0x6b (0x7f63c470924b in /opt/apollo/neo/lib/3rd-libtorch-cpu-dev/libc10.so)

frame #1: caffe2::serialize::FileAdapter::FileAdapter(std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&) + 0x2e0 (0x7f63bedeac80 in /opt/apollo/neo/lib/3rd-libtorch-cpu-dev/libtorch_cpu.so)

frame #2: torch::jit::load(std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&, c10::optional<c10::Device>, std::unordered_map<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> >, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> >, std::hash<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > >, std::equal_to<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > >, std::allocator<std::pair<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > > > >&) + 0x40 (0x7f63c00b1030 in /opt/apollo/neo/lib/3rd-libtorch-cpu-dev/libtorch_cpu.so)

frame #3: apollo::perception::lidar::PointPillars::InitTorch() + 0xa2 (0x7f64aee4cc5c in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #4: apollo::perception::lidar::PointPillars::PointPillars(bool, float, float, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&) + 0x3e8 (0x7f64aee4a7c2 in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #5: apollo::perception::lidar::PointPillarsDetection::Init(apollo::perception::pipeline::StageConfig const&) + 0xe5 (0x7f64aee448d9 in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #6: apollo::perception::pipeline::Pipeline::CreateStage(apollo::perception::pipeline::StageType const&) + 0x6be (0x7f64aebf8ed0 in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #7: apollo::perception::pipeline::Pipeline::Initialize(apollo::perception::pipeline::PipelineConfig const&) + 0x3bf (0x7f64aebf7d51 in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #8: apollo::perception::lidar::LidarObstacleDetection::Init(apollo::perception::pipeline::PipelineConfig const&) + 0x129 (0x7f64aea15387 in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #9: apollo::perception::onboard::LidarDetectionComponent::InitAlgorithmPlugin() + 0x1b5 (0x7f64ae95dd59 in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #10: apollo::perception::onboard::LidarDetectionComponent::Init() + 0x510 (0x7f64ae95d6d2 in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #11: apollo::cyber::Component<apollo::drivers::PointCloud, apollo::cyber::NullType, apollo::cyber::NullType, apollo::cyber::NullType>::Initialize(apollo::cyber::proto::ComponentConfig const&) + 0x1b3 (0x7f64ae9a7603 in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #12: <unknown function> + 0x11dd2a (0x561e50103d2a in mainboard)

frame #13: <unknown function> + 0x11e5db (0x561e501045db in mainboard)

frame #14: <unknown function> + 0x11ed15 (0x561e50104d15 in mainboard)

frame #15: <unknown function> + 0x118267 (0x561e500fe267 in mainboard)

frame #16: __libc_start_main + 0xe7 (0x7f64f9cf3c87 in /lib/x86_64-linux-gnu/libc.so.6)

frame #17: <unknown function> + 0x1191fa (0x561e500ff1fa in mainboard)

Rename the point_pillars folder to point_pillars_torch and run again. It now succeeds.
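The fix comes down to a mismatch between the directory name the loader expects and the one on disk. The check can be sketched as a helper that prints the rename instead of performing it. suggest_model_rename is a hypothetical name, and the helper assumes the only difference is a missing suffix such as _torch.

```bash
# If the directory the loader expects is missing but a sibling without the
# given suffix exists, print the rename that would line them up.
suggest_model_rename() {
    expected="${1%/}"     # e.g. .../models/detection/point_pillars_torch
    suffix="$2"           # e.g. _torch
    candidate=${expected%"$suffix"}
    if [ -d "$expected" ]; then
        echo "ok: $expected exists"
    elif [ "$candidate" != "$expected" ] && [ -d "$candidate" ]; then
        echo "mv $candidate $expected"
    else
        echo "missing: neither $expected nor a sibling without '$suffix' exists"
        return 1
    fi
}
```

Run against the path from the error message, for example `suggest_model_rename /apollo/modules/perception/production/data/perception/lidar/models/detection/point_pillars_torch _torch`, and review the printed mv command before running it yourself.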

mask-pillars error

Same problem, same fix.

terminate called after throwing an instance of 'c10::Error'

what(): open file failed, file path: /apollo/modules/perception/production/data/perception/lidar/models/detection/mask_pillars_torch/pts_voxel_encoder.zip

Exception raised from FileAdapter at ../caffe2/serialize/file_adapter.cc:11 (most recent call first):

frame #0: c10::Error::Error(c10::SourceLocation, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> >) + 0x6b (0x7fe02160224b in /opt/apollo/neo/lib/3rd-libtorch-cpu-dev/libc10.so)

frame #1: caffe2::serialize::FileAdapter::FileAdapter(std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&) + 0x2e0 (0x7fe01bce3c80 in /opt/apollo/neo/lib/3rd-libtorch-cpu-dev/libtorch_cpu.so)

frame #2: torch::jit::load(std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&, c10::optional<c10::Device>, std::unordered_map<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> >, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> >, std::hash<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > >, std::equal_to<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > >, std::allocator<std::pair<std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > > > >&) + 0x40 (0x7fe01cfaa030 in /opt/apollo/neo/lib/3rd-libtorch-cpu-dev/libtorch_cpu.so)

frame #3: apollo::perception::lidar::PointPillars::InitTorch() + 0xa2 (0x7fe10ae4cc5c in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #4: apollo::perception::lidar::PointPillars::PointPillars(bool, float, float, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&) + 0x3e8 (0x7fe10ae4a7c2 in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #5: apollo::perception::lidar::MaskPillarsDetection::Init(apollo::perception::pipeline::StageConfig const&) + 0xc1 (0x7fe10ae3ea6b in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #6: apollo::perception::pipeline::Pipeline::CreateStage(apollo::perception::pipeline::StageType const&) + 0x6be (0x7fe10abf8ed0 in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #7: apollo::perception::pipeline::Pipeline::Initialize(apollo::perception::pipeline::PipelineConfig const&) + 0x3bf (0x7fe10abf7d51 in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #8: apollo::perception::lidar::LidarObstacleDetection::Init(apollo::perception::pipeline::PipelineConfig const&) + 0x129 (0x7fe10aa15387 in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #9: apollo::perception::onboard::LidarDetectionComponent::InitAlgorithmPlugin() + 0x1b5 (0x7fe10a95dd59 in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #10: apollo::perception::onboard::LidarDetectionComponent::Init() + 0x510 (0x7fe10a95d6d2 in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #11: apollo::cyber::Component<apollo::drivers::PointCloud, apollo::cyber::NullType, apollo::cyber::NullType, apollo::cyber::NullType>::Initialize(apollo::cyber::proto::ComponentConfig const&) + 0x1b3 (0x7fe10a9a7603 in /opt/apollo/neo/packages/perception-dev/latest/lib/onboard/component/libperception_component_lidar.so)

frame #12: <unknown function> + 0x11dd2a (0x5561909b6d2a in mainboard)

frame #13: <unknown function> + 0x11e5db (0x5561909b75db in mainboard)

frame #14: <unknown function> + 0x11ed15 (0x5561909b7d15 in mainboard)

frame #15: <unknown function> + 0x118267 (0x5561909b1267 in mainboard)

frame #16: __libc_start_main + 0xe7 (0x7fe156901c87 in /lib/x86_64-linux-gnu/libc.so.6)

frame #17: <unknown function> + 0x1191fa (0x5561909b21fa in mainboard)
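In both traces the only line that matters for this fix is the one naming the file that could not be opened. A short filter can pull that path out of a saved log. This is a sketch: missing_model_paths is a hypothetical helper, and it matches only the "open file failed, file path:" wording seen in the two traces above.

```bash
# Read log text on stdin and print every path reported as
# "open file failed, file path: <path>".
missing_model_paths() {
    sed -n 's/.*open file failed, file path: \(.*\)$/\1/p'
}
```

For example, save the mainboard output to a file and run `missing_model_paths < that_file`, then compare the printed directory name with what is actually on disk.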

center-point

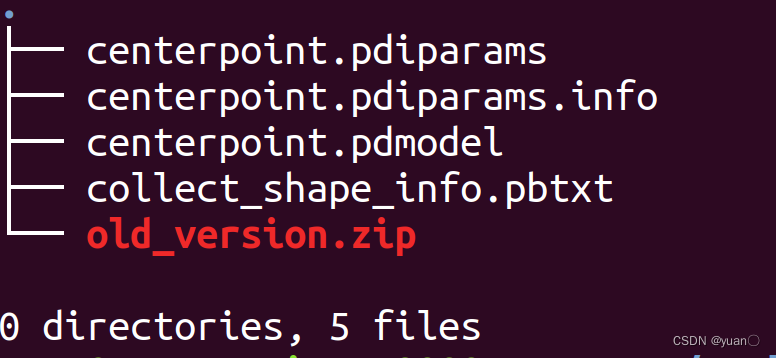

File structure

terminate called after throwing an instance of 'phi::enforce::EnforceNotMet'

what(): (NotFound) Cannot open file /apollo/modules/perception/production/data/perception/lidar/models/detection/CenterPoint_paddle/centerpoint.pdmodel, please confirm whether the file is normal.

[Hint: Expected static_cast<bool>(fin.is_open()) == true, but received static_cast<bool>(fin.is_open()):0 != true:1.] (at /apollo/data/Paddle/paddle/fluid/inference/api/analysis_predictor.cc:1901)

Aborted (core dumped)

After the change

I0328 18:01:38.070505 19982 analysis_predictor.cc:1318] ======= optimize end =======

I0328 18:01:38.075392 19982 naive_executor.cc:110] --- skip [feed], feed -> data

terminate called after throwing an instance of 'phi::enforce::EnforceNotMet'

what(): (NotFound) Operator (hard_voxelize) is not registered.

[Hint: op_info_ptr should not be null.] (at /apollo/data/Paddle/paddle/fluid/framework/op_info.h:156)

Aborted (core dumped)

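The same problem reported on GitHub:

1. Perception module reports the following error when launching a self-trained CENTER_POINT_DETECTION model #14839

2. A CenterPoint model trained in the Paddle container and deployed in Apollo aborts at startup; what causes this? #276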

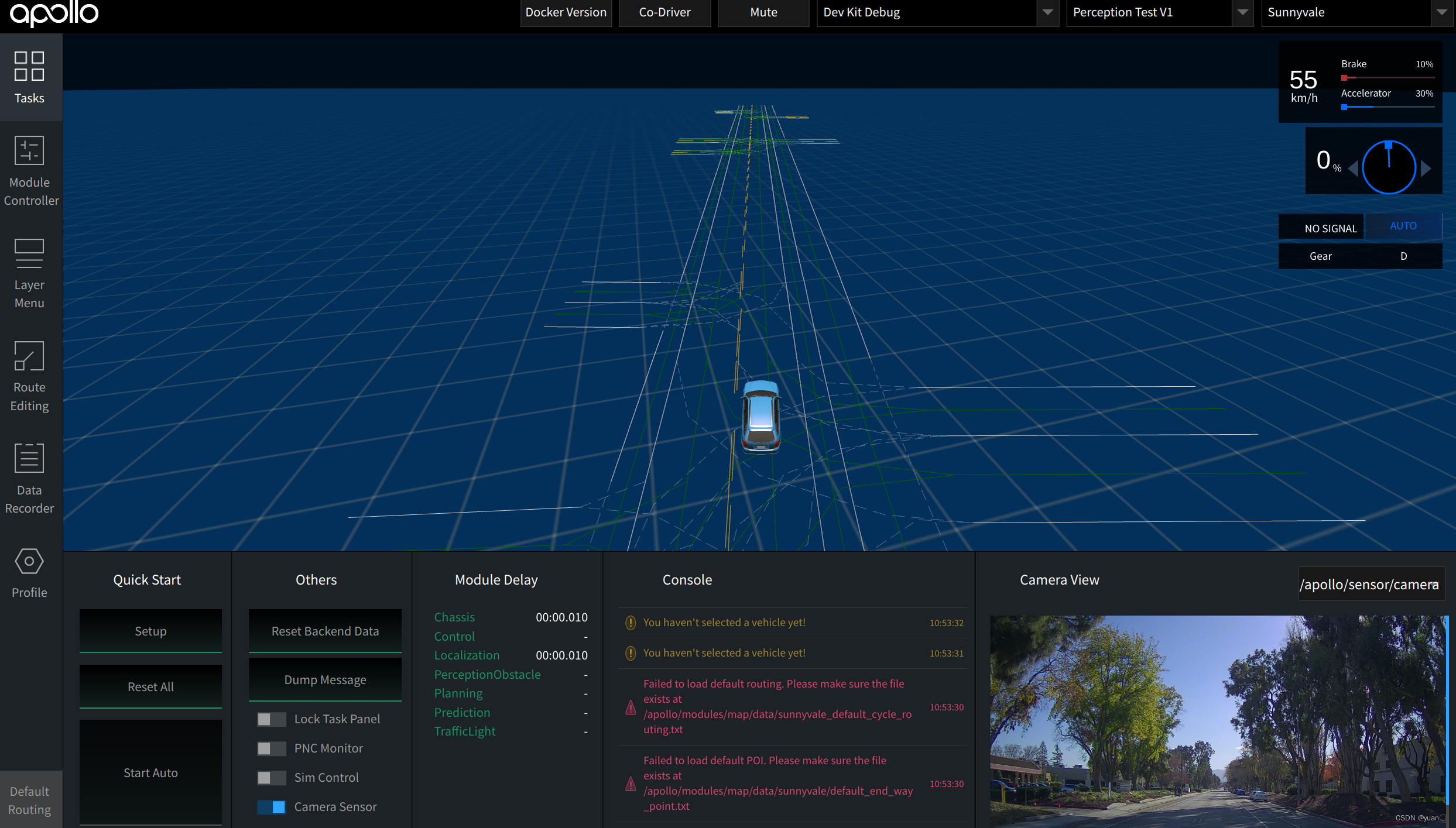

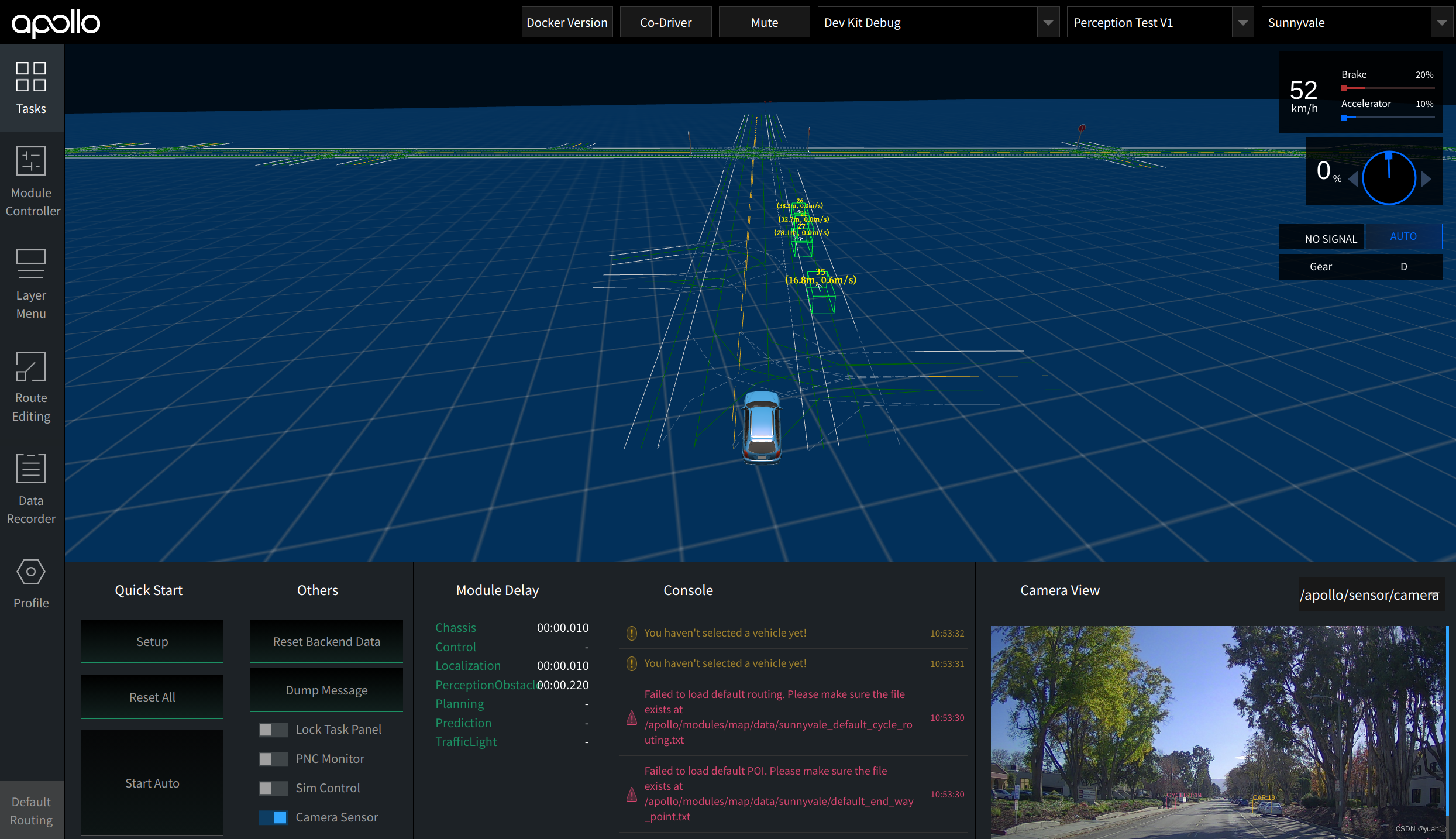

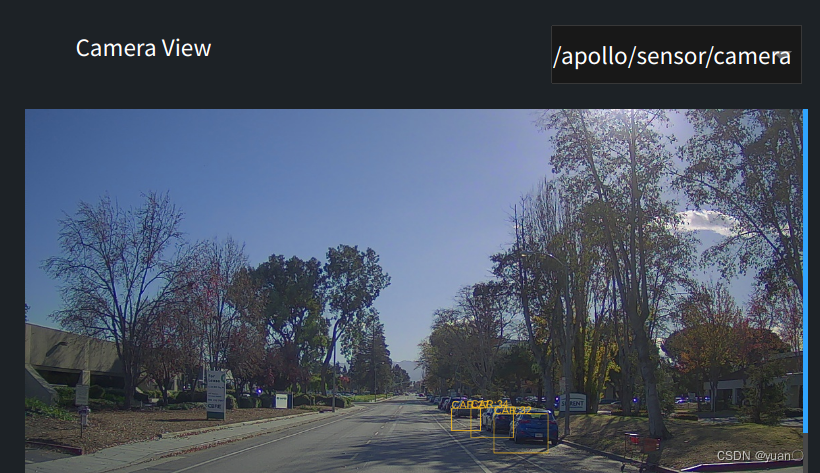

1.3 Vision functional test

Steps 1-5 are the same as in the LiDAR section.

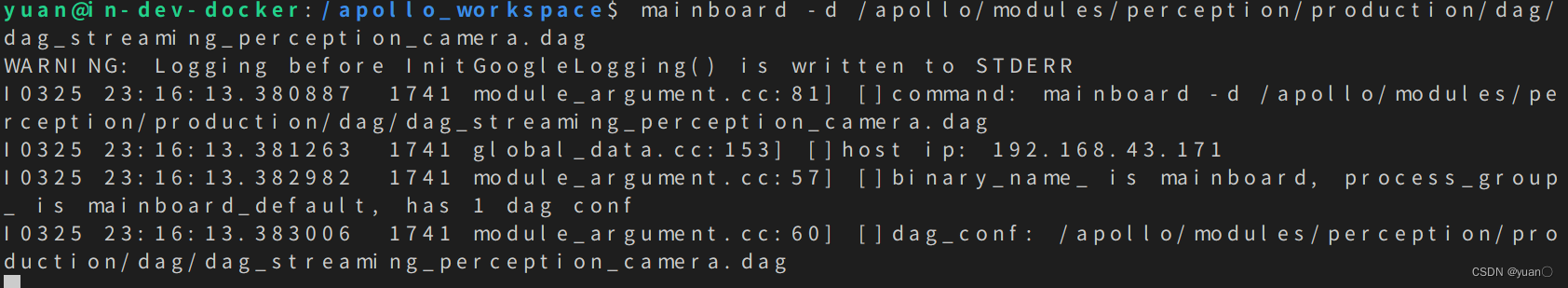

Start the camera module with mainboard:

mainboard -d /apollo/modules/perception/production/dag/dag_streaming_perception_camera.dag

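After a successful start, the output looks like the figure:

Step 6: Verify the results

Play the data package. The -k option is needed to filter out the perception channel data already contained in the package.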

cyber_recorder play -f ./data/bag/sensor_rgb.record -k /perception/vehicle/obstacles /apollo/perception/obstacles /apollo/perception/traffic_light /apollo/prediction

Playback may be fairly laggy 😂😂😂, and the detection results may not be especially good.

SMOKE
