合辑传送门 -->> 数据分析-合辑

搜狗新闻(文本分析)

涉及到的有TF-IDF关键词提取、词云、LDA建模、分类

搜狗新闻语料:链接: https://pan.baidu.com/s/1rdffayxzAwFhxuoxZobdwQ 提取码: dy8w

中文ttf及中文停用词表(当然也可以用别的):链接: https://pan.baidu.com/s/1t2lI9VlqKSS8TkmWxvSRLQ 提取码: nva7

目录

相关库和数据的导入

val.txt只含有5000条数据,如果想尝试全部数据可改成‘train.txt’。

import pandas as pd

import jieba #jieba分词器

from wordcloud import WordCloud

import matplotlib.pyplot as plt

import matplotlib

df = pd.read_table('val.txt',names = ['category','theme','URL','content'],encoding='utf-8')

df =df.dropna()分词

使用jieba分词器对每条新闻内容进行分词

content = df.content.values.tolist()

print(content[1000])

content_S = []

for i in range(df.shape[0]):

content_S.append(jieba.lcut(content[i]))

df_content = pd.DataFrame({'content':content_S})读取停用词表

stopwords = pd.read_csv('stopwords-master/zw_stopwords.txt',

index_col=False,sep='\t',

quoting=3,

names=['stopwords'],

encoding='utf-8')

stopwords = stopwords.stopwords.values.tolist()删除停用词

这里删除的方法比较费时,直接遍历比对。

print('清洗前的数据第1000条数据的分词情况:',len(df_content.content[1000]))

all_word = [] # 存储除停用词外的所有词(可重复)

content_word = [] # 按新闻条数一次存入清洗后的新闻列表

su = len(df_content.content)

for line in df_content.content:

line_clean = []

# print(line)

for i in line:

if i in stopwords:

continue

line_clean.append(i)

all_word.append(i)

content_word.append(line_clean)

print('清洗后的数据第1000条数据的分词情况:',len(content_word[1000]))

# 清理完的数据

df_content = pd.DataFrame({'contents_clean':content_word})

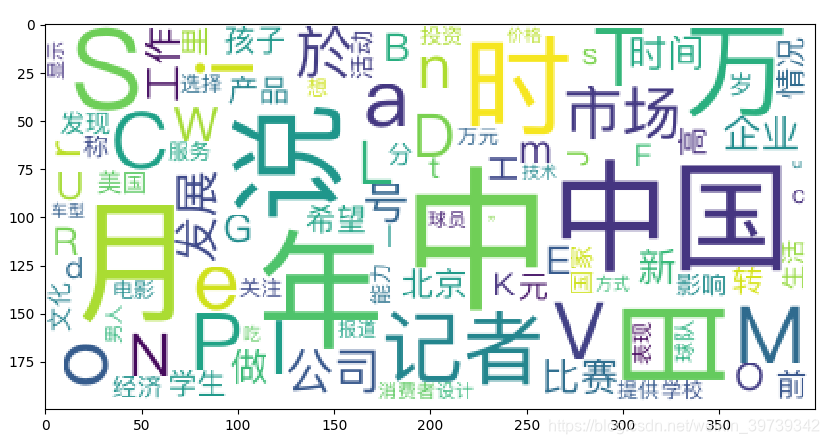

df_all_words = pd.DataFrame({'all_words':all_word})词云生成

# 得到词频数据

words_count = df_all_words['all_words'].value_counts()

words_count = pd.DataFrame({'val':words_count})

words_count.sort_values(by='val', inplace=True, ascending = False)

print(words_count.head(50))

# 生成词云

matplotlib.rcParams['figure.figsize'] = (10.0,5.0)

cloud = WordCloud(font_path='./pingfangziti/apple-black-smaple.ttf',

background_color='white',

max_font_size=80)

word_frequence = {words_count.index[x]:words_count['val'][x] for x in range(100)}

print(word_frequence)

cloud = cloud.fit_words(word_frequence)

plt.imshow(cloud)

plt.show()效果是这样的(当然还是很多不同的风格):

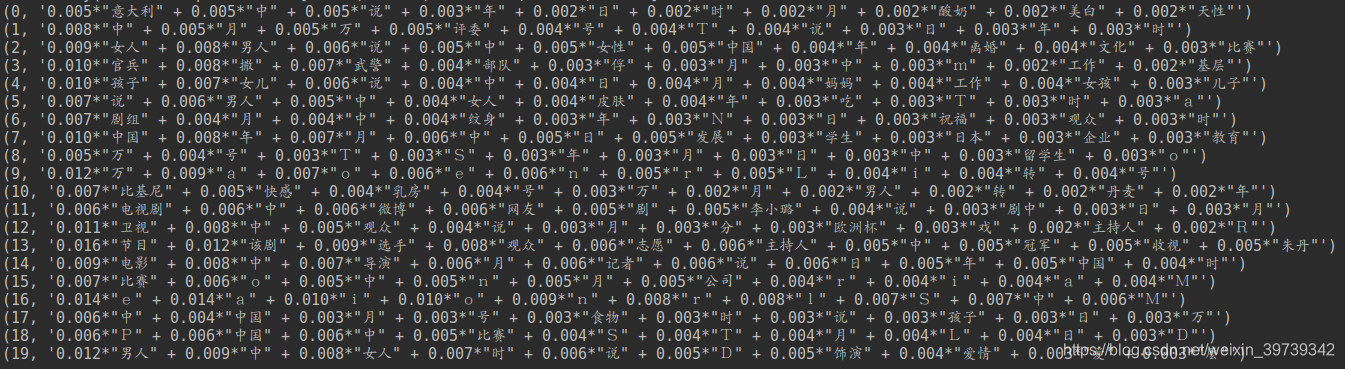

LDA建模

from gensim import corpora,models,similarities

import gensim

# 映射 相当于词袋

dictionary = corpora.Dictionary(content_word)

corpus = [dictionary.doc2bow(sen) for sen in content_word]

print(dictionary)

# LDA模型 设置20个主题分类

lda = gensim.models.ldamodel.LdaModel(corpus=corpus,id2word=dictionary,num_topics=20)

# 输出分类主题的结果

for topic in lda.print_topics(num_topics=20,num_words=10):

print(topic)

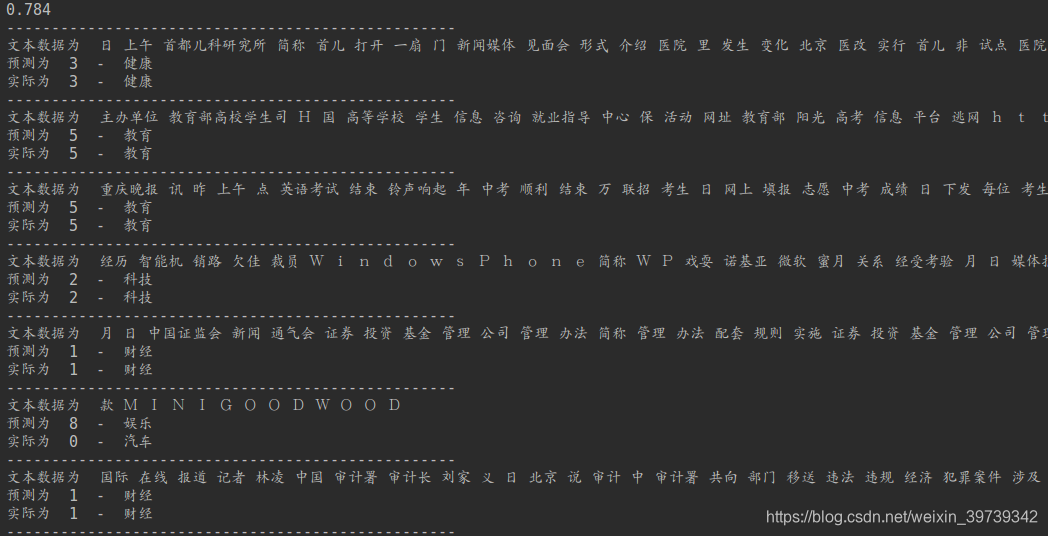

利用贝叶斯模型进行新闻分类

# 标签文本的替换

print(df_content.label.unique())

label_man = {}

num = 0

for i in df_content.label.unique().tolist():

label_man[i]=num

num+=1

df_content['label'] = df_content['label'].map(label_man)

# 最终我们的标签

print(df_content.label.unique())

# 对文本数据进行格式调整

print(df_content['contents_clean'][1000])

words = []

for line in df_content['contents_clean'].tolist():

words.append(' '.join(line))

df_content['contents_clean'] = words

# 最终的文本数据格式

print(df_content['contents_clean'][1000])

from sklearn.feature_extraction.text import CountVectorizer

# 建立模型 获取向量表示形式

vec = CountVectorizer(analyzer='word',max_features=4000,lowercase=False)

vec.fit(words)

from sklearn.naive_bayes import MultinomialNB

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(df_content['contents_clean'],

df_content['label'],

random_state=42)

fier = MultinomialNB()

fier.fit(vec.transform(X_train),y_train)

print(fier.score(vec.transform(X_test),y_test))

pre = fier.predict(vec.transform(X_test[:10]))

value = X_test.tolist()

value_y = y_test.tolist()

key = {0:'汽车',1:'财经', 2:'科技', 3:'健康', 4:'体育', 5:'教育', 6:'文化', 7:'军事', 8:'娱乐', 9:'时尚'}

for i in range(10):

print('-'*50)

print('文本数据为 ',value[i])

print('预测为 ',pre[i],' - ',key[pre[i]])

print('实际为 ',value_y[i],' - ',key[value_y[i]])

1098

1098

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?