2D Hand-Eye Calibration

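9-point calibration is a 2D hand-eye calibration method. It rests on two key assumptions. First, the calibration board lies in the same plane as the objects to be inspected. Second, the relation between the camera and the board plane is fixed: the camera may translate in x and y relative to the board plane, but its z distance stays constant. Under these assumptions the mapping between the camera's image plane and the board plane is an affine transformation (strictly, this holds when the image plane is parallel to the board plane and lens distortion is negligible). An affine transformation matrix has 6 unknowns, so at least 3 non-collinear point pairs are needed to solve it.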
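For the minimal case, the six unknowns can be solved exactly. The sketch below assumes exactly three non-collinear point pairs in double precision and solves the two 3×3 systems with Cramer's rule. It uses the A to F naming from the code later in this article (x' = A·x + B·y + C, y' = D·x + E·y + F). `Pt`, `Affine`, `det3` and `affineFromThreePairs` are hypothetical names.

```cpp
#include <cassert>
#include <cmath>
#include <stdexcept>

struct Pt { double x, y; };

// x' = A*x + B*y + C,  y' = D*x + E*y + F
struct Affine { double A, B, C, D, E, F; };

// Determinant of the 3x3 matrix [[a,b,c],[d,e,f],[g,h,i]]
double det3(double a, double b, double c,
            double d, double e, double f,
            double g, double h, double i) {
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g);
}

// Exact affine transform from three non-collinear point pairs (Cramer's rule).
Affine affineFromThreePairs(const Pt src[3], const Pt dst[3]) {
    // Coefficient matrix rows are [x_k, y_k, 1]
    double det = det3(src[0].x, src[0].y, 1.0,
                      src[1].x, src[1].y, 1.0,
                      src[2].x, src[2].y, 1.0);
    if (std::fabs(det) < 1e-12)
        throw std::invalid_argument("source points are collinear");

    // Solve [x_k y_k 1] * [p q r]^T = r_k for one output coordinate
    auto solveRow = [&](double r0, double r1, double r2, double out[3]) {
        out[0] = det3(r0, src[0].y, 1.0, r1, src[1].y, 1.0, r2, src[2].y, 1.0) / det;
        out[1] = det3(src[0].x, r0, 1.0, src[1].x, r1, 1.0, src[2].x, r2, 1.0) / det;
        out[2] = det3(src[0].x, src[0].y, r0, src[1].x, src[1].y, r1,
                      src[2].x, src[2].y, r2) / det;
    };
    double abc[3], def[3];
    solveRow(dst[0].x, dst[1].x, dst[2].x, abc);  // A, B, C from the x' values
    solveRow(dst[0].y, dst[1].y, dst[2].y, def);  // D, E, F from the y' values
    return {abc[0], abc[1], abc[2], def[0], def[1], def[2]};
}
```

If the three points are collinear, the determinant is zero and the transform is undetermined. This is why the calibration circles are laid out as a grid rather than along a line.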

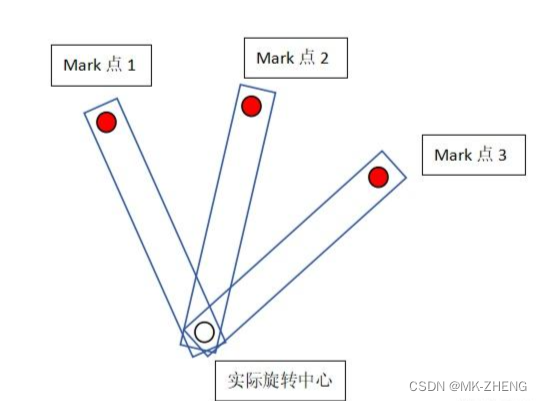

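12-point calibration determines where the actual rotation center lies relative to the gripper center (its position or distance). The procedure is as follows:

1. Perform the 9-point calibration as described below, using the gripper center.
2. Have the gripper carry a mark point that the camera can see, with the mark at the height of the 9-point calibration plane.
3. Rotate the gripper so that the mark visits three positions within the camera's field of view.
4. Convert the three pixel coordinates to robot coordinates with the 9-point calibration and fit a circle through them. The circle center is the actual rotation center.

This yields the coordinate (or distance) relationship between the rotation center and the gripper center, which is needed later when computing the actual coordinates after a rotation.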
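The circle-fitting step can be sketched as follows. The sketch assumes the three mark positions have already been converted to robot coordinates with the 9-point affine. It computes the circumcenter of the three points, which is an exact fit through three points rather than a least-squares fit. The offset is simply the vector from the gripper center to the fitted center. `Pt`, `circleCenter` and `centerOffset` are hypothetical names.

```cpp
#include <cassert>
#include <cmath>
#include <stdexcept>

struct Pt { double x, y; };

// Center of the unique circle through three non-collinear points.
Pt circleCenter(Pt p1, Pt p2, Pt p3) {
    double d = 2.0 * (p1.x * (p2.y - p3.y) + p2.x * (p3.y - p1.y) + p3.x * (p1.y - p2.y));
    if (std::fabs(d) < 1e-12)
        throw std::invalid_argument("points are collinear: rotate by a larger angle");
    double s1 = p1.x * p1.x + p1.y * p1.y;
    double s2 = p2.x * p2.x + p2.y * p2.y;
    double s3 = p3.x * p3.x + p3.y * p3.y;
    return {(s1 * (p2.y - p3.y) + s2 * (p3.y - p1.y) + s3 * (p1.y - p2.y)) / d,
            (s1 * (p3.x - p2.x) + s2 * (p1.x - p3.x) + s3 * (p2.x - p1.x)) / d};
}

// Vector from the gripper center to the rotation center (both in robot coordinates).
Pt centerOffset(Pt gripper, Pt rotationCenter) {
    return {rotationCenter.x - gripper.x, rotationCenter.y - gripper.y};
}
```

The three positions should span a reasonably large rotation angle. Nearly collinear points make the denominator `d` small and the fitted center unstable.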

Calibration Steps

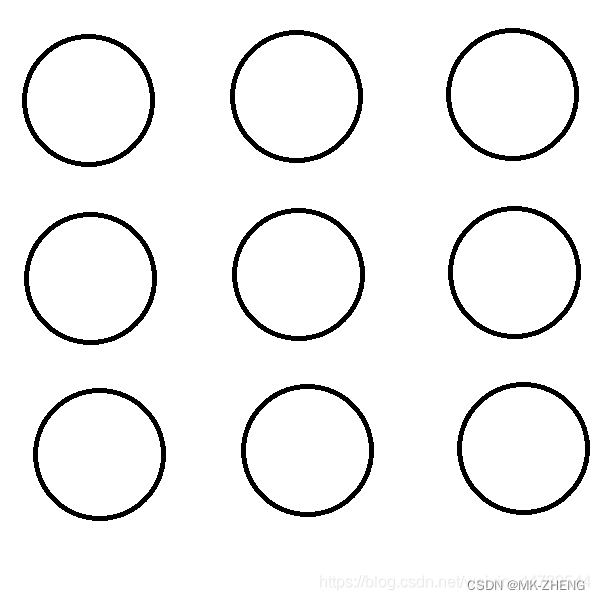

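1. First, prepare a calibration board. If none is available, a sheet of white paper with nine circles drawn on it can serve instead.

2. Fix the camera and the robot in place, and mount the calibration needle on the robot. Once everything is fixed, nothing may move.

The tip of the calibration needle must be at the same position and height as the tool held in the gripper or suction cup.

3. Place the calibration board under the camera, in the same region where the robot works. The height in particular must match as closely as possible; this is the key to calibration accuracy.

4. Adjust the camera focus and take an image, then detect and record the pixel coordinates of the nine circle centers.

5. Move the robot to each of the nine circle centers in turn and record the robot coordinates.

These five steps produce two point sets: the nine circle centers in pixel coordinates (points_camera) and the corresponding nine robot coordinates (points_robot).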

```cpp
#include <opencv2/core.hpp>              // cv::Mat, cv::Point2f, cv::solve
#include <opencv2/video/tracking.hpp>    // cv::estimateRigidTransform (deprecated in newer OpenCV; see cv::estimateAffine2D)
#include <cmath>
#include <vector>

using namespace cv;

// Button handler: 9-point calibration, pixel coordinates -> robot coordinates
void CMFCTestDlg::OnBnClickedBtn9pointcalib()
{
    std::vector<Point2f> points_camera;  // circle centers in the image (pixels)
    std::vector<Point2f> points_robot;   // matching robot coordinates

    /* Alternative data set
    points_camera.push_back(Point2f(1372.36f, 869.00f));
    points_camera.push_back(Point2f(1758.86f, 979.07f));
    points_camera.push_back(Point2f(2145.75f, 1090.03f));
    points_camera.push_back(Point2f(2040.02f, 1461.64f));
    points_camera.push_back(Point2f(1935.01f, 1833.96f));
    points_camera.push_back(Point2f(1546.79f, 1724.21f));
    points_camera.push_back(Point2f(1158.53f, 1613.17f));
    points_camera.push_back(Point2f(1265.07f, 1240.49f));
    points_camera.push_back(Point2f(1652.6f, 1351.27f));
    points_robot.push_back(Point2f(98.884f, 320.881f));
    points_robot.push_back(Point2f(109.81f, 322.27f));
    points_robot.push_back(Point2f(120.62f, 323.695f));
    points_robot.push_back(Point2f(121.88f, 313.154f));
    points_robot.push_back(Point2f(123.11f, 302.671f));
    points_robot.push_back(Point2f(112.23f, 301.107f));
    points_robot.push_back(Point2f(101.626f, 299.816f));
    points_robot.push_back(Point2f(100.343f, 310.447f));
    points_robot.push_back(Point2f(111.083f, 311.665f));*/

    points_camera.push_back(Point2f(1516.14f, 1119.48f));
    points_camera.push_back(Point2f(1516.24f, 967.751f));
    points_camera.push_back(Point2f(1668.55f, 967.29f));
    points_camera.push_back(Point2f(1668.44f, 1118.93f));
    points_camera.push_back(Point2f(1668.54f, 1270.57f));
    points_camera.push_back(Point2f(1516.22f, 1271.37f));
    points_camera.push_back(Point2f(1364.21f, 1272.15f));
    points_camera.push_back(Point2f(1364.08f, 1120.42f));
    points_camera.push_back(Point2f(1364.08f, 968.496f));

    points_robot.push_back(Point2f(35.7173f, 18.8248f));
    points_robot.push_back(Point2f(40.7173f, 18.8248f));
    points_robot.push_back(Point2f(40.7173f, 13.8248f));
    points_robot.push_back(Point2f(35.7173f, 13.8248f));
    points_robot.push_back(Point2f(30.7173f, 13.8248f));
    points_robot.push_back(Point2f(30.7173f, 18.8248f));
    points_robot.push_back(Point2f(30.7173f, 23.8248f));
    points_robot.push_back(Point2f(35.7173f, 23.8248f));
    points_robot.push_back(Point2f(40.7173f, 23.8248f));

    /*for (int i = 0; i < 9; i++)
    {
        points_camera.push_back(Point2f(i + 1, i + 1));
        points_robot.push_back(Point2f(2 * (i + 1), 2 * (i + 1)));
    }*/

    // Estimate the 2x3 affine matrix (pixel -> robot)
    Mat affine;
    estimateRigidTransform(points_camera, points_robot, true).convertTo(affine, CV_32F);
    if (affine.empty())
    {
        // estimateRigidTransform returns an empty Mat on failure: fall back to a plain least-squares fit
        affine = LocalAffineEstimate(points_camera, points_robot, true);
    }

    float A = affine.at<float>(0, 0);
    float B = affine.at<float>(0, 1);
    float C = affine.at<float>(0, 2);
    float D = affine.at<float>(1, 0);
    float E = affine.at<float>(1, 1);
    float F = affine.at<float>(1, 2);

    // Convert a single pixel point to robot coordinates
    //Point2f src = Point2f(1652.6f, 1351.27f);
    //Point2f src = Point2f(100, 100);
    Point2f src = Point2f(1516.14f, 1119.48f);
    Point2f dst;
    dst.x = A * src.x + B * src.y + C;
    dst.y = D * src.x + E * src.y + F;

    // Per-axis RMS calibration error over all calibration points
    std::vector<Point2f> points_Calc;
    double sumX = 0, sumY = 0;
    for (size_t i = 0; i < points_camera.size(); i++)
    {
        Point2f pt;
        pt.x = A * points_camera[i].x + B * points_camera[i].y + C;
        pt.y = D * points_camera[i].x + E * points_camera[i].y + F;
        points_Calc.push_back(pt);
        sumX += std::pow(points_robot[i].x - points_Calc[i].x, 2);
        sumY += std::pow(points_robot[i].y - points_Calc[i].y, 2);
    }
    double rmsX = std::sqrt(sumX / points_camera.size());
    double rmsY = std::sqrt(sumY / points_camera.size());

    CString str;
    str.Format(_T("RMS error: %0.3f, %0.3f"), rmsX, rmsY);
    AfxMessageBox(str);
}

// Least-squares fit: full affine (fullAfine = true) or rotation + uniform scale + translation
// (fullAfine = false). Declared as a member of CMFCTestDlg in its header.
Mat CMFCTestDlg::LocalAffineEstimate(const std::vector<Point2f>& shape1, const std::vector<Point2f>& shape2,
                                     bool fullAfine)
{
    Mat out(2, 3, CV_32F);
    int siz = 2 * (int)shape1.size();  // two equations per point pair

    if (fullAfine)
    {
        // Unknowns [a b c d e f]: x' = a*x + b*y + c, y' = d*x + e*y + f
        Mat matM(siz, 6, CV_32F);
        Mat matP(siz, 1, CV_32F);
        int contPt = 0;
        for (int ii = 0; ii < siz; ii++)
        {
            Mat therow = Mat::zeros(1, 6, CV_32F);
            if (ii % 2 == 0)
            {
                therow.at<float>(0, 0) = shape1[contPt].x;
                therow.at<float>(0, 1) = shape1[contPt].y;
                therow.at<float>(0, 2) = 1;
                therow.row(0).copyTo(matM.row(ii));
                matP.at<float>(ii, 0) = shape2[contPt].x;
            }
            else
            {
                therow.at<float>(0, 3) = shape1[contPt].x;
                therow.at<float>(0, 4) = shape1[contPt].y;
                therow.at<float>(0, 5) = 1;
                therow.row(0).copyTo(matM.row(ii));
                matP.at<float>(ii, 0) = shape2[contPt].y;
                contPt++;
            }
        }
        Mat sol;
        solve(matM, matP, sol, DECOMP_SVD);  // least-squares solution when overdetermined
        out = sol.reshape(0, 2);             // 6x1 -> 2x3
    }
    else
    {
        // Unknowns [a b c d]: x' = a*x + b*y + c, y' = -b*x + a*y + d
        Mat matM(siz, 4, CV_32F);
        Mat matP(siz, 1, CV_32F);
        int contPt = 0;
        for (int ii = 0; ii < siz; ii++)
        {
            Mat therow = Mat::zeros(1, 4, CV_32F);
            if (ii % 2 == 0)
            {
                therow.at<float>(0, 0) = shape1[contPt].x;
                therow.at<float>(0, 1) = shape1[contPt].y;
                therow.at<float>(0, 2) = 1;
                therow.row(0).copyTo(matM.row(ii));
                matP.at<float>(ii, 0) = shape2[contPt].x;
            }
            else
            {
                // Row for y' = a*y - b*x + d, consistent with the output matrix below
                therow.at<float>(0, 0) = shape1[contPt].y;
                therow.at<float>(0, 1) = -shape1[contPt].x;
                therow.at<float>(0, 3) = 1;
                therow.row(0).copyTo(matM.row(ii));
                matP.at<float>(ii, 0) = shape2[contPt].y;
                contPt++;
            }
        }
        Mat sol;
        solve(matM, matP, sol, DECOMP_SVD);
        out.at<float>(0, 0) = sol.at<float>(0, 0);
        out.at<float>(0, 1) = sol.at<float>(1, 0);
        out.at<float>(0, 2) = sol.at<float>(2, 0);
        out.at<float>(1, 0) = -sol.at<float>(1, 0);
        out.at<float>(1, 1) = sol.at<float>(0, 0);
        out.at<float>(1, 2) = sol.at<float>(3, 0);
    }
    return out;
}
```

The six parameters A to F obtained this way (stored as float in the code above) are the final result of this calibration.

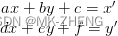

We can then substitute a detected image coordinate (t_px, t_py) to obtain the corresponding robot coordinate (t_rx, t_ry):

t_rx = A * t_px + B * t_py + C

t_ry = D * t_px + E * t_py + F
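As a small sketch, the conversion can be wrapped in a helper. The helper takes the six parameters in row-major order, which is the same order in which they are read from `affine.at<float>(r, c)` in the code above. `Pt`, `AffineParams` and `pixelToRobot` are hypothetical names, and double precision is assumed.

```cpp
#include <array>
#include <cassert>
#include <cmath>

struct Pt { double x, y; };

// Calibration parameters in the order A, B, C, D, E, F (row-major 2x3 matrix).
using AffineParams = std::array<double, 6>;

// Convert a detected pixel position (t_px, t_py) to robot coordinates (t_rx, t_ry).
Pt pixelToRobot(const AffineParams& p, Pt pixel) {
    return {p[0] * pixel.x + p[1] * pixel.y + p[2],   // t_rx = A*t_px + B*t_py + C
            p[3] * pixel.x + p[4] * pixel.y + p[5]};  // t_ry = D*t_px + E*t_py + F
}
```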

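This completes the calibration. The camera can now take an image to locate the target, and the resulting position can be converted into robot coordinates.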

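The function estimateRigidTransform is declared as follows:

`Mat estimateRigidTransform(InputArray src, InputArray dst, bool fullAffine)`

The first two parameters can take one of two forms:

- src = srcImage (the image Mat before the transformation) and dst = transImage (the image Mat after it);
- src = array (the key points before the transformation) and dst = array (the key points after it).

The third parameter controls the transformation model:

- true: a full affine transformation with 6 degrees of freedom, covering rotation, translation, scaling, shearing and reflection;
- false: the result is restricted to translation, rotation and uniform scaling, which has 4 degrees of freedom.

If no transform can be estimated, the function returns an empty Mat. This is why the code above falls back to LocalAffineEstimate.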
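The difference between the two modes is easiest to see in the matrix itself. The sketch below builds the 2×3 matrix of the restricted (fullAffine = false) model from a uniform scale s, a rotation angle θ in radians, and a translation (tx, ty). Only four numbers are free, and the matrix always has the form [a, -b, tx; b, a, ty]. The full model, by contrast, has six independent entries. `Mat23`, `similarity` and `apply` are hypothetical names. The sketch only illustrates the structure; it is not how OpenCV computes the estimate.

```cpp
#include <array>
#include <cassert>
#include <cmath>

// Row-major 2x3 matrix [m0 m1 m2; m3 m4 m5]
using Mat23 = std::array<double, 6>;

// Rotation + uniform scale + translation: 4 degrees of freedom.
Mat23 similarity(double s, double theta, double tx, double ty) {
    double c = s * std::cos(theta), k = s * std::sin(theta);
    return {c, -k, tx,
            k,  c, ty};
}

// Apply a 2x3 matrix to the point (x, y).
void apply(const Mat23& m, double x, double y, double& outX, double& outY) {
    outX = m[0] * x + m[1] * y + m[2];
    outY = m[3] * x + m[4] * y + m[5];
}
```

The `fullAfine == false` branch of LocalAffineEstimate above produces exactly this pattern: its output row 1 is the negated second entry of row 0 followed by the first entry.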

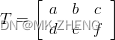

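The underlying principle is as follows. Suppose a point is $[x, y, 1]^T$ before the transformation and $[x', y', 1]^T$ after it. The full affine case is then written as

$$T X = Y,\qquad T = \begin{bmatrix} a & b & c \\ d & e & f \\ 0 & 0 & 1 \end{bmatrix},\quad X = \begin{bmatrix} x \\ y \\ 1 \end{bmatrix},\quad Y = \begin{bmatrix} x' \\ y' \\ 1 \end{bmatrix}$$

Written out: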

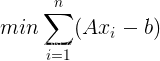
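Each point pair contributes two scalar equations. Stacking them for n pairs gives the linear system that the full-affine branch of LocalAffineEstimate assembles, one row pair per point. This assumes a to f are ordered like A to F in the code:

$$
\begin{aligned}
x_i' &= a\,x_i + b\,y_i + c\\
y_i' &= d\,x_i + e\,y_i + f
\end{aligned}
\qquad (i = 1,\dots,n)
$$

$$
\begin{bmatrix}
x_1 & y_1 & 1 & 0 & 0 & 0\\
0 & 0 & 0 & x_1 & y_1 & 1\\
\vdots & \vdots & \vdots & \vdots & \vdots & \vdots\\
x_n & y_n & 1 & 0 & 0 & 0\\
0 & 0 & 0 & x_n & y_n & 1
\end{bmatrix}
\begin{bmatrix} a\\ b\\ c\\ d\\ e\\ f \end{bmatrix}
=
\begin{bmatrix} x_1'\\ y_1'\\ \vdots\\ x_n'\\ y_n' \end{bmatrix}
$$

With n = 3 the system is square (6×6). With n > 3 it is overdetermined, and `solve(matM, matP, sol, DECOMP_SVD)` returns the least-squares solution.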

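Solving for the six unknowns a to f requires six equations, i.e. three point pairs. What if there are more than three points, say 20? Then the system is overdetermined and is solved by least squares.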
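A dependency-free sketch of that least-squares fit follows. It assumes double-precision input and at least three non-collinear pairs. Instead of the SVD used by LocalAffineEstimate, it solves the 3×3 normal equations (MᵀM)p = Mᵀq separately for (A, B, C) and (D, E, F). This gives the same minimizer as the full 2n×6 system, because the x' and y' equations share no unknowns. Normal equations are numerically less robust than SVD, though that is usually acceptable for a handful of calibration points. `Pt`, `Affine`, `det3` and `fitAffineLeastSquares` are hypothetical names.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <stdexcept>
#include <vector>

struct Pt { double x, y; };
struct Affine { double A, B, C, D, E, F; };

double det3(double a, double b, double c,
            double d, double e, double f,
            double g, double h, double i) {
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g);
}

// Least-squares affine fit src -> dst via the 3x3 normal equations.
Affine fitAffineLeastSquares(const std::vector<Pt>& src, const std::vector<Pt>& dst) {
    assert(src.size() == dst.size());
    if (src.size() < 3) throw std::invalid_argument("need at least 3 point pairs");

    // Accumulate N = M^T M (rows of M are [x, y, 1]) and the two right-hand sides.
    double sxx = 0, sxy = 0, syy = 0, sx = 0, sy = 0, n = 0;
    double bx[3] = {0, 0, 0}, by[3] = {0, 0, 0};
    for (std::size_t k = 0; k < src.size(); ++k) {
        double x = src[k].x, y = src[k].y;
        sxx += x * x; sxy += x * y; syy += y * y; sx += x; sy += y; n += 1;
        bx[0] += x * dst[k].x; bx[1] += y * dst[k].x; bx[2] += dst[k].x;
        by[0] += x * dst[k].y; by[1] += y * dst[k].y; by[2] += dst[k].y;
    }
    double det = det3(sxx, sxy, sx, sxy, syy, sy, sx, sy, n);
    if (std::fabs(det) < 1e-12) throw std::invalid_argument("degenerate (collinear) points");

    // Cramer's rule on N * p = b
    auto solve = [&](const double b[3], double p[3]) {
        p[0] = det3(b[0], sxy, sx, b[1], syy, sy, b[2], sy, n) / det;
        p[1] = det3(sxx, b[0], sx, sxy, b[1], sy, sx, b[2], n) / det;
        p[2] = det3(sxx, sxy, b[0], sxy, syy, b[1], sx, sy, b[2]) / det;
    };
    double abc[3], def[3];
    solve(bx, abc);  // A, B, C
    solve(by, def);  // D, E, F
    return {abc[0], abc[1], abc[2], def[0], def[1], def[2]};
}
```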

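With the 9-point calibration above in place, the rotation center is determined as follows. With the robot at its initial position, rotate the mark point three times within the camera's field of view. Capture three images of the mark and fit the actual rotation center, in both pixel and robot coordinates.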

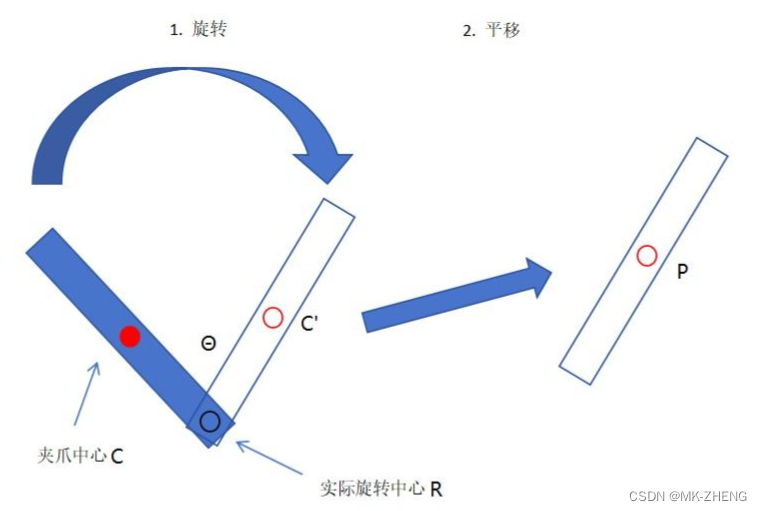

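12-point calibration: usage steps (rotation + translation)

1. At the initial position, rotate by an angle θ so that the gripper has the same orientation as the workpiece (θ is obtained by image processing).

2. Rotation. Compute vector_angle_to_rigid(R.x, R.y, 0, R.x, R.y, θ, HomMat2D), where R is the rotation center. All coordinates here are pixel coordinates, and HomMat2D is the affine transformation matrix of the gripper rotation.

3. Rotation. Transform the gripper center C from before to after the rotation: C' = HomMat2D * C, i.e. affine_trans_point_2d(HomMat2D, C.x, C.y, C'.x, C'.y). The pixel coordinates of the rotation center are obtained from the images, as described above.

4. Translation. The pixel coordinates of the workpiece center P are obtained by image processing and converted to robot coordinates with the 9-point calibration. Then compute vector_angle_to_rigid(C'.x, C'.y, 0, P.x, P.y, 0, HomMat2D). All arguments of this call are robot coordinates, so C' must also be converted with the 9-point calibration.

5. Translation.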
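The rotate-then-translate logic of steps 1 to 4 can be sketched in plain C++. The sketch makes two assumptions:

- R (rotation center), C (gripper center) and P (workpiece center) are already expressed in one common frame, for example robot coordinates via the 9-point affine.
- θ is in radians, counter-clockwise in a right-handed x-y frame, and the robot's rotation axis may need the opposite sign.

Calling vector_angle_to_rigid with identical start and end points and then affine_trans_point_2d amounts to a pure rotation about R, which `rotateAbout` reproduces. The remaining move is the vector from C' to P. `Pt`, `rotateAbout` and `moveAfterRotation` are hypothetical names, not HALCON operators.

```cpp
#include <cassert>
#include <cmath>

struct Pt { double x, y; };

// Rotate point p about center r by angle theta (radians, counter-clockwise
// in a right-handed x-y frame).
Pt rotateAbout(Pt p, Pt r, double theta) {
    double c = std::cos(theta), s = std::sin(theta);
    double dx = p.x - r.x, dy = p.y - r.y;
    return {r.x + c * dx - s * dy, r.y + s * dx + c * dy};
}

// Rotate the gripper center C about R by theta to get C',
// then return the translation that carries C' onto the workpiece center P.
Pt moveAfterRotation(Pt R, Pt C, Pt P, double theta) {
    Pt cRot = rotateAbout(C, R, theta);   // C' = HomMat2D * C
    return {P.x - cRot.x, P.y - cRot.y};  // remaining translation C' -> P
}
```

For example, with R = (0, 0), C = (10, 0) and θ = 90°, C' is (0, 10). For a workpiece at P = (5, 5), the remaining translation is (5, -5). How the rotation and translation are then commanded depends on the robot controller.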
