StoryDiffusion: Consistent Self-Attention for Long-Range Image and Video Generation

2024.5.2


Abstract

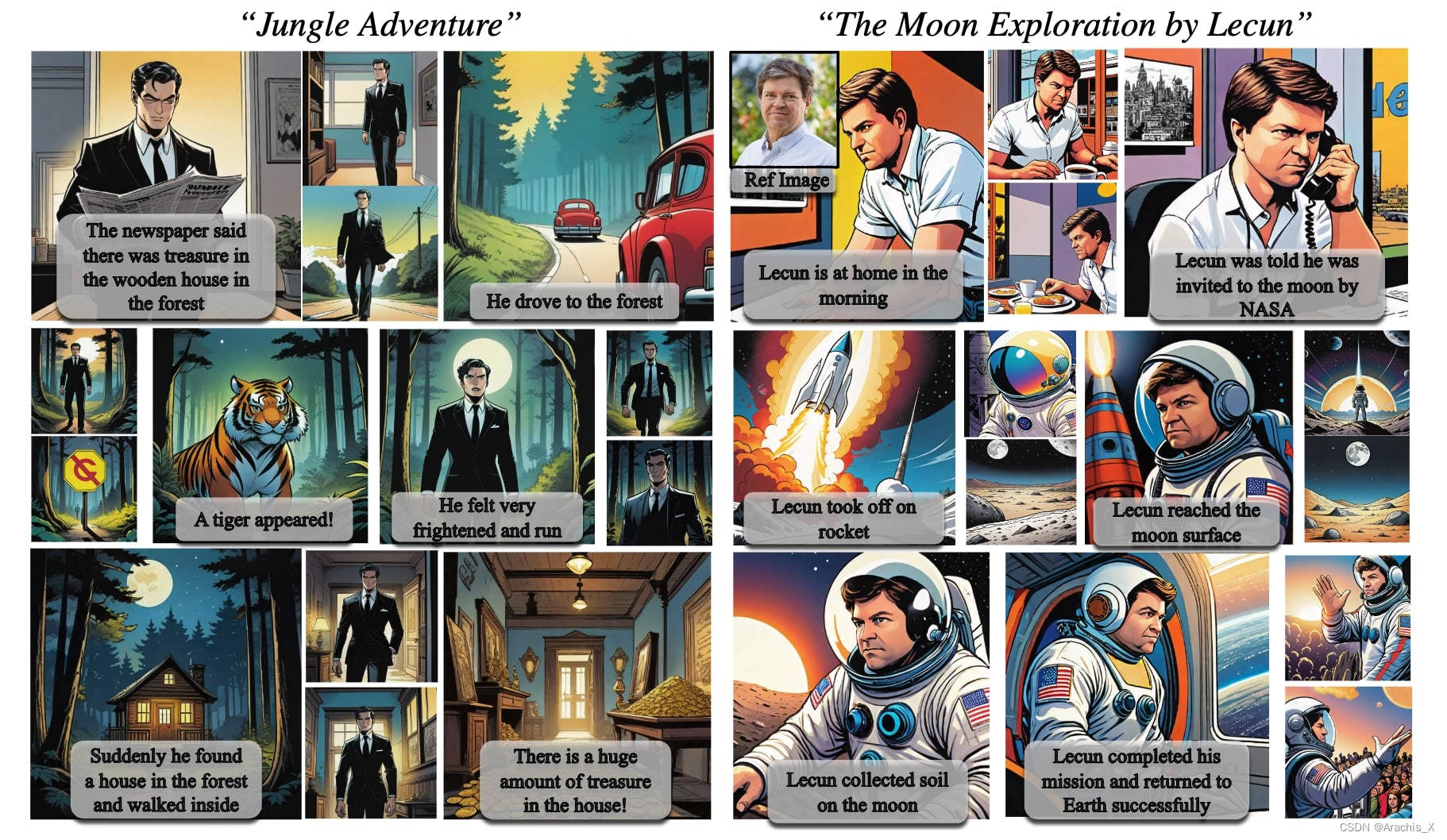

For recent diffusion-based generative models, maintaining consistent content across a series of generated images, especially those containing subjects and complex details, presents a significant challenge. In this paper, we propose a new way of self-attention calculation, termed Consistent Self-Attention, that significantly boosts the consistency between the generated images and augments prevalent pretrained diffusion-based text-to-image models in a zero-shot manner. To extend our method to long-range video generation, we further introduce a novel semantic space temporal motion prediction module, named Semantic Motion Predictor. It is trained to estimate the motion conditions between two provided images in the semantic spaces. This module converts the generated sequence of images into videos with smooth transitions and consistent subjects that are significantly more stable than the modules based on latent spaces only, especially in the context of long video generation. By merging these two novel components, our framework, referred to as StoryDiffusion, can describe a text-based story with consistent images or videos encompassing a rich variety of contents. The proposed StoryDiffusion encompasses pioneering explorations in visual story generation with the presentation of images and videos, which we hope could inspire more research from the aspect of architectural modifications. Our code is made publicly available at https://github.com/HVision-NKU/StoryDiffusion.

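For recent diffusion-based generative models, keeping content consistent across a series of generated images is a major challenge, especially when the images contain subjects and complex details.

The paper proposes a new way of computing self-attention, called Consistent Self-Attention. It significantly improves the consistency between generated images and augments prevalent pretrained diffusion-based text-to-image models in a zero-shot manner.

To extend the method to long-range video generation, the authors further introduce a temporal motion prediction module that operates in semantic space, named Semantic Motion Predictor.

The module is trained to estimate the motion conditions between two provided images in semantic space. It converts the generated image sequence into videos with smooth transitions and consistent subjects, and is significantly more stable than modules based on latent space only, especially for long video generation.

By merging these two components, the framework, called StoryDiffusion, can describe a text-based story with consistent images or videos that cover a rich variety of content.

StoryDiffusion is an early exploration of visual story generation presented as both images and videos, and the authors hope it will inspire more research from the angle of architectural modifications.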
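The abstract does not spell out how Consistent Self-Attention is computed, so the following is only a minimal sketch of one plausible reading: each image's queries attend to its own tokens plus tokens randomly sampled from the other images in the same batch. The function names, the `sample_rate` parameter, and the omission of learned Q/K/V projections are all assumptions made for illustration. This is not the code from the StoryDiffusion repository.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Plain scaled dot-product attention over lists of token vectors."""
    scale = 1.0 / math.sqrt(len(queries[0]))
    dim = len(values[0])
    out = []
    for q in queries:
        scores = [scale * sum(a * b for a, b in zip(q, k)) for k in keys]
        weights = softmax(scores)
        out.append([sum(w * v[d] for w, v in zip(weights, values))
                    for d in range(dim)])
    return out


def consistent_self_attention(batch, sample_rate=0.5, seed=0):
    """Hypothetical sketch: batch is a list of images, each a list of tokens.

    For every image, keys and values are its own tokens plus a random sample
    of tokens from the other images; queries stay per-image. Learned
    projections are left out (identity) to keep the example short.
    """
    rng = random.Random(seed)
    outputs = []
    for i, tokens in enumerate(batch):
        # Pool the tokens of all other images in the batch.
        others = [t for j, img in enumerate(batch) if j != i for t in img]
        sampled = rng.sample(others, int(len(others) * sample_rate))
        kv = tokens + sampled  # shared tokens couple the images together
        outputs.append(attention(tokens, kv, kv))
    return outputs


# Toy batch: 3 "images", 4 tokens each, 2 features per token.
batch = [[[float(i + j), float(i * j)] for j in range(4)] for i in range(3)]
out = consistent_self_attention(batch, sample_rate=0.5)
print(len(out), len(out[0]), len(out[0][0]))  # 3 4 2
```

With `sample_rate=0.0` the sketch reduces to ordinary per-image self-attention. Because no new weights are introduced, a mechanism of this kind could be dropped into a pretrained model without retraining, which is consistent with the zero-shot claim in the abstract.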
