@inproceedings{ram2017deepfuse,
  title={Deepfuse: A deep unsupervised approach for exposure fusion with extreme exposure image pairs},
  author={Ram Prabhakar, K and Sai Srikar, V and Venkatesh Babu, R},
  booktitle={Proceedings of the IEEE international conference on computer vision},
  pages={4714--4722},
  year={2017}
}

Venue: ICCV 2017

Impact factor: -


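📖Paper Interpretation

The authors propose DeepFuse, a network for multi-exposure fusion (MEF).

According to the authors, this is the first paper to use a CNN for MEF.

The paper also releases a new benchmark dataset.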

🔑Keywords

Multi-exposure image fusion, CNN

💭Core Idea

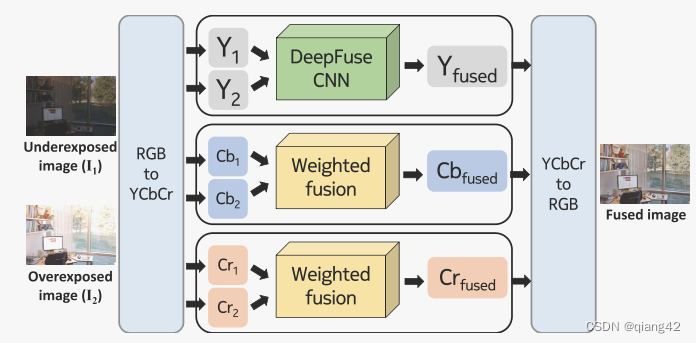

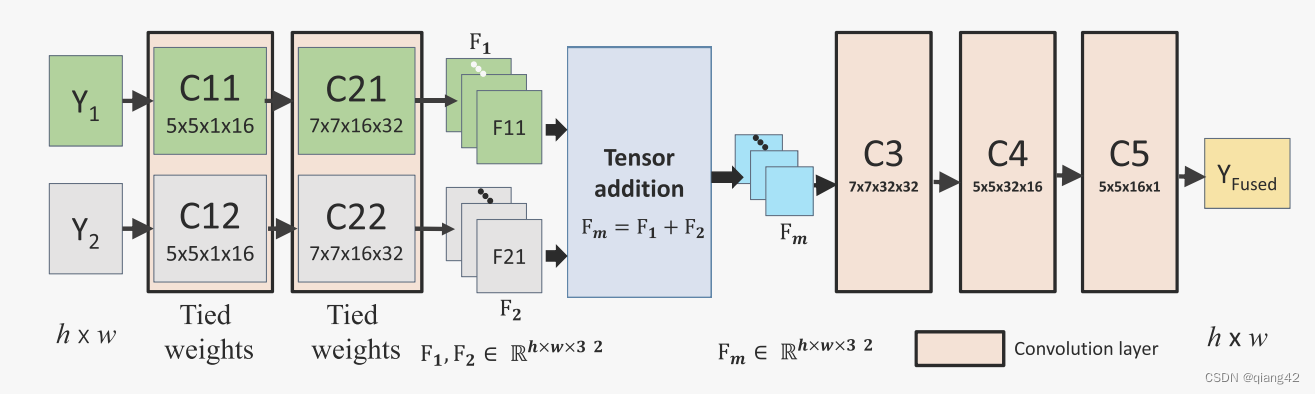

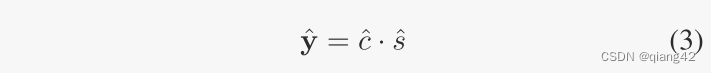

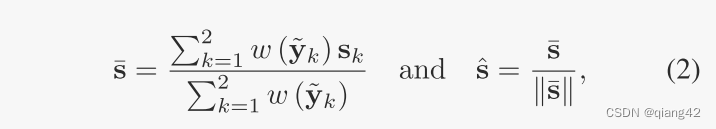

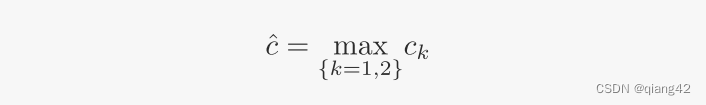

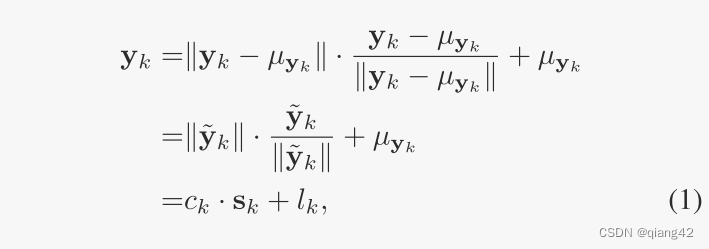

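Each RGB source image is first converted to YCbCr. The luminance (Y) channels of the inputs go through the CNN: features are extracted from each input (the channel count grows, but there is no downsampling), the features are fused by element-wise addition, and reconstruction layers produce the fused Y channel. In the original paper, the chrominance channels (Cb, Cr) do not pass through the CNN; they are combined with a weighted fusion rule instead. Finally, the fused Y, Cb and Cr channels are converted back to RGB to give the final fused image.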
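The colour handling around the network can be sketched in NumPy as follows. This is a minimal sketch with several assumptions. It uses full-range BT.601 (JPEG/JFIF) YCbCr conversion, which the post does not specify. It fuses Cb/Cr with the weighted rule reported in the DeepFuse paper, C_f = (C_1|C_1 - τ| + C_2|C_2 - τ|) / (|C_1 - τ| + |C_2 - τ|) with τ = 128. The luminance fusion is passed in as a function: `fuse_y` and the averaging stand-in below are hypothetical placeholders for the trained CNN.

```python
import numpy as np

def rgb_to_ycbcr(rgb):
    """Full-range BT.601 (JPEG/JFIF) RGB -> YCbCr; values in [0, 255]."""
    rgb = rgb.astype(np.float64)
    r, g, b = rgb[..., 0], rgb[..., 1], rgb[..., 2]
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128.0 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128.0 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return np.stack([y, cb, cr], axis=-1)

def ycbcr_to_rgb(ycc):
    """Inverse of rgb_to_ycbcr, clipped to [0, 255]."""
    y, cb, cr = ycc[..., 0], ycc[..., 1] - 128.0, ycc[..., 2] - 128.0
    r = y + 1.402 * cr
    g = y - 0.344136 * cb - 0.714136 * cr
    b = y + 1.772 * cb
    return np.clip(np.stack([r, g, b], axis=-1), 0.0, 255.0)

def fuse_chroma(c1, c2, tau=128.0):
    """Weighted chroma fusion: values far from neutral (tau) get more weight."""
    w1, w2 = np.abs(c1 - tau), np.abs(c2 - tau)
    den = w1 + w2
    safe = np.where(den > 0, den, 1.0)   # avoid 0/0 where both inputs are neutral
    return np.where(den > 0, (c1 * w1 + c2 * w2) / safe, tau)

def fuse_pair(rgb_a, rgb_b, fuse_y):
    """Fuse two exposures; fuse_y is a hypothetical stand-in for the Y-channel CNN."""
    a, b = rgb_to_ycbcr(rgb_a), rgb_to_ycbcr(rgb_b)
    y = fuse_y(a[..., 0], b[..., 0])
    cb = fuse_chroma(a[..., 1], b[..., 1])
    cr = fuse_chroma(a[..., 2], b[..., 2])
    return ycbcr_to_rgb(np.stack([y, cb, cr], axis=-1))
```

For example, `fuse_pair(under, over, lambda y1, y2: 0.5 * (y1 + y2))` runs the whole pipeline with plain averaging in place of the network. The chroma rule favours whichever input is more saturated at each pixel, which tends to keep colour from the better-exposed image.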

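🪢Network Architecture

The network architecture is simple and straightforward, as shown below. Figure 2 details the part inside the black box in Figure 1.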

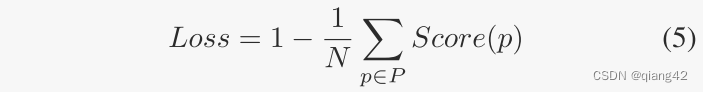

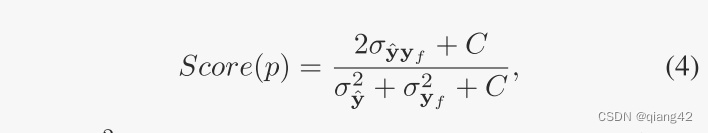
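The data flow of the luminance branch can be sketched as a NumPy forward pass. It has two weight-tied feature extractors, fusion by element-wise addition, and reconstruction layers. Every convolution uses stride 1 and "same" padding, so the spatial size never shrinks. The kernel sizes and filter counts come from my recollection of the paper and should be checked against Figure 2: 5×5/16 and 7×7/32 before fusion, and 7×7/32, 5×5/16 and 5×5/1 after. The ReLU activations, the linear output layer and the random weights are assumptions for illustration; this is not a trained model.

```python
import numpy as np

def conv2d_same(x, w, b):
    """Stride-1 'same' convolution (cross-correlation, as in CNN frameworks).
    x: (C_in, H, W), w: (C_out, C_in, k, k) with odd k, b: (C_out,)."""
    c_out, _, k, _ = w.shape
    pad = k // 2
    xp = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))   # zero padding
    _, h, wd = x.shape
    out = np.zeros((c_out, h, wd))
    for dy in range(k):
        for dx in range(k):
            patch = xp[:, dy:dy + h, dx:dx + wd]          # (C_in, H, W)
            out += np.einsum("oc,chw->ohw", w[:, :, dy, dx], patch)
    return out + b[:, None, None]

def relu(x):
    return np.maximum(x, 0.0)

# (kernel size, c_in, c_out) per layer; assumed DeepFuse-like configuration
ENCODER = [(5, 1, 16), (7, 16, 32)]
DECODER = [(7, 32, 32), (5, 32, 16), (5, 16, 1)]

def init_layers(spec, rng):
    """Random weights and zero biases, for shape checking only."""
    return [(rng.normal(0.0, 0.05, (co, ci, k, k)), np.zeros(co)) for k, ci, co in spec]

def encode(y, layers):
    x = y[None]                                  # (1, H, W)
    for w, b in layers:
        x = relu(conv2d_same(x, w, b))
    return x

def deepfuse_forward(y1, y2, enc, dec):
    # Tied weights: the same encoder parameters process both exposures.
    f = encode(y1, enc) + encode(y2, enc)       # fusion layer = element-wise sum
    for i, (w, b) in enumerate(dec):
        f = conv2d_same(f, w, b)
        if i < len(dec) - 1:                     # assumed: no activation on the output layer
            f = relu(f)
    return f[0]                                  # fused Y, same H x W as the inputs
```

With `enc, dec = init_layers(ENCODER, rng), init_layers(DECODER, rng)`, calling `deepfuse_forward` on two 16×16 luminance maps returns a 16×16 map. Because fusion is a plain sum of shared-weight features, swapping the two inputs gives the same output. This is one appeal of the additive fusion layer: the result does not depend on input order.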

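📉Loss Function

This paper is fairly early work, and its loss function is somewhat unusual. I did not study it in detail, so interested readers should consult the original paper. The loss is built on the MEF-SSIM score:

$$\mathrm{Loss} = 1 - \frac{1}{N}\sum_{p \in P} \mathrm{Score}(p)$$

where N is the total number of pixels, P is the set of all pixels, and Score(p) is the MEF-SSIM score computed at pixel p.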
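The following simplified NumPy sketch assumes the loss has the form Loss = 1 - (1/N) Σ_{p∈P} Score(p), with Score(p) an MEF-SSIM-style score. It follows the patch decomposition used by MEF-SSIM (Ma et al.). Each source patch is split into contrast and structure. The desired patch takes the largest input contrast and a weighted mean structure. The score compares that desired patch with the fused patch. Several details are assumptions, not the paper's exact settings: structure weights equal to patch contrast, a uniform 7×7 window instead of a Gaussian one, C2 = (0.03·255)², and border pixels being skipped.

```python
import numpy as np

def patch_score(patches, fused, c2=(0.03 * 255) ** 2):
    """MEF-SSIM-style score at one location.
    patches: (K, n) flattened source patches, fused: (n,) flattened fused patch."""
    centered = patches - patches.mean(axis=1, keepdims=True)
    contrast = np.linalg.norm(centered, axis=1)                  # c_k
    c_hat = contrast.max()                                       # desired contrast
    structure = centered / np.maximum(contrast, 1e-12)[:, None]  # s_k (unit vectors)
    # Assumed weighting: each structure weighted by its patch contrast.
    s_bar = (contrast[:, None] * structure).sum(axis=0) / max(contrast.sum(), 1e-12)
    s_hat = s_bar / max(np.linalg.norm(s_bar), 1e-12)
    y_hat = c_hat * s_hat                                        # desired zero-mean patch
    f = fused - fused.mean()
    n = fused.size
    var_hat = y_hat @ y_hat / (n - 1)
    var_f = f @ f / (n - 1)
    cov = y_hat @ f / (n - 1)
    return (2 * cov + c2) / (var_hat + var_f + c2)

def mef_ssim_loss(sources, fused, win=7):
    """1 - mean score over all full windows (borders skipped for simplicity).
    sources: (K, H, W), fused: (H, W), values in [0, 255]."""
    k, h, w = sources.shape
    scores = []
    for i in range(h - win + 1):
        for j in range(w - win + 1):
            p = sources[:, i:i + win, j:j + win].reshape(k, -1)
            q = fused[i:i + win, j:j + win].ravel()
            scores.append(patch_score(p, q))
    return 1.0 - float(np.mean(scores))
```

Each score is at most 1 by the Cauchy-Schwarz inequality, so the loss is non-negative. It reaches 0 when every fused patch matches the desired patch, for example when all sources and the fused image are identical. No ground-truth fused image is needed, which is what makes the training unsupervised.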

🔢Datasets

Image fusion dataset links:

[A Collection of Common Image Fusion Datasets]

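🎢Training Settings

🔬Experiments

📏Evaluation Metrics

- MEF-SSIM score

References

✨✨✨Highly recommended blog post: [Analysis of Quantitative Metrics for Image Fusion]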

🥅Baseline

- DeepFuse

🔬Experimental Results

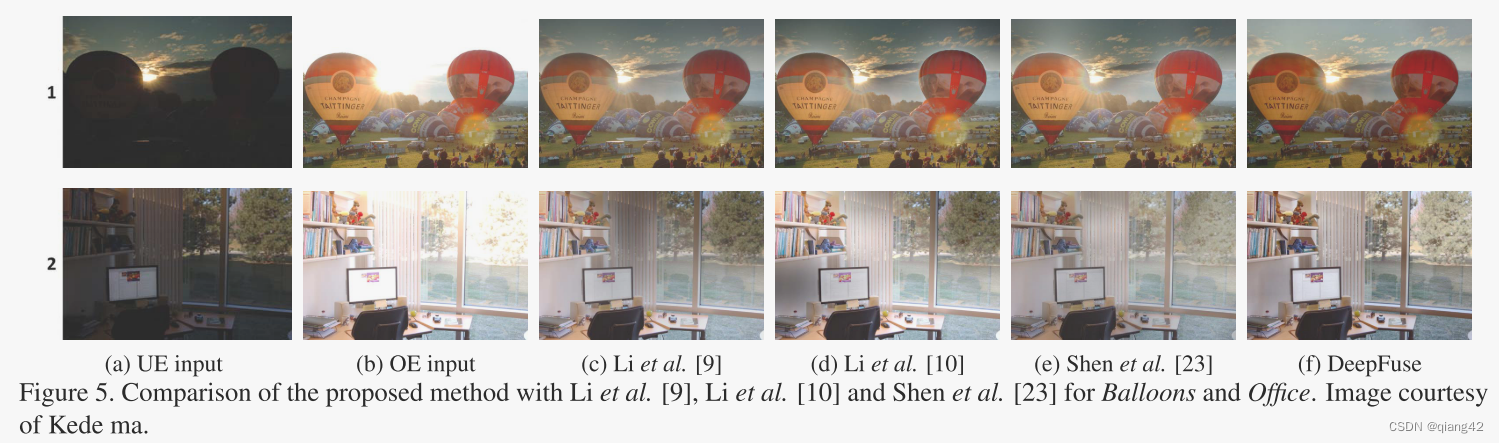

For more experimental results and analysis, see the original paper:

📖[Paper download link]

🚀Quick Links

📑Reading notes on image fusion papers

📑[GANMcC: A Generative Adversarial Network With Multiclassification Constraints for IVIF]

📑[DIDFuse: Deep Image Decomposition for Infrared and Visible Image Fusion]

📑[IFCNN: A general image fusion framework based on convolutional neural network]

📑[(PMGI) Rethinking the image fusion: A fast unified image fusion network based on proportional maintenance of gradient and intensity]

📑[SDNet: A Versatile Squeeze-and-Decomposition Network for Real-Time Image Fusion]

📑[DDcGAN: A Dual-Discriminator Conditional Generative Adversarial Network for Multi-Resolution Image Fusion]

📑[FusionGAN: A generative adversarial network for infrared and visible image fusion]

📑[PIAFusion: A progressive infrared and visible image fusion network based on illumination aw]

📑[Visible and Infrared Image Fusion Using Deep Learning]

📑[CDDFuse: Correlation-Driven Dual-Branch Feature Decomposition for Multi-Modality Image Fusion]

📑[U2Fusion: A Unified Unsupervised Image Fusion Network]

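📚Summary of baselines in image fusion papers

📑Other papers

📑[3D object detection survey: Multi-Modal 3D Object Detection in Autonomous Driving: A Survey]

🎈Other summaries

🎈[CVPR 2023 and ICCV 2023 paper titles: compilation and word-frequency statistics]

✨Featured article roundups

✨[The most complete collection of image fusion papers and code]

✨[A Collection of Common Image Fusion Datasets]

If you have any questions, contact: 420269520@qq.com

Writing these posts takes effort. Following, bookmarking and liking are what keep me updating. Wishing everyone early papers and a smooth graduation~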
