Abstract

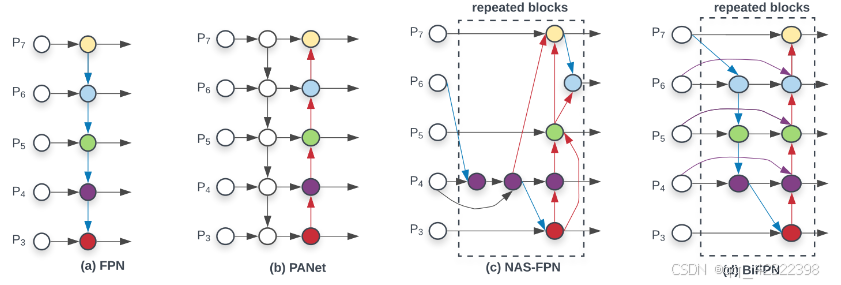

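Bidirectional Feature Pyramid Network (BiFPN) is an efficient feature pyramid architecture for multi-scale object detection. It introduces a bidirectional feature fusion mechanism that strengthens the exchange of information between feature levels and improves recognition of objects at different scales. A top-down path carries semantically strong low-resolution features to the higher-resolution levels, and a bottom-up path carries high-resolution detail back to the coarser levels, so every level is fused with information from both directions. The design aims to improve feature representation while reducing computational cost and model complexity. On object detection tasks, BiFPN is reported to outperform the conventional feature pyramid network in both accuracy and inference speed.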

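Key innovations

1. Bidirectional feature fusion: BiFPN fuses features along two paths, so information flows in both directions between high-resolution and low-resolution features and the levels complement each other.

2. Efficient information integration: by combining the bottom-up and top-down paths, BiFPN integrates features from different scales and improves detection of multi-scale objects.

3. Improved computational efficiency: the design reduces computational complexity while keeping performance high, so inference can be faster without giving up accuracy.

4. Adaptive feature reuse: BiFPN reuses features adaptively through learnable fusion weights, which lets the network adjust to different inputs and tasks and improves the generality of the model.

5. Modular design: the architecture is modular, so it is easy to integrate into existing object detection frameworks, which helps extensibility and practical use.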
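
The weighted fusion behind points 1 and 4 can be written compactly. The formula below is a reading of the `BiFPN_Concat.forward` code in this article, not a quotation from a paper: $x_i$ are the $n \in \{2, 3\}$ same-shape input feature maps, $w_i$ are the learnable scalars (initialized to 1), and $\epsilon = 0.0001$. Note that this code applies ReLU to the fused feature rather than to the weights.

```latex
\hat{w}_i = \frac{w_i}{\epsilon + \sum_{j=1}^{n} w_j},
\qquad
O = \mathrm{Conv}_{1\times 1}\!\left(\mathrm{ReLU}\!\left(\sum_{i=1}^{n} \hat{w}_i \, x_i\right)\right)
```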

Code implementation

Core code

    import torch
    import torch.nn as nn

    __all__ = ['BiFPN_Concat']


    def autopad(k, p=None, d=1):
        """Return the padding that keeps the output the same size as the input."""
        if d > 1:
            k = d * (k - 1) + 1 if isinstance(k, int) else [d * (x - 1) + 1 for x in k]  # actual kernel-size
        if p is None:
            p = k // 2 if isinstance(k, int) else [x // 2 for x in k]  # auto-pad
        return p


    class Conv(nn.Module):
        """Conv2d followed by BatchNorm2d and an activation."""

        default_act = nn.SiLU()  # default activation

        def __init__(self, c1, c2, k=1, s=1, p=None, g=1, d=1, act=True):
            super().__init__()
            self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p, d), groups=g, dilation=d, bias=False)
            self.bn = nn.BatchNorm2d(c2)
            self.act = self.default_act if act is True else act if isinstance(act, nn.Module) else nn.Identity()

        def forward(self, x):
            return self.act(self.bn(self.conv(x)))

        def forward_fuse(self, x):
            # Used after BatchNorm has been fused into the convolution
            return self.act(self.conv(x))


    class BiFPN_Concat(nn.Module):
        """Learnable weighted sum of 2 or 3 same-shape feature maps, then ReLU and a 1x1 Conv."""

        def __init__(self, c1, c2):
            super(BiFPN_Concat, self).__init__()
            self.w1_weight = nn.Parameter(torch.ones(2, dtype=torch.float32), requires_grad=True)  # two-input fusion
            self.w2_weight = nn.Parameter(torch.ones(3, dtype=torch.float32), requires_grad=True)  # three-input fusion
            self.epsilon = 0.0001  # avoids division by zero in the normalization
            self.conv = Conv(c1, c2, 1, 1, 0)
            self.act = nn.ReLU()

        def forward(self, x):
            if len(x) == 2:
                w = self.w1_weight
                weight = w / (torch.sum(w, dim=0) + self.epsilon)  # normalize the weights
                x = self.conv(self.act(weight[0] * x[0] + weight[1] * x[1]))
            elif len(x) == 3:
                w = self.w2_weight
                weight = w / (torch.sum(w, dim=0) + self.epsilon)  # normalize the weights
                x = self.conv(self.act(weight[0] * x[0] + weight[1] * x[1] + weight[2] * x[2]))
            return x
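
To make the two numeric pieces of the core code easier to check by hand, here is a minimal pure-Python sketch. `autopad` is repeated so the snippet runs on its own; `normalized_weights` and `fuse` are hypothetical helper names that mimic the weight normalization and ReLU in `BiFPN_Concat.forward` on plain lists, and the 1x1 `Conv` is deliberately left out.

```python
def autopad(k, p=None, d=1):
    """Same padding rule as in the core code, repeated so this snippet is standalone."""
    if d > 1:
        k = d * (k - 1) + 1 if isinstance(k, int) else [d * (x - 1) + 1 for x in k]
    if p is None:
        p = k // 2 if isinstance(k, int) else [x // 2 for x in k]
    return p


def normalized_weights(w, eps=1e-4):
    """Divide each weight by (sum of weights + eps), as in BiFPN_Concat.forward."""
    total = sum(w) + eps
    return [wi / total for wi in w]


def fuse(features, w, eps=1e-4):
    """Weighted element-wise sum of equally long lists, followed by ReLU."""
    weights = normalized_weights(w, eps)
    length = len(features[0])
    fused = [sum(wt * f[i] for wt, f in zip(weights, features)) for i in range(length)]
    return [max(0.0, v) for v in fused]


print(autopad(3))        # 1
print(autopad(3, d=2))   # 2  (a dilated 3x3 kernel covers 5 pixels)
print(autopad((3, 5)))   # [1, 2]
print(normalized_weights([1.0, 1.0]))
print(fuse([[1.0, -4.0], [3.0, 2.0]], [1.0, 1.0]))
```

With the initial weights of 1, each input contributes slightly less than 1/n because of the epsilon term; training then shifts the balance between the inputs.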

实现

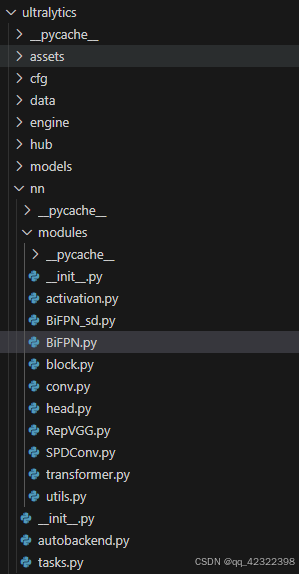

在ultralytics/nn/modules/下新建SPDConv.py,并将代码写入。

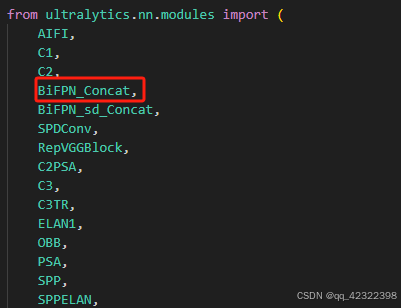

在ultralytics\nn\modules_init_.py中加入

from .BiFPN import *
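
Because BiFPN.py defines `__all__ = ['BiFPN_Concat']`, the star import only exposes `BiFPN_Concat`; the local `Conv` and `autopad` helpers stay private to the file. The sketch below shows that behavior with a throwaway in-memory module (`demo_bifpn` is a made-up name used only for this illustration, not part of Ultralytics).

```python
import sys
import types

# Source of a stand-in module that mimics the layout of BiFPN.py
source = '''
__all__ = ['BiFPN_Concat']

def autopad(k):
    return k // 2

class Conv:
    pass

class BiFPN_Concat:
    pass
'''

demo = types.ModuleType("demo_bifpn")
exec(source, demo.__dict__)          # populate the module from the source text
sys.modules["demo_bifpn"] = demo     # make it importable by name

namespace = {}
exec("from demo_bifpn import *", namespace)
exported = sorted(name for name in namespace if not name.startswith("__"))
print(exported)  # ['BiFPN_Concat']
```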

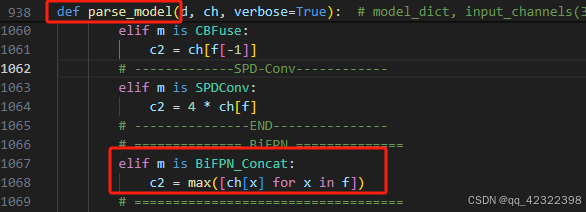

In tasks.py under ultralytics/nn/, find the parse_model function and add the following branch:

    elif m is BiFPN_Concat:
        c2 = max([ch[x] for x in f])
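
The branch above only records the output channel count of the fusion layer. A minimal sketch of that bookkeeping follows, assuming `ch` holds one output-channel entry per already-built layer and `f` is the layer's `from` list; the channel values are hypothetical numbers for layers 0 to 11 at scale 's', and `bifpn_out_channels` is an illustrative helper, not an Ultralytics function.

```python
def bifpn_out_channels(ch, f):
    """Mirror of the added branch: take the widest of the input layers."""
    return max(ch[x] for x in f)


# Assumed output channels of layers 0..11 at scale 's' (width multiplier 0.50)
ch = [32, 64, 64, 128, 128, 256, 256, 512, 512, 512, 256, 256]

f = [-1, 6]                  # previous layer (11) and backbone layer 6
c2 = bifpn_out_channels(ch, f)
ch.append(c2)                # the new fusion layer becomes layer 12

print(c2, len(ch) - 1)  # 256 12
```

Since all inputs of a weighted sum must already have the same number of channels, `max` simply returns that shared value.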

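Add the corresponding import for BiFPN_Concat at the top of tasks.py.

Create a new YAML file yolov8-BIFPN.yaml under ultralytics\cfg\models\v8\ and paste the following into it. BiFPN integration, variant 1 (the three-input fusion nodes take the same-scale backbone layers 4 and 6 through 1×1 Convs):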

    # Ultralytics YOLO 🚀, AGPL-3.0 license
    # YOLOv8 object detection model with P2-P4 outputs. For usage examples see https://docs.ultralytics.com/tasks/detect

    # Parameters
    nc: 80 # number of classes
    scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'
      # [depth, width, max_channels]
      n: [0.33, 0.25, 1024] # YOLOv8n summary: 225 layers, 3157200 parameters, 3157184 gradients, 8.9 GFLOPs
      s: [0.33, 0.50, 1024] # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients, 28.8 GFLOPs
      m: [0.67, 0.75, 768] # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients, 79.3 GFLOPs
      l: [1.00, 1.00, 512] # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs
      x: [1.00, 1.25, 512] # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs

    # YOLOv8.0 backbone
    backbone:
      # [from, repeats, module, args]
      - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2 · 320 × 320 × 64
      - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4 · 160 × 160 × 128
      - [-1, 3, C2f, [128, True]] # 2 · 160 × 160 × 128
      - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8 · 80 × 80 × 256
      - [-1, 6, C2f, [256, True]] # 4 · 80 × 80 × 256
      - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16 · 40 × 40 × 512
      - [-1, 6, C2f, [512, True]] # 6 · 40 × 40 × 512
      - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32 · 20 × 20 × 1024
      - [-1, 3, C2f, [1024, True]] # 8 · 20 × 20 × 1024
      - [-1, 1, SPPF, [1024, 5]] # 9 · 20 × 20 × 1024

    # YOLOv8.0-P2 head
    head:
      - [-1, 1, Conv, [512, 1, 1]] # 10 · 20 × 20 × 512
      - [-1, 1, nn.Upsample, [None, 2, 'nearest']] # 11 · 40 × 40 × 512
      # Note: at scale 's' the channel counts are half the nominal values in these comments,
      # which is what the BiFPN_Concat args (256, 128, 64) are written for.
      - [[-1, 6], 1, BiFPN_Concat, [256, 256]] # cat backbone P4 · 40 × 40 × 512(11) + 40 × 40 × 512(6)
      - [-1, 3, C2f, [512]] # 13 · 40 × 40 × 512

      - [-1, 1, Conv, [256, 1, 1]] # 14 · 40 × 40 × 256
      - [-1, 1, nn.Upsample, [None, 2, 'nearest']] # 15 · 80 × 80 × 256
      - [[-1, 4], 1, BiFPN_Concat, [128, 128]] # cat backbone P3 · 80 × 80 × 256(15) + 80 × 80 × 256(4)
      - [-1, 3, C2f, [256]] # 17 (P3/8-small) · 80 × 80 × 256

      - [-1, 1, Conv, [128, 1, 1]] # 18 · 80 × 80 × 128
      - [-1, 1, nn.Upsample, [None, 2, 'nearest']] # 19 · 160 × 160 × 128
      - [[-1, 2], 1, BiFPN_Concat, [64, 64]] # cat backbone P2 · 160 × 160 × 128(19) + 160 × 160 × 128(2)
      - [-1, 3, C2f, [128]] # 21 (P2/4-tiny) · 160 × 160 × 128

      - [4, 1, Conv, [128, 1, 1]] # 22 · 80 × 80 × 128
      - [-2, 1, Conv, [128, 3, 2]] # 23 · 80 × 80 × 128
      - [[-1, -2, 18], 1, BiFPN_Concat, [64, 64]] # cat head P3 · 80 × 80 × 128(23) + 80 × 80 × 128(22) + 80 × 80 × 128(18)
      - [-1, 3, C2f, [256]] # 25 (P3/8-small) · 80 × 80 × 256

      - [6, 1, Conv, [256, 1, 1]] # 26 · 40 × 40 × 256
      - [-2, 1, Conv, [256, 3, 2]] # 27 · 40 × 40 × 256
      - [[-1, -2, 14], 1, BiFPN_Concat, [128, 128]] # cat head P4 · 40 × 40 × 256(27) + 40 × 40 × 256(26) + 40 × 40 × 256(14)
      - [-1, 3, C2f, [512]] # 29 (P4/16-medium) · 40 × 40 × 512

      - [[21, 25, 29], 1, Detect, [nc]] # Detect(P2, P3, P4)
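
The `BiFPN_Concat` args in this YAML are fixed numbers, while the surrounding Conv and C2f widths are multiplied by the `width` factor of the chosen scale. The sketch below checks which scale makes the two agree. The scaling rule in `scaled_channels` (cap at `max_channels`, multiply by `width`, round up to a multiple of 8) is an assumption about how Ultralytics scales channels, written here as a hypothetical helper rather than taken from its source.

```python
import math

# [depth, width, max_channels] copied from the `scales` section of the YAML
SCALES = {
    "n": (0.33, 0.25, 1024),
    "s": (0.33, 0.50, 1024),
    "m": (0.67, 0.75, 768),
    "l": (1.00, 1.00, 512),
    "x": (1.00, 1.25, 512),
}


def scaled_channels(c, width, max_channels, divisor=8):
    """Assumed width scaling: cap, multiply, round up to a multiple of `divisor`."""
    return math.ceil(min(c, max_channels) * width / divisor) * divisor


def matching_scales(nominal, hard_coded):
    """Scales at which a nominal channel count becomes the hard-coded BiFPN_Concat arg."""
    return [
        name
        for name, (_, width, max_c) in SCALES.items()
        if scaled_channels(nominal, width, max_c) == hard_coded
    ]


print(matching_scales(512, 256))  # ['s']
print(matching_scales(256, 128))  # ['s']
print(matching_scales(128, 64))   # ['s']
```

Under that assumption, only scale 's' lines up with the hard-coded values; for another scale the three `BiFPN_Concat` args would need to be changed to the scaled channel counts.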

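BiFPN integration, variant 2 (the extra inputs to the three-input fusion nodes come from backbone layers 2 and 4, downsampled by stride-2 3×3 Convs):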

    # Ultralytics YOLO 🚀, AGPL-3.0 license
    # YOLOv8 object detection model with P2-P4 outputs. For usage examples see https://docs.ultralytics.com/tasks/detect

    # Parameters
    nc: 80 # number of classes
    scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'
      # [depth, width, max_channels]
      n: [0.33, 0.25, 1024] # YOLOv8n summary: 225 layers, 3157200 parameters, 3157184 gradients, 8.9 GFLOPs
      s: [0.33, 0.50, 1024] # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients, 28.8 GFLOPs
      m: [0.67, 0.75, 768] # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients, 79.3 GFLOPs
      l: [1.00, 1.00, 512] # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs
      x: [1.00, 1.25, 512] # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs

    # YOLOv8.0 backbone
    backbone:
      # [from, repeats, module, args]
      - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2 · 320 × 320 × 64
      - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4 · 160 × 160 × 128
      - [-1, 3, C2f, [128, True]] # 2 · 160 × 160 × 128
      - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8 · 80 × 80 × 256
      - [-1, 6, C2f, [256, True]] # 4 · 80 × 80 × 256
      - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16 · 40 × 40 × 512
      - [-1, 6, C2f, [512, True]] # 6 · 40 × 40 × 512
      - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32 · 20 × 20 × 1024
      - [-1, 3, C2f, [1024, True]] # 8 · 20 × 20 × 1024
      - [-1, 1, SPPF, [1024, 5]] # 9 · 20 × 20 × 1024

    # YOLOv8.0-P2 head
    head:
      - [-1, 1, Conv, [512, 1, 1]] # 10 · 20 × 20 × 512
      - [-1, 1, nn.Upsample, [None, 2, 'nearest']] # 11 · 40 × 40 × 512
      - [[-1, 6], 1, BiFPN_Concat, [256, 256]] # cat backbone P4 · 40 × 40 × 512(11) + 40 × 40 × 512(6)
      - [-1, 3, C2f, [512]] # 13 · 40 × 40 × 512

      - [-1, 1, Conv, [256, 1, 1]] # 14 · 40 × 40 × 256
      - [-1, 1, nn.Upsample, [None, 2, 'nearest']] # 15 · 80 × 80 × 256
      - [[-1, 4], 1, BiFPN_Concat, [128, 128]] # cat backbone P3 · 80 × 80 × 256(15) + 80 × 80 × 256(4)
      - [-1, 3, C2f, [256]] # 17 (P3/8-small) · 80 × 80 × 256

      - [-1, 1, Conv, [128, 1, 1]] # 18 · 80 × 80 × 128
      - [-1, 1, nn.Upsample, [None, 2, 'nearest']] # 19 · 160 × 160 × 128
      - [[-1, 2], 1, BiFPN_Concat, [64, 64]] # cat backbone P2 · 160 × 160 × 128(19) + 160 × 160 × 128(2)
      - [-1, 3, C2f, [128]] # 21 (P2/4-tiny) · 160 × 160 × 128

      - [2, 1, Conv, [128, 3, 2]] # 22 · 80 × 80 × 128
      - [-2, 1, Conv, [128, 3, 2]] # 23 · 80 × 80 × 128
      - [[-1, -2, 18], 1, BiFPN_Concat, [64, 64]] # cat head P3 · 80 × 80 × 128(23) + 80 × 80 × 128(22) + 80 × 80 × 128(18)
      - [-1, 3, C2f, [256]] # 25 (P3/8-small) · 80 × 80 × 256

      - [4, 1, Conv, [256, 3, 2]] # 26 · 40 × 40 × 256
      - [-2, 1, Conv, [256, 3, 2]] # 27 · 40 × 40 × 256
      - [[-1, -2, 14], 1, BiFPN_Concat, [128, 128]] # cat head P4 · 40 × 40 × 256(27) + 40 × 40 × 256(26) + 40 × 40 × 256(14)
      - [-1, 3, C2f, [512]] # 29 (P4/16-medium) · 40 × 40 × 512

      - [[21, 25, 29], 1, Detect, [nc]] # Detect(P2, P3, P4)
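
The `from` column mixes absolute indices (such as `2` or `18`) with relative ones (such as `-1` or `-2`), which makes the wiring of the fusion nodes easy to misread. The sketch below resolves relative entries to absolute layer numbers; `resolve_from` is a hypothetical helper written for this explanation and assumes that a negative entry counts back from the current layer.

```python
def resolve_from(layer_index, f):
    """Turn relative 'from' entries (negative) into absolute layer indices."""
    sources = f if isinstance(f, list) else [f]
    return [layer_index + s if s < 0 else s for s in sources]


# Layers 22 to 24 of the head in this variant
print(resolve_from(22, 2))             # [2]   backbone P2 feature
print(resolve_from(23, -2))            # [21]  head P2 output, skipping layer 22
print(resolve_from(24, [-1, -2, 18]))  # [23, 22, 18]
```

So layer 24 fuses the downsampled head feature (23), the downsampled backbone feature (22), and the top-down feature (18).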

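BiFPN integration, variant 3 (two-input fusion only: each bottom-up node fuses the downsampled previous output with the top-down feature, without an extra backbone input):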

    # Ultralytics YOLO 🚀, AGPL-3.0 license
    # YOLOv8 object detection model with P2-P4 outputs. For usage examples see https://docs.ultralytics.com/tasks/detect

    # Parameters
    nc: 80 # number of classes
    scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'
      # [depth, width, max_channels]
      n: [0.33, 0.25, 1024] # YOLOv8n summary: 225 layers, 3157200 parameters, 3157184 gradients, 8.9 GFLOPs
      s: [0.33, 0.50, 1024] # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients, 28.8 GFLOPs
      m: [0.67, 0.75, 768] # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients, 79.3 GFLOPs
      l: [1.00, 1.00, 512] # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs
      x: [1.00, 1.25, 512] # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs

    # YOLOv8.0 backbone
    backbone:
      # [from, repeats, module, args]
      - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2 · 320 × 320 × 64
      - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4 · 160 × 160 × 128
      - [-1, 3, C2f, [128, True]] # 2 · 160 × 160 × 128
      - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8 · 80 × 80 × 256
      - [-1, 6, C2f, [256, True]] # 4 · 80 × 80 × 256
      - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16 · 40 × 40 × 512
      - [-1, 6, C2f, [512, True]] # 6 · 40 × 40 × 512
      - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32 · 20 × 20 × 1024
      - [-1, 3, C2f, [1024, True]] # 8 · 20 × 20 × 1024
      - [-1, 1, SPPF, [1024, 5]] # 9 · 20 × 20 × 1024

    # YOLOv8.0-P2 head
    head:
      - [-1, 1, Conv, [512, 1, 1]] # 10 · 20 × 20 × 512
      - [-1, 1, nn.Upsample, [None, 2, 'nearest']] # 11 · 40 × 40 × 512
      - [[-1, 6], 1, BiFPN_Concat, [256, 256]] # cat backbone P4 · 40 × 40 × 512(11) + 40 × 40 × 512(6)
      - [-1, 3, C2f, [512]] # 13 · 40 × 40 × 512

      - [-1, 1, Conv, [256, 1, 1]] # 14 · 40 × 40 × 256
      - [-1, 1, nn.Upsample, [None, 2, 'nearest']] # 15 · 80 × 80 × 256
      - [[-1, 4], 1, BiFPN_Concat, [128, 128]] # cat backbone P3 · 80 × 80 × 256(15) + 80 × 80 × 256(4)
      - [-1, 3, C2f, [256]] # 17 (P3/8-small) · 80 × 80 × 256

      - [-1, 1, Conv, [128, 1, 1]] # 18 · 80 × 80 × 128
      - [-1, 1, nn.Upsample, [None, 2, 'nearest']] # 19 · 160 × 160 × 128
      - [[-1, 2], 1, BiFPN_Concat, [64, 64]] # cat backbone P2 · 160 × 160 × 128(19) + 160 × 160 × 128(2)
      - [-1, 3, C2f, [128]] # 21 (P2/4-tiny) · 160 × 160 × 128

      - [-1, 1, Conv, [128, 3, 2]] # 22 · 80 × 80 × 128
      - [[-1, 18], 1, BiFPN_Concat, [64, 64]] # cat head P3 · 80 × 80 × 128(22) + 80 × 80 × 128(18)
      - [-1, 3, C2f, [256]] # 24 (P3/8-small) · 80 × 80 × 256

      - [-1, 1, Conv, [256, 3, 2]] # 25 · 40 × 40 × 256
      - [[-1, 14], 1, BiFPN_Concat, [128, 128]] # cat head P4 · 40 × 40 × 256(25) + 40 × 40 × 256(14)
      - [-1, 3, C2f, [512]] # 27 (P4/16-medium) · 40 × 40 × 512

      - [[21, 24, 27], 1, Detect, [nc]] # Detect(P2, P3, P4)

Training code

In the root directory of the YOLOv8 project, create a new Python source file train.py and paste the following code into it.

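    import warnings

    warnings.filterwarnings('ignore')

    from ultralytics import YOLO

    if __name__ == '__main__':
        # The BiFPN_Concat channel args in the YAML are written for scale 's'. If the scale is not
        # picked up from the file name, a scale-suffixed name such as yolov8s-BIFPN.yaml may be
        # needed (an assumption about how Ultralytics selects the scale).
        model = YOLO(r'ultralytics\cfg\models\v8\yolov8-BIFPN.yaml')  # BiFPN model config
        model.train(
            data=r'myway.yaml',
            cache=False,
            imgsz=960,
            epochs=300,
            single_cls=False,  # whether to train as single-class detection
            batch=4,
            close_mosaic=0,
            workers=0,
            device='0',
            optimizer='SGD',  # using SGD
            # resume='runs/train/exp/weights/last.pt',  # to resume training, set this to the path of last.pt
            amp=False,  # disable AMP if the training loss becomes NaN
            project='runs/train',
            name='exp',
        )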
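
The shape comments in the YAML files assume a 640 × 640 input, while the training call uses `imgsz=960`. The sketch below recomputes the spatial size of the three detection levels for both settings. The strides are read from the `P2/4`, `P3/8` and `P4/16` labels in the YAML; `feature_map_sizes` is an illustrative helper and assumes the image size is divisible by each stride.

```python
# Strides of the levels passed to Detect(P2, P3, P4), taken from the YAML labels
HEAD_STRIDES = {"P2": 4, "P3": 8, "P4": 16}


def feature_map_sizes(imgsz):
    """Side length of each detection feature map for a square input."""
    return {level: imgsz // stride for level, stride in HEAD_STRIDES.items()}


print(feature_map_sizes(640))  # {'P2': 160, 'P3': 80, 'P4': 40}
print(feature_map_sizes(960))  # {'P2': 240, 'P3': 120, 'P4': 60}
```

The P2 map at 960 × 960 has 2.25 times as many positions as at 640 × 640, which is one reason a small batch size such as `batch=4` can be necessary.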
