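Use case

When only a small fraction of your dataset is labeled and most of it is unlabeled, consider self-supervised learning to improve the visual representations learned by the backbone. For papers on self-supervised learning, see this blog post.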

Extracting the YOLOv5 backbone

Rewrite the YOLOv5 backbone as an image classification network:

    # Add to models/yolo.py, next to the existing model classes
    class YoloBackbone(BaseModel):
        # YOLOv5 backbone wrapped as an image classifier (backbone + global pooling + linear head)
        def __init__(self, cfg='yolov5s.yaml', ch=3, nc=2, anchors=None):  # model, input channels, number of classes
            super().__init__()
            if isinstance(cfg, dict):
                self.yaml = cfg  # model dict
            else:  # is *.yaml
                import yaml  # for torch hub
                self.yaml_file = Path(cfg).name
                with open(cfg, encoding='ascii', errors='ignore') as f:
                    self.yaml = yaml.safe_load(f)  # model dict

            # Define model
            ch = self.yaml['ch'] = self.yaml.get('ch', ch)  # input channels
            if nc and nc != self.yaml['nc']:
                LOGGER.info(f"Overriding model.yaml nc={self.yaml['nc']} with nc={nc}")
                self.yaml['nc'] = nc  # override yaml value
            if anchors:
                LOGGER.info(f'Overriding model.yaml anchors with anchors={anchors}')
                self.yaml['anchors'] = round(anchors)  # override yaml value
            self.model, self.save = parse_model(deepcopy(self.yaml), ch=[ch])  # model, savelist
            self.names = [str(i) for i in range(self.yaml['nc'])]  # default names
            self.inplace = self.yaml.get('inplace', True)

            self.model = self.model[:9]  # keep layers 0-8 only, dropping the neck and detection head
            self.avgpool = nn.AdaptiveAvgPool2d((1, 1))  # global average pooling
            self.fc = nn.Linear(512, nc)  # 512 = output channels of layer 8 in yolov5s; adjust for other sizes

            # Init weights, biases
            initialize_weights(self)
            self.info()
            LOGGER.info('')

        def forward(self, x, augment=False, profile=False, visualize=False):
            x = self._forward_once(x, profile, visualize)  # backbone feature map
            x = self.avgpool(x)
            x = torch.flatten(x, 1)
            x = self.fc(x)
            return x  # class logits, shape (batch, nc)
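To make the classification head less abstract, here is a small framework-free sketch of what `avgpool`, `flatten` and `fc` compute for a single image. The helper names `global_avg_pool` and `linear` and the toy numbers are made up for illustration; they are not part of YOLOv5.

```python
def global_avg_pool(feature_map):
    """Average each channel's H x W grid down to a single number."""
    return [
        sum(sum(row) for row in channel) / (len(channel) * len(channel[0]))
        for channel in feature_map
    ]


def linear(vec, weight, bias):
    """Fully connected layer: one dot product per output class."""
    return [
        sum(w * v for w, v in zip(row, vec)) + b
        for row, b in zip(weight, bias)
    ]


# Toy feature map with 2 channels of size 2 x 2 (a real yolov5s map has 512 channels)
fmap = [
    [[1.0, 3.0], [5.0, 7.0]],
    [[2.0, 2.0], [2.0, 2.0]],
]
pooled = global_avg_pool(fmap)  # one value per channel
logits = linear(pooled, [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]], [0.0, 0.0, 0.5])
print(pooled, logits)
```

Because pooling collapses the spatial dimensions, the length of the pooled vector depends only on the channel count, which is why the linear layer can use a fixed input size regardless of image resolution.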

Self-supervised training of the backbone

The self-supervised training code here is based on the open-source repo lightly-ai.

    # Note: The model and training settings do not follow the reference settings
    # from the paper. The settings are chosen such that the example can easily be
    # run on a small dataset with a single GPU.
    from copy import deepcopy

    import torch
    import torchvision.transforms.functional as F
    import tqdm
    from prefetch_generator import BackgroundGenerator
    from torch import nn

    from lightly.data import LightlyDataset
    from lightly.data import SimCLRCollateFunction
    from lightly.loss import NegativeCosineSimilarity
    from lightly.models.modules import BYOLProjectionHead, BYOLPredictionHead
    from lightly.models.utils import deactivate_requires_grad
    from lightly.models.utils import update_momentum

    class BYOL(nn.Module):
        def __init__(self, backbone):
            super().__init__()
            # The projection head expects 512-dim features, but YoloBackbone ends in an
            # nc-way classifier. Bypass it so the pooled backbone features are used directly.
            backbone.fc = nn.Identity()
            self.backbone = backbone
            self.projection_head = BYOLProjectionHead(512, 1024, 512)
            self.prediction_head = BYOLPredictionHead(512, 1024, 512)

            # Momentum (target) branch: copies that receive no gradients
            self.backbone_momentum = deepcopy(self.backbone)
            self.projection_head_momentum = deepcopy(self.projection_head)
            deactivate_requires_grad(self.backbone_momentum)
            deactivate_requires_grad(self.projection_head_momentum)

        def forward(self, x):
            # Online branch: backbone -> projection -> prediction
            y = self.backbone(x).flatten(start_dim=1)
            z = self.projection_head(y)
            p = self.prediction_head(z)
            return p

        def forward_momentum(self, x):
            # Target branch: backbone -> projection, detached from the graph
            y = self.backbone_momentum(x).flatten(start_dim=1)
            z = self.projection_head_momentum(y)
            z = z.detach()
            return z


    def normalize_tensor(tensor, mean, std, inplace=False):
        return F.normalize(tensor, mean, std, inplace)
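The momentum copies in the class above are not trained by gradient descent. In BYOL they follow the online branch through an exponential moving average, which is what `update_momentum(..., m=0.99)` is used for in the training loop. A minimal sketch of that update rule on plain lists of numbers, where `ema_update` is a hypothetical helper and not the lightly function:

```python
def ema_update(online, target, m=0.99):
    """Return new target weights: m * target + (1 - m) * online."""
    return [m * t + (1.0 - m) * o for o, t in zip(online, target)]


online = [1.0, -2.0]  # weights of the online branch, held fixed for this demo
target = [0.0, 0.0]   # weights of the momentum branch

for _ in range(100):
    target = ema_update(online, target, m=0.99)

print(target)  # has moved part of the way towards `online`
```

With `m` close to 1 the target branch changes slowly, so it provides a stable regression target while the online branch is being optimized.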

    if __name__ == "__main__":
        import torchvision.transforms as transforms
        import argparse

        from models.common import *
        from models.experimental import *
        from utils.autoanchor import check_anchor_order
        from utils.general import LOGGER, check_version, check_yaml, make_divisible, print_args
        from utils.plots import feature_visualization
        from utils.torch_utils import (fuse_conv_and_bn, initialize_weights, model_info, profile, scale_img,
                                       select_device, time_sync)
        from models.yolo import YoloBackbone

        parser = argparse.ArgumentParser()
        parser.add_argument('--cfg', type=str, default='yolov5s.yaml', help='model.yaml')
        parser.add_argument('--batch-size', type=int, default=1, help='total batch size for all GPUs')
        parser.add_argument('--device', default='', help='cuda device, i.e. 0 or 0,1,2,3 or cpu')
        parser.add_argument('--profile', action='store_true', help='profile model speed')
        parser.add_argument('--line-profile', action='store_true', help='profile model speed layer by layer')
        parser.add_argument('--test', action='store_true', help='test all yolo*.yaml')
        opt = parser.parse_args()
        opt.cfg = check_yaml(opt.cfg)  # check YAML
        print_args(vars(opt))

        # Create model
        yoloBackbone = YoloBackbone(opt.cfg)
        model = BYOL(yoloBackbone)

        gpus = [0, 1]  # only needed by the commented-out multi-GPU lines
        # torch.cuda.set_device("cuda:{}".format(gpus[0]))
        device = "cuda" if torch.cuda.is_available() else "cpu"
        # model = nn.DataParallel(model, device_ids=gpus, output_device=gpus[0])
        model.to(device)

        # cifar10 = torchvision.datasets.CIFAR10("datasets/cifar10", download=True)
        # dataset = LightlyDataset.from_torch_dataset(cifar10)
        # or create a dataset from a folder containing images or videos:
        mean = [0.485, 0.456, 0.406]  # ImageNet statistics (not used below)
        std = [0.229, 0.224, 0.225]
        dataset = LightlyDataset("/data/ssl")

        # Collate function that returns two augmented views of every image
        collate_fn = SimCLRCollateFunction(
            input_size=640,
            gaussian_blur=0.,
        )

        dataloader = torch.utils.data.DataLoader(
            dataset,
            batch_size=32,
            collate_fn=collate_fn,
            shuffle=True,
            drop_last=True,
            num_workers=0,
        )

        criterion = NegativeCosineSimilarity()
        optimizer = torch.optim.SGD(model.parameters(), lr=0.06)

        print("Starting Training")
        best_avg_loss = 1000000
        for epoch in range(300):
            total_loss = 0
            for (x0, x1), _, _ in tqdm.tqdm(BackgroundGenerator(dataloader)):
                # Move the momentum branch towards the online branch (EMA)
                update_momentum(model.backbone, model.backbone_momentum, m=0.99)
                update_momentum(model.projection_head, model.projection_head_momentum, m=0.99)
                x0 = x0.to(device)
                x1 = x1.to(device)
                p0 = model(x0)
                z0 = model.forward_momentum(x0)
                p1 = model(x1)
                z1 = model.forward_momentum(x1)
                # Symmetric loss: each view predicts the target projection of the other view
                loss = 0.5 * (criterion(p0, z1) + criterion(p1, z0))
                total_loss += loss.item()
                loss.backward()
                optimizer.step()
                optimizer.zero_grad()
            avg_loss = total_loss / len(dataloader)
            print(f"epoch: {epoch:>02}, loss: {avg_loss:.5f}")
            if best_avg_loss > avg_loss:
                # Save only the backbone weights; the BYOL heads are not needed later
                torch.save(model.backbone.state_dict(), "best_yolopBackbone.pth")
                print(f"New best model. Loss dropped from {best_avg_loss:.4f} to {avg_loss:.4f}")
                best_avg_loss = avg_loss
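The loss used in the loop is a symmetric negative cosine similarity. As a rough illustration of what it measures, here is a pure-Python version for a single pair of views (lightly's `NegativeCosineSimilarity` additionally averages over the batch). The function names `neg_cosine` and `byol_loss` are made up for this sketch.

```python
import math


def neg_cosine(p, z):
    """Negative cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(p, z))
    norm = math.sqrt(sum(a * a for a in p)) * math.sqrt(sum(b * b for b in z))
    return -dot / norm


def byol_loss(p0, z0, p1, z1):
    """Each view's prediction is compared with the other view's target projection."""
    return 0.5 * (neg_cosine(p0, z1) + neg_cosine(p1, z0))


aligned = byol_loss([1.0, 0.0], [2.0, 0.0], [3.0, 0.0], [4.0, 0.0])
orthogonal = byol_loss([1.0, 0.0], [0.0, 1.0], [1.0, 0.0], [0.0, 1.0])
print(aligned, orthogonal)
```

The loss is bounded below by -1 (predictions perfectly aligned with the targets), so the average loss printed during training is expected to be negative and to approach -1 rather than 0.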

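Pretraining the model with the backbone frozen

Make two changes in train.py.

First, change how the model is loaded so that it picks up the backbone weights from self-supervised training. Here `SSL.pth` stands for the checkpoint saved by the script above (`best_yolopBackbone.pth`):

    pretrained_dict = torch.load("SSL.pth")
    model.load_state_dict(pretrained_dict, strict=False)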

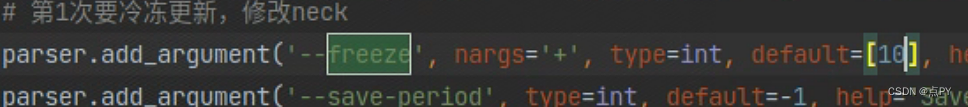
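Loading with `strict=False` matters because the self-supervised checkpoint contains only backbone keys, while the detector also has neck and head parameters. A simplified sketch of the name matching, using plain dicts in place of real state dicts; `partial_load` and the key names are illustrative only:

```python
def partial_load(model_state, pretrained):
    """Copy values whose key exists in the model; report the rest."""
    loaded = {k: v for k, v in pretrained.items() if k in model_state}
    missing = [k for k in model_state if k not in pretrained]
    unexpected = [k for k in pretrained if k not in model_state]
    return {**model_state, **loaded}, missing, unexpected


# Stand-ins for real tensors: the strings just mark where each value came from
detector = {
    "model.0.conv.weight": "random",
    "model.8.cv1.conv.weight": "random",
    "model.24.m.0.weight": "random",  # detection head, absent from the SSL checkpoint
}
ssl_ckpt = {
    "model.0.conv.weight": "ssl",
    "model.8.cv1.conv.weight": "ssl",
    "fc.weight": "ssl",  # e.g. a classifier head, if one is present in the checkpoint
}

merged, missing, unexpected = partial_load(detector, ssl_ckpt)
print(merged, missing, unexpected)
```

Note that in PyTorch `strict=False` only tolerates missing and unexpected keys; a key that matches by name but differs in shape still raises an error, so it is worth checking the returned missing and unexpected key lists after loading.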

Second, set the `freeze` parameter to 10.
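As far as I can tell, YOLOv5's train.py treats `freeze=N` as "disable gradients for every parameter whose name starts with `model.0.` through `model.{N-1}.`". Under that assumption, the selection can be sketched on parameter names alone. `frozen_names` is a hypothetical helper and the names are examples, so check the behavior against your YOLOv5 version.

```python
def frozen_names(param_names, freeze=10):
    """Return the parameter names that belong to layers 0 .. freeze-1."""
    prefixes = tuple(f"model.{i}." for i in range(freeze))
    return [name for name in param_names if name.startswith(prefixes)]


names = [
    "model.0.conv.weight",
    "model.9.cv1.conv.weight",
    "model.10.conv.weight",
    "model.24.m.0.weight",
]

print(frozen_names(names, freeze=10))  # layers 0-9 are frozen
print(frozen_names(names, freeze=0))   # nothing is frozen
```

One thing to keep in mind: `YoloBackbone` keeps layers 0 to 8 (`self.model[:9]`), so with `freeze=10` layer 9 would be frozen without having received self-supervised weights. Depending on your config, `freeze=9` or extending the backbone slice may be closer to what you want.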

Model training

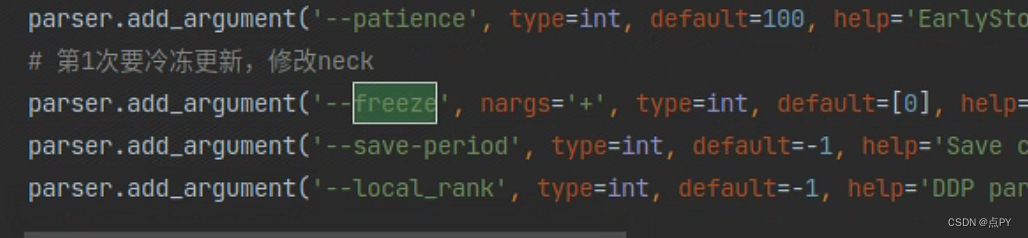

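Again make two changes in train.py.

First, set the `freeze` parameter to 0.

Second, change how the model is loaded so that it picks up the weights from the frozen-backbone pretraining run:

    pretrained_dict = torch.load("xxx/runs/train/expxx/weights/best.pth")
    model.load_state_dict(pretrained_dict, strict=False)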
